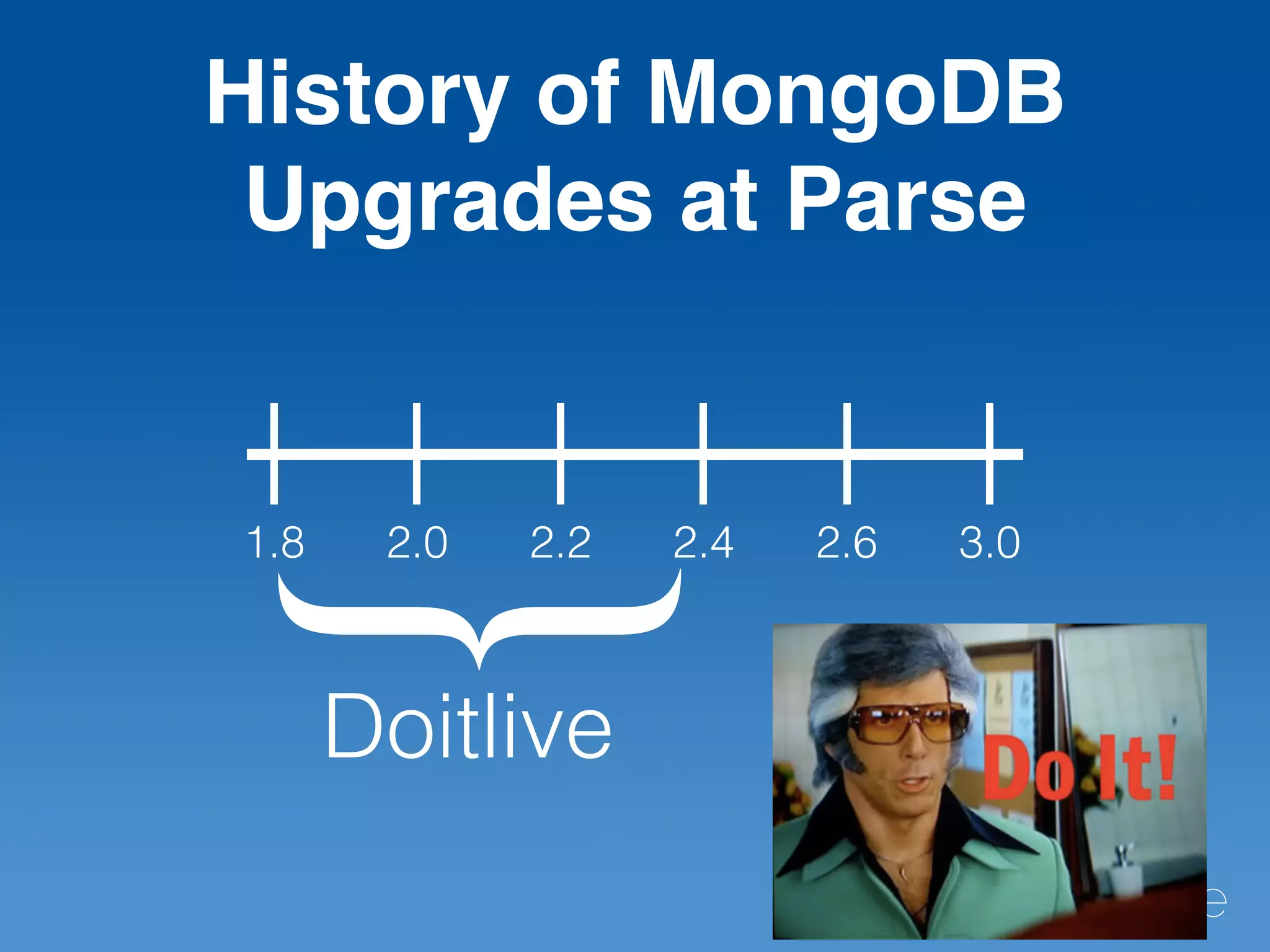

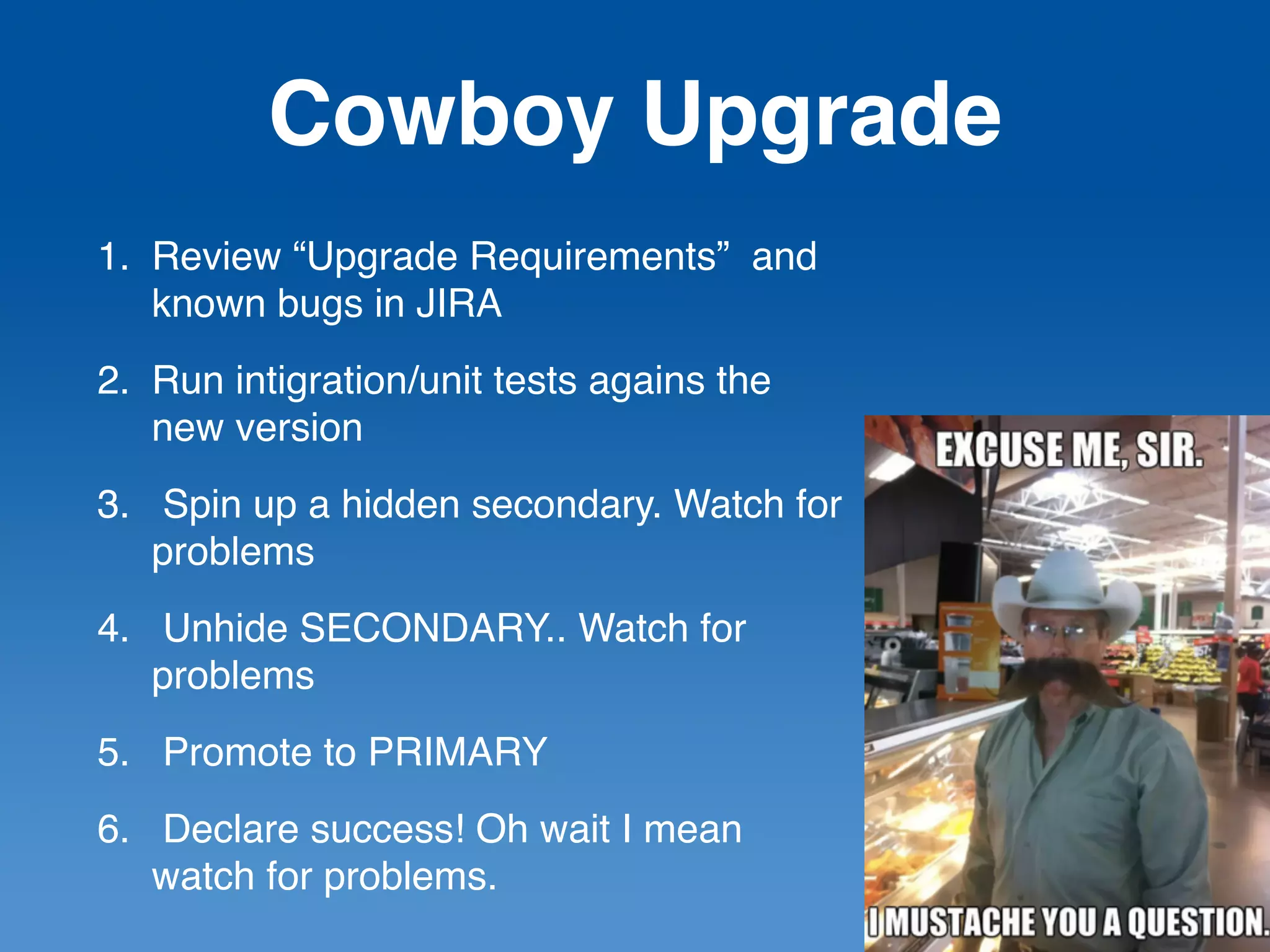

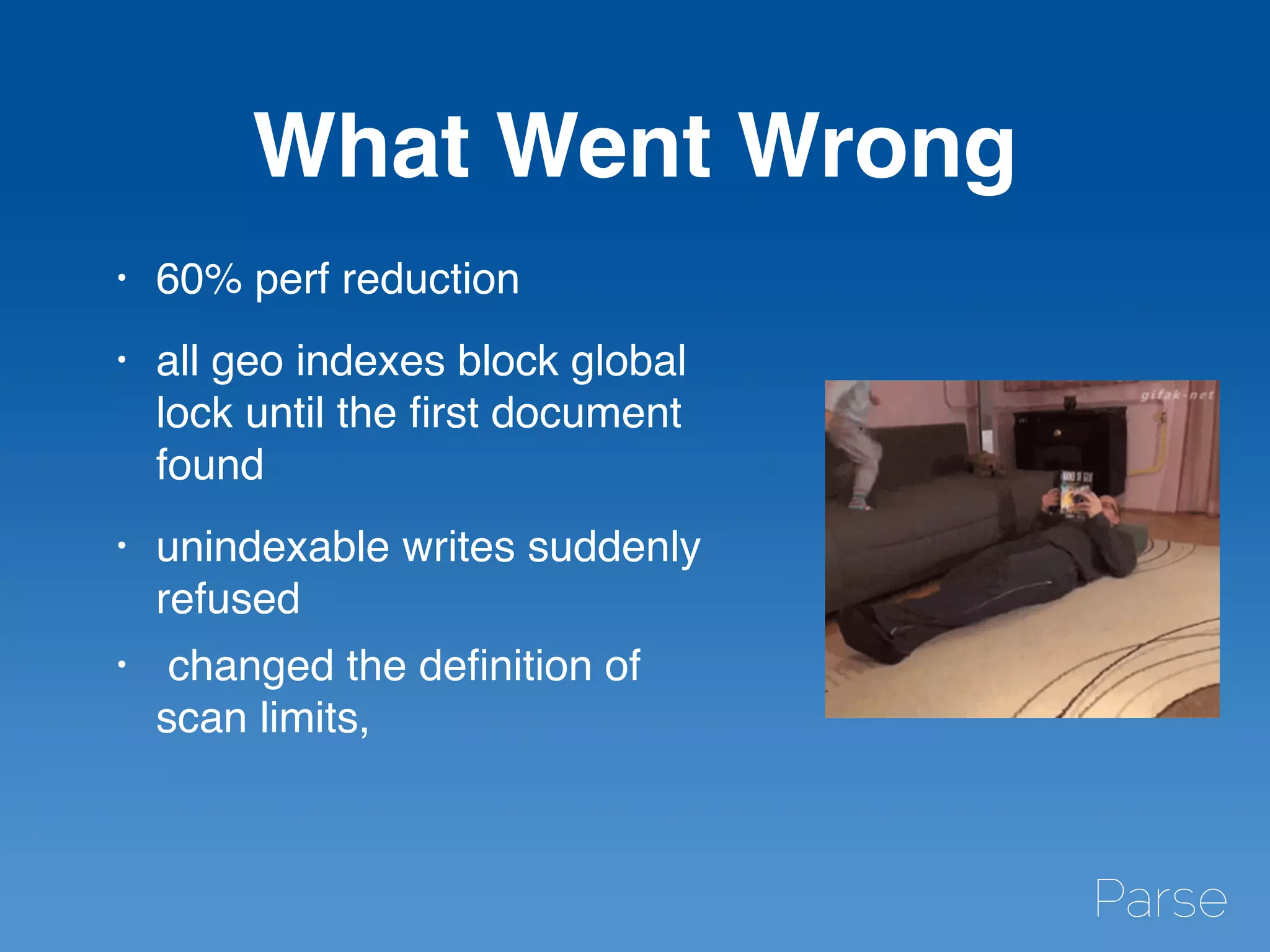

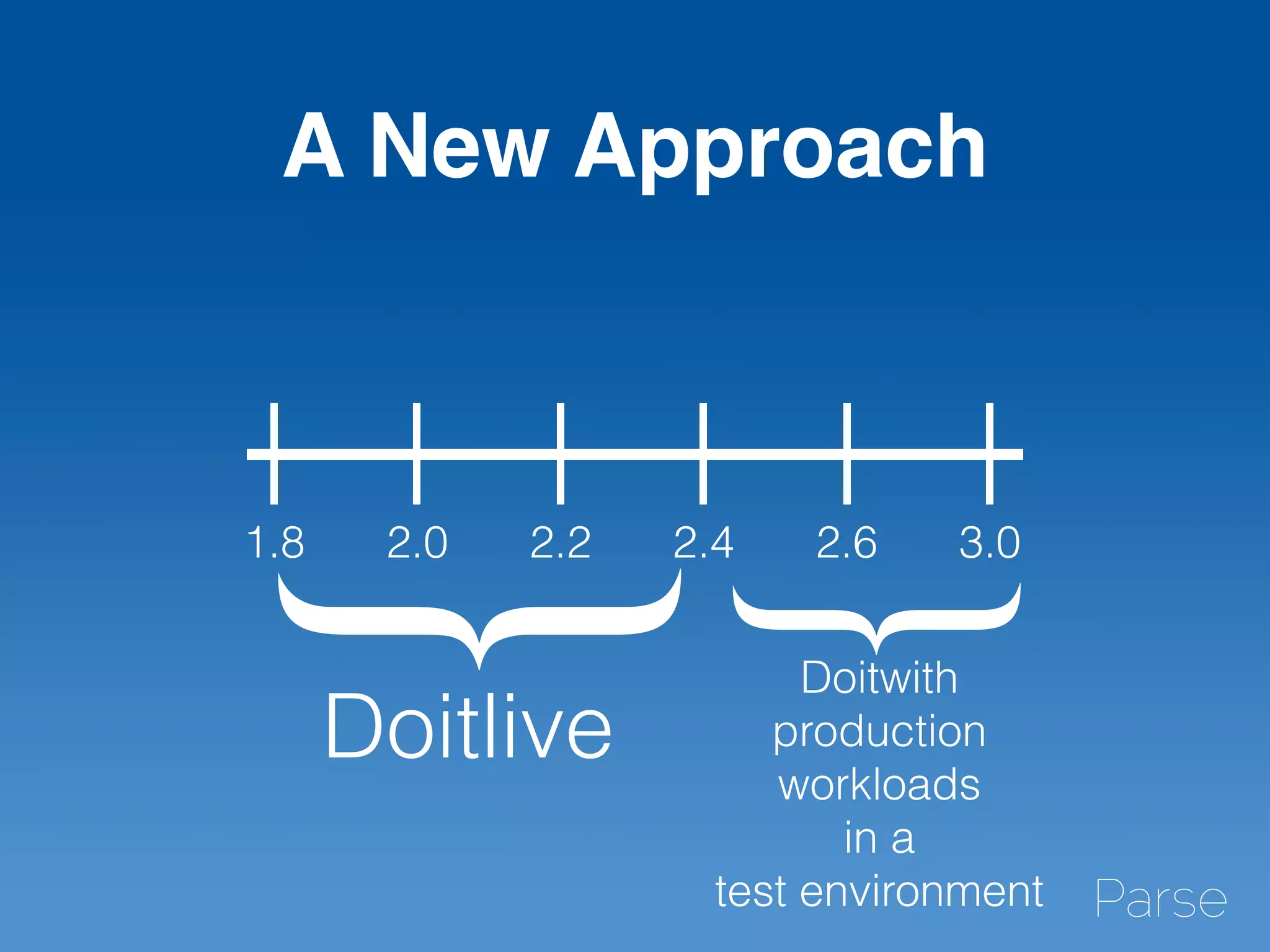

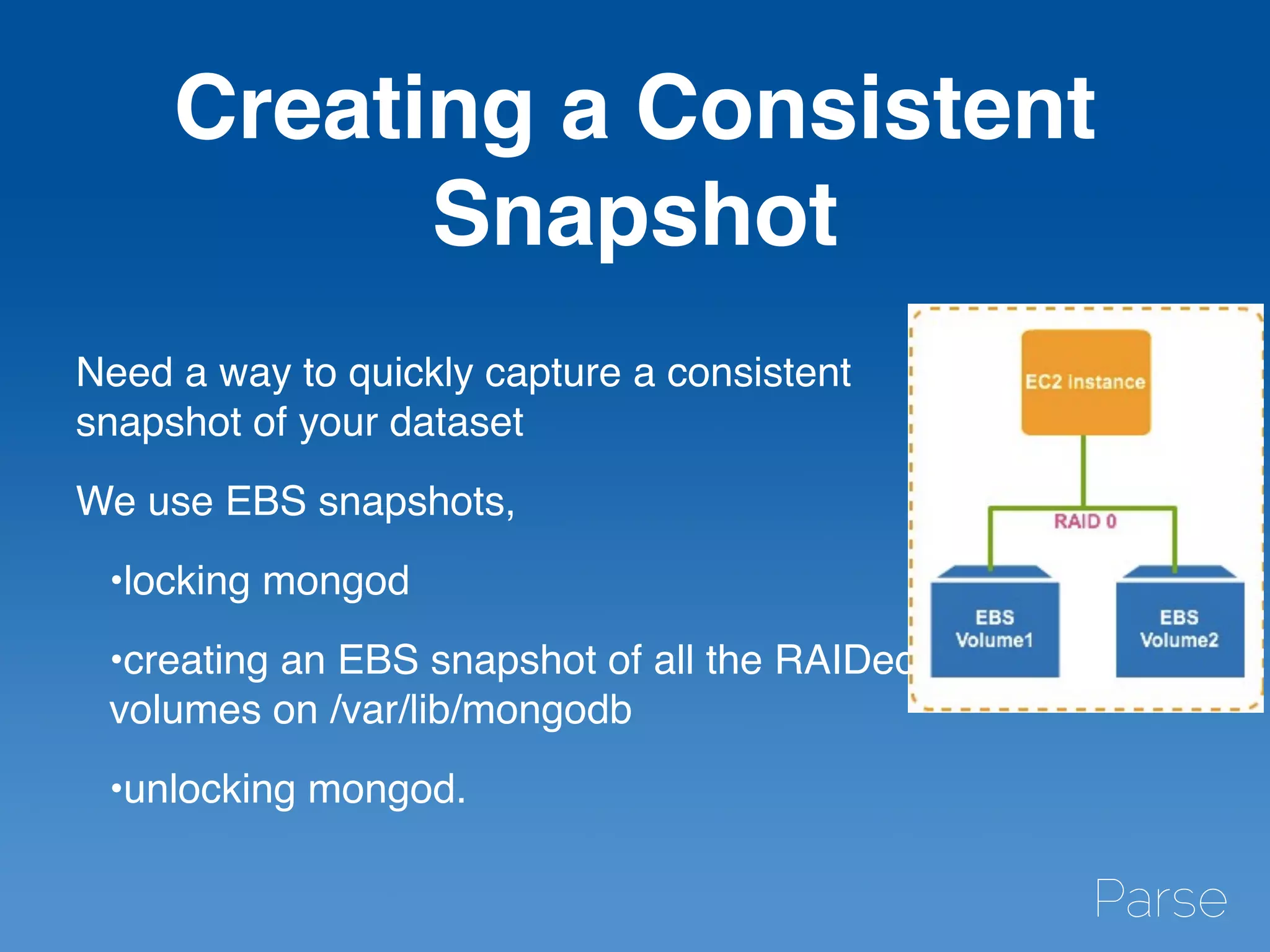

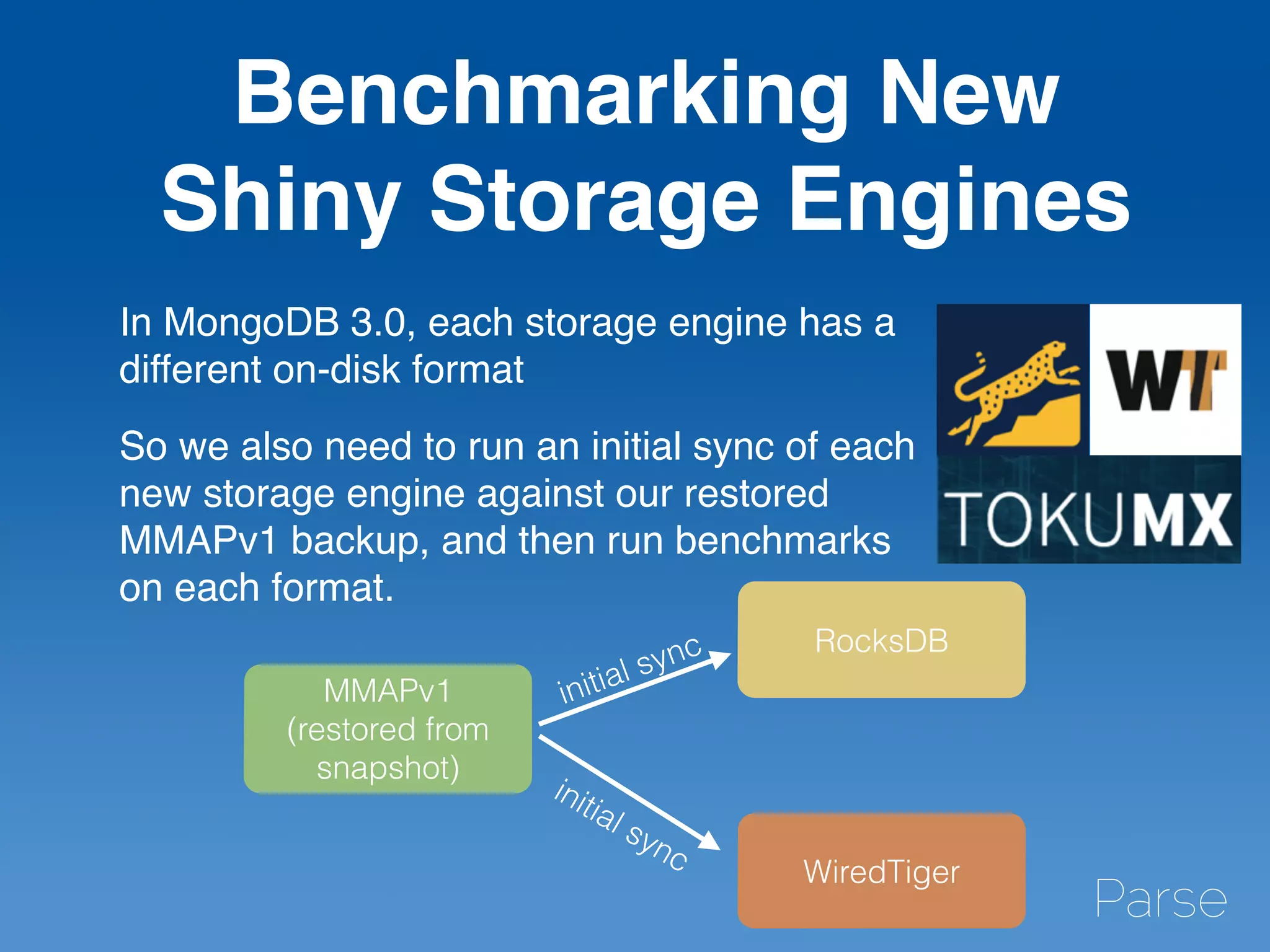

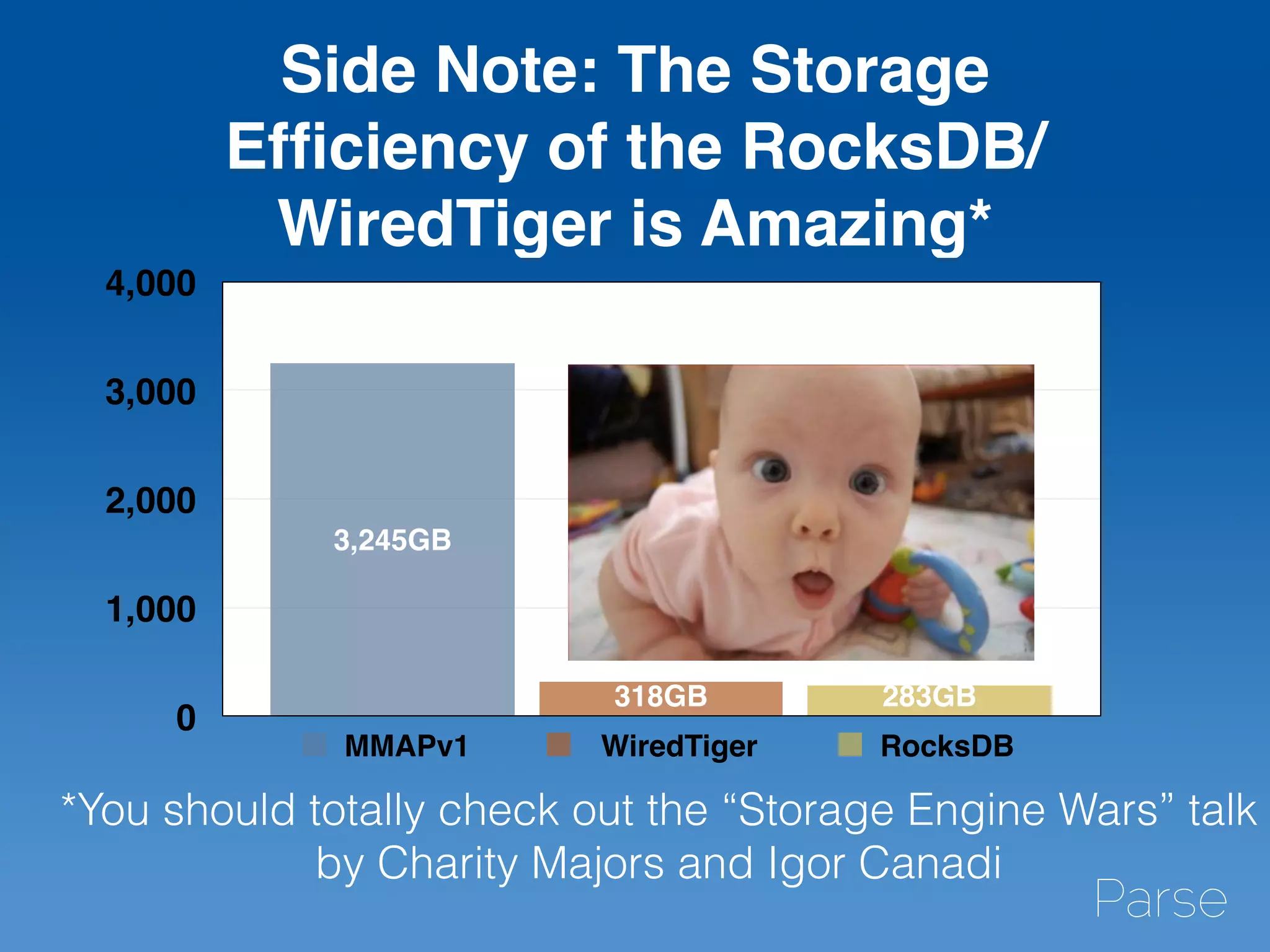

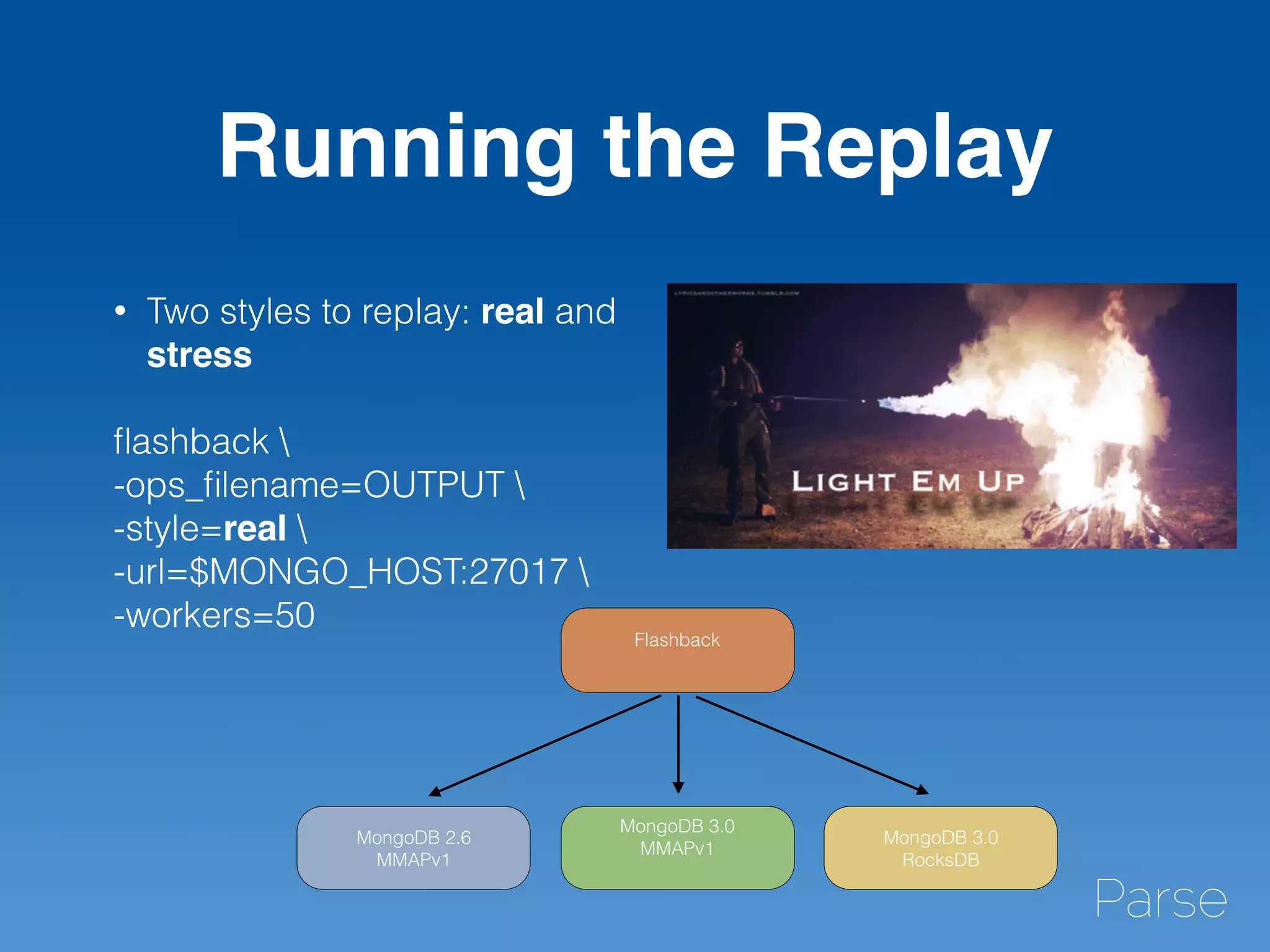

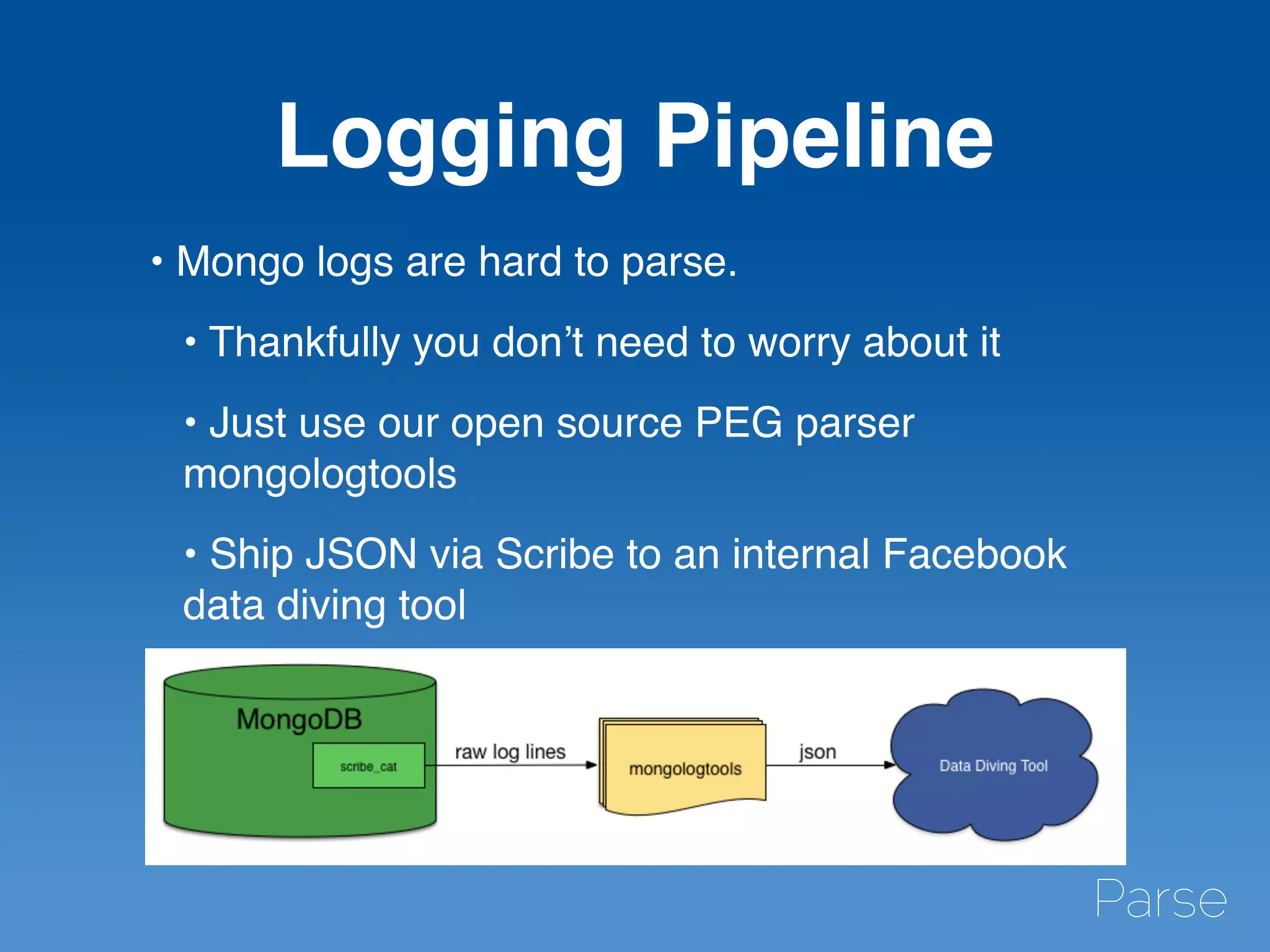

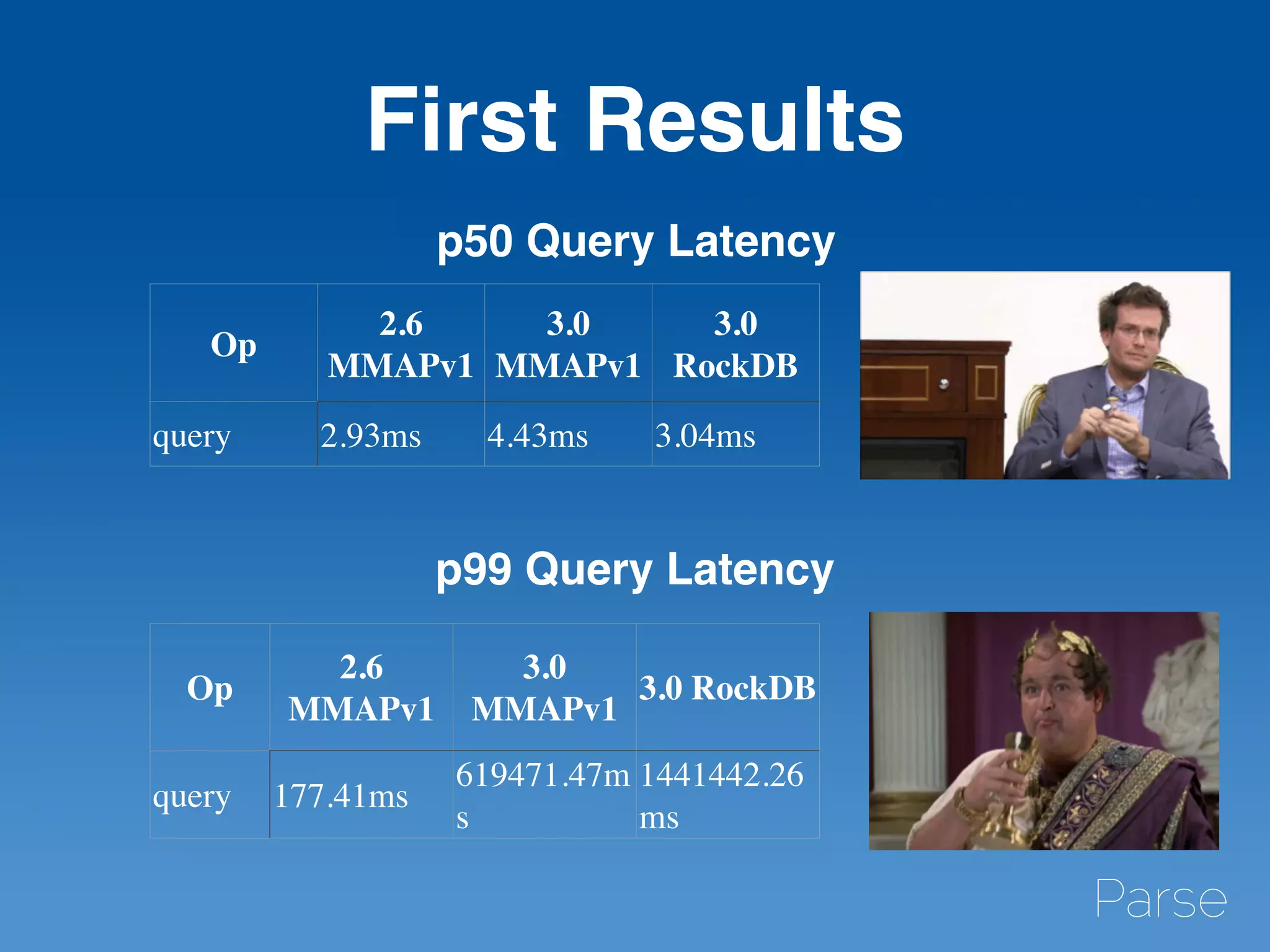

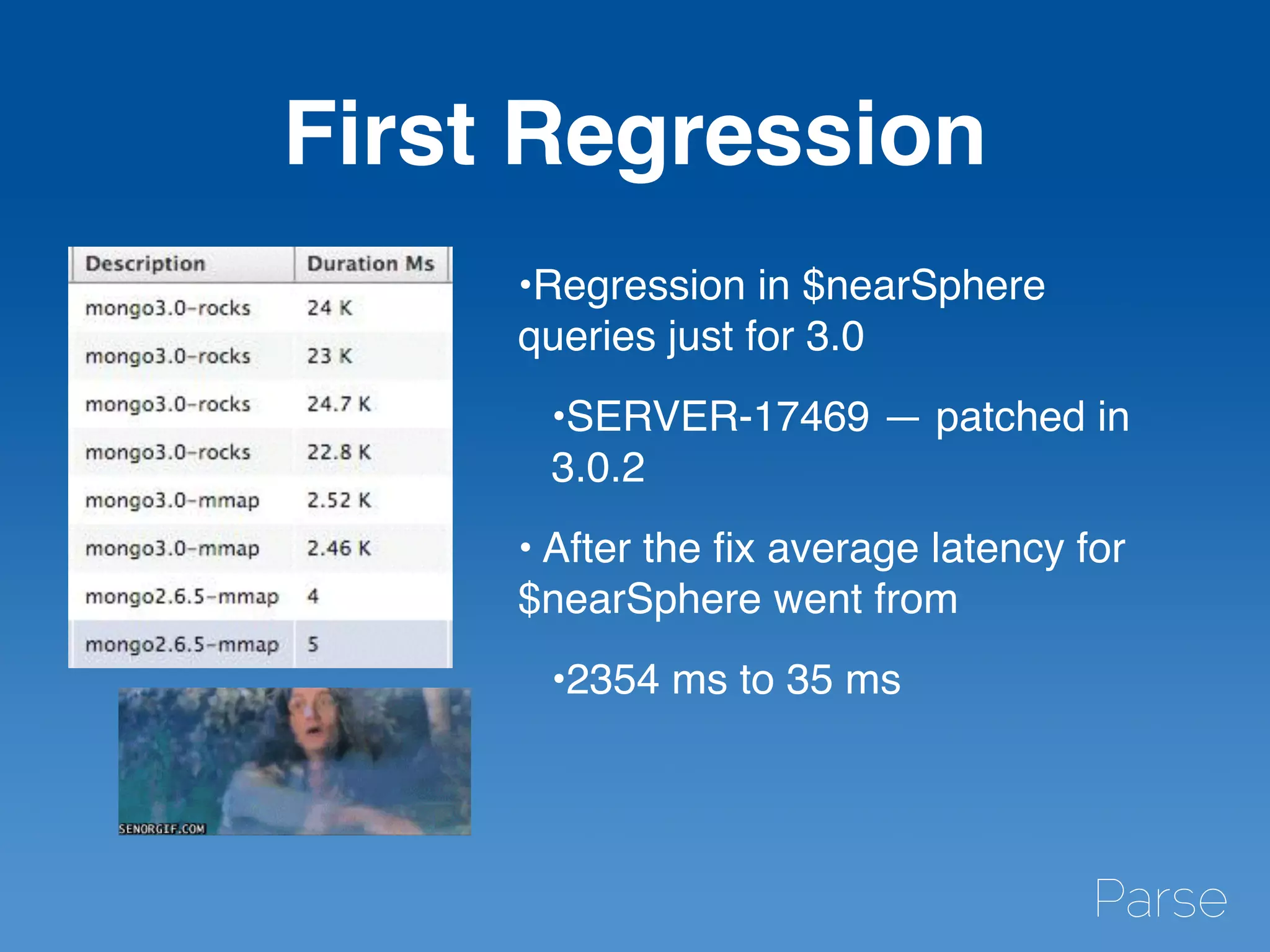

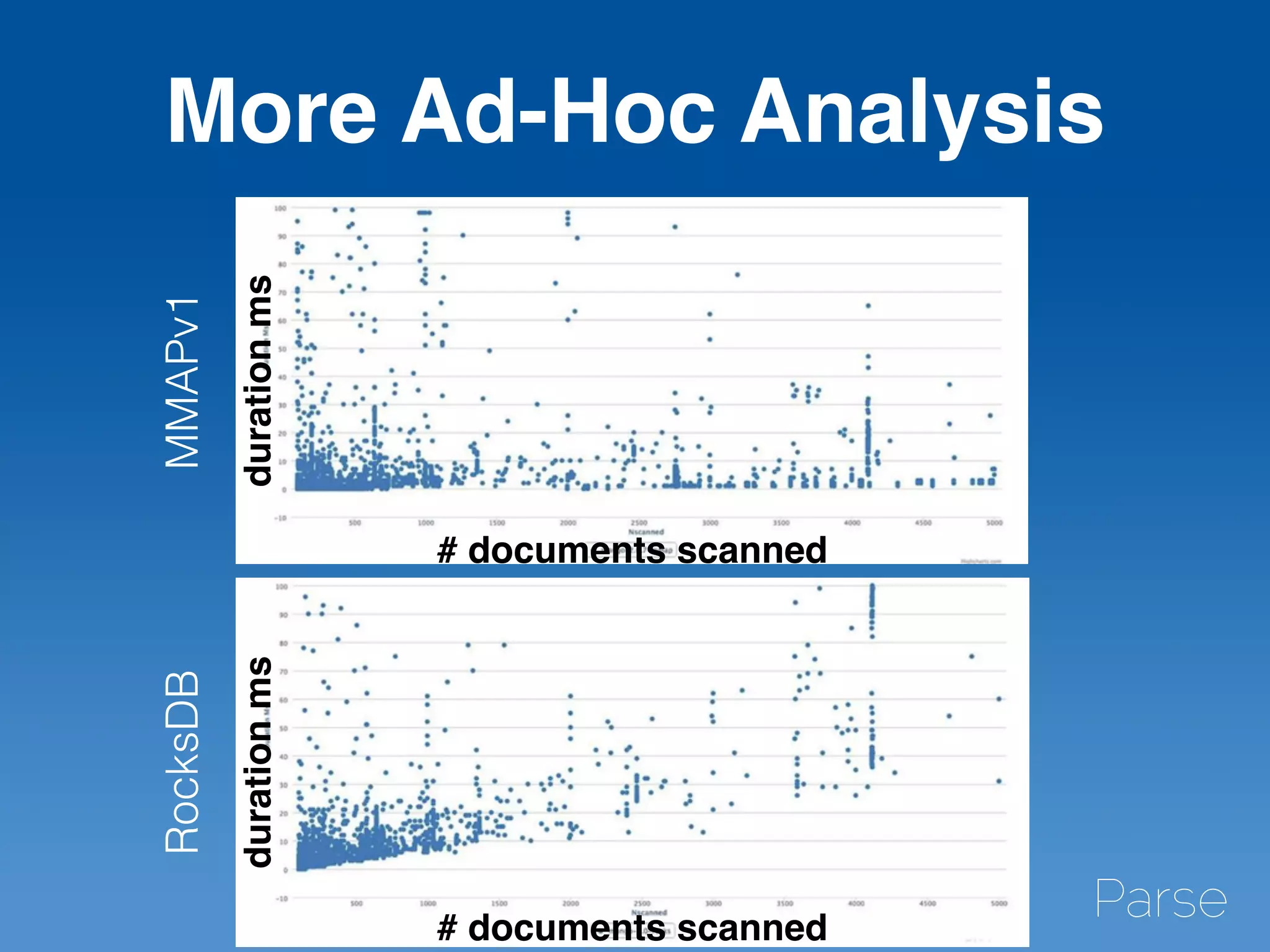

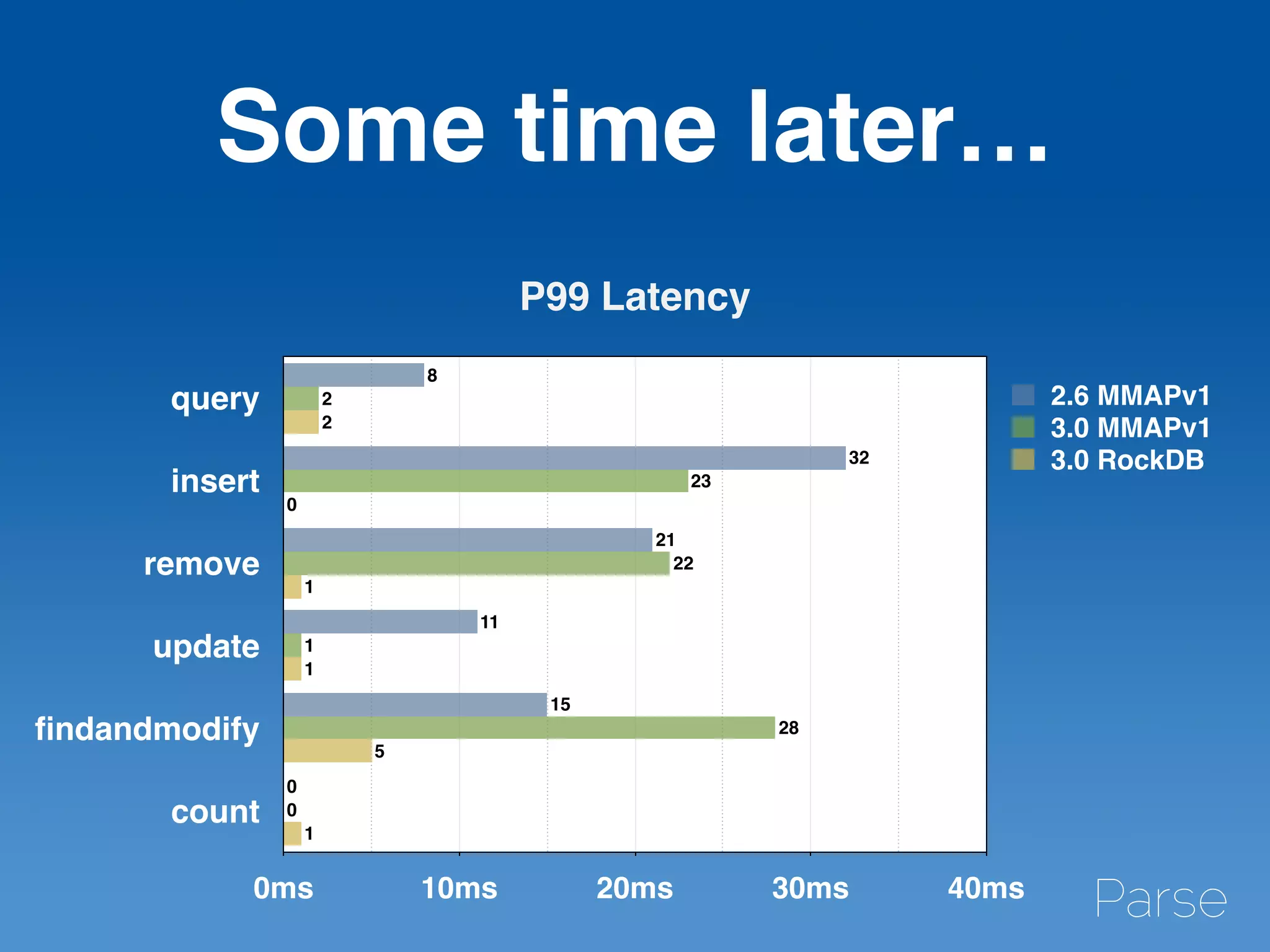

The document covers Mike Kania's experience as a production engineer at Parse, focusing on benchmarking and load testing MongoDB. It outlines the complexity of Parse's infrastructure, including the process for upgrading MongoDB versions, the performance problems an upgrade can surface, and the open-source tools used to capture production workloads. It also describes how Parse set up its storage and its benchmarking strategy, and compares the performance of the different storage engines in MongoDB 3.0.

![Benchmarks Won’t Find Everything](https://image.slidesharecdn.com/mongobenchmarkingcopy-150605202722-lva1-app6891/75/Benchmarking-Load-Testing-and-Preventing-Terrible-Disasters-30-2048.jpg)

Benchmarks Won’t Find Everything

- [RocksDB] Prefix collision could happen between restarts: https://github.com/mongodb-partners/mongo/commit/da8a90b3b71bf291684ffc5a6d2fd32118ce1a7b
- [MongoDB] Secondary reads block replication: https://jira.mongodb.org/browse/SERVER-18190