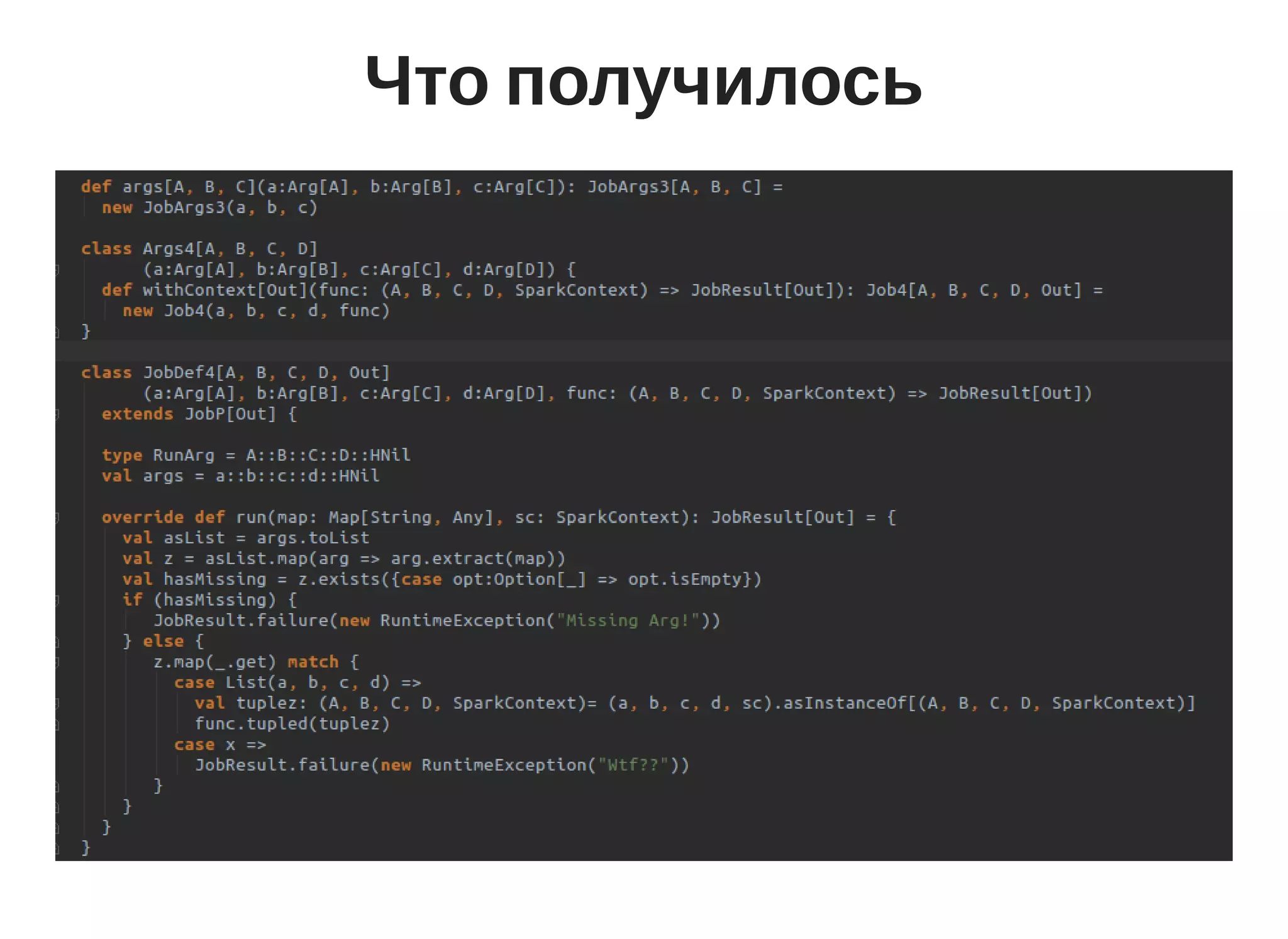

This talk uses Shapeless to build a generic, type-level API for Spark jobs. Job arguments and the job context are declared in a type-safe way with HLists and type classes, and Shapeless combinators together with type-level programming remove the boilerplate those declarations would otherwise need.

![Spark app

spark/bin/spark-submit

--class "SimpleApp"

--master local[4]

target/scala-2.11/simple-project_2.11-1.0.jar](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-10-2048.jpg)

![Library Api

object UserJob extends Job {

def execute(a: String, b: Int): Map[String, Any] = {

val wordsToCount = sc.textFile("hdfs://...").flatMap(line

wordsToCount

}

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-15-2048.jpg)

![Prototype

object MyJob extends Job[R] {

override def defineJob: JobDef[R] = ???

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-17-2048.jpg)

,

arg[String]("str")

)

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-18-2048.jpg)

![Prototype

object MyJob extends Job[Int] {

override def define = {

args(arg[String]("path")).withSparkContext(

(p: String, sc: SparkContext) => {

sc.textFile("hdfs://" + p)

})

}

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-19-2048.jpg)

![Finch

val route = get("foo" :: path[String]) { s: String =>

Ok(s"Hello $s")

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-21-2048.jpg)

![Arg

trait Arg[A]{

def extract(ctx: Ctx): Option[A]

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-24-2048.jpg)
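The `Arg` trait above can be sketched in plain Scala as follows. This is a minimal sketch, not the talk's implementation: `Ctx` as a map of raw string parameters and the `Parse` type class are assumptions introduced only for illustration; the two `arg` constructors mirror the signatures shown on the slides.

```scala
object ArgSketch {
  // Hypothetical context: raw string parameters, as a job server might pass them.
  final case class Ctx(params: Map[String, String])

  trait Arg[A] {
    def extract(ctx: Ctx): Option[A]
  }

  // Hypothetical helper type class: how to read an A from a raw string.
  trait Parse[A] { def parse(raw: String): Option[A] }
  object Parse {
    implicit val forString: Parse[String] = new Parse[String] {
      def parse(raw: String): Option[String] = Some(raw)
    }
    implicit val forInt: Parse[Int] = new Parse[Int] {
      def parse(raw: String): Option[Int] = scala.util.Try(raw.trim.toInt).toOption
    }
  }

  // Required argument: None when the key is missing or cannot be parsed.
  def arg[A](name: String)(implicit p: Parse[A]): Arg[A] = new Arg[A] {
    def extract(ctx: Ctx): Option[A] = ctx.params.get(name).flatMap(p.parse)
  }

  // Argument with a default: the default is used only when the key is absent.
  def arg[A](name: String, default: A)(implicit p: Parse[A]): Arg[A] = new Arg[A] {
    def extract(ctx: Ctx): Option[A] = ctx.params.get(name) match {
      case Some(raw) => p.parse(raw)
      case None      => Some(default)
    }
  }
}
```

With a context such as `Ctx(Map("n" -> "4"))`, `arg[Int]("n")` extracts `Some(4)`, while a missing key yields `None` unless a default is supplied.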

: Arg[A]

def arg[A](name: String, default: A): Arg[A]](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-25-2048.jpg)

: Args1[A]

def args[A, B](a1: Arg[A], a2: Arg[B]): Args2[A, B]](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-26-2048.jpg)

{

def withContext[Res](

f: (A, SparkContext) => Res): JobDef[Res]

}

class Args2[A, B](a1: Arg[A], a2: Arg[B]) {

def withContext[Res](

f: (A, B, SparkContext) => Res): JobDef[Res]

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-27-2048.jpg)

: Args${arity}[${`A..N`}]

- new Args${arity}(${`a1..aN`})

|}

|

|object Methods extends Methods

"""](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-29-2048.jpg)

![What to return

trait Job[R] {

def define: JobDef[R]

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-30-2048.jpg)

![No way

trait Job[A] {

def define: JobDef1[A, R]

def define: JobDef2[A, B, R]

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-31-2048.jpg)

![Type member

trait Generic[A] {

def foo(a: A): Unit

}

trait Member {

type A

def foo(a: A): Unit

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-32-2048.jpg)

![Preserving the types

trait JobDef[R] {

type Args

def args: Args

def run(ctx: Ctx): R

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-33-2048.jpg)

=> R)

extends JobDef[R] {

type Args = A :: HNil

def args = a :: HNil

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-34-2048.jpg)

= a.extract(?)

}

https://milessabin.com/blog/2012/04/27/shapeless-polymorphic-function-values-1/](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-36-2048.jpg)

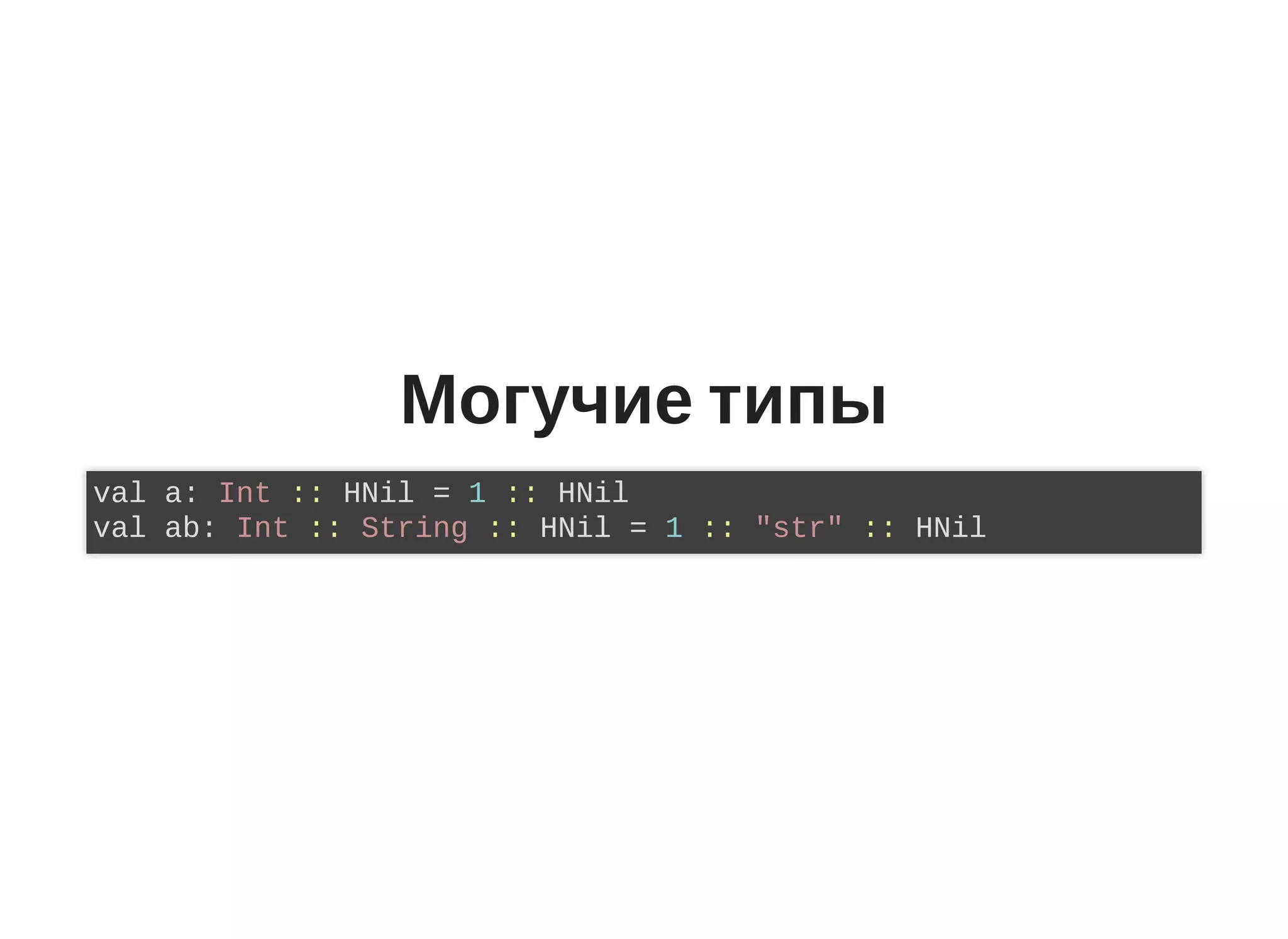

![Powerful types

trait Foo[A]{

def map[B](f: A => B): Foo[B]

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-40-2048.jpg)

![implicit ~= match

trait Show[A] {

def show(a: A): String

}

object Show {

implicit val forString: Show[String] = ...

implicit val forInt: Show[Int] = ...

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-42-2048.jpg)
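The "implicit ~= match" idea can be made concrete with a small, self-contained sketch: the compiler picks an instance by type, much as a `match` picks a branch by value. The instance bodies and the `forList` instance are assumptions added for illustration; the slide elides them.

```scala
object ShowSketch {
  trait Show[A] { def show(a: A): String }

  object Show {
    implicit val forString: Show[String] = new Show[String] {
      def show(a: String): String = "\"" + a + "\""
    }
    implicit val forInt: Show[Int] = new Show[Int] {
      def show(a: Int): String = a.toString
    }
    // Derived instance (hypothetical): resolution recurses on the element type,
    // similar to a nested pattern in a match expression.
    implicit def forList[A](implicit sa: Show[A]): Show[List[A]] = new Show[List[A]] {
      def show(as: List[A]): String = as.map(sa.show).mkString("[", ", ", "]")
    }
  }

  // The "match" happens at compile time, on the static type of `a`.
  def show[A](a: A)(implicit s: Show[A]): String = s.show(a)
}
```

Here `ShowSketch.show(List(1, 2, 3))` resolves `forList` and then `forInt`, while a type without an instance is rejected at compile time rather than failing at runtime.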

![implicit ~= match

trait DepFn[A] {

type Out

def apply(a: A): Out

}

object DepFn {

implicit val forString: DepFn[String] = ...

implicit val forInt: DepFn[Int] = ...

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-43-2048.jpg)

![DepFn

def size(a: Any): Int = a match {

case i: Int => i

case s: String => s.length

}

trait Size[A] {

type Out

def apply(a: A): Out

}

object Size {

implicit val forStr: Size[String] = ...

implicit val forInt: Size[Int] = ...

}

def size[A](a: A)(implicit sz: Size[A]): sz.Out =

sz(a)](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-44-2048.jpg)
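A runnable version of the `Size` dependent function might look like the sketch below. The instance bodies are assumptions, and the `forDouble` instance is hypothetical, added only so that the result type visibly depends on the argument type. Note the refinement `{ type Out = ... }` on each instance: without it, callers would see `Out` as an abstract type.

```scala
object SizeSketch {
  trait Size[A] {
    type Out
    def apply(a: A): Out
  }

  object Size {
    // The refinement keeps the concrete result type visible to callers.
    implicit val forStr: Size[String] { type Out = Int } = new Size[String] {
      type Out = Int
      def apply(a: String): Int = a.length
    }
    implicit val forInt: Size[Int] { type Out = Int } = new Size[Int] {
      type Out = Int
      def apply(a: Int): Int = a
    }
    // Hypothetical extra instance with a different result type.
    implicit val forDouble: Size[Double] { type Out = Double } = new Size[Double] {
      type Out = Double
      def apply(a: Double): Double = math.abs(a)
    }
  }

  // The return type depends on which instance the compiler selects.
  def size[A](a: A)(implicit sz: Size[A]): sz.Out = sz(a)
}
```

Because the result type is path-dependent, `val n: Int = SizeSketch.size("abc")` and `val d: Double = SizeSketch.size(-2.5)` both compile without casts.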

![DepFn

object ToHList {

implicit def tuple1[A] = new ToHList[Tuple1[A]] {

type Out = A :: HNil

def apply(a: Tuple1[A]): A :: HNil = a._1 :: HNil

}

}

def foo[A](a: A)(implicit thl: ToHList[A]): thl.Out =

thl(a)](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-45-2048.jpg)

(implicit

thl: ToHList[A],

rev: Reverse[?]

): rev.Out =

rev(thl(a))](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-46-2048.jpg)

![Aux

object ToHList {

type Aux[A, Out0] = ToHList[A] { type Out = Out0 }

implicit def tuple1[A]: Aux[Tuple1[A], A :: HNil] =

new ToHList[Tuple1[A]] {

type Out = A :: HNil

def apply(a: Tuple1[A]): A :: HNil = a._1 :: HNil

}

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-47-2048.jpg)

(

implicit

thl: ToHList.Aux[A, H],

r: Reverse[H]): r.Out = r(thl(a))](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-48-2048.jpg)
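The Aux pattern can be tried without Shapeless. In Scala 2, one implicit parameter cannot refer to the type member of another parameter in the same parameter list, so the type member is lifted into a type parameter that the second implicit can use. In the sketch below, `First` and `Render` are hypothetical type classes standing in for `ToHList` and `Reverse`.

```scala
object AuxSketch {
  // Hypothetical dependent function: takes the first element of a pair.
  trait First[A] {
    type Out
    def apply(a: A): Out
  }
  object First {
    // Aux lifts the type member Out into a type parameter.
    type Aux[A, Out0] = First[A] { type Out = Out0 }

    implicit def forPair[A, B]: Aux[(A, B), A] = new First[(A, B)] {
      type Out = A
      def apply(p: (A, B)): A = p._1
    }
  }

  // Hypothetical second type class that consumes First's result type.
  trait Render[A] { def render(a: A): String }
  object Render {
    implicit val forInt: Render[Int] = new Render[Int] {
      def render(a: Int): String = "Int(" + a + ")"
    }
    implicit val forString: Render[String] = new Render[String] {
      def render(a: String): String = "String(" + a + ")"
    }
  }

  // H is solved by the first implicit and then drives the search for the second.
  def renderFirst[A, H](a: A)(implicit first: First.Aux[A, H], r: Render[H]): String =
    r.render(first(a))
}
```

Calling `AuxSketch.renderFirst((1, "x"))` fixes `H` to `Int` through `First.Aux` and then finds `Render[Int]`; the caller never writes `H` explicitly.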

![Time to fix it

object MyJob extends Job[Int] {

override def define = {

args(arg[String]("path"), arg[Int]("n")).withSparkContext(

(p: String, sc: SparkContext) => {

})

}

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-50-2048.jpg)

class JobDefN[A .. N, R](

a1: Arg[A],

...

aN: Arg[N],

f: (A.. N, SparkContext) => R)

extends JobDef[R] {

type RunArgs = A :: ... :: N :: HNil

def args = a1 :: ... :: aN :: HNil

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-51-2048.jpg)

![Combinators

trait Arg[A] { self =>

def &[B](b: Arg[B]): Arg[A :: B :: HNil] = ???

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-52-2048.jpg)

![Combinators

val a: ArgDef[A] = arg[A]("a")

val b: ArgDef[B] = arg[B]("b")

val c: ArgDef[C] = arg[C]("c")

val ab: ArgDef[A :: B :: HNil] = a & b

val abc: ArgDef[A :: B :: C :: HNil] = a & b & c](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-53-2048.jpg)

![Extract

def &[B](b: Arg[B]): Arg[A :: B :: HNil] = {

new Arg[A :: B :: HNil] {

def extract(ctx: Ctx): Option[A :: B :: HNil] = {

val opt1 = self.extract(ctx)

val opt2 = b.extract(ctx)

(opt1, opt2) match {

case (Some(a), Some(b)) => Some(a :: b :: HNil)

case _ => None

}

}

}

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-54-2048.jpg)
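The `&` combinator can be exercised without Shapeless by letting `Tuple2` stand in for `A :: B :: HNil`. This is a simplified sketch under that assumption; `Ctx`, `str` and `int` are hypothetical helpers. It also shows why flattening is needed: combining three arguments nests the result.

```scala
object CombinatorSketch {
  // Hypothetical context; a real one would also carry the SparkContext.
  final case class Ctx(params: Map[String, String])

  trait Arg[A] { self =>
    def extract(ctx: Ctx): Option[A]

    // Succeeds only when both sides succeed.
    // Tuple2 stands in for A :: B :: HNil in this sketch.
    def &[B](other: Arg[B]): Arg[(A, B)] = new Arg[(A, B)] {
      def extract(ctx: Ctx): Option[(A, B)] =
        for {
          a <- self.extract(ctx)
          b <- other.extract(ctx)
        } yield (a, b)
    }
  }

  // Hypothetical constructors for string and integer arguments.
  def str(name: String): Arg[String] = new Arg[String] {
    def extract(ctx: Ctx): Option[String] = ctx.params.get(name)
  }
  def int(name: String): Arg[Int] = new Arg[Int] {
    def extract(ctx: Ctx): Option[Int] =
      ctx.params.get(name).flatMap(s => scala.util.Try(s.toInt).toOption)
  }
}
```

With this encoding, `str("path") & int("n") & str("path")` has type `Arg[((String, Int), String)]`. The nesting is the tuple analogue of the problem that an HList-flattening step such as `Adjoin` addresses.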

& arg[Int]("b")

val dc = arg[String]("c") & arg[Int]("d")

val abdc = ab & dc](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-55-2048.jpg)

![Adjoin

def &[B](b: Arg[B])(

implicit

adj: Adjoin[A :: B :: HNil]

): Arg[adj.Out] = {

(opt1, opt2) match {

case (Some(a), Some(b)) => Some(adj(a :: b :: HNil))

}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-56-2048.jpg)

![The context is an argument too

val sparkContext = new Arg[SparkContext] {

def extract(ctx: Ctx): Option[SparkContext] = Some(ctx.sparkContext)

}

val args = arg[Int]("n") & sparkContext](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-57-2048.jpg)

![FnToProduct

trait Arg[A] { self =>

def apply[F, In <: HList, Res](f: F)(

implicit

fntp: FnToProduct.Aux[F, In => Res]): JobDef[Res] = ???

}

val args = arg[Int]("n") & sparkContext

val jobdef = args {(n, sparkContext) => ...}](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-58-2048.jpg)

(

implicit

gen: Generic.Aux[A, H],

r: LeftReducer.Aux[H, reducer.type, ROut],

ev: ROut <:< ArgDef[Z]

)](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-59-2048.jpg)

![implicitNotFound

import scala.annotation.implicitNotFound

@implicitNotFound("Not found Foo ${A}")

trait Foo[A]

def foo[A](a: A)(implicit ev: Foo[A]): Unit = ()

foo(1) // Not found Foo Int](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-61-2048.jpg)

![implicitNotFound

def bar[X, R](x: X)(implicit aux: Aux[X, R], foo: Foo[R])

bar(1) //Not found Foo R](https://image.slidesharecdn.com/mytalk-171204131636/75/Api-Shapeless-62-2048.jpg)