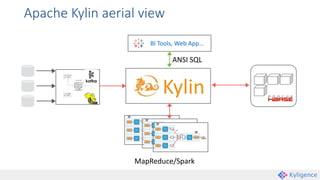

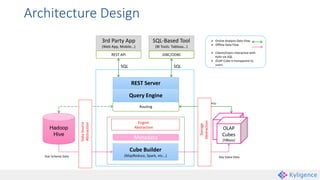

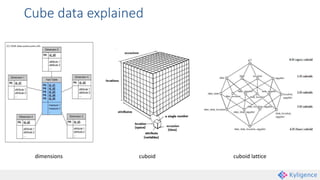

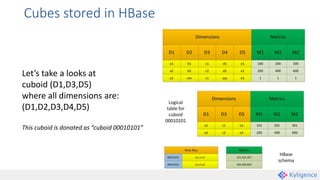

Apache Kylin is an open-source distributed analytics engine that supports SQL-based multidimensional analysis (OLAP) on large datasets stored in Hadoop. It pre-aggregates data into OLAP cubes, which are stored in HBase, so that queries over massive data volumes read precomputed results instead of scanning the raw data. Kylin has been widely adopted by major companies and exposes several interfaces for working with it, including a REST API and JDBC/ODBC drivers.
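
The pre-aggregation idea can be illustrated with a small, self-contained sketch. This is not Kylin's code or API: the function name `build_cube`, the sample rows, and the in-memory dictionary standing in for the cube store are all assumptions made for illustration. It precomputes `SUM(sales)` for every combination of dimensions (each combination is often called a cuboid), so that a later `GROUP BY` becomes a lookup rather than a scan.

```python
from collections import defaultdict
from itertools import combinations


def build_cube(rows, dimensions, measure):
    """Pre-aggregate SUM(measure) for every subset of `dimensions`.

    Hypothetical helper for illustration only. Returns a dict that maps
    each cuboid (a tuple of dimension names) to its aggregated values.
    """
    cube = {}
    for size in range(len(dimensions) + 1):
        for cuboid in combinations(dimensions, size):
            totals = defaultdict(int)
            for row in rows:
                # The group key is the row's values for this cuboid's dimensions.
                key = tuple(row[d] for d in cuboid)
                totals[key] += row[measure]
            cube[cuboid] = dict(totals)
    return cube


# Made-up sample fact table.
rows = [
    {"country": "US", "year": 2023, "sales": 100},
    {"country": "US", "year": 2024, "sales": 150},
    {"country": "DE", "year": 2023, "sales": 80},
]

cube = build_cube(rows, ("country", "year"), "sales")

# Equivalent of: SELECT country, SUM(sales) ... GROUP BY country
# answered by a dictionary lookup instead of a scan over `rows`.
print(cube[("country",)])

# Equivalent of: SELECT SUM(sales) ... (no GROUP BY)
print(cube[()])
```

With two dimensions the sketch builds four cuboids. The number of cuboids doubles with each added dimension, which is why a real cube design has to limit which combinations are materialized.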