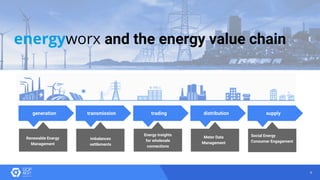

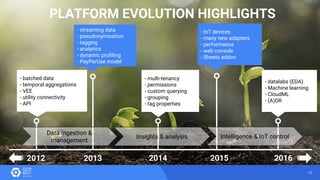

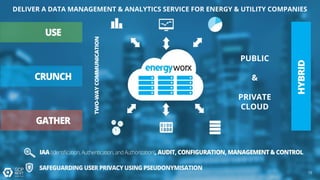

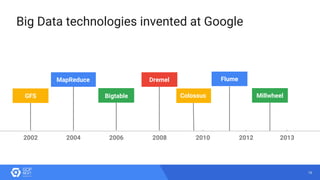

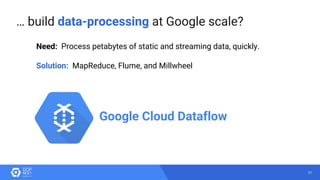

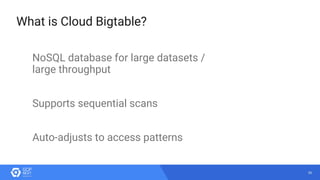

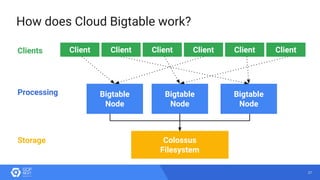

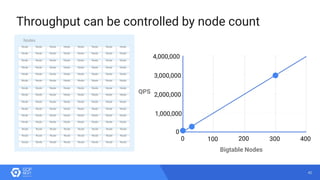

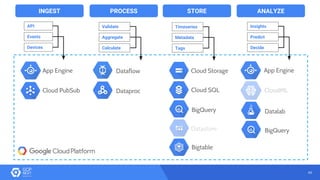

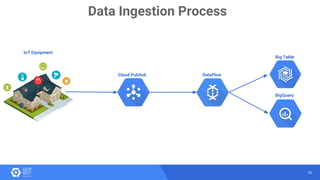

The document discusses analyzing smart meter data with data management services built on Google Cloud technologies such as Bigtable, Dataflow, and BigQuery, with the aim of addressing challenges in the evolving energy market. It emphasizes the need for centralized data views and privacy safeguards, and notes the emergence of data-driven business models in the utility sector. Key advantages of the proposed solutions include cloud scalability, machine learning integration, and support for various IoT devices.