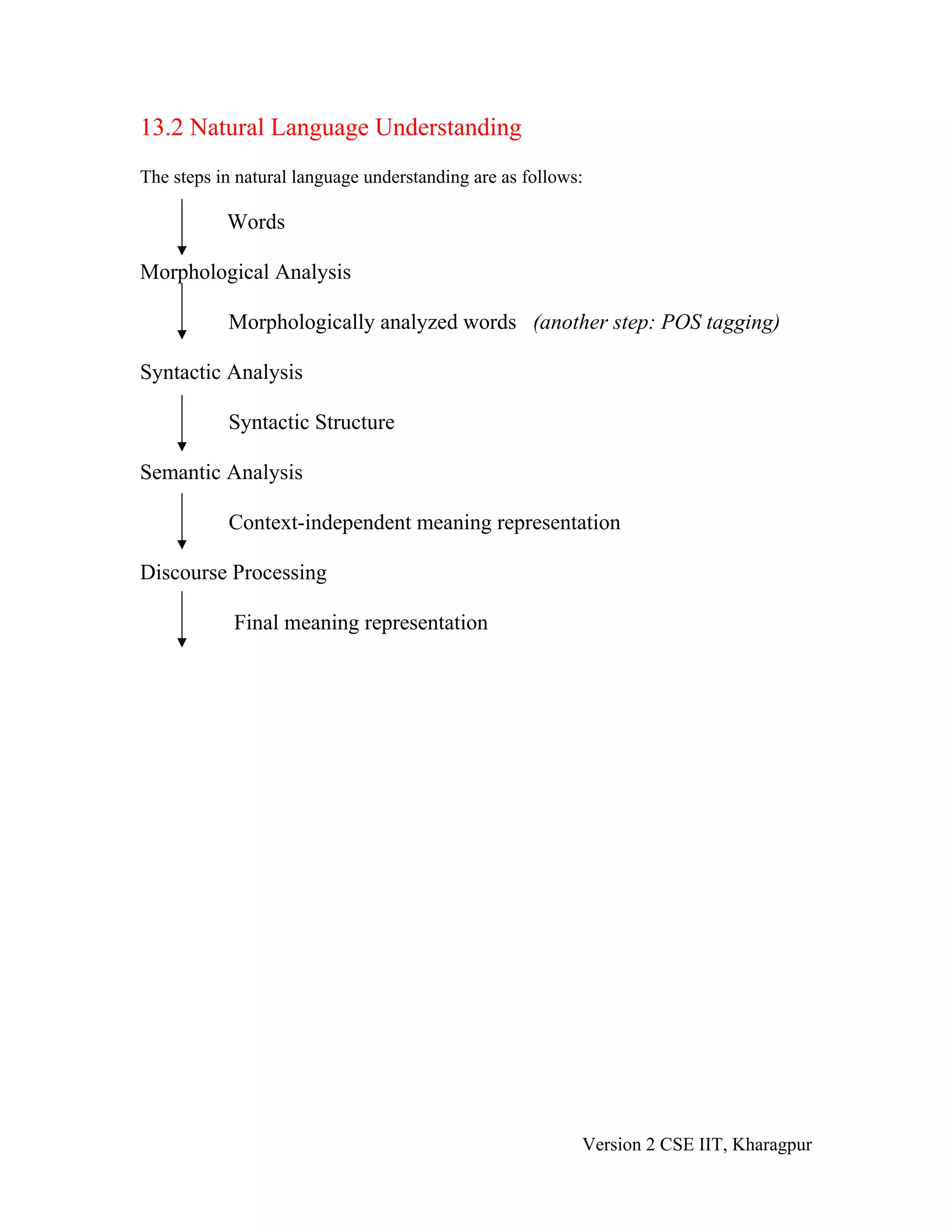

The document discusses natural language processing (NLP) and some of its key challenges. It explains that NLP aims to enable computers to understand and generate human languages. Understanding written text requires lexical, syntactic, semantic, and other knowledge of the language. Ambiguity arises at many levels, from individual words to whole sentences, and resolving it is difficult. The document outlines common sources of ambiguity and methods for addressing them, including part-of-speech tagging and probabilistic parsing. It also discusses models such as context-free grammars, which represent linguistic knowledge, and algorithms such as state space search and dynamic programming, which operate on these models. Finally, it gives an overview of the main steps in natural language understanding.
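As an illustration of how a context-free grammar, structural ambiguity, and dynamic programming fit together, here is a minimal sketch of the CKY algorithm, one standard dynamic-programming parser. The summarized document may use a different parsing algorithm. The toy grammar, the `BINARY` and `LEXICON` tables, and the `count_parses` function are hypothetical. The grammar is written in Chomsky normal form so that CKY applies directly, and the sketch counts parse trees instead of building them. For "I saw the man with the telescope", the phrase "with the telescope" can attach either to the verb phrase or to the noun phrase, which yields two parses.

```python
from collections import defaultdict

# Hypothetical toy grammar in Chomsky normal form (CNF).
# Binary rules: (left child, right child) -> list of parent labels.
BINARY = {
    ("NP", "VP"): ["S"],
    ("V", "NP"): ["VP"],
    ("VP", "PP"): ["VP"],   # PP attaches to the verb phrase
    ("NP", "PP"): ["NP"],   # PP attaches to the noun phrase
    ("Det", "N"): ["NP"],
    ("P", "NP"): ["PP"],
}

# Hypothetical lexical rules: word -> list of preterminal labels.
LEXICON = {
    "I": ["NP"],
    "saw": ["V"],
    "the": ["Det"],
    "man": ["N"],
    "telescope": ["N"],
    "with": ["P"],
}


def count_parses(words, start="S"):
    """Count distinct parse trees for `words` using CKY dynamic programming."""
    n = len(words)
    # chart[i][j][label] = number of ways `label` can span words[i:j].
    chart = [[defaultdict(int) for _ in range(n + 1)] for _ in range(n + 1)]

    # Length-1 spans come straight from the lexicon.
    for i, word in enumerate(words):
        for label in LEXICON.get(word, []):
            chart[i][i + 1][label] += 1

    # Longer spans are built from shorter ones. Each sub-span count is
    # computed once and reused, which is what makes this dynamic programming.
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            j = i + length
            for k in range(i + 1, j):  # split point
                for left, left_count in chart[i][k].items():
                    for right, right_count in chart[k][j].items():
                        for parent in BINARY.get((left, right), []):
                            chart[i][j][parent] += left_count * right_count

    return chart[0][n][start]


sentence = "I saw the man with the telescope".split()
print(count_parses(sentence))  # 2: PP attached to the VP or to the NP
```

A probabilistic parser would extend the same chart by storing the probability of the best subtree for each span and label, taking a maximum instead of a sum. This lets it choose among ambiguous analyses instead of only counting them.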