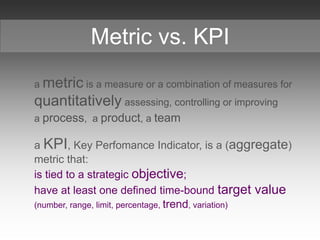

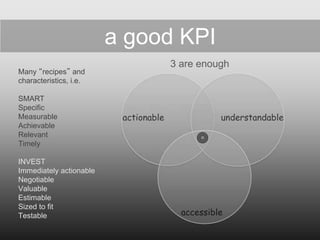

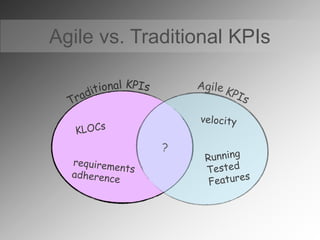

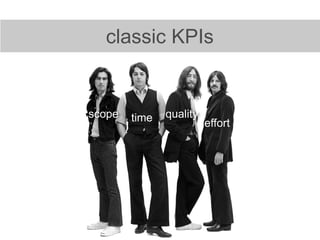

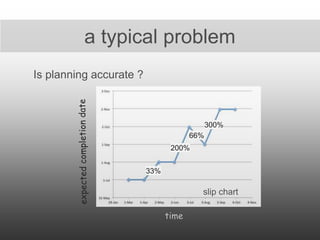

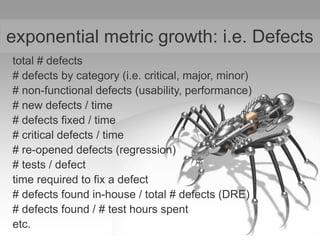

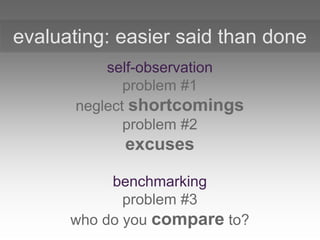

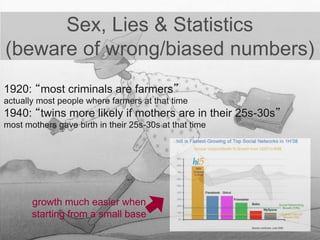

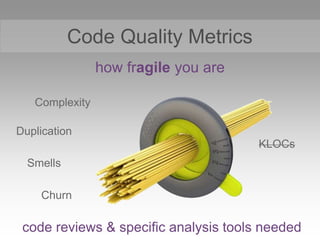

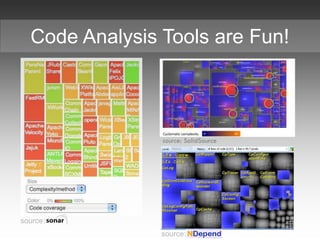

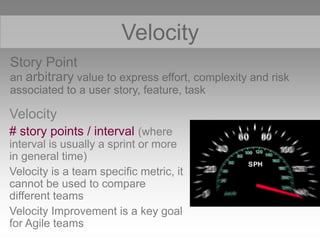

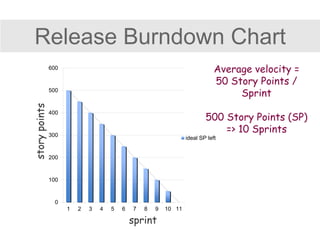

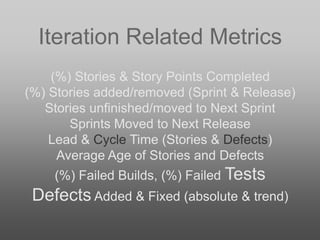

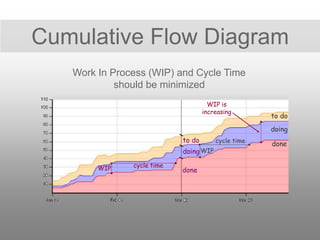

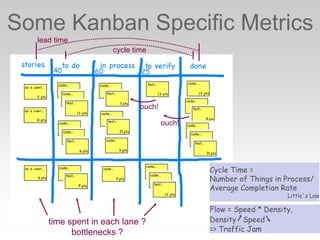

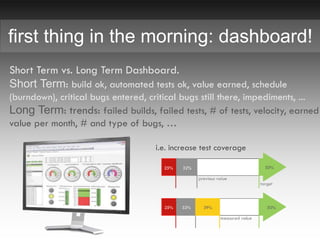

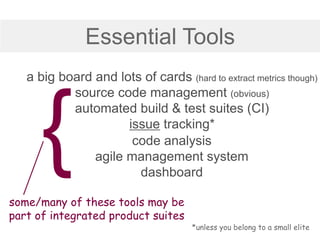

This document discusses key performance indicators (KPIs) for measuring agile projects. It begins by defining metrics and KPIs, noting that a KPI should be tied to a strategic objective and have a defined target. It then describes the characteristics of good KPIs and gives examples of both traditional and agile KPIs covering time, effort, scope, and quality. The document cautions that having too many KPIs can render them useless and advocates keeping metrics simple. It also covers challenges such as cheating on metrics, and offers tips on using tools and dashboards to measure agile performance effectively.