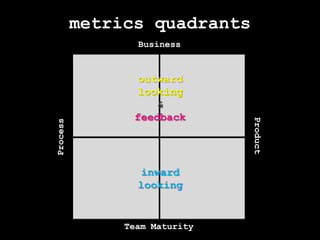

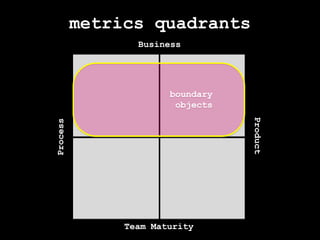

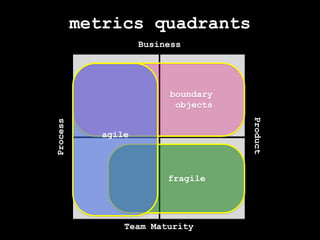

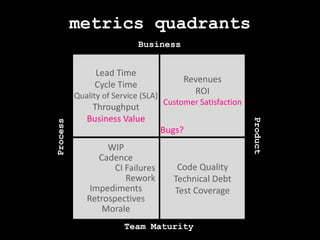

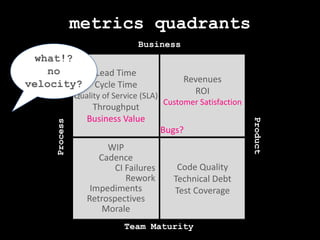

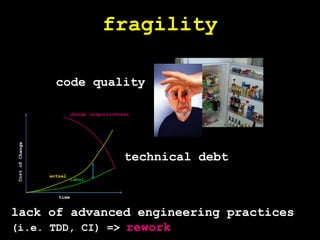

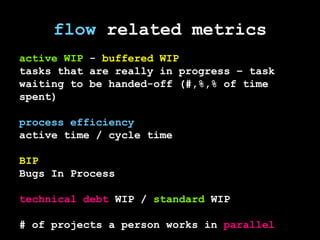

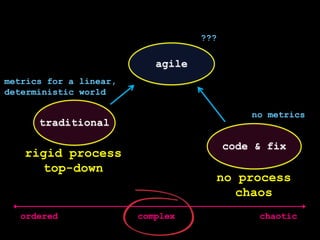

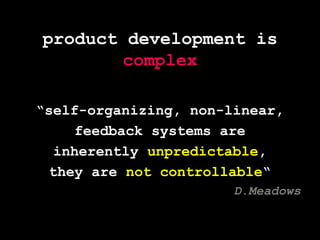

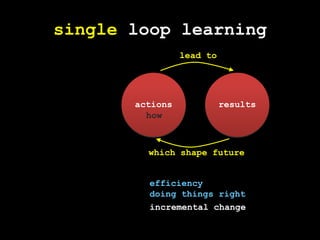

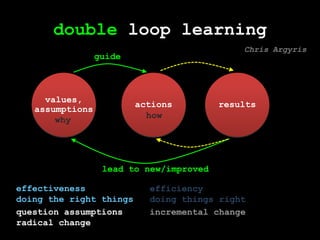

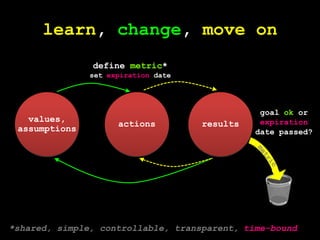

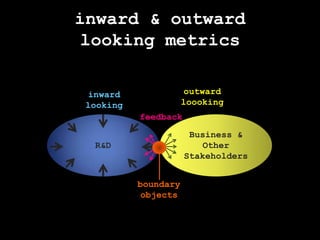

This document discusses metrics for agile product development. It argues that traditional metrics, designed for linear processes, do not work for agile systems, which are complex and unpredictable. Instead, it recommends focusing on metrics that support learning and change over time through single-loop and double-loop learning. The document presents a framework with four quadrants of metrics, covering business outcomes, products, processes, and team maturity, in which metrics serve as "boundary objects" between teams.

![Boundary objects: metric, business, R&D. Boundary object [sociology]: something that helps different communities exchange ideas and information. Could mean different things to different people but allows coordination and alignment.](https://image.slidesharecdn.com/metricsale2011-110910035416-phpapp02/85/How-fr-agile-we-are-ALE2011-11-320.jpg)