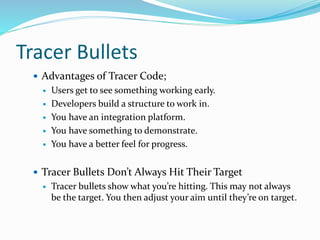

The document describes a pragmatic approach to software development, covering the evils of duplication, orthogonality, reversibility, tracer bullets, and prototypes. It stresses minimizing duplication and keeping system components independent of one another, which makes a system more flexible and easier to maintain. It also presents prototyping as a learning exercise that supports rapid development and effective problem-solving.