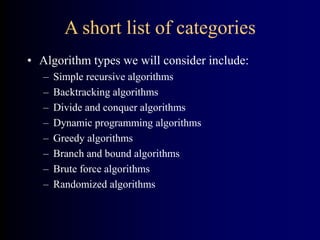

This document surveys common types of algorithms and gives examples of each:

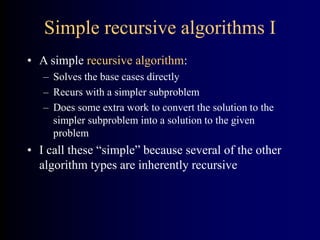

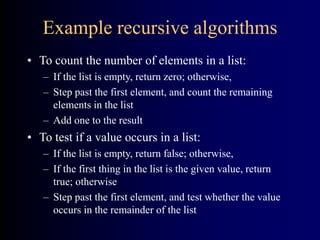

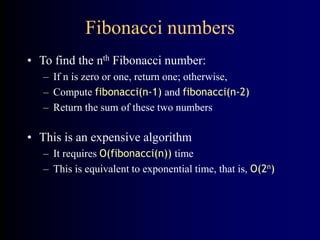

- Simple recursive algorithms that solve base cases directly and recurse on simpler subproblems.

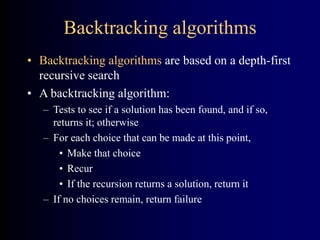

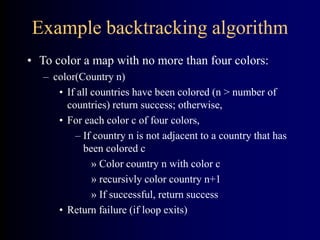

- Backtracking algorithms that use depth-first search and backtrack when choices lead to dead ends.

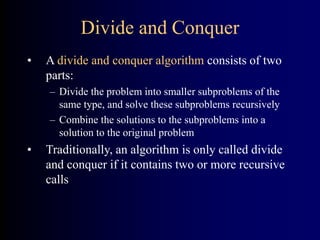

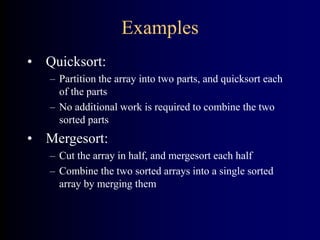

- Divide and conquer algorithms that divide problems into subproblems, solve the subproblems recursively, and combine the solutions.

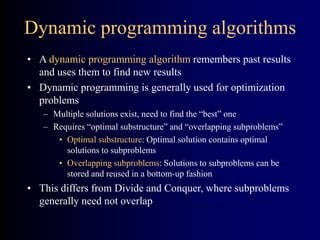

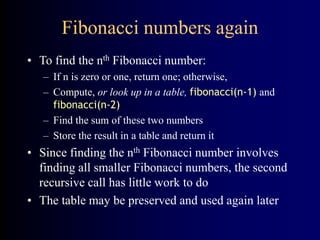

- Dynamic programming algorithms that store and reuse the solutions to overlapping subproblems, typically to solve optimization problems.

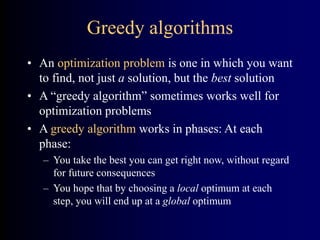

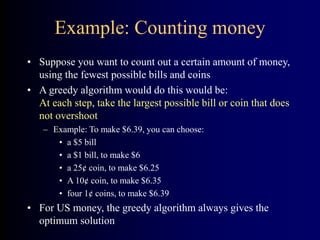

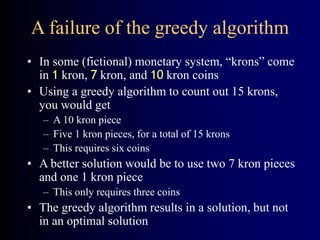

- Greedy algorithms that make the locally optimal choice at each step in the hope of reaching a global optimum, which is not guaranteed for every problem.

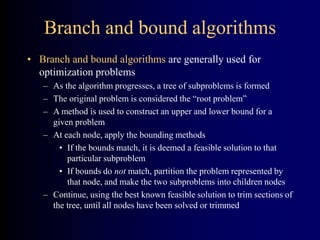

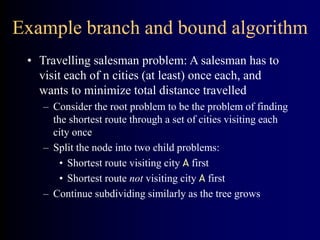

- Branch and bound algorithms that construct a search tree and prune branches that cannot lead to a solution better than the best one found so far.

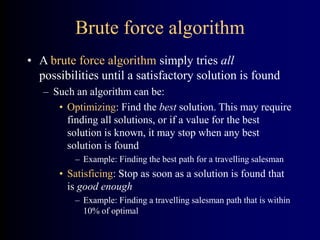

- Brute force algorithms that exhaustively check every possibility until a solution is found.
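As a minimal sketch of the simple recursive pattern from the list above, the factorial function is one common illustration. The function name `factorial` is chosen here for illustration and is not taken from the source.

```python
def factorial(n: int) -> int:
    """Return n! for a non-negative integer n (illustrative example)."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0:
        # Base case: solved directly, no further recursion.
        return 1
    # Recursive case: reduce to the simpler subproblem (n - 1)!.
    return n * factorial(n - 1)
```

Each call shrinks the input by one, so the recursion is guaranteed to reach the base case.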
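The backtracking item in the list above can be sketched with the N-queens puzzle, a standard teaching example that is assumed here rather than drawn from the source. The helper names (`solve_n_queens`, `is_safe`, `place`) are hypothetical.

```python
from typing import List, Optional


def solve_n_queens(n: int) -> Optional[List[int]]:
    """Return one placement as a list where index = row and value = column,
    or None if no placement exists (illustrative example)."""
    cols: List[int] = []  # cols[r] is the column of the queen in row r

    def is_safe(col: int) -> bool:
        row = len(cols)
        # A square is safe if no earlier queen shares its column or diagonal.
        return all(c != col and abs(c - col) != row - r
                   for r, c in enumerate(cols))

    def place() -> bool:
        if len(cols) == n:
            return True  # every row has a queen
        for col in range(n):
            if is_safe(col):
                cols.append(col)      # make a choice and go deeper
                if place():
                    return True
                cols.pop()            # dead end: undo the choice (backtrack)
        return False

    return cols if place() else None
```

The search is depth-first: it commits to one queen per row and only revisits an earlier row when every column in the current row has failed.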
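For the divide and conquer item, merge sort is a familiar instance of the divide, solve recursively, combine structure. The sketch below is one possible version, with `merge_sort` as an illustrative name.

```python
from typing import List


def merge_sort(items: List[int]) -> List[int]:
    """Return a new sorted list (illustrative merge sort)."""
    if len(items) <= 1:
        return list(items)  # zero or one element is already sorted

    # Divide: split the input into two halves.
    mid = len(items) // 2
    left = merge_sort(items[:mid])    # solve each half recursively
    right = merge_sort(items[mid:])

    # Combine: merge the two sorted halves.
    merged: List[int] = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged
```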
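The dynamic programming item can be illustrated with a minimum-coin change problem, chosen here as an assumption since the source does not name a specific example. The table `best` stores each subproblem's answer once so that larger amounts can reuse it; `min_coins` is a hypothetical name.

```python
from typing import List, Optional


def min_coins(coins: List[int], amount: int) -> Optional[int]:
    """Return the fewest coins that sum to amount, or None if impossible
    (illustrative bottom-up dynamic programming)."""
    inf = float("inf")
    # best[t] = fewest coins needed to make total t; best[0] = 0 by definition.
    best = [0] + [inf] * amount
    for total in range(1, amount + 1):
        for coin in coins:
            # Reuse the stored answer for the smaller subproblem total - coin.
            if coin <= total and best[total - coin] + 1 < best[total]:
                best[total] = best[total - coin] + 1
    return None if best[amount] == inf else int(best[amount])
```

With coins `[1, 3, 4]` and amount `6`, the table yields 2 (two coins of 3), because every smaller total is solved once and looked up afterwards.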
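To illustrate the greedy item, the sketch below makes change by always taking the largest coin that still fits. Coin change is an assumed example, and `greedy_change` is a hypothetical name.

```python
from typing import List, Optional


def greedy_change(coins: List[int], amount: int) -> Optional[List[int]]:
    """Return the coins picked by the largest-first rule, or None if the
    rule fails to reach the amount exactly (illustrative greedy method)."""
    picked: List[int] = []
    for coin in sorted(coins, reverse=True):
        # Locally optimal choice: use the largest coin as often as it fits.
        while amount >= coin:
            picked.append(coin)
            amount -= coin
    return picked if amount == 0 else None
```

With coins `[1, 5, 10, 25]` and amount `63`, this picks `[25, 25, 10, 1, 1, 1]`, which happens to be optimal. With coins `[1, 3, 4]` and amount `6`, it picks `[4, 1, 1]`, although two coins of 3 would suffice, so the locally best choice does not always give the global optimum.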
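The branch and bound item can be sketched on the 0/1 knapsack problem, which is an assumed example rather than one named in the source. The bound used here, filling the remaining capacity greedily and allowing a fraction of one item, is one common choice among several; all names (`knapsack_bb`, `bound`, `explore`) are hypothetical.

```python
from typing import List, Tuple


def knapsack_bb(items: List[Tuple[float, float]], capacity: float) -> float:
    """Return the best total value for (value, weight) items with positive
    weights that fit in capacity (illustrative branch and bound)."""
    # Sort by value per unit weight so the fractional bound is easy to compute.
    items = sorted(items, key=lambda vw: vw[0] / vw[1], reverse=True)
    best = 0.0

    def bound(i: int, value: float, room: float) -> float:
        # Optimistic estimate: take remaining items greedily, plus a fraction
        # of the first item that does not fit. No real solution can exceed it.
        for v, w in items[i:]:
            if w <= room:
                value += v
                room -= w
            else:
                return value + v * room / w
        return value

    def explore(i: int, value: float, room: float) -> None:
        nonlocal best
        best = max(best, value)
        if i == len(items) or bound(i, value, room) <= best:
            return  # prune: this branch cannot beat the best found so far
        v, w = items[i]
        if w <= room:
            explore(i + 1, value + v, room - w)  # branch: take item i
        explore(i + 1, value, room)              # branch: skip item i

    explore(0, 0.0, capacity)
    return best
```

For items `[(60, 10), (100, 20), (120, 30)]` and capacity `50`, the search returns 220 and skips the subtree where the first two items are both left out, since its bound of 120 cannot beat 220.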
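As a sketch of the brute force item, the function below tries every subset of a list until one sums to a target. Subset sum is an assumed example, and `subset_with_sum` is a hypothetical name; `itertools.combinations` is the standard-library function used to enumerate the candidates.

```python
from itertools import combinations
from typing import Optional, Sequence, Tuple


def subset_with_sum(numbers: Sequence[int], target: int) -> Optional[Tuple[int, ...]]:
    """Return the first subset found whose elements sum to target, or None
    (illustrative exhaustive search)."""
    # Enumerate subsets of every size, smallest first, with no pruning.
    for size in range(len(numbers) + 1):
        for combo in combinations(numbers, size):
            if sum(combo) == target:
                return combo
    return None
```

The search examines up to 2^n subsets for n numbers, which is the usual cost of checking every possibility.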