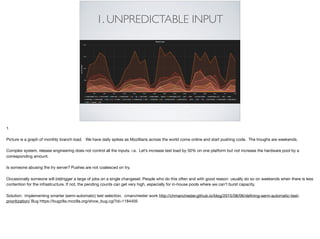

The document discusses the challenges and optimizations in managing large-scale distributed systems, particularly in the context of a continuous integration system at Mozilla. It highlights issues such as unpredictable input, lack of canary testing, and the need for better monitoring and resource allocation strategies. The document concludes by emphasizing the importance of optimizing processes and resources to maintain efficiency and increase throughput in distributed systems.

![9. DUPLICATE JOBS

Duplicate bits, wasted time, resources

We currently build a release twice - once in CI, once as a release job. This is inefficient and makes releng a bottleneck to getting a release out the door. To fix this -

implement release promotion! https://bugzilla.mozilla.org/show_bug.cgi?id=1118794. Same thing applies to nightly builds

Picture Creative Commons

https://upload.wikimedia.org/wikipedia/commons/c/c6/DNA_double_helix_45.PNG

By Jerome Walker,Dennis Myts (Own work) [Public domain], via Wikimedia Commons](https://image.slidesharecdn.com/scalefailures2015-151116215949-lva1-app6891/85/Distributed-Systems-at-Scale-Reducing-the-Fail-20-320.jpg)