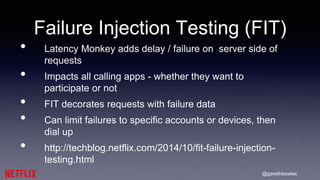

The document discusses the challenges of testing complex distributed systems, specifically in the context of Netflix and AWS. It emphasizes the importance of chaos engineering, which uses tools like Chaos Monkey to simulate failures and validate system resilience. It also outlines the testing methodologies and deployment strategies that help Netflix maintain availability and performance for its users.