Classification of prostate cancer pathology reports using natural language processing

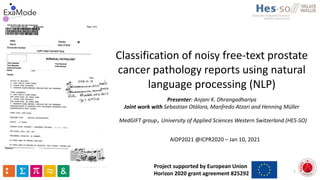

- 1. Classification of noisy free-text prostate cancer pathology reports using natural language processing (NLP). Presenter: Anjani K. Dhrangadhariya. Joint work with Sebastian Otálora, Manfredo Atzori and Henning Müller. AIDP2021 @ICPR2020, Jan 10, 2021. MedGIFT group, University of Applied Sciences Western Switzerland (HES-SO). Project supported by European Union Horizon 2020 grant agreement 825292.

- 2. Pathology reports. Images are taken from the web for educational purposes; all rights reserved by the respective owners.

- 4. Structured reporting > Completeness, consistency and clarity > Conformance to standards, enabling interoperability > Accuracy > Comparison over health-management timelines > Analysis of intervention benefits > Better patient management and treatment decisions > (Semi-)automated decision support. References: Swillens, J. E. M., et al. "Identification of barriers and facilitators in nationwide implementation of standardized structured reporting in pathology: a mixed method study." Snoek, Annefleure, et al. "The impact of standardized structured reporting of pathology reports for breast cancer in the Netherlands."

- 5. Automation. Unavailability of structured reports: • Manual information extraction (IE) • Time- and resource-consuming. Automation methods: • Create structured reports • Organize reports and their respective digital pathology images into a structured proprietary database

- 6. Automation methods • Natural language processing (NLP) methods: word and document embeddings, RNNs, transformers • Extensively used for electronic health record (EHR) analysis and IE • Their applicability has not yet fully penetrated clinical pathology • Yala et al. classified breast cancer pathology reports into 20 classes using n-grams, reaching 97% accuracy • Qiu et al. used a CNN to automatically extract ICD-O-3 topographic codes from a corpus of breast and lung cancer pathology reports, with a micro-F1 of 81%

- 7. Motivation. Goal: classify very noisy, publicly available prostate pathology reports into high-grade and low-grade using NLP methods with high confidence, and inspect the text representation and the classifier for reliability. Limitations of prior work: (X) private datasets; (X) the reliability of machine-learning approaches is not investigated beyond performance metrics

- 8. Methods: Corpus • Prostate adenocarcinoma clinical pathology reports from The Cancer Genome Atlas (TCGA) PanCancer dataset (corpus) • 494 reports (404 non-empty) • Manually annotated as high-grade or low-grade using the diagnostic information they contain • High-grade: Gleason score > 7, 171 reports • Low-grade: Gleason score ≤ 7, 233 reports

- 9. Methods: Corpus characteristics 1. PDF format 2. Noisy 3. Variable structure 4. Variable length 5. Class imbalance

- 10. Methods: Preprocessing. Conversion of PDF to text with an optical character recognition (OCR) tool: http://jocr.sourceforge.net/

- 11. Methods: Denoising and preprocessing. Noise removed: 1. Special-character trails 2. ASCII null characters 3. NLTK stop words (SW) 4. Corpus-specific SW 5. Punctuation (NLTK = Natural Language Toolkit)

- 12. Methods: Text representation. Count vectors • Represent text in the form of word counts • Tf-idf (term frequency–inverse document frequency) • Count-based, weighted • Weights each term in a document with respect to the corpus • Up-weights meaningful words • Down-weights filler words and stop words

- 13. Methods: Text representation. Limitations of count vectors • Semantic and contextual information is lost • High dimensionality and sparsity

- 14. Methods: Text representation. Semantic vectors • Distributed representations of paragraphs or documents • Paragraph vectors • Unsupervised paragraph vectors: 1. Distributed memory model of paragraph vectors (PV-DM) 2. Distributed bag-of-words model of paragraph vectors (PV-DBOW)

- 15. Methods: Text representation • Distributed memory (DM) • Distributed bag of words (DBOW)

- 16–21. Methods: Pipeline. Reports → preprocessing → clean reports, split into a training set and a test set → training set augmented by back-translation → vectorization (1. Tf-idf 2. PV-DM 3. PV-DBOW) → model training and evaluation with classifiers (1. LR 2. SVM 3. KNN) → the best-performing model classifies the test set into high grade or low grade

- 22–31. Results – best model. [Figure: precision (P), recall (R), F1 and ROC AUC, on a 0.5–1.0 scale, for tf-idf + LR, tf-idf + SVM, tf-idf + KNN, PV-DBOW + SVM, PV-DBOW + KNN, PV-DBOW + LR (denoised, oversampled), PV-DBOW + LR (no denoising) and PV-DBOW + LR (no oversampling).]

- 32. LIME interpretability analysis. Strong cues for high-grade adenocarcinoma • Gleason 4+5=9 • Gleason 4 • Gleason 5

- 33. LIME interpretability analysis. Very strong cues for low-grade adenocarcinoma • Gleason grade 3+4 • Gleason grade 3+3 • Histologic grade g3 • Primary Gleason grade 3 • Secondary Gleason grade 4

- 34. LIME interpretability analysis. Irrelevant cues for high-grade adenocarcinoma • Right? • Left? • Prostatic? • 1.3.3.5?

- 35. LIME interpretability analysis. Strong cues for low-grade adenocarcinoma • Gleason score 3+4=7 • Histologic grade g3-4. NCI tumor grade fact sheet: histologic grade g3-4 denotes high-grade cancer.

- 36. Conclusion. The binary classification approach was tested on high-grade and low-grade prostate adenocarcinoma reports. Semantic representation performed better than count-based representation (23% better ROC AUC score). The reliability of the paragraph-vector representation was examined with LIME. Future work: extracting tumor staging terms, clinical measurements and prostate tissue anatomy information.

- 37. Resources. Data, code and interpretability analysis on GitHub: https://github.com/anjani-dhrangadhariya/pathology-report-classification.git TCGA dataset: http://www.cbioportal.org/study/clinicalData?id=prad_tcga_pan_can_atlas_2018

- 38. Thank you for your attention. Anjani Dhrangadhariya, anjani.dhrangadhariya@hevs.ch, https://www.linkedin.com/in/anjani-dhrangadhariya/. More information: http://medgift.hevs.ch/wordpress/ and https://www.examode.eu/

Editor's Notes

- Pathology reports are the primary form of communication between pathologists and clinicians. A pathologist microscopically examines a biological specimen for cancer-related morphology. After careful examination, the findings are summarized in a pathology report, which is sent back to the referring clinician. Based on the diagnostic and supporting information, the clinician then plans a course of treatment for the patient.

- These reports contain vital diagnostic information such as cancer cell grade, histologic grade or TNM stage, anatomy and tumor site, along with other histologic measurements and descriptions. For decades, all of this information has been recorded in unstructured or, at best, semi-structured free-text form.

- Structured reporting, though initially difficult to adopt, is gaining prominence in clinical practice for several reasons. Standardized reporting formats enforce completeness, consistency and clarity. They ensure that whoever writes a report conforms to established international standards, which in turn enables interoperability. Accuracy improves because measurement units are standardized as well. This enables accurate comparison over health-management timelines and therefore systematic, evidence-based analysis of intervention benefits, which eventually leads to better patient management and treatment decisions and makes it feasible to test semi-automated decision support.

- Because structured reports are not available, practitioners resort to generating them manually from the unstructured ones, which is inarguably resource-consuming. Instead, automation could be used to construct structured reports from the unstructured ones and organize them into a proprietary database.

- NLP methods have improved the automated understanding of general text, and they have been used extensively for electronic health record analysis. However, their applicability has not yet fully penetrated the clinical pathology domain. For example, method x was used to classify 90,000 pathology reports, eventually achieving high classification confidence. Method y was used to classify a medium-sized corpus into 20 classes and reached an F1 of 0.89.

- To demonstrate our approach, we use the publicly available prostate cancer pathology reports from the TCGA PanCancer dataset. The 404 non-empty reports were manually classified as high-grade or low-grade using the Gleason score information available in the dataset: a report was labeled high-grade if its Gleason score was above 7 and low-grade otherwise. This yielded 171 high-grade and 233 low-grade reports.
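The labeling rule above fits in a few lines. This is a minimal sketch: `grade_label` and `gleason_sum` are hypothetical helper names, and the scores are toy values, not TCGA data. Under this threshold, both 3+4=7 and 4+3=7 fall into the low-grade class.

```python
from collections import Counter


def gleason_sum(primary: int, secondary: int) -> int:
    """Hypothetical helper: combine primary and secondary patterns, e.g. 4 + 5 = 9."""
    return primary + secondary


def grade_label(gleason_score: int) -> str:
    """Hypothetical helper: Gleason score > 7 -> high-grade, otherwise low-grade."""
    if not 2 <= gleason_score <= 10:
        raise ValueError(f"Gleason score out of range: {gleason_score}")
    return "high-grade" if gleason_score > 7 else "low-grade"


# Toy primary/secondary patterns (not taken from the TCGA corpus).
scores = [gleason_sum(3, 3), gleason_sum(3, 4), gleason_sum(4, 3), gleason_sum(4, 5)]
labels = [grade_label(s) for s in scores]
print(Counter(labels))
```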

- Before text can be used for NLP tasks, it must be thoroughly preprocessed. The PDF reports were converted into machine-readable text using a freely available, Java-based optical character recognition (OCR) tool.

- This conversion adds considerable noise to the already noisy, unstructured reports, so they require denoising. Special-character trails were removed automatically using heuristics, and ASCII null characters were removed manually. Next, predefined stop words were removed automatically using the NLTK stop-word corpus, along with some corpus-specific stop words. Unnecessary punctuation was also removed.
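A minimal sketch of these denoising steps, assuming the OCR output is already a Python string. The stop-word sets are small stand-ins (the actual pipeline used the NLTK list plus a corpus-specific list that the talk does not enumerate), the regular expression for special-character trails is one possible heuristic, and which punctuation counts as "unnecessary" is an assumption: here `+`, `=`, `-` and `.` are kept so that tokens like "4+5=9" survive. Null characters are stripped automatically in this sketch, whereas the talk removed them manually.

```python
import re

# Tiny stand-in for NLTK's English stop-word list, so the sketch runs without NLTK data.
STOP_WORDS = {"the", "of", "and", "is", "in", "a", "with", "for", "to"}
# Hypothetical corpus-specific stop words (report boilerplate), for illustration only.
CORPUS_STOP_WORDS = {"page", "report", "specimen"}
ALL_STOP_WORDS = STOP_WORDS | CORPUS_STOP_WORDS

# A non-word, non-space character repeated 3+ times, e.g. "------" or "~~~~~".
SPECIAL_TRAIL = re.compile(r"([^\w\s])\1{2,}")
# Assumed "unnecessary" punctuation; "+", "=", "-" and "." are deliberately kept.
DROP_PUNCT = str.maketrans({c: " " for c in ",;:!?()[]{}\"'"})


def denoise(text: str) -> str:
    text = text.replace("\x00", " ")             # ASCII null characters
    text = SPECIAL_TRAIL.sub(" ", text)          # special-character trails
    text = text.lower().translate(DROP_PUNCT)    # selected punctuation -> spaces
    tokens = (t.strip(".") for t in text.split())  # drop sentence-final periods
    return " ".join(t for t in tokens if t and t not in ALL_STOP_WORDS)


sample = "GLEASON SCORE: 4+5=9\x00 ------ The tumor is in the (left) lobe."
cleaned = denoise(sample)
print(cleaned)
```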

- Before any classifier can be trained, the natural-language corpus must be represented in a numerical, machine-readable format. We used count vectors, which represent text as word counts, specifically tf-idf, which additionally weights each term in a document. Tf-idf up-weights meaningful words and down-weights filler and stop words. It is the product of the term frequency (the number of occurrences of term i in document j) and the inverse document frequency, which decreases with the number of documents in the corpus that contain the term (commonly idf_i = log(N / df_i), where N is the number of documents and df_i the number of documents containing term i). As a result, words that occur in almost every document, such as articles, are down-weighted.
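A textbook tf-idf can be sketched in plain Python as follows. It uses raw counts for tf and log(N / df) for idf; implementations differ (scikit-learn's `TfidfVectorizer`, for instance, smooths the idf and L2-normalizes each vector by default), and the talk does not say which variant was used. The toy documents are illustrative only.

```python
import math
from collections import Counter


def tfidf(docs):
    """Textbook tf-idf: raw term count times log(N / df)."""
    n_docs = len(docs)
    # df counts the documents that contain a term, not its total occurrences.
    df = Counter(term for doc in docs for term in set(doc))
    idf = {term: math.log(n_docs / count) for term, count in df.items()}
    return [{term: tf * idf[term] for term, tf in Counter(doc).items()} for doc in docs]


docs = [
    "gleason score 4+5=9 high grade".split(),
    "gleason score 3+3=6 low grade".split(),
    "gleason score 3+4=7 grade".split(),
]
weights = tfidf(docs)
print(weights[0])
```

Terms that appear in every toy document, such as "gleason" and "grade", get weight zero, while "4+5=9", which occurs in only one document, gets log 3.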

- To capture semantic information from a document and to reduce vector dimensionality and sparsity, we used two variants of paragraph vectors, which are themselves an extension of word vectors: the "distributed memory" model of paragraph vectors (PV-DM) and the "distributed bag-of-words" model of paragraph vectors (PV-DBOW).

- The distributed memory model uses the document identifier and the surrounding terms to predict a target term. Training is unsupervised and uses the same shallow network as the word2vec continuous bag-of-words model, with an added document vector that acts as a memory. The distributed bag-of-words model uses only the document identifier as input to predict words randomly sampled from the document; it is also trained without supervision. Both representations retain semantic information about the encoded document.
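For reference, the two training objectives can be written as below, following the standard paragraph-vector formulation of Le and Mikolov (2014). The notation is ours, and the window size k, vector size and softmax approximation used in this work are not stated in the talk. Here D_d is the vector of document d, W the word-vector matrix, h averages or concatenates the context word vectors with D_d, and U, b and u_w are output-layer parameters.

```latex
% PV-DM: predict word w_t from its context window and the document vector D_d
\max \sum_{d} \sum_{t=k+1}^{T_d-k} \log p\bigl(w_t \mid w_{t-k},\dots,w_{t-1},w_{t+1},\dots,w_{t+k},\, D_d\bigr),
\qquad p(w_t \mid \cdot) = \frac{\exp(y_{w_t})}{\sum_{i} \exp(y_i)},
\qquad y = b + U\, h(w_{t-k},\dots,w_{t+k};\, D_d, W)

% PV-DBOW: predict words sampled from document d using only D_d
\max \sum_{d} \sum_{w \in d} \log p(w \mid D_d),
\qquad p(w \mid D_d) = \frac{\exp(u_w^{\top} D_d)}{\sum_{w'} \exp(u_{w'}^{\top} D_d)}
```

In practice the full softmax over the vocabulary is usually replaced by hierarchical softmax or negative sampling.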

- Getting back to the approach: once the reports are preprocessed and denoised, the corpus of clean reports is split into training and test sets.
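A stratified split keeps the high-grade/low-grade ratio similar in both sets. This is a sketch with an assumed 80/20 split and random seed, neither of which is stated in the talk; the report IDs are placeholders. Splitting before augmentation keeps back-translated copies of test reports out of the training data.

```python
import random
from collections import Counter, defaultdict


def stratified_split(items, labels, test_fraction=0.2, seed=0):
    """Split items per class so each class keeps roughly the same share in both sets."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for item, label in zip(items, labels):
        by_label[label].append(item)
    train, test = [], []
    for label, group in by_label.items():
        rng.shuffle(group)
        n_test = round(len(group) * test_fraction)
        test.extend((item, label) for item in group[:n_test])
        train.extend((item, label) for item in group[n_test:])
    return train, test


# Placeholder IDs with the class sizes reported in the talk (171 high-grade, 233 low-grade).
report_ids = list(range(404))
grades = ["high-grade"] * 171 + ["low-grade"] * 233
train, test = stratified_split(report_ids, grades)
print(Counter(g for _, g in train), Counter(g for _, g in test))
```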


- To tackle the class imbalance, text augmentation by back-translation was used to oversample the minority class. In back-translation, a document in the source language is first translated into a target language of choice and then translated back into the source language. This introduces slight variation into the augmented reports. We used German as the target language because English and German share Saxon roots.
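The oversampling step can be sketched as follows. The `translate` callable is a hypothetical placeholder for whatever machine-translation system is used (the talk does not name one), and `toy_translate` is a word-lookup stand-in that exists only to make the example run. German is the default pivot language, as in the talk.

```python
from typing import Callable

# Hypothetical signature: translate(text, source_lang, target_lang) -> str.
# Plug in any machine-translation system; this is not a specific library's API.
Translator = Callable[[str, str, str], str]


def back_translate(text: str, translate: Translator, pivot: str = "de") -> str:
    """English -> pivot language -> English, giving a paraphrased copy."""
    return translate(translate(text, "en", pivot), pivot, "en")


def oversample_minority(docs, labels, translate: Translator, pivot: str = "de"):
    """Append back-translated minority-class documents until both classes are equal in size."""
    counts = {label: labels.count(label) for label in set(labels)}
    minority = min(counts, key=counts.get)
    deficit = max(counts.values()) - counts[minority]
    pool = [d for d, label in zip(docs, labels) if label == minority]
    # Cycle through the minority documents; with a deterministic translator,
    # a deficit larger than the pool would produce repeated copies.
    extra = [back_translate(pool[i % len(pool)], translate, pivot) for i in range(deficit)]
    return docs + extra, labels + [minority] * deficit


# Toy word-lookup "translator" for the demo only; real back-translation would call an MT model.
EN_DE = {"tumor": "Tumor", "left": "links", "lobe": "Lappen"}
DE_EN = {"Tumor": "tumour", "links": "left", "Lappen": "lobe"}


def toy_translate(text: str, src: str, tgt: str) -> str:
    table = EN_DE if (src, tgt) == ("en", "de") else DE_EN
    return " ".join(table.get(word, word) for word in text.split())


docs = ["tumor left lobe", "gleason 3+3", "gleason 3+4", "gleason 3+4 right lobe"]
labels = ["high-grade", "low-grade", "low-grade", "low-grade"]
aug_docs, aug_labels = oversample_minority(docs, labels, toy_translate)
print(aug_docs[4:])
```

In the demo, the English-German-English round trip turns "tumor" into "tumour", the kind of small surface variation back-translation is meant to add.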

- Next, the previously mentioned vectors (tf-idf, PV-DM and PV-DBOW) were extracted from the denoised, oversampled training corpus.

- These vectorized documents were used to train three machine-learning classifiers (logistic regression, SVM and KNN) for binary classification of the reports into high-grade vs. low-grade.
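Standard libraries such as scikit-learn provide all three classifiers. To show what the best-performing one, logistic regression, actually fits, here is a minimal stdlib sketch trained with stochastic gradient descent. The learning rate, number of epochs, L2 strength and toy 2-D vectors are assumptions, not the settings used in this work.

```python
import math
import random


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logreg(X, y, lr=0.1, epochs=200, l2=1e-3, seed=0):
    """Binary logistic regression (label 1 = high-grade) fitted with plain SGD."""
    rng = random.Random(seed)
    w, b = [0.0] * len(X[0]), 0.0
    order = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            p = sigmoid(b + sum(wj * xj for wj, xj in zip(w, X[i])))
            err = p - y[i]  # derivative of the log-loss w.r.t. the logit
            w = [wj - lr * (err * xj + l2 * wj) for wj, xj in zip(w, X[i])]
            b -= lr * err
    return w, b


def predict_proba(w, b, x):
    return sigmoid(b + sum(wj * xj for wj, xj in zip(w, x)))


# Toy 2-D "document vectors" standing in for PV-DBOW embeddings.
X = [[2.0, 0.1], [1.8, 0.3], [0.2, 1.9], [0.1, 2.2]]
y = [1, 1, 0, 0]
w, b = train_logreg(X, y)
print([round(predict_proba(w, b, x), 3) for x in X])
```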

- The best-performing model-vector combination was then used to classify the test-set samples into high-grade vs. low-grade.

- The tf-idf representation with logistic regression gave mediocre performance, not reaching 70% on any of the metrics.

- Denoising and oversampling both brought substantial performance improvements to the best-performing model-vector combination.

- Overall, the semantic PV-DBOW vectors consistently and considerably outperformed the count-based tf-idf vectors, by 23% in ROC AUC score.
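The four metrics on the results slides (precision, recall, F1 and ROC AUC) can be computed as below. This sketch assumes high-grade is the positive class and a 0.5 decision threshold; the talk does not state the threshold or any averaging scheme, and the labels and scores are toy values. ROC AUC is computed as the probability that a randomly chosen positive report is scored above a randomly chosen negative one, with ties counting one half.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def roc_auc(y_true, scores, positive=1):
    """Probability that a random positive is scored above a random negative (ties count 1/2)."""
    pos = [s for t, s in zip(y_true, scores) if t == positive]
    neg = [s for t, s in zip(y_true, scores) if t != positive]
    wins = sum(1.0 if sp > sn else 0.5 if sp == sn else 0.0 for sp in pos for sn in neg)
    return wins / (len(pos) * len(neg))


y_true = [0, 0, 1, 1]            # 1 = high-grade (toy labels)
scores = [0.1, 0.4, 0.35, 0.8]   # predicted P(high-grade)
y_pred = [int(s >= 0.5) for s in scores]
p, r, f1 = precision_recall_f1(y_true, y_pred)
auc = roc_auc(y_true, scores)
print(p, r, round(f1, 3), auc)
```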

- We inspected the best-performing model-vector combination using LIME to check whether it actually learned pathology-relevant features. In one of the inspected high-grade samples, the model picks up strong diagnostic clues from the report.

- In the next inspected low-grade sample, the model identifies very strong clues that the report is low-grade, such as the Gleason score information and the histologic grade.

- However, the model also falsely predicts a high-grade report as low-grade by picking up very irrelevant clues.
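To illustrate what a LIME explanation computes, here is a simplified, stdlib-only re-implementation of its procedure for text; it is not the `lime` package. It removes random subsets of words, queries the classifier on each perturbed report, weights each sample by its proximity to the original, and fits a weighted ridge regression whose coefficients serve as word importances. The ×100 cosine distance, kernel width of 25 and ridge penalty of 1 are intended to mirror the `lime` text explainer's defaults, and `toy_proba` is a hypothetical stand-in classifier.

```python
import math
import random


def solve(A, b):
    """Solve the linear system A x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[piv] = M[piv], M[c]
        for r in range(n):
            if r != c:
                f = M[r][c] / M[c][c]
                M[r] = [a - f * q for a, q in zip(M[r], M[c])]
    return [M[i][n] / M[i][i] for i in range(n)]


def explain_text(text, predict_proba, num_samples=500, kernel_width=25.0, ridge=1.0, seed=0):
    """LIME-style local explanation: (word, weight) pairs, largest |weight| first."""
    rng = random.Random(seed)
    tokens = text.split()
    vocab = sorted(set(tokens))
    d = len(vocab)
    masks = [[1] * d]  # the first sample is the unperturbed document
    for _ in range(num_samples - 1):
        dropped = set(rng.sample(range(d), rng.randint(1, d)))  # remove 1..d distinct words
        masks.append([0 if j in dropped else 1 for j in range(d)])
    X, y, w = [], [], []
    for z in masks:
        kept = {vocab[j] for j in range(d) if z[j]}
        y.append(predict_proba(" ".join(t for t in tokens if t in kept)))
        # Cosine distance between z and the all-ones mask, scaled by 100.
        dist = (1.0 - math.sqrt(sum(z) / d)) * 100
        w.append(math.sqrt(math.exp(-dist ** 2 / kernel_width ** 2)))  # proximity kernel
        X.append([1.0] + [float(v) for v in z])  # leading 1.0 is the intercept column
    p = d + 1
    # Weighted ridge normal equations (X^T W X + ridge * I') beta = X^T W y,
    # where I' leaves the intercept unpenalised.
    A = [[sum(wi * xi[r] * xi[c] for wi, xi in zip(w, X)) for c in range(p)] for r in range(p)]
    for r in range(1, p):
        A[r][r] += ridge
    rhs = [sum(wi * xi[r] * yi for wi, xi, yi in zip(w, X, y)) for r in range(p)]
    beta = solve(A, rhs)
    return sorted(zip(vocab, beta[1:]), key=lambda kv: -abs(kv[1]))


def toy_proba(text):
    # Hypothetical classifier: P(high-grade) jumps when the token "4+5=9" is present.
    return 0.9 if "4+5=9" in text.split() else 0.2


weights = explain_text("gleason score 4+5=9 left prostatic lobe", toy_proba)
print(weights[:3])
```

On a real report, tokens such as "left" or "right" receiving large weights would flag the kind of irrelevant cue described above.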