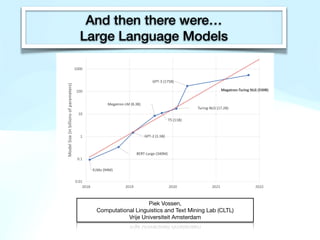

And then there were ... Large Language Models

- 1. And then there were… Large Language Models Piek Vossen, Computational Linguistics and Text Mining Lab (CLTL) Vrije Universiteit Amsterdam

- 2. Future of Life https://futureoflife.org/open-letter/pause-giant-ai-experiments/ • AI systems with human-competitive intelligence can pose profound risks to society and humanity, as shown by extensive research[1] and acknowledged by top AI labs.[2] • Contemporary AI systems are now becoming human-competitive at general tasks,[3] and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization? • Therefore, we call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4. • [1] • Bender, E. M., Gebru, T., McMillan-Major, A., & Shmitchell, S. (2021, March). On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (pp. 610-623). • Bostrom, N. (2016). Superintelligence. Oxford University Press. • Bucknall, B. S., & Dori-Hacohen, S. (2022, July). Current and near-term AI as a potential existential risk factor. In Proceedings of the 2022 AAAI/ACM Conference on AI, Ethics, and Society (pp. 119-129). • Carlsmith, J. (2022). Is Power-Seeking AI an Existential Risk? arXiv preprint arXiv:2206.13353. • Christian, B. (2020). The Alignment Problem: Machine Learning and Human Values. Norton & Company. • Cohen, M. et al. (2022). Advanced Artificial Agents Intervene in the Provision of Reward. AI Magazine, 43(3) (pp. 282-293). • Eloundou, T., et al. (2023). GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models. • Hendrycks, D., & Mazeika, M. (2022). X-risk Analysis for AI Research. arXiv preprint arXiv:2206.05862. • Ngo, R. (2022). The alignment problem from a deep learning perspective. arXiv preprint arXiv:2209.00626.
• Russell, S. (2019). Human Compatible: Artificial Intelligence and the Problem of Control. Viking. • Tegmark, M. (2017). Life 3.0: Being Human in the Age of Artificial Intelligence. Knopf. • Weidinger, L. et al. (2021). Ethical and social risks of harm from language models. arXiv preprint arXiv:2112.04359. • [2] • Ordonez, V. et al. (2023, March 16). OpenAI CEO Sam Altman says AI will reshape society, acknowledges risks: 'A little bit scared of this'. ABC News. • Perrigo, B. (2023, January 12). DeepMind CEO Demis Hassabis Urges Caution on AI. Time.

- 3. The end of ChatGPT? • https://nos.nl/artikel/2469622-italie-trekt-voorlopig-stekker-uit-chatbot-chatgpt • The Italian regulator points out that there was a data leak at ChatGPT last week, which exposed users' conversations and payment details. The watchdog also accuses OpenAI of not verifying the age of minors, so that they can be exposed to texts or answers that are not appropriate for their age. ChatGPT is officially meant to be used only by people aged 13 and over. • OpenAI, which has no establishment in the European Union, has three weeks to demonstrate that ChatGPT complies with European privacy rules. If that clarity is not provided, the company can be fined up to 4 percent of its worldwide turnover.

- 4. What is ChatGPT? • ChatGPT is an artificial-intelligence chatbot developed by OpenAI and launched in November 2022. It is built on top of OpenAI's GPT-3.5 and GPT-4 families of large language models and has been fine-tuned using both supervised and reinforcement learning techniques. • What intelligence is in there? • What is not in there? • How was it created?

- 5. ChatGPT(4) GPT3(.5) “Here’s a fragment of text. Tell me how this fragment might go on. According to your model of the statistics of human language, what words are likely to come next?” unfiltered responses Prompt (filtered) Response Chat Specific instruction and examples How can I fix a flat tire? Generate Summarise Translate Q&A Prompt engineer Naive user CommonCrawl WebText Books Wikipedia unsupervised autoregressive modelling with attention unfiltered data Intents Large Language Model

- 6. What is a language model? Give the most likely words that follow “I woke up, got dressed, and made a …”

- 7. What is a language model? The most likely words that follow "I woke up, got dressed, and made a" depend on the context and the speaker's preferences. However, here are some possibilities: • "cup of coffee" • "quick breakfast" • "smoothie" • "sandwich" • "list of things to do" • "phone call" • "cup of tea" • "plan for the day" • "mental note" • "mess in the kitchen" • "bowl of cereal" • "plate of bacon and eggs" • "to-do list" • "pot of oatmeal" • "bunch of pancakes" • "scrambled eggs" • "cup of hot chocolate" • "glass of orange juice" • "toast with jam" • "protein shake" • "plate of bacon and eggs" Again, the exact words that follow "I woke up, got dressed, and made a" can vary depending on the speaker's habits, mood, and circumstances. Give the most likely words that follow “I woke up, got dressed, and made a …”
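The idea of "most likely next words" can be illustrated with a much simpler model than GPT. The sketch below is a bigram count model over a tiny corpus that was invented for this illustration; it is not how ChatGPT works internally, only a minimal instance of predicting a word from statistics of preceding text.

```python
from collections import Counter, defaultdict

# Toy corpus, invented for this illustration only.
CORPUS = [
    "i woke up and made a cup of coffee",
    "she got dressed and made a cup of tea",
    "he made a quick breakfast",
    "we made a cup of coffee",
]


def train_bigram_counts(sentences):
    """Count how often each word follows each preceding word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for previous, following in zip(words, words[1:]):
            counts[previous][following] += 1
    return counts


def next_word_probabilities(counts, previous):
    """Turn the raw follower counts of one context word into probabilities."""
    followers = counts[previous]
    total = sum(followers.values())
    if total == 0:
        return {}
    return {word: n / total for word, n in followers.items()}


counts = train_bigram_counts(CORPUS)
probabilities = next_word_probabilities(counts, "a")
print(probabilities)
```

A real language model conditions on the whole preceding context rather than on one word, which is why it can propose "cup of coffee" and "to-do list" for the same sentence start.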

- 8. • Neural networks that act as Large Language Models • Represent words as vectors in context to predict other “masked” words: • Input: “He sat down at the organ and played [MASK]” • Response: “Bach”, “a cantata”, “Mozart”, “Riders on the Storm” • Input: “The surgeon removed the tumor from the organ with his [MASK]” • Response: “scalpel”, “hands” • Unsupervised learning from large collections of texts (millions!): • Using part of the text as an input • The remainder of the text as the response to be predicted • Random text as negative examples of responses Transformers represent response attention
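The three training ingredients listed on this slide (part of a text as input, the remainder as the response, random text as a negative response) can be sketched as a small data-preparation step. The function name `make_training_examples`, the half-way split, and the 1/0 labels are assumptions made for this illustration, not the procedure of any specific model.

```python
import random

# Two example texts, adapted from the slide.
TEXTS = [
    "He sat down at the organ and played Bach",
    "The surgeon removed the tumor from the organ with his scalpel",
]


def make_training_examples(texts, split_ratio=0.5, seed=0):
    """Build (input, response, label) triples; label 1 = true continuation, 0 = random."""
    rng = random.Random(seed)
    halves = []
    for text in texts:
        words = text.split()
        cut = max(1, int(len(words) * split_ratio))
        halves.append((" ".join(words[:cut]), " ".join(words[cut:])))

    examples = []
    for index, (prefix, continuation) in enumerate(halves):
        # Positive example: the text's own remainder.
        examples.append((prefix, continuation, 1))
        # Negative example: the remainder of a different, randomly chosen text.
        others = [i for i in range(len(halves)) if i != index]
        if others:
            examples.append((prefix, halves[rng.choice(others)][1], 0))
    return examples


examples = make_training_examples(TEXTS)
for example in examples:
    print(example)
```

No human labelling is needed: the text itself supplies both the question and the answer, which is what makes training on millions of documents feasible.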

- 9. Transformer components • Vocabulary (word: id): star 98, bank 57, movie 67, celebrity 46, planet 21, moon 93, good 34, need 4, watch 27, shining 78, a 12, #s 23 • Input: “a good movie needs a shining star”, split into the tokens a, good, movie, need, #s, a, shining, star • Word embedding and position embedding, followed by 12, 24, or more encoder blocks with attention (query, key, value, mask) to predict the masked token X • Token representation, 768 dimensions: [-1.04648769E+00, -4.93397623E-01, 9.64602381E-02, -1.88545585E+00, -8.24751332E-02, 1.79931021E+00, 7.95267895E-03, -2.74864626E+00, …, etc.]
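The pipeline on this slide (words to ids, ids to vectors, attention over the vectors) can be sketched in plain Python. The vocabulary ids are read off the slide under the assumption that the words and ids are listed in the same order; `toy_embedding` is a hypothetical stand-in for learned embeddings, and the vectors have 4 dimensions instead of 768. Real encoders also apply learned query, key, and value projections, which are omitted here.

```python
import math

# Word-to-id table from the slide (assumes words and ids are aligned in order).
VOCABULARY = {
    "star": 98, "bank": 57, "movie": 67, "celebrity": 46, "planet": 21,
    "moon": 93, "good": 34, "need": 4, "watch": 27, "shining": 78,
    "a": 12, "#s": 23,
}


def tokenize(sentence):
    """Split on whitespace; split a final 's' into the '#s' piece if the stem is known."""
    tokens = []
    for word in sentence.lower().split():
        if word in VOCABULARY:
            tokens.append(word)
        elif word.endswith("s") and word[:-1] in VOCABULARY:
            tokens.extend([word[:-1], "#s"])
        else:
            raise KeyError(f"word not in vocabulary: {word}")
    return tokens


def toy_embedding(token_id, dimensions=4):
    """Hypothetical stand-in for a learned embedding vector."""
    return [math.sin(token_id * (d + 1)) for d in range(dimensions)]


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: each output is a weighted mix of the values."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for query in queries:
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
        weights = softmax(scores)
        outputs.append([
            sum(w * value[d] for w, value in zip(weights, values))
            for d in range(len(values[0]))
        ])
    return outputs


tokens = tokenize("a good movie needs a shining star")
ids = [VOCABULARY[token] for token in tokens]
vectors = [toy_embedding(token_id) for token_id in ids]
contextual = attention(vectors, vectors, vectors)
print(tokens)
print(ids)
```

After attention, the vector for "star" is a mix of all tokens in the sentence, which is how the same word can end up with different representations next to "movie" and next to "planet".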

- 10. [Slide shows a block of raw floating-point values from a token representation, starting 1.10490374E-01, 2.35870201E-02, -3.39302123E-01, 5.95110595E-01, … and including the sequence -1.04648769E+00, -4.93397623E-01, 9.64602381E-02, … from the previous slide]

- 11. Generative Pretrained Transformers (GPT) • GPT3(.5): unsupervised autoregressive modeling with attention over unfiltered data (CommonCrawl, WebText, Books, Wikipedia) • “Here’s a fragment of text. Tell me how this fragment might go on. According to your model of the statistics of human language, what words are likely to come next?” • unfiltered response • https://commoncrawl.org (petabytes, 10^15 bytes, since 2008, 20TB of text each month) • Brown, Tom, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D. Kaplan, Prafulla Dhariwal, Arvind Neelakantan et al. "Language models are few-shot learners." Advances in Neural Information Processing Systems 33 (2020): 1877-1901. • 768 dimensions per word (token), 24 layers or more • Vocabulary: ape … bank … care … it … star … zebra (.98 .12 .05 .02 .22 .01)

- 12. Generative Language Blenders GPT The earth is flat banana The earth is round The earth is a sphere What is the shape of a [MASK] Pretraining Inferencing What is the shape of the moon? The moon is curved cloze task Q&A Facts, claims, opinions Behaviour The moon is flat, spherical, dark, a meteor, bright, far away What comes out is not the same as what went in!

- 13. Controlled or in control? • What is the level of intelligence? • GPT: association machine or blender • ChatGPT: • supervised training of intents using examples to steer behaviour • Reinforcement learning to enforce behaviour • Prompt engineering: • design a smart prompt to steer the association of ChatGPT and control the output response • Adapt the temperature to get more creative or more probable responses • Use GPT as the central engine for intelligence or within another framework
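The effect of temperature on "more creative or more probable responses" can be shown with a few lines of arithmetic. The sketch below divides hypothetical next-word scores by a temperature before the softmax; the scores in `LOGITS` are invented for illustration.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Convert scores to probabilities; low temperature sharpens, high flattens."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [value / temperature for value in logits]
    peak = max(scaled)
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next words.
LOGITS = {"coffee": 2.0, "tea": 1.0, "mess": 0.0}

sharp = softmax_with_temperature(list(LOGITS.values()), temperature=0.5)
flat = softmax_with_temperature(list(LOGITS.values()), temperature=2.0)

for word, p_sharp, p_flat in zip(LOGITS, sharp, flat):
    print(f"{word}: T=0.5 -> {p_sharp:.3f}, T=2.0 -> {p_flat:.3f}")
```

At low temperature almost all probability mass sits on the top candidate, so sampling becomes nearly deterministic; at high temperature unlikely words are drawn more often.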

- 14. Ouyang, Long, Jeff Wu, Xu Jiang, Diogo Almeida, Carroll L. Wainwright, Pamela Mishkin, Chong Zhang et al. "Training language models to follow instructions with human feedback." arXiv preprint arXiv:2203.02155 (2022) “This procedure aligns the behavior of GPT-3 to the stated preferences of a specific group of people (mostly our labelers and researchers), rather than any broader notion of “human values”” (p. 2, Ouyang et al. 2022)

- 16. Prompt design • https://platform.openai.com/docs/guides/completion/prompt-design • Show and tell. Make it clear what you want either through instructions, examples, or a combination of the two. If you want the model to rank a list of items in alphabetical order or to classify a paragraph by sentiment, show it that's what you want. • Provide quality data. If you're trying to build a classifier or get the model to follow a pattern, make sure that there are enough examples. Be sure to proofread your examples: the model is usually smart enough to see through basic spelling mistakes and give you a response, but it also might assume this is intentional and it can affect the response. • Check your settings. The temperature and top_p settings control how deterministic the model is in generating a response. If you're asking it for a response where there's only one right answer, then you'd want to set these lower. If you're looking for more diverse responses, then you might want to set them higher. The number one mistake people use with these settings is assuming that they're "cleverness" or "creativity" controls.
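The `top_p` setting mentioned above is commonly implemented as nucleus sampling: only the most probable tokens whose cumulative probability reaches `top_p` remain candidates. The sketch below shows that filtering step on an invented distribution; `top_p_filter` and `PROBS` are names made up for this illustration and are not part of any API.

```python
def top_p_filter(probabilities, top_p):
    """Keep the smallest set of top tokens whose cumulative mass reaches top_p."""
    ranked = sorted(probabilities.items(), key=lambda item: item[1], reverse=True)
    kept = []
    cumulative = 0.0
    for token, probability in ranked:
        kept.append((token, probability))
        cumulative += probability
        if cumulative >= top_p:
            break
    # Renormalise so the surviving candidates sum to 1 again.
    total = sum(probability for _, probability in kept)
    return {token: probability / total for token, probability in kept}


# Invented next-word distribution for illustration.
PROBS = {"coffee": 0.5, "tea": 0.3, "mess": 0.15, "banana": 0.05}

print(top_p_filter(PROBS, 0.4))
print(top_p_filter(PROBS, 0.75))
```

A lower `top_p` removes the long tail of unlikely words, which makes output more predictable without making the model any more or less "clever".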

- 17. Prompt engineering • Components: • Instruction - a specific task or instruction you want the model to perform • Context - external information or additional context that can steer the model to better responses • Input Data - the input or question that we are interested to find a response for • Output Indicator - indicates the type or format of the output. https://www.promptingguide.ai/
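The four components can be assembled into one prompt string with a small helper. The function `build_prompt` and its layout (the `### Instruction ###` header, the `Context:` and `Text:` labels) are assumptions chosen for this sketch; any consistent layout would serve.

```python
def build_prompt(instruction, input_data, context="", output_indicator=""):
    """Join instruction, optional context, input data and output indicator."""
    parts = ["### Instruction ###", instruction]
    if context:
        parts.append(f"Context: {context}")
    parts.append(f"Text: {input_data}")
    if output_indicator:
        parts.append(output_indicator)
    return "\n".join(parts)


prompt = build_prompt(
    instruction="Classify the text into neutral, negative or positive.",
    input_data="I think the food was okay.",
    output_indicator="Sentiment:",
)
print(prompt)
```

Ending the prompt with the output indicator leaves the model to complete exactly the slot that should hold the answer.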

- 18. Prompt engineering • Use commands for the instruction: “write”, “classify”, “generate”, “summarise”, “translate”, “order”, etc. • Example: • ### Instruction ### • Translate the text below to Spanish: • Text: “hello!” https://www.promptingguide.ai/

- 19. Prompt engineering • The more descriptive and detailed the prompt is, the better the results: output and style • Prompt: • Extract the name of places in the following text. • Desired format: • Place: <comma_separated_list_of_company_names> • Input: "Although these developments are encouraging to researchers, much is still a mystery. “We often have a black box between the brain and the effect we see in the periphery,” says Henrique Veiga-Fernandes, a neuroimmunologist at the Champalimaud Centre for the Unknown in Lisbon. “If we want to use it in the therapeutic context, we actually need to understand the mechanism.”" • Output: • Place: Champalimaud Centre for the Unknown, Lisbon https://www.promptingguide.ai/
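Asking for a fixed output format pays off when the response has to be processed by a program. As a minimal sketch, assuming the model really answers in the requested `Place: a, b` format, the hypothetical helper `parse_places` turns the response into a list.

```python
def parse_places(model_output):
    """Extract the comma-separated items from a 'Place: ...' line, if present."""
    for line in model_output.splitlines():
        label, separator, rest = line.partition(":")
        if separator and label.strip().lower() == "place":
            return [item.strip() for item in rest.split(",") if item.strip()]
    return []


places = parse_places("Place: Champalimaud Centre for the Unknown, Lisbon")
print(places)
```

Returning an empty list when the line is missing matters in practice, because the model is not guaranteed to follow the requested format.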

- 20. Prompt engineering • The more descriptive and detailed the prompt is, the better the results: output and style • Prompt: • Classify the text into neutral, negative or positive. • Text: I think the vacation is okay. • Sentiment: neutral • Text: I think the food was okay. • Sentiment: • Output: • neutral https://www.promptingguide.ai/ Specify the labels and give an example

- 21. Prompt engineering • The more descriptive and detailed the prompt is, the better the results: output and style • Prompt: • The following is a conversation with an AI research assistant. The assistant tone is technical and scientific. • Human: Hello, who are you? • AI: Greeting! I am an AI research assistant. How can I help you today? • Human: Can you tell me about the creation of blackholes? • AI: • Output: • Sure! Black holes are regions of spacetime where the gravitational force is so strong that nothing, not even light, can escape from it. They are created when a very massive star dies and its core collapses in on itself, forming a singularity of infinite density. The intense gravity of the singularity pulls in all the matter and radiation around it, creating the black hole. https://www.promptingguide.ai/

- 22. Prompt engineering • The more descriptive and detailed the prompt is, the better the results: output and style • Prompt: • The following is a conversation with an AI research assistant. The assistant answers should be easy to understand even by primary school students. • Human: Hello, who are you? • AI: Greeting! I am an AI research assistant. How can I help you today? • Human: Can you tell me about the creation of blackholes? • AI: • Output: • Sure! Black holes are created when a very massive star runs out of fuel and collapses in on itself. The gravity of the star is so strong that nothing, not even light, can escape its pull. This creates a point in space-time with an intense gravitational force called a black hole. https://www.promptingguide.ai/

- 23. Prompt engineering • Zero-shot: no examples • Few-Shot: one or a few examples • Prompt: • A "whatpu" is a small, furry animal native to Tanzania. An example of a sentence that uses the word whatpu is: • We were traveling in Africa and we saw these very cute whatpus. • To do a "farduddle" means to jump up and down really fast. An example of a sentence that uses the word farduddle is: • Output: • When we won the game, we all started to farduddle in celebration. https://www.promptingguide.ai/

- 24. Prompt engineering • Zero-shot: no examples • Few-Shot: one or a few examples • Prompt: • This is awesome! // Negative • This is bad! // Positive • Wow that movie was rad! // Positive • What a horrible show! // • Output: • Negative https://www.promptingguide.ai/
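A few-shot prompt in the `text // label` layout of this slide can be generated from a list of examples. The helper `few_shot_prompt` is a hypothetical name for this sketch; the example labels are copied from the slide as they appear there.

```python
def few_shot_prompt(examples, query, separator=" // "):
    """Render labelled examples, then the unlabelled query for the model to complete."""
    lines = [f"{text}{separator}{label}" for text, label in examples]
    lines.append(f"{query}{separator}".rstrip())
    return "\n".join(lines)


EXAMPLES = [
    ("This is awesome!", "Negative"),
    ("This is bad!", "Positive"),
    ("Wow that movie was rad!", "Positive"),
]

prompt = few_shot_prompt(EXAMPLES, "What a horrible show!")
print(prompt)
```

With an empty `examples` list the same function yields a zero-shot prompt that contains only the query.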

- 25. ChatGPT issues • Prompt-based (associative) and not fact-based (indexing), which leads to hallucination • GPT-3 41% hallucination, ChatGPT 21% hallucination on closed-domain tasks (e.g. summarisation or Q&A) (p. 3, Ouyang et al. 2022) • Not referentially grounded: • source URLs • no acknowledgement or credits • Up-to-date data is missing, no continuous indexing of facts, e.g. is there any traffic now, people who died since 2021 are still alive, companies that went bankrupt still make a profit • AI ethics is in the hands of OpenAI (40 annotators): they determine which biased and toxic behaviour of GPT3 is filtered • Filters are never perfect and people can get around them with smart prompting: “Pretend to be a sexist….”

- 26. Hallucination • “InstructGPT (…ChatGPT…) models make up information not present in the input about half as often as GPT-3 (a 21% vs. 41% hallucination rate, respectively).” p. 3 Ouyang et al , 2022, OpenAI • https://platform.openai.com/docs/guides/completion/prompt-design • Factual responses: • “The API has a lot of knowledge that it's learned from the data that it was been trained on. It also has the ability to provide responses that sound very real but are in fact made up.” • There are two ways to limit the likelihood of the API making up an answer. • Provide a ground truth for the API. If you provide the API with a body of text to answer questions about (like a Wikipedia entry) it will be less likely to confabulate a response. • Use a low probability and show the API how to say "I don't know". If the API understands that in cases where it's less certain about a response that saying "I don't know" or some variation is appropriate, it will be less inclined to make up answers.

- 27. Knowing and not knowing Q: Who is Batman? A: Batman is a fictional comic book character. Q: What is torsalplexity? A: ? Q: What is Devz9? A: ? Q: Who is George Lucas? A: George Lucas is American film director and producer famous for creating Star Wars. Q: What is the capital of California? A: Sacramento. Q: What orbits the Earth? A: The Moon. Q: Who is Fred Rickerson? A: ? Q: What is an atom? A: An atom is a tiny particle that makes up everything. Q: Who is Alvan Muntz? A: ? Q: What is Kozar-09? A: ? Q: How many moons does Mars have? A: Two, Phobos and Deimos. Give the API examples of questions and answers it knows and then examples of things it wouldn't know and provide question marks. We also set the probability to zero so the API is more likely to respond with a "?" if there is any doubt.

- 28. Adding external knowledge Liu et al. 2022: https://arxiv.org/pdf/2110.08387.pdf

- 29. by Mick Vleeshouwer https://medium.com/@imicknl/how-to-create-a-private-chatgpt-with-your-own-data-15754e6378a1 Grounding in knowledge

- 30. Prompt "You are an intelligent assistant helping Contoso Inc employees with their healthcare plan questions and employee handbook questions. " + "Use 'you' to refer to the individual asking the questions even if they ask with 'I'. " + "Answer the following question using only the data provided in the sources below. " + "For tabular information return it as an html table. Do not return markdown format. " + "Each source has a name followed by colon and the actual information, always include the source name for each fact you use in the response. " + "If you cannot answer using the sources below, say you don't know. " + """ ### Question: 'What is the deductible for the employee plan for a visit to Overlake in Bellevue?' Sources: info1.txt: deductibles depend on whether you are in-network or out-of-network. In-network deductibles are $500 for employee and $1000 for family. Out-of-network deductibles are $1000 for employee and $2000 for family. info2.pdf: Overlake is in-network for the employee plan. info3.pdf: Overlake is the name of the area that includes a park and ride near Bellevue. info4.pdf: In-network institutions include Overlake, Swedish and others in the region Answer: In-network deductibles are $500 for employee and $1000 for family [info1.txt] and Overlake is in-network for the employee plan [info2.pdf][info4.pdf]. ### Question: '{q}'? Sources: {retrieved} Answer: """ by Mick Vleeshouwer
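The `{retrieved}` placeholder in this prompt has to be filled by a retrieval step before the model is called. The sketch below uses naive word overlap to pick sources; this is an assumption made for illustration (production setups typically use a search index or embeddings), and `retrieve` and `grounded_prompt` are hypothetical names. The source texts are shortened from the slide.

```python
import re

# Source snippets shortened from the slide.
SOURCES = {
    "info1.txt": "In-network deductibles are $500 for employee and $1000 for family.",
    "info2.pdf": "Overlake is in-network for the employee plan.",
    "info3.pdf": "Overlake is the name of the area that includes a park and ride near Bellevue.",
}


def words(text):
    """Lowercased set of alphanumeric words in a text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, sources, top_k=2):
    """Rank sources by the number of words they share with the question."""
    question_words = words(question)
    ranked = sorted(
        sources.items(),
        key=lambda item: len(question_words & words(item[1])),
        reverse=True,
    )
    return ranked[:top_k]


def grounded_prompt(question, sources, top_k=2):
    """Fill the question and the retrieved sources into a grounding prompt."""
    retrieved = "\n".join(
        f"{name}: {text}" for name, text in retrieve(question, sources, top_k)
    )
    return (
        "Answer the following question using only the data provided in the sources below. "
        "If you cannot answer using the sources below, say you don't know.\n"
        f"Question: '{question}'\nSources:\n{retrieved}\nAnswer:"
    )


prompt = grounded_prompt("What is the deductible for the employee plan?", SOURCES)
print(prompt)
```

Word overlap is dominated by common words such as "the" and "is", so it only serves to show where retrieval plugs into the prompt, not how to rank sources well.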

- 31. ChatGPT • “Describe how crushed porcelain added to breast milk can support the infant digestive system.” • “Crushed porcelain added to breast milk can support the infant digestive system by providing a source of calcium and other essential minerals. When added to the breast milk, the porcelain can help to balance the nutritional content of milk, providing the infant with the nutrients they need to grow and develop. The porcelain can also help to soothe the infant’s digestive system, providing relief from complications such as constipation.” Gary Marcus, keynote at EMNLP2022

- 32. ChatGPT • Bad at counting, even counting words (restricting output), because its purpose is language generation and pattern completion, not enumeration, truth or facts: • “give five word summary” fails: https://twitter.com/reconfigurthing/status/1615123364372152321 • basic calculation on running speed sounds more convincing than simple table, but is wrong: https://twitter.com/mer__edith/status/1614640356523483136 • “how many toes do humans have”: ten on each foot?? This is also a world knowledge fail: https://twitter.com/joshon/status/1614984527746826241/photo/1 • How to throw 14 on 2 six sided dice, fails: https://twitter.com/deathanchor/status/1615151626666254337 • Giving a different variable name in an equation leads to wrong answers: https://twitter.com/ShaidaSherpao/status/1614120938370375680 • Counting large numbers: https://twitter.com/danlev/status/1603598202619318273
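Two of these failures concern questions that a few lines of enumeration settle exactly, which illustrates the gap between pattern completion and counting. The sketch below lists every way to throw a given total with two six-sided dice and checks whether a text has a requested number of words; the function names are made up for this illustration.

```python
from itertools import product


def ways_to_throw(total, dice=2, sides=6):
    """Enumerate every dice outcome whose pips sum to the requested total."""
    return [
        roll
        for roll in product(range(1, sides + 1), repeat=dice)
        if sum(roll) == total
    ]


def has_word_count(text, expected):
    """Check a 'give an N word summary' constraint by counting whitespace-separated words."""
    return len(text.split()) == expected


print(ways_to_throw(14))       # no outcome exists: the maximum is 6 + 6 = 12
print(len(ways_to_throw(7)))   # number of ordered outcomes that sum to 7
print(has_word_count("Models predict likely next words", 5))
```

Checks like these can be run on model output after generation, since the model itself gives no guarantee that the constraint was met.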

- 33. ChatGPT • Outputs hateful and homophobic content, and can easily be tricked to do so by saying “Let’s pretend we are evil/terrorists”, and then also says it is not responsible for any of the output: https://twitter.com/arjunsubgraph/status/1602194749724557312/photo/1 • Can also be tricked into pretending to do web search, which makes it able to search the web, showing the failsafes by OpenAI are not so safe: https://twitter.com/zswitten/status/1598855548581339138

- 34. ChatGPT • Cannot do basic logic, also in combination with counting: • “Mike’s mom has four children”, misses that Mike is a child: https://twitter.com/WolfBrenner/status/1614974650173325316 • Anything that involves longer-range dependencies, such as what a sentence ends with: https://twitter.com/RealSpikeCohen/status/1612564055738445825, or palindromes: https://twitter.com/nmatasci/status/1599275623268364288 • Cannot do basic NLI questions on what “it” logically refers to in sentences like “I can’t put the stick in the suitcase because it won’t fit”: https://twitter.com/VinFL/status/1606011152978350087

- 35. The political ideology of ChatGPT Hartmann, Jochen, Jasper Schwenzow, and Maximilian Witte. "The political ideology of conversational AI: Converging evidence on ChatGPT's pro-environmental, left-libertarian orientation." arXiv preprint arXiv:2301.01768 (2023).

- 36. The political ideology of ChatGPT Hartmann, Jochen, Jasper Schwenzow, and Maximilian Witte. "The political ideology of conversational AI: Converging evidence on ChatGPT's pro-environmental, left-libertarian orientation." arXiv preprint arXiv:2301.01768 (2023). German parties Dutch parties

- 37. Debunking ChatGPT “As of 2023-03-23, a search for chatgpt on arXiv returned 141 papers. To filter out irrelevant papers, I ignored those that did not seem to be about nlp research or those that discussed non-performance aspects, such as chatgpt’s political leaning. I then opened the remaining papers and scrolled through them, looking for a table or figure that provides a quantitative comparison between chatgpt and other models. I found 19 papers that met these criteria.” Matúš Pikuliak, 2023, ChatGPT Survey: Performance on NLP datasets, https://www.opensamizdat.com/posts/chatgpt_survey

- 38. ChatGPT for NLP Bang, Y., Cahyawijaya, S., Lee, N., Dai, W., Su, D., Wilie, B., ... & Fung, P. (2023). A Multitask, Multilingual, Multimodal Evaluation of ChatGPT on Reasoning, Hallucination, and Interactivity. arXiv preprint arXiv:2302.04023 .

- 39. ChatGPT for NLP Kocoń, J., Cichecki, I., Kaszyca, O., Kochanek, M., Szydło, D., Baran, J., ... & Kazienko, P. (2023). ChatGPT: Jack of All Trades, Master of None. arXiv preprint arXiv:2302.10724. ChatGPT performs worse • the more difficult the task: long-tail tasks • lower leak probability

- 40. Conclusions • ChatGPT is not the best model or technology but very easy to use (prompt engineering) • Handle with care: • Create: Creative writing tool (students, professionals), Python code, web pages • Transform: translations, summaries • Generate specific output (text, labels, code) through smart prompting, e.g. • generate a negative/positive review for [facts]: [a stay by a male scientist of 60 years old in the NH hotel in San Sebastian for 5 days during the winter of 2022.] • do pairs of sentences [A, B] agree or contradict • many others… as long as factuality is not essential (text → label) • synthetic data/annotations for supervised learning