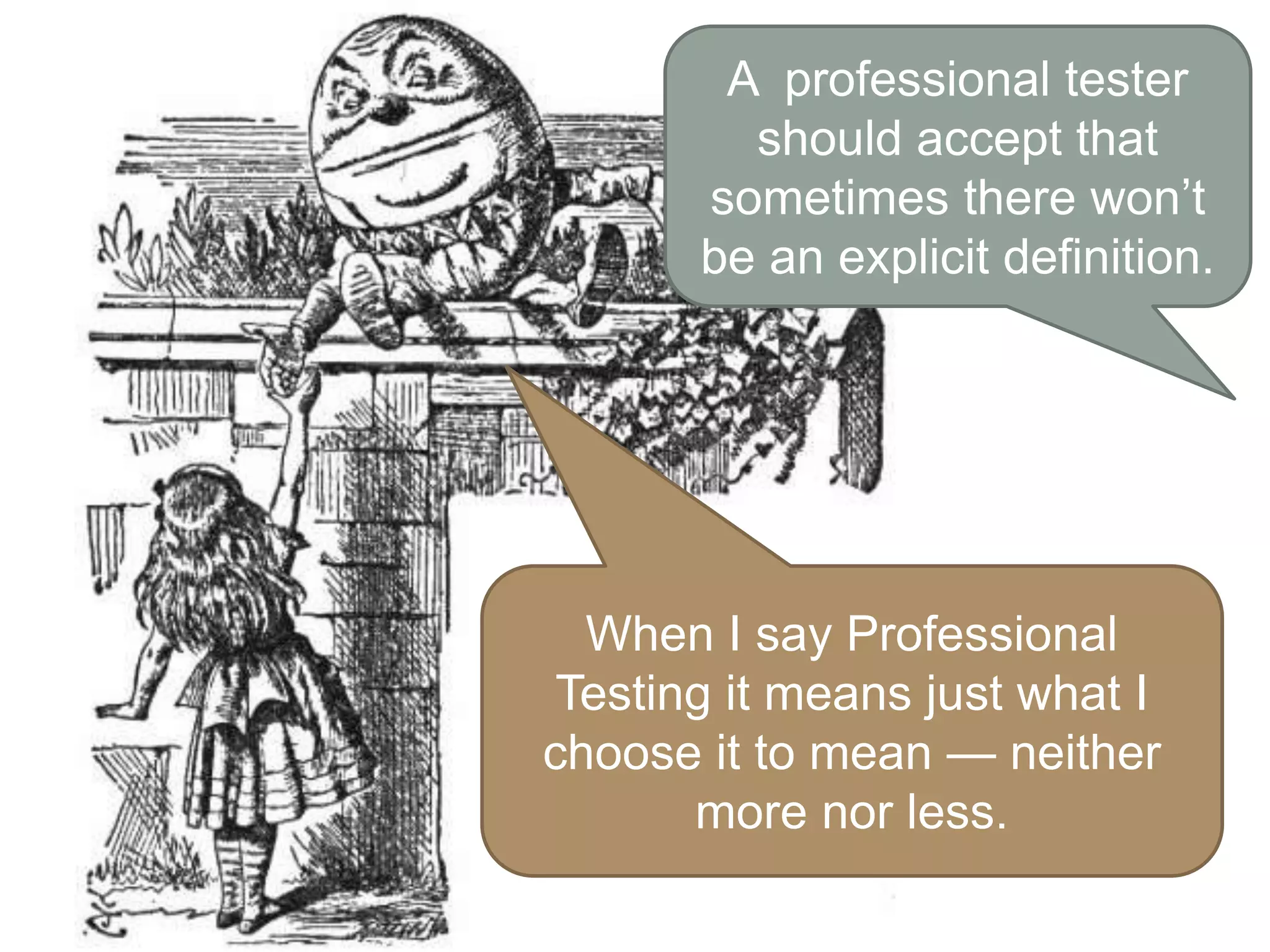

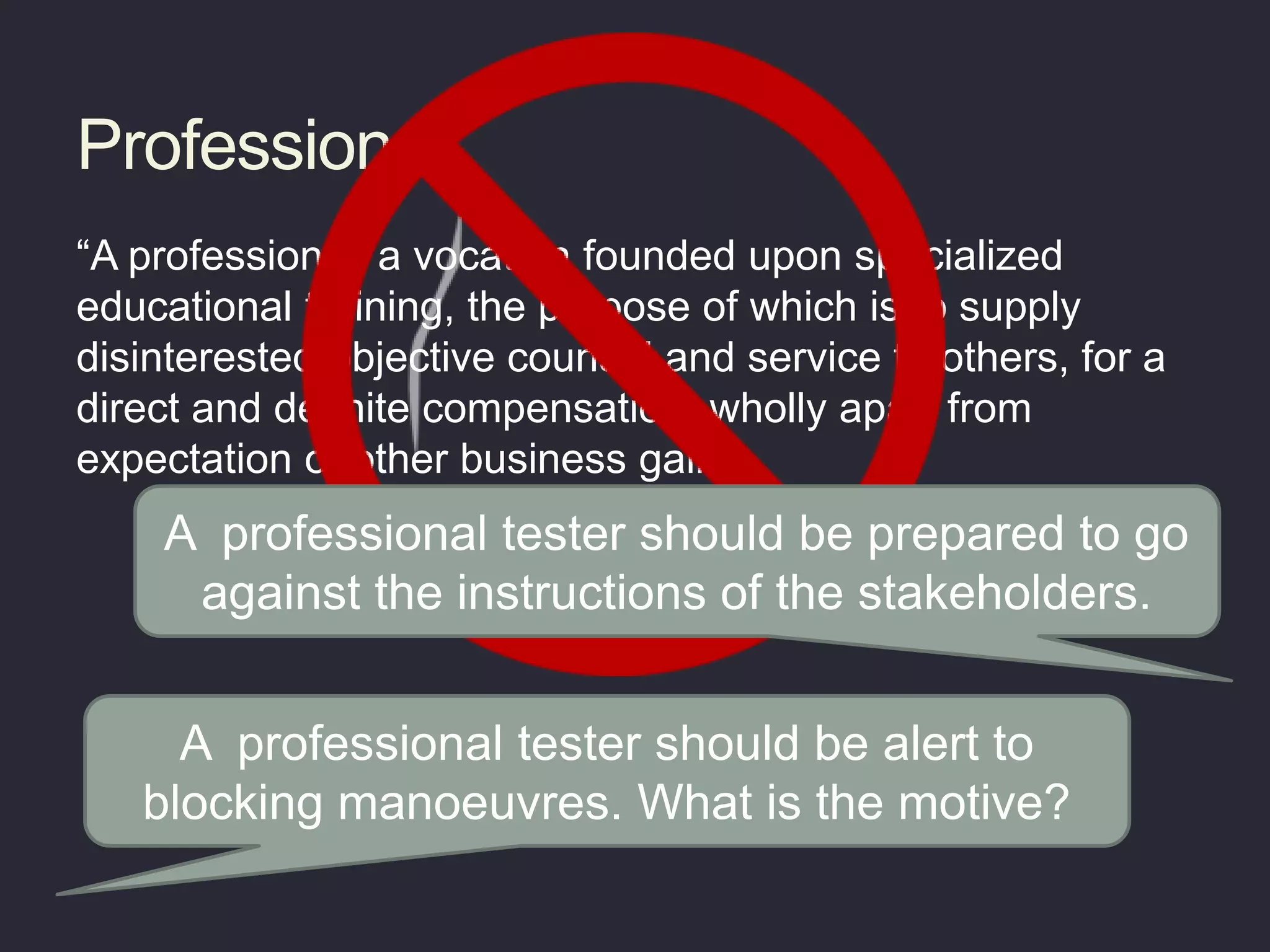

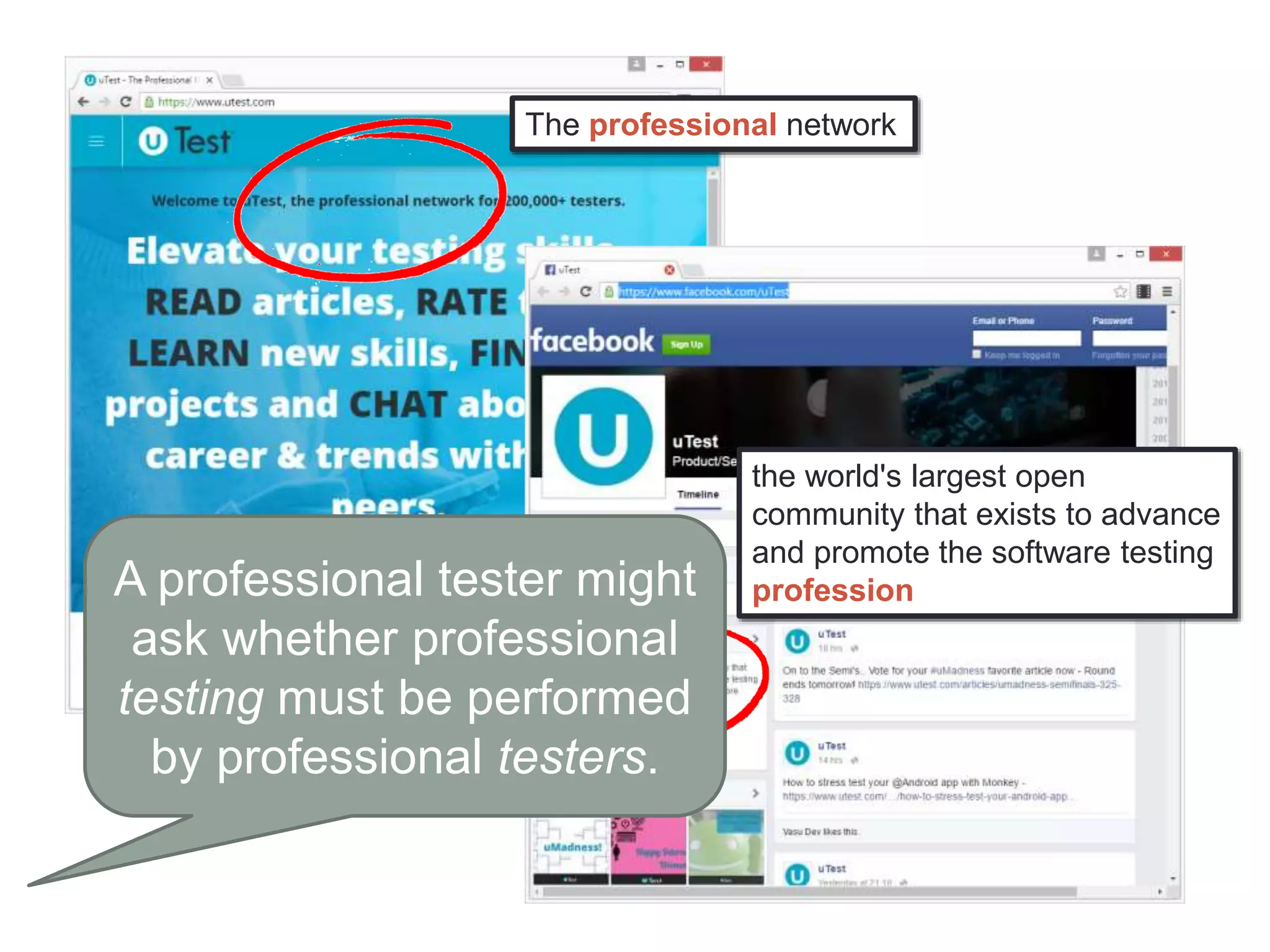

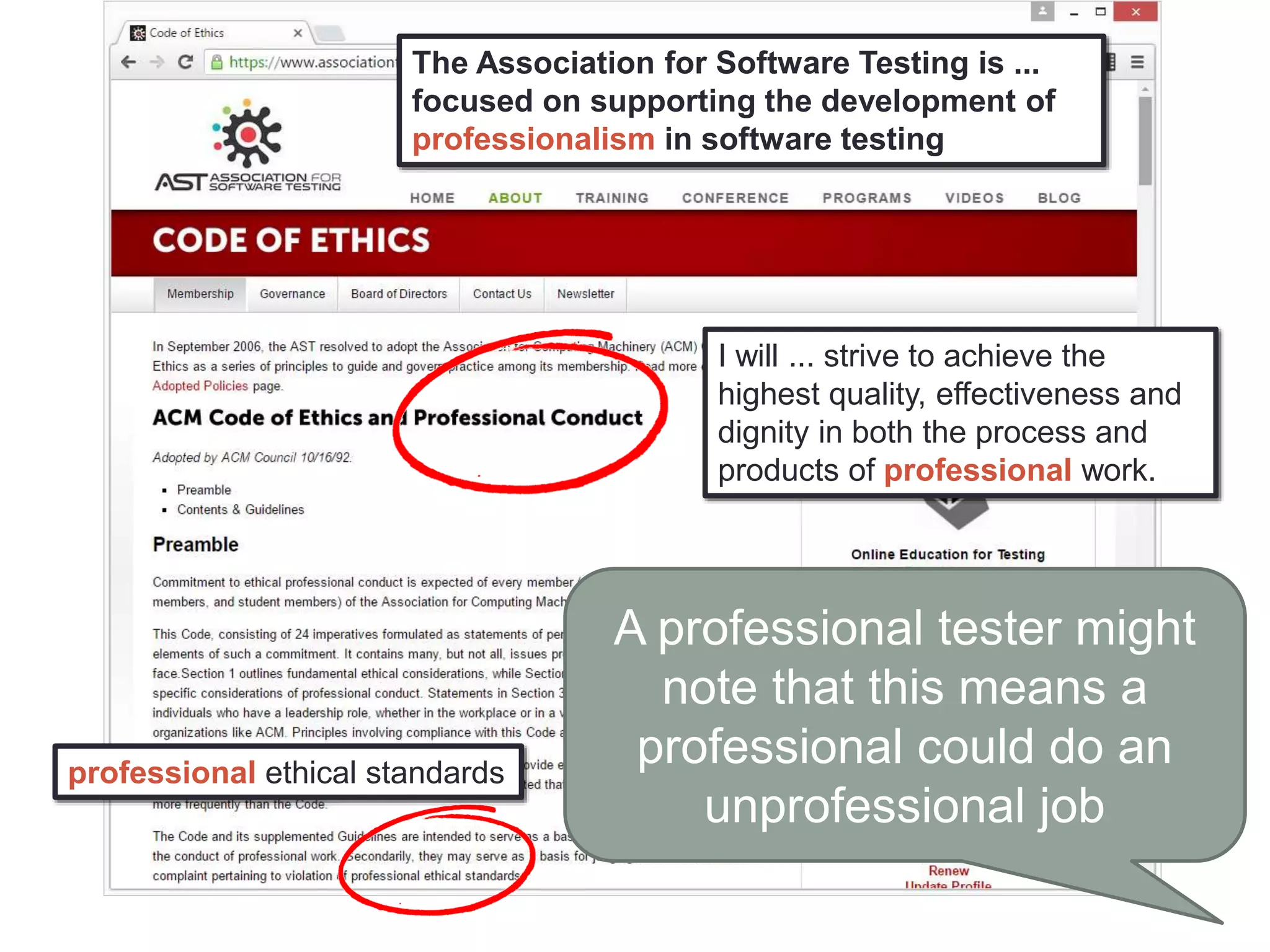

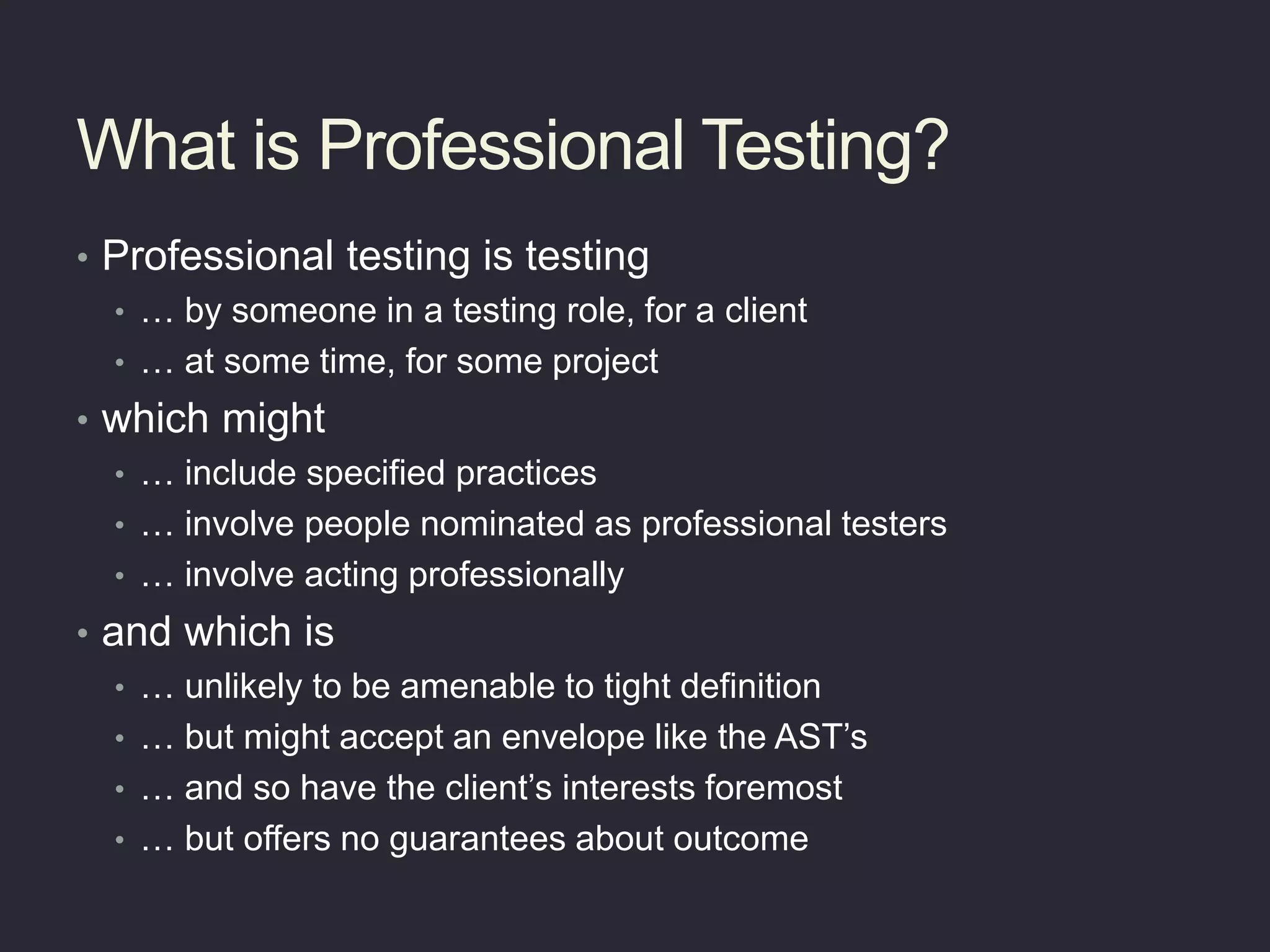

The document explores the concept of professional testing, defining it as testing conducted for a client by someone in a designated testing role, and emphasizes the importance of understanding the nuances, motivations, and missions involved. It distinguishes professionalism from acting professionally, noting that professional testing is difficult to define strictly but is anchored in ethical standards and the client's interests. Finally, it raises questions about the nature of professional testing and the roles and responsibilities of professional testers.