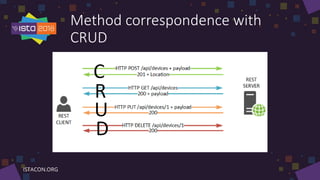

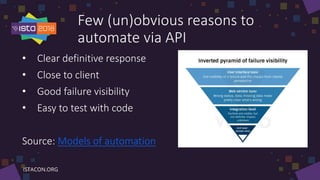

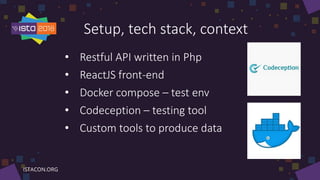

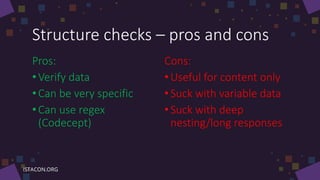

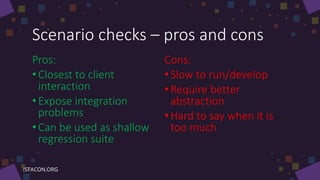

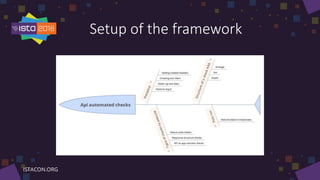

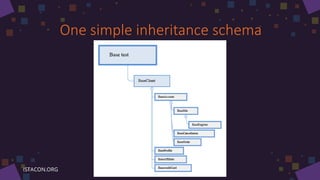

This document shares lessons learned from automating API testing. It explains what an API is and why API tests are worth automating, including that they return clear responses and exercise the system close to how a client experiences it. It outlines the types of tests to automate (status code checks, structure checks, and scenario checks) and discusses cognitive barriers to testing, test setup and environment, the use of an automation framework, and the separation of test logic from application logic. The goal is not to provide a how-to guide but to discuss lessons learned about effective API testing.
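
To make the three test types concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the document's own method: `FakeUserApi`, `Response`, the endpoints, and the field names are all hypothetical, and an in-memory stand-in replaces a real HTTP service so the example runs without a network. The check functions live apart from the API stand-in, which is one simple way to keep test logic separate from application logic.

```python
from dataclasses import dataclass, field


@dataclass
class Response:
    """Hypothetical response: an HTTP-like status code and a JSON-like body."""
    status: int
    body: dict


@dataclass
class FakeUserApi:
    """Hypothetical in-memory stand-in for a real API (the 'application' side)."""
    users: dict = field(default_factory=dict)
    next_id: int = 1

    def create_user(self, name: str) -> Response:
        user = {"id": self.next_id, "name": name}
        self.users[self.next_id] = user
        self.next_id += 1
        return Response(201, user)

    def get_user(self, user_id: int) -> Response:
        user = self.users.get(user_id)
        if user is None:
            return Response(404, {"error": "not found"})
        return Response(200, user)


# Test logic, kept separate from the application stand-in above.

def check_status(response: Response, expected: int) -> bool:
    """Status code check: did the call return the expected code?"""
    return response.status == expected


def check_structure(response: Response, required: dict) -> bool:
    """Structure check: does the body hold each required field with the right type?"""
    return all(isinstance(response.body.get(name), kind)
               for name, kind in required.items())


def check_scenario(api: FakeUserApi) -> bool:
    """Scenario check: create a user, then confirm the same user can be fetched."""
    created = api.create_user("Ada")
    fetched = api.get_user(created.body["id"])
    return (check_status(created, 201)
            and check_status(fetched, 200)
            and fetched.body == created.body)
```

Against a real service, the same check functions could be reused by swapping the stand-in for a thin client that wraps actual HTTP calls.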