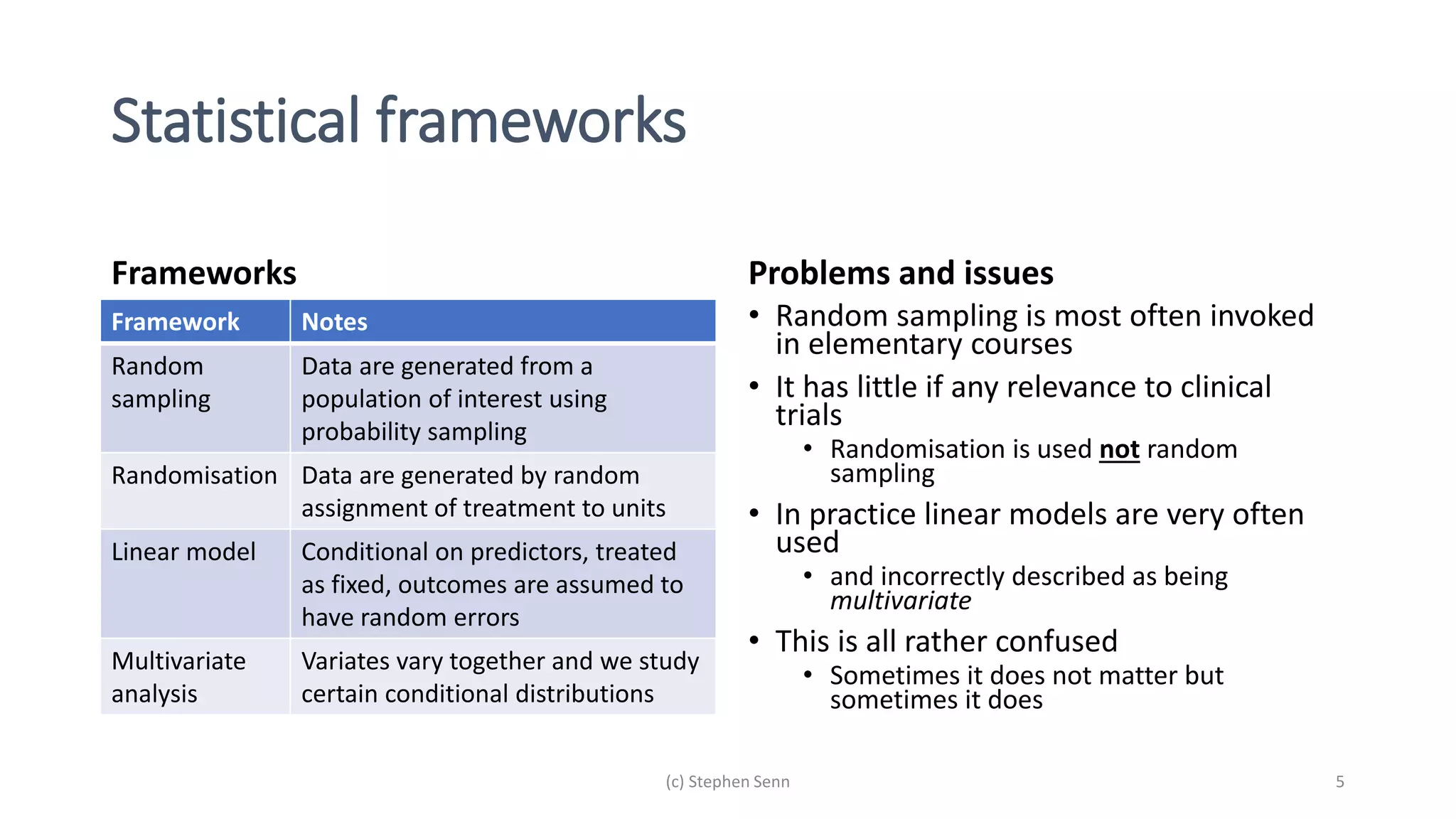

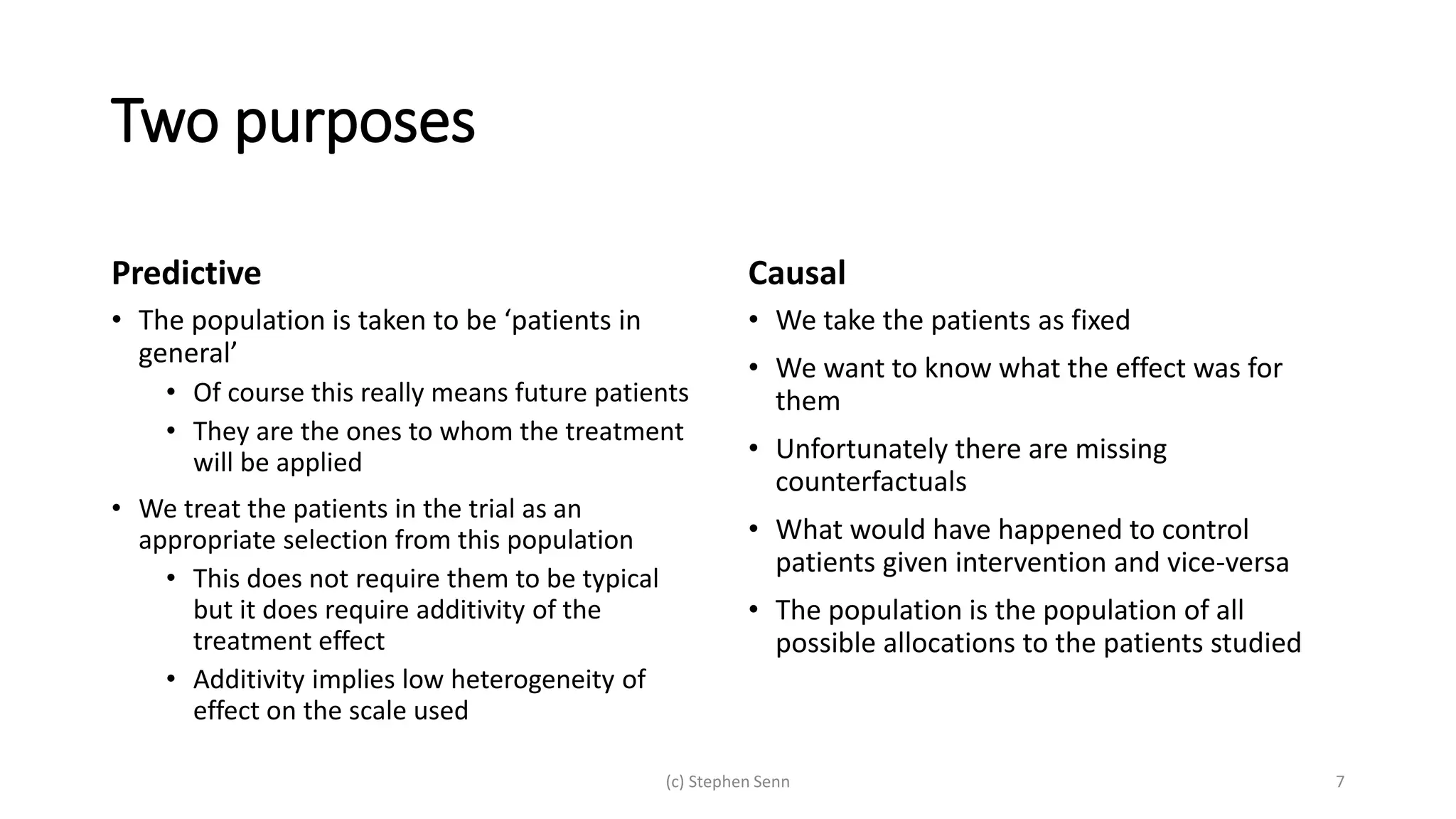

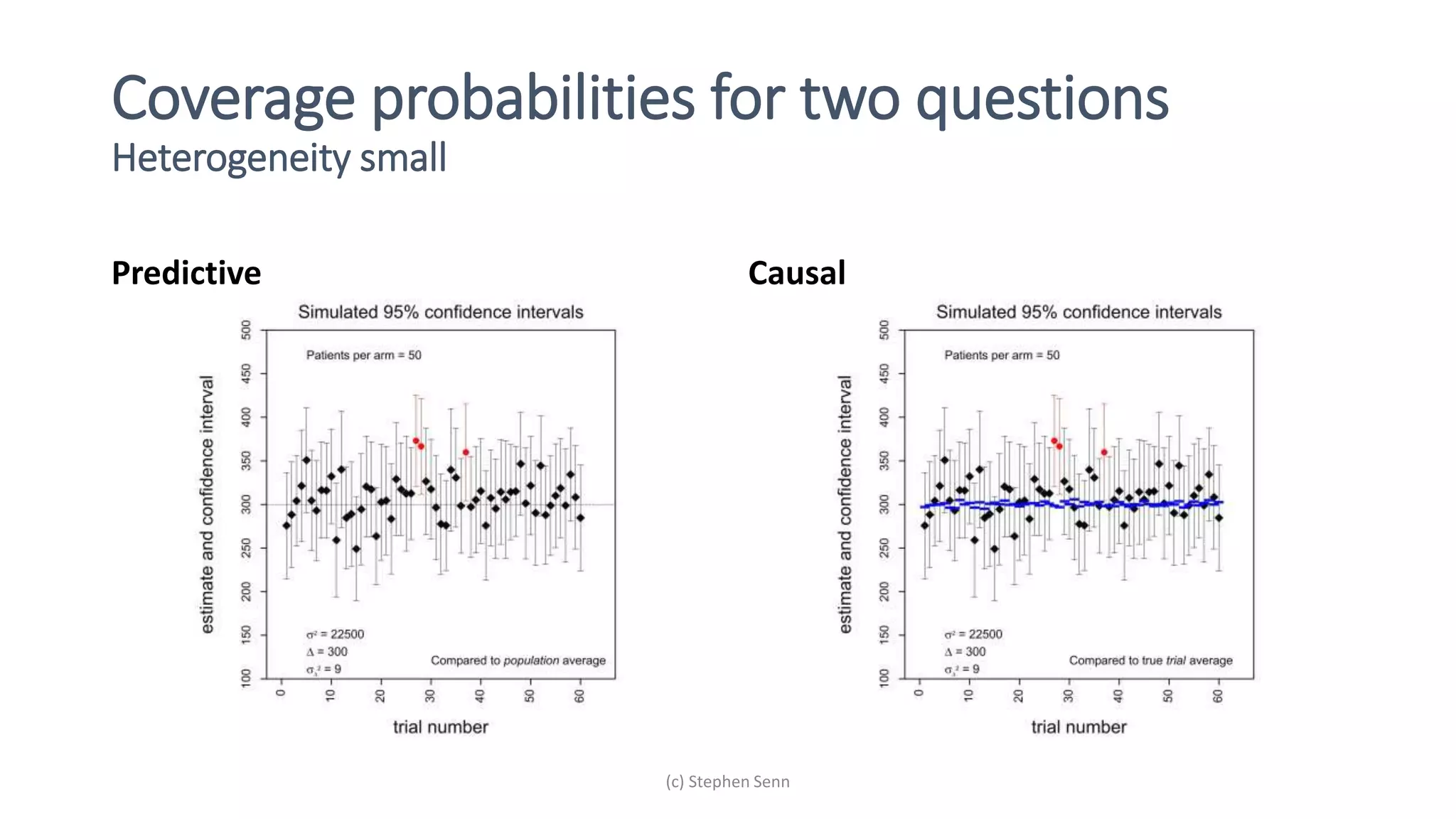

1) Clinical trials can aim to answer causal or predictive questions, but in either case the question and the assumptions behind it need to be stated clearly.

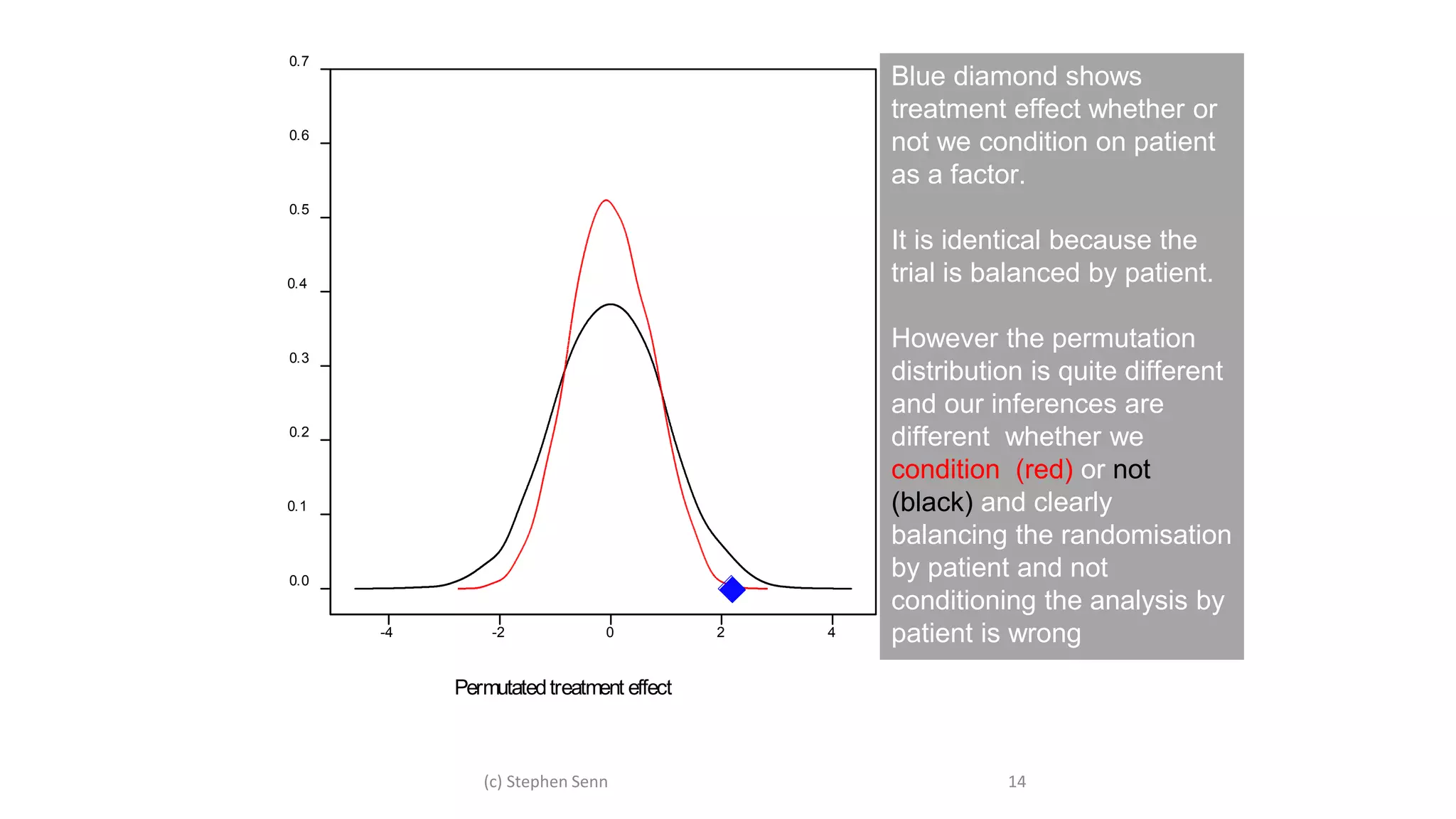

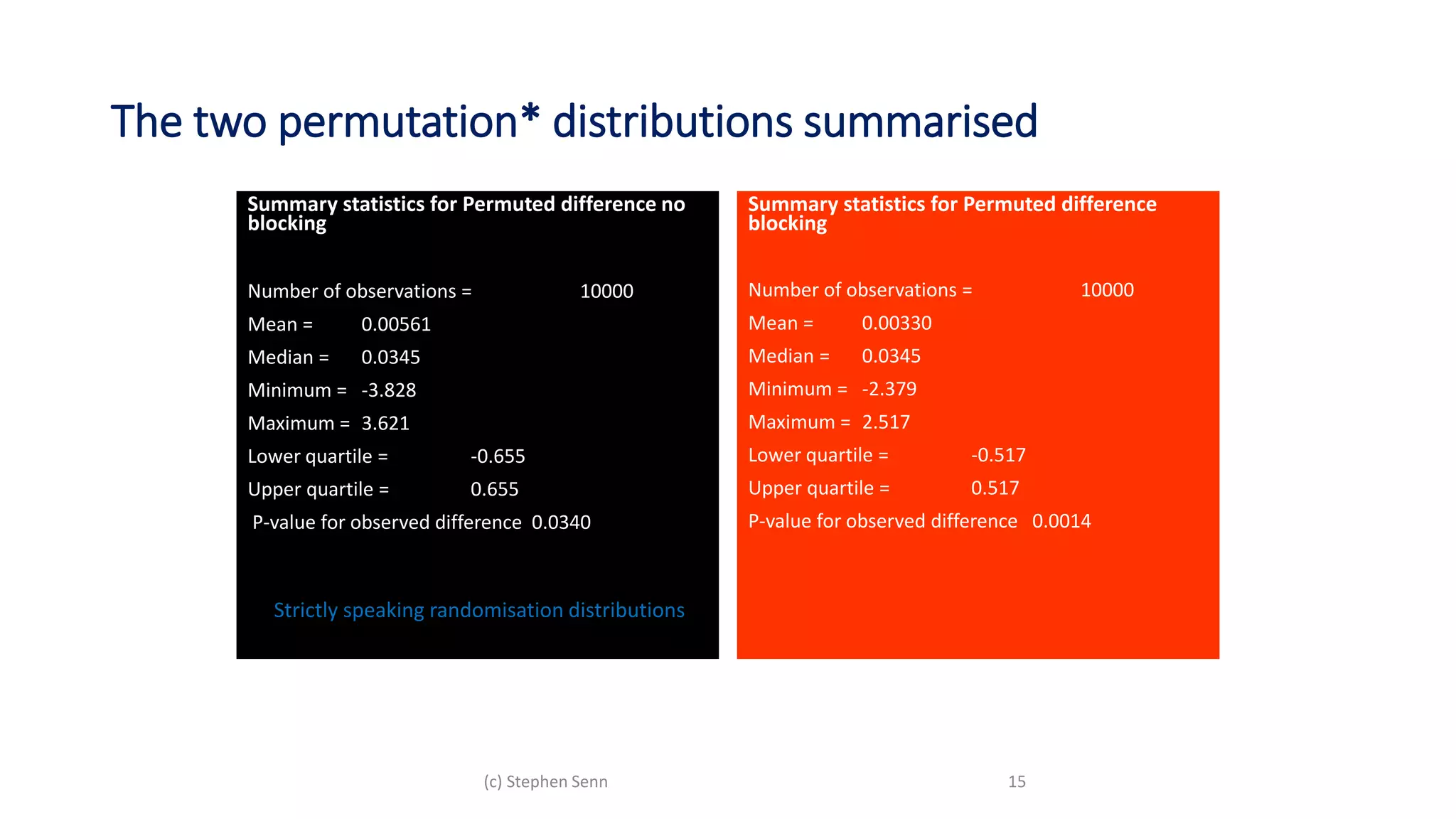

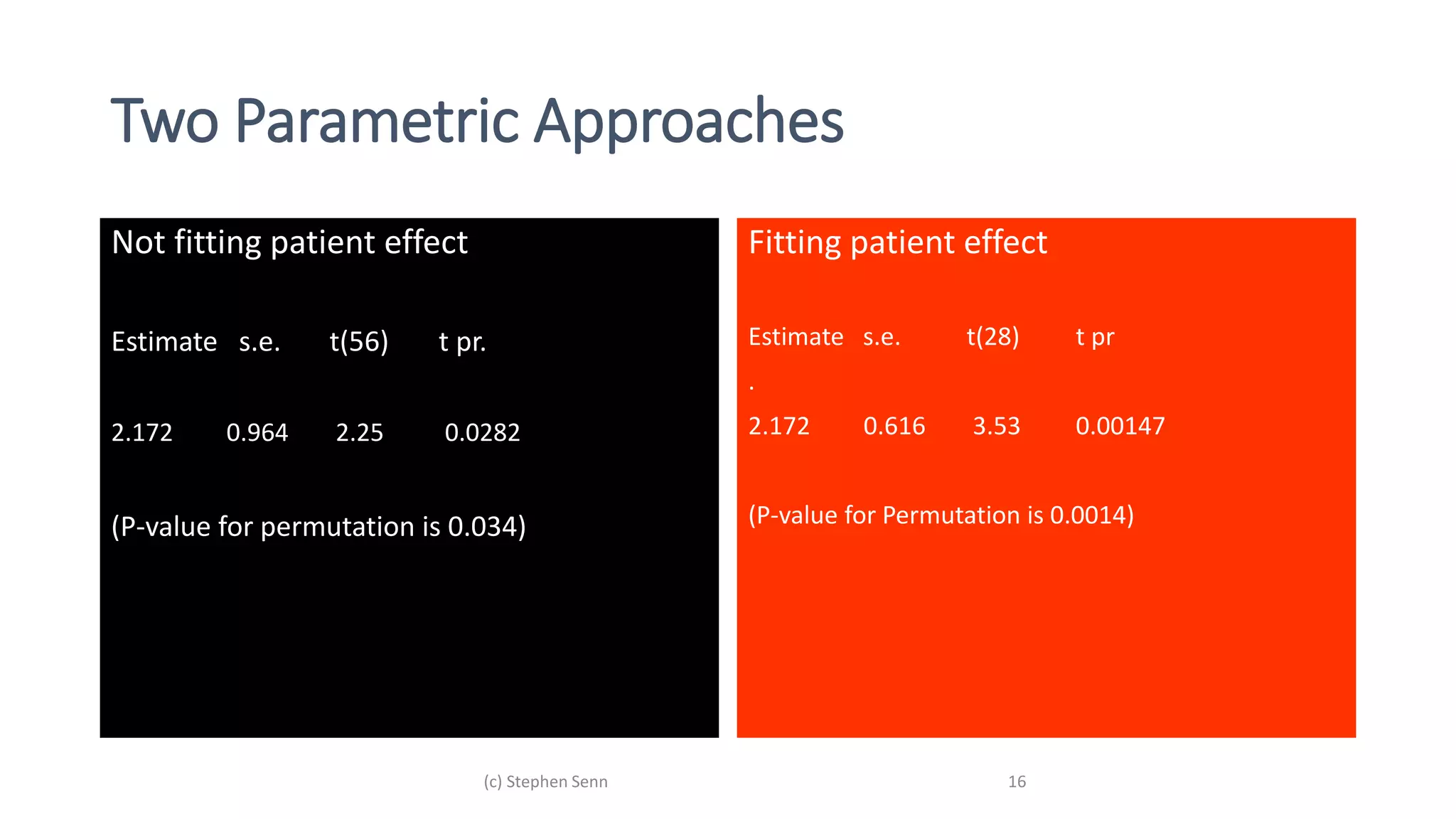

2) For causal questions, randomization-based analyses that account for study design are important. Linear models can be valid if they respect randomization.
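
A minimal sketch of what "respecting the randomization" can mean in practice, assuming a blocked design in which treatment was allocated within blocks (for example, centres). The reference distribution is built by re-shuffling arm labels only within blocks, as the design did. The data, the function names, and the choice of a difference in means as the test statistic are all illustrative assumptions, not taken from the source.

```python
import random
from statistics import mean

def diff_in_means(outcomes, arms):
    """Treated-minus-control difference in mean outcome."""
    treated = [y for y, a in zip(outcomes, arms) if a == 1]
    control = [y for y, a in zip(outcomes, arms) if a == 0]
    return mean(treated) - mean(control)

def rerandomize_within_blocks(arms, blocks, rng):
    """Shuffle arm labels inside each block, mimicking the blocked design."""
    by_block = {}
    for i, b in enumerate(blocks):
        by_block.setdefault(b, []).append(i)
    new_arms = list(arms)
    for idx in by_block.values():
        labels = [arms[i] for i in idx]
        rng.shuffle(labels)
        for i, a in zip(idx, labels):
            new_arms[i] = a
    return new_arms

def randomization_test(outcomes, arms, blocks, n_draws=2000, seed=0):
    """Two-sided Monte Carlo randomization test under the sharp null."""
    rng = random.Random(seed)
    observed = diff_in_means(outcomes, arms)
    extreme = 0
    for _ in range(n_draws):
        redrawn = rerandomize_within_blocks(arms, blocks, rng)
        # Small tolerance so ties with the observed value count as extreme.
        if abs(diff_in_means(outcomes, redrawn)) >= abs(observed) - 1e-12:
            extreme += 1
    # Add-one correction keeps the Monte Carlo p-value strictly positive.
    return observed, (extreme + 1) / (n_draws + 1)

# Hypothetical toy data: two blocks of four patients, two treated per block.
outcomes = [5.1, 6.3, 4.8, 6.9, 7.2, 8.4, 7.0, 8.8]
arms = [0, 1, 0, 1, 0, 1, 0, 1]
blocks = ["A"] * 4 + ["B"] * 4

observed, p_value = randomization_test(outcomes, arms, blocks)
print(f"difference in means: {observed:.3f}, randomization p-value: {p_value:.3f}")
```

Shuffling labels across the whole sample instead of within blocks would test against assignments the design could never have produced, which is the kind of mismatch this point warns about.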

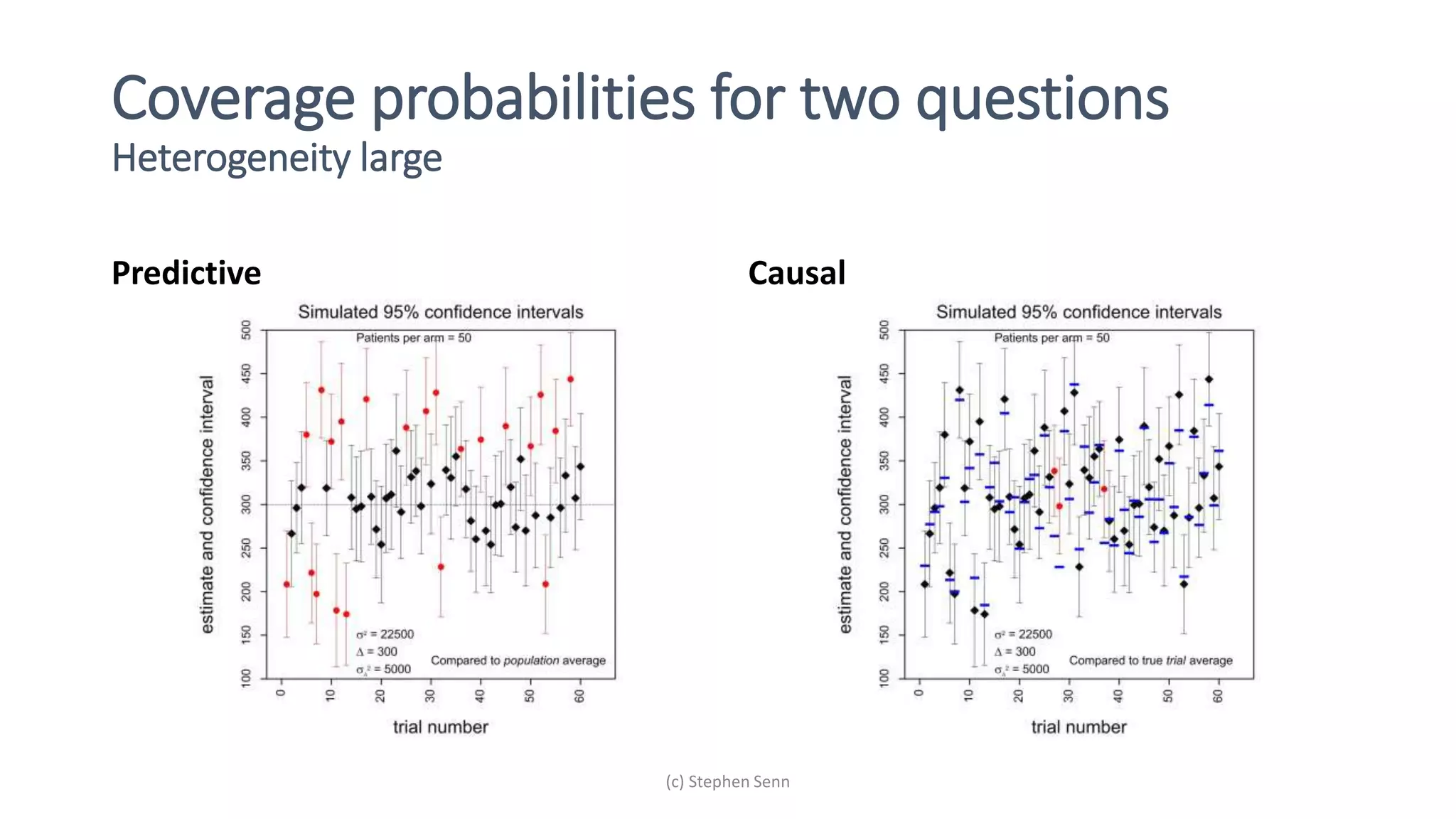

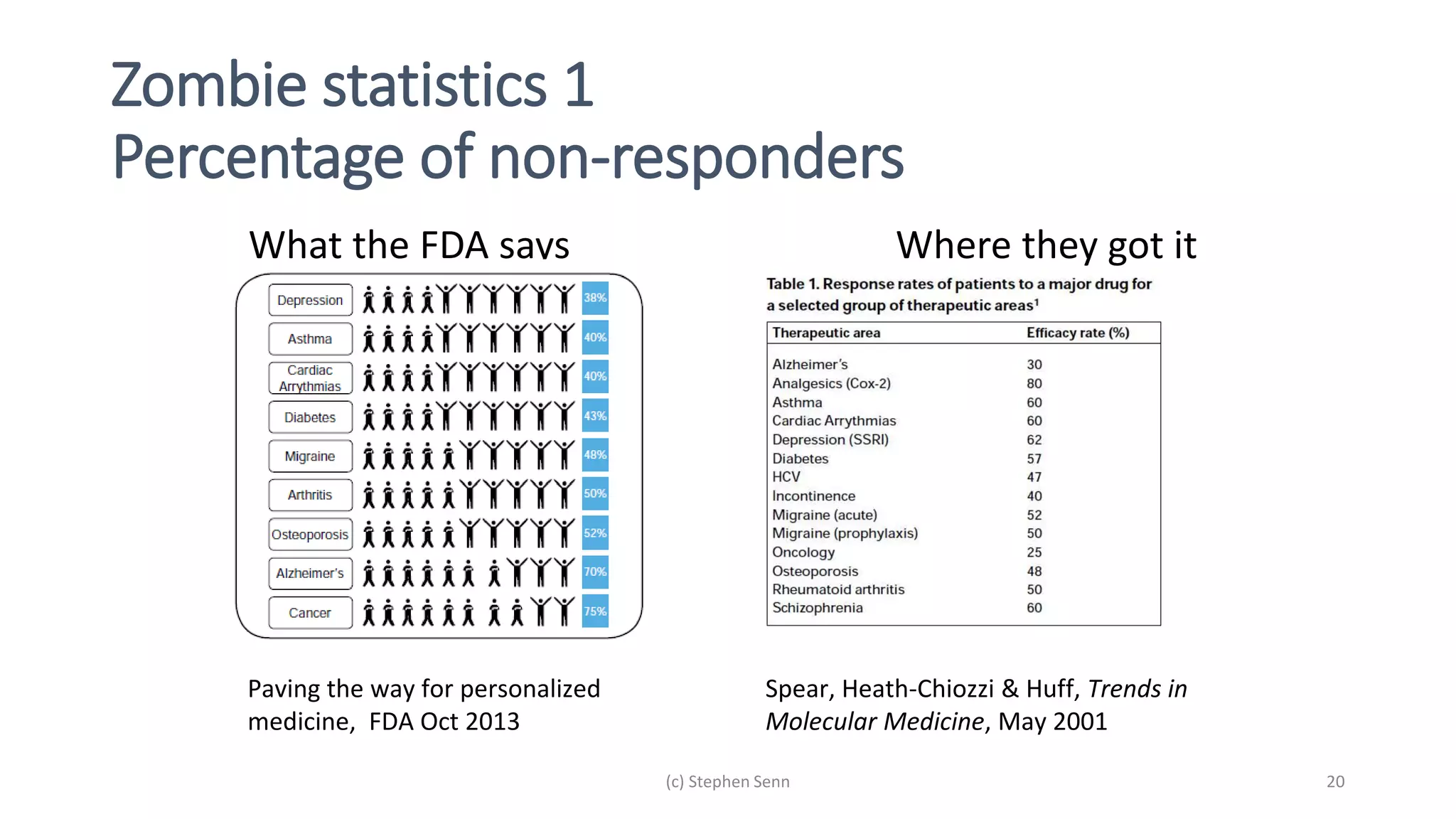

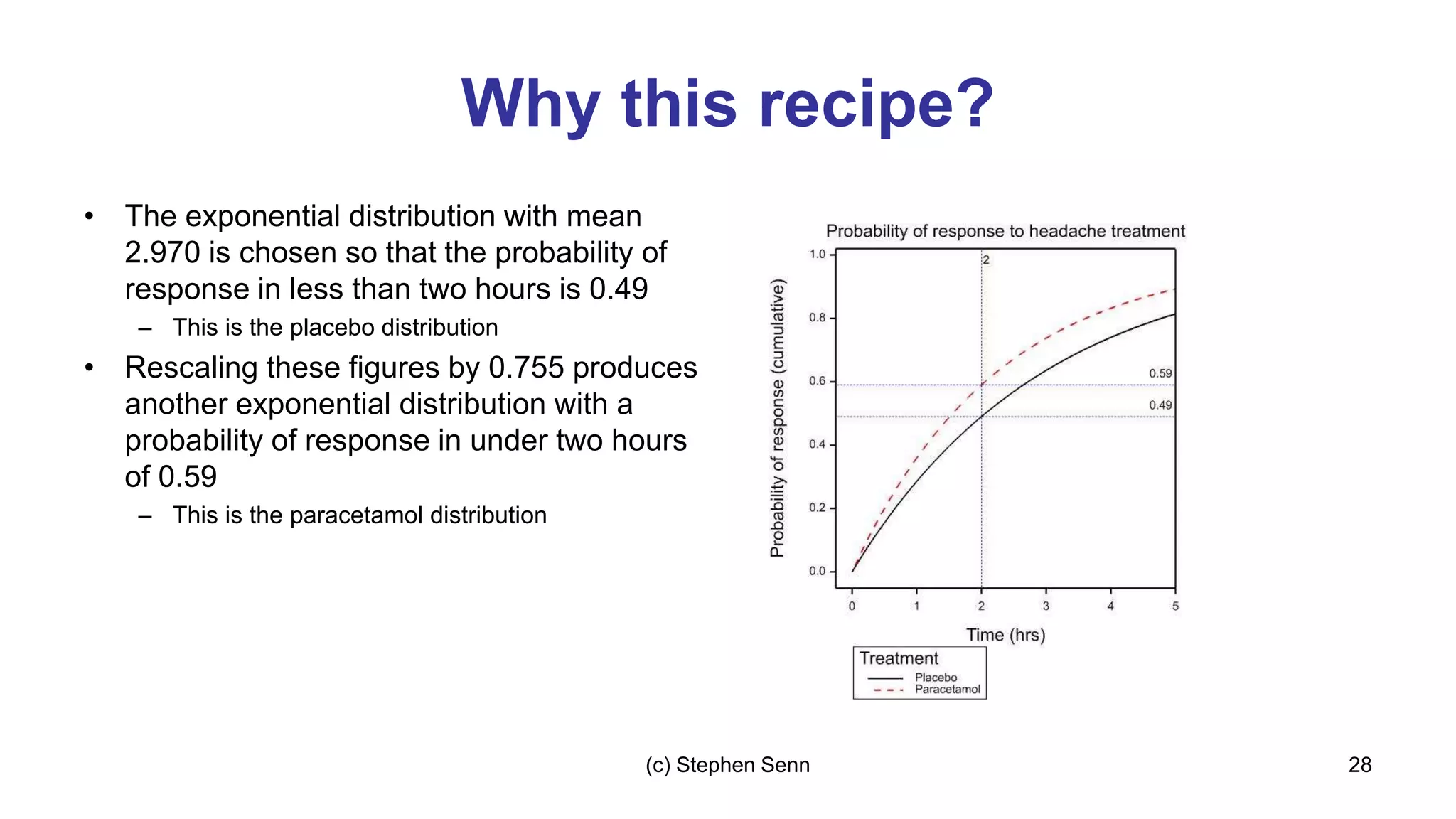

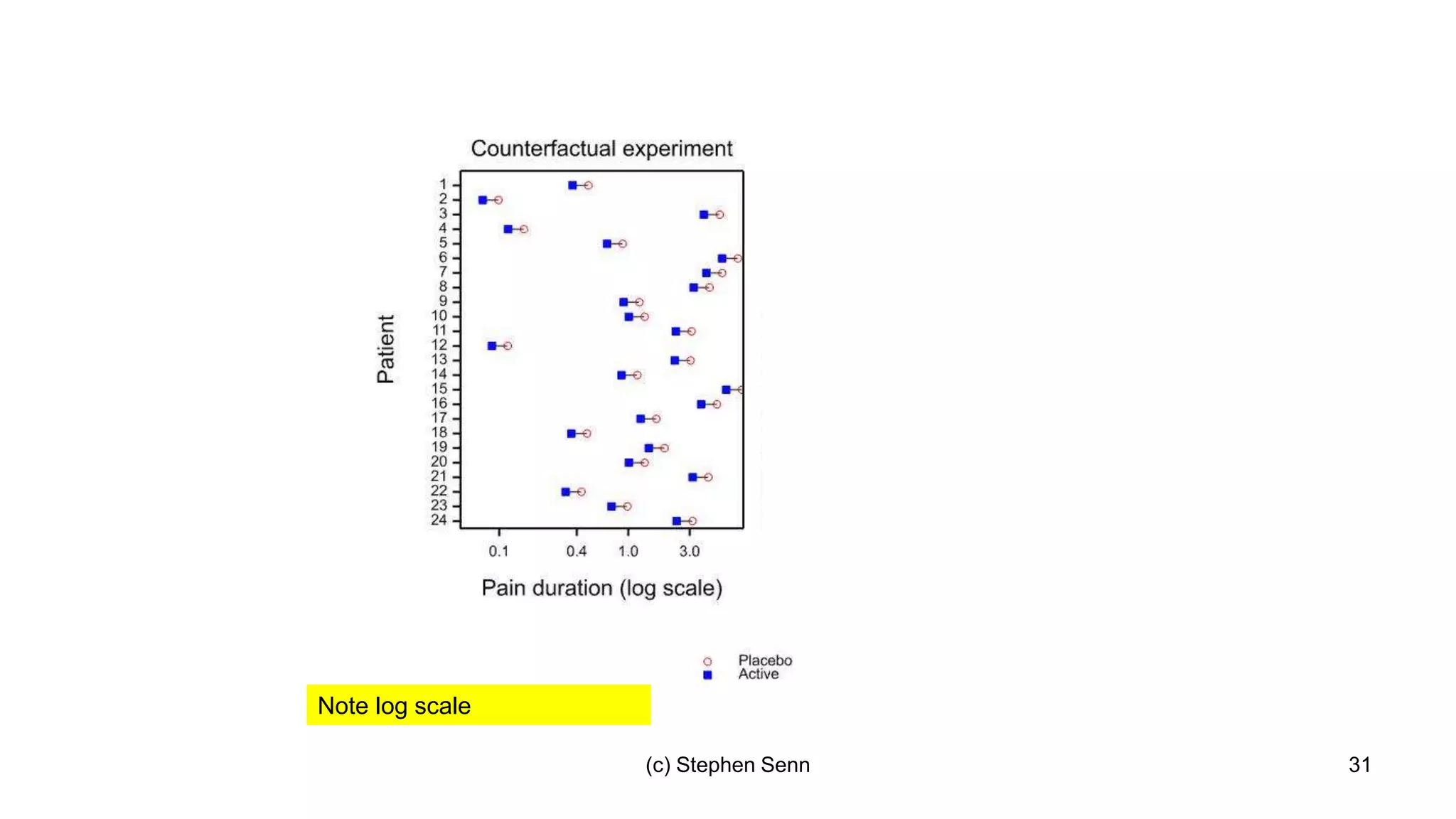

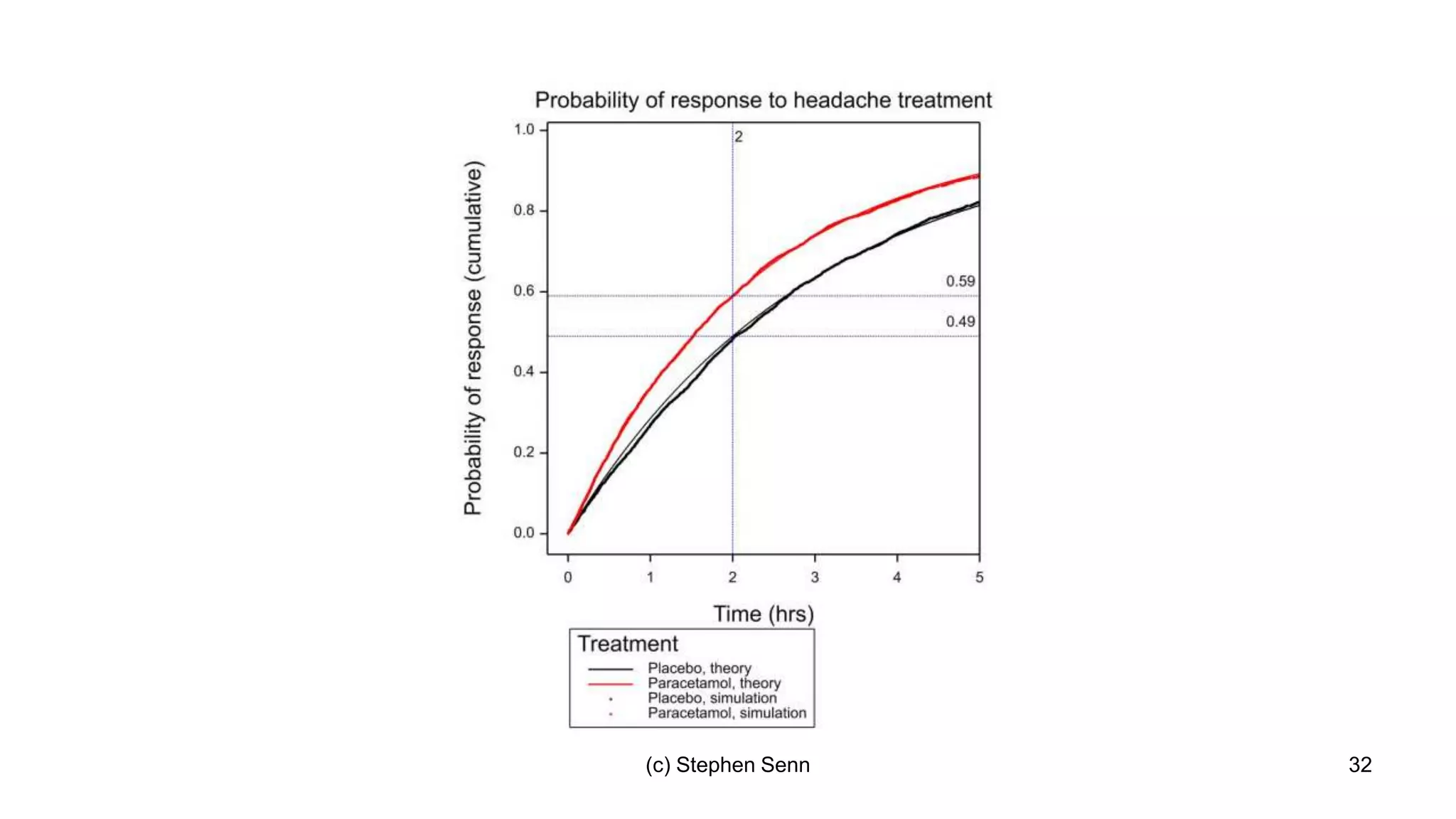

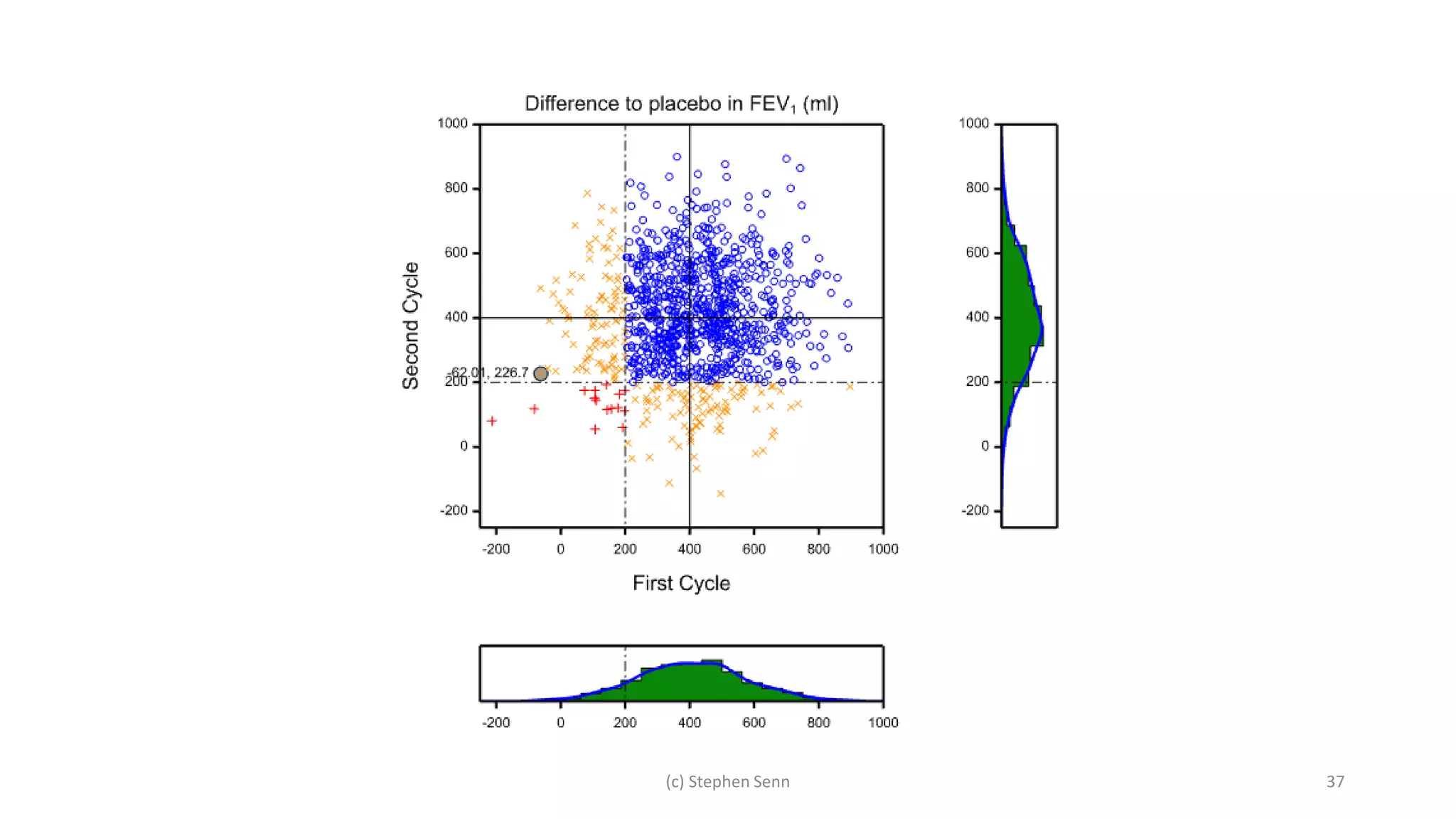

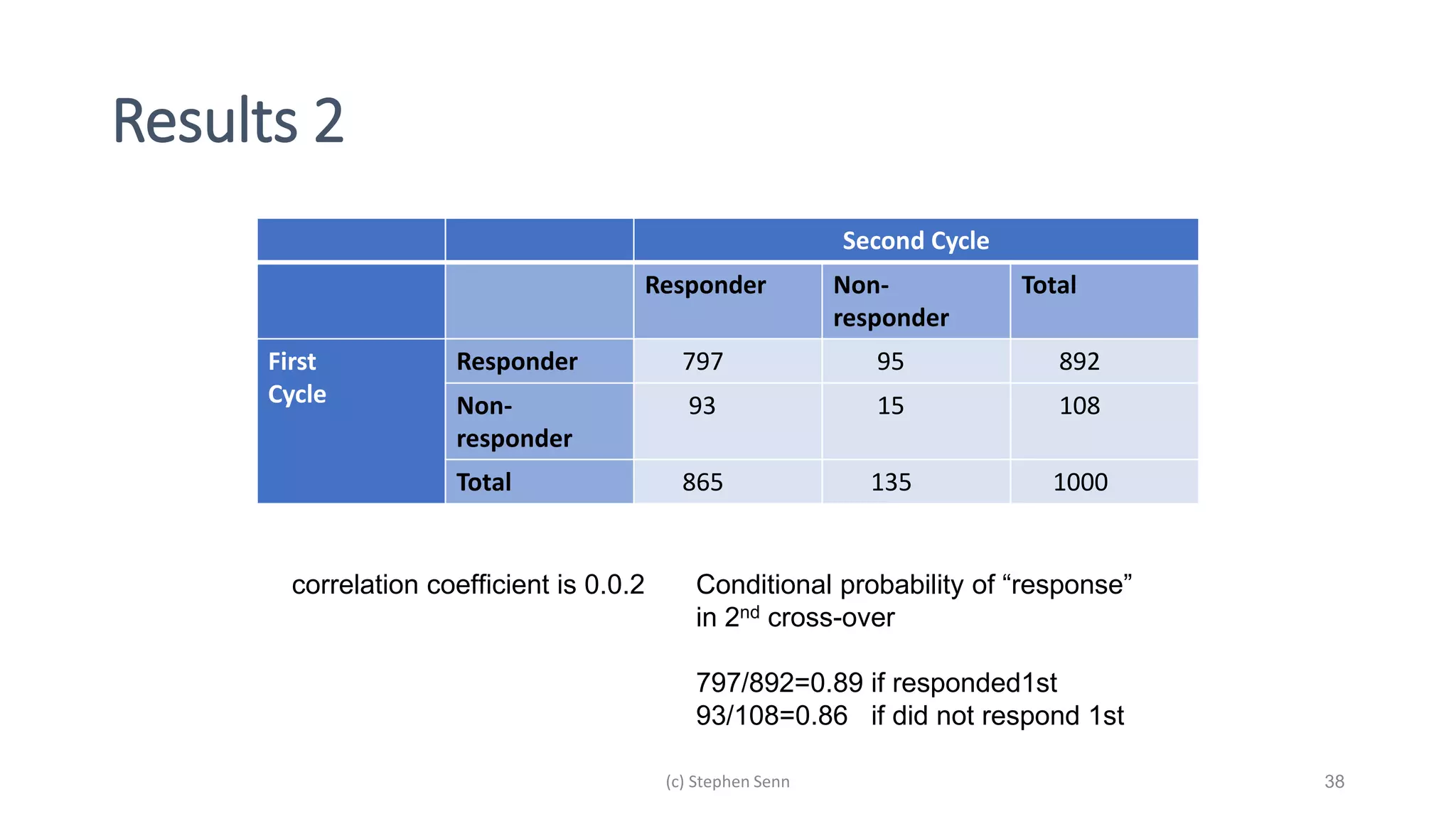

3) Claims of personalized medicine are often overstated because variation in trial data is misinterpreted as variation in individual response. Repeated trials on the same individuals, using designs such as n-of-1 trials, could provide more insight into response heterogeneity.
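
To illustrate why repetition within individuals helps, here is a rough sketch under simplifying assumptions: each patient completes several treatment/control cycles, each cycle yields one treated-minus-control difference, there is no carryover, and the noise is independent across cycles. With repeated cycles, the spread of patient-level mean effects can be compared with the spread expected from within-patient noise alone. The function names, parameters, and the method-of-moments estimator are illustrative choices, not something the source specifies.

```python
import random
from statistics import mean, variance

def simulate_n_of_1(n_patients, n_cycles, mean_effect, sd_effect, sd_noise, seed=0):
    """Simulate per-cycle treated-minus-control differences for each patient."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_patients):
        # Each patient has a personal true effect; sd_effect = 0 means no heterogeneity.
        true_effect = rng.gauss(mean_effect, sd_effect)
        data.append([true_effect + rng.gauss(0.0, sd_noise) for _ in range(n_cycles)])
    return data

def heterogeneity_estimate(data):
    """Method-of-moments estimate of the between-patient variance of true effects.

    Assumes every patient has the same number of cycles (at least two).
    """
    n_cycles = len(data[0])
    patient_means = [mean(d) for d in data]
    within_var = mean([variance(d) for d in data])   # cycle-to-cycle noise
    between_var = variance(patient_means)            # spread of patient means
    # Patient means vary by within_var / n_cycles even when true effects are identical.
    return max(0.0, between_var - within_var / n_cycles)

# Hypothetical scenarios: identical true effects versus genuinely varying effects.
constant = simulate_n_of_1(200, 4, mean_effect=2.0, sd_effect=0.0, sd_noise=3.0)
varying = simulate_n_of_1(200, 4, mean_effect=2.0, sd_effect=2.0, sd_noise=3.0)

print("estimated effect variance, constant effect:", heterogeneity_estimate(constant))
print("estimated effect variance, varying effect: ", heterogeneity_estimate(varying))
```

With a single measurement per patient, as in a conventional parallel-group trial, the within-patient term cannot be estimated, so the two scenarios cannot be told apart from outcome variation alone.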