Vox - Making voiceprints out of text content.

•

0 likes•358 views

"It works!" Of all the words in the English language, those two have to be the sweetest. Especially when heard at regular intervals. Here's one more demo slide of the grammar parser at work.

Report

Share

Report

Share

Download to read offline

Recommended

Metaphic or the art of looking another way.

For all intents and purposes, we are our words. And verbs and adjectives capture actions and sentiments better than any other tool. Metaphic is premised on the belief that a grammar book and a calculator are all you really need to make sense of web search and social media chatter, apart from all text, in general.

Make Social Media Talk Business

The document discusses a social media monitoring and analysis service called The Conversation Company. It offers the following:

1. Proprietary technology to analyze social media text data and provide insights beyond basic analytics.

2. Services including social media monitoring, analysis and reporting, and text analysis for domains like retail, food, and telecom.

3. Real-time tracking of brands and competitors on social media to understand consumer insights and identify influencer groups.

Term=machine+learning - Experiments in #textanalysis

The document discusses machine learning and different ways to search and analyze information related to the term. It provides examples of categorized search results on conferences in machine learning and using socialized search to resolve issues with a bank. It also analyzes social media traffic from Twitter and extracts important entities mentioned in relation to machine learning from blogs, Quora, Wired and other sources. Finally, it discusses building knowledge by looking at related terms and controlling relevance through simple rules and prefix/suffix analysis.

Ta da!

Big Data analytics combined with text and grammar analysis can add narrative dimensions to data by understanding audience moods, consumer correlations, and discovering new content. With parts of speech tagging, data becomes more insightful by revealing patterns, connections, and correlations beyond just statistics. Text and data analysis using a proprietary framework parser as a cloud-hosted web service can transform data into actionable intelligence and business insights through stories rather than just statistics.

Search vs. find

The document discusses Infosys and provides 3 demo links analyzing how the term "Infosys" is categorized, contexts are extracted, and a bag of words analysis. It notes that the demos are home-grown experiments that are not fully developed and may have glitches. Contact information is provided for Metaphic.

Tutorial - Speech Synthesis System

Speech synthesis we can, in theory, mean any kind of synthetization of speech. For example, it can be the process in which a speech decoder generates the speech signal based on the parameters it has received through the transmission line, or it can be a procedure performed by a computer to estimate some kind of a presentation of the speech signal given a text input. Since there is a special course about the codecs (Puheen koodaus, Speech Coding), this chapter will concentrate on text-to-speech synthesis, or shortly TTS, which will be often referred to as speech synthesis to simplify the notation. Anyway, it is good to keep in mind that irrespective of what kind of synthesis we are dealing with, there are similar criteria in regard to the speech quality. We will return to this topic after a brief TTS motivation, and the rest of this chapter will be dedicated to the implementation point of view in TTS systems. Text-to-speech synthesis is a research field that has received a lot of attention and resources during the last couple of decades – for excellent reasons. One of the most interesting ideas (rather futuristic, though) is the fact that a workable TTS system, combined with a workable speech recognition device, would actually be an extremely efficient method for speech coding). It would provide incomparable compression ratio and flexible possibilities to choose the type of speech (e.g., breathless or hoarse), the fundamental frequency along with its range, the rhythm of speech, and several other effects. Furthermore, if the content of a message needs to be changed, it is much easier to retype the text than to record the signal again. Unfortunately this kind of a system does not yet exist for large vocabularies. Of course there are also numerous speech synthesis applications that are closer to being available than the one discussed above. For instance, a telephone inquiry system where the information is frequently updated, can use TTS to deliver answers to the customers. Speech synthesizers are also important to the visually impaired and to those who have lost their ability to speak. Several other examples can be found in everyday life, such as listening to the messages and news instead of reading them, and using hands-free functions through a voice interface in a car, and so on.

visH (fin).pptx

This document provides an outline and details of a student internship project on text-to-speech conversion using the Python programming language. The project was conducted at iPEC Solutions, which provides AI training and services. The student designed a text-to-speech system using tools including Praat, Audacity, and WaveSurfer. The system converts text to speech by extracting phonetic components, matching them to inventory items, and generating acoustic signals for output. The project aimed to help those with communication difficulties through improved accessibility of text-to-speech technology.

Film Company Logo Design and Analysis

The document discusses potential logo designs for a film company called RADO REEL. It begins by explaining the research conducted into existing film company logos and logo design conventions. Then, several initial logo design ideas are presented consisting of different combinations of graphical elements and fonts in bright colors contrasted against a black background. The document analyzes the strengths and weaknesses of each design. It concludes by selecting one final logo design that features a simplified graphical film reel element and structured font that contrasts to show professionalism while reflecting the filmmaker's name to make it highly recognizable.

Recommended

Metaphic or the art of looking another way.

For all intents and purposes, we are our words. And verbs and adjectives capture actions and sentiments better than any other tool. Metaphic is premised on the belief that a grammar book and a calculator are all you really need to make sense of web search and social media chatter, apart from all text, in general.

Make Social Media Talk Business

The document discusses a social media monitoring and analysis service called The Conversation Company. It offers the following:

1. Proprietary technology to analyze social media text data and provide insights beyond basic analytics.

2. Services including social media monitoring, analysis and reporting, and text analysis for domains like retail, food, and telecom.

3. Real-time tracking of brands and competitors on social media to understand consumer insights and identify influencer groups.

Term=machine+learning - Experiments in #textanalysis

The document discusses machine learning and different ways to search and analyze information related to the term. It provides examples of categorized search results on conferences in machine learning and using socialized search to resolve issues with a bank. It also analyzes social media traffic from Twitter and extracts important entities mentioned in relation to machine learning from blogs, Quora, Wired and other sources. Finally, it discusses building knowledge by looking at related terms and controlling relevance through simple rules and prefix/suffix analysis.

Ta da!

Big Data analytics combined with text and grammar analysis can add narrative dimensions to data by understanding audience moods, consumer correlations, and discovering new content. With parts of speech tagging, data becomes more insightful by revealing patterns, connections, and correlations beyond just statistics. Text and data analysis using a proprietary framework parser as a cloud-hosted web service can transform data into actionable intelligence and business insights through stories rather than just statistics.

Search vs. find

The document discusses Infosys and provides 3 demo links analyzing how the term "Infosys" is categorized, contexts are extracted, and a bag of words analysis. It notes that the demos are home-grown experiments that are not fully developed and may have glitches. Contact information is provided for Metaphic.

Tutorial - Speech Synthesis System

Speech synthesis we can, in theory, mean any kind of synthetization of speech. For example, it can be the process in which a speech decoder generates the speech signal based on the parameters it has received through the transmission line, or it can be a procedure performed by a computer to estimate some kind of a presentation of the speech signal given a text input. Since there is a special course about the codecs (Puheen koodaus, Speech Coding), this chapter will concentrate on text-to-speech synthesis, or shortly TTS, which will be often referred to as speech synthesis to simplify the notation. Anyway, it is good to keep in mind that irrespective of what kind of synthesis we are dealing with, there are similar criteria in regard to the speech quality. We will return to this topic after a brief TTS motivation, and the rest of this chapter will be dedicated to the implementation point of view in TTS systems. Text-to-speech synthesis is a research field that has received a lot of attention and resources during the last couple of decades – for excellent reasons. One of the most interesting ideas (rather futuristic, though) is the fact that a workable TTS system, combined with a workable speech recognition device, would actually be an extremely efficient method for speech coding). It would provide incomparable compression ratio and flexible possibilities to choose the type of speech (e.g., breathless or hoarse), the fundamental frequency along with its range, the rhythm of speech, and several other effects. Furthermore, if the content of a message needs to be changed, it is much easier to retype the text than to record the signal again. Unfortunately this kind of a system does not yet exist for large vocabularies. Of course there are also numerous speech synthesis applications that are closer to being available than the one discussed above. For instance, a telephone inquiry system where the information is frequently updated, can use TTS to deliver answers to the customers. Speech synthesizers are also important to the visually impaired and to those who have lost their ability to speak. Several other examples can be found in everyday life, such as listening to the messages and news instead of reading them, and using hands-free functions through a voice interface in a car, and so on.

visH (fin).pptx

This document provides an outline and details of a student internship project on text-to-speech conversion using the Python programming language. The project was conducted at iPEC Solutions, which provides AI training and services. The student designed a text-to-speech system using tools including Praat, Audacity, and WaveSurfer. The system converts text to speech by extracting phonetic components, matching them to inventory items, and generating acoustic signals for output. The project aimed to help those with communication difficulties through improved accessibility of text-to-speech technology.

Film Company Logo Design and Analysis

The document discusses potential logo designs for a film company called RADO REEL. It begins by explaining the research conducted into existing film company logos and logo design conventions. Then, several initial logo design ideas are presented consisting of different combinations of graphical elements and fonts in bright colors contrasted against a black background. The document analyzes the strengths and weaknesses of each design. It concludes by selecting one final logo design that features a simplified graphical film reel element and structured font that contrasts to show professionalism while reflecting the filmmaker's name to make it highly recognizable.

Writing 101 presentation

The document discusses the importance of context in writing. Great writing is appropriate, compelling, engaging, relevant, entertaining, succinct, and accurate. All writing serves a marketing purpose. Context is key - the audience, their needs, the message, desired outcomes, and appropriate tone must be considered. Drafters are instructed to write brief bios of themselves in three different contexts - a company website, sales proposal, and government contract - to demonstrate how message and tone change based on contextual factors. Feedback is encouraged to improve relevancy, quality, and suitability for each context.

Noa Ha'aman - 2017 - MojiSem: Varying Linguistic Purposes of Emoji in (Twitte...

Noa Ha'aman - 2017 - MojiSem: Varying Linguistic Purposes of Emoji in (Twitte...Association for Computational Linguistics

This document discusses research on analyzing the linguistic functions of emojis in tweets. It presents the results of a study that annotated emojis in a corpus of tweets with linguistic and discursive function tags. The study found it is possible to train a classifier to distinguish between emojis used as linguistic content words versus those used for paralinguistic or affective purposes, even with a small training set. However, accurately classifying multimodal emojis into specific categories like attitude or topic requires more data and feature engineering. Inter-annotator agreement was high for content words but lower for more subjective multimodal subcategories. The document concludes more annotation data is needed to better model the variety of emoji uses and address issues like ambiguity and individualSEO + NLP - Redefining The Computer & Human Relationship.pdf

NLP is constantly used by software & computers. For SEO, understanding how this affects your keywords and phrases is vital for success.

NLP (Natural Language Processing) is the process of computer science that allows computers the ability to understand the text and spoken words as closely to the human language as possible.

NLP is constantly used by software and computers – think Siri, Alexa, translation software, voice assistance, social media monitoring tools, chatbots, spam filters, and more importantly search engines.

IRJET- Voice based Billing System

This document presents a voice-based billing system that takes voice input from customers on purchased items and quantities and generates an itemized bill. It consists of three main modules: 1) A speech-to-text module that converts voice input into text using Google APIs. 2) A word tokenization module that splits the text into individual words using NLTK. 3) A bill generation module that takes the tokenized words as input to calculate the total bill amount. The system was tested on purchasing various fruits and achieved 90% accuracy compared to existing systems. It aims to reduce time complexity for billing compared to manual entry.

IBM cognitive service introduction

The document provides an overview of various cognitive services offered by IBM Watson Developer Cloud including AlchemyLanguage, Concept Expansion, Concept Insights, Dialog, Document Conversion, Language Translation, Natural Language Classifier, Personality Insights, Relationship Extraction, Retrieve and Rank, Speech to Text, Text to Speech, AlchemyVision, Visual Insights, Visual Recognition, AlchemyData News, and Tradeoff Analytics. It describes the purpose and capabilities of each service and includes demos and code samples for some of the services. Resources for developers are also listed at the end.

Key Phrases for Better Search

The document discusses how content analytics can enhance search capabilities. It provides examples of how key phrases, collocations, and statistically improbable phrases can be used to power related searches, cluster results, and enable faceted search. Beyond search, these content analytics techniques can be applied to applications like product recommendations, social media analysis, and customer experience analytics.

SAP (SPEECH AND AUDIO PROCESSING)

This document presents a mini project report on developing a text-to-speech synthesizer using .NET Framework. The objectives are to help visually impaired people read text and enable machines to communicate verbally. It describes the theoretical background of speech synthesis, the front-end and back-end processes, and details the use of concatenative synthesis using a recorded speech database. The document outlines the code, demonstrates the text-to-speech conversion, and discusses applications, advantages, limitations and future enhancements.

Multimedia-Lecture-3.pptx

The document discusses text and its use in multimedia. It describes factors that affect text legibility like font size and style. It recommends choosing easily readable fonts in few sizes and colors. It also discusses tools for editing and designing fonts used to create custom fonts and manipulate existing ones. These tools include Fontographer and Font Monger. The document also discusses using text in multimedia, like subtitles, and navigation elements like menus and buttons. Hypertext and hypermedia are discussed along with their structures like nodes, anchors, and links that allow non-linear navigation.

Speech recognition an overview

This document provides an overview of speech recognition technology. It defines key terms like utterances, pronunciation, and grammar. It describes how speech recognition works by explaining the acoustic model, grammar, and recognized text. It also discusses standards, performance measurement, and provides an example of Google Search by Voice.

Windows 11 voice input

What’s Microsoft Windows voice/speech input? What technologies does it use? How to achieve voice typing? Read this article to learn the details!

Text and Speech Analysis

The objective of this work is to provide a complete analysis of a piece of conversation, carrying out the following features:

- Phonologic features of dialogue and a brief statistical analysis;

Unlocking the Power of AI Text-to-Speech

AI Text-to-Speech (TTS) is an advanced technology that enables the conversion of written text into natural-sounding spoken words. It is a branch of artificial intelligence (AI) and speech synthesis that aims to create highly intelligible and expressive speech that closely mimics human speech patterns. By employing various algorithms and neural network models, AI TTS systems can generate synthesized voices that can be used in a wide range of applications, including accessibility aids, entertainment, virtual assistants, and more.

The advent of AI TTS has brought about significant advancements in human-computer interaction and communication. Its importance lies in its ability to bridge the gap between written information and auditory experiences, making content accessible to individuals with visual impairments or reading difficulties. AI TTS also finds applications in media and entertainment industries, where it enhances voice-overs in films, video games, and virtual reality experiences. Additionally, AI TTS technology powers virtual assistants, chatbots, and voice-enabled devices, providing more natural and interactive user experiences.

At a high level, AI TTS systems convert text into spoken words through a series of steps. The process typically involves text analysis and preprocessing to understand the linguistic structure and context, followed by acoustic modeling to generate the appropriate phonetic and prosodic features.

Finally, waveform synthesis techniques are employed to transform these features into a continuous and intelligible speech signal. AI TTS utilizes deep learning algorithms, such as recurrent neural networks (RNNs) or convolutional neural networks (CNNs), to learn from large amounts of training data and generate high-quality speech output.

As AI TTS continues to advance, it presents exciting possibilities for improving accessibility, entertainment, language learning, and beyond. This outline will delve into the fundamentals, components, challenges, and applications of AI Text-to-Speech, shedding light on its potential and exploring the implications of this groundbreaking technology.

Paper on Speech Recognition

The document discusses a proposed customized speech recognition system that can recognize any regional language. It does this by using Microsoft SAPI to convert words in regional languages to phonemes and store them in a custom grammar database along with their associated actions. During use, a user's spoken words are converted to phonemes using SAPI and compared to the custom grammar database to identify the associated action to perform. This allows the system to recognize and respond to voice commands in any language by training itself on a user's specific regional language.

Scriptwriting format conventions report

The document discusses different types of scripts used in various mediums:

1) A master scene script or "spec script" focuses on story over technical details to sell an idea to investors. It includes sluglines, narrative descriptions, and character text blocks.

2) A shooting script includes additional technical details needed for production beyond a spec script, such as camera angles and editing techniques. It is used by directors and crew.

3) A radio script focuses on how lines should be delivered, using specifications like distortions, volume levels, and proximity to microphones.

4) A video game script separates the story into a flowchart of choices and their outcomes, and script text for different narratives based on player decisions.

Big data

This document discusses text analysis tools and techniques. It provides an overview of 8 popular text analysis tools, including MonkeyLearn, Aylien, IBM Watson, Thematic, Google Cloud NLP, Amazon Comprehend, MeaningCloud, and Lexalytics. It then describes 5 common text analysis techniques: information extraction, categorization, clustering, visualization, and summarization. Finally, it outlines 7 basic functions of text analytics: language identification, tokenization, sentence breaking, part-of-speech tagging, chunking, syntax parsing, and sentence chaining.

NLP Deep Learning with Tensorflow

Understand what is natural language process and how can we approach this problem with deep learning especially using google tensorflow

MODULE 4-Text Analytics.pptx

This document provides an overview of text analysis and mining. It discusses key concepts like text pre-processing, representation, shallow parsing, stop words, stemming and lemmatization. Specific techniques covered include tokenization, part-of-speech tagging, Porter stemming algorithm. Applications mentioned are sentiment analysis, document similarity, cluster analysis. The document also provides a multi-step example of text analysis involving collecting raw text, representing text, computing TF-IDF, categorizing documents by topics, determining sentiments and gaining insights.

Global Situational Awareness of A.I. and where its headed

You can see the future first in San Francisco.

Over the past year, the talk of the town has shifted from $10 billion compute clusters to $100 billion clusters to trillion-dollar clusters. Every six months another zero is added to the boardroom plans. Behind the scenes, there’s a fierce scramble to secure every power contract still available for the rest of the decade, every voltage transformer that can possibly be procured. American big business is gearing up to pour trillions of dollars into a long-unseen mobilization of American industrial might. By the end of the decade, American electricity production will have grown tens of percent; from the shale fields of Pennsylvania to the solar farms of Nevada, hundreds of millions of GPUs will hum.

The AGI race has begun. We are building machines that can think and reason. By 2025/26, these machines will outpace college graduates. By the end of the decade, they will be smarter than you or I; we will have superintelligence, in the true sense of the word. Along the way, national security forces not seen in half a century will be un-leashed, and before long, The Project will be on. If we’re lucky, we’ll be in an all-out race with the CCP; if we’re unlucky, an all-out war.

Everyone is now talking about AI, but few have the faintest glimmer of what is about to hit them. Nvidia analysts still think 2024 might be close to the peak. Mainstream pundits are stuck on the wilful blindness of “it’s just predicting the next word”. They see only hype and business-as-usual; at most they entertain another internet-scale technological change.

Before long, the world will wake up. But right now, there are perhaps a few hundred people, most of them in San Francisco and the AI labs, that have situational awareness. Through whatever peculiar forces of fate, I have found myself amongst them. A few years ago, these people were derided as crazy—but they trusted the trendlines, which allowed them to correctly predict the AI advances of the past few years. Whether these people are also right about the next few years remains to be seen. But these are very smart people—the smartest people I have ever met—and they are the ones building this technology. Perhaps they will be an odd footnote in history, or perhaps they will go down in history like Szilard and Oppenheimer and Teller. If they are seeing the future even close to correctly, we are in for a wild ride.

Let me tell you what we see.

一比一原版(Glasgow毕业证书)格拉斯哥大学毕业证如何办理

毕业原版【微信:41543339】【(Glasgow毕业证书)格拉斯哥大学毕业证】【微信:41543339】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

My burning issue is homelessness K.C.M.O.

My burning issue is homelessness in Kansas City, MO

To: Tom Tresser

From: Roger Warren

一比一原版(Coventry毕业证书)考文垂大学毕业证如何办理

毕业原版【微信:41543339】【(Coventry毕业证书)考文垂大学毕业证】【微信:41543339】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

More Related Content

Similar to Vox - Making voiceprints out of text content.

Writing 101 presentation

The document discusses the importance of context in writing. Great writing is appropriate, compelling, engaging, relevant, entertaining, succinct, and accurate. All writing serves a marketing purpose. Context is key - the audience, their needs, the message, desired outcomes, and appropriate tone must be considered. Drafters are instructed to write brief bios of themselves in three different contexts - a company website, sales proposal, and government contract - to demonstrate how message and tone change based on contextual factors. Feedback is encouraged to improve relevancy, quality, and suitability for each context.

Noa Ha'aman - 2017 - MojiSem: Varying Linguistic Purposes of Emoji in (Twitte...

Noa Ha'aman - 2017 - MojiSem: Varying Linguistic Purposes of Emoji in (Twitte...Association for Computational Linguistics

This document discusses research on analyzing the linguistic functions of emojis in tweets. It presents the results of a study that annotated emojis in a corpus of tweets with linguistic and discursive function tags. The study found it is possible to train a classifier to distinguish between emojis used as linguistic content words versus those used for paralinguistic or affective purposes, even with a small training set. However, accurately classifying multimodal emojis into specific categories like attitude or topic requires more data and feature engineering. Inter-annotator agreement was high for content words but lower for more subjective multimodal subcategories. The document concludes more annotation data is needed to better model the variety of emoji uses and address issues like ambiguity and individualSEO + NLP - Redefining The Computer & Human Relationship.pdf

NLP is constantly used by software & computers. For SEO, understanding how this affects your keywords and phrases is vital for success.

NLP (Natural Language Processing) is the process of computer science that allows computers the ability to understand the text and spoken words as closely to the human language as possible.

NLP is constantly used by software and computers – think Siri, Alexa, translation software, voice assistance, social media monitoring tools, chatbots, spam filters, and more importantly search engines.

IRJET- Voice based Billing System

This document presents a voice-based billing system that takes voice input from customers on purchased items and quantities and generates an itemized bill. It consists of three main modules: 1) A speech-to-text module that converts voice input into text using Google APIs. 2) A word tokenization module that splits the text into individual words using NLTK. 3) A bill generation module that takes the tokenized words as input to calculate the total bill amount. The system was tested on purchasing various fruits and achieved 90% accuracy compared to existing systems. It aims to reduce time complexity for billing compared to manual entry.

IBM cognitive service introduction

The document provides an overview of various cognitive services offered by IBM Watson Developer Cloud including AlchemyLanguage, Concept Expansion, Concept Insights, Dialog, Document Conversion, Language Translation, Natural Language Classifier, Personality Insights, Relationship Extraction, Retrieve and Rank, Speech to Text, Text to Speech, AlchemyVision, Visual Insights, Visual Recognition, AlchemyData News, and Tradeoff Analytics. It describes the purpose and capabilities of each service and includes demos and code samples for some of the services. Resources for developers are also listed at the end.

Key Phrases for Better Search

The document discusses how content analytics can enhance search capabilities. It provides examples of how key phrases, collocations, and statistically improbable phrases can be used to power related searches, cluster results, and enable faceted search. Beyond search, these content analytics techniques can be applied to applications like product recommendations, social media analysis, and customer experience analytics.

SAP (SPEECH AND AUDIO PROCESSING)

This document presents a mini project report on developing a text-to-speech synthesizer using .NET Framework. The objectives are to help visually impaired people read text and enable machines to communicate verbally. It describes the theoretical background of speech synthesis, the front-end and back-end processes, and details the use of concatenative synthesis using a recorded speech database. The document outlines the code, demonstrates the text-to-speech conversion, and discusses applications, advantages, limitations and future enhancements.

Multimedia-Lecture-3.pptx

The document discusses text and its use in multimedia. It describes factors that affect text legibility like font size and style. It recommends choosing easily readable fonts in few sizes and colors. It also discusses tools for editing and designing fonts used to create custom fonts and manipulate existing ones. These tools include Fontographer and Font Monger. The document also discusses using text in multimedia, like subtitles, and navigation elements like menus and buttons. Hypertext and hypermedia are discussed along with their structures like nodes, anchors, and links that allow non-linear navigation.

Speech recognition an overview

This document provides an overview of speech recognition technology. It defines key terms like utterances, pronunciation, and grammar. It describes how speech recognition works by explaining the acoustic model, grammar, and recognized text. It also discusses standards, performance measurement, and provides an example of Google Search by Voice.

Windows 11 voice input

What’s Microsoft Windows voice/speech input? What technologies does it use? How to achieve voice typing? Read this article to learn the details!

Text and Speech Analysis

The objective of this work is to provide a complete analysis of a piece of conversation, carrying out the following features:

- Phonologic features of dialogue and a brief statistical analysis;

Unlocking the Power of AI Text-to-Speech

AI Text-to-Speech (TTS) is an advanced technology that enables the conversion of written text into natural-sounding spoken words. It is a branch of artificial intelligence (AI) and speech synthesis that aims to create highly intelligible and expressive speech that closely mimics human speech patterns. By employing various algorithms and neural network models, AI TTS systems can generate synthesized voices that can be used in a wide range of applications, including accessibility aids, entertainment, virtual assistants, and more.

The advent of AI TTS has brought about significant advancements in human-computer interaction and communication. Its importance lies in its ability to bridge the gap between written information and auditory experiences, making content accessible to individuals with visual impairments or reading difficulties. AI TTS also finds applications in media and entertainment industries, where it enhances voice-overs in films, video games, and virtual reality experiences. Additionally, AI TTS technology powers virtual assistants, chatbots, and voice-enabled devices, providing more natural and interactive user experiences.

At a high level, AI TTS systems convert text into spoken words through a series of steps. The process typically involves text analysis and preprocessing to understand the linguistic structure and context, followed by acoustic modeling to generate the appropriate phonetic and prosodic features.

Finally, waveform synthesis techniques are employed to transform these features into a continuous and intelligible speech signal. AI TTS utilizes deep learning algorithms, such as recurrent neural networks (RNNs) or convolutional neural networks (CNNs), to learn from large amounts of training data and generate high-quality speech output.

As AI TTS continues to advance, it presents exciting possibilities for improving accessibility, entertainment, language learning, and beyond. This outline will delve into the fundamentals, components, challenges, and applications of AI Text-to-Speech, shedding light on its potential and exploring the implications of this groundbreaking technology.

Paper on Speech Recognition

The document discusses a proposed customized speech recognition system that can recognize any regional language. It does this by using Microsoft SAPI to convert words in regional languages to phonemes and store them in a custom grammar database along with their associated actions. During use, a user's spoken words are converted to phonemes using SAPI and compared to the custom grammar database to identify the associated action to perform. This allows the system to recognize and respond to voice commands in any language by training itself on a user's specific regional language.

Scriptwriting format conventions report

The document discusses different types of scripts used in various mediums:

1) A master scene script or "spec script" focuses on story over technical details to sell an idea to investors. It includes sluglines, narrative descriptions, and character text blocks.

2) A shooting script includes additional technical details needed for production beyond a spec script, such as camera angles and editing techniques. It is used by directors and crew.

3) A radio script focuses on how lines should be delivered, using specifications like distortions, volume levels, and proximity to microphones.

4) A video game script separates the story into a flowchart of choices and their outcomes, and script text for different narratives based on player decisions.

Big data

This document discusses text analysis tools and techniques. It provides an overview of 8 popular text analysis tools, including MonkeyLearn, Aylien, IBM Watson, Thematic, Google Cloud NLP, Amazon Comprehend, MeaningCloud, and Lexalytics. It then describes 5 common text analysis techniques: information extraction, categorization, clustering, visualization, and summarization. Finally, it outlines 7 basic functions of text analytics: language identification, tokenization, sentence breaking, part-of-speech tagging, chunking, syntax parsing, and sentence chaining.

NLP Deep Learning with Tensorflow

Understand what is natural language process and how can we approach this problem with deep learning especially using google tensorflow

MODULE 4-Text Analytics.pptx

This document provides an overview of text analysis and mining. It discusses key concepts like text pre-processing, representation, shallow parsing, stop words, stemming and lemmatization. Specific techniques covered include tokenization, part-of-speech tagging, Porter stemming algorithm. Applications mentioned are sentiment analysis, document similarity, cluster analysis. The document also provides a multi-step example of text analysis involving collecting raw text, representing text, computing TF-IDF, categorizing documents by topics, determining sentiments and gaining insights.

Similar to Vox - Making voiceprints out of text content. (17)

Noa Ha'aman - 2017 - MojiSem: Varying Linguistic Purposes of Emoji in (Twitte...

Noa Ha'aman - 2017 - MojiSem: Varying Linguistic Purposes of Emoji in (Twitte...

SEO + NLP - Redefining The Computer & Human Relationship.pdf

SEO + NLP - Redefining The Computer & Human Relationship.pdf

Recently uploaded

Global Situational Awareness of A.I. and where its headed

You can see the future first in San Francisco.

Over the past year, the talk of the town has shifted from $10 billion compute clusters to $100 billion clusters to trillion-dollar clusters. Every six months another zero is added to the boardroom plans. Behind the scenes, there’s a fierce scramble to secure every power contract still available for the rest of the decade, every voltage transformer that can possibly be procured. American big business is gearing up to pour trillions of dollars into a long-unseen mobilization of American industrial might. By the end of the decade, American electricity production will have grown tens of percent; from the shale fields of Pennsylvania to the solar farms of Nevada, hundreds of millions of GPUs will hum.

The AGI race has begun. We are building machines that can think and reason. By 2025/26, these machines will outpace college graduates. By the end of the decade, they will be smarter than you or I; we will have superintelligence, in the true sense of the word. Along the way, national security forces not seen in half a century will be un-leashed, and before long, The Project will be on. If we’re lucky, we’ll be in an all-out race with the CCP; if we’re unlucky, an all-out war.

Everyone is now talking about AI, but few have the faintest glimmer of what is about to hit them. Nvidia analysts still think 2024 might be close to the peak. Mainstream pundits are stuck on the wilful blindness of “it’s just predicting the next word”. They see only hype and business-as-usual; at most they entertain another internet-scale technological change.

Before long, the world will wake up. But right now, there are perhaps a few hundred people, most of them in San Francisco and the AI labs, that have situational awareness. Through whatever peculiar forces of fate, I have found myself amongst them. A few years ago, these people were derided as crazy—but they trusted the trendlines, which allowed them to correctly predict the AI advances of the past few years. Whether these people are also right about the next few years remains to be seen. But these are very smart people—the smartest people I have ever met—and they are the ones building this technology. Perhaps they will be an odd footnote in history, or perhaps they will go down in history like Szilard and Oppenheimer and Teller. If they are seeing the future even close to correctly, we are in for a wild ride.

Let me tell you what we see.

一比一原版(Glasgow毕业证书)格拉斯哥大学毕业证如何办理

毕业原版【微信:41543339】【(Glasgow毕业证书)格拉斯哥大学毕业证】【微信:41543339】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

My burning issue is homelessness K.C.M.O.

My burning issue is homelessness in Kansas City, MO

To: Tom Tresser

From: Roger Warren

一比一原版(Coventry毕业证书)考文垂大学毕业证如何办理

毕业原版【微信:41543339】【(Coventry毕业证书)考文垂大学毕业证】【微信:41543339】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

End-to-end pipeline agility - Berlin Buzzwords 2024

We describe how we achieve high change agility in data engineering by eliminating the fear of breaking downstream data pipelines through end-to-end pipeline testing, and by using schema metaprogramming to safely eliminate boilerplate involved in changes that affect whole pipelines.

A quick poll on agility in changing pipelines from end to end indicated a huge span in capabilities. For the question "How long time does it take for all downstream pipelines to be adapted to an upstream change," the median response was 6 months, but some respondents could do it in less than a day. When quantitative data engineering differences between the best and worst are measured, the span is often 100x-1000x, sometimes even more.

A long time ago, we suffered at Spotify from fear of changing pipelines due to not knowing what the impact might be downstream. We made plans for a technical solution to test pipelines end-to-end to mitigate that fear, but the effort failed for cultural reasons. We eventually solved this challenge, but in a different context. In this presentation we will describe how we test full pipelines effectively by manipulating workflow orchestration, which enables us to make changes in pipelines without fear of breaking downstream.

Making schema changes that affect many jobs also involves a lot of toil and boilerplate. Using schema-on-read mitigates some of it, but has drawbacks since it makes it more difficult to detect errors early. We will describe how we have rejected this tradeoff by applying schema metaprogramming, eliminating boilerplate but keeping the protection of static typing, thereby further improving agility to quickly modify data pipelines without fear.

Predictably Improve Your B2B Tech Company's Performance by Leveraging Data

Harness the power of AI-backed reports, benchmarking and data analysis to predict trends and detect anomalies in your marketing efforts.

Peter Caputa, CEO at Databox, reveals how you can discover the strategies and tools to increase your growth rate (and margins!).

From metrics to track to data habits to pick up, enhance your reporting for powerful insights to improve your B2B tech company's marketing.

- - -

This is the webinar recording from the June 2024 HubSpot User Group (HUG) for B2B Technology USA.

Watch the video recording at https://youtu.be/5vjwGfPN9lw

Sign up for future HUG events at https://events.hubspot.com/b2b-technology-usa/

一比一原版(牛布毕业证书)牛津布鲁克斯大学毕业证如何办理

毕业原版【微信:41543339】【(牛布毕业证书)牛津布鲁克斯大学毕业证】【微信:41543339】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

办(uts毕业证书)悉尼科技大学毕业证学历证书原版一模一样

原版一模一样【微信:741003700 】【(uts毕业证书)悉尼科技大学毕业证学历证书】【微信:741003700 】学位证,留信认证(真实可查,永久存档)offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原海外各大学 Bachelor Diploma degree, Master Degree Diploma

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

Population Growth in Bataan: The effects of population growth around rural pl...

A population analysis specific to Bataan.

在线办理(英国UCA毕业证书)创意艺术大学毕业证在读证明一模一样

学校原件一模一样【微信:741003700 】《(英国UCA毕业证书)创意艺术大学毕业证》【微信:741003700 】学位证,留信认证(真实可查,永久存档)原件一模一样纸张工艺/offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原。

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

【主营项目】

一.毕业证【q微741003700】成绩单、使馆认证、教育部认证、雅思托福成绩单、学生卡等!

二.真实使馆公证(即留学回国人员证明,不成功不收费)

三.真实教育部学历学位认证(教育部存档!教育部留服网站永久可查)

四.办理各国各大学文凭(一对一专业服务,可全程监控跟踪进度)

如果您处于以下几种情况:

◇在校期间,因各种原因未能顺利毕业……拿不到官方毕业证【q/微741003700】

◇面对父母的压力,希望尽快拿到;

◇不清楚认证流程以及材料该如何准备;

◇回国时间很长,忘记办理;

◇回国马上就要找工作,办给用人单位看;

◇企事业单位必须要求办理的

◇需要报考公务员、购买免税车、落转户口

◇申请留学生创业基金

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

Learn SQL from basic queries to Advance queries

Dive into the world of data analysis with our comprehensive guide on mastering SQL! This presentation offers a practical approach to learning SQL, focusing on real-world applications and hands-on practice. Whether you're a beginner or looking to sharpen your skills, this guide provides the tools you need to extract, analyze, and interpret data effectively.

Key Highlights:

Foundations of SQL: Understand the basics of SQL, including data retrieval, filtering, and aggregation.

Advanced Queries: Learn to craft complex queries to uncover deep insights from your data.

Data Trends and Patterns: Discover how to identify and interpret trends and patterns in your datasets.

Practical Examples: Follow step-by-step examples to apply SQL techniques in real-world scenarios.

Actionable Insights: Gain the skills to derive actionable insights that drive informed decision-making.

Join us on this journey to enhance your data analysis capabilities and unlock the full potential of SQL. Perfect for data enthusiasts, analysts, and anyone eager to harness the power of data!

#DataAnalysis #SQL #LearningSQL #DataInsights #DataScience #Analytics

University of New South Wales degree offer diploma Transcript

澳洲UNSW毕业证书制作新南威尔士大学假文凭定制Q微168899991做UNSW留信网教留服认证海牙认证改UNSW成绩单GPA做UNSW假学位证假文凭高仿毕业证申请新南威尔士大学University of New South Wales degree offer diploma Transcript

Beyond the Basics of A/B Tests: Highly Innovative Experimentation Tactics You...

This webinar will explore cutting-edge, less familiar but powerful experimentation methodologies which address well-known limitations of standard A/B Testing. Designed for data and product leaders, this session aims to inspire the embrace of innovative approaches and provide insights into the frontiers of experimentation!

06-04-2024 - NYC Tech Week - Discussion on Vector Databases, Unstructured Dat...

06-04-2024 - NYC Tech Week - Discussion on Vector Databases, Unstructured Data and AI

Round table discussion of vector databases, unstructured data, ai, big data, real-time, robots and Milvus.

A lively discussion with NJ Gen AI Meetup Lead, Prasad and Procure.FYI's Co-Found

一比一原版(Chester毕业证书)切斯特大学毕业证如何办理

毕业原版【微信:41543339】【(Chester毕业证书)切斯特大学毕业证】【微信:41543339】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

一比一原版(GWU,GW文凭证书)乔治·华盛顿大学毕业证如何办理

毕业原版【微信:176555708】【(GWU,GW毕业证书)乔治·华盛顿大学毕业证】【微信:176555708】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信176555708】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信176555708】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

Recently uploaded (20)

Global Situational Awareness of A.I. and where its headed

Global Situational Awareness of A.I. and where its headed

Udemy_2024_Global_Learning_Skills_Trends_Report (1).pdf

Udemy_2024_Global_Learning_Skills_Trends_Report (1).pdf

End-to-end pipeline agility - Berlin Buzzwords 2024

End-to-end pipeline agility - Berlin Buzzwords 2024

Predictably Improve Your B2B Tech Company's Performance by Leveraging Data

Predictably Improve Your B2B Tech Company's Performance by Leveraging Data

Influence of Marketing Strategy and Market Competition on Business Plan

Influence of Marketing Strategy and Market Competition on Business Plan

Population Growth in Bataan: The effects of population growth around rural pl...

Population Growth in Bataan: The effects of population growth around rural pl...

University of New South Wales degree offer diploma Transcript

University of New South Wales degree offer diploma Transcript

Beyond the Basics of A/B Tests: Highly Innovative Experimentation Tactics You...

Beyond the Basics of A/B Tests: Highly Innovative Experimentation Tactics You...

06-04-2024 - NYC Tech Week - Discussion on Vector Databases, Unstructured Dat...

06-04-2024 - NYC Tech Week - Discussion on Vector Databases, Unstructured Dat...

A presentation that explain the Power BI Licensing

A presentation that explain the Power BI Licensing

Vox - Making voiceprints out of text content.

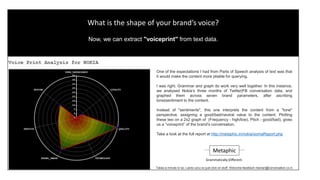

- 1. One of the many advantages of tagging text with Parts of Speech (PoS) tokens is that it makes the content much more pliable for querying. The assumption, and the finding, was that when it comes to text analysis, grammar knows best. And that grammar and graph work very well together to give you more insights into the data. In this instance, we analysed Nokia’s three months of Twitter|FB conversation data, and graphed them across seven brand parameters, after ascribing “tone” to the content. (Instead of sentiment, we go by tone.) By plotting Frequency (high-low scale), with Tone (positive-negative scale), we publish a “voiceprint” of the brand's conversation. Take a look at the full report at http://metaphic.in/nokia/somaReport.php What is the shape and tone of your brand’s voice? Now, we can show you by extracting a "voiceprint" from text data. Metaphic Takes a minute or so. Lacks ux/ui so just click on stuff. Welcome feedback manian@conversation.co.in Grammatically Different.