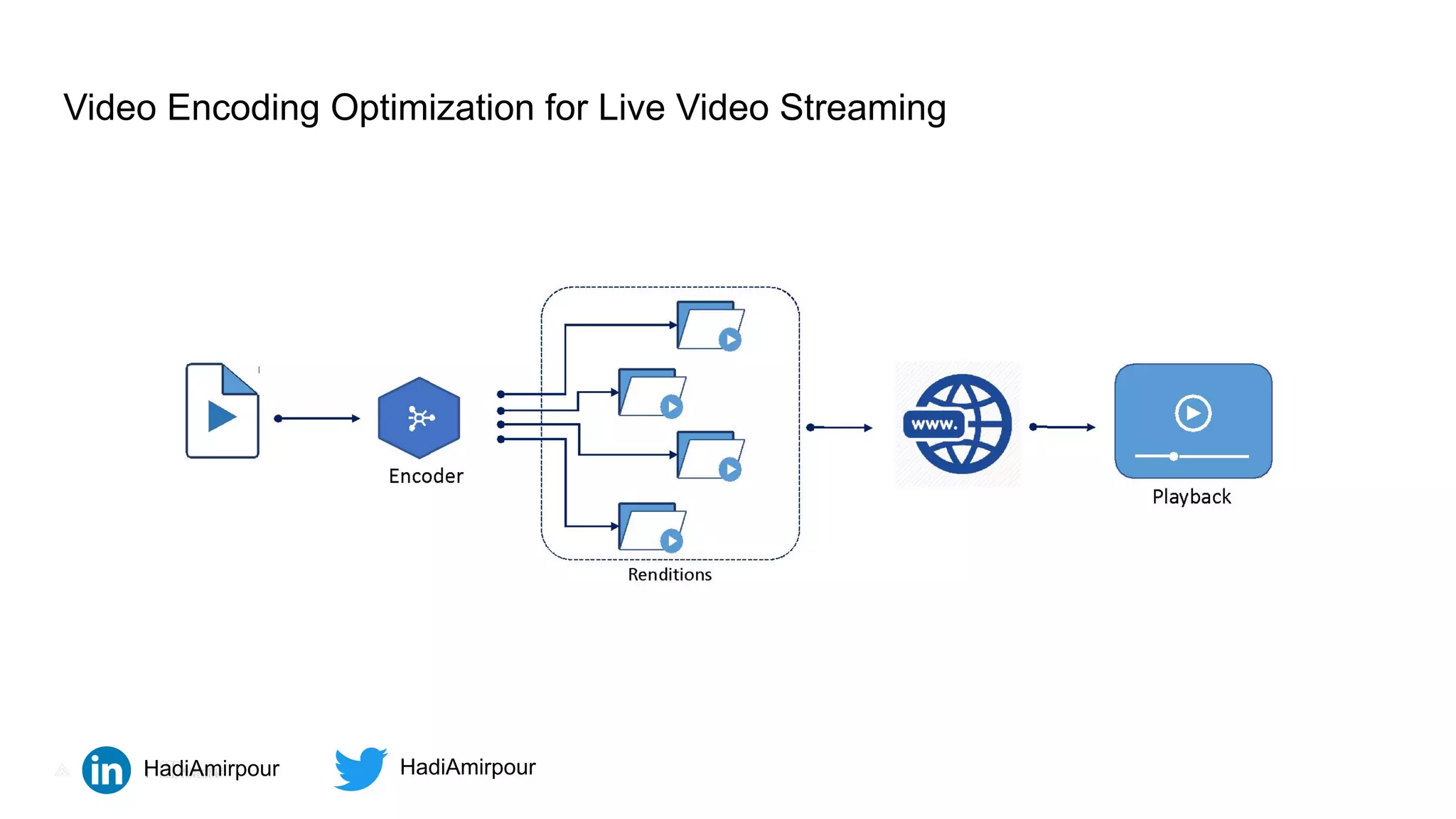

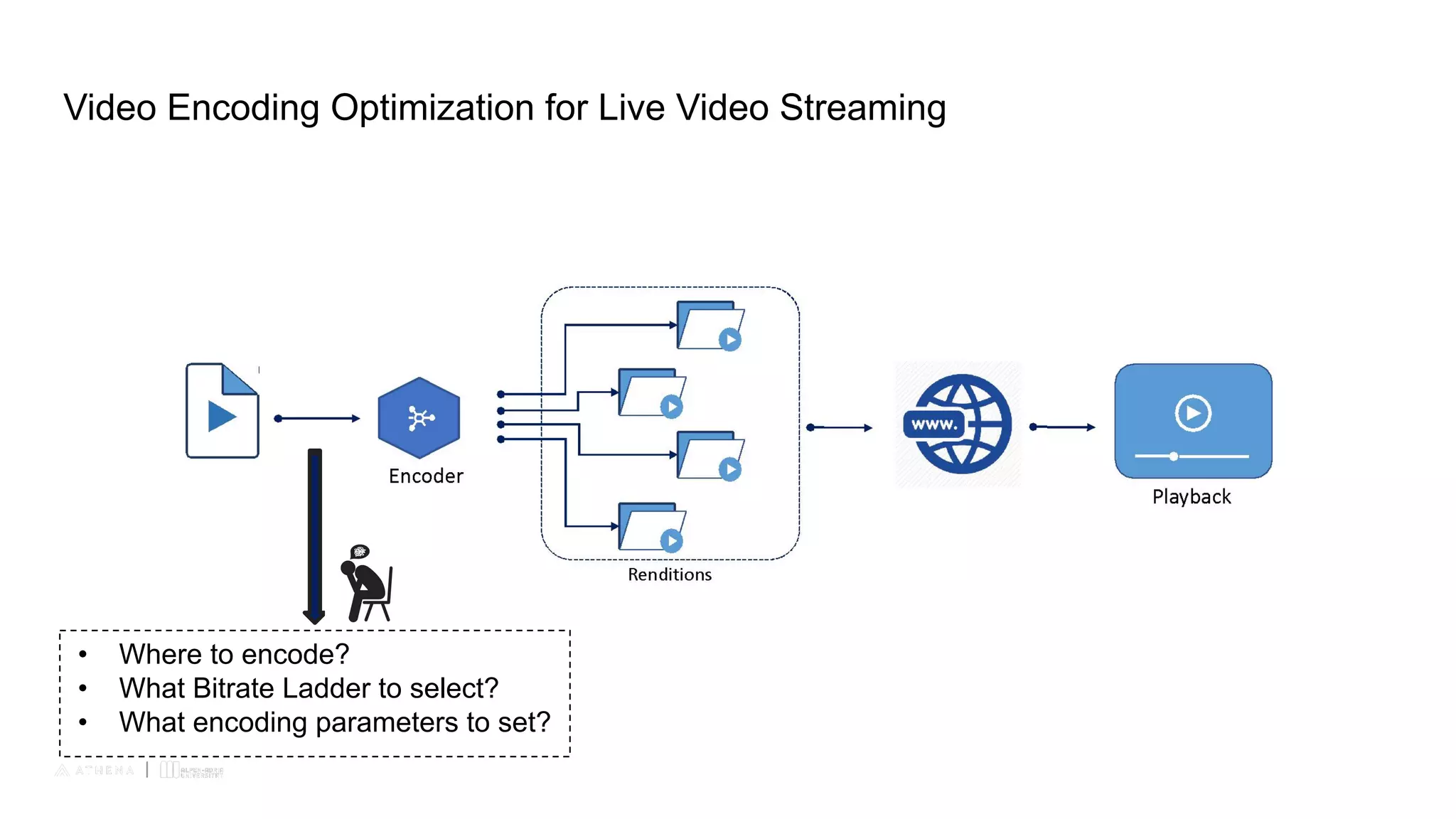

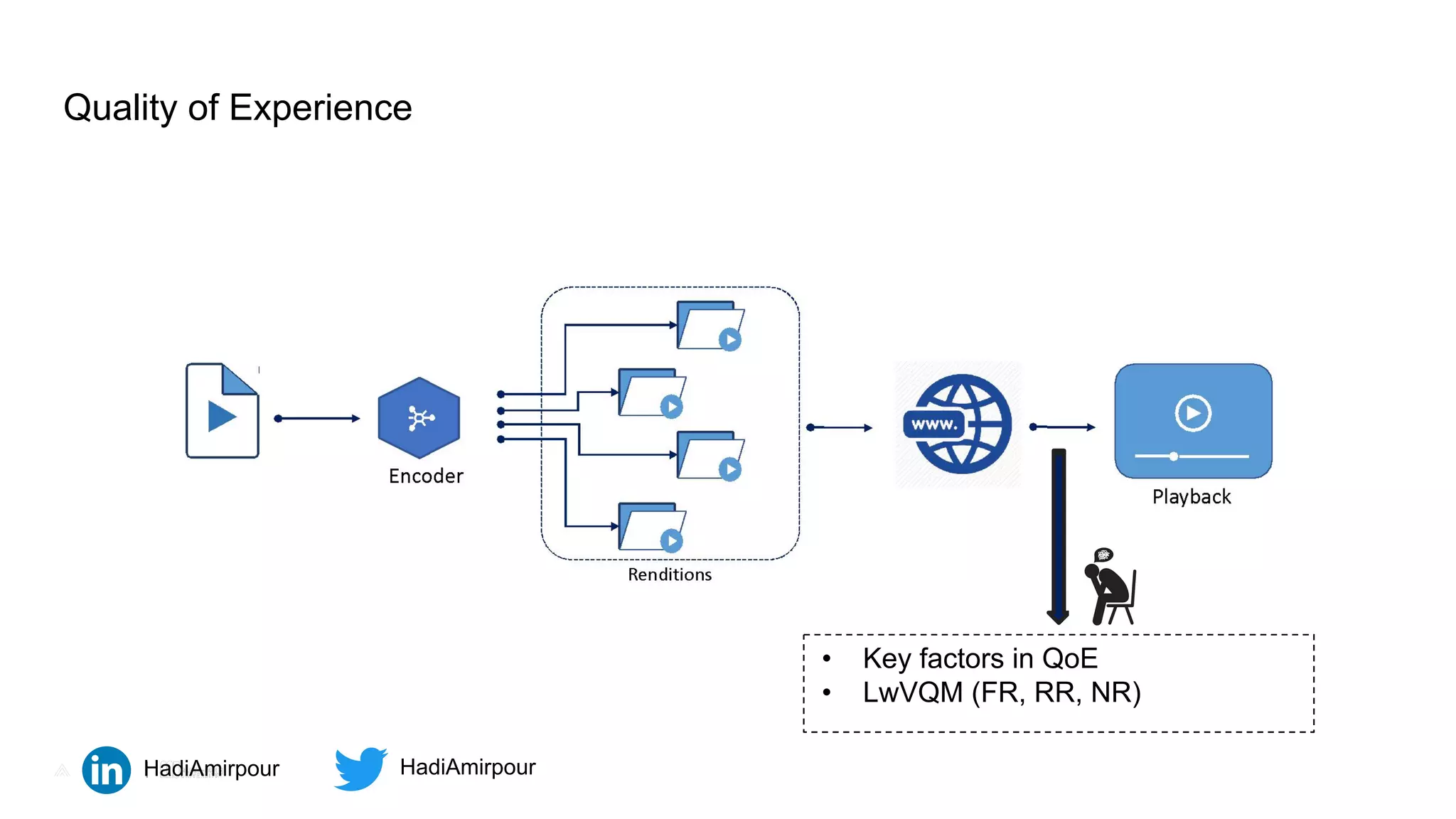

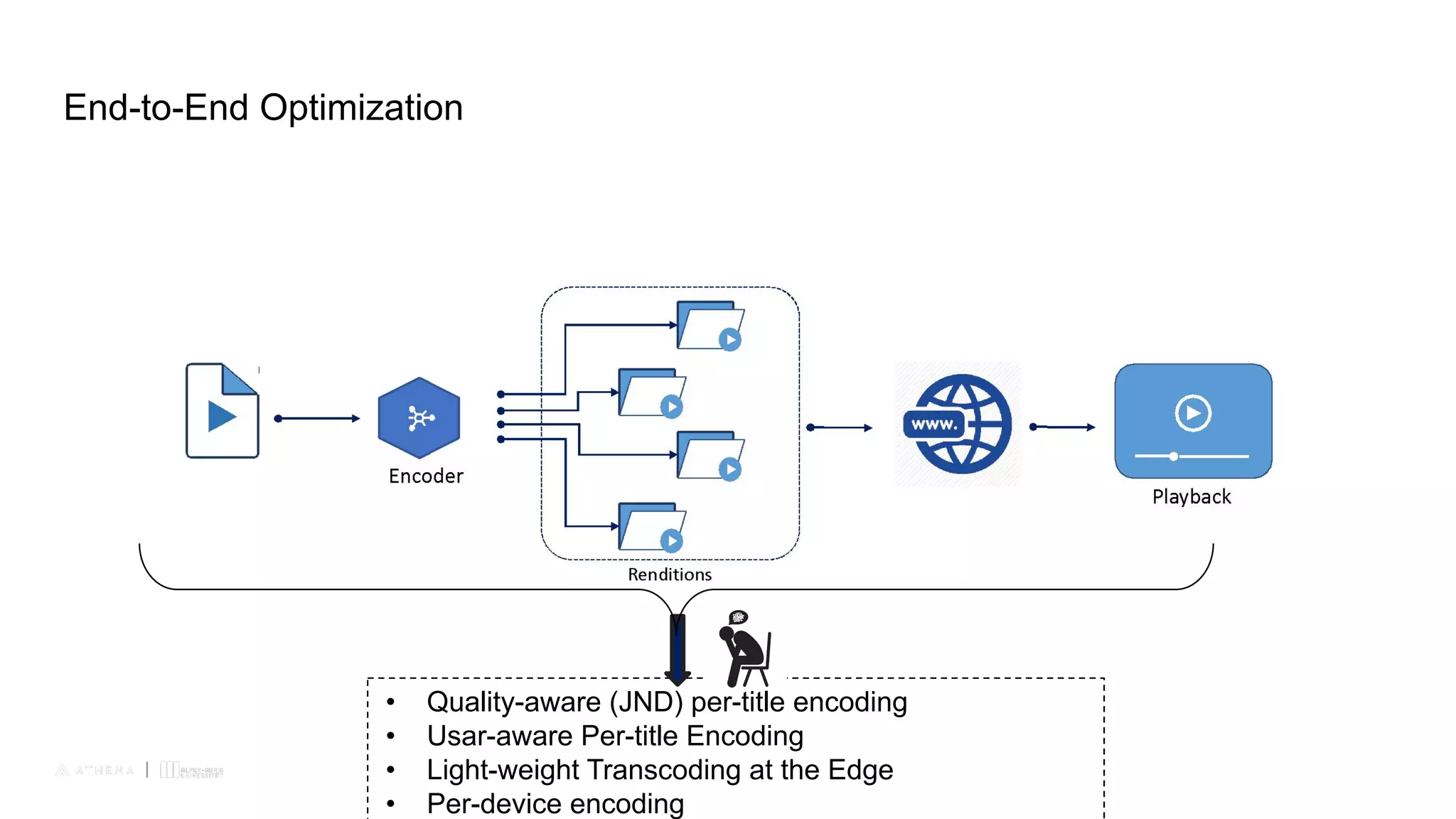

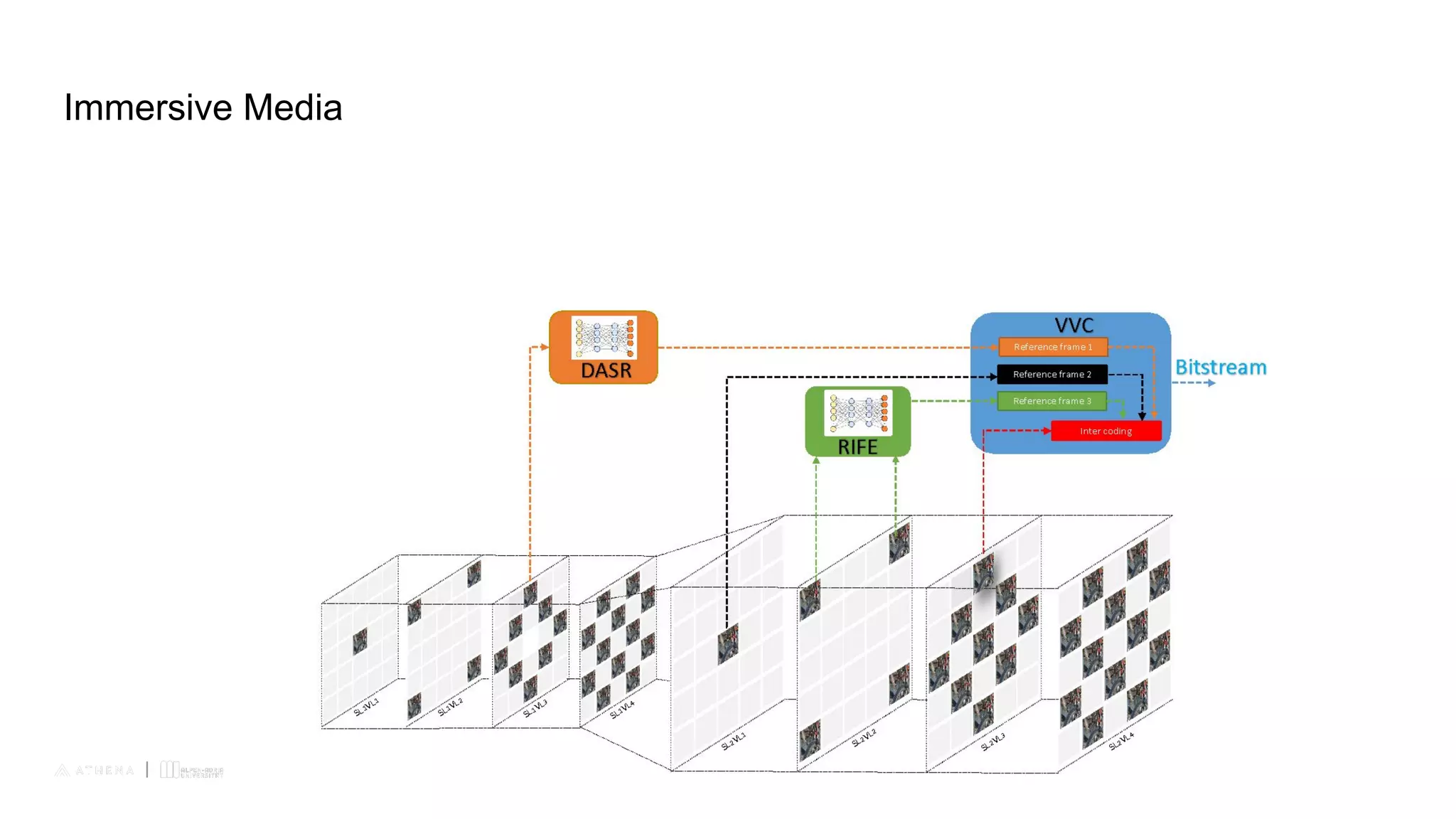

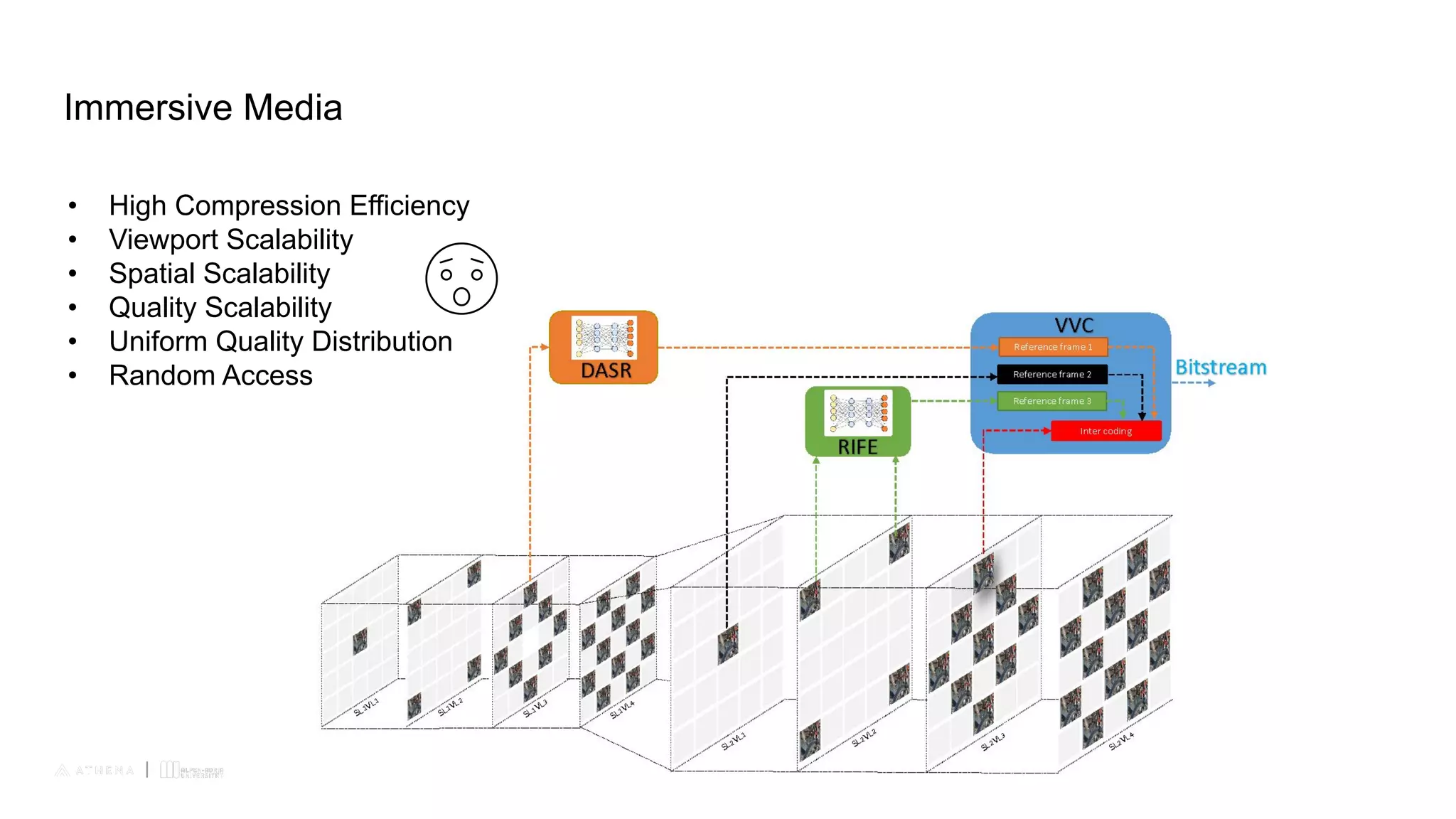

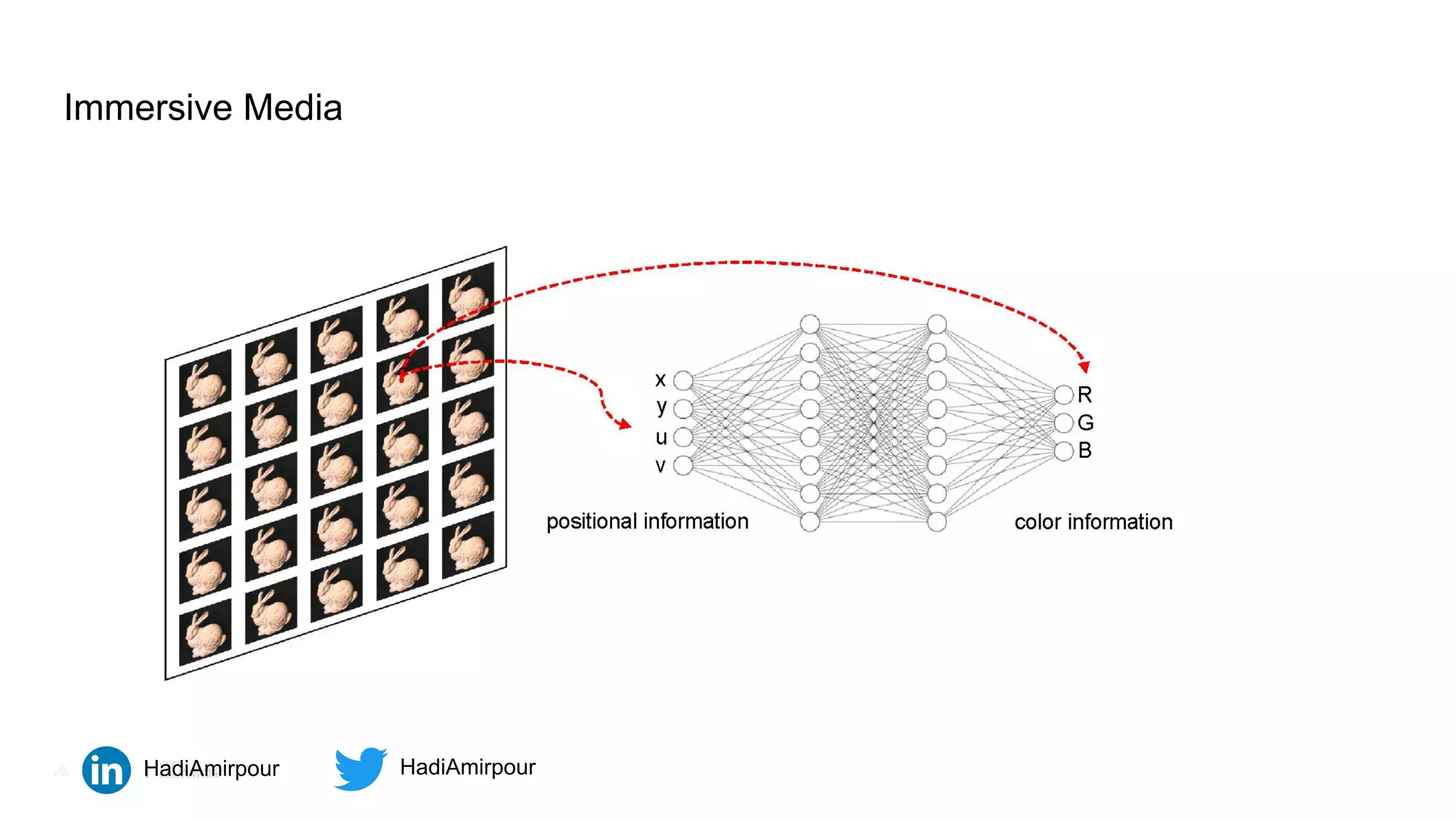

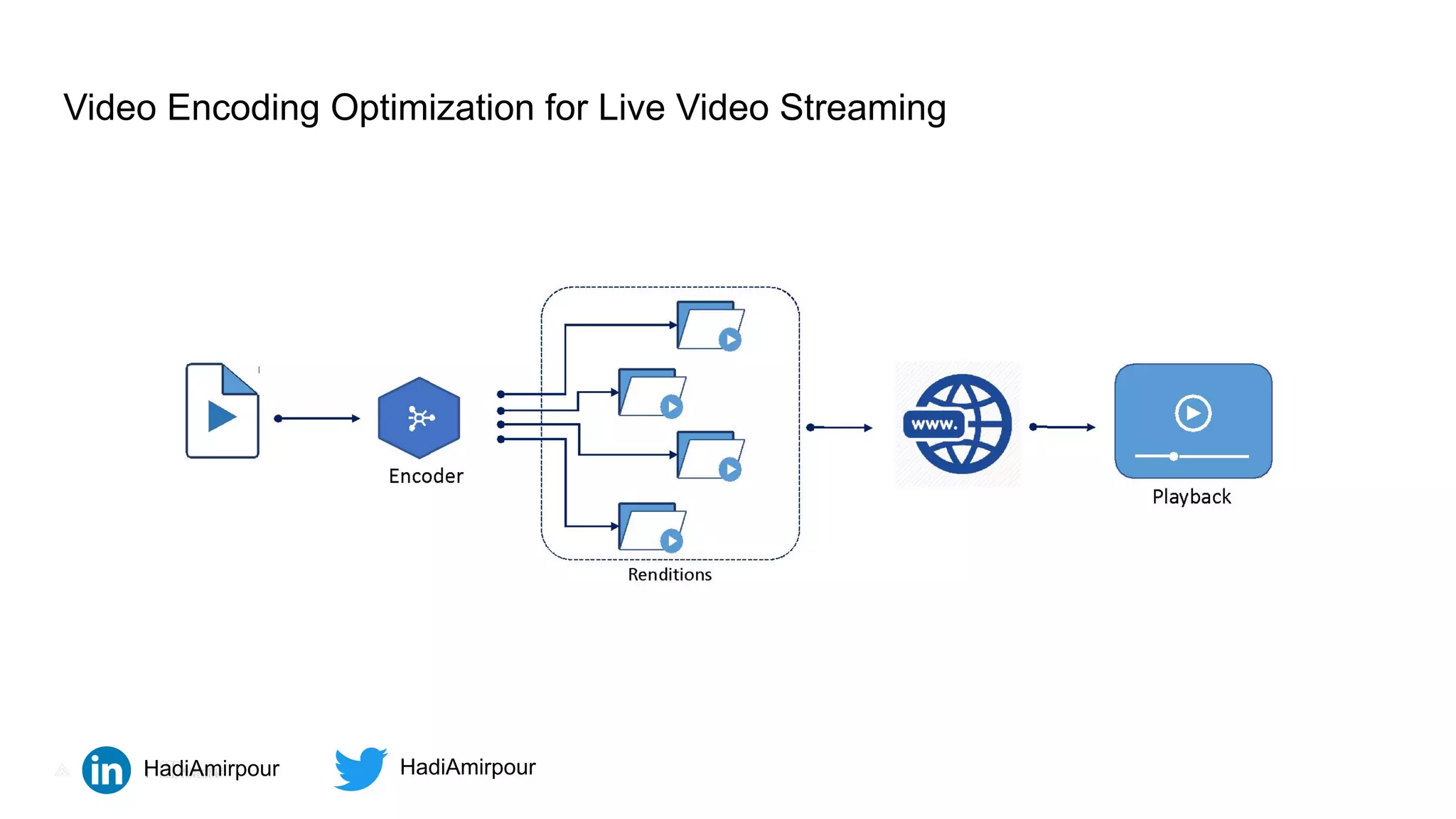

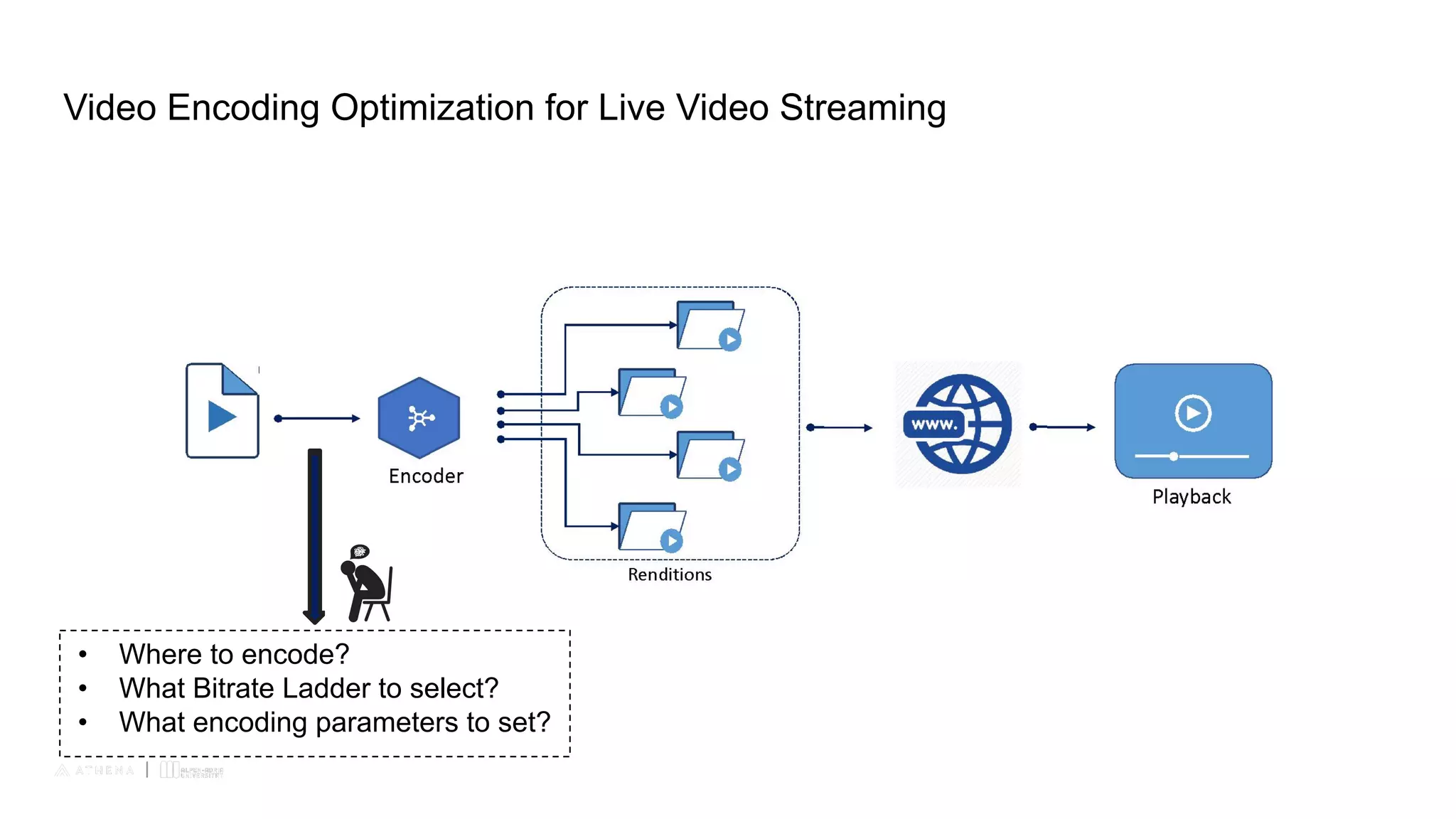

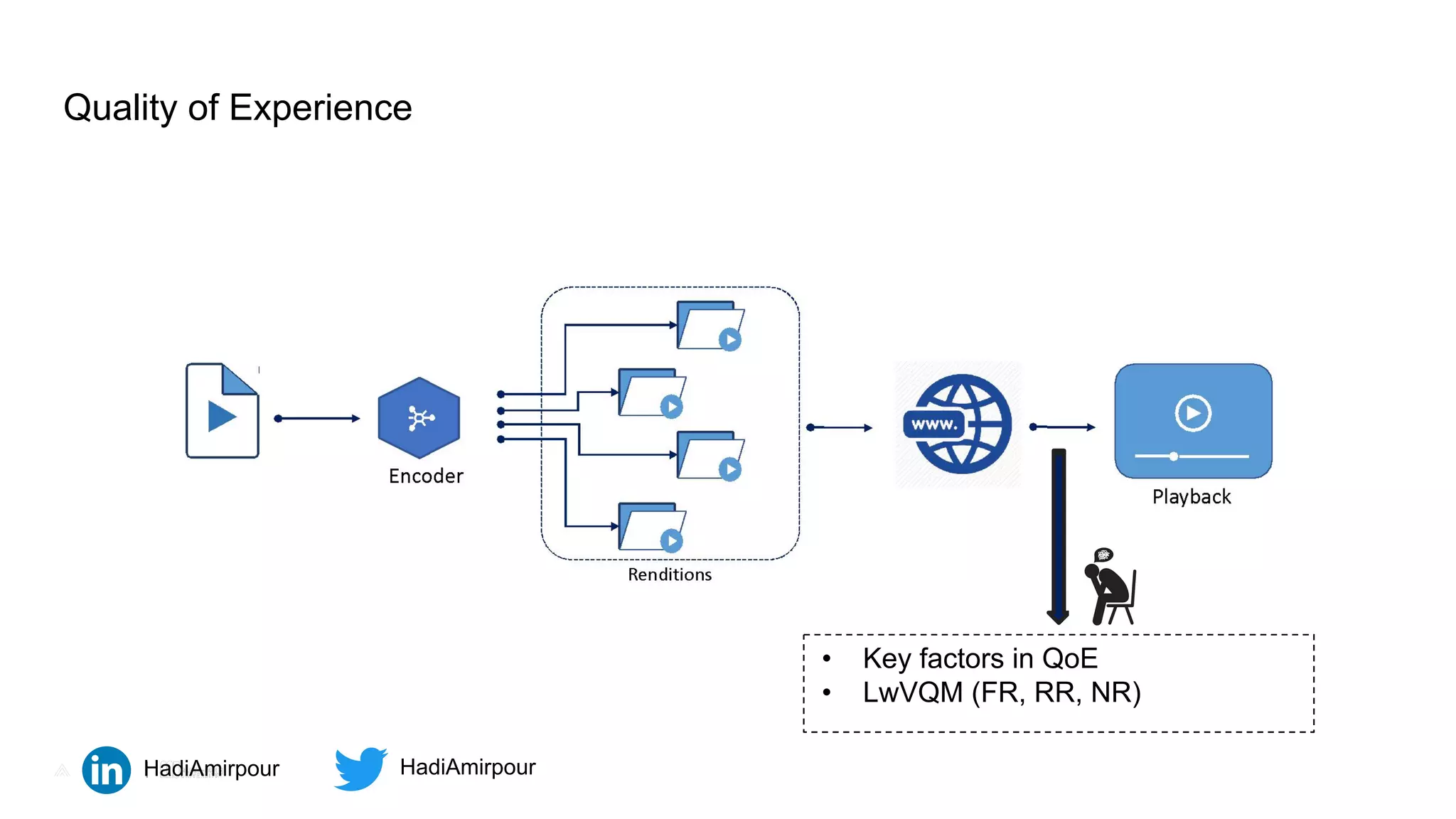

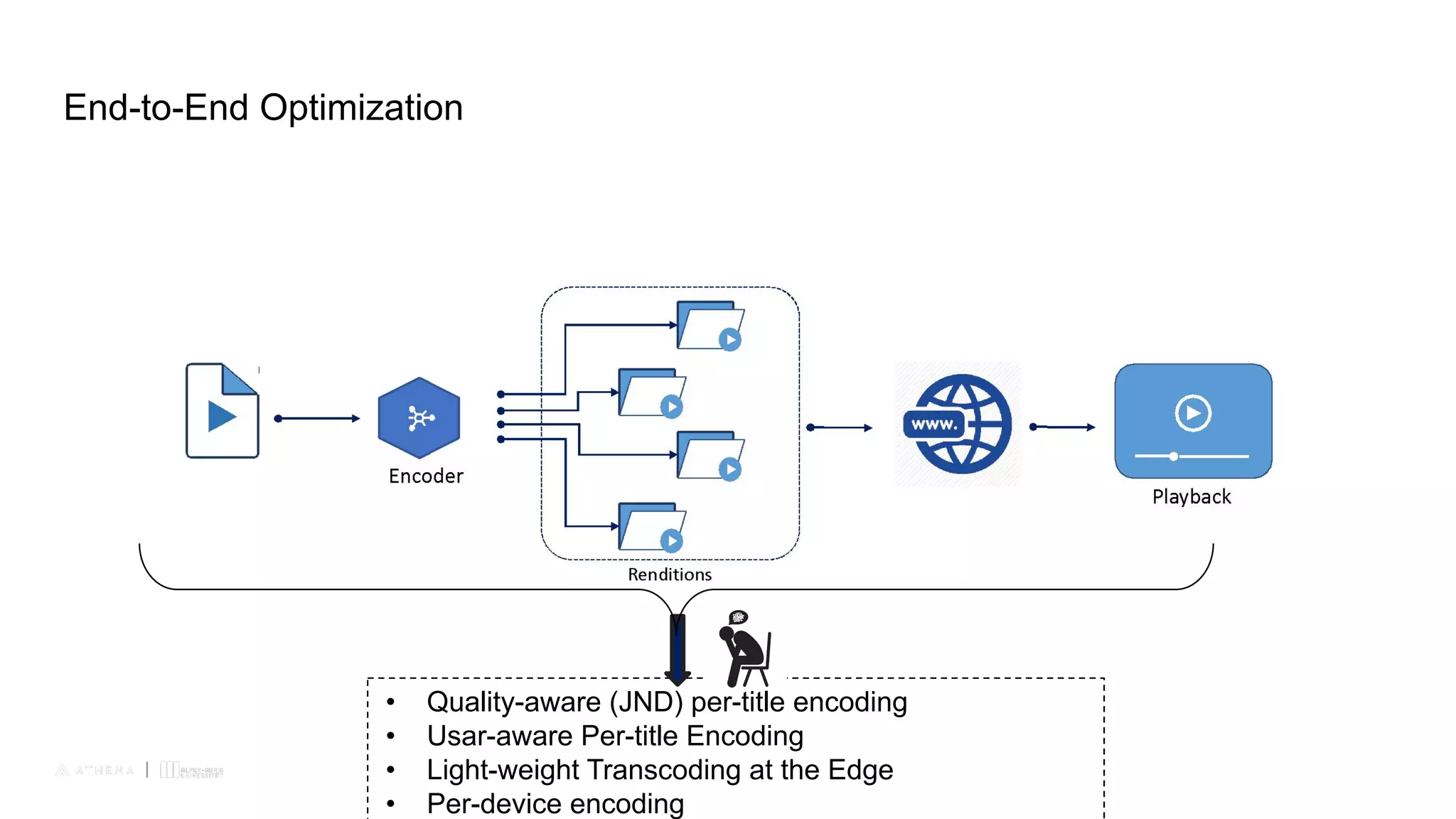

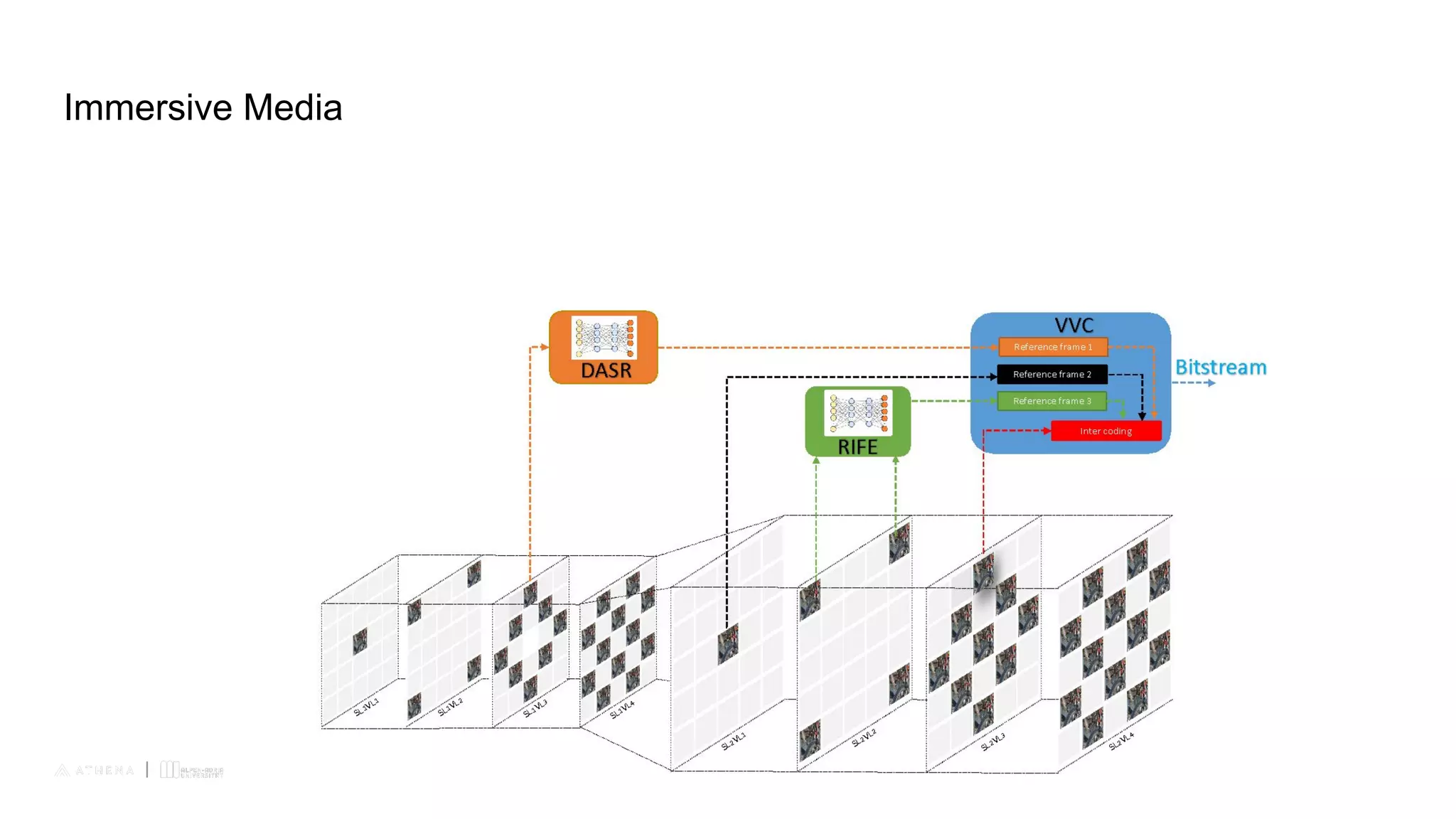

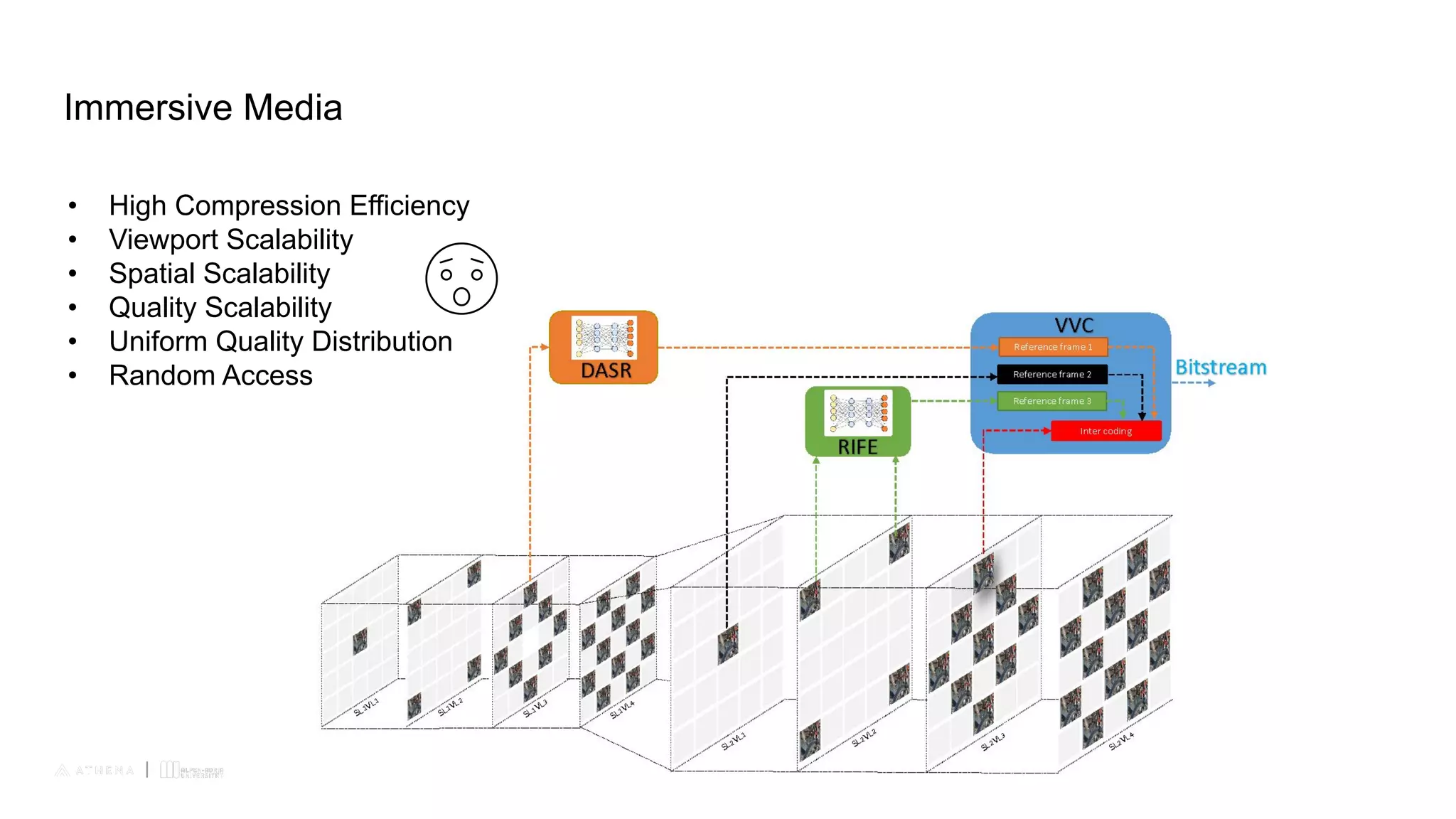

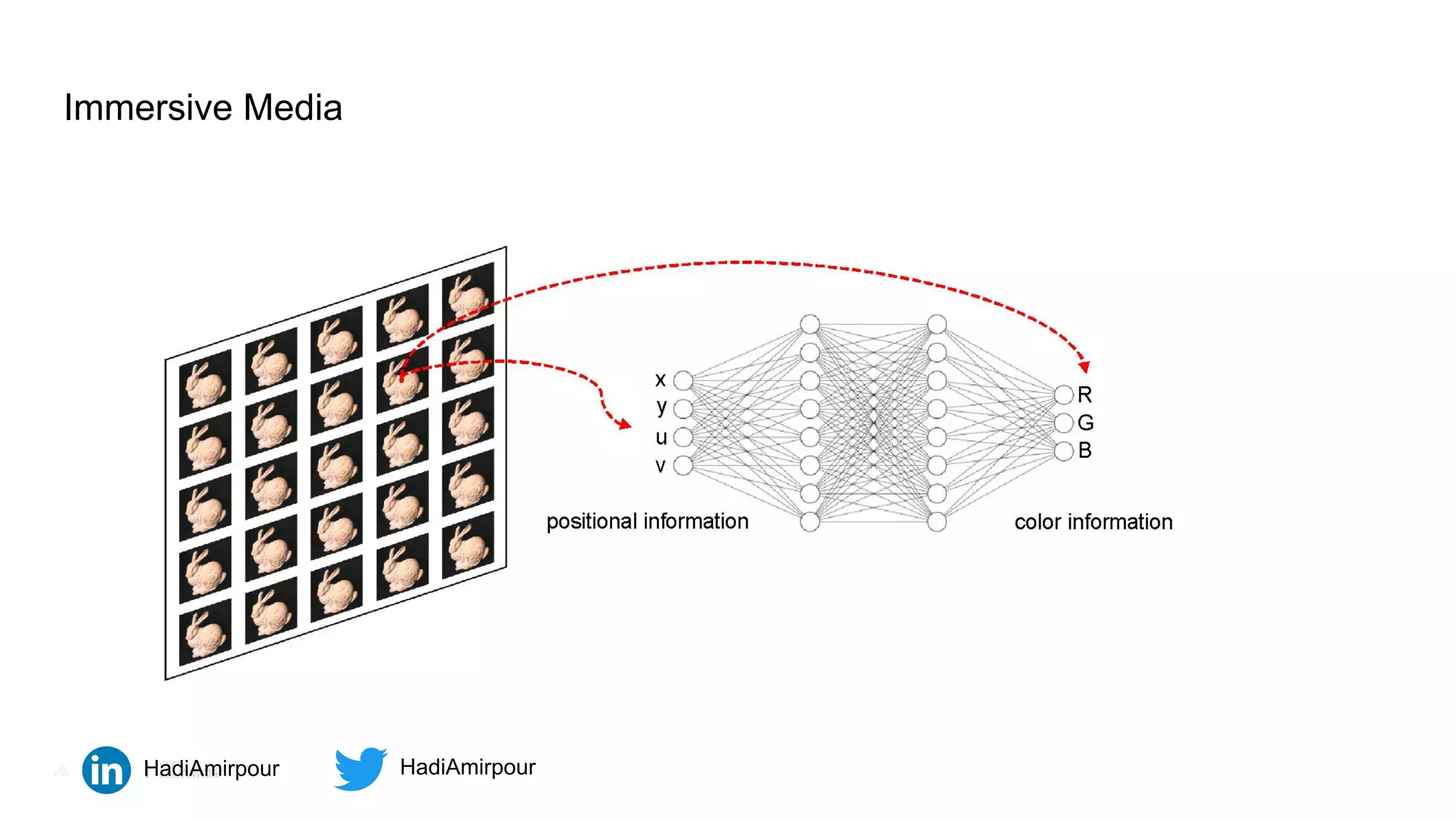

This document profiles Hadi Amirpour and his research interests. It lists his educational background: a B.Sc. in Electrical Engineering, a B.Sc. in Biomedical Engineering, an M.Sc. in Electrical Engineering, and a Ph.D. in Computer Science. His research focuses on three areas: video encoding optimization for live streaming, video quality of experience (QoE), and immersive media. Within these areas, the document lists specific topics such as bitrate ladders, encoding parameters, quality factors, and compression techniques for immersive media.