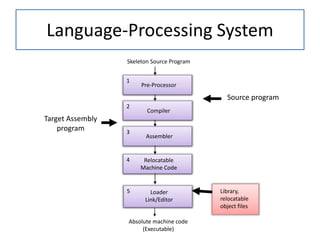

This document describes the objectives of a compiler design course and the phases of a compiler that the course covers. Its key points are:

- The course aims to teach students the phases of a compiler, such as parsing, code generation, and optimization.

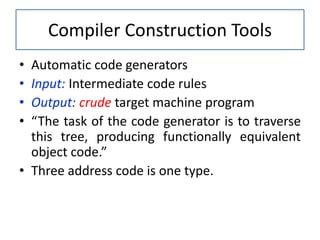

- The expected outcomes include explaining the compilation process and building tools such as lexical analyzers and parsers. Students should also be able to implement semantic analyzers and code generators.

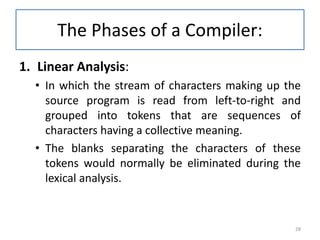

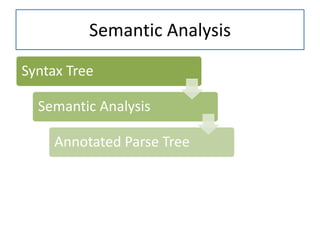

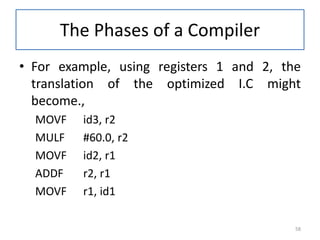

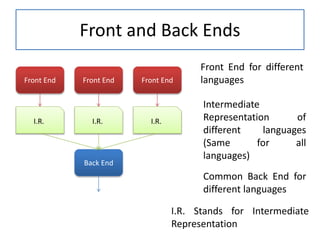

- The document then covers the different phases of a compiler in detail, including lexical analysis, syntax analysis, semantic analysis, intermediate code generation, and code optimization. It provides examples to illustrate each phase.
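
As a rough illustration of how three of these phases hand work to one another, the sketch below runs lexical analysis, syntax analysis, and intermediate code generation on a single assignment statement. It is not taken from the course material: the grammar, the tuple-based syntax tree, the function names (`tokenize`, `Parser`, `generate`), and the sample statement are all assumptions chosen for brevity, and semantic analysis and optimization are left out.

```python
import re

# Lexical analysis: split the source text into (kind, text) tokens.
TOKEN_SPEC = [
    ("NUM", r"\d+"),
    ("ID", r"[A-Za-z_]\w*"),
    ("OP", r"[+\-*/=()]"),
    ("SKIP", r"\s+"),
]
TOKEN_RE = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC))


def tokenize(source):
    tokens = []
    pos = 0
    while pos < len(source):
        match = TOKEN_RE.match(source, pos)
        if match is None:
            raise SyntaxError(f"unexpected character {source[pos]!r} at {pos}")
        if match.lastgroup != "SKIP":  # whitespace is discarded
            tokens.append((match.lastgroup, match.group()))
        pos = match.end()
    return tokens


# Syntax analysis: a recursive-descent parser for the assumed grammar
#   stmt   := ID '=' expr
#   expr   := term (('+' | '-') term)*
#   term   := factor (('*' | '/') factor)*
#   factor := NUM | ID | '(' expr ')'
class Parser:
    def __init__(self, tokens):
        self.tokens = tokens
        self.pos = 0

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else ("EOF", "")

    def take(self, kind=None, text=None):
        tok = self.peek()
        if (kind and tok[0] != kind) or (text and tok[1] != text):
            raise SyntaxError(f"unexpected token {tok}")
        self.pos += 1
        return tok

    def statement(self):
        name = self.take("ID")[1]
        self.take("OP", "=")
        value = self.expr()
        self.take("EOF")
        return ("assign", name, value)

    def expr(self):
        node = self.term()
        while self.peek() in (("OP", "+"), ("OP", "-")):
            op = self.take()[1]
            node = ("binop", op, node, self.term())
        return node

    def term(self):
        node = self.factor()
        while self.peek() in (("OP", "*"), ("OP", "/")):
            op = self.take()[1]
            node = ("binop", op, node, self.factor())
        return node

    def factor(self):
        kind, text = self.peek()
        if kind in ("NUM", "ID"):
            self.take()
            return (kind.lower(), text)
        self.take("OP", "(")
        node = self.expr()
        self.take("OP", ")")
        return node


# Intermediate code generation: walk the tree and emit three-address code.
def generate(ast):
    code = []
    counter = 0

    def emit(node):
        nonlocal counter
        if node[0] in ("num", "id"):
            return node[1]
        _, op, left, right = node
        left_name = emit(left)
        right_name = emit(right)
        counter += 1
        temp = f"t{counter}"
        code.append(f"{temp} = {left_name} {op} {right_name}")
        return temp

    _, name, value = ast
    code.append(f"{name} = {emit(value)}")
    return code


if __name__ == "__main__":
    tokens = tokenize("position = initial + rate * 60")
    tree = Parser(tokens).statement()
    for line in generate(tree):
        print(line)
```

For the sample statement, the script prints `t1 = rate * 60`, `t2 = initial + t1`, and `position = t2`: the multiplication is emitted first because the grammar gives `*` higher precedence than `+`.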