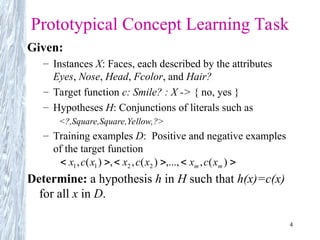

The document discusses concept learning, focusing on the methods for inducing hypotheses from training examples, such as the Find-S algorithm and the Candidate Elimination algorithm. It examines the structure of hypotheses, their representation, the need for inductive bias, and the challenges presented by inconsistencies in training data and concept drift. Key concepts include the definition of version spaces, the general-to-specific ordering of hypotheses, and the necessity of selecting appropriate hypotheses that can generalize effectively from limited instances.

![Slide 21: Inductive Bias](https://image.slidesharecdn.com/unit1-mlt-250105115827-39a0f061/85/UNIT-1-INTRODUCTION-MACHINE-LEARNING-TECHNIQUES-AD-21-320.jpg)

Inductive Bias

Consider

- a concept learning algorithm $L$
- instances $X$ and a target concept $c$
- training examples $D_c = \{\langle x, c(x) \rangle\}$
- let $L(x_i, D_c)$ denote the classification assigned to the instance $x_i$ by $L$ after training on data $D_c$.

Definition:

The inductive bias of $L$ is any minimal set of assertions $B$ such that, for any target concept $c$ and corresponding training examples $D_c$,

$$(\forall x_i \in X)\,\bigl[(B \land D_c \land x_i) \vdash L(x_i, D_c)\bigr]$$

where $A \vdash B$ means $A$ logically entails $B$.
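The definition can be made concrete with a small sketch. The code below assumes a toy domain with two attributes (the attribute values, the `?` wildcard encoding, and all function names are hypothetical, chosen only for illustration) and a learner that, in the spirit of Candidate Elimination, labels a new instance only when every hypothesis consistent with the training data agrees. For such a learner, the assertion B = "the target concept c is in the hypothesis space H" is enough to make each returned label follow from B, D_c, and x_i.

```python
from itertools import product

# Hypothetical toy domain: two attributes, each with two possible values.
DOMAINS = [("sunny", "rainy"), ("warm", "cold")]
ANY = "?"  # wildcard: the attribute may take any value


def all_hypotheses():
    """Enumerate the hypothesis space H: one constraint per attribute."""
    return list(product(*[values + (ANY,) for values in DOMAINS]))


def matches(h, x):
    """True if hypothesis h classifies instance x as positive."""
    return all(c == ANY or c == v for c, v in zip(h, x))


def version_space(examples):
    """All hypotheses in H consistent with the training examples D_c."""
    return [h for h in all_hypotheses()
            if all(matches(h, x) == label for x, label in examples)]


def classify(x, examples):
    """L(x, D_c): return a label only if all consistent hypotheses agree."""
    votes = {matches(h, x) for h in version_space(examples)}
    return votes.pop() if len(votes) == 1 else None


# Training data D_c as (instance, c(instance)) pairs.
D_c = [(("sunny", "warm"), True), (("rainy", "cold"), False)]

for x in product(*DOMAINS):
    print(x, "->", classify(x, D_c))
```

With this data, three hypotheses remain consistent: `("sunny", "warm")`, `("sunny", "?")`, and `("?", "warm")`. The two training instances receive their known labels, while `("sunny", "cold")` and `("rainy", "warm")` get `None` because the remaining hypotheses disagree. A learner that guessed a label in those cases would be relying on a stronger bias than "c is in H".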