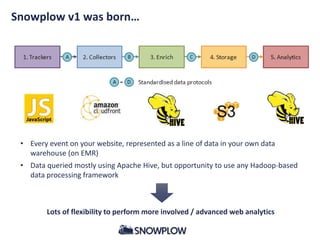

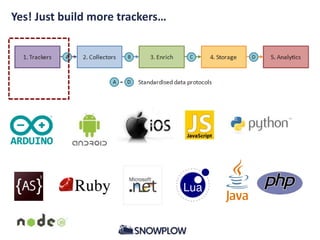

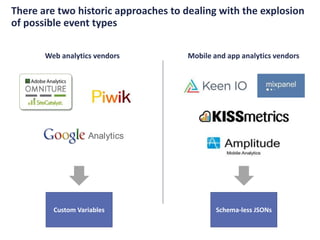

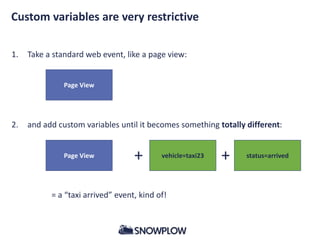

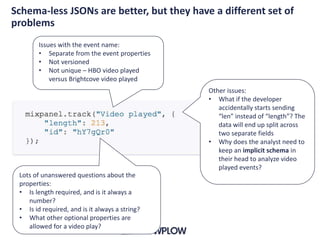

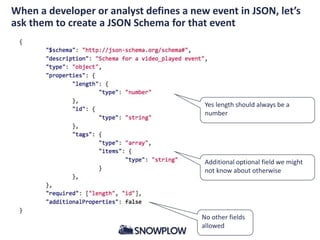

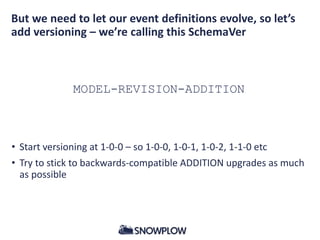

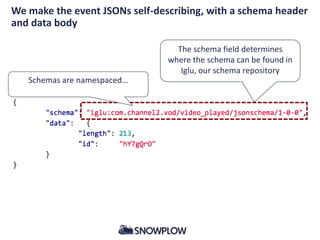

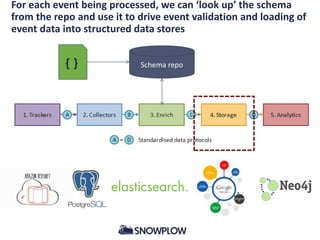

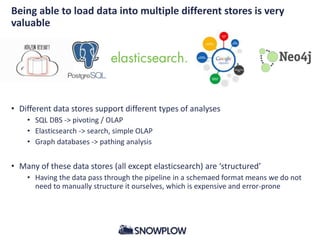

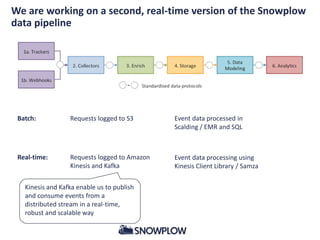

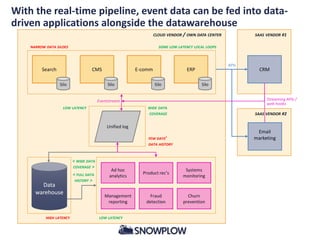

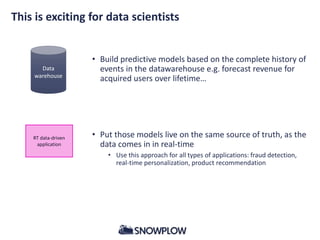

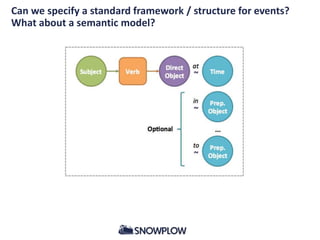

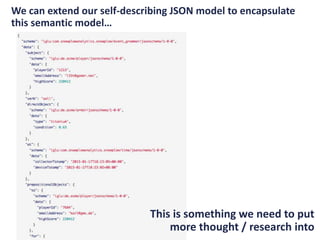

This document discusses event data and the Snowplow data pipeline. It notes that three years ago, analyzing user behavior and engagement with tools like Google Analytics was difficult. The Snowplow pipeline was created to collect and analyze event-level data at scale using open-source big data technologies. It has since expanded to handle many types of digital event data by introducing a schema format for structured JSON events together with a versioning system. A real-time version of the pipeline is also being built, so that event data can feed applications directly as well as batch processing. Finally, the document argues that a semantic model and a standard framework for describing events are important if downstream applications are to consume structured event data.
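The summary mentions a schema format for structured JSON events and a versioning system but does not show what they look like. The sketch below assumes an envelope modelled on Snowplow's self-describing JSON convention: a `schema` URI of the form `iglu:<vendor>/<name>/<format>/<MODEL>-<REVISION>-<ADDITION>` plus a `data` payload. It shows how a downstream consumer might parse that URI and route events by schema and major version. The vendor `com.example`, the `link_click` event, its fields, and the helper names `parse_schema_key`, `read_event`, and `routing_key` are all hypothetical, and routing on MODEL alone is a simplifying assumption, not Snowplow's actual compatibility rule.

```python
import json
import re
from typing import Any, Dict, NamedTuple, Tuple

# Assumed URI shape: iglu:<vendor>/<name>/<format>/<MODEL>-<REVISION>-<ADDITION>
SCHEMA_URI = re.compile(
    r"^iglu:(?P<vendor>[^/]+)/(?P<name>[^/]+)/(?P<format>[^/]+)/"
    r"(?P<model>\d+)-(?P<revision>\d+)-(?P<addition>\d+)$"
)


class SchemaKey(NamedTuple):
    vendor: str
    name: str
    format: str
    version: Tuple[int, int, int]  # (MODEL, REVISION, ADDITION)


def parse_schema_key(uri: str) -> SchemaKey:
    """Split a schema URI into vendor, event name, format and version."""
    m = SCHEMA_URI.match(uri)
    if m is None:
        raise ValueError(f"not a schema URI: {uri!r}")
    version = (int(m["model"]), int(m["revision"]), int(m["addition"]))
    return SchemaKey(m["vendor"], m["name"], m["format"], version)


def read_event(raw: str) -> Tuple[SchemaKey, Dict[str, Any]]:
    """Decode a hypothetical self-describing event into (schema key, payload)."""
    envelope = json.loads(raw)
    return parse_schema_key(envelope["schema"]), envelope["data"]


def routing_key(key: SchemaKey) -> Tuple[str, str, int]:
    """Group events by vendor, event name and MODEL (a simplifying assumption)."""
    return (key.vendor, key.name, key.version[0])


# Hypothetical example event; the vendor, name and fields are invented.
EXAMPLE_EVENT = """{
  "schema": "iglu:com.example/link_click/jsonschema/1-0-1",
  "data": {"target_url": "https://example.com", "element_id": "nav-home"}
}"""

if __name__ == "__main__":
    key, data = read_event(EXAMPLE_EVENT)
    print(routing_key(key), data["target_url"])
```

Keeping the version inside the schema reference means each event carries enough information for a consumer to pick the right handler without consulting the collector, which is one way a shared event-description framework can help downstream applications.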