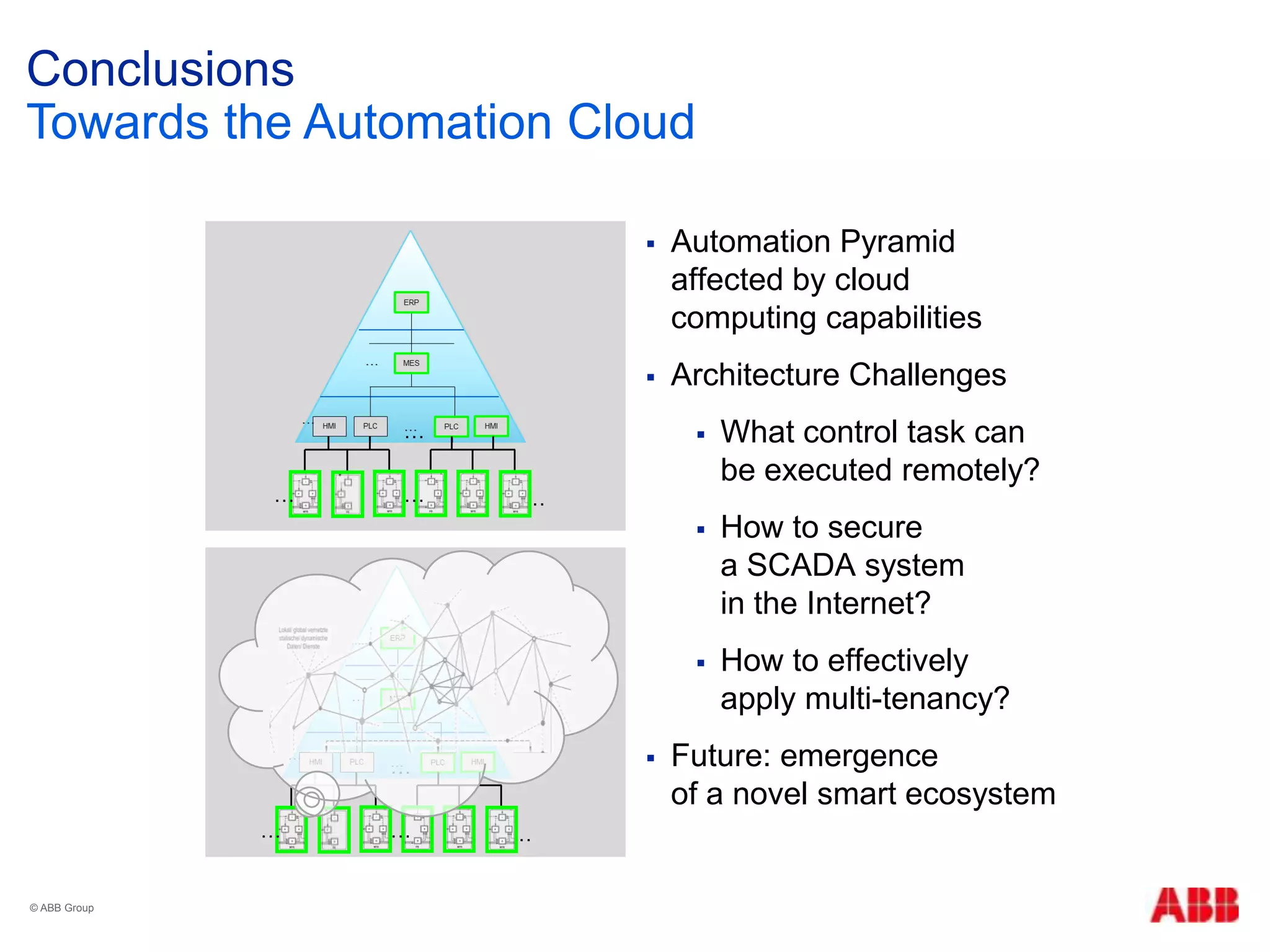

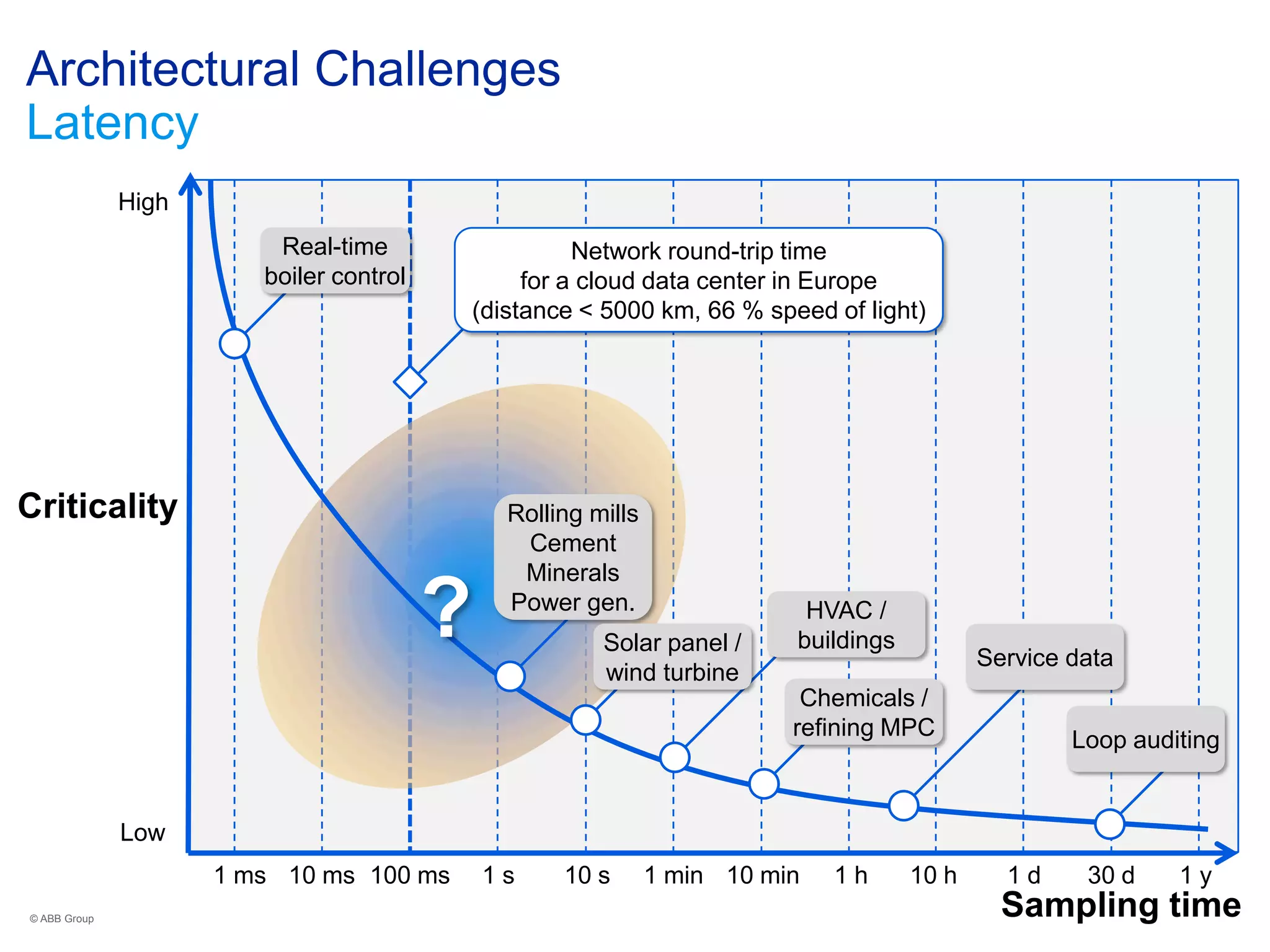

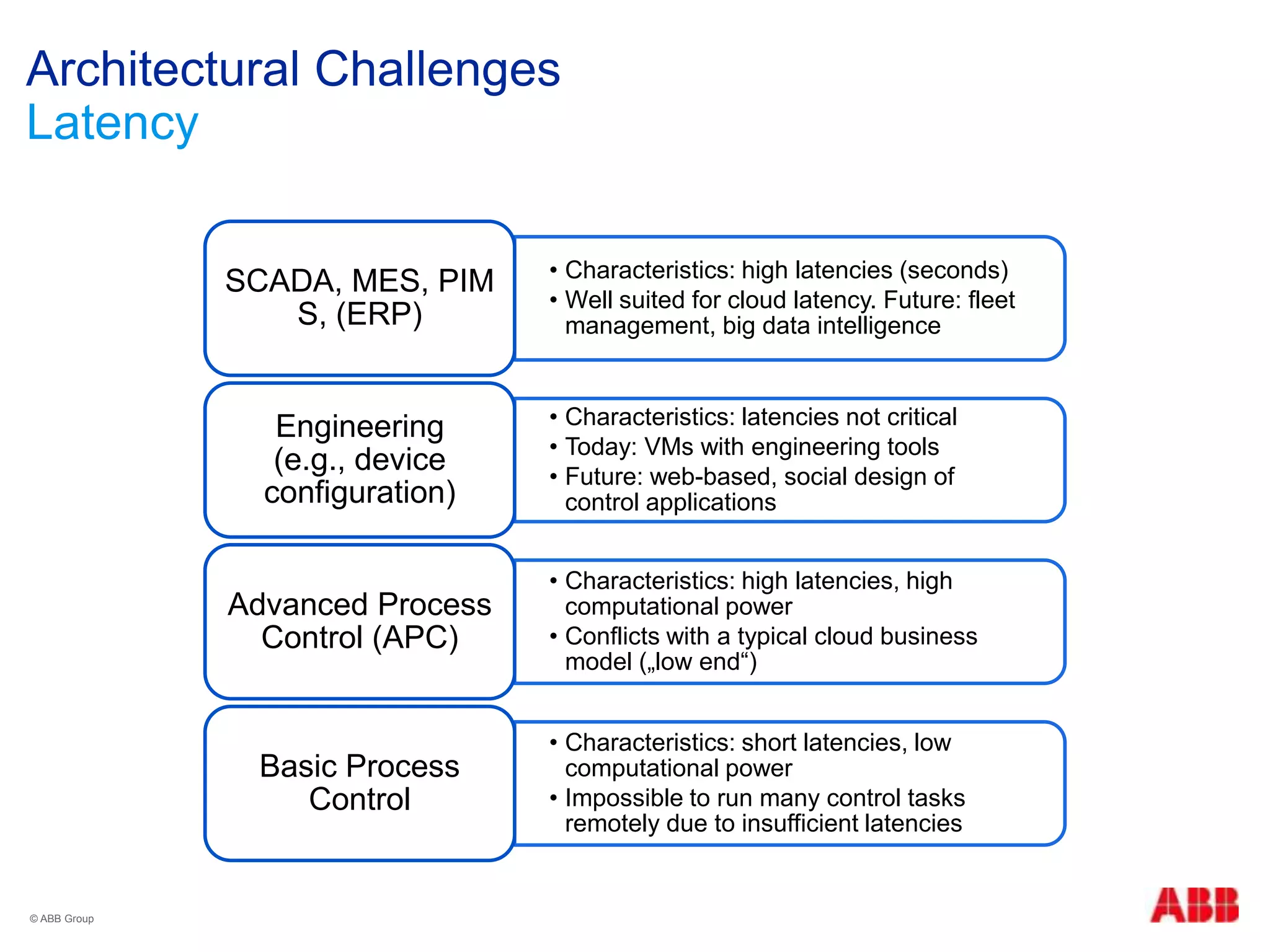

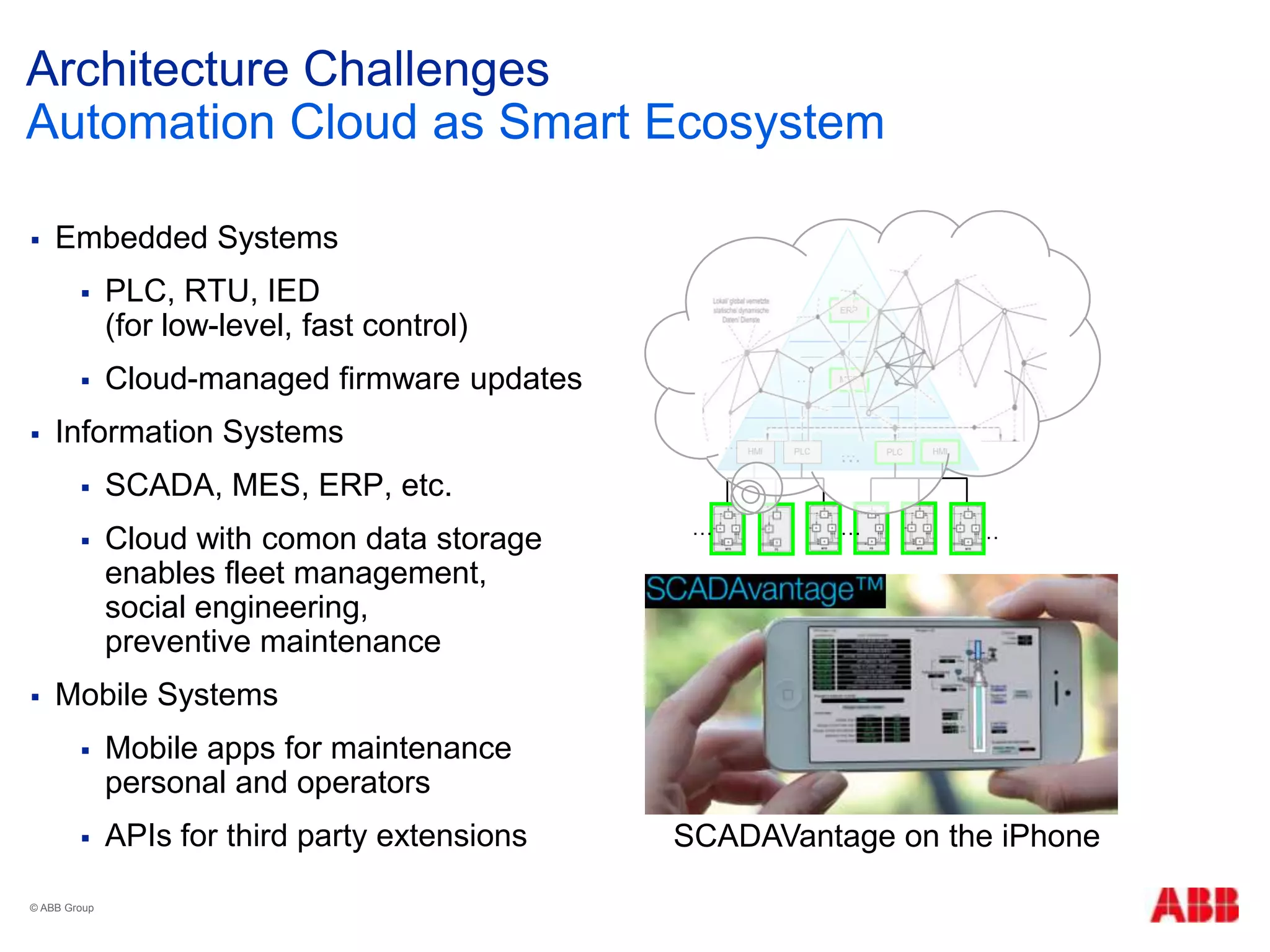

The document discusses architectural challenges and developments in integrating cloud computing into traditional automation systems, particularly in the context of cyber-physical systems. Key challenges highlighted include latency, security, and multi-tenancy, as well as the potential evolution of an automation cloud ecosystem. The paper emphasizes the need for innovative solutions to manage control tasks remotely while ensuring robust security measures.

![Cloud Computing: NIST Definition](https://image.slidesharecdn.com/2013-07-02gi-fgswacloud-web-130703041304-phpapp01/75/Towards-the-Automation-Cloud-Architectural-Challenges-for-a-Novel-Smart-Ecosystem-6-2048.jpg)

“Cloud computing is a model for enabling convenient, on-demand network access to a shared pool of configurable computing resources (e.g., networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction.”

5 essential characteristics

- On-demand self-service
- Broad network access
- Resource pooling
- Rapid elasticity or expansion
- Measured service

© ABB Group

Source: http://www.nist.gov/itl/cloud/

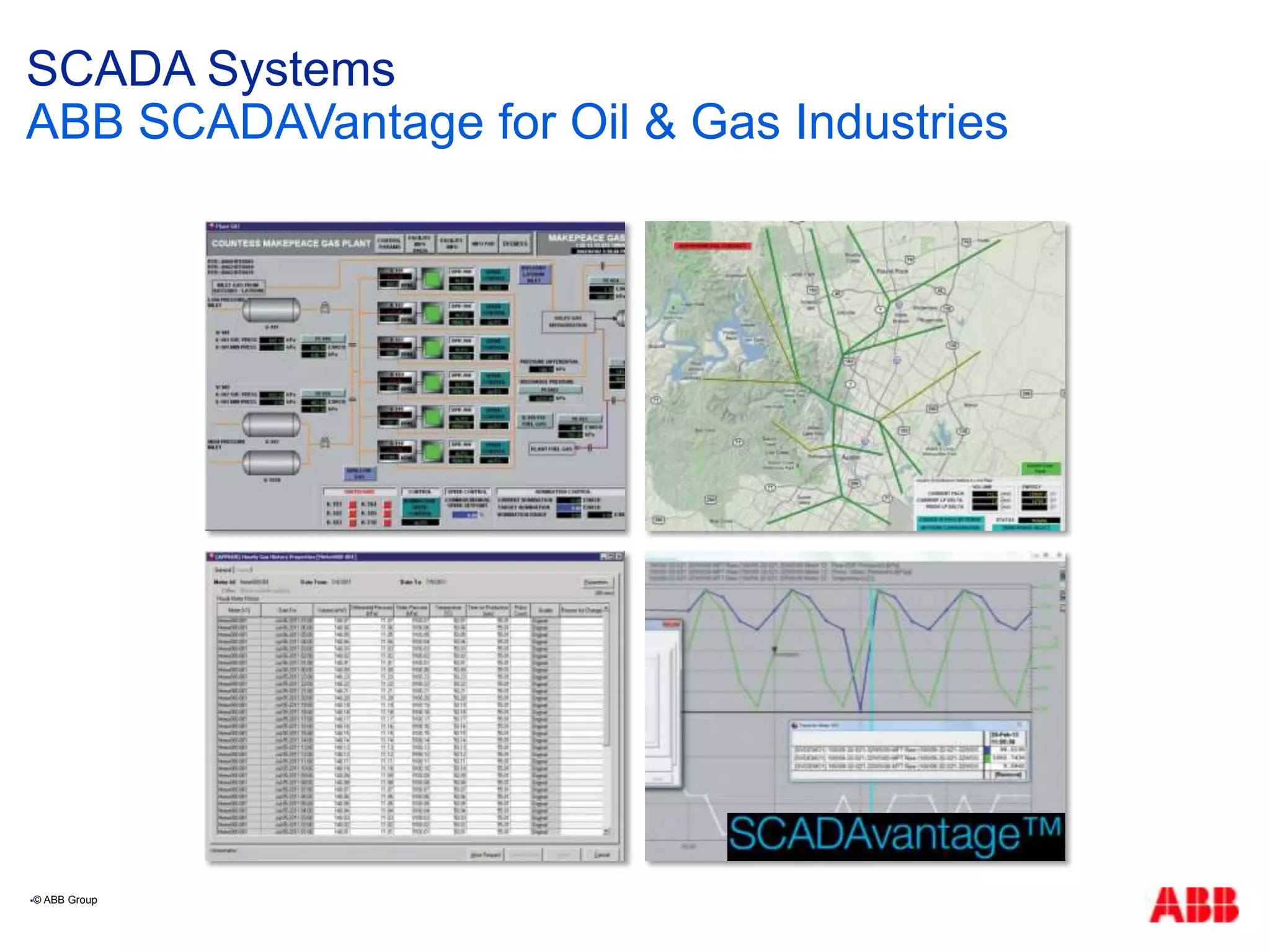

![Architecture Challenges: Latency](https://image.slidesharecdn.com/2013-07-02gi-fgswacloud-web-130703041304-phpapp01/75/Towards-the-Automation-Cloud-Architectural-Challenges-for-a-Novel-Smart-Ecosystem-18-2048.jpg)

- ABB partnered with cloud provider GlobaLogix to provide a hosted version of SCADAVantage (SaaS)
- RTUs trigger fast, basic control on-site
- High-latency SCADA functionality is hosted in 53 data centers in North America, with regional proximity
- But: no horizontal scaling, no elasticity

Source: http://www.abb.com/cawp/seitp202/cf46b46446b6f83985257b7a00488357.aspx
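The split described on the latency slide (fast, basic control on the RTU; latency-tolerant SCADA functions in the hosted data center) can be illustrated with a minimal sketch. Everything below is an assumption for illustration only: the task names, the deadlines, the 80 ms round-trip figure, and the `place_task` helper are hypothetical and do not come from the slides or from SCADAVantage.

```python
from dataclasses import dataclass


@dataclass
class ControlTask:
    name: str
    deadline_ms: float  # maximum tolerable response time (hypothetical values)


def place_task(task: ControlTask, cloud_round_trip_ms: float,
               safety_margin: float = 2.0) -> str:
    """Decide where a task runs.

    A task stays on the on-site RTU when the cloud round trip, padded by
    a safety margin, would exceed its deadline; otherwise it may be hosted.
    """
    if cloud_round_trip_ms * safety_margin > task.deadline_ms:
        return "rtu"
    return "cloud"


# Hypothetical task set: two time-critical loops and one reporting job.
tasks = [
    ControlTask("emergency_shutdown", deadline_ms=10),
    ControlTask("pid_loop", deadline_ms=50),
    ControlTask("trend_reporting", deadline_ms=5000),
]

# Assumed round trip of 80 ms to the nearest data center.
placement = {t.name: place_task(t, cloud_round_trip_ms=80.0) for t in tasks}
print(placement)
```

Under these assumed numbers, only the reporting job tolerates the round trip, which mirrors the slide's point that basic control remains on-site while high-latency functionality moves to the cloud.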

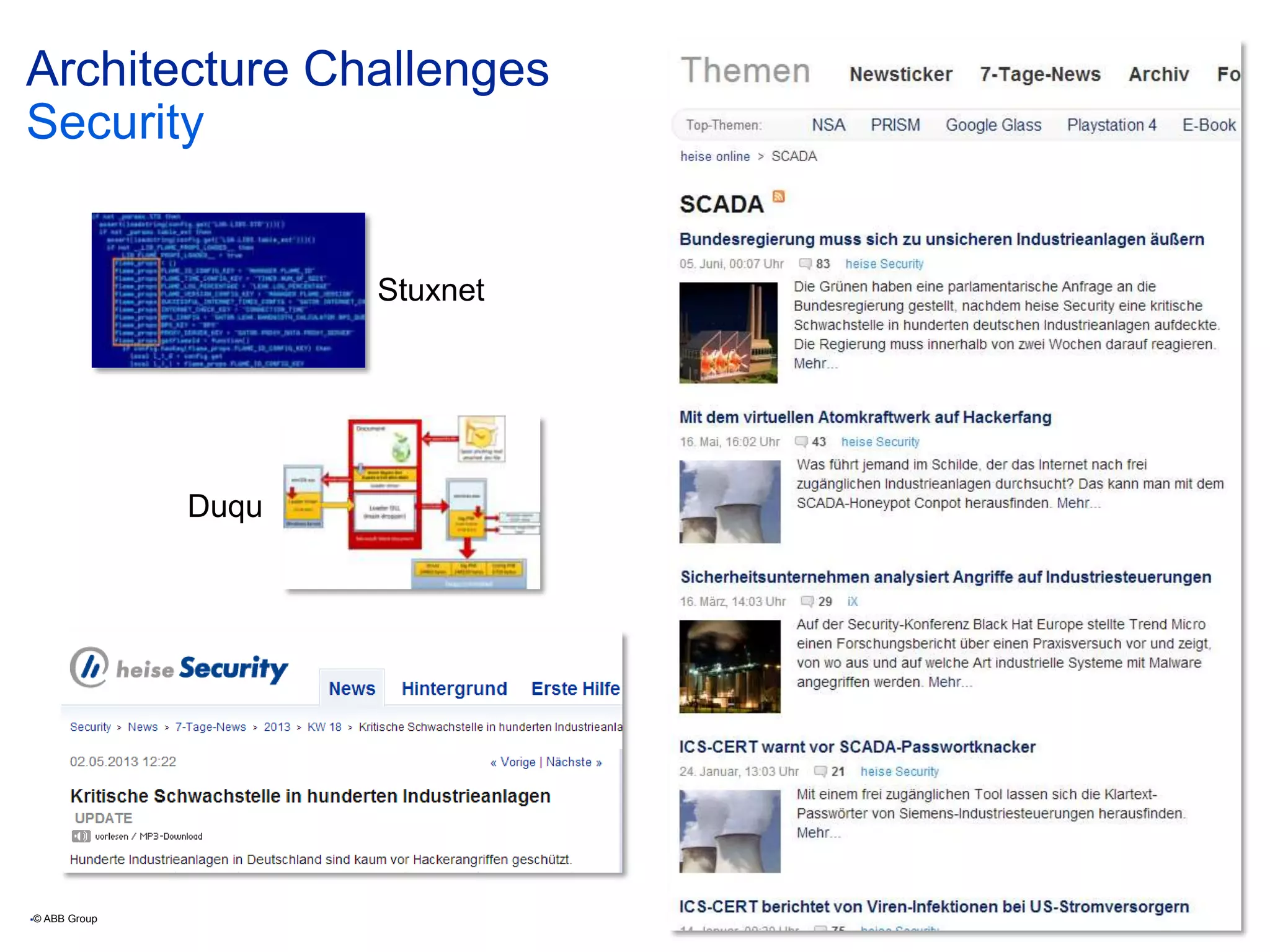

![Architecture Challenges: Security](https://image.slidesharecdn.com/2013-07-02gi-fgswacloud-web-130703041304-phpapp01/75/Towards-the-Automation-Cloud-Architectural-Challenges-for-a-Novel-Smart-Ecosystem-20-2048.jpg)

GlobaLogix data centers hosting ABB's SCADAVantage:

- 2048-bit encryption (exceeding DoD standards)
- Compliance with the most stringent Tier 4 data center standards from the Telecommunications Industry Association (TIA) and American National Standards Institute (ANSI)
- Citrix authentication on client laptops and tablets
- Password-protected web access to read-only data

Source: http://www.abb.com/cawp/seitp202/cf46b46446b6f83985257b7a00488357.aspx

March 1, 2013

![Architecture Challenges: Multitenancy](https://image.slidesharecdn.com/2013-07-02gi-fgswacloud-web-130703041304-phpapp01/75/Towards-the-Automation-Cloud-Architectural-Challenges-for-a-Novel-Smart-Ecosystem-21-2048.jpg)

Source: http://goo.gl/FlrES/

![Architecture Challenges: Directions for Academic Research](https://image.slidesharecdn.com/2013-07-02gi-fgswacloud-web-130703041304-phpapp01/75/Towards-the-Automation-Cloud-Architectural-Challenges-for-a-Novel-Smart-Ecosystem-23-2048.jpg)

- Cloud pattern catalogues: architecture decision sets, ontologies, domain-specific patterns, …
- Architecture description languages: cloud elements as first-class entities, domain-specific abstractions, …
- Architecture evaluation: ATAM templates for cloud platforms, model-based predictions
- Cloud benchmarks: reference workloads, tooling, comparisons, …
- Methods for Ultra-large Scale Systems: Smart Grid & Automation Cloud as ULSS, systems of systems

Source: [Koziolek, Proc. WICSA'11]