[Tokyo Scala User Group] Akka Streams & Reactive Streams (0.7)

- 1. Konrad 'ktoso' Malawski GeeCON 2014 @ Kraków, PL Akka Streams Konrad `@ktosopl` Malawski

- 2. hAkker @ Konrad `@ktosopl` Malawski

- 3. hAkker @ Konrad `@ktosopl` Malawski typesafe.com geecon.org Java.pl / KrakowScala.pl sckrk.com / meetup.com/Paper-Cup @ London GDGKrakow.pl meetup.com/Lambda-Lounge-Krakow

- 4. You? ?

- 8. Streams

- 10. Streams “You cannot enter the same river twice” ~ Heraclitus http://en.wikiquote.org/wiki/Heraclitus

- 11. Streams: real-time stream processing. When you attach “late” to a Publisher, you may miss initial elements – it’s a river of data. http://en.wikiquote.org/wiki/Heraclitus

- 12. Reactive Streams

- 13. Reactive Streams: stream processing

- 14. Reactive Streams: back-pressured stream processing

- 15. Reactive Streams: back-pressured, asynchronous stream processing

- 16. Reactive Streams: back-pressured, asynchronous stream processing. Standardised (!)

- 17. Reactive Streams: Goals. (1) Back-pressured, asynchronous stream processing. (2) A standard implemented by many libraries.

- 18. Reactive Streams - Specification & TCK http://reactive-streams.org

- 19. Reactive Streams - Who? Kaazing Corp. rxJava @ Netflix, reactor @ Pivotal (SpringSource), vert.x @ Red Hat, Twitter, akka-streams @ Typesafe, spray @ Spray.io, Oracle, java (?) – Doug Lea - SUNY Oswego … http://reactive-streams.org

- 20. Reactive Streams - Inter-op We want to make different implementations co-operate with each other. http://reactive-streams.org

- 21. Reactive Streams - Inter-op The different implementations “talk to each other” using the Reactive Streams protocol. http://reactive-streams.org

- 22. Reactive Streams - Inter-op The Reactive Streams SPI is NOT meant to be a user-facing API. You should use one of the implementing libraries. http://reactive-streams.org

- 24. Back-pressure? Example without back-pressure: Publisher[T] and Subscriber[T]

- 25. Back-pressure? Example without back-pressure: fast Publisher, slow Subscriber

- 26. Back-pressure? Push + NACK model

- 28. Back-pressure? Push + NACK model Subscriber usually has some kind of buffer

- 31. Back-pressure? Push + NACK model What if the buffer overflows?

- 32. Back-pressure? Push + NACK model (a) Use bounded buffer, drop messages + require re-sending

- 33. Back-pressure? Push + NACK model (a) Use bounded buffer, drop messages + require re-sending. Kernel does this! Routers do this! (TCP)

- 34. Back-pressure? Push + NACK model (b) Increase buffer size… Well, while you have memory available!

- 35. Back-pressure? Push + NACK model (b)
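
Option (a) from the Push + NACK slides can be sketched in plain Scala. This is only an illustration under stated assumptions: `BoundedBuffer`, `Ack`, `Nack` and `pushAll` are hypothetical names invented here, not part of Akka or Reactive Streams, and everything runs on a single thread.

```scala
import scala.collection.mutable

object PushNackDemo {
  /** Reply to one pushed element (hypothetical protocol for this sketch). */
  sealed trait Reply
  case object Ack extends Reply
  case object Nack extends Reply // buffer full: element dropped, sender must re-send

  /** Hypothetical subscriber-side buffer with a fixed capacity. */
  final class BoundedBuffer[T](capacity: Int) {
    private val queue = mutable.Queue.empty[T]

    /** Accepts the element if there is room, otherwise drops it and NACKs. */
    def offer(elem: T): Reply =
      if (queue.size < capacity) { queue.enqueue(elem); Ack }
      else Nack

    /** The slow subscriber takes one element out of its buffer. */
    def poll(): Option[T] = if (queue.isEmpty) None else Some(queue.dequeue())

    def size: Int = queue.size
  }

  /** Pushes every element without waiting; returns the ones that were NACKed. */
  def pushAll[T](elems: Seq[T], buffer: BoundedBuffer[T]): Vector[T] = {
    val rejected = Vector.newBuilder[T]
    elems.foreach(e => if (buffer.offer(e) == Nack) rejected += e)
    rejected.result()
  }

  def main(args: Array[String]): Unit = {
    val buffer = new BoundedBuffer[Int](capacity = 3)
    val rejected = pushAll(1 to 5, buffer)
    println(s"buffered = ${buffer.size}, must be re-sent = $rejected")
  }
}
```

The publisher only learns about the full buffer after elements have already been dropped, which is the cost the following slides point at.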

- 36. Back-pressure? Why NACKing is NOT enough

- 37. Back-pressure? Example NACKing: We're in trouble! Buffer overflow is imminent!

- 38. Back-pressure? Example NACKing Telling the Publisher to slow down / stop sending…

- 39. Back-pressure? Example NACKing NACK did not make it in time, because M was in-flight!

- 40. Back-pressure? speed(publisher) < speed(subscriber)

- 41. Back-pressure? Fast Subscriber: no problem!

- 42. Back-pressure? Reactive-Streams = “Dynamic Push/Pull”

- 43. Back-pressure? RS: Dynamic Push/Pull. Just push: not safe when the Subscriber is slow. Just pull: too slow when the Subscriber is fast.

- 44. Back-pressure? RS: Dynamic Push/Pull. Just push: not safe when the Subscriber is slow. Just pull: too slow when the Subscriber is fast. Solution: dynamic adjustment (Reactive Streams).

- 45. Back-pressure? RS: Dynamic Push/Pull. The slow Subscriber sees that its buffer can take 3 elements. The Publisher will never blow up the Subscriber’s buffer.

- 46. Back-pressure? RS: Dynamic Push/Pull. The fast Publisher will send at most 3 elements. This is pull-based back-pressure.

- 47. Back-pressure? RS: Dynamic Push/Pull Fast Subscriber can issue more Request(n), before more data arrives!

- 49. Back-pressure? RS: Accumulate demand Publisher accumulates total demand per subscriber.

- 50. Back-pressure? RS: Accumulate demand Total demand of elements is safe to publish. Subscriber’s buffer will not overflow.

- 51. Back-pressure? RS: Requesting “a lot”. A fast Subscriber can request “a lot” from the Publisher. This is effectively “publisher push”, and is really fast. The buffer size is known, so this is safe.

- 52. Back-pressure? RS: Dynamic Push/Pull

- 53. Back-pressure? RS: Dynamic Push/Pull Safe! Will never overflow!
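
The demand accounting described on the slides above (Request(n), accumulated demand, never sending more than was requested) can be sketched as a toy model. This is a single-threaded illustration only: `ToyPublisher`, `request` and `pushAvailable` are hypothetical names made up for this sketch, and the real Reactive Streams interfaces are asynchronous and carry more rules than shown here.

```scala
import scala.collection.mutable

object DemandDemo {
  /** Hypothetical, synchronous stand-in for a Publisher serving one subscriber. */
  final class ToyPublisher[T](elements: Iterator[T], onNext: T => Unit) {
    private var demand = 0L // total outstanding demand signalled by the subscriber

    /** Subscriber signals it can take `n` more elements; demand accumulates. */
    def request(n: Long): Unit = {
      require(n > 0, "demand must be positive")
      demand += n
    }

    /** Publisher pushes as fast as it likes, but never beyond outstanding demand. */
    def pushAvailable(): Int = {
      var sent = 0
      while (demand > 0 && elements.hasNext) {
        onNext(elements.next())
        demand -= 1
        sent += 1
      }
      sent
    }

    def outstandingDemand: Long = demand
  }

  def main(args: Array[String]): Unit = {
    val received = mutable.ArrayBuffer.empty[Int]
    // An endless source: only the subscriber's demand limits what is sent.
    val publisher = new ToyPublisher[Int](Iterator.from(1), x => { received += x; () })

    publisher.request(3)                  // slow subscriber: room for 3 elements
    println(publisher.pushAvailable())    // number of elements pushed for that demand
    publisher.request(2)
    publisher.request(2)                  // more demand issued before data arrives
    println(publisher.outstandingDemand)  // accumulated demand
    println(publisher.pushAvailable())
    println(received.mkString(", "))
  }
}
```

A subscriber that requests a large `n` up front effectively lets the publisher push freely, while a subscriber that requests one element at a time behaves like pure pull.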

- 54. What is Akka?

- 55. Akka: Akka is a high-performance concurrency library for Scala and Java. At its core it focuses on the Actor Model.

- 56. Akka: At its core it focuses on the Actor Model. An Actor can only: send / receive messages, create Actors, change its behaviour.

- 57. Akka has multiple modules. Akka-camel: integration; Akka-remote: remote actors; Akka-cluster: clustering; Akka-persistence: CQRS / Event Sourcing; Akka-streams: stream processing; …

- 58. Akka Streams 0.7 early preview

- 59. Akka Streams – Linear Flow

- 63. Akka Streams – Linear Flow

          FlowFrom[Double].map(_.toInt). [...]

      No Source attached yet. “Pipe ready to work with Doubles”.

- 64. Akka Streams – Linear Flow

          implicit val sys = ActorSystem("tokyo-sys")

      It’s the world in which Actors live. Akka Streams uses Actors, so it needs an ActorSystem.

- 65. Akka Streams – Linear Flow

          implicit val sys = ActorSystem("tokyo-sys")
          implicit val mat = FlowMaterializer()

      The FlowMaterializer contains the logic on HOW to materialise the stream. It can be pure Actors or, in the future, Apache Spark.

- 66. Akka Streams – Linear Flow

          implicit val sys = ActorSystem("tokyo-sys")
          implicit val mat = FlowMaterializer()

      You can configure the FlowMaterializer’s buffer sizes etc. (or implement your own materialiser, e.g. “run on Spark”).

- 67. Akka Streams – Linear Flow

          implicit val sys = ActorSystem("tokyo-sys")
          implicit val mat = FlowMaterializer()
          val foreachSink = ForeachSink[Int](println)
          val mf = FlowFrom(1 to 3).withSink(foreachSink).run()

      `run()` uses the implicit FlowMaterializer.

- 68. Akka Streams – Linear Flow

          implicit val sys = ActorSystem("tokyo-sys")
          implicit val mat = FlowMaterializer()
          val foreachSink = ForeachSink[Int](println)
          val mf = FlowFrom(1 to 3).withSink(foreachSink).run()(mat)

- 69. Akka Streams – Linear Flow

          val mf = FlowFrom[Int].
            map(_ * 2).
            withSink(ForeachSink(println)) // needs source,
                                           // can NOT run

- 70. Akka Streams – Linear Flow

          val f = FlowFrom[Int].
            map(_ * 2).
            withSink(ForeachSink(i => println(s"i = $i")))
          // needs Source to run!

- 74. Akka Streams – Linear Flow

          val f = FlowFrom[Int].
            map(_ * 2).
            withSink(ForeachSink(i => println(s"i = $i")))
          // needs Source to run!

          f.withSource(IterableSource(1 to 10)).run()

- 79. Akka Streams – Flows are reusable

          f.withSource(IterableSource(1 to 10)).run()
          f.withSource(IterableSource(1 to 100)).run()
          f.withSource(IterableSource(1 to 1000)).run()
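
One way to picture why the same source-less flow can be run many times: it is only a description, and nothing happens until a source is attached and it is run. The sketch below is a plain-Scala analogy, not the Akka Streams 0.7 API; `Blueprint` and `runWith` are hypothetical names, and unlike a real stream this runs synchronously on the calling thread.

```scala
object BlueprintDemo {
  /** Hypothetical stand-in for a source-less flow: a transformation plus a sink, no data yet. */
  final case class Blueprint[A, B](transform: A => B, sink: B => Unit) {
    /** Attaches a source and runs; the blueprint itself is not modified. */
    def runWith(source: Iterable[A]): Unit =
      source.foreach(a => sink(transform(a)))
  }

  def main(args: Array[String]): Unit = {
    // Describe once...
    val f = Blueprint[Int, Int](_ * 2, i => println(s"i = $i"))
    // ...run as often as needed, each time with a different source.
    f.runWith(1 to 3)
    f.runWith(10 to 12)
  }
}
```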

- 80. Akka Streams <-> Actors – Advanced

          val subscriber = system.actorOf(Props[SubStreamParent], "parent")

          FlowFrom(1 to 100).
            map(_.toString).
            filter(_.length == 2).
            drop(2).
            groupBy(_.last).
            publishTo(ActorSubscriber(subscriber))

- 81. Akka Streams <-> Actors – Advanced: Each “group” produced by `groupBy(_.last)` is a stream too! The result is a “Stream of Streams”.

- 82. Akka Streams <-> Actors – Advanced: `groupBy(_.last)` groups by the last character, so “11” goes to group “1”, “12” to group “2”, etc.

- 83. Akka Streams <-> Actors – Advanced: `groupBy(_.last)` offers (groupKey, subStreamFlow) to the Subscriber.

- 84. Akka Streams <-> Actors – Advanced: The Subscriber can then start children to handle the sub-flows!

- 85. Akka Streams <-> Actors – Advanced: For example, one child for each group.
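
To see which groups the pipeline on these slides produces, the same steps can be applied to a strict Scala collection. This is only an analogy: a collection's `groupBy` builds the whole `Map` at once, whereas the streaming `groupBy` hands each group to the Subscriber as its own sub-stream. `GroupByDemo` and `groups` are names chosen for this sketch.

```scala
object GroupByDemo {
  /** Same steps as the slide's pipeline, on a strict collection instead of a stream. */
  val groups: Map[Char, Seq[String]] =
    (1 to 100)
      .map(_.toString)
      .filter(_.length == 2) // keeps "10" .. "99"
      .drop(2)               // drops "10" and "11", leaving "12" .. "99"
      .groupBy(_.last)       // key = last character of each string

  def main(args: Array[String]): Unit =
    groups.toSeq.sortBy(_._1).foreach { case (key, elems) =>
      println(s"group '$key': ${elems.take(3).mkString(", ")}, ... (${elems.size} elements)")
    }
}
```

Note that on a `String`, `_.last` yields a `Char`, so the group keys in this sketch are characters.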

- 86. Akka Streams <-> Actors – Advanced: `subscriber` is an ordinary Akka Actor that will consume the SubStream offers.

- 87. Akka Streams <-> Actors – Advanced

          class SubStreamParent extends ActorSubscriber
            with ImplicitFlowMaterializer
            with ActorLogging {

            override def requestStrategy = OneByOneRequestStrategy

            override def receive = {
              case OnNext((groupId: String, subStream: FlowWithSource[_, _])) =>
                val subSub = context.actorOf(Props[SubStreamSubscriber],
                  s"sub-$groupId")
                subStream.publishTo(ActorSubscriber(subSub))
            }
          }

- 92. Akka Streams <-> Actors – Advanced

          class SubStreamSubscriber extends ActorSubscriber {

            override def requestStrategy = OneByOneRequestStrategy

            override def receive = {
              case OnNext(n: String) => println(s"n = $n")
            }
          }

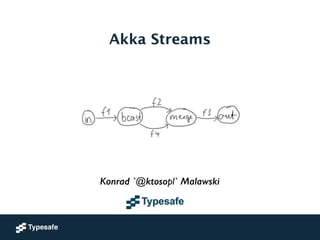

- 93. Akka Streams – GraphFlow

- 94. Akka Streams – GraphFlow: Linear Flows or non-Akka pipelines. Could be another RS implementation!

- 95. Akka Streams – GraphFlow Fan-out elements and Fan-in elements

- 96. Akka Streams – GraphFlow Fan-out elements and Fan-in elements Now you need a FlowGraph

- 97. Akka Streams – GraphFlow

          // first define some pipeline pieces
          val f1 = FlowFrom[Input].map(_.toIntermediate)
          val f2 = FlowFrom[Intermediate].map(_.enrich)
          val f3 = FlowFrom[Enriched].filter(_.isImportant)
          val f4 = FlowFrom[Intermediate].mapFuture(_.enrichAsync)

          // then add input and output placeholders
          val in = SubscriberSource[Input]
          val out = PublisherSink[Enriched]

- 98. Akka Streams – GraphFlow

- 99. Akka Streams – GraphFlow

          val b3 = Broadcast[Int]("b3")
          val b7 = Broadcast[Int]("b7")
          val b11 = Broadcast[Int]("b11")
          val m8 = Merge[Int]("m8")
          val m9 = Merge[Int]("m9")
          val m10 = Merge[Int]("m10")
          val m11 = Merge[Int]("m11")
          val in3 = IterableSource(List(3))
          val in5 = IterableSource(List(5))
          val in7 = IterableSource(List(7))

- 100. Akka Streams – GraphFlow

- 101. Akka Streams – GraphFlow

           // First layer
           in7 ~> b7
           b7 ~> m11
           b7 ~> m8

           in5 ~> m11

           in3 ~> b3
           b3 ~> m8
           b3 ~> m10

- 102. Akka Streams – GraphFlow

           // Second layer
           m11 ~> b11
           b11 ~> FlowFrom[Int].grouped(1000) ~> resultFuture2
           b11 ~> m9
           b11 ~> m10

           m8 ~> m9

- 103. Akka Streams – GraphFlow

           // Third layer
           m9 ~> FlowFrom[Int].grouped(1000) ~> resultFuture9
           m10 ~> FlowFrom[Int].grouped(1000) ~> resultFuture10

- 106. Akka Streams – GraphFlow: Sinks and Sources are “keys” which can be addressed within the graph.

           val resultFuture2 = FutureSink[Seq[Int]]
           val resultFuture9 = FutureSink[Seq[Int]]
           val resultFuture10 = FutureSink[Seq[Int]]

           val g = FlowGraph { implicit b =>
             // ...
             m10 ~> FlowFrom[Int].grouped(1000) ~> resultFuture10
             // ...
           }.run()

           Await.result(g.getSinkFor(resultFuture2), 3.seconds).sorted should be(List(5, 7))

- 108. Akka Streams – GraphFlow

           val g = FlowGraph {}

       FlowGraph is immutable and safe to share and re-use! Think of it as “the description” which then gets “run”.
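
The wiring on the GraphFlow slides can be traced by hand to see why the sink `resultFuture2` receives 5 and 7. The sketch below models that trace with plain Scala lists, assuming that a Broadcast passes every element to each of its outputs and that a Merge emits every element from all of its inputs (ordering is ignored, hence the `sorted`). It is a worked example of the data flow, not Akka code; `GraphDemo` and the `result*` names are chosen for this sketch.

```scala
object GraphDemo {
  // Sources, as on the slide: each emits a single element.
  val in3: List[Int] = List(3)
  val in5: List[Int] = List(5)
  val in7: List[Int] = List(7)

  // First layer: in7 ~> b7, in3 ~> b3 (a broadcast forwards its input to every output).
  val b7: List[Int] = in7
  val b3: List[Int] = in3
  val m11: List[Int] = b7 ++ in5 // b7 ~> m11, in5 ~> m11
  val m8: List[Int]  = b7 ++ b3  // b7 ~> m8,  b3 ~> m8

  // Second layer: m11 ~> b11, b11 and m8 feed m9, b3 and b11 feed m10.
  val b11: List[Int] = m11
  val m9: List[Int]  = b11 ++ m8
  val m10: List[Int] = b3 ++ b11

  // What each FutureSink would collect, sorted as in the slide's assertion.
  val result2: List[Int]  = b11.sorted
  val result9: List[Int]  = m9.sorted
  val result10: List[Int] = m10.sorted

  def main(args: Array[String]): Unit = {
    println(s"resultFuture2  -> $result2")
    println(s"resultFuture9  -> $result9")
    println(s"resultFuture10 -> $result10")
  }
}
```

The element 7 reaches `m9` along two different paths (via `b11` and via `m8`), which is why it appears twice in `result9`.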

- 109. Available Elements 0.7 early preview

- 110. Available Sources: FutureSource, IterableSource, IteratorSource, PublisherSource, SubscriberSource, ThunkSource, TickSource (timer based), … easy to add your own!

- 111. Available operations: buffer, collect, concat, conflate, drop / dropWithin, take / takeWithin, filter, fold, foreach, groupBy, grouped, map, onComplete, prefixAndTail, broadcast, merge / “generalised merge”, zip, … possible to add your own!

- 112. Available Sinks: BlackHoleSink, FoldSink, ForeachSink, FutureSink, OnCompleteSink, PublisherSink / FanoutPublisherSink, SubscriberSink, … easy to add your own!

- 113. Links 1. http://akka.io 2. http://reactive-streams.org 3. https://groups.google.com/group/akka-user

- 114. Thank you very much! Questions? http://akka.io ktoso @ typesafe.com twitter: ktosopl github: ktoso team blog: letitcrash.com

- 115. ©Typesafe 2014 – All Rights Reserved