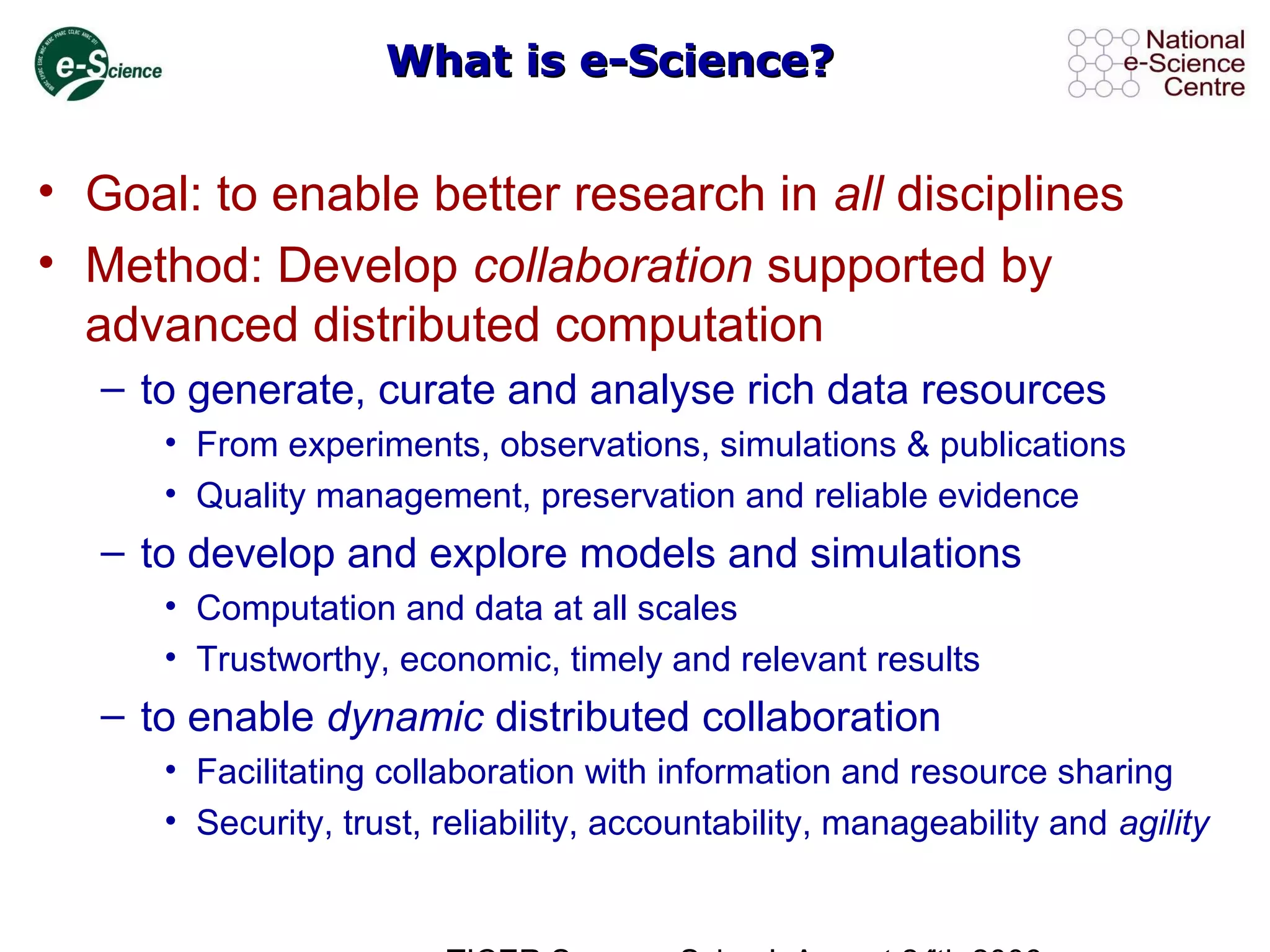

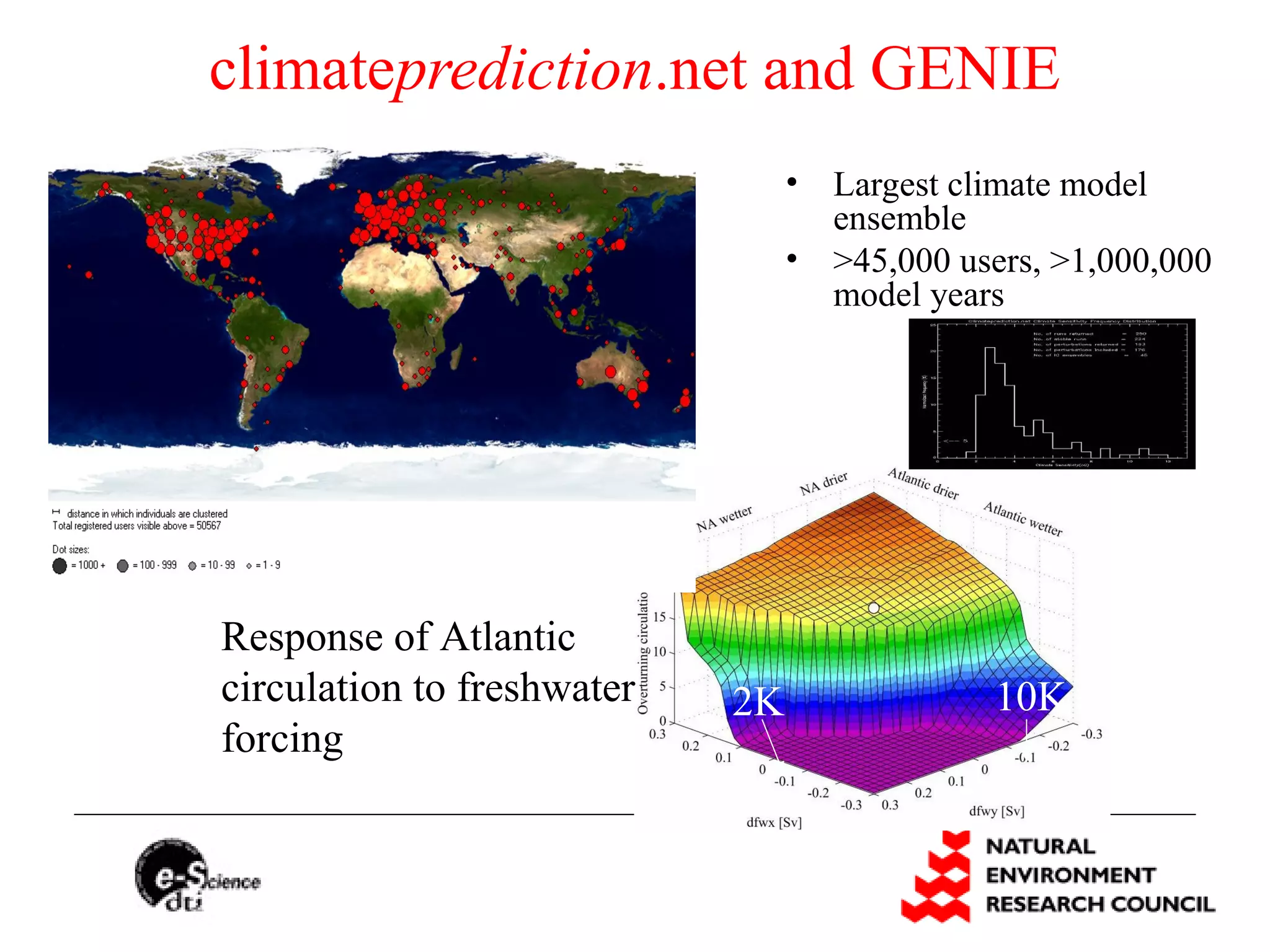

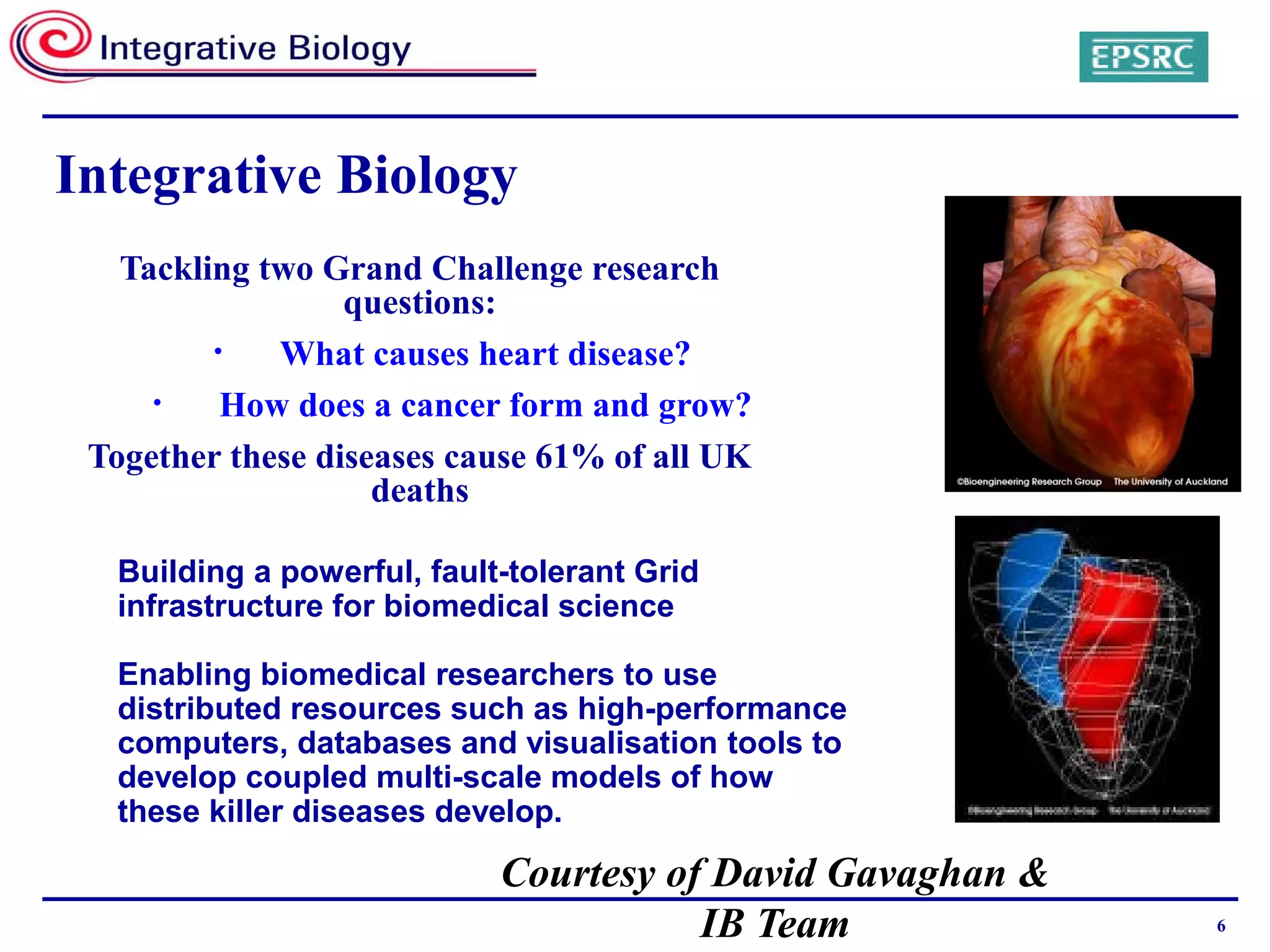

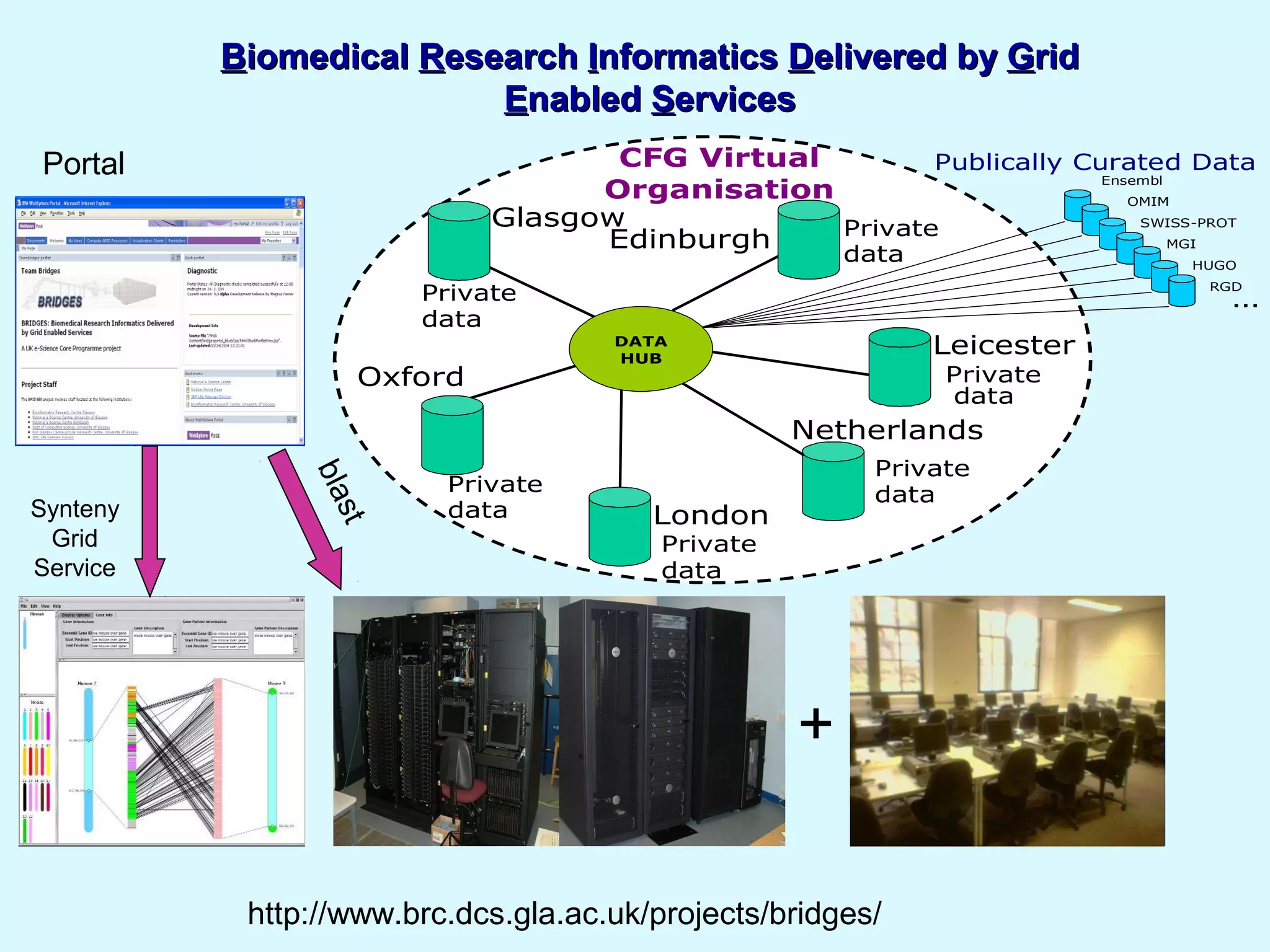

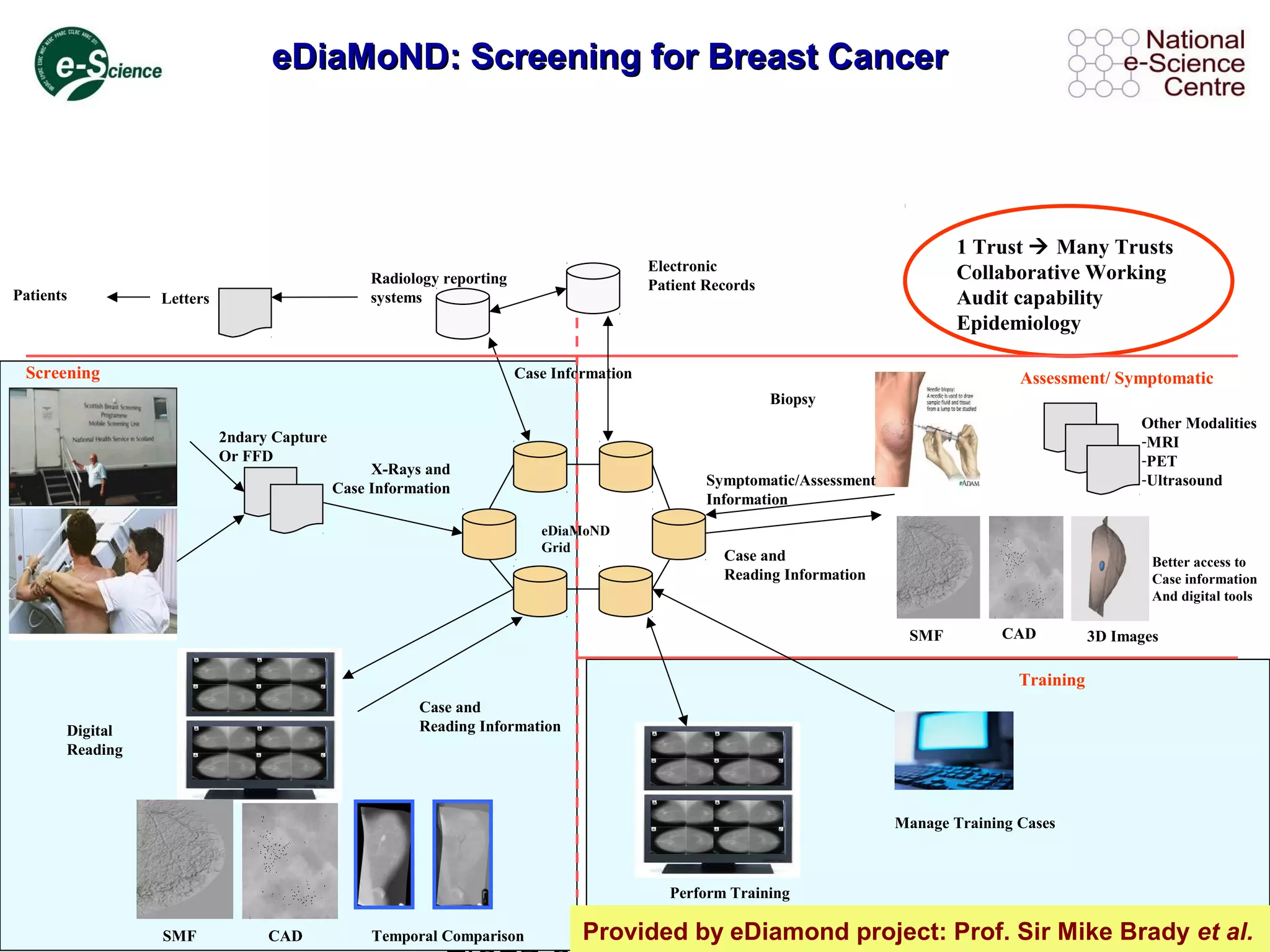

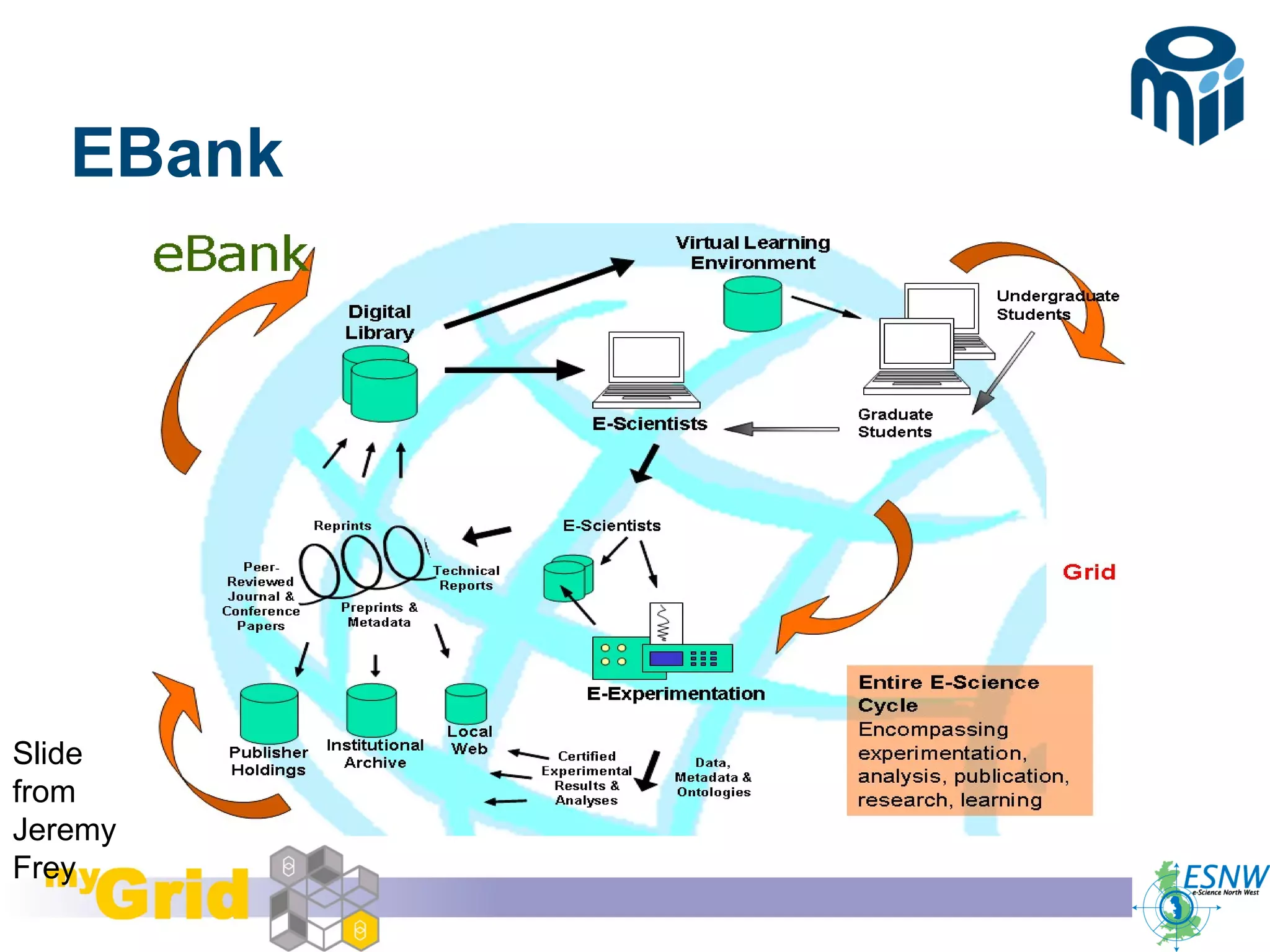

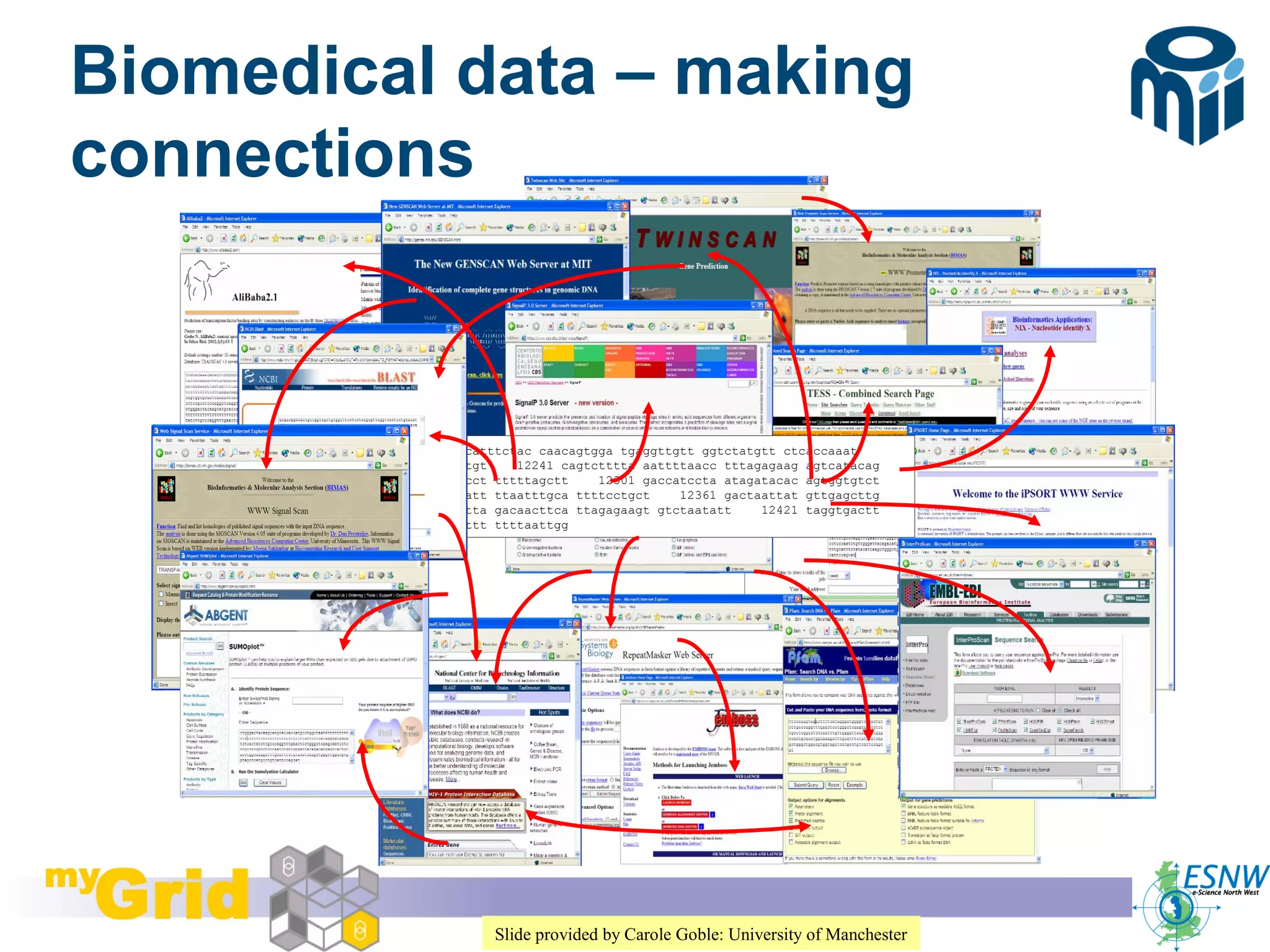

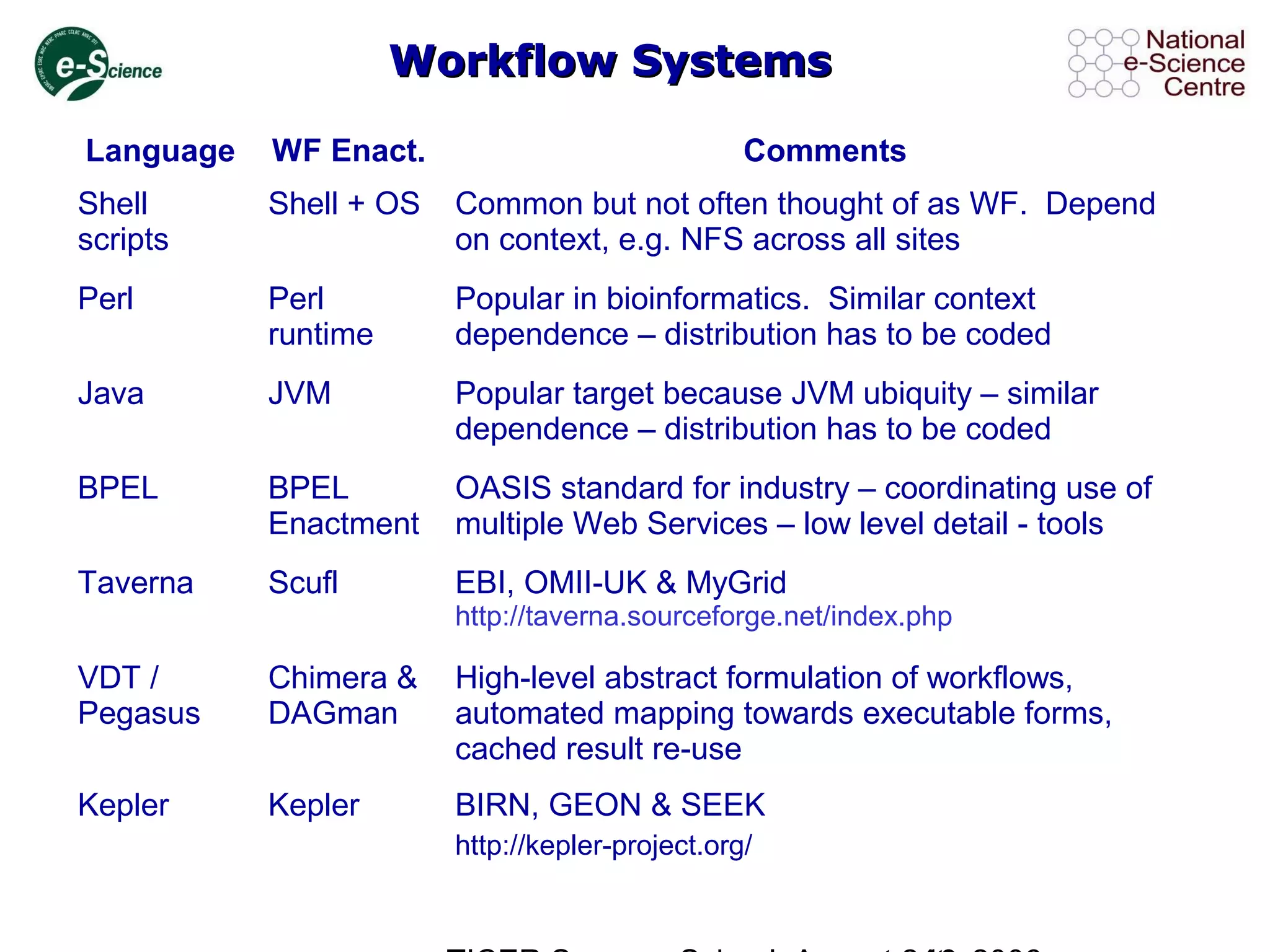

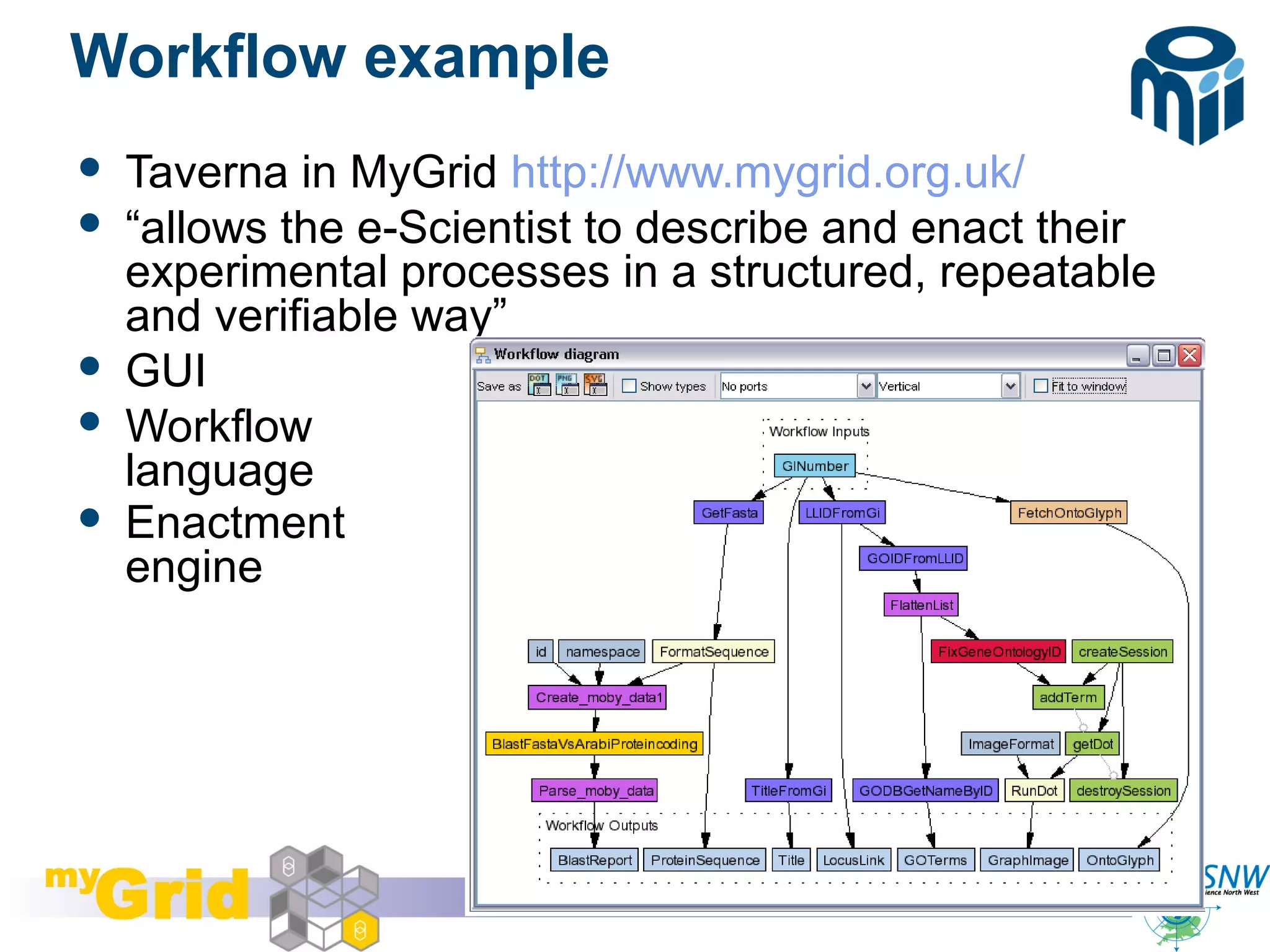

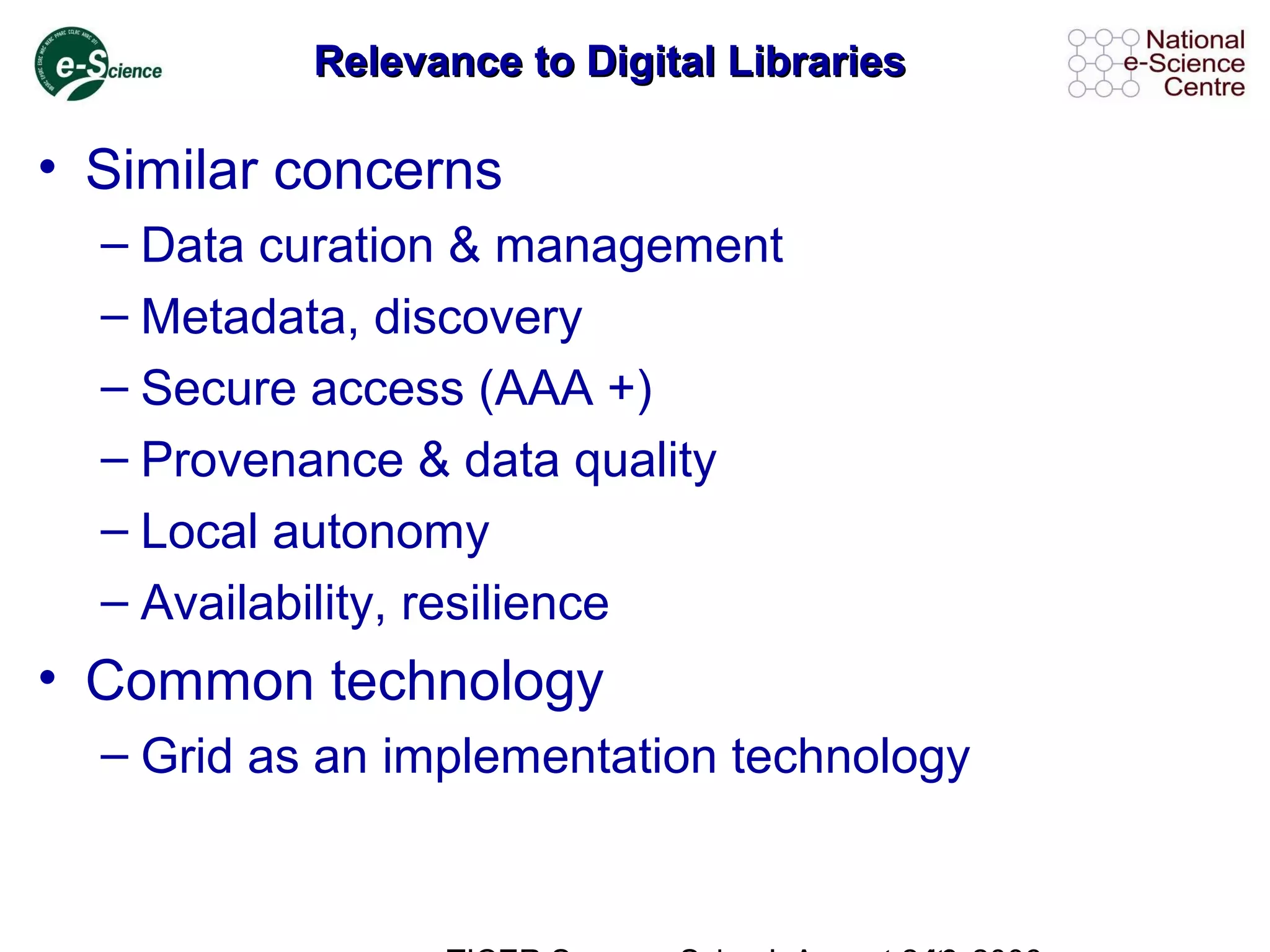

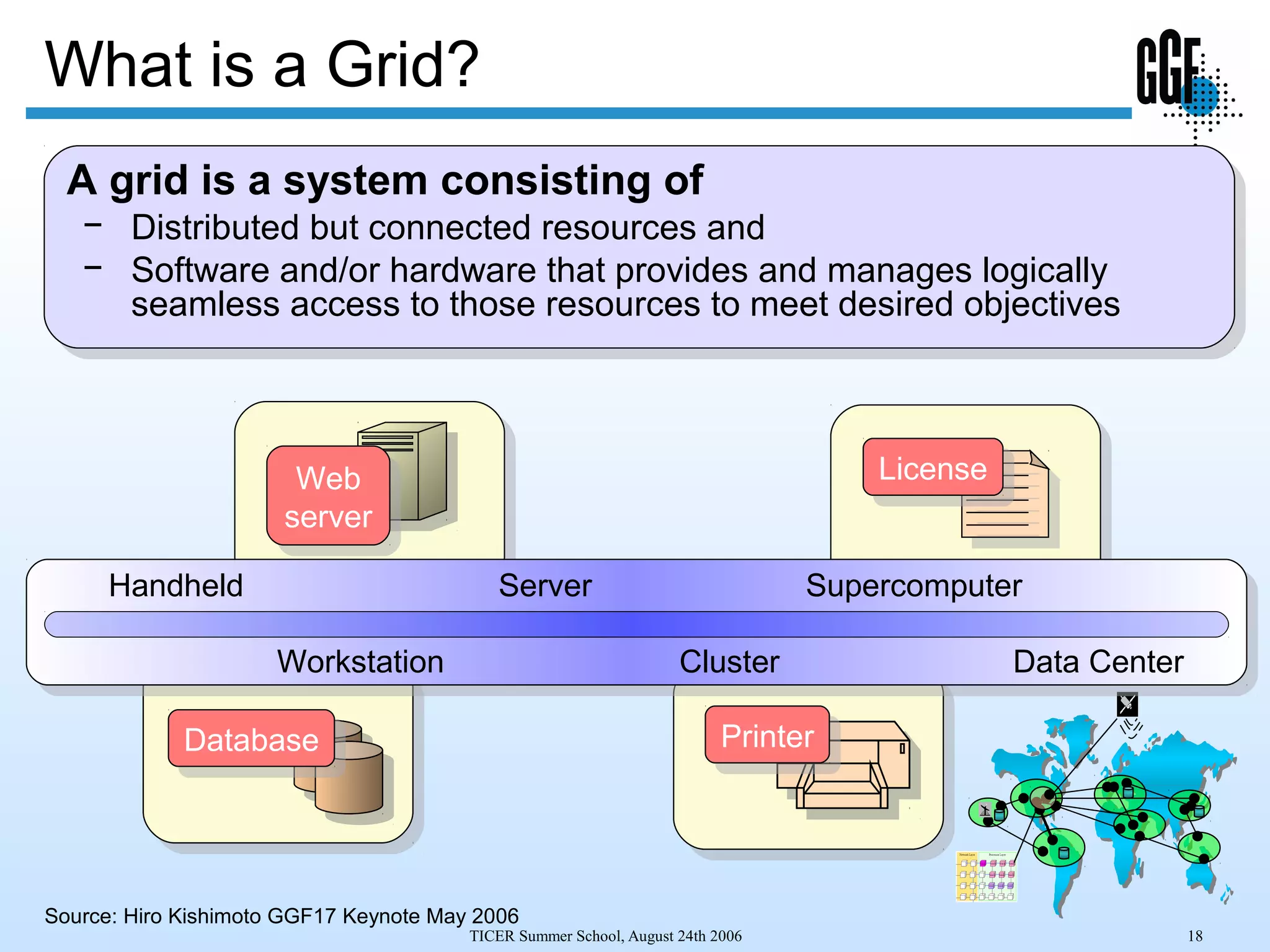

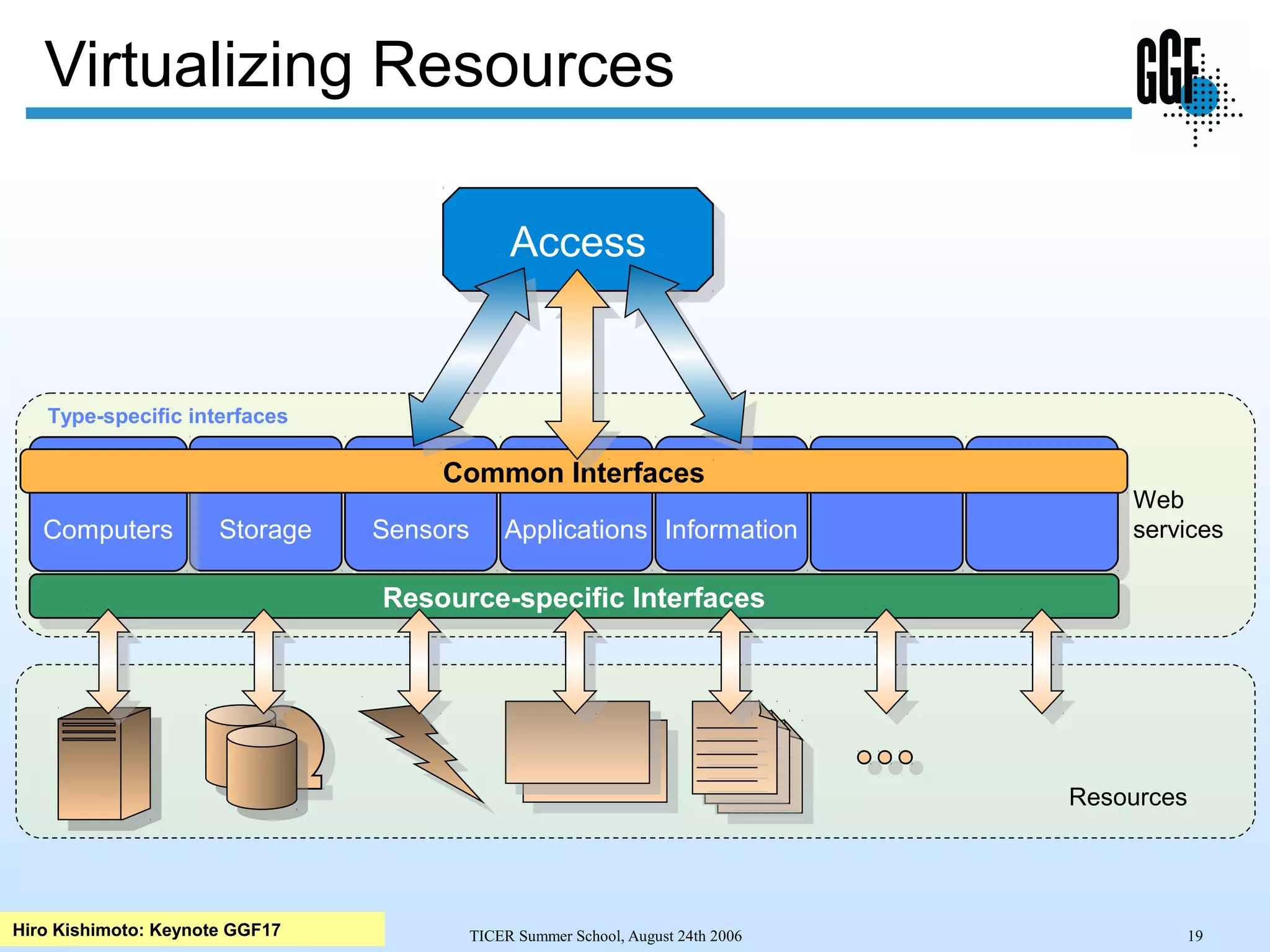

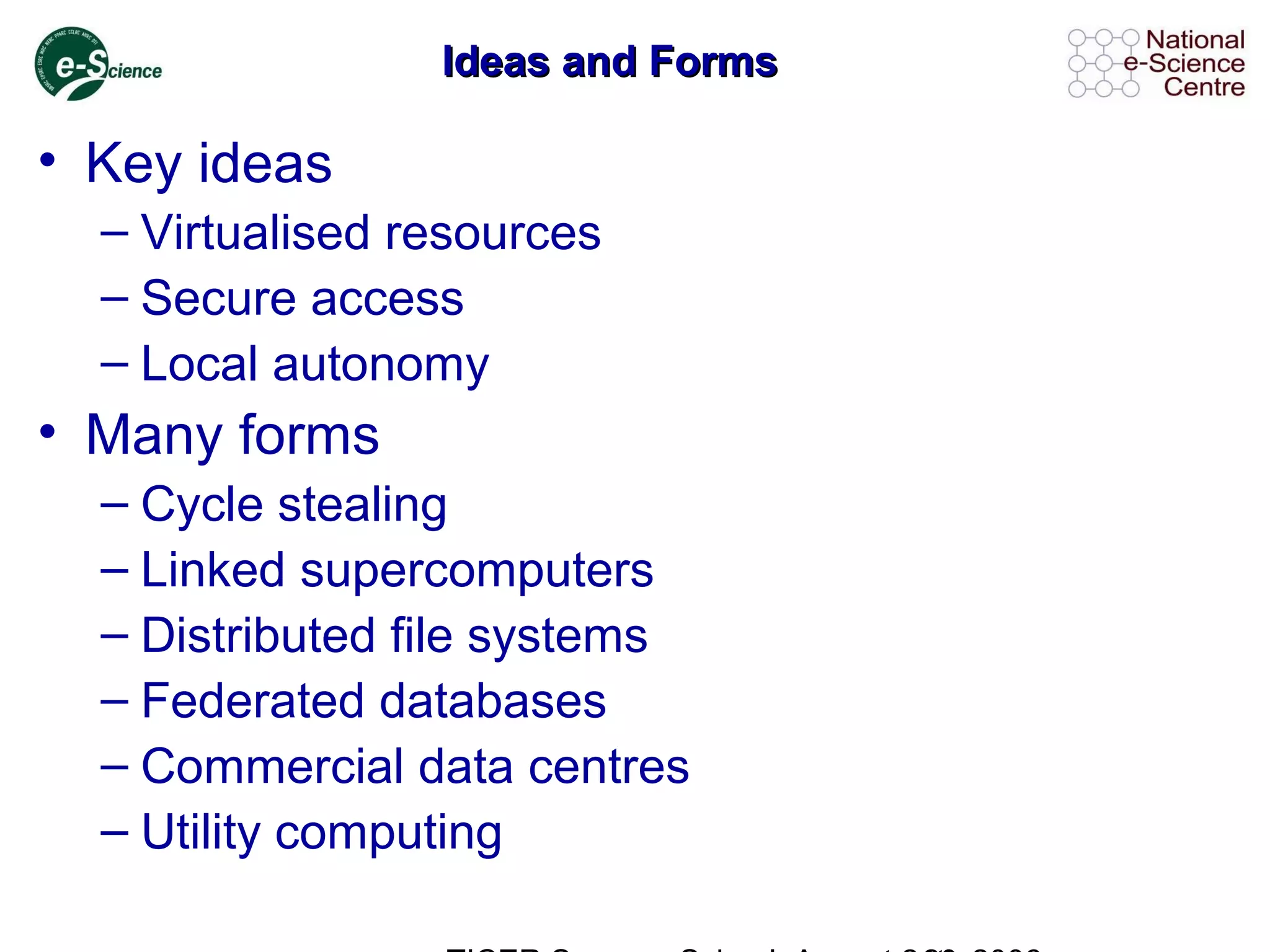

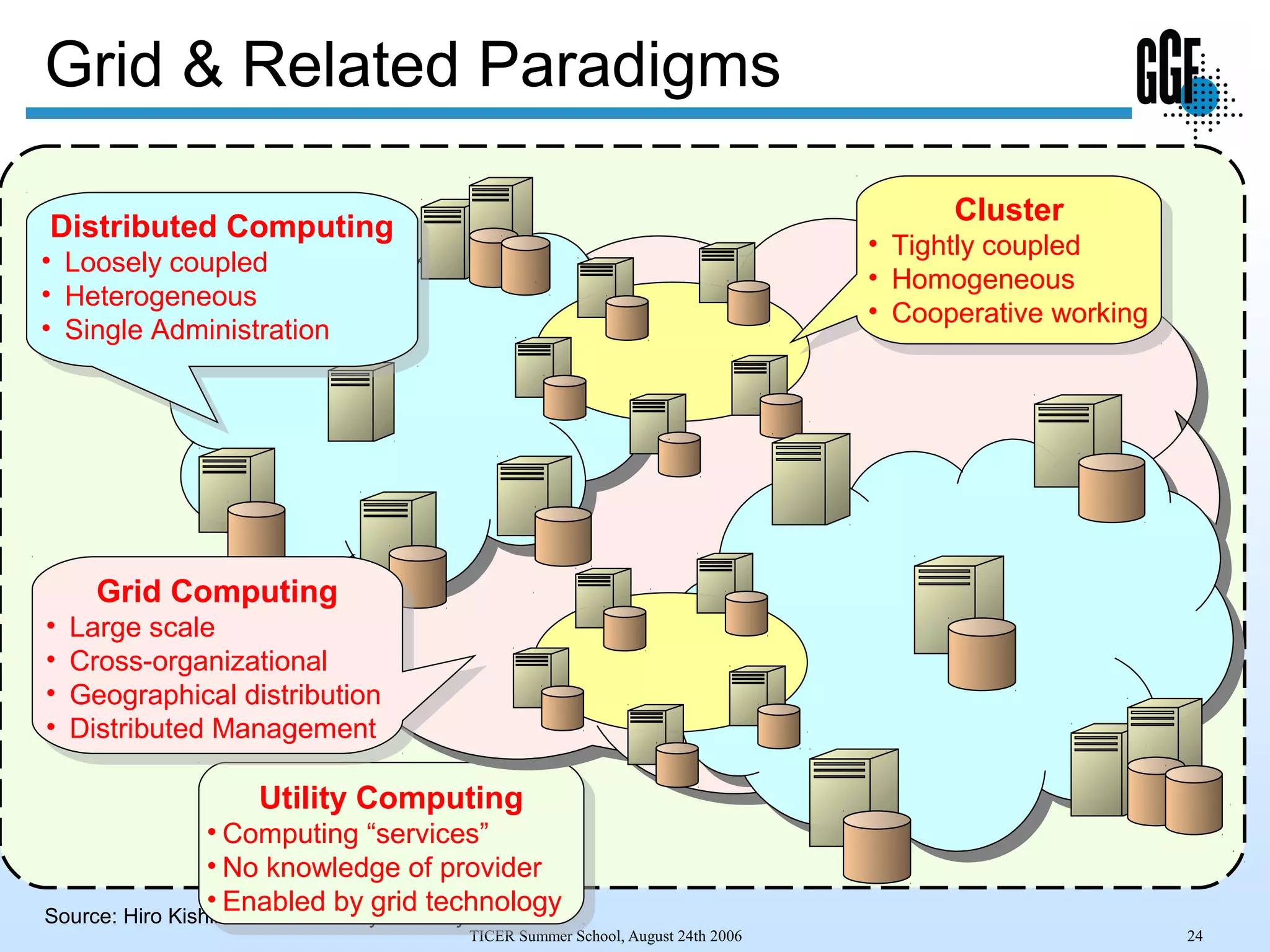

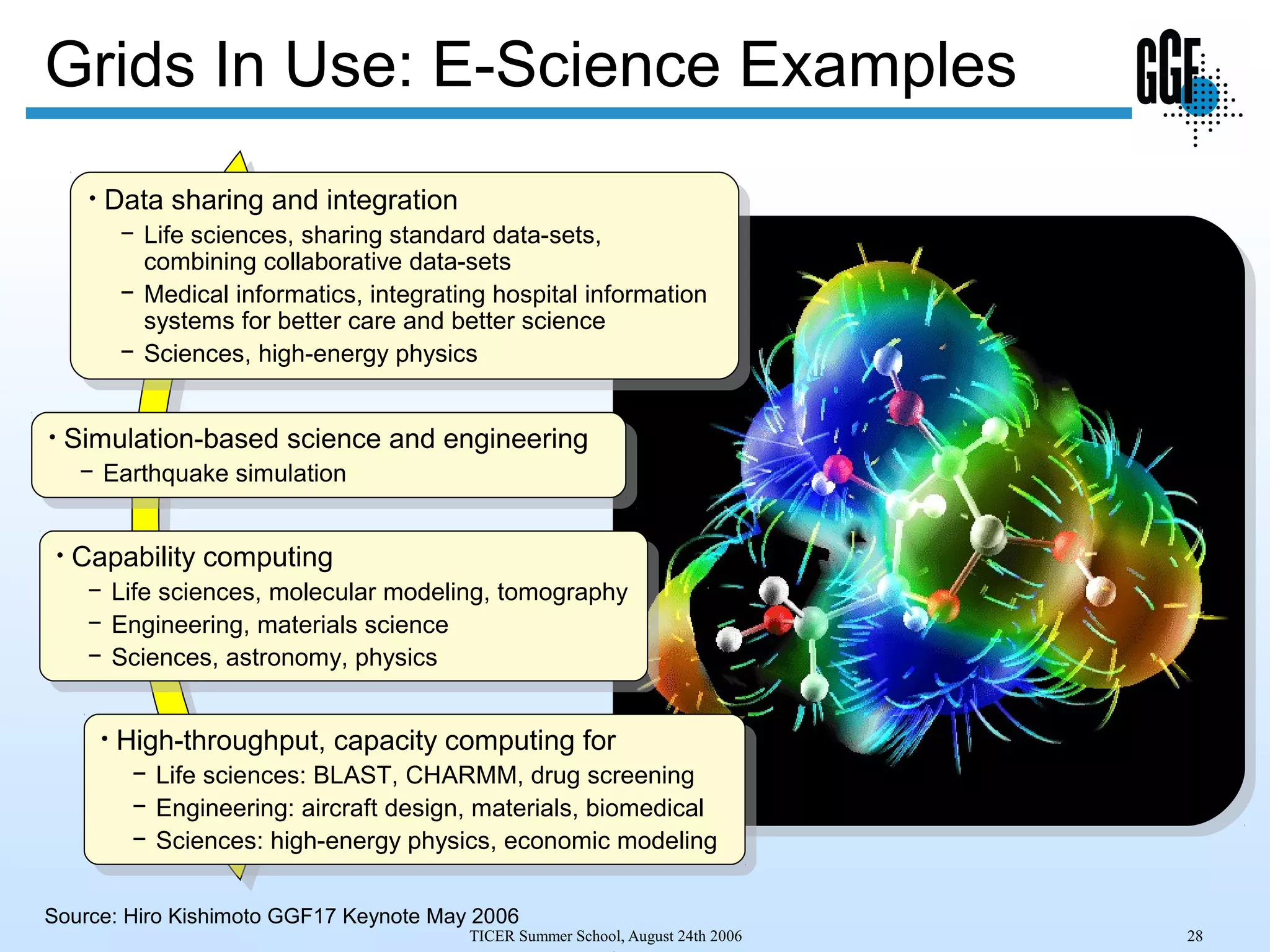

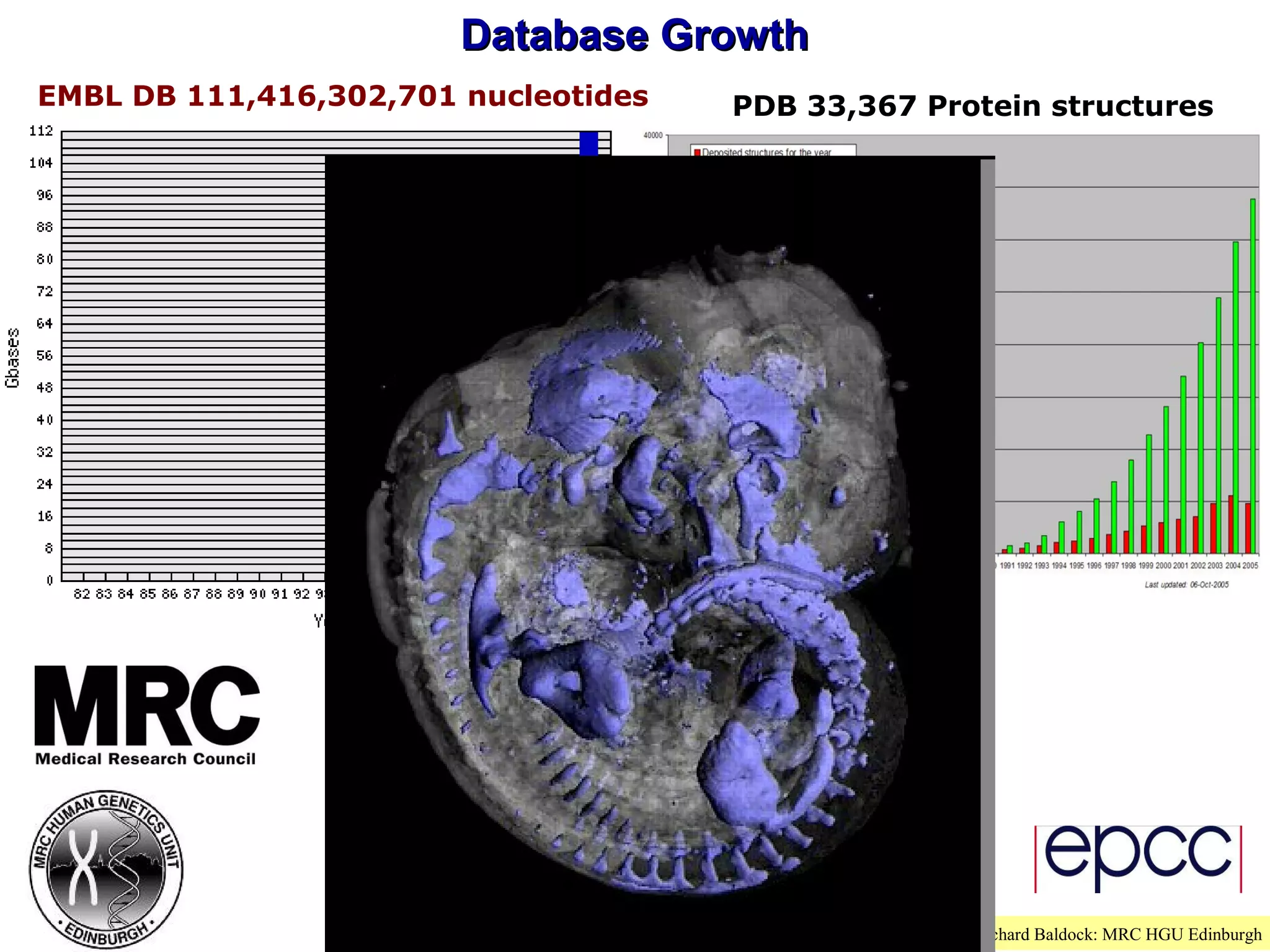

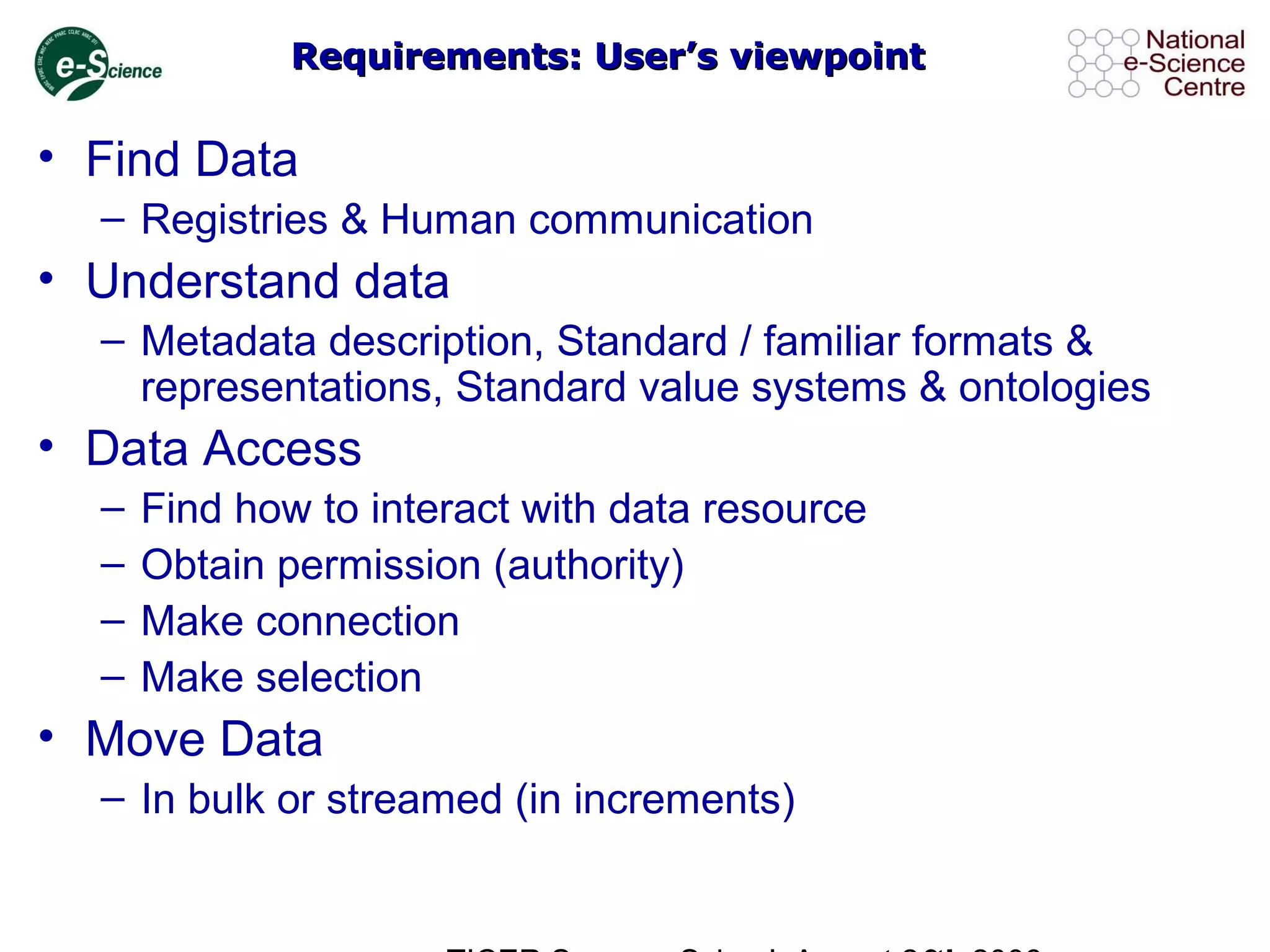

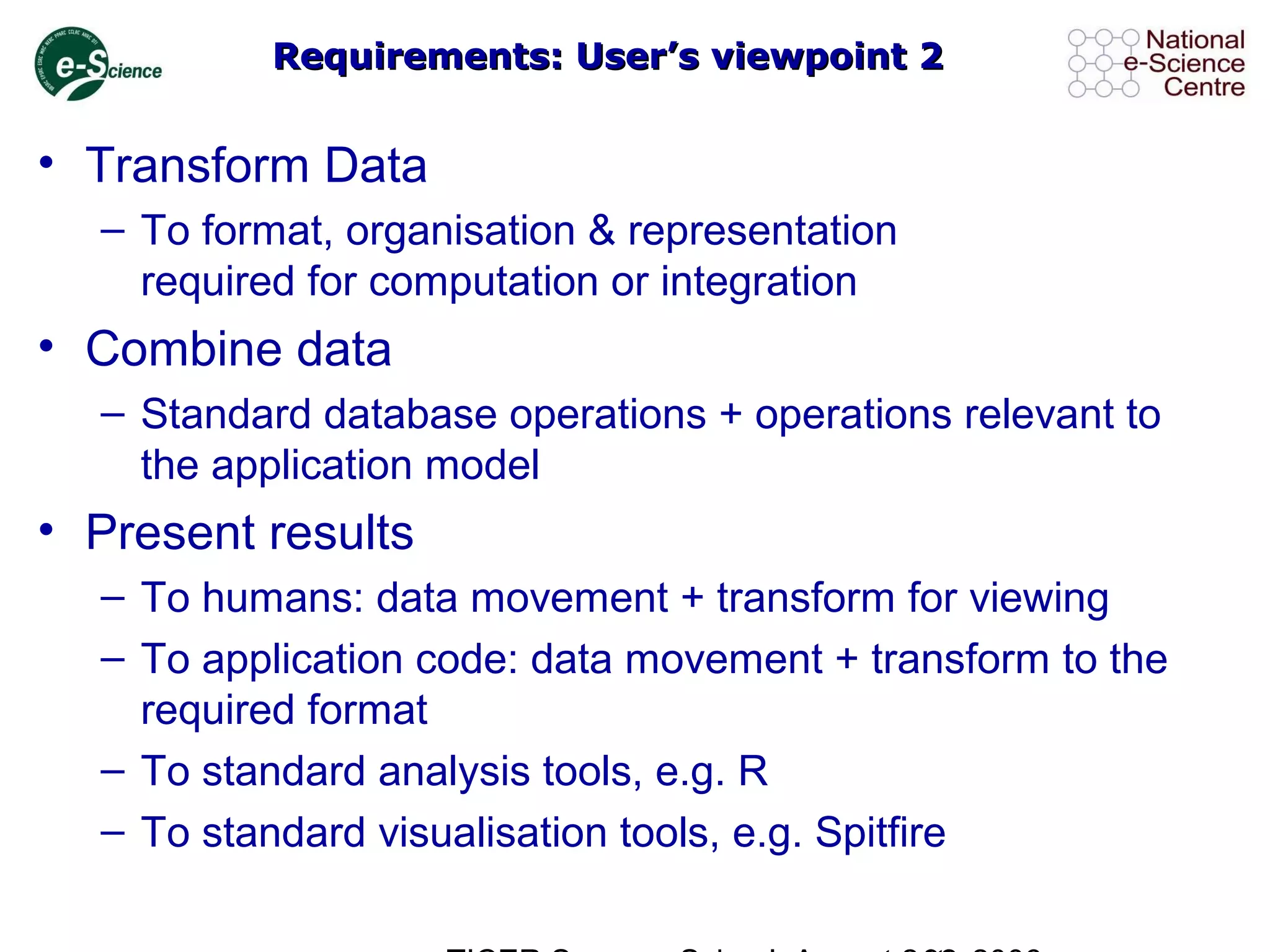

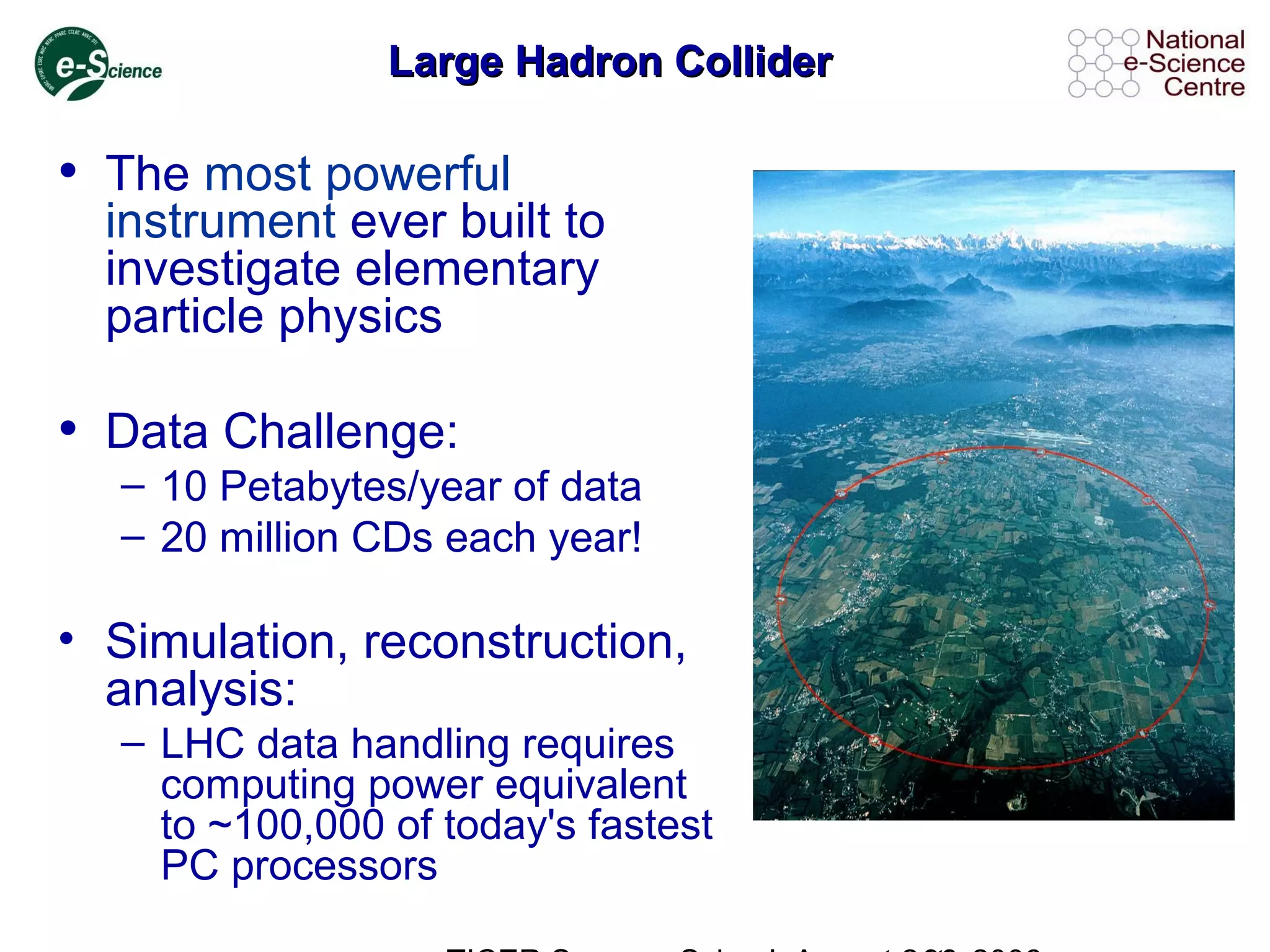

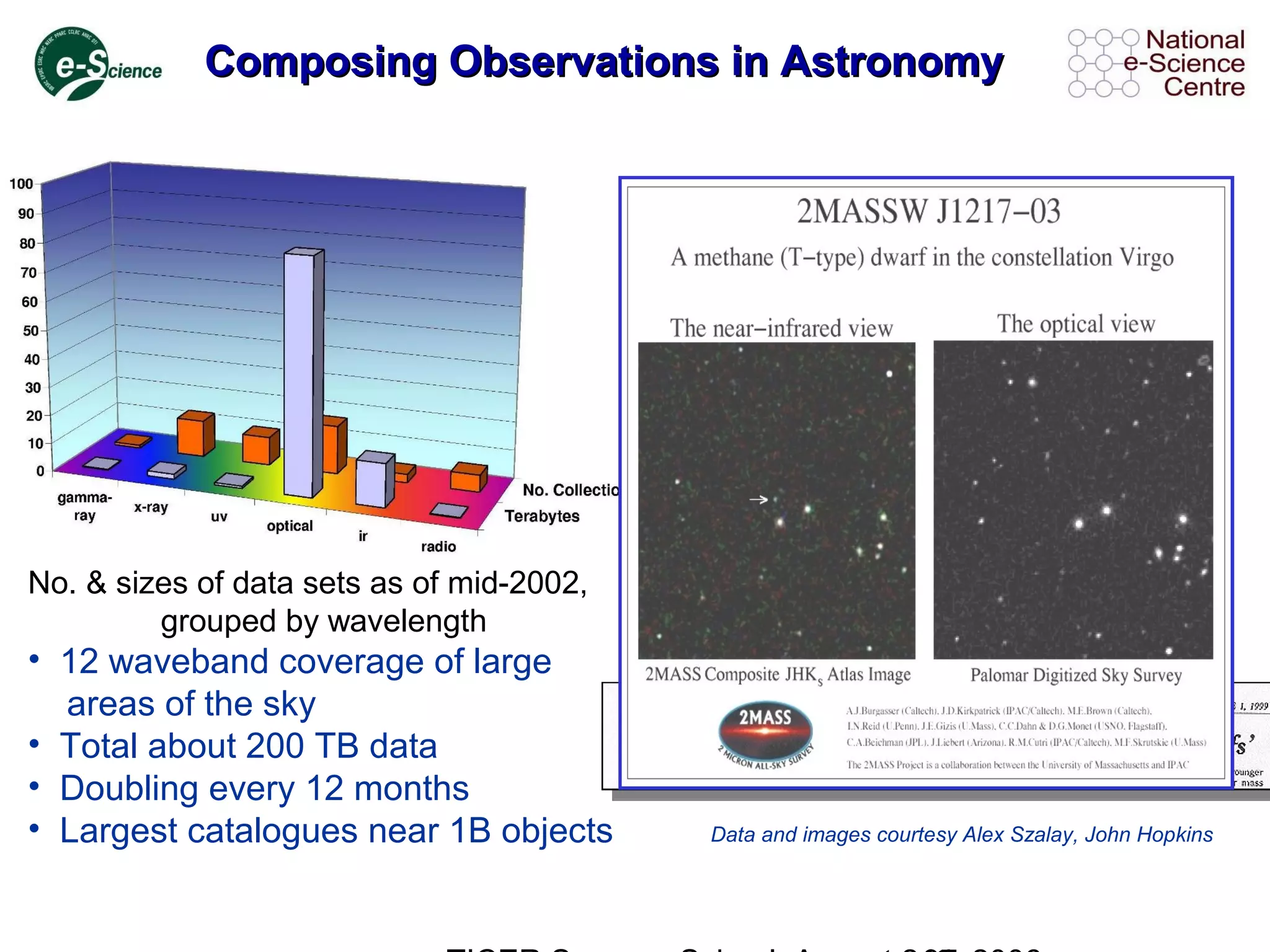

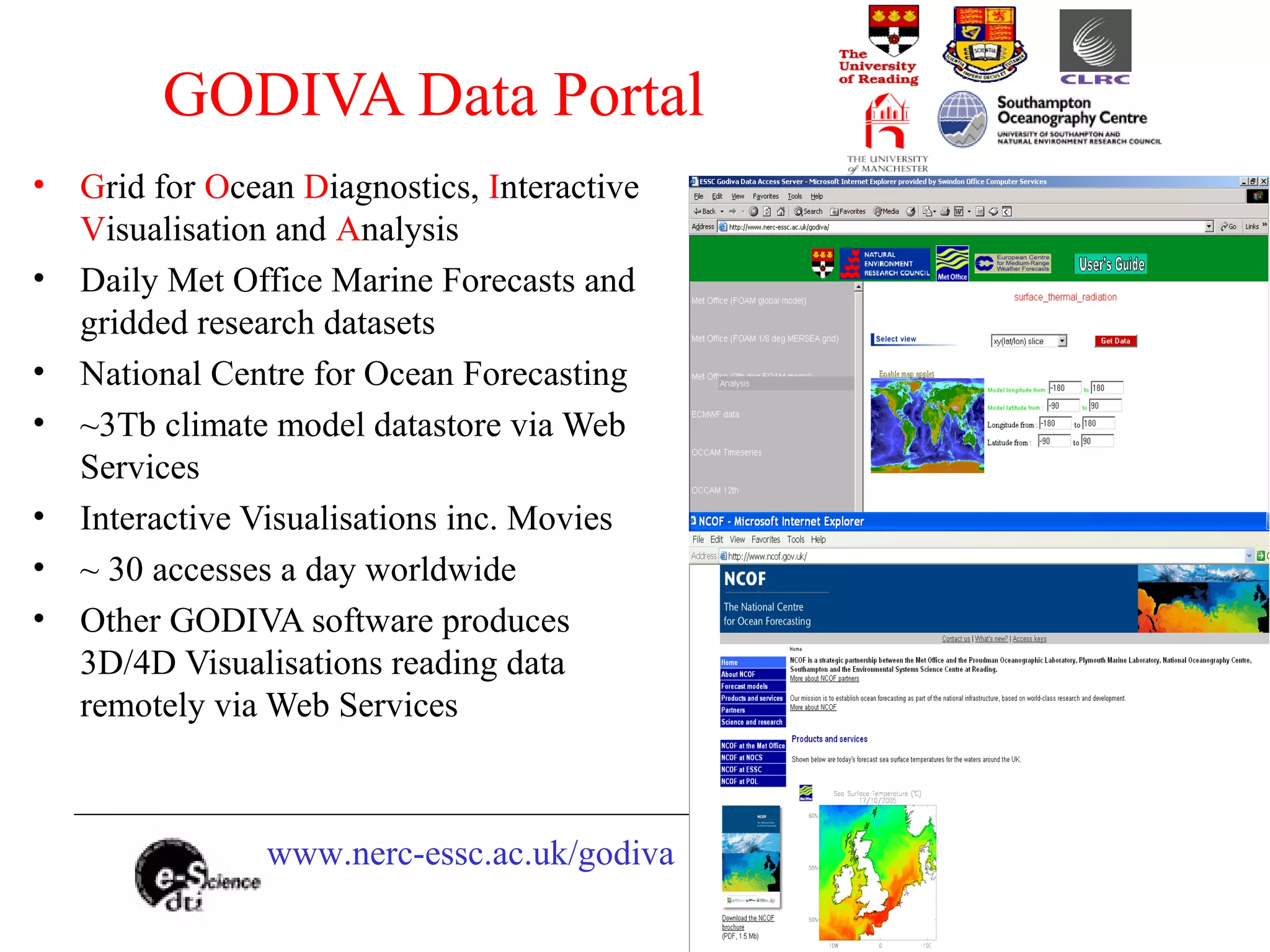

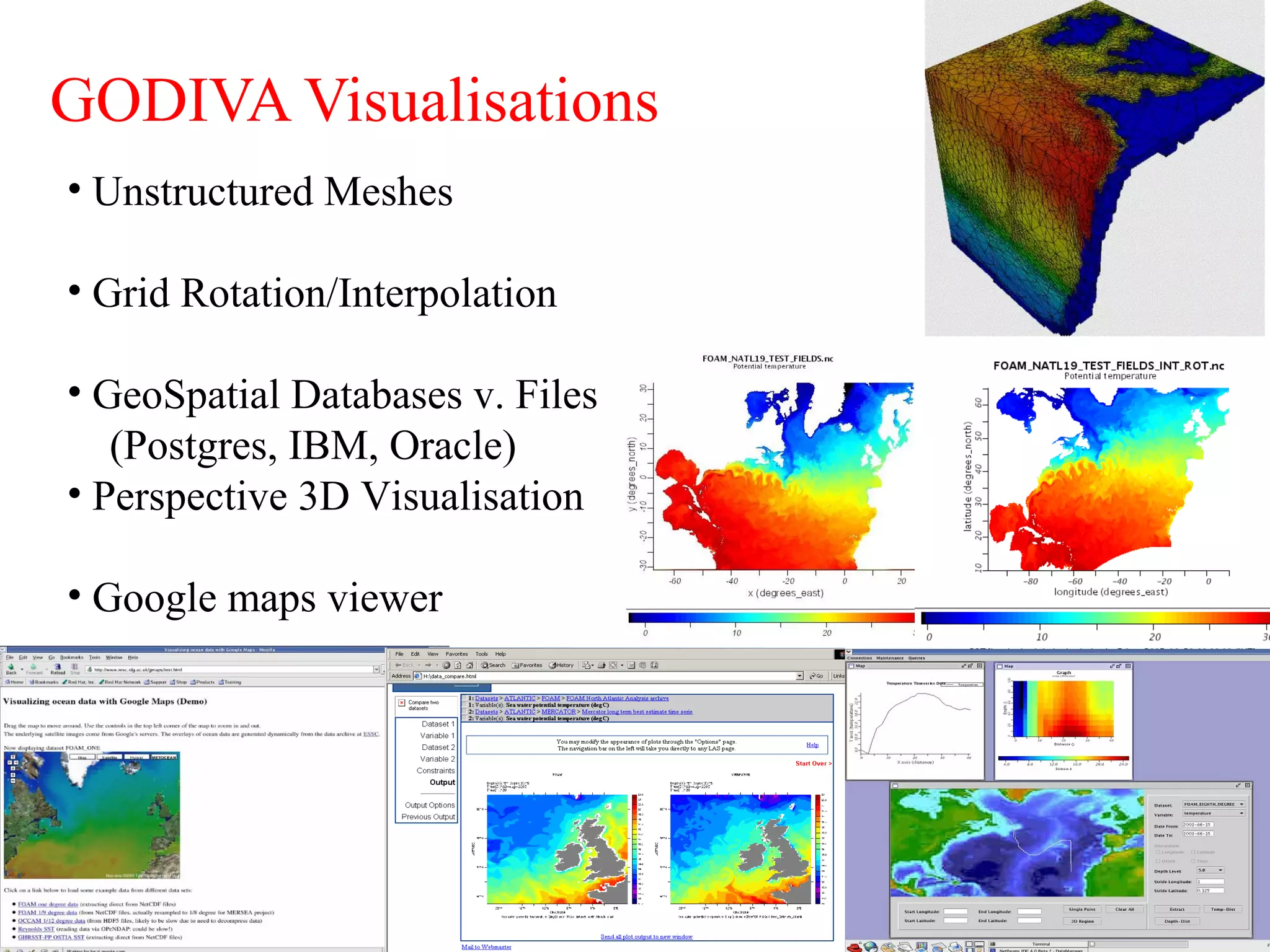

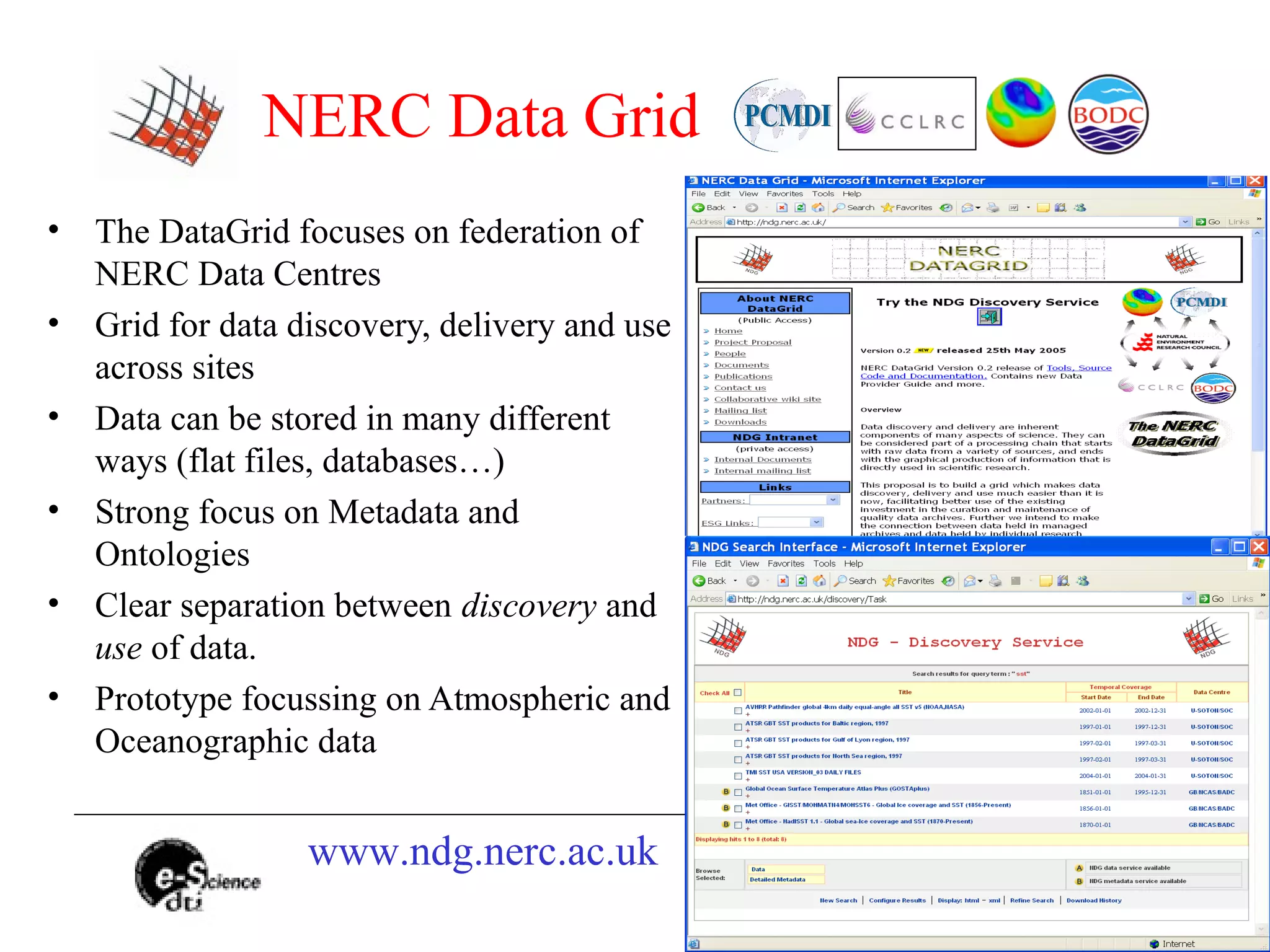

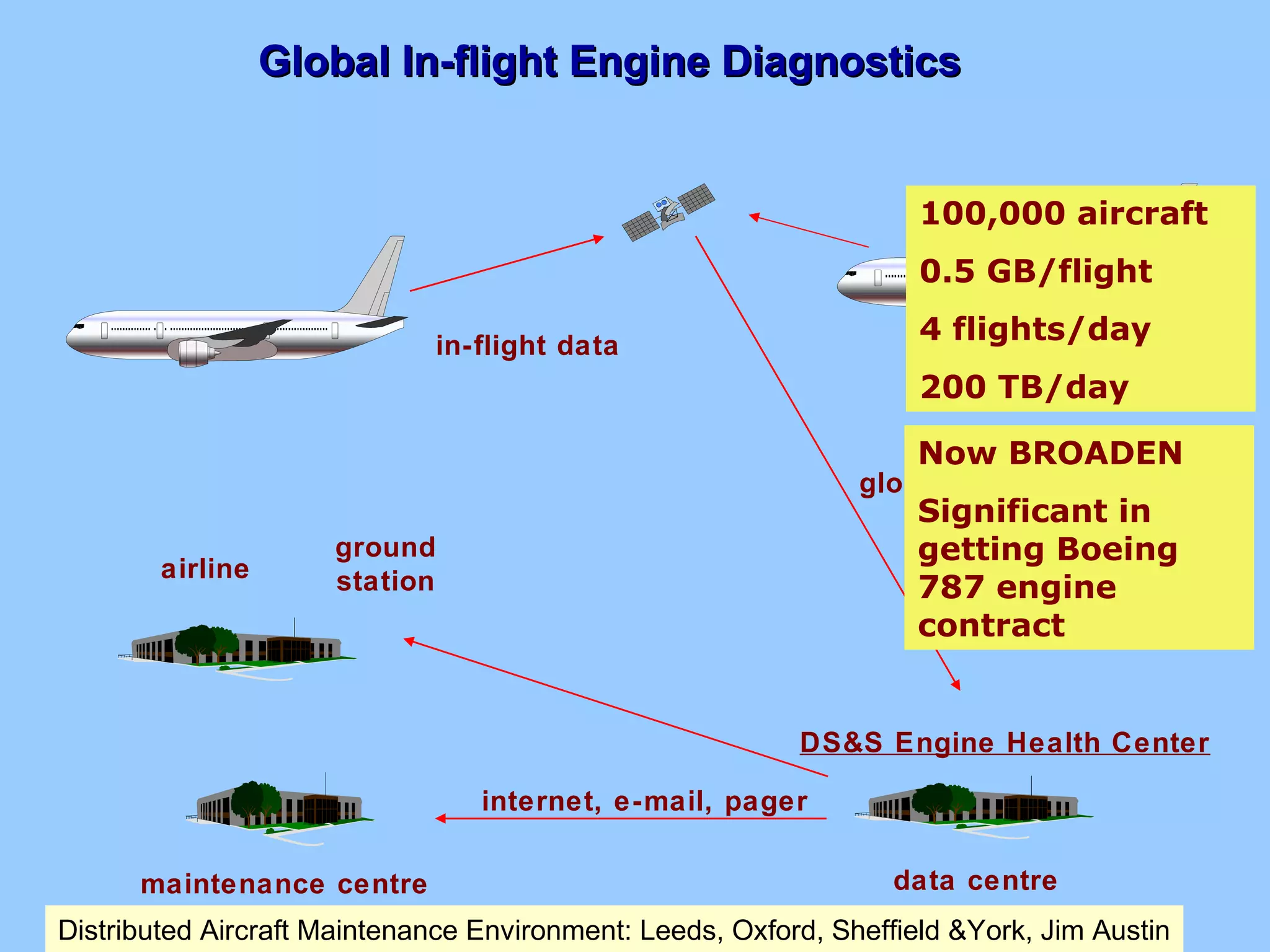

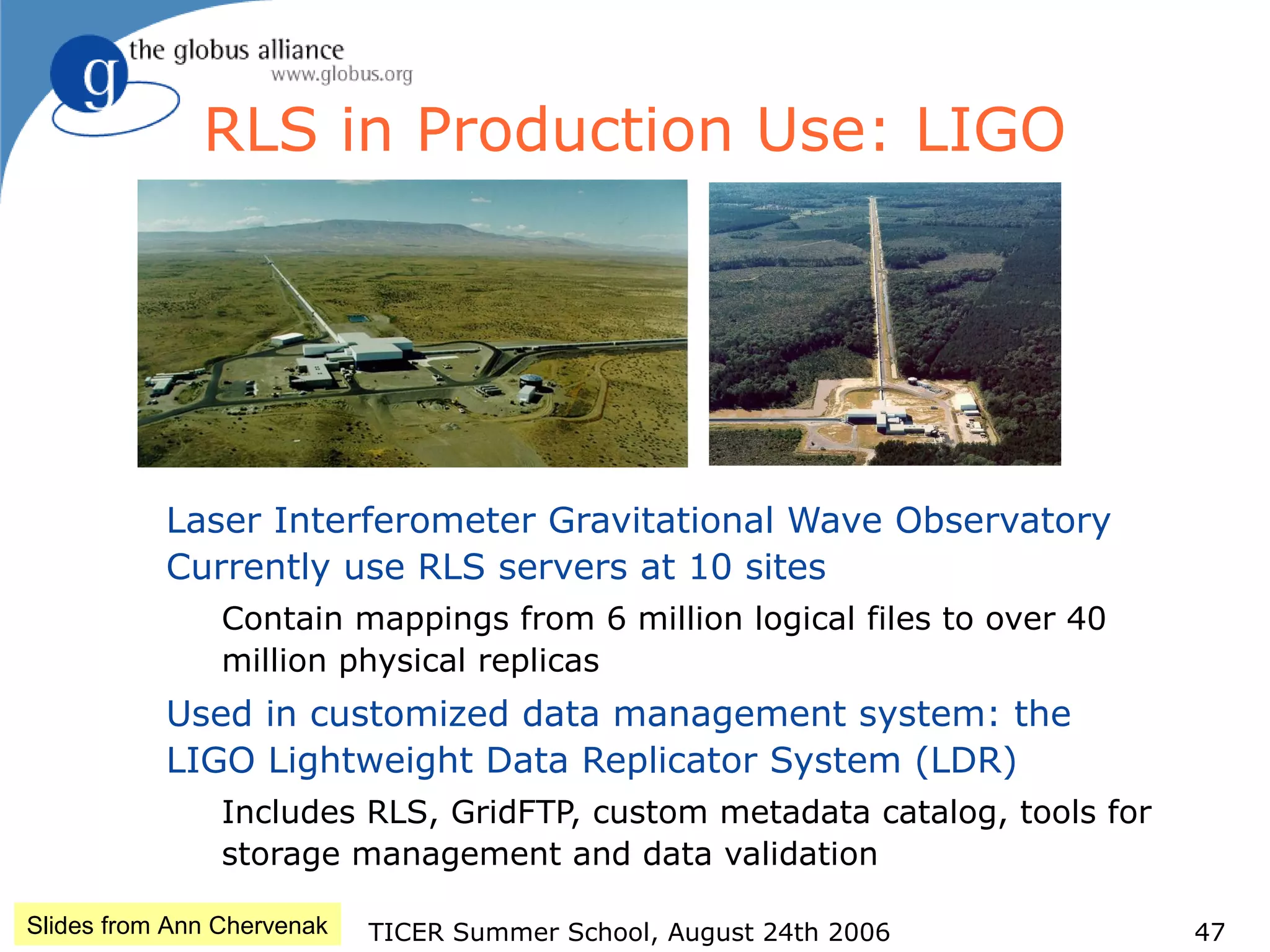

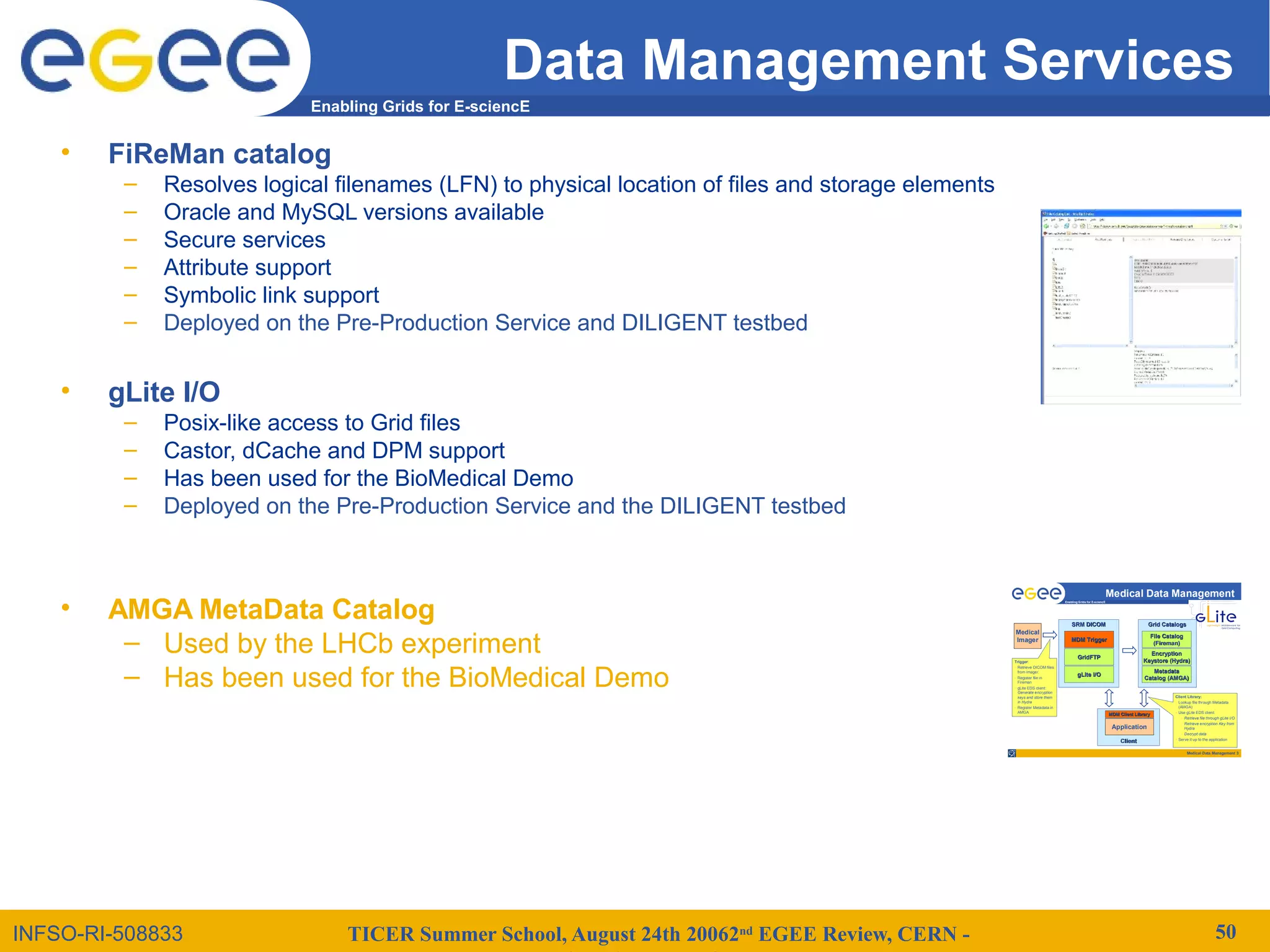

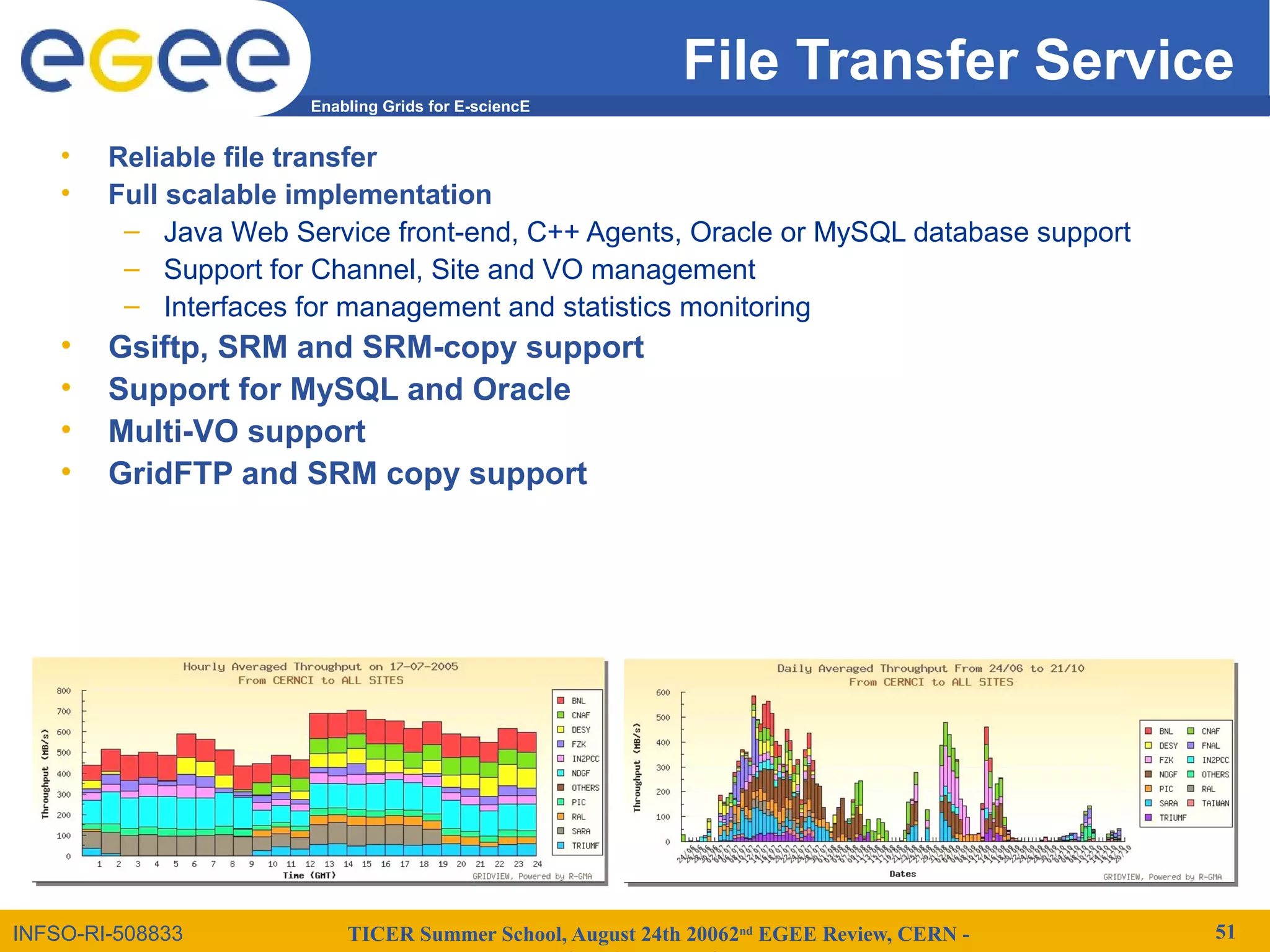

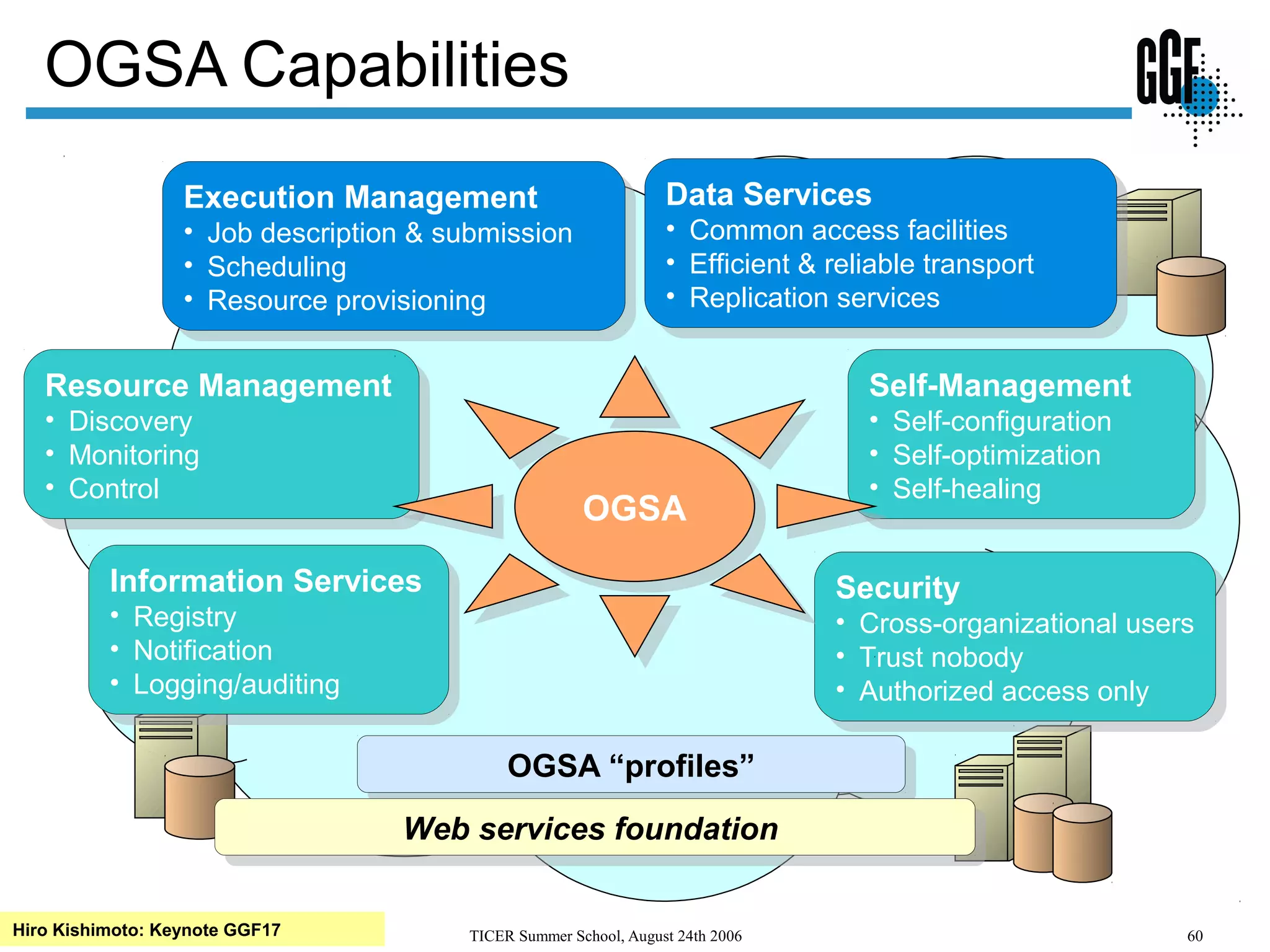

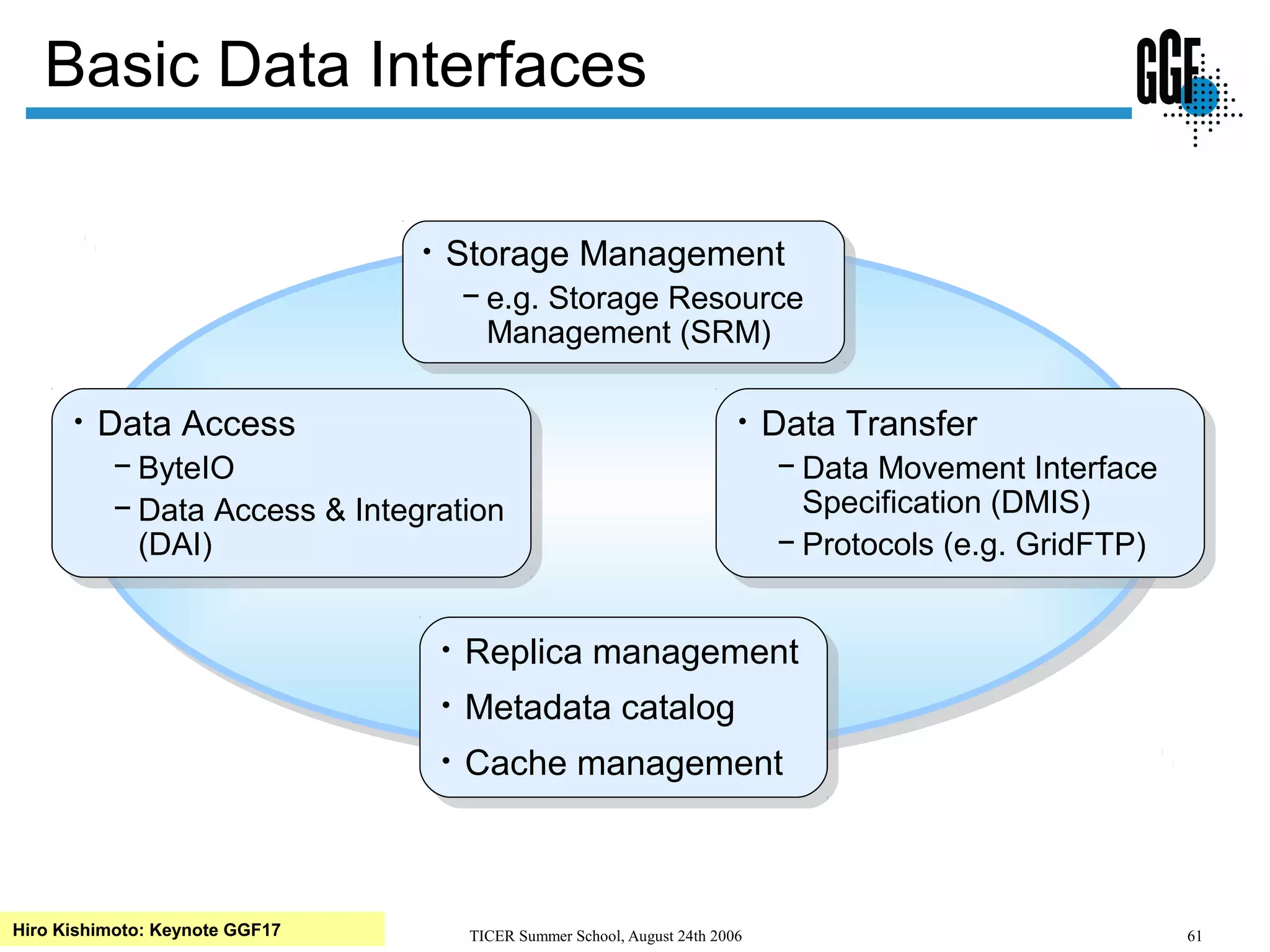

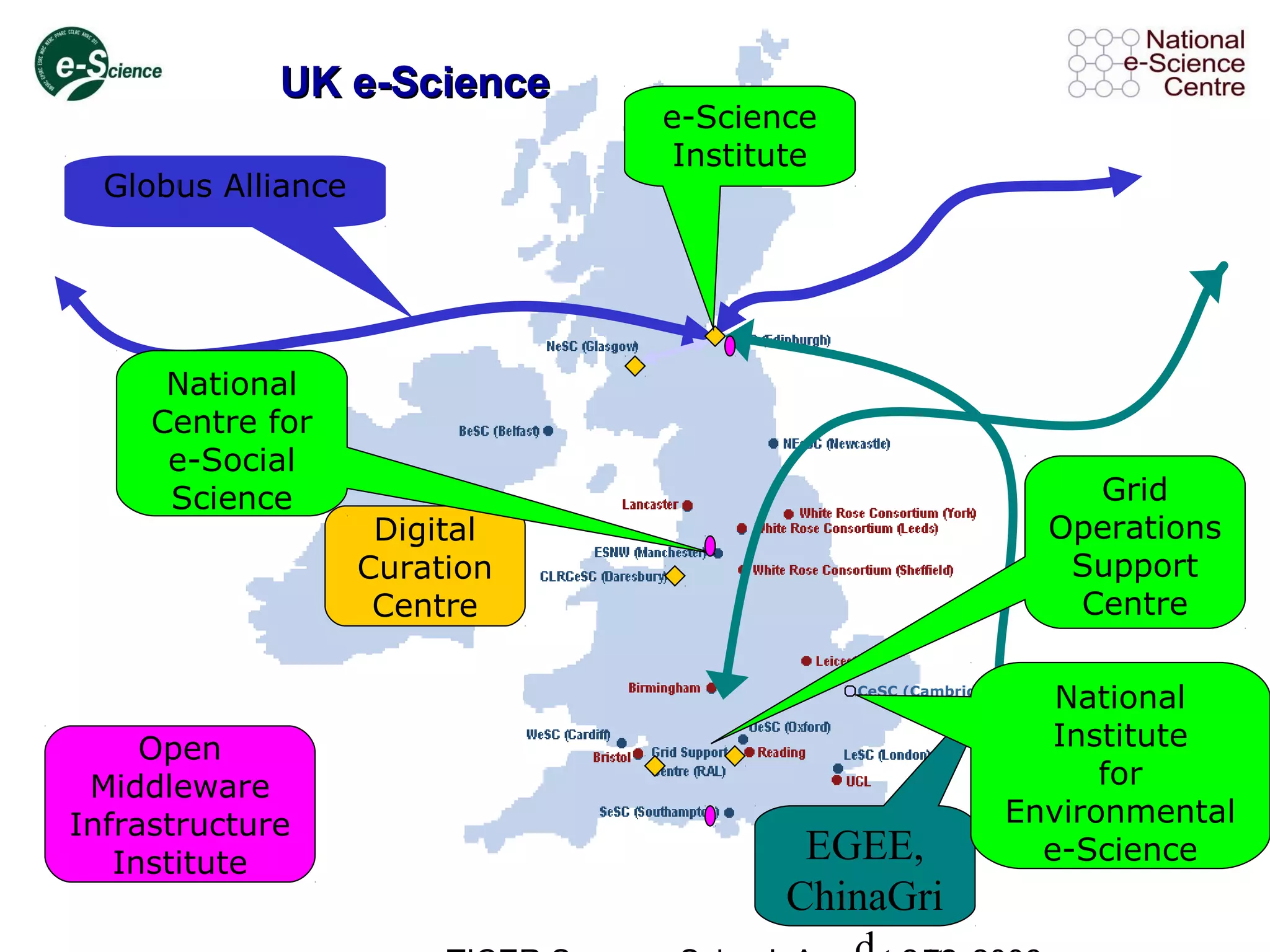

The document summarizes the Ticer Summer School held on August 24th, 2006. It covers digital libraries, grids, e-science, and data management, and gives examples of e-science projects that use grids for data-intensive applications in domains such as climate modeling, biomedicine, and high-energy physics. It also outlines the requirements of users and owners of data resources on grids.