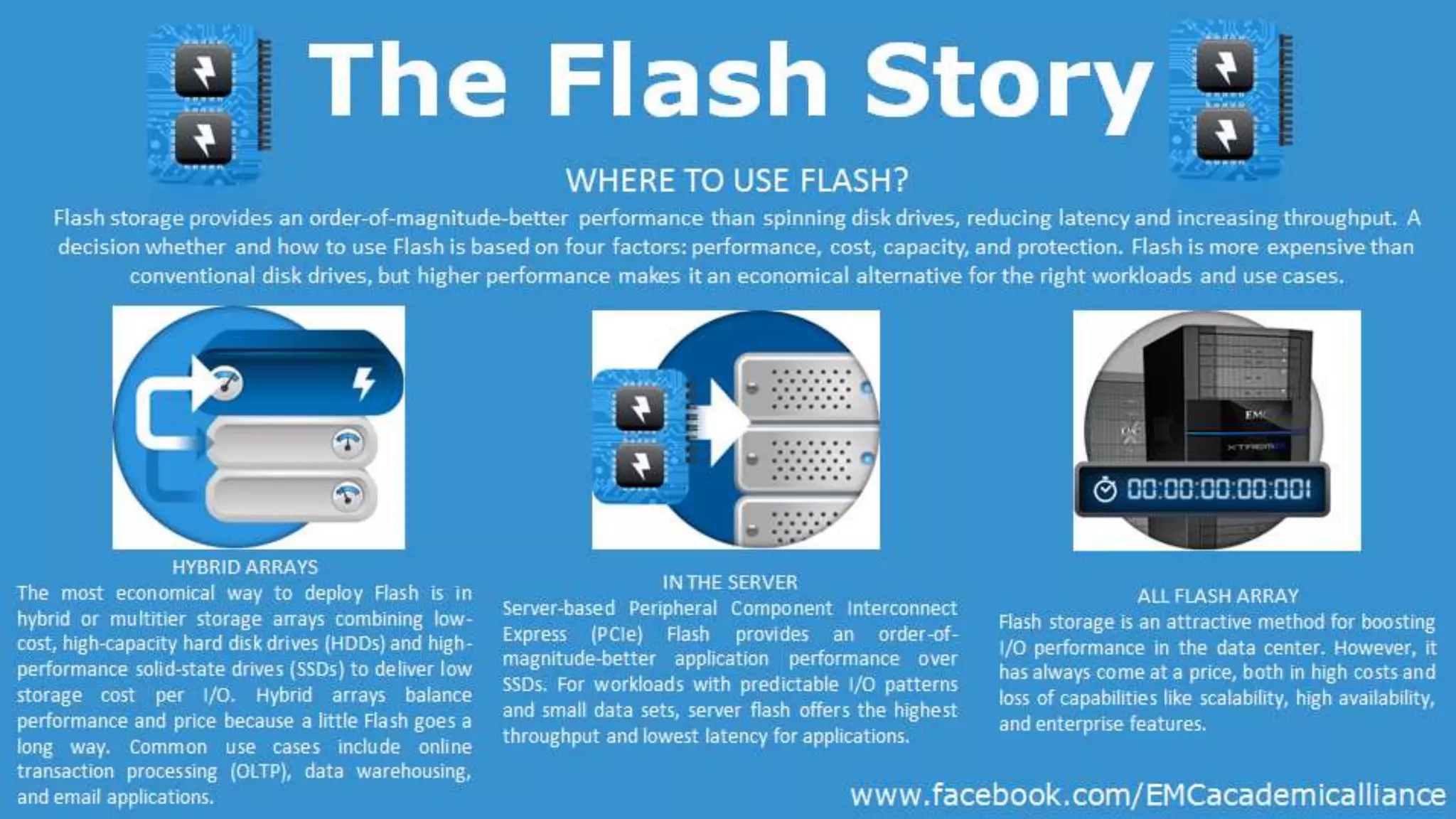

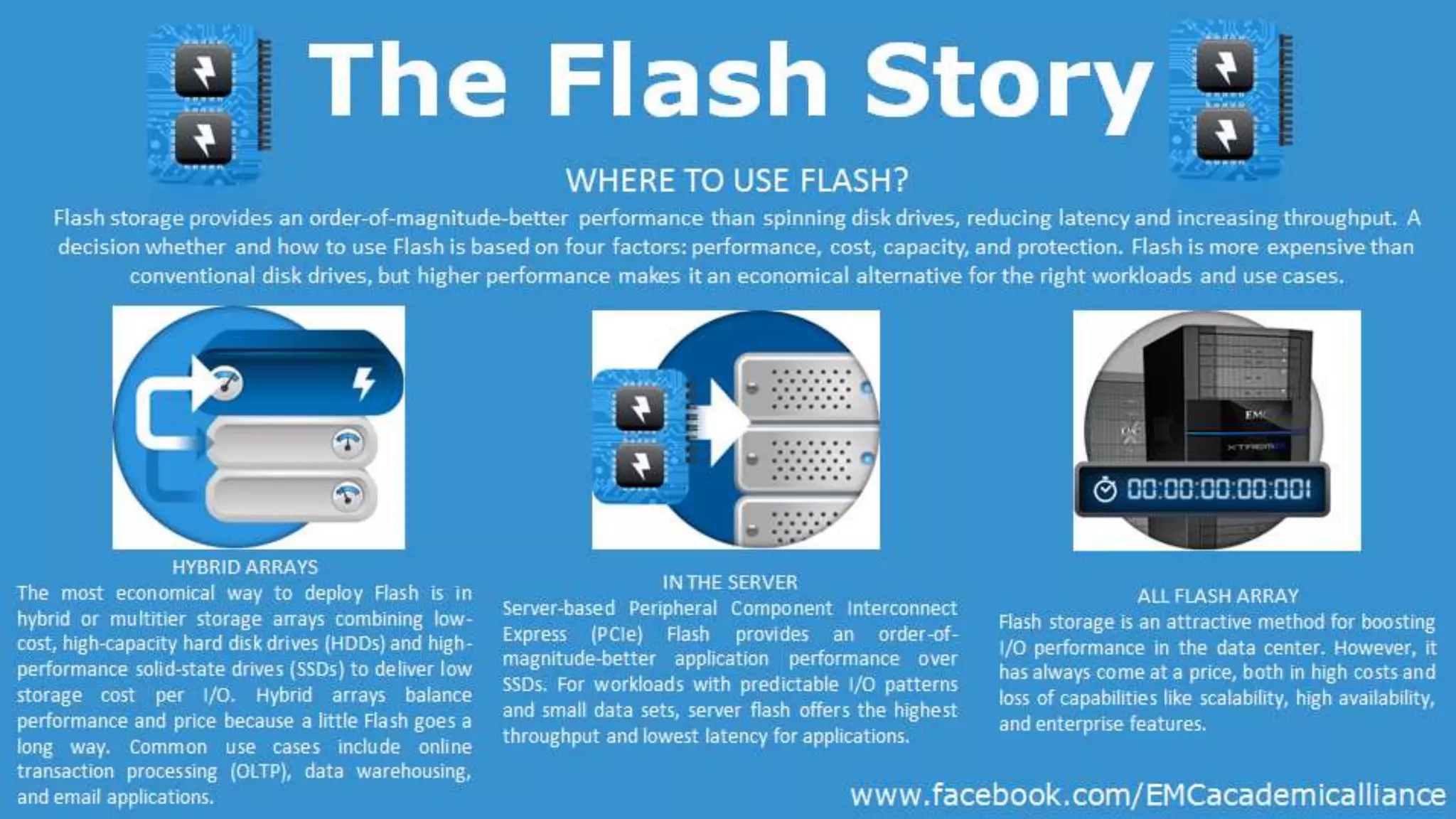

Flash storage delivers much lower latency and higher throughput than traditional spinning disk drives, but it is more expensive, so any deployment has to balance performance, cost, capacity, and data protection. The most economical way to use flash is a hybrid array that combines low-cost hard drives with high-performance solid-state drives, which optimizes the cost per I/O operation. Common workloads include online transaction processing and data warehousing. Server-based PCIe flash is another deployment option: it improves performance for predictable workloads, but it has limitations in scalability and enterprise features.
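
The cost-per-I/O argument for hybrid arrays can be illustrated with a little arithmetic. The sketch below is only a rough model: every price, IOPS figure, and capacity is a hypothetical placeholder rather than a number from the presentation, and the names `Tier` and `summarize` are invented for this example. It also assumes the workload is placed so that each tier delivers its full IOPS, which is an optimistic upper bound for a real hybrid array.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    drives: int
    cost_per_drive: float  # hypothetical price in USD
    iops_per_drive: float  # hypothetical random IOPS per drive
    tb_per_drive: float    # hypothetical usable capacity in TB


def summarize(tiers):
    """Aggregate cost, IOPS, and capacity for an array built from tiers."""
    cost = sum(t.drives * t.cost_per_drive for t in tiers)
    iops = sum(t.drives * t.iops_per_drive for t in tiers)
    tb = sum(t.drives * t.tb_per_drive for t in tiers)
    return {
        "cost": cost,
        "iops": iops,
        "tb": tb,
        "cost_per_iops": cost / iops,
        "cost_per_tb": cost / tb,
    }


# Three 24-drive configurations built from the same hypothetical parts.
all_hdd = summarize([Tier("hdd", 24, 200.0, 150.0, 4.0)])
all_ssd = summarize([Tier("ssd", 24, 1000.0, 50_000.0, 1.0)])
hybrid = summarize([
    Tier("hdd", 20, 200.0, 150.0, 4.0),
    Tier("ssd", 4, 1000.0, 50_000.0, 1.0),
])

for label, result in [("all HDD", all_hdd), ("all SSD", all_ssd), ("hybrid", hybrid)]:
    print(f"{label}: ${result['cost_per_iops']:.4f} per IOPS, "
          f"${result['cost_per_tb']:.2f} per TB")
```

Under these made-up inputs, the hybrid configuration lands far below the all-disk array on cost per IOPS and far below the all-flash array on cost per terabyte, which is the trade-off the summary describes. Real results depend heavily on how much of the active data actually fits in the flash tier.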