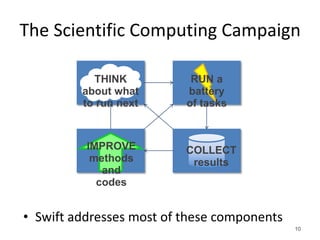

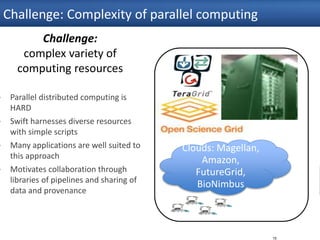

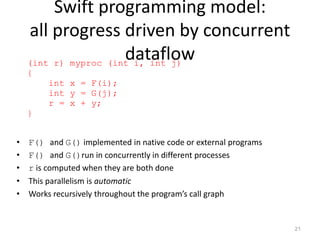

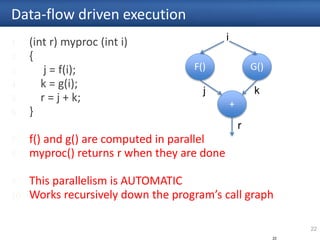

This presentation introduces Swift, a parallel scripting language for high-performance computing that runs large-scale data-processing workflows across a range of computing platforms. It covers the language's key features, its data types, and how it wraps existing applications into scientific workflows in fields such as neuroscience and biochemistry. It also highlights Swift's ease of use, its efficiency, and its growing adoption in the research community.

![Swift programming model

• Data types

int i = 4;

string s = "hello world";

file image<"snapshot.jpg">;

• Shell access

app (file o) myapp(file f, int i)

{ mysim "-s" i @f @o; }

• Structured data

typedef image file;

image A[];

type protein_run {

file pdb_in; file sim_out;

}

bag<blob>[] B;

Conventional expressions

if (x == 3) {

y = x+2;

s = strcat("y: ", y);

}

Parallel loops

foreach f,i in A {

B[i] =

convert(A[i]);

}

Data flow

merge(analyze(B[0],

B[1]),

analyze(B[2],

B[3]));

Swift: A language for distributed parallel scripting, J. Parallel Computing, 2011](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-24-320.jpg)

![Parallelism via foreach { }

type image;

(image output) flip(image input) {

app {

convert "-rotate" "180" @input @output;

}

}

image observations[ ] <simple_mapper; prefix="orion">;

image flipped[ ] <simple_mapper; prefix="flipped">;

foreach obs,i in observations {

flipped[i] = flip(obs);

}

Name outputs based on index

Process all dataset members in parallel

Map inputs from local directory](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-31-320.jpg)
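The `foreach` pattern on this slide can be approximated outside Swift. The following is a minimal Python analogy, not Swift code: `flip` here is a stand-in that only reverses a string instead of calling `convert`, and the input names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def flip(name):
    """Stand-in for the `convert -rotate 180` app call; it only reverses the string."""
    return name[::-1]


# Hypothetical input names; the slide maps real files with the prefix "orion".
observations = ["orion0", "orion1", "orion2"]

with ThreadPoolExecutor() as pool:
    # Each element is processed independently, like the body of `foreach obs,i`.
    results = list(pool.map(flip, observations))

# Outputs are named from the loop index, mirroring the "flipped" mapper prefix.
flipped = {f"flipped{i}": r for i, r in enumerate(results)}
print(flipped)
```

Because `pool.map` preserves input order, output `i` always corresponds to input `i`, which is what the index-based naming relies on.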

![foreach sim in [1:1000] {

(structure[sim], log[sim]) = predict(p, 100., 25.);

}

result = analyze(structure);

…

(Figure labels: 1000 runs of the "predict" application; Analyze(); T1af7, T1r69, T1b72)

Large scale parallelization with simple loops](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-32-320.jpg)

![Nested loops generate massive parallelism

Sweep( )

{

int nSim = 1000;

int maxRounds = 3;

Protein pSet[ ] <ext; exec="Protein.map">;

float startTemp[ ] = [ 100.0, 200.0 ];

float delT[ ] = [ 1.0, 1.5, 2.0, 5.0, 10.0 ];

foreach p, pn in pSet {

foreach t in startTemp {

foreach d in delT {

ItFix(p, nSim, maxRounds, t, d);

}

}

}

}

10 proteins x 1000 simulations x

3 rounds x 2 temps x 5 deltas

= 300K tasks](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-33-320.jpg)
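The task count on this slide follows directly from the loop bounds. The sketch below redoes the arithmetic in Python; the protein count of 10 is taken from the slide's tally rather than from `Protein.map`, and the assumption that each `ItFix` call expands to `nSim` simulations per round is inferred from that same tally.

```python
from itertools import product

proteins = range(10)                      # assumed: 10 entries in pSet, per the slide's tally
start_temps = [100.0, 200.0]
del_ts = [1.0, 1.5, 2.0, 5.0, 10.0]
n_sim, max_rounds = 1000, 3

# One ItFix call per (protein, start temperature, delta) combination.
itfix_calls = list(product(proteins, start_temps, del_ts))

# Each call is counted as n_sim simulations in each of max_rounds rounds.
total_tasks = len(itfix_calls) * n_sim * max_rounds
print(len(itfix_calls), total_tasks)
```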

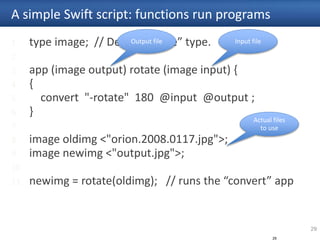

![Complex parallel workflows can be easily expressed

Example fMRI preprocessing script below is automatically parallelized

(Run snr) functional ( Run r, NormAnat a,

Air shrink )

{ Run yroRun = reorientRun( r , "y" );

Run roRun = reorientRun( yroRun , "x" );

Volume std = roRun[0];

Run rndr = random_select( roRun, 0.1 );

AirVector rndAirVec = align_linearRun( rndr, std, 12, 1000, 1000, "81 3 3" );

Run reslicedRndr = resliceRun( rndr, rndAirVec, "o", "k" );

Volume meanRand = softmean( reslicedRndr, "y", "null" );

Air mnQAAir = alignlinear( a.nHires, meanRand, 6, 1000, 4, "81 3 3" );

Warp boldNormWarp = combinewarp( shrink, a.aWarp, mnQAAir );

Run nr = reslice_warp_run( boldNormWarp, roRun );

Volume meanAll = strictmean( nr, "y", "null" );

Volume boldMask = binarize( meanAll, "y" );

snr = gsmoothRun( nr, boldMask, "6 6 6" );

}

(Run or) reorientRun (Run ir,

string direction) {

foreach Volume iv, i in ir.v {

or.v[i] = reorient(iv, direction);

}

}](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-35-320.jpg)

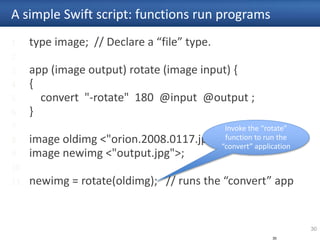

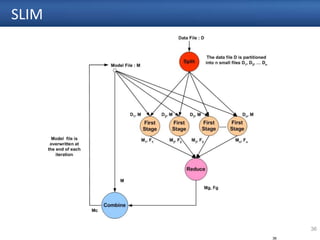

![SLIM: Swift sketch

type DataFile;

type ModelFile;

type FloatFile;

// Function declaration

app (DataFile[] dFiles) split(DataFile inputFile, int noOfPartition) {

}

app (ModelFile newModel, FloatFile newParameter) firstStage(DataFile data, ModelFile model) {

}

app (ModelFile newModel, FloatFile newParameter) reduce(ModelFile[] model, FloatFile[]

parameter){

}

app (ModelFile newModel) combine(ModelFile reducedModel, FloatFile reducedParameter,

ModelFile oldModel){

}

app (int terminate) check(ModelFile finalModel){

}](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-37-320.jpg)

![SLIM: Swift sketch

// Variables to hold the input data

DataFile inputData <"MyData.dat">;

ModelFile earthModel <"MyModel.mdl">;

// Variables to hold the finalized models

ModelFile model[];

// Variables for reduce stage

ModelFile reducedModel[];

FloatFile reducedFloatParameter[];

// Get the number of partition from command line parameter

int n = @toint(@arg("n","1"));

model[0] = earthModel;

//Partition the input data

DataFile partitionedDataFiles[] = split(inputData, n);](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-38-320.jpg)

![SLIM: Swift sketch

// Iterate sequentially until the terminal condition is met

iterate v {

// Variables to hold the output of the first stage

ModelFile firstStageModel[] < simple_mapper; location = "output",

prefix = @strcat(v, "_"), suffix = ".mdl" >;

FloatFile firstStageFloatParameter[] < simple_mapper; padding = 3, location = "output",

prefix = @strcat(v, "_"), suffix = ".float" >;

// Parallel for loop

foreach partitionedFile, count in partitionedDataFiles {

// First stage

(firstStageModel[count], firstStageFloatParameter[count]) = firstStage(partitionedFile, model[v]);

}

// Reduce stage (use the files for synchronization)

(reducedModel[v], reducedFloatParameter[v]) = reduce(firstStageModel, firstStageFloatParameter);

// Combine stage

model[v+1] = combine(reducedModel[v] ,reducedFloatParameter[v], model[v]);

//Check the termination condition here

int shouldTerminate = check(model[v+1]);

} until (shouldTerminate != 1);](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-39-320.jpg)
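The three SLIM slides describe an iterative loop whose body is a parallel map over partitions, followed by a reduce, a combine, and a termination check. The sketch below shows that control structure in Python. Every function body is a hypothetical numeric stand-in (the model is a single float and the update rule is invented for illustration); only the shape of the loop is taken from the slides.

```python
from concurrent.futures import ThreadPoolExecutor


def first_stage(part, model):
    """Hypothetical per-partition step: mean offset of the partition from the model."""
    return sum(x - model for x in part) / len(part)


def reduce_stage(updates):
    """Hypothetical reduce: average the per-partition updates."""
    return sum(updates) / len(updates)


def combine(reduced, old_model):
    """Hypothetical combine: move the model halfway toward the reduced update."""
    return old_model + 0.5 * reduced


def check(new_model, old_model, tol=1e-6):
    """Hypothetical termination test: stop once the model barely changes."""
    return abs(new_model - old_model) < tol


partitions = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]   # stands in for split(inputData, n)
model = [0.0]                                        # model[0] is the starting model

with ThreadPoolExecutor() as pool:
    v = 0
    while True:
        # Parallel loop over partitions (the `foreach` in the slide).
        updates = list(pool.map(lambda part: first_stage(part, model[v]), partitions))
        # Reduce and combine run only after every partition has finished.
        model.append(combine(reduce_stage(updates), model[v]))
        if check(model[v + 1], model[v]):
            break
        v += 1

print(len(model), model[-1])
```

The outer loop is sequential because iteration `v + 1` needs `model[v + 1]`, while the inner map is free to run all partitions at once.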

![Fully parallel evaluation of complex scripts

int X = 100, Y = 100;

int A[][];

int B[];

foreach x in [0:X-1] {

foreach y in [0:Y-1] {

if (check(x, y)) {

A[x][y] = g(f(x), f(y));

} else {

A[x][y] = 0;

}

}

B[x] = sum(A[x]);

}](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-46-320.jpg)

![Example distributed execution

• Code

A[2] = f(getenv("N"));

A[3] = g(A[2]);

• Evaluate dataflow operations

• Workers: execute tasks

• Perform getenv()

• Submit f

• Process f

• Store A[2]

• Subscribe to A[2]

• Submit g

• Process g

• Store A[3]

Task put Task put

• Wozniak et al. Turbine: A distributed-memory dataflow engine for high performance many-task applications. Fundamenta Informaticae 128(3), 2013

Task get Task get](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-51-320.jpg)
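The dependency between the two statements on this slide can be imitated with futures. This is a loose Python analogy of the dataflow, not a model of Turbine itself: `f` and `g` are placeholder functions, the value of `N` is assumed, and a thread pool stands in for the workers.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def f(n):
    return n * 2          # placeholder for the real task f


def g(a2):
    return a2 + 1         # placeholder for the real task g


os.environ["N"] = "20"    # assumed value so the sketch runs standalone

A = {}
with ThreadPoolExecutor(max_workers=2) as workers:
    n = int(os.environ["N"])                 # "Perform getenv()"
    A[2] = workers.submit(f, n)              # "Submit f"
    # g is submitted immediately but blocks until A[2] is stored,
    # loosely mirroring "Subscribe to A[2]" followed by "Submit g".
    A[3] = workers.submit(lambda: g(A[2].result()))
    print(A[2].result(), A[3].result())
```

A real dataflow engine would not occupy a worker while waiting; it would release `g` only once `A[2]` is stored. The blocking call is a simplification.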

![Swift code in dataflow

x = g();

if (x > 0) {

n = f(x);

foreach i in [0:n-1] {

output(p(i));

}}

• Dataflow definitions create nodes in the dataflow graph

• Dataflow assignments create edges

• In typical (DAG) workflow languages, this forms a static graph

• In Swift, the graph can grow dynamically: code fragments are evaluated (conditionally) as a result of dataflow

• In its early implementation, these fragments were just tasks

(Diagram labels: x = g(); x; n; foreach i … { output(p(i)); if (x > 0) { n = f(x); …)](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-56-320.jpg)
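The point about a dynamically growing graph can be seen in a small Python analogy: the number of `p(i)` tasks is unknown until `f(x)` has produced `n`, so those tasks cannot be laid out in a static DAG ahead of time. The bodies of `g`, `f`, and `p` are hypothetical placeholders.

```python
from concurrent.futures import ThreadPoolExecutor


def g():
    return 3              # placeholder: value only known at run time


def f(x):
    return x + 1          # placeholder: decides how many tasks follow


def p(i):
    return i * i          # placeholder for the per-iteration task


outputs = []
with ThreadPoolExecutor() as pool:
    x = pool.submit(g).result()
    if x > 0:                                   # branch taken only if the data says so
        n = pool.submit(f, x).result()          # n is discovered during execution
        futures = [pool.submit(p, i) for i in range(n)]   # graph grows by n tasks
        outputs = [fut.result() for fut in futures]

print(outputs)
```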

![LAMMPS parallel tasks

• LAMMPS provides a convenient C++ API

• Easily used by Swift/T parallel tasks

foreach i in [0:20] {

t = 300+i;

sed_command = sprintf("s/_TEMPERATURE_/%i/g", t);

lammps_file_name = sprintf("input-%i.inp", t);

lammps_args = "-i " + lammps_file_name;

file lammps_input<lammps_file_name> =

sed(filter, sed_command) =>

@par=8 lammps(lammps_args);

}

Tasks with varying sizes packed into big MPI run

Black: Compute Blue: Message White: Idle](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-61-320.jpg)

![Swift for Really Parallel Builds

Apps

app (object_file o) gcc(c_file c, string cflags[]) {

// Example:

// gcc -c -O2 -o f.o f.c

"gcc" "-c" cflags "-o" o c;

}

app (x_file x) ld(object_file o[], string ldflags[]) {

// Example:

// gcc -o f.x f1.o f2.o ...

"gcc" ldflags "-o" x o;

}

app (output_file o) run(x_file x) {

"sh" "-c" x @stdout=o;

}

app (timing_file t) extract(output_file o) {

"tail" "-1" o "|" "cut" "-f" "2" "-d" " " @stdout=t;

}

Swift code

string program_name = "programs/program1.c";

c_file c = input(program_name);

// For each

foreach O_level in [0:3] {

make file names…

// Construct compiler flags

string O_flag = sprintf("-O%i", O_level);

string cflags[] = [ "-fPIC", O_flag ];

object_file o<my_object> = gcc(c, cflags);

object_file objects[] = [ o ];

string ldflags[] = [];

// Link the program

x_file x<my_executable> = ld(objects, ldflags);

// Run the program

output_file out<my_output> = run(x);

// Extract the run time from the program output

timing_file t<my_time> = extract(out);

](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-65-320.jpg)

![Abstract, extensible MapReduce in Swift

main {

file d[];

int N = string2int(argv("N"));

// Map phase

foreach i in [0:N-1] {

file a = find_file(i);

d[i] = map_function(a);

}

// Reduce phase

file final <"final.data"> = merge(d, 0, tasks-1);

}

(file o) merge(file d[], int start, int stop) {

if (stop-start == 1) {

// Base case: merge pair

o = merge_pair(d[start], d[stop]);

} else {

// Merge pair of recursive calls

n = stop-start;

s = n % 2;

o = merge_pair(merge(d, start, start+s),

merge(d, start+s+1, stop));

}}

• User needs to implement map_function() and merg

• These may be implemented in native code, Python, etc.

• Could add annotations

• Could add additional custom application logic](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-66-320.jpg)
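The map phase followed by a recursive pairwise merge can be sketched in Python as follows. This is an illustration rather than a translation of the slide: the split point is the midpoint of the range (an assumption chosen so the recursion is balanced), the base case is a single element, and `map_function` and `merge_pair` are hypothetical stand-ins for the user-supplied functions.

```python
from concurrent.futures import ThreadPoolExecutor


def map_function(i):
    """Hypothetical map step: produce a one-element sorted list."""
    return [(i * 7) % 5]


def merge_pair(a, b):
    """Hypothetical pairwise reduce step: merge two sorted lists."""
    return sorted(a + b)


def merge(d, start, stop):
    """Recursively merge d[start..stop] (inclusive) as a balanced tree."""
    if start == stop:
        return d[start]
    mid = (start + stop) // 2
    return merge_pair(merge(d, start, mid), merge(d, mid + 1, stop))


N = 5
with ThreadPoolExecutor() as pool:
    d = list(pool.map(map_function, range(N)))   # map phase, run in parallel

final = merge(d, 0, N - 1)                        # reduce phase
print(final)
```

The recursion here runs sequentially. In a dataflow setting the two recursive calls have no dependency on each other, so they could be evaluated concurrently.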

![CCTW: Swift/T application (C++)

bag<blob> M[];

foreach i in [1:n] {

blob b1 = cctw_input("pznpt.nxs");

blob b2[];

int outputId[];

(outputId, b2) = cctw_transform(i, b1);

foreach b, j in b2 {

int slot = outputId[j];

M[slot] += b;

}}

foreach g in M {

blob b = cctw_merge(g);

cctw_write(b);

}}

](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-69-320.jpg)

![Swift/T: Makefile example: 1

app (object_file o) gcc(c_file c, string cflags[])

{

// gcc -c -O2 -o f.o f.c

"gcc" "-c" cflags "-o" o c;

}

app (x_file x) ld(object_file o[], string ldflags[])

{

// gcc -o f.x f1.o f2.o ...

"gcc" ldflags "-o" x o;

}

app (output_file o) run(x_file x)

{

"sh" "-c" x @stdout=o;

}

www.ci.uchicago.edu/swift

www.mcs.anl.gov/exm](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-71-320.jpg)

![Swift/T: Makefile example: 2

// For each

foreach O_level in [0:3]

{

// Construct file names

string my_object =

sprintf("programs/program1-O%i.o", O_level);

. . .

string O_flag = sprintf("-O%i", O_level);

string cflags[] = [ "-fPIC", O_flag ];

object_file o<my_object> = gcc(c, cflags);

object_file objects[] = [ o ];

string ldflags[] = [];

x_file x<my_executable> = ld(objects, ldflags);

](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-72-320.jpg)

![Foreach example

type file;

app (file o) simulate_app (){

simulate stdout=filename(o);}

foreach i in [1:10] {

string fname=strcat("output/sim_", i, ".out");

file f <single_file_mapper; file=fname>;

f = simulate_app();

}](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-75-320.jpg)

![Multiple Apps

type file;

app (file o) simulate_app (int time){

simulate "-t" time stdout=filename(o);

}

app (file o) stats_app (file s[]){

stats filenames(s) stdout=filename(o);

}

file sims[];

int time = 5;

int nsims = 10;

foreach i in [1:nsims] {

string fname = strcat("output/sim_",i,".out");

file simout <single_file_mapper; file=fname>;

simout = simulate_app(time); sims[i] = simout;

}

file average <"output/average.out">;

average = stats_app(sims);](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-76-320.jpg)
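The two-app pattern on this slide, many independent simulations followed by one aggregation step, looks like this as a Python analogy. The bodies of `simulate_app` and `stats_app` are hypothetical: the real apps are external programs writing files, whereas here a simulation returns one random value per timestep and is seeded by its index so runs are repeatable.

```python
import random
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def simulate_app(time, seed):
    """Hypothetical simulation: one random value in [0, 100] per timestep."""
    rng = random.Random(seed)
    return [rng.randint(0, 100) for _ in range(time)]


def stats_app(sim_outputs):
    """Hypothetical stats step: average every value across all simulations."""
    return mean(v for out in sim_outputs for v in out)


time = 5
nsims = 10

with ThreadPoolExecutor() as pool:
    # Fan out: the simulations are independent, like the foreach body.
    sims = list(pool.map(lambda i: simulate_app(time, i), range(1, nsims + 1)))

# Fan in: the aggregate can only be computed once every simulation is done.
average = stats_app(sims)
print(average)
```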

![Multi stage

type file;

app (file out) genseed_app (int nseeds){

genseed "-r" 2000000 "-n" nseeds stdout=@out;

}

app (file out) genbias_app (int bias_range, int nvalues){

genbias "-r" bias_range "-n" nvalues stdout=@out;

}

app (file out, file log) simulation_app (int timesteps, int

sim_range, file bias_file, int scale, int sim_count){

simulate "-t" timesteps "-r" sim_range "-B" @bias_file "-x" scale

"-n" sim_count stdout=@out stderr=@log;

}

app (file out, file log) analyze_app (file s[]){

stats filenames(s) stdout=@out stderr=@log;

}](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-77-320.jpg)

![Multi stage

# Values that shape the run

int nsim = 10; # number of simulation programs to run

int steps = 1; # number of timesteps (seconds) per simulation

int range = 100; # range of the generated random numbers

int values = 10; # number of values generated per simulation

# Main script and data

tracef("\n*** Script parameters: nsim=%i range=%i num values=%i\n\n", nsim, range, values);

# Dynamically generated bias for simulation ensemble

file seedfile<"output/seed.dat">;

seedfile = genseed_app(1);

int seedval = readData(seedfile);

tracef("Generated seed=%i\n", seedval);

file sims[];](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-78-320.jpg)

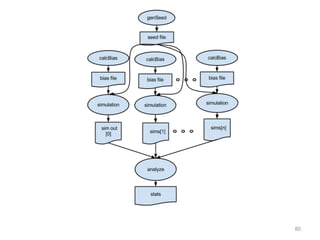

![Multi stage

foreach i in [0:nsim-1] {

file biasfile <single_file_mapper;

file=strcat("output/bias_",i,".dat")>;

file simout <single_file_mapper;

file=strcat("output/sim_",i,".out")>;

file simlog <single_file_mapper;

file=strcat("output/sim_",i,".log")>;

biasfile = genbias_app(1000, 20);

(simout,simlog) = simulation_app(steps, range, biasfile,

1000000, values); sims[i] = simout;

}

file stats_out<"output/average.out">;

file stats_log<"output/average.log">;

(stats_out,stats_log) = analyze_app(sims);](https://image.slidesharecdn.com/nbvtalk-160810054455/85/Swift-A-parallel-scripting-for-applications-at-the-petascale-and-beyond-79-320.jpg)