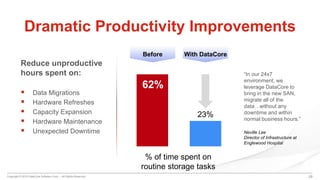

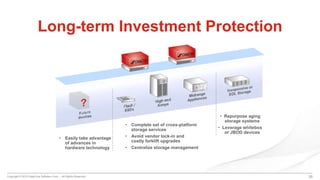

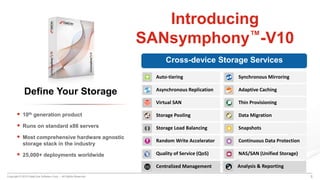

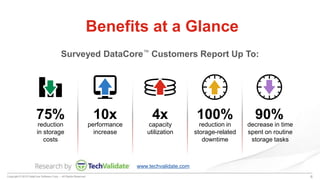

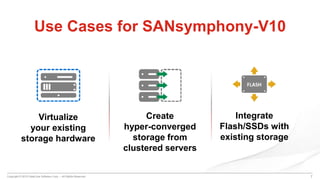

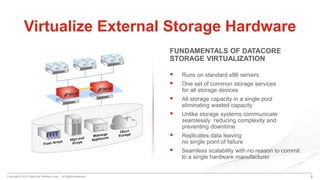

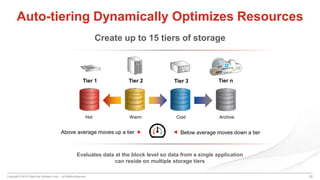

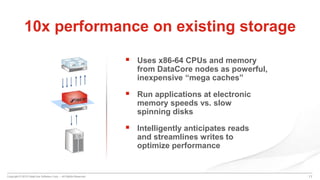

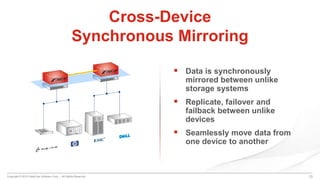

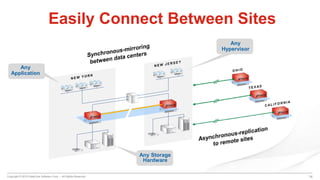

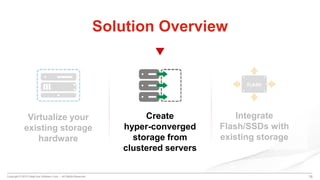

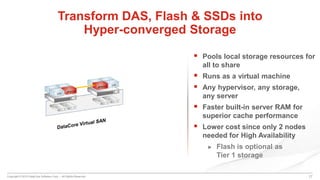

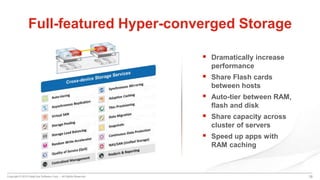

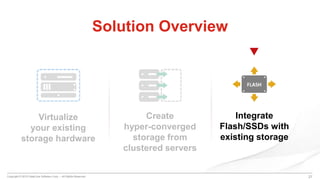

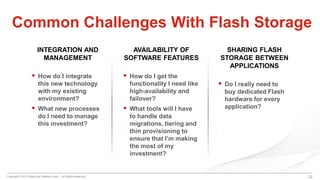

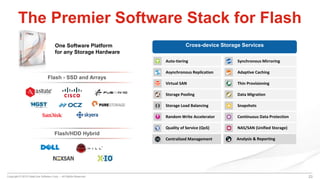

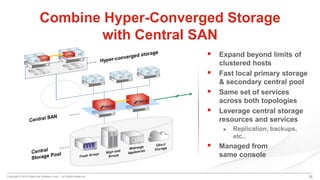

DataCore's SANsymphony-V10 is a software-defined storage product that centralizes management across diverse storage devices, improving performance and reducing costs through features such as auto-tiering and synchronous mirroring. It virtualizes existing storage hardware and supports hybrid environments, which allows data to be managed and scaled efficiently. Users report significant reductions in storage-related downtime and in the time spent on routine tasks, making it an attractive option for modern storage needs.

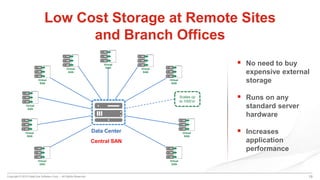

![Slide 27: Centrally Managed Environment. Major data centers (central SANs), two branch offices (hyper-converged), cloud storage, and a disaster recovery site (hyper-converged). Copyright © 2015 DataCore Software Corp. All Rights Reserved.](https://image.slidesharecdn.com/software-definedstorageinaction-150520185550-lva1-app6891/85/Software-Defined-Storage-In-Action-27-320.jpg)