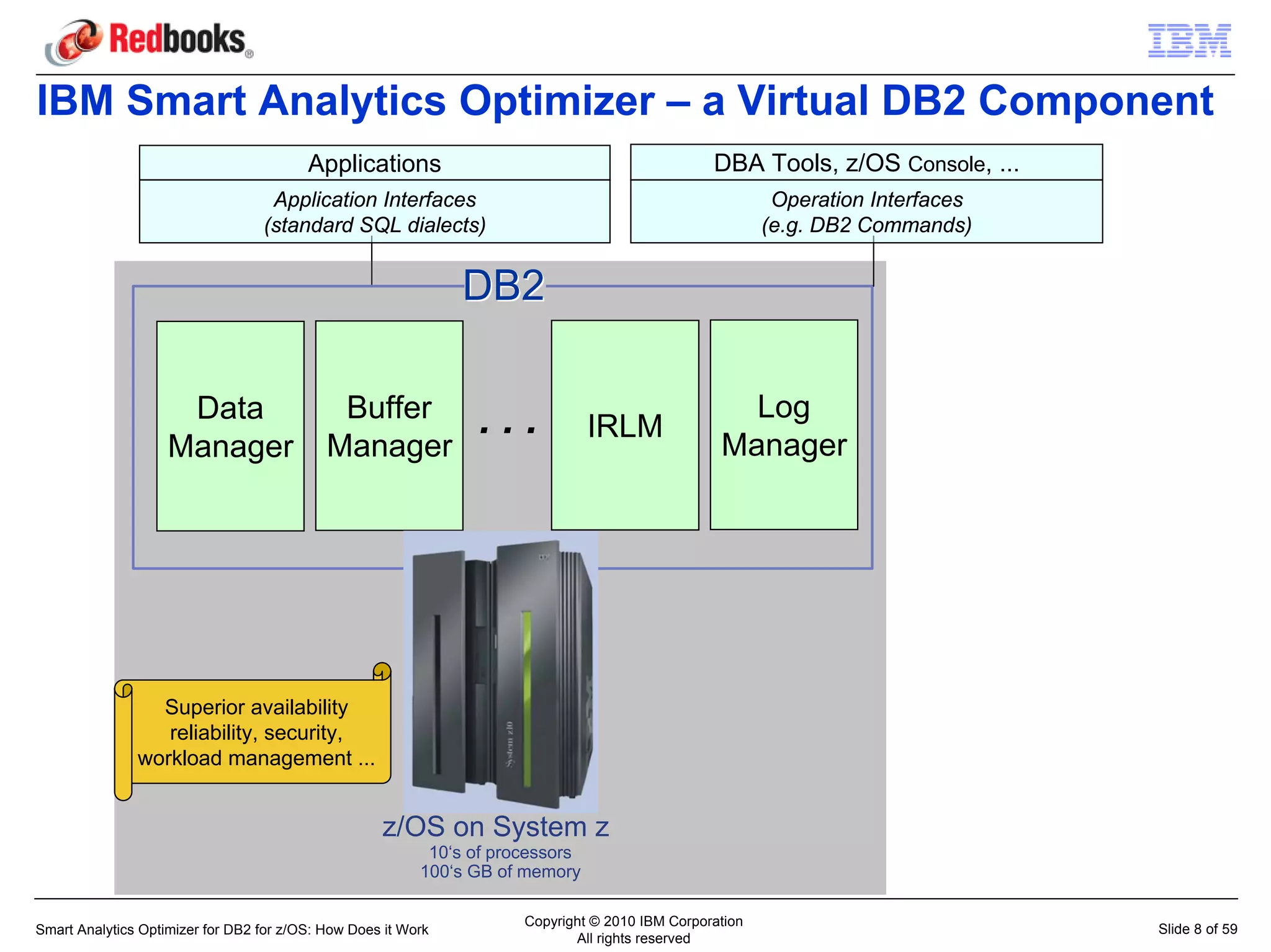

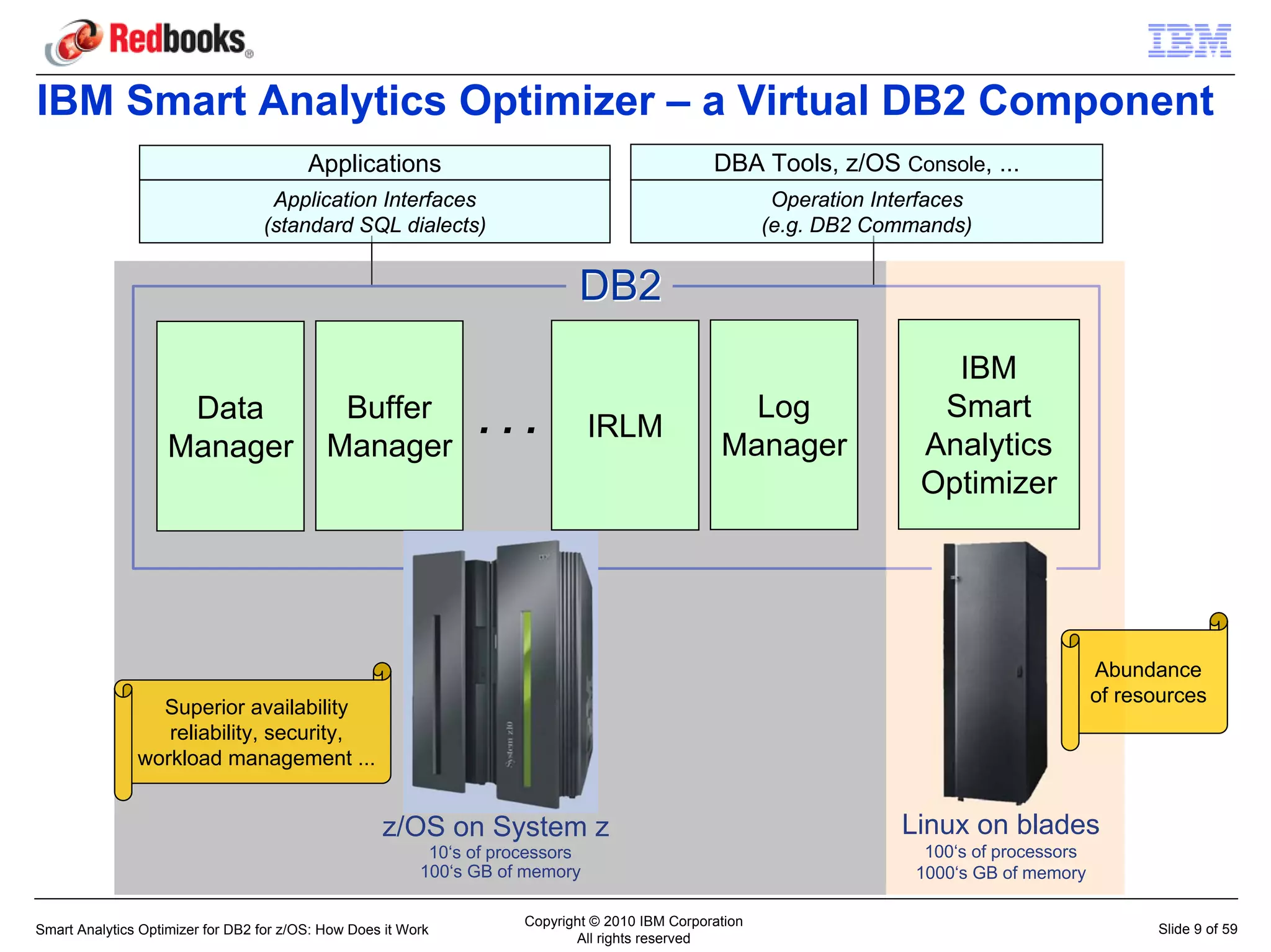

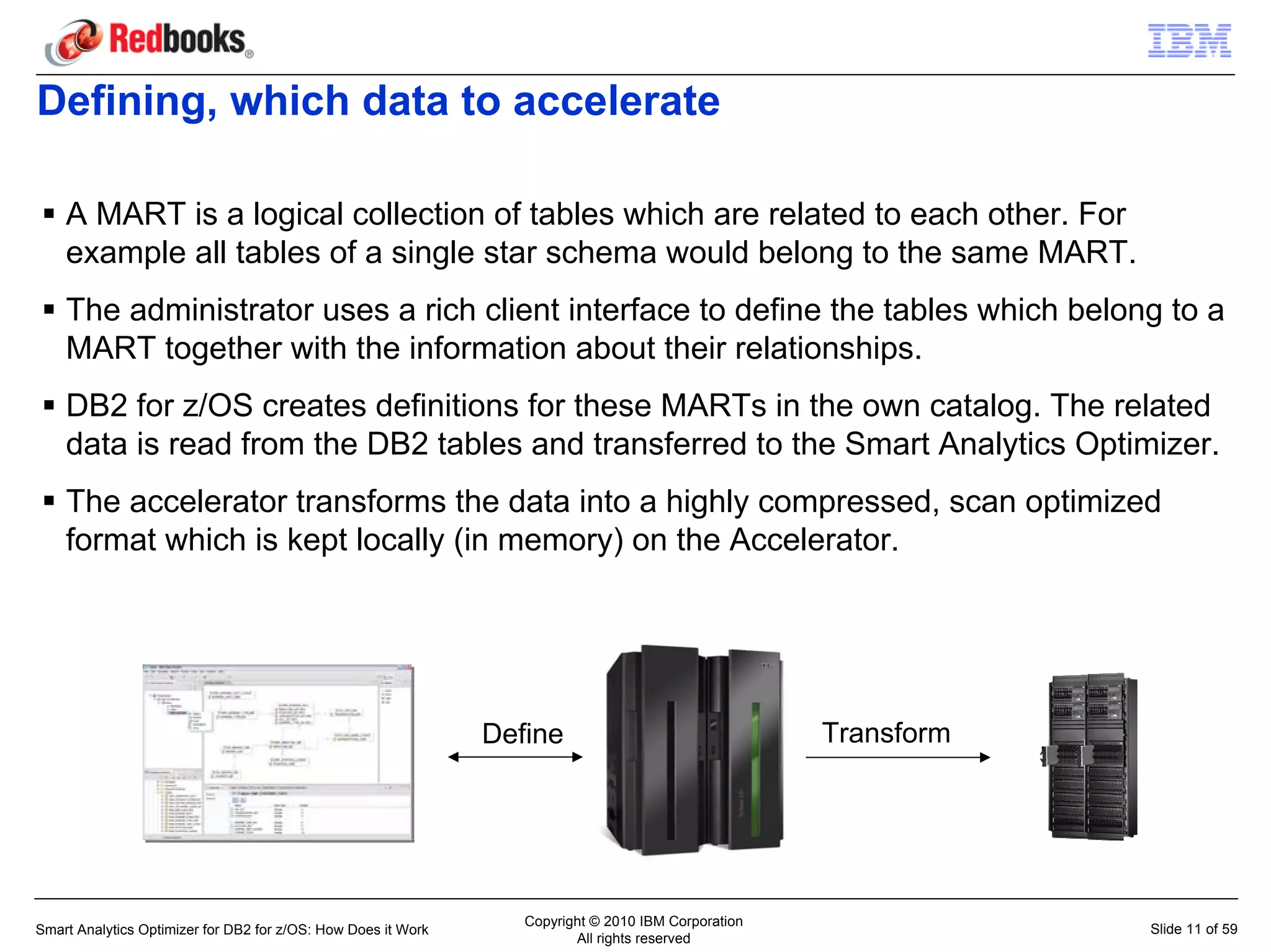

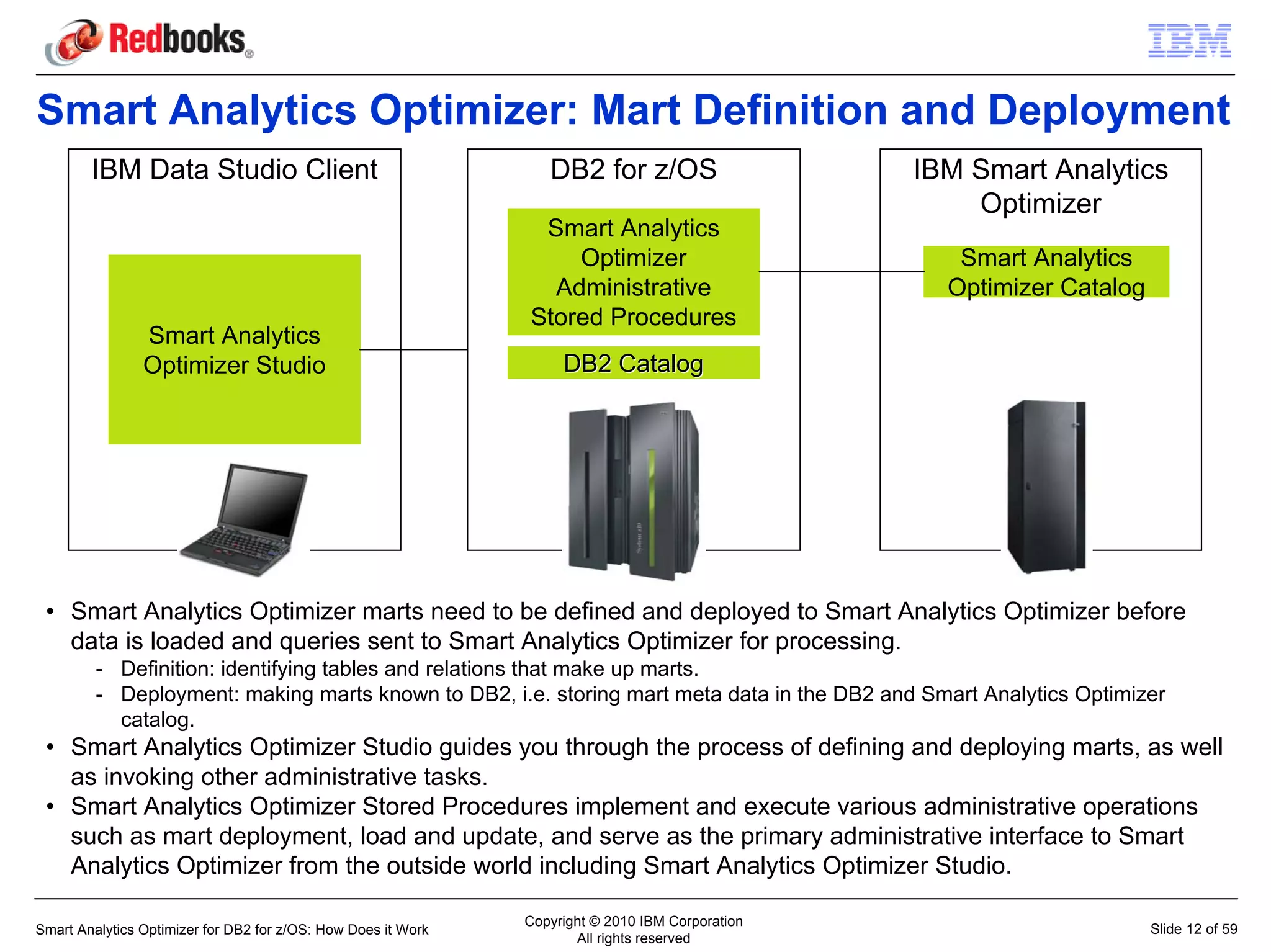

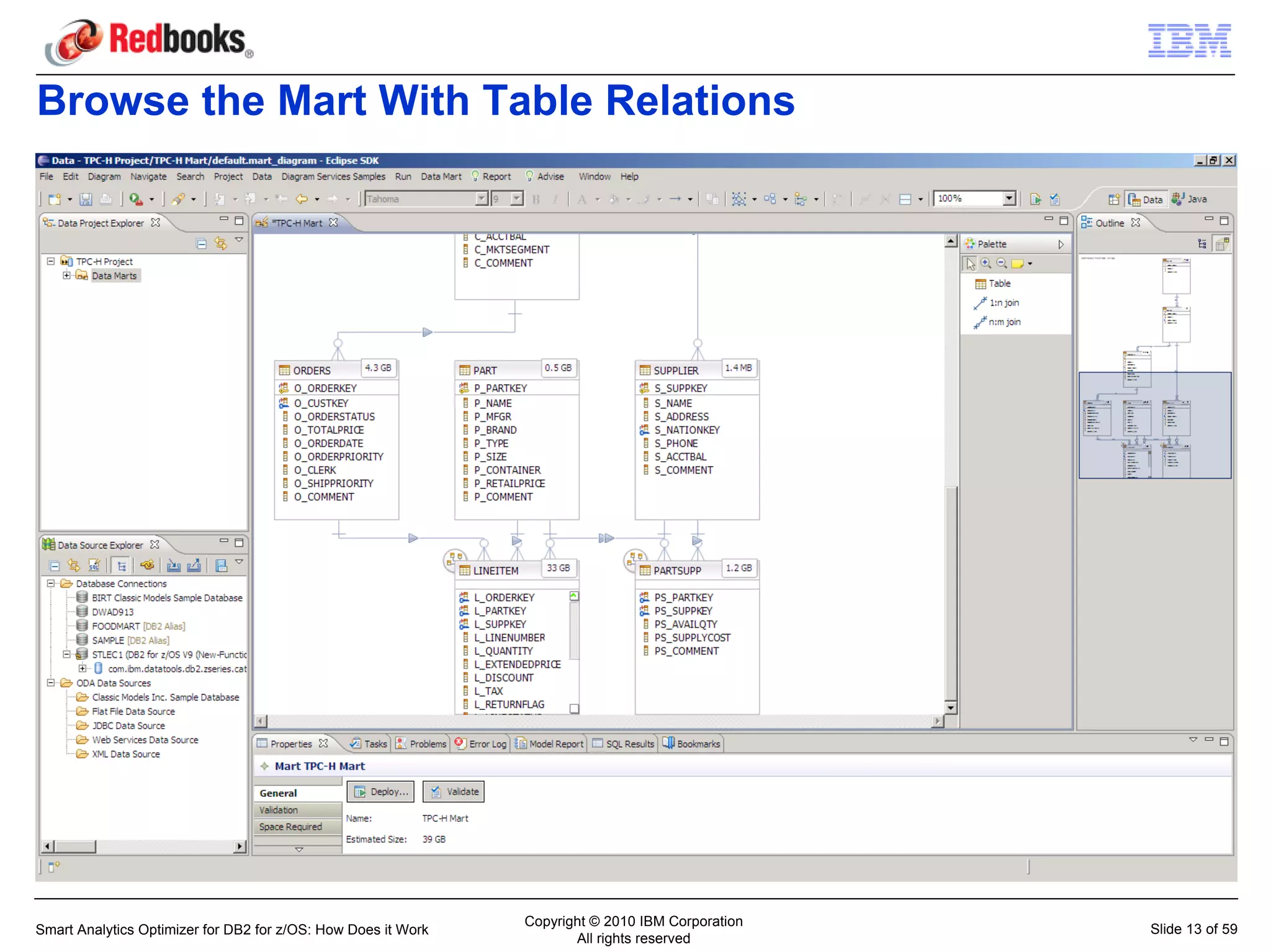

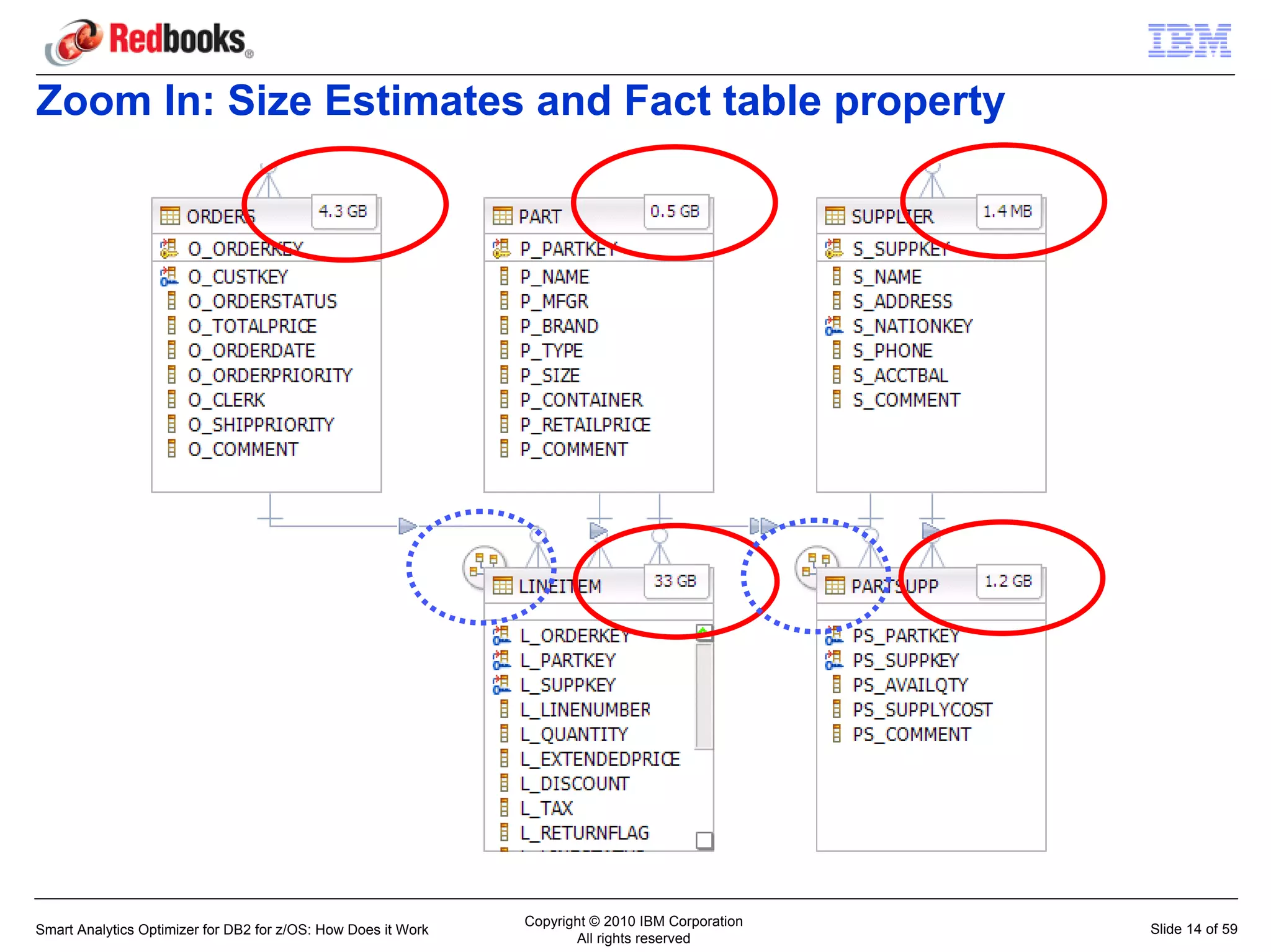

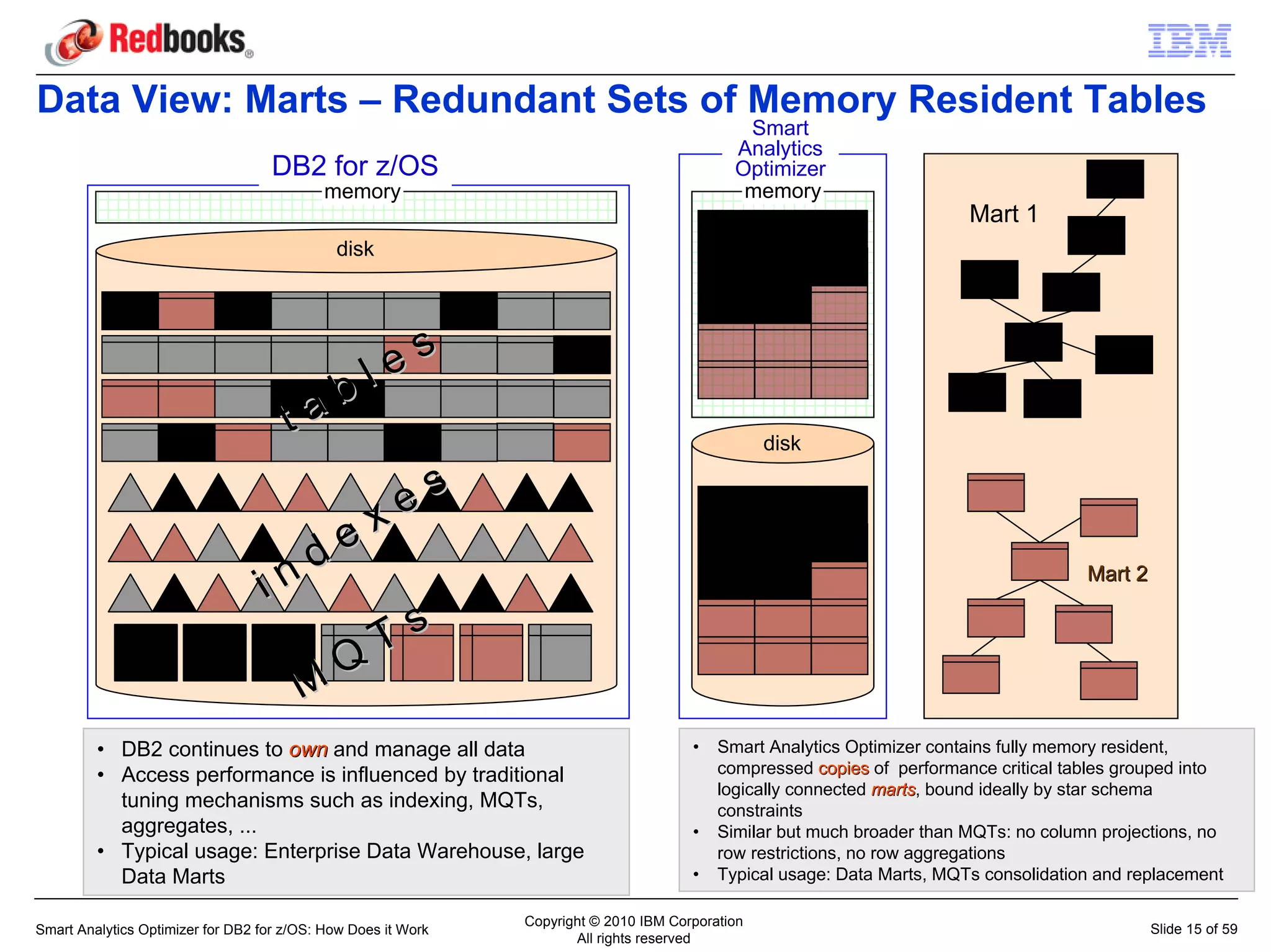

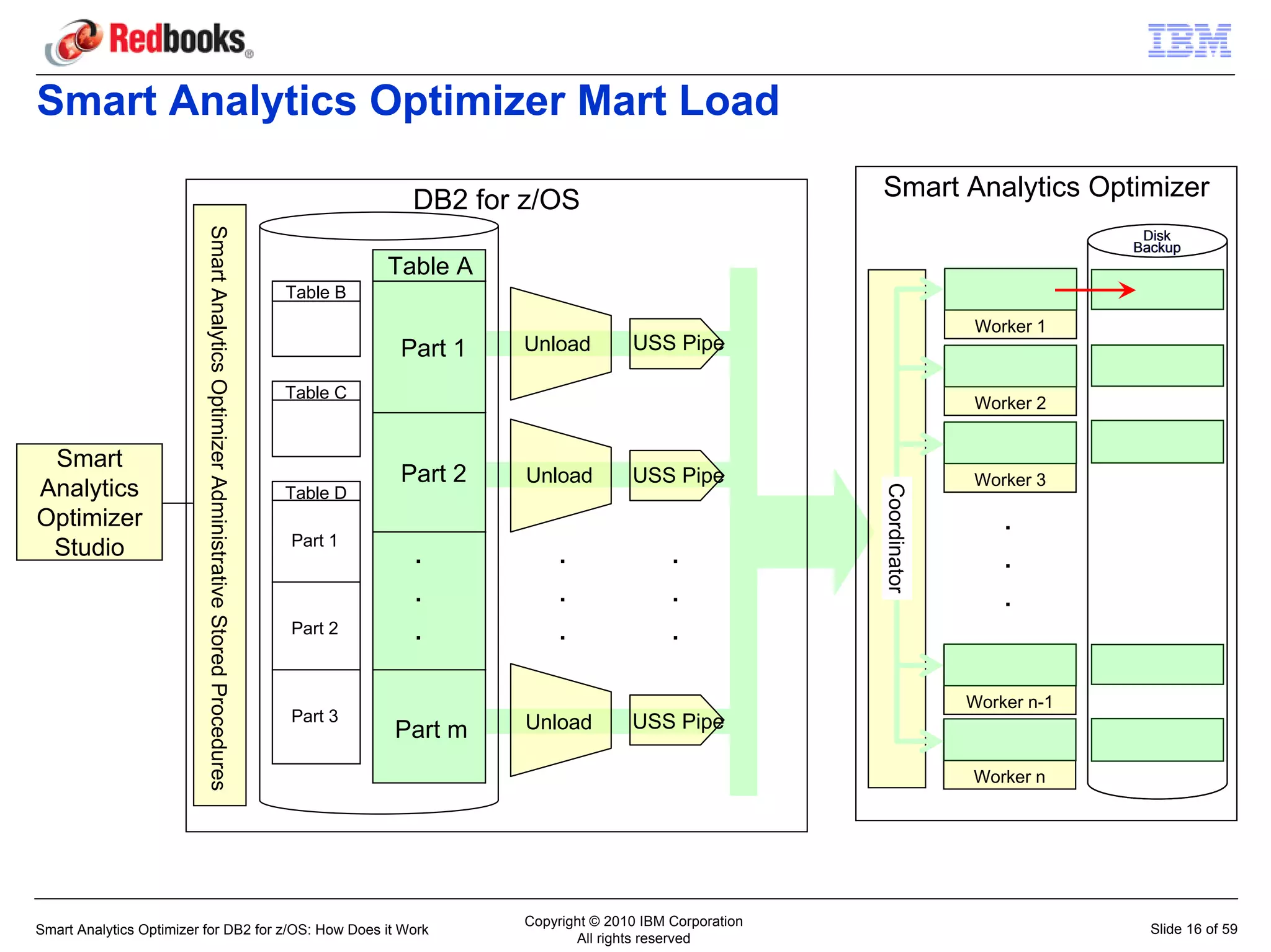

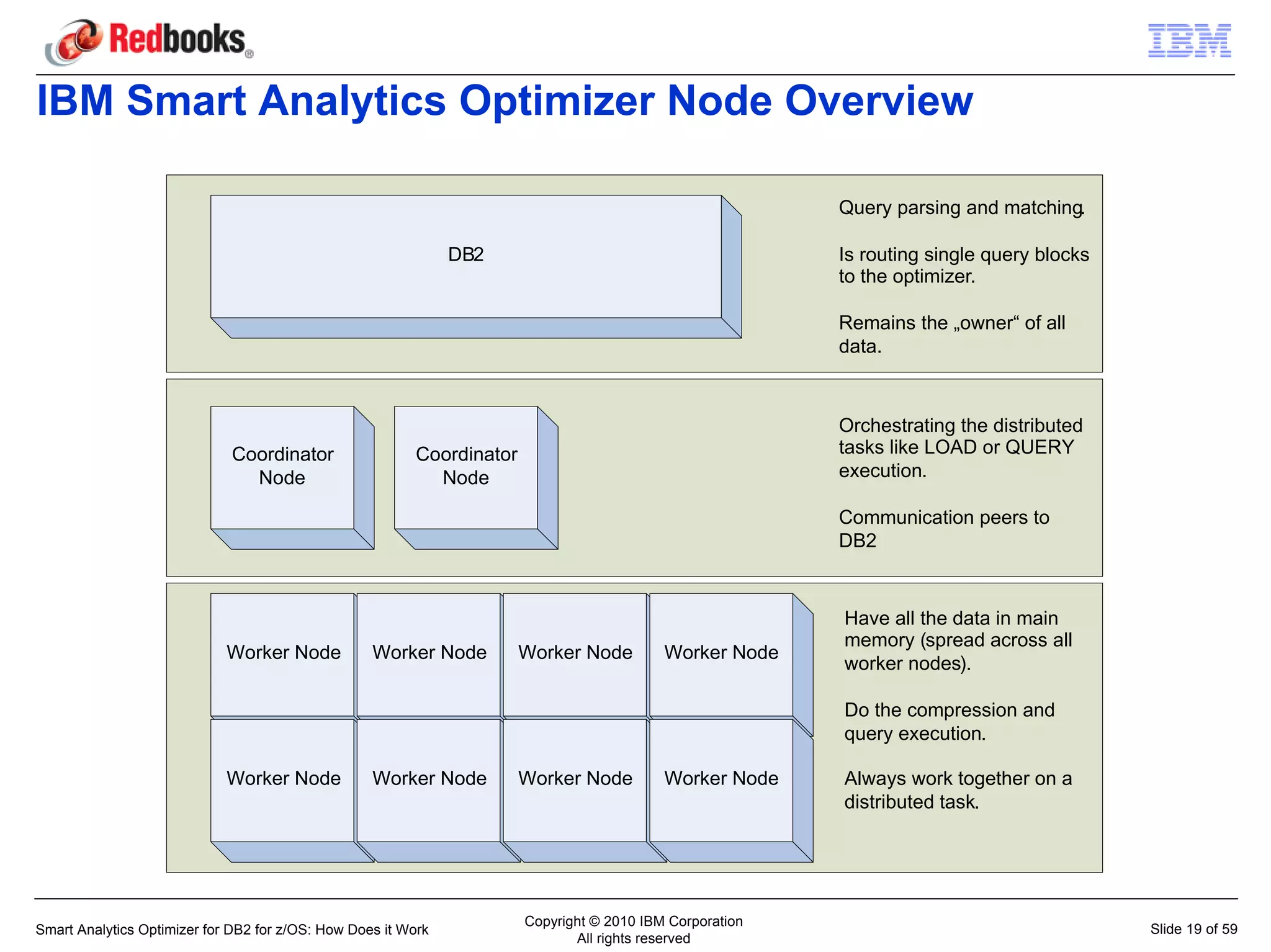

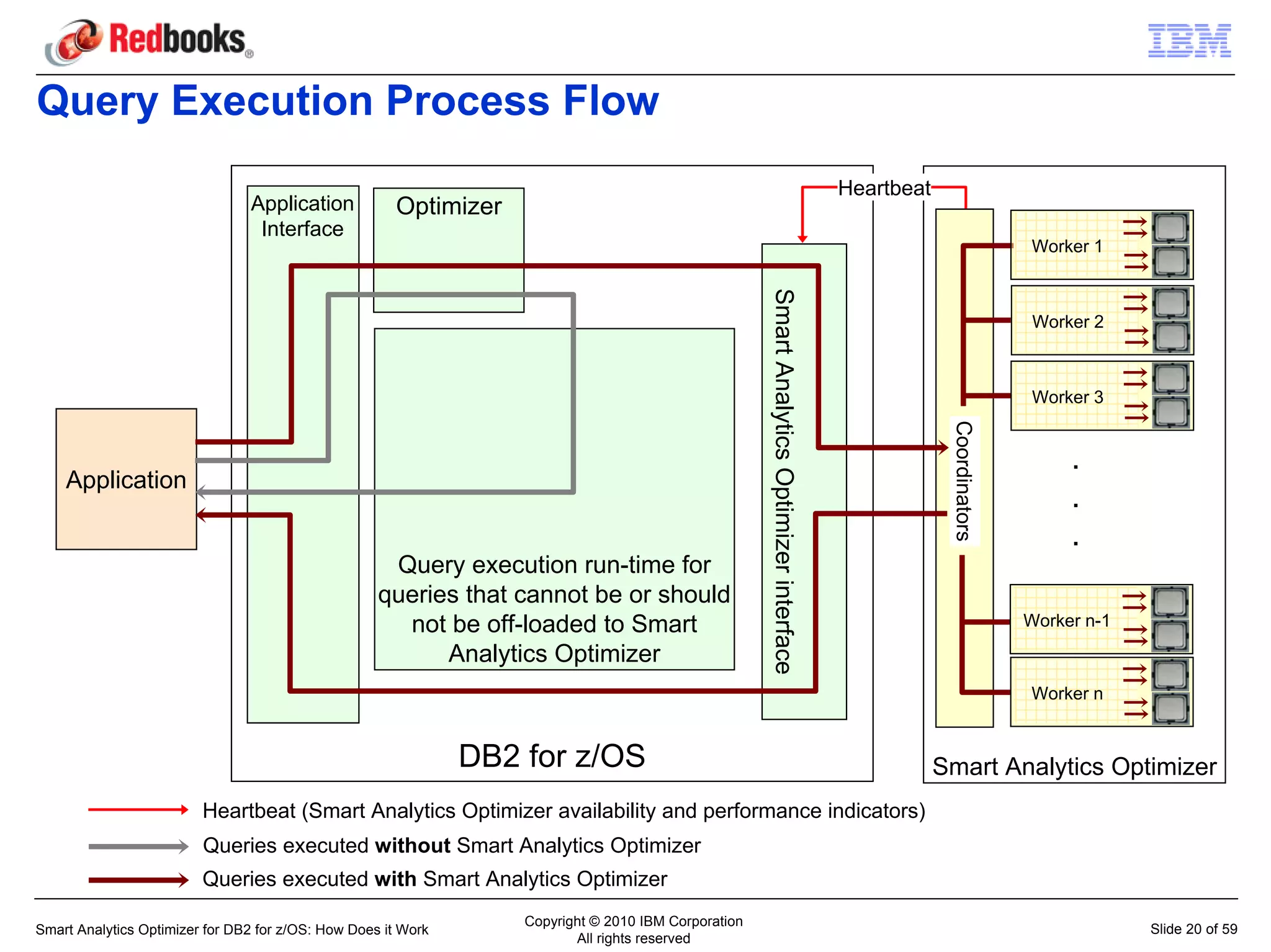

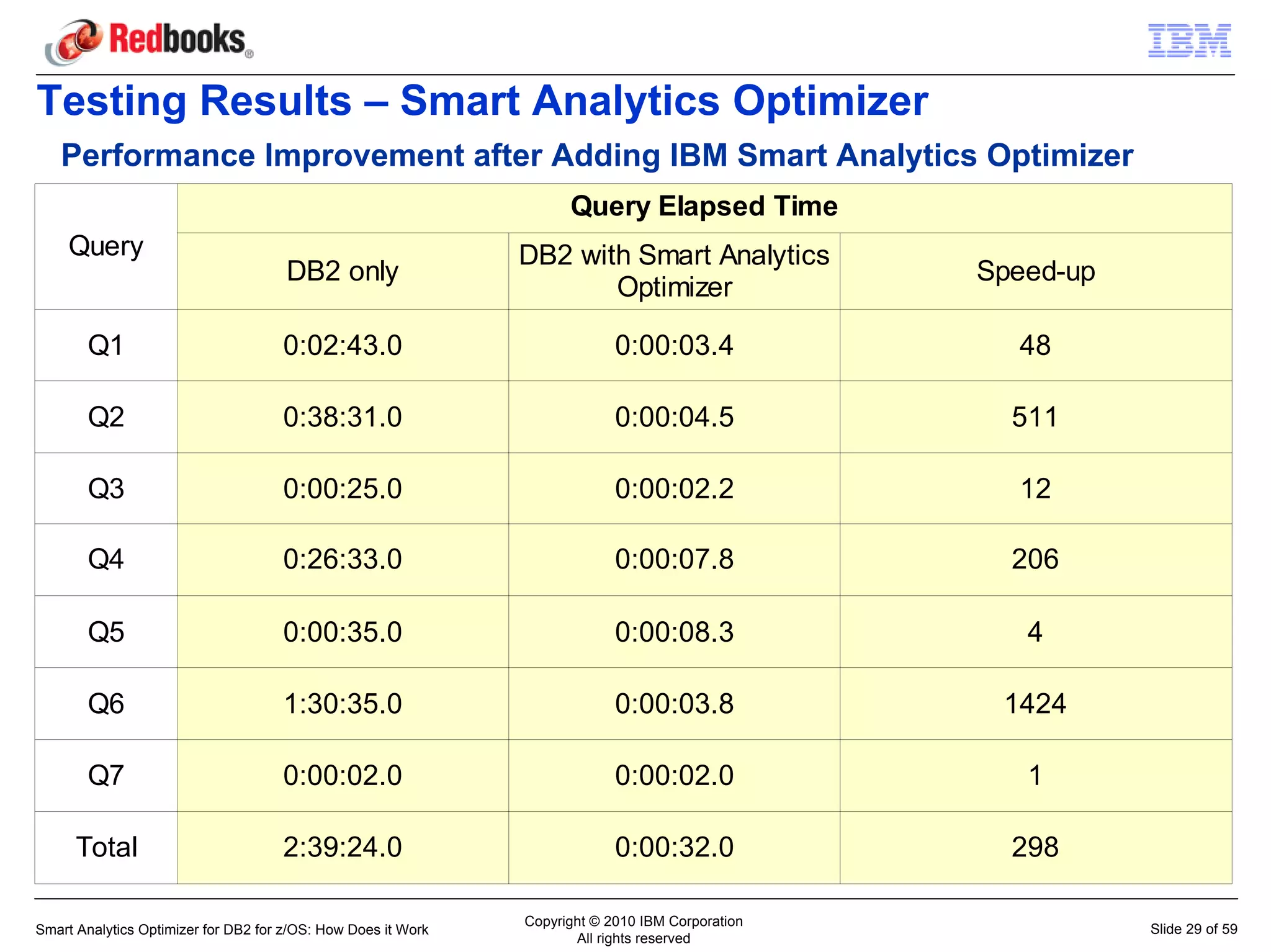

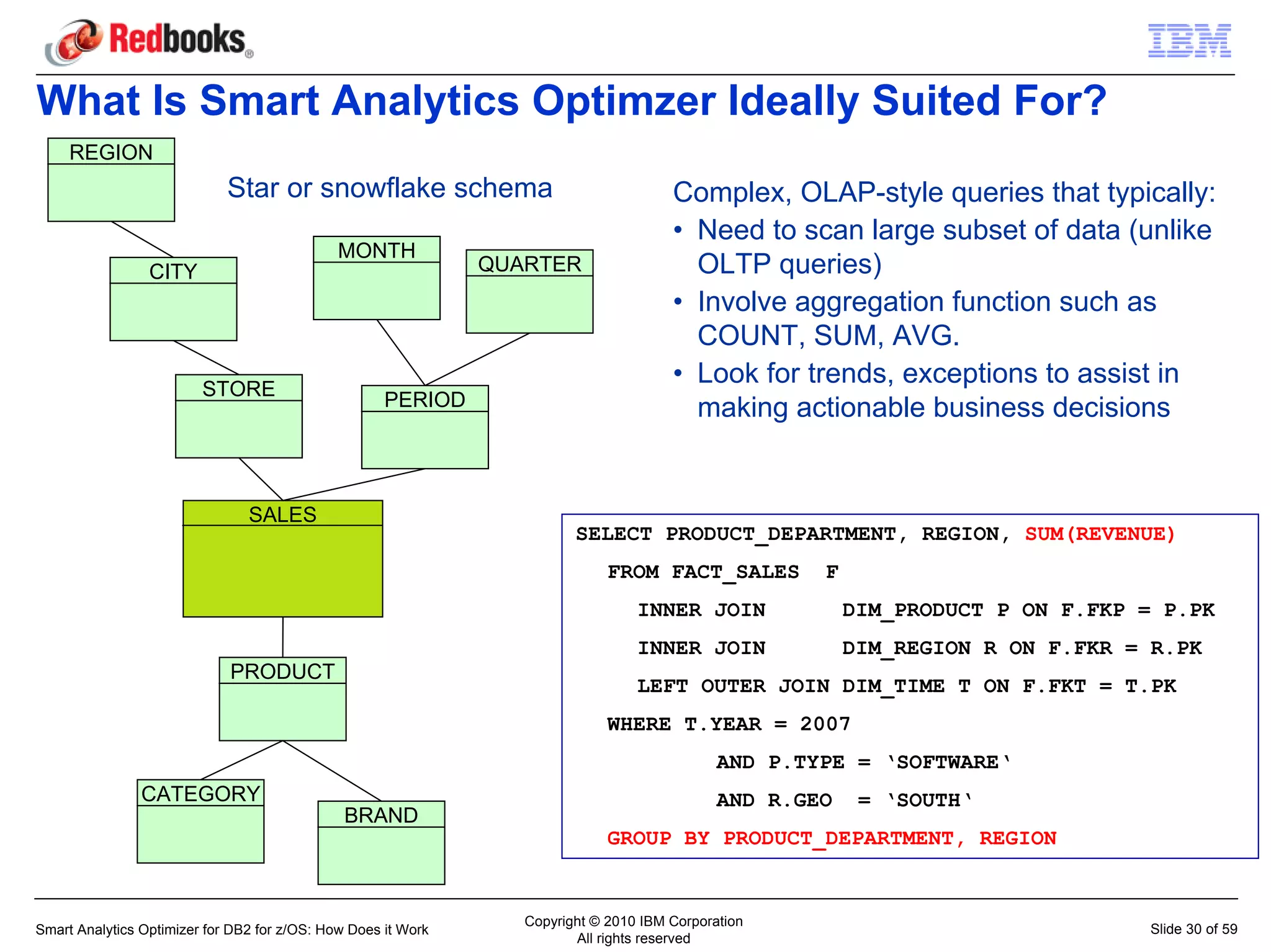

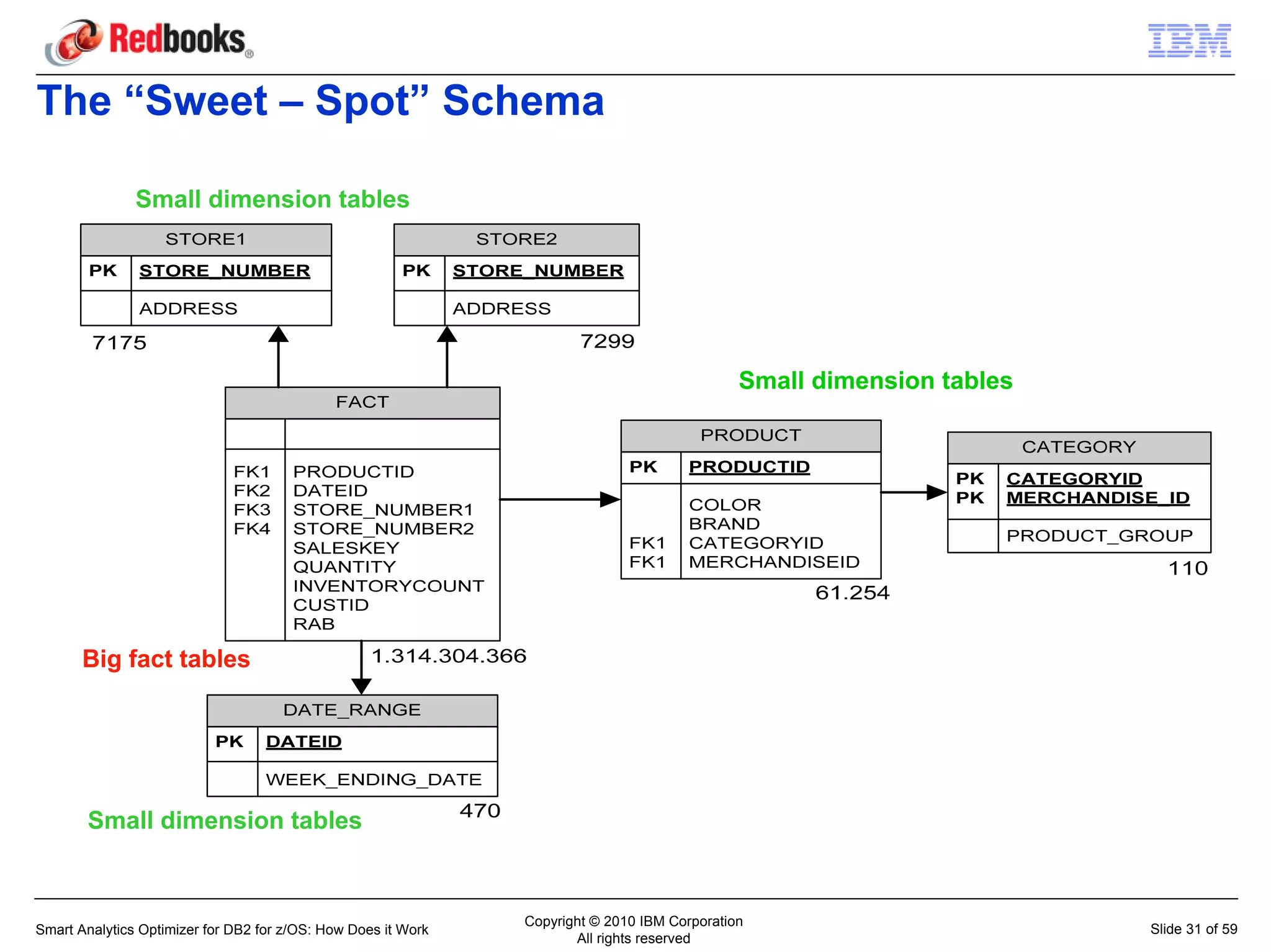

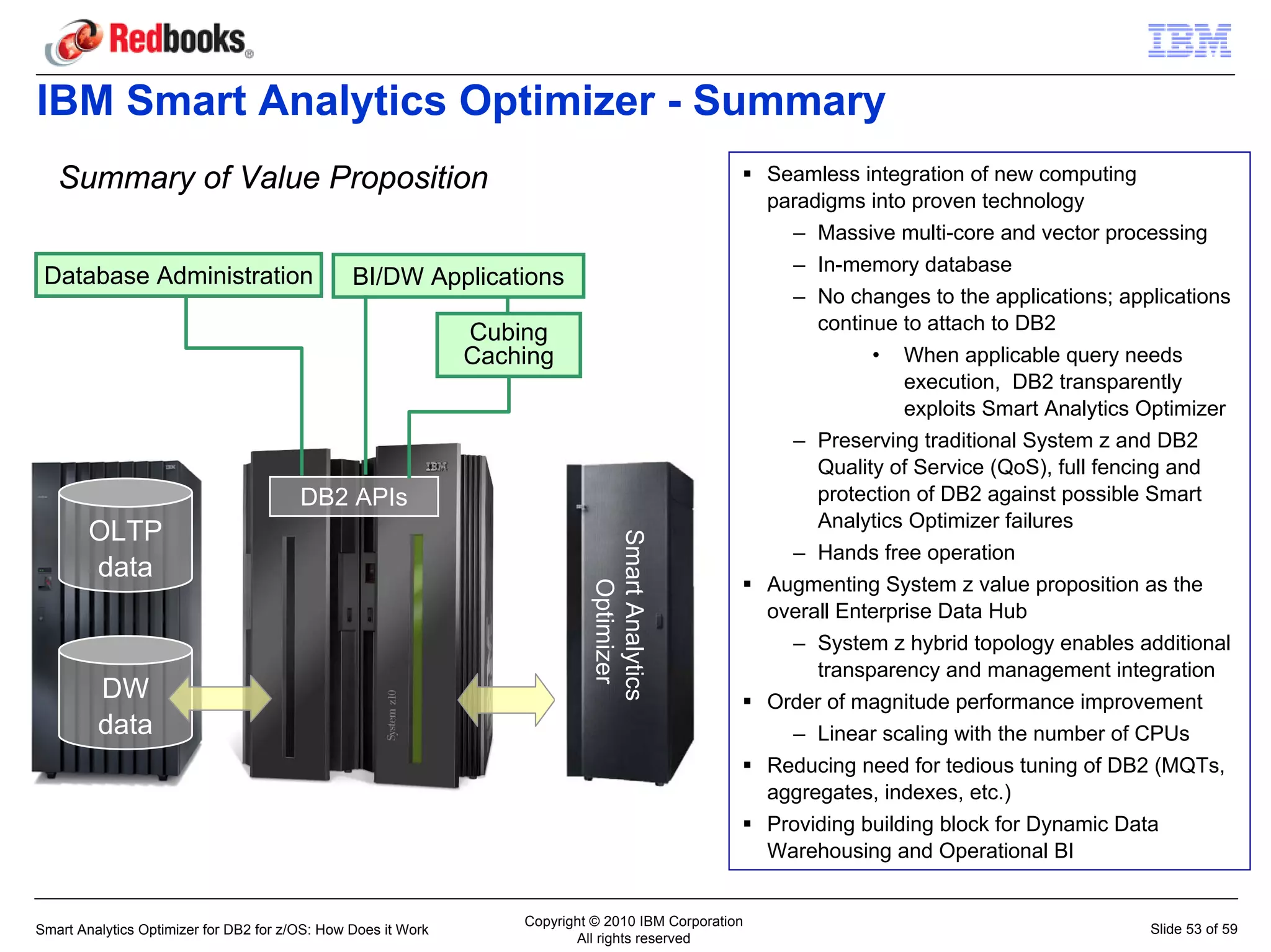

The IBM Smart Analytics Optimizer offloads CPU-intensive query processing from DB2 for z/OS to specialized hardware. Logical data marts containing related tables are defined and loaded onto the accelerator in a compressed, memory-resident format. Queries that involve the accelerated tables see an order-of-magnitude performance improvement. The accelerator is transparent to applications and preserves DB2's qualities of service while improving price/performance.
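
The offload idea can be illustrated with a small sketch. This is not the product's actual routing logic or API. It only models the assumption that a query is a candidate for the accelerator when every table it references belongs to a single loaded data mart, and otherwise stays in DB2. The names `DataMart` and `route_query` and the sample table names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataMart:
    """Hypothetical model of a logical data mart: a named set of related tables."""
    name: str
    tables: frozenset


def route_query(query_tables, marts):
    """Return the name of a mart that covers every referenced table,
    or None if the query should run in DB2 itself.

    Assumption for illustration: a query is offloaded only when all of its
    tables are in one mart.
    """
    needed = frozenset(t.upper() for t in query_tables)
    if not needed:
        return None
    for mart in marts:
        if needed <= mart.tables:  # subset check: mart covers the query
            return mart.name
    return None


# Hypothetical mart definition with made-up table names.
marts = [DataMart("SALES_MART", frozenset({"SALES", "STORE", "PRODUCT"}))]

print(route_query(["sales", "store"], marts))     # covered by the mart
print(route_query(["sales", "customer"], marts))  # CUSTOMER not accelerated
```

Because the application never names the mart, the routing decision in this sketch stays invisible to the caller, which mirrors the transparency claim above.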