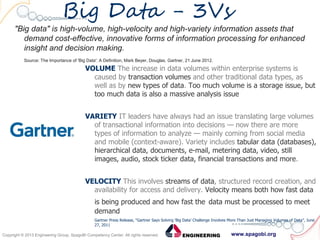

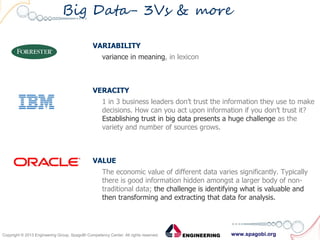

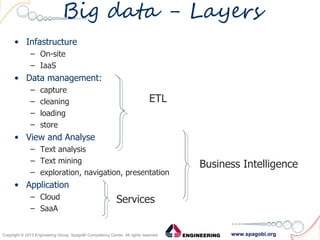

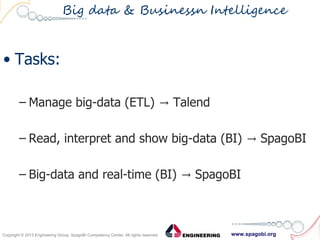

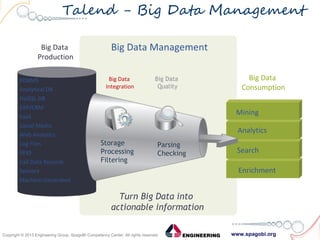

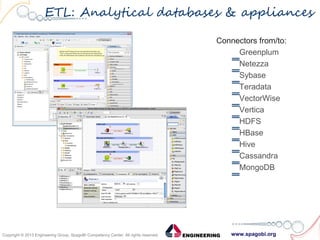

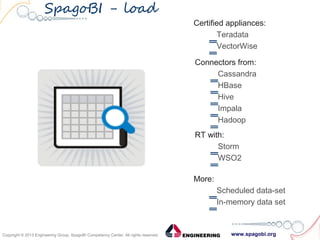

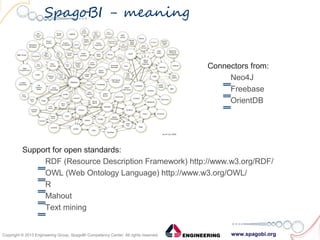

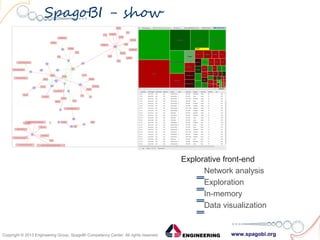

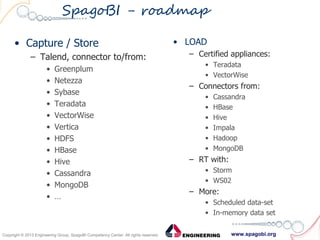

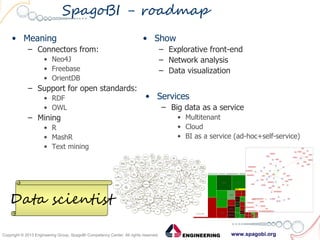

The document outlines how SpagoBI and Talend collaborate on big data challenges, pairing Talend's data integration and management tools with SpagoBI's business intelligence suite. It covers the key characteristics of big data (volume, variety, velocity, variability, veracity, and value) and the infrastructure and processes involved in managing it. It also presents a roadmap for integrating the two platforms so that together they offer more complete data solutions.