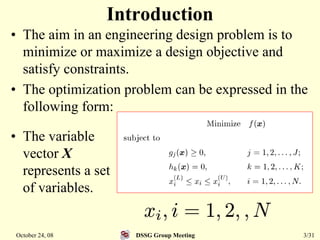

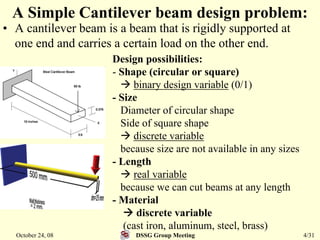

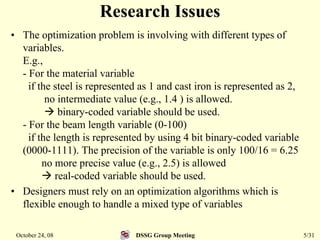

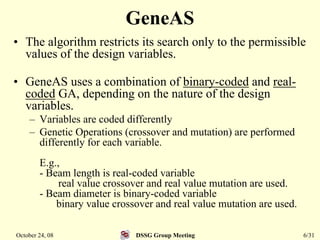

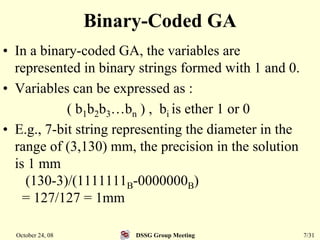

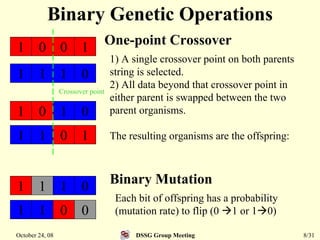

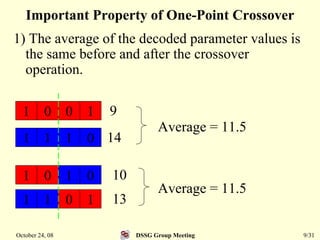

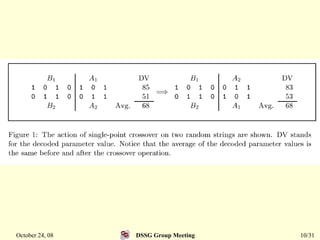

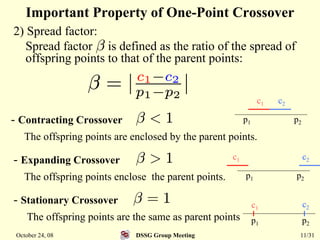

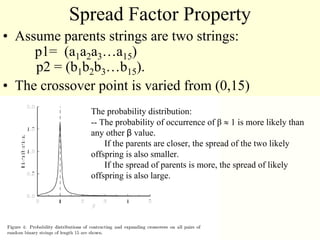

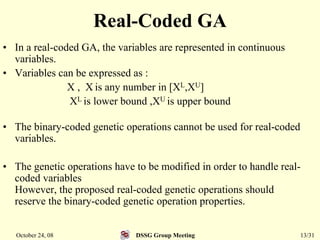

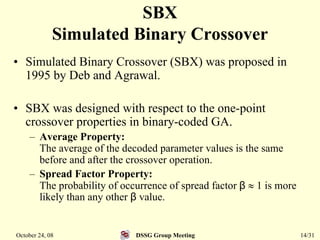

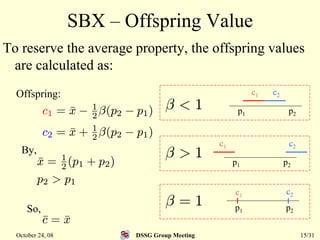

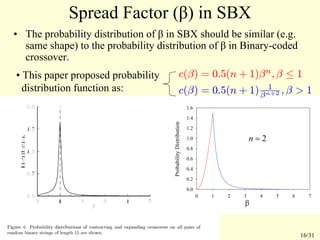

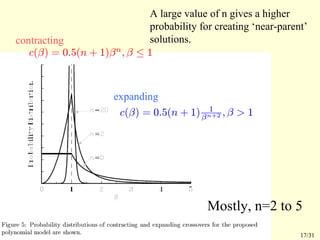

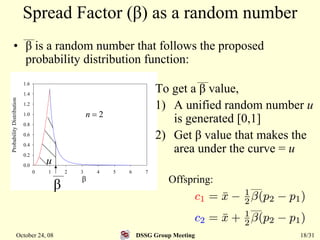

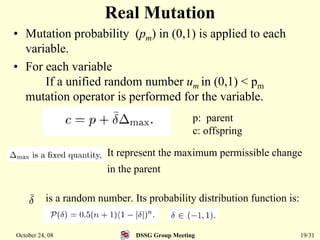

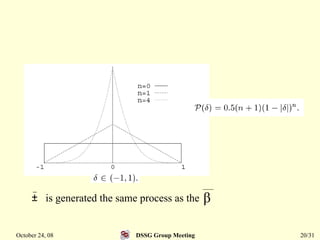

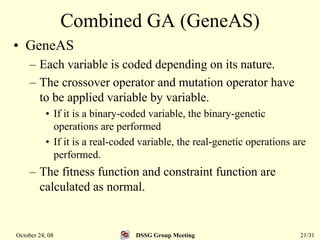

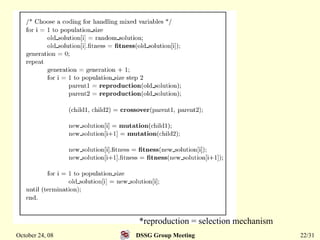

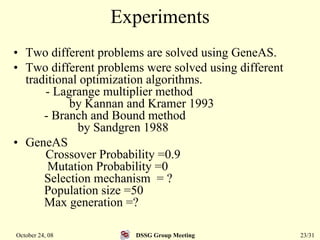

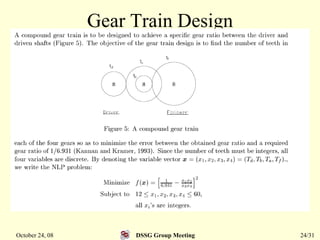

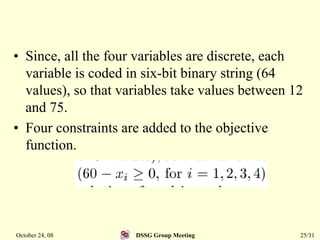

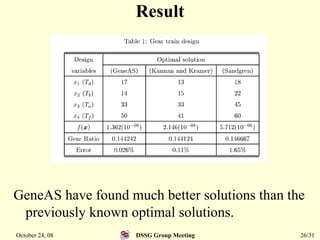

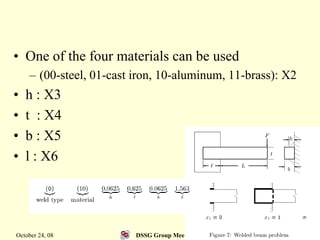

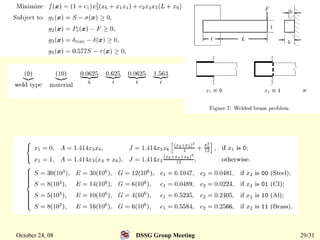

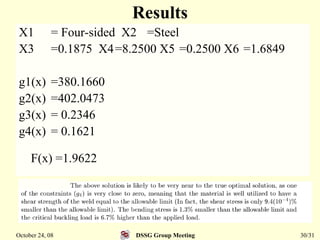

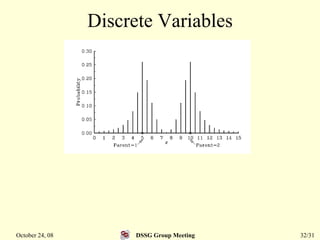

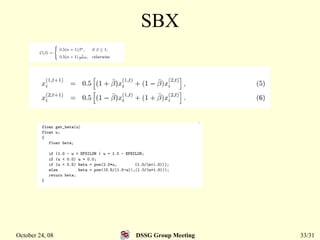

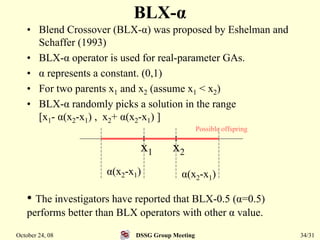

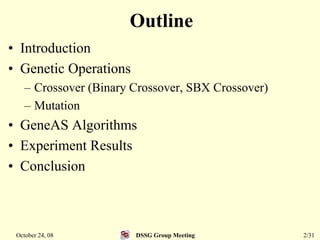

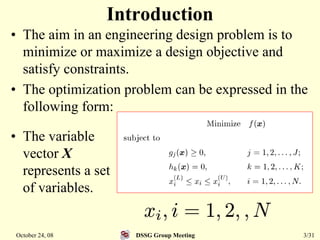

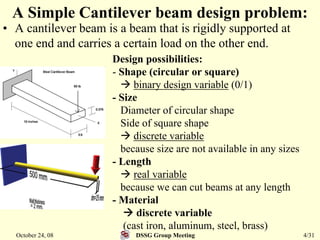

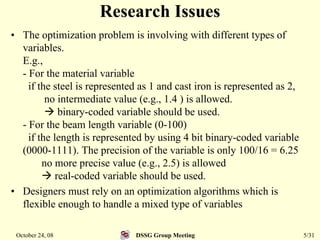

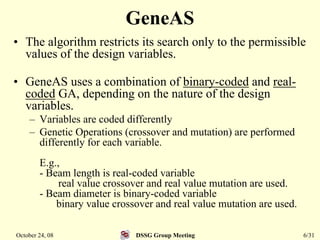

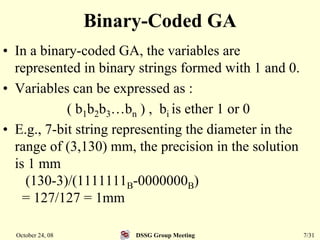

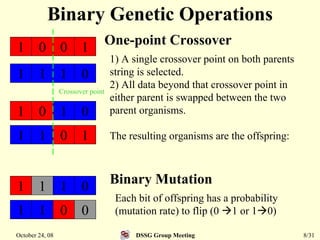

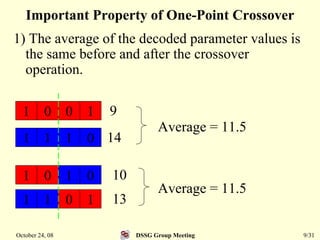

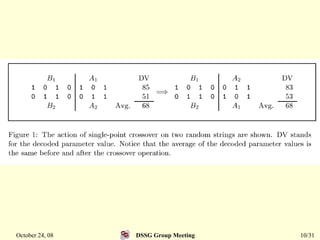

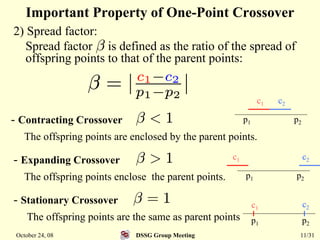

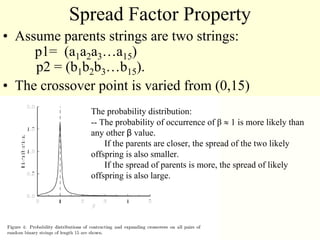

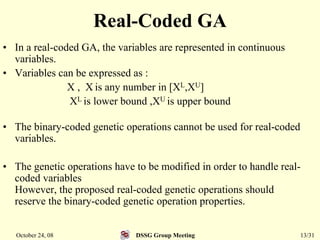

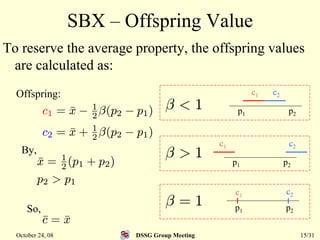

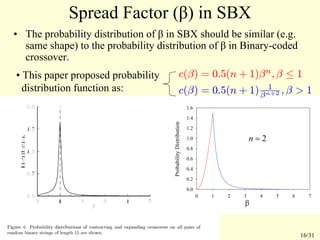

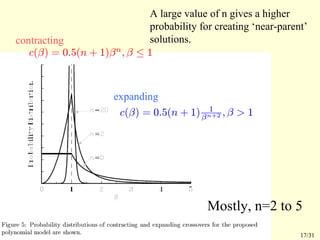

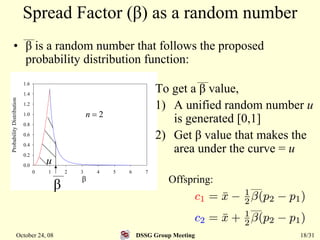

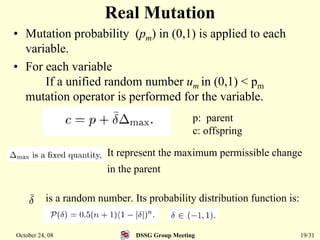

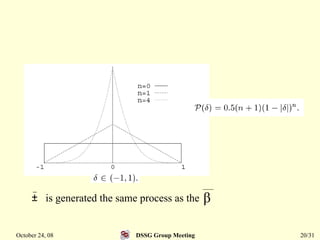

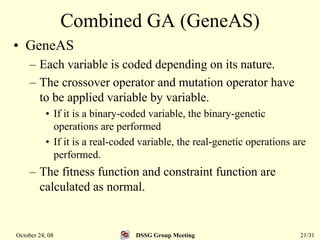

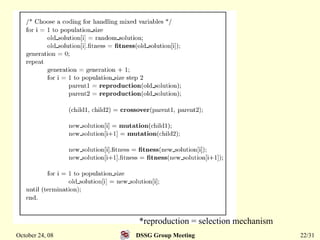

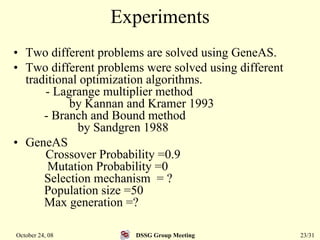

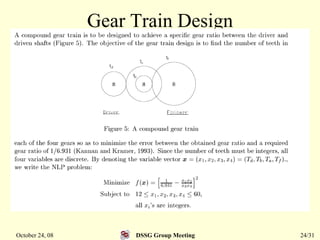

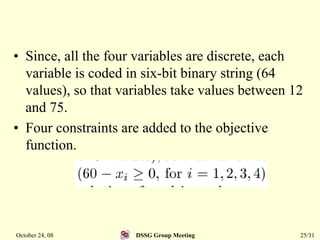

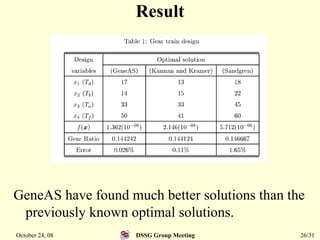

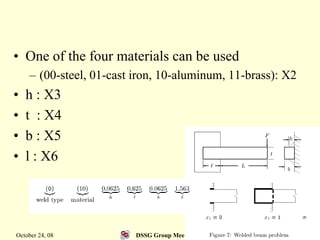

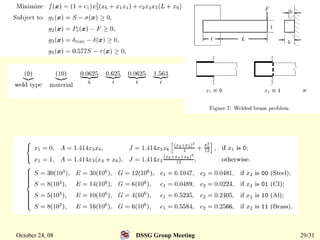

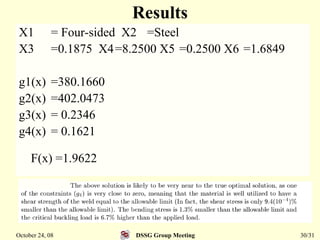

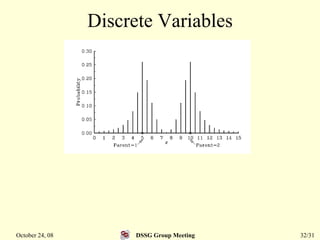

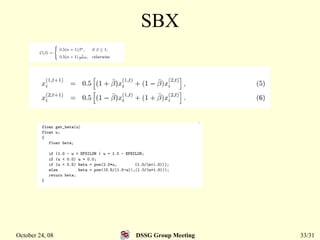

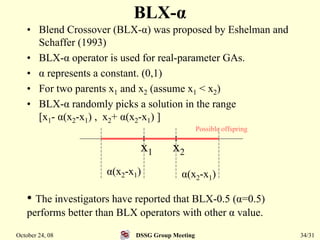

This document summarizes a research paper that proposes GeneAS, a genetic algorithm for optimization problems with mixed variable types. GeneAS represents discrete variables with binary coding and continuous variables with real coding, and it applies a separate set of genetic operators to each type: for example, binary crossover for discrete variables and simulated binary crossover (SBX) for continuous variables. The paper describes these operators and reports results on example problems, in which GeneAS finds very good solutions.
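To make the per-type operator split concrete, here is a minimal Python sketch of a mixed-variable crossover. It is an illustration under stated assumptions, not the paper's implementation: the individual layout (one concatenated bit list plus a list of floats), the function names, the choice of single-point crossover as the binary operator, the unbounded form of SBX, and the default distribution index `eta=2.0` are all hypothetical choices made for this example.

```python
import random


def sbx_crossover(p1, p2, eta=2.0, rng=random):
    """Simulated binary crossover (unbounded form) on one real variable.

    eta is the distribution index: larger values keep children closer
    to their parents. The default here is an assumption.
    """
    u = rng.random()  # uniform in [0, 1)
    if u <= 0.5:
        beta = (2.0 * u) ** (1.0 / (eta + 1.0))
    else:
        beta = (1.0 / (2.0 * (1.0 - u))) ** (1.0 / (eta + 1.0))
    # Children are placed symmetrically about the parents' midpoint.
    c1 = 0.5 * ((1.0 + beta) * p1 + (1.0 - beta) * p2)
    c2 = 0.5 * ((1.0 - beta) * p1 + (1.0 + beta) * p2)
    return c1, c2


def single_point_crossover(b1, b2, rng=random):
    """Single-point crossover on two equal-length bit lists."""
    if len(b1) != len(b2):
        raise ValueError("bit strings must have equal length")
    if len(b1) < 2:
        return list(b1), list(b2)  # nothing to cut
    cut = rng.randint(1, len(b1) - 1)  # inclusive bounds
    return b1[:cut] + b2[cut:], b2[:cut] + b1[cut:]


def mixed_crossover(parent1, parent2, eta=2.0, rng=random):
    """Cross two individuals of the hypothetical form (bits, reals).

    The binary part uses single-point crossover; each real variable
    is crossed independently with SBX.
    """
    bits1, reals1 = parent1
    bits2, reals2 = parent2
    child_bits1, child_bits2 = single_point_crossover(bits1, bits2, rng)
    child_reals1, child_reals2 = [], []
    for x1, x2 in zip(reals1, reals2):
        y1, y2 = sbx_crossover(x1, x2, eta, rng)
        child_reals1.append(y1)
        child_reals2.append(y2)
    return (child_bits1, child_reals1), (child_bits2, child_reals2)


if __name__ == "__main__":
    a = ([1, 0, 1, 1], [1.0, 5.0])
    b = ([0, 0, 0, 1], [3.0, 2.0])
    print(mixed_crossover(a, b, rng=random.Random(0)))
```

In this sketch each part of the chromosome is recombined only by the operator suited to its coding, so the binary part always remains a valid bit string while the real part is searched in continuous space. The unbounded SBX form can produce values outside any variable bounds, so a real application would need a bounded variant or a repair step.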