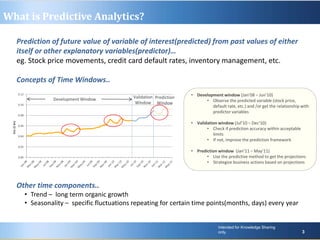

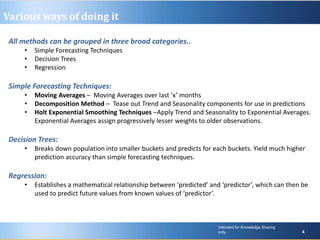

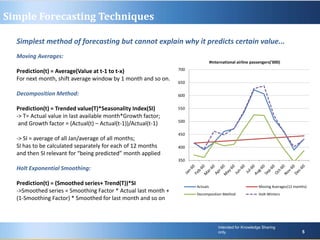

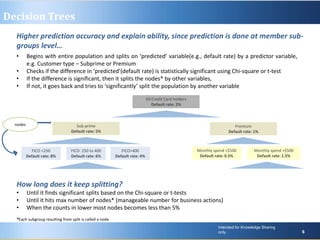

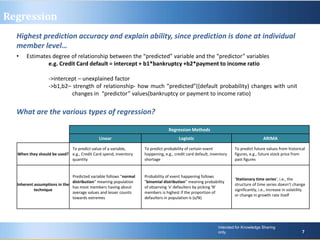

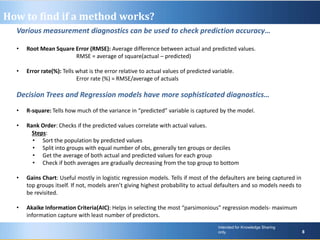

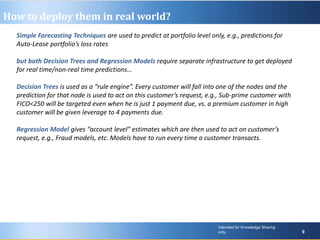

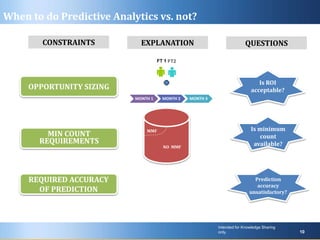

Predictive analytics uses historical data to forecast future outcomes. The document covers several predictive analytics techniques: simple forecasting methods, decision trees, and regression. Simple forecasting techniques such as moving averages are the easiest to implement but lack explanatory power, whereas decision trees and regression give more accurate predictions at the individual level but are more complex to deploy. The key is to select the technique that fits the problem, the available data, and the ability to implement predictive models in real-world applications.
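
To make the contrast between the techniques concrete, here is a minimal sketch in Python. It is not taken from the slides. The function names (`moving_average_forecast`, `fit_line`) and all of the data are hypothetical and chosen only for illustration. The first function forecasts from the series alone. The second fits a one-predictor least-squares regression, which can score an individual record from an input variable.

```python
from statistics import mean


def moving_average_forecast(history, window=3):
    """Forecast the next value as the mean of the last `window` observations."""
    if window <= 0 or len(history) < window:
        raise ValueError("need at least `window` observations")
    return mean(history[-window:])


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x (one predictor)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired observations")
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("predictor values must not all be equal")
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Hypothetical aggregate series: the forecast uses no explanatory variables.
monthly_sales = [100, 110, 120, 130, 140, 150]
next_month = moving_average_forecast(monthly_sales, window=3)

# Hypothetical individual-level data: spend explained by number of visits.
visits = [1, 2, 3, 4, 5]
spend = [12.0, 14.0, 16.0, 18.0, 20.0]
intercept, slope = fit_line(visits, spend)

# Score a new individual with 6 visits.
predicted_spend = intercept + slope * 6
```

The moving average needs only the series itself, which is why it is easy to implement but cannot say why a value changes. The regression needs a predictor for each individual at scoring time, which is one reason its deployment is more involved.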