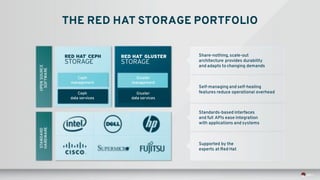

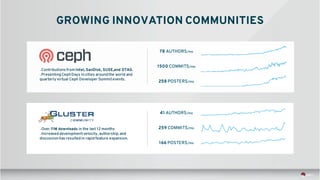

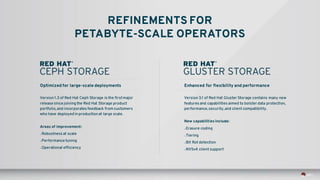

The document discusses Red Hat's software-defined storage (SDS) portfolio, focusing on open-source systems like Ceph and Gluster for modern cloud infrastructures. It highlights the economic advantages, scalability, and flexibility of these systems while predicting significant growth in the SDS market. Key features include data protection, automated tiering, bit rot detection, and support for big data analytics, making them suitable for a variety of enterprise use cases.

![DETAIL:

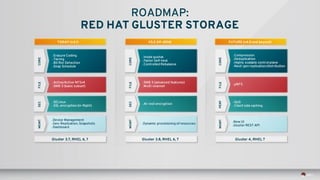

RED HAT GLUSTER STORAGE 3.1

PERF

Optimizations to enhance small file performance,

especially with small file create and write operations.

PERFSECURITYPROTOCOL

Optimizations that result in enhanced rebalance speed at large scale.

Introduction of the ability to operate with SELinux in enforcing

mode, increasing security across an entire deployment.

Support for data access via clustered, active-active NFSv4 endpoints,

based on the NFS-Ganesha project.

Enhancements to SMB 3 protocol negotiation, copy-data offload, and

support for in-flight data encryption [Sayan: what is copy-data offload?]

Small file

Rebalance

SELinux enforcing

mode

NFSv4

(multi-headed)

SMB 3 (subset of

features)

These features were introduced in the most recent release of Red Hat Gluster Storage,

and are now supported by Red Hat.

PROTOCOL](https://image.slidesharecdn.com/scalableposixfsinthecloud-20160121small-160122185259/85/Scalable-POSIX-File-Systems-in-the-Cloud-35-320.jpg)