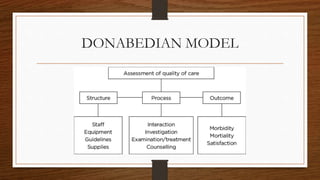

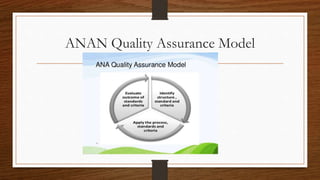

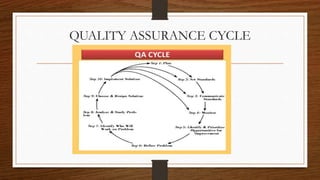

This document gives an overview of quality assurance in healthcare. It defines quality assurance and introduces related concepts such as continuous quality improvement. The objectives it outlines include designing well-monitored processes that improve patient outcomes. Approaches are divided into general ones (credentialing, licensure, and accreditation) and specific ones (audits and peer review). The models presented include the Donabedian model of structure, process, and outcomes. Quality tools and the quality assurance cycle are also summarized.