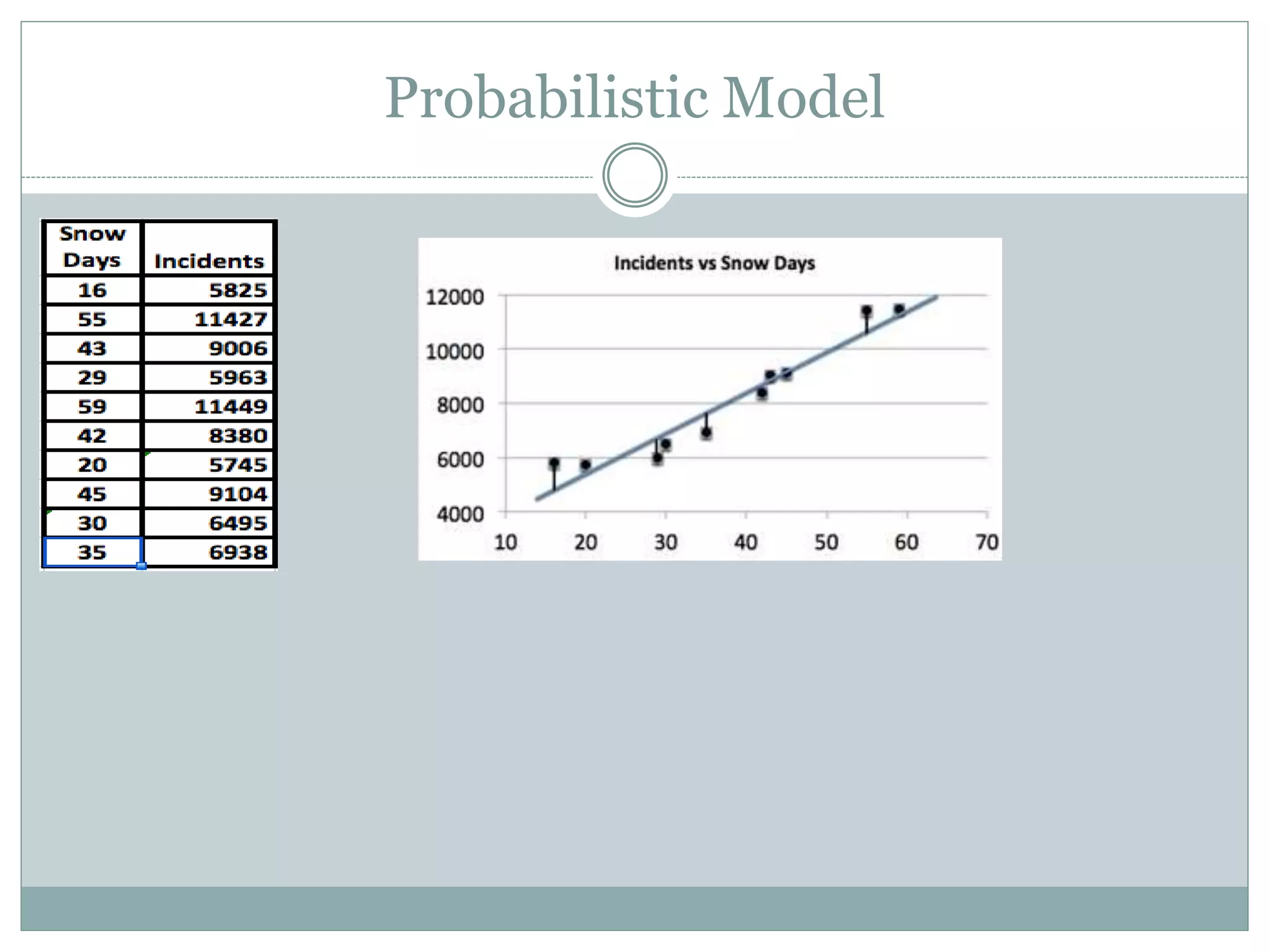

The document surveys types of mathematical models, distinguishing deterministic from probabilistic models and giving examples of each. It then describes how mathematical models are built, verified, and refined. It also covers optimization models and their components, namely objective functions and constraints. Finally, it discusses specific classes of optimization models: linear programming, network flow programming, and integer programming.
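
As a rough illustration of the two components named above, an optimization model is commonly written in the generic form below. The symbols ($x$ for the decision variables, $f$ for the objective function, $g_i$ and $b_i$ for the constraints, and $c$, $A$, $b$ in the linear case) are notation assumed here for the sketch, not taken from the document itself.

$$
\begin{aligned}
\text{maximize (or minimize)} \quad & f(x) \\
\text{subject to} \quad & g_i(x) \le b_i, \qquad i = 1, \dots, m.
\end{aligned}
$$

Under the same assumed notation, a linear program is the special case in which the objective and all constraints are linear:

$$
\begin{aligned}
\text{maximize} \quad & c^{\mathsf{T}} x \\
\text{subject to} \quad & A x \le b, \\
& x \ge 0.
\end{aligned}
$$

An integer program adds the requirement that some or all components of $x$ take integer values, and a network flow program is typically a linear program whose constraints express flow conservation at each node of a network.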