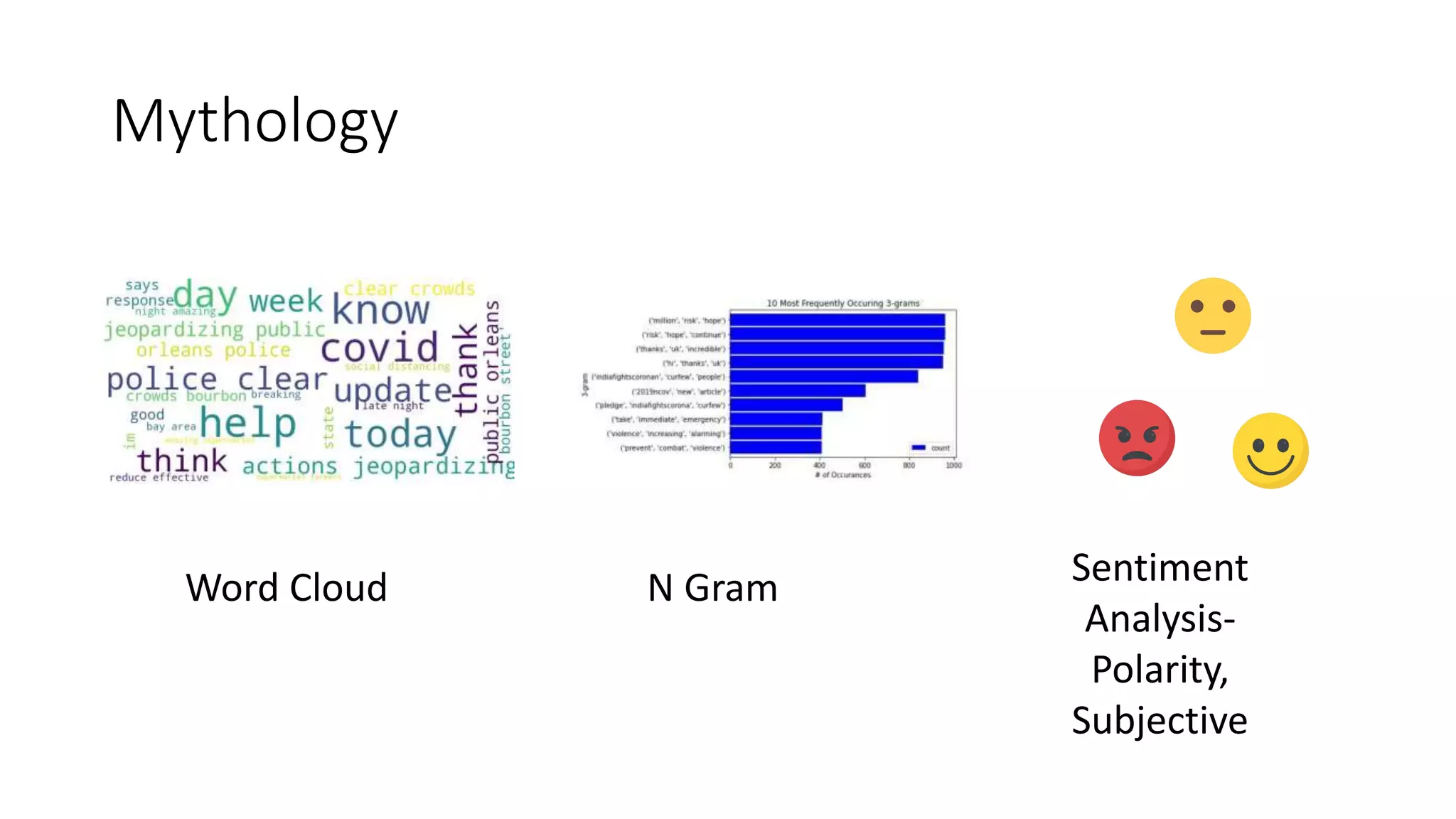

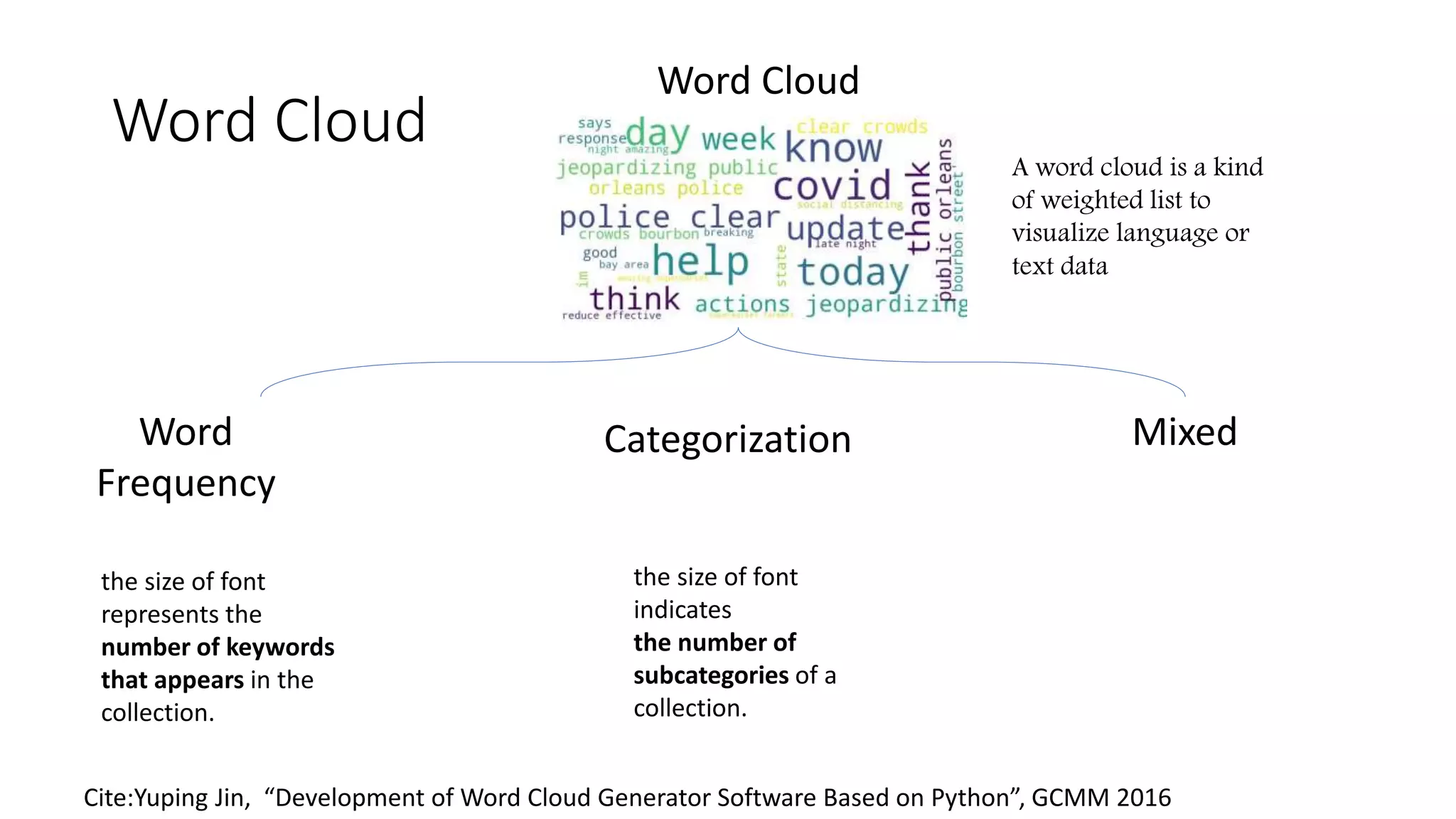

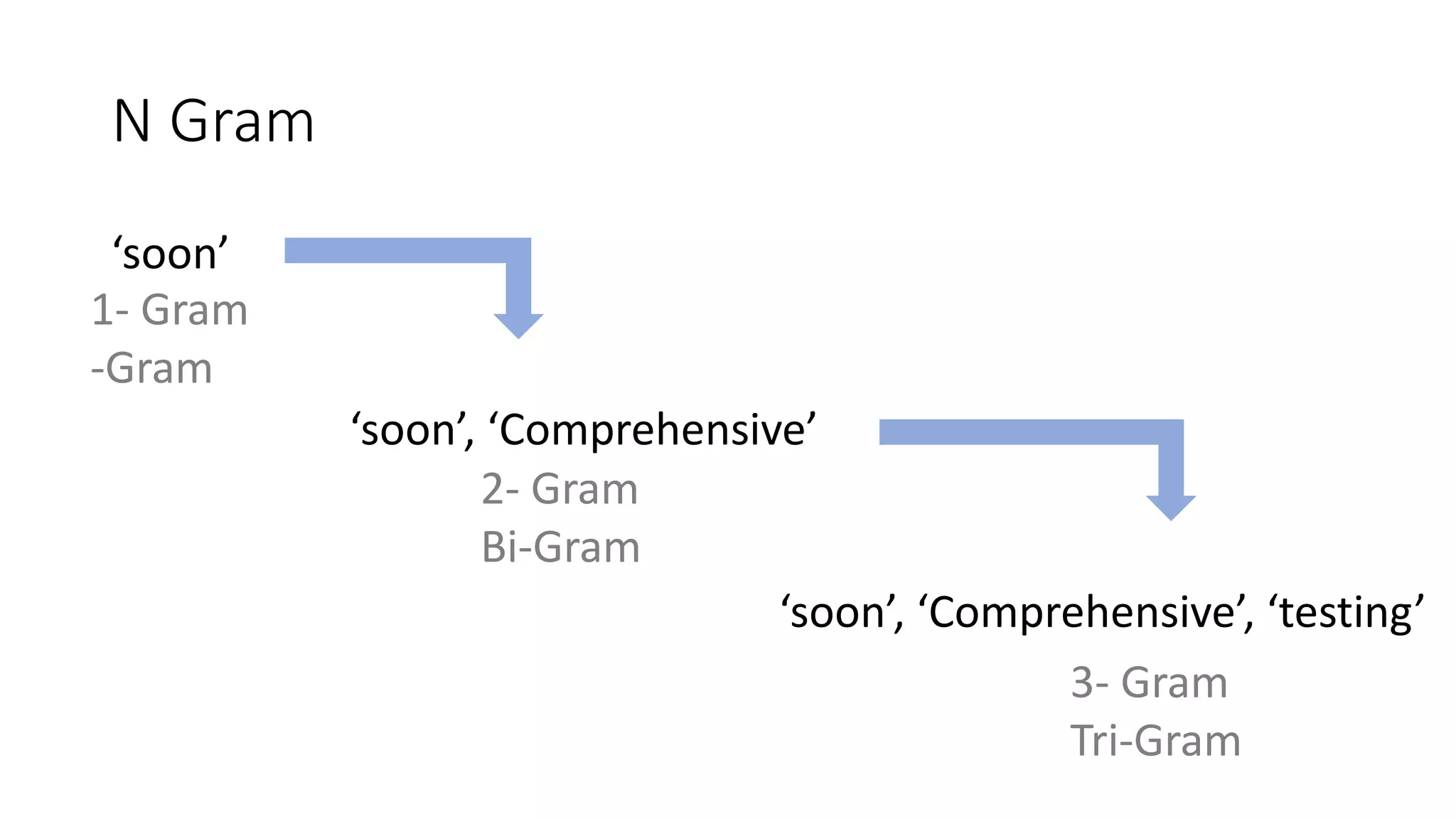

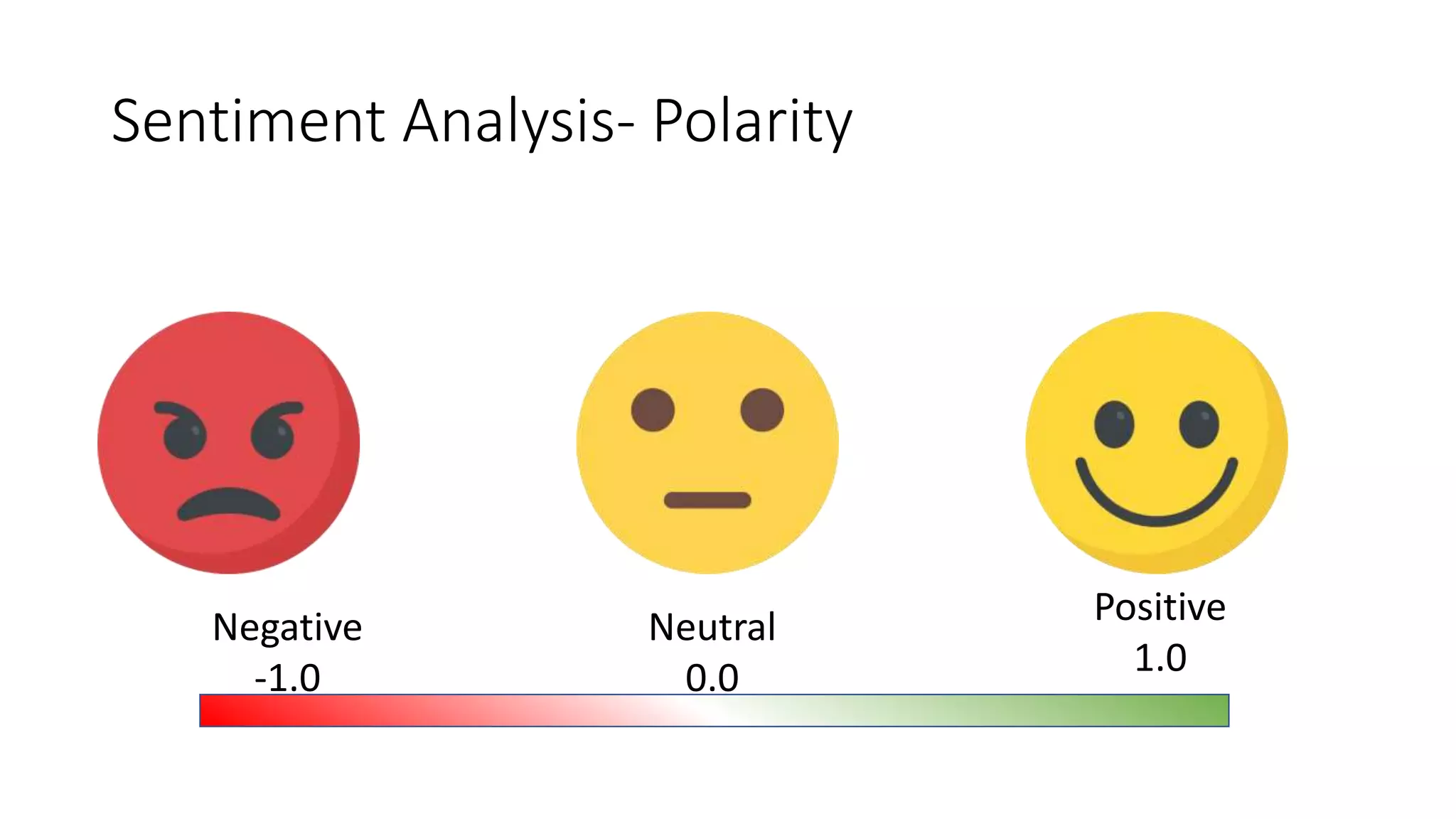

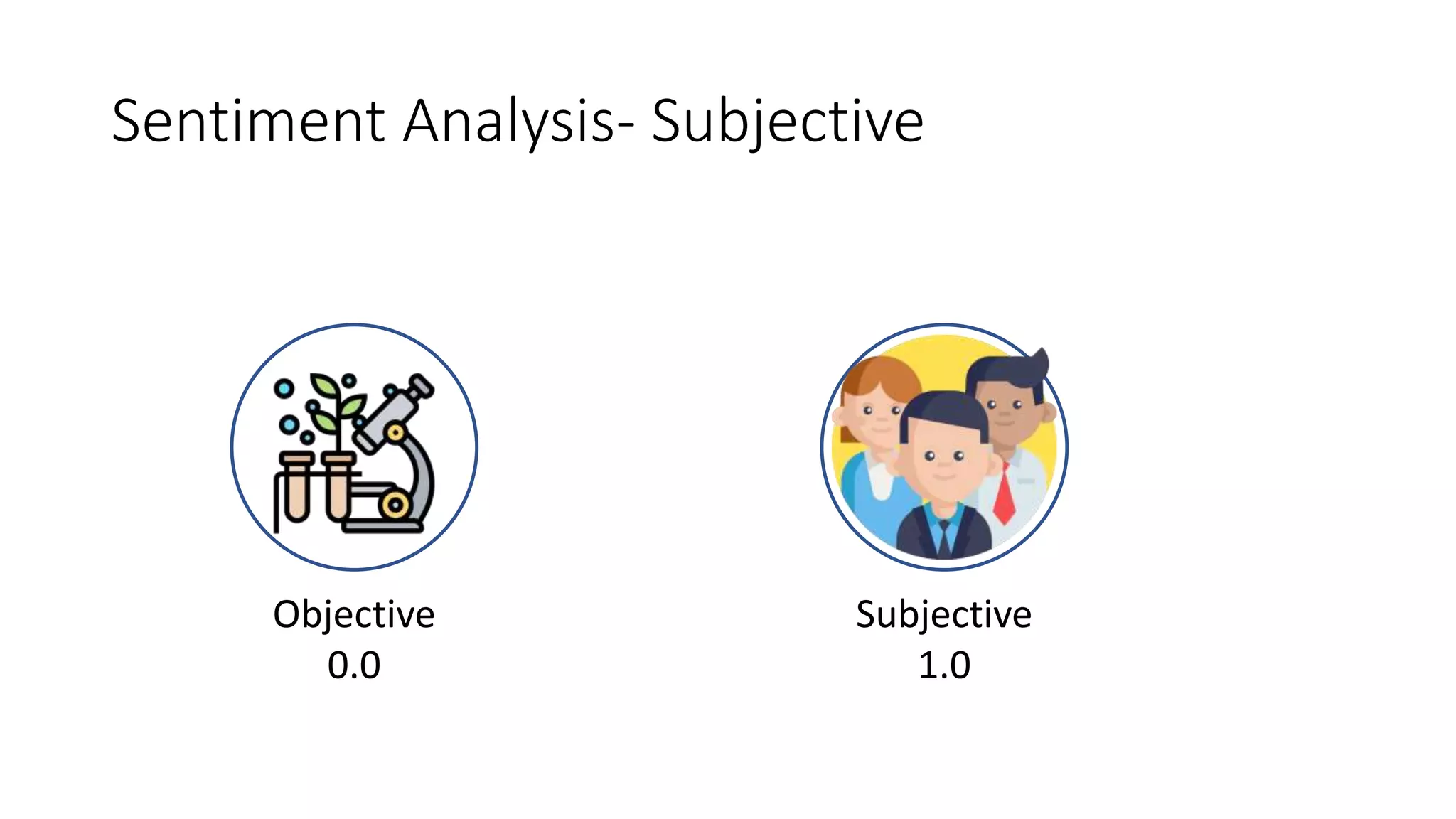

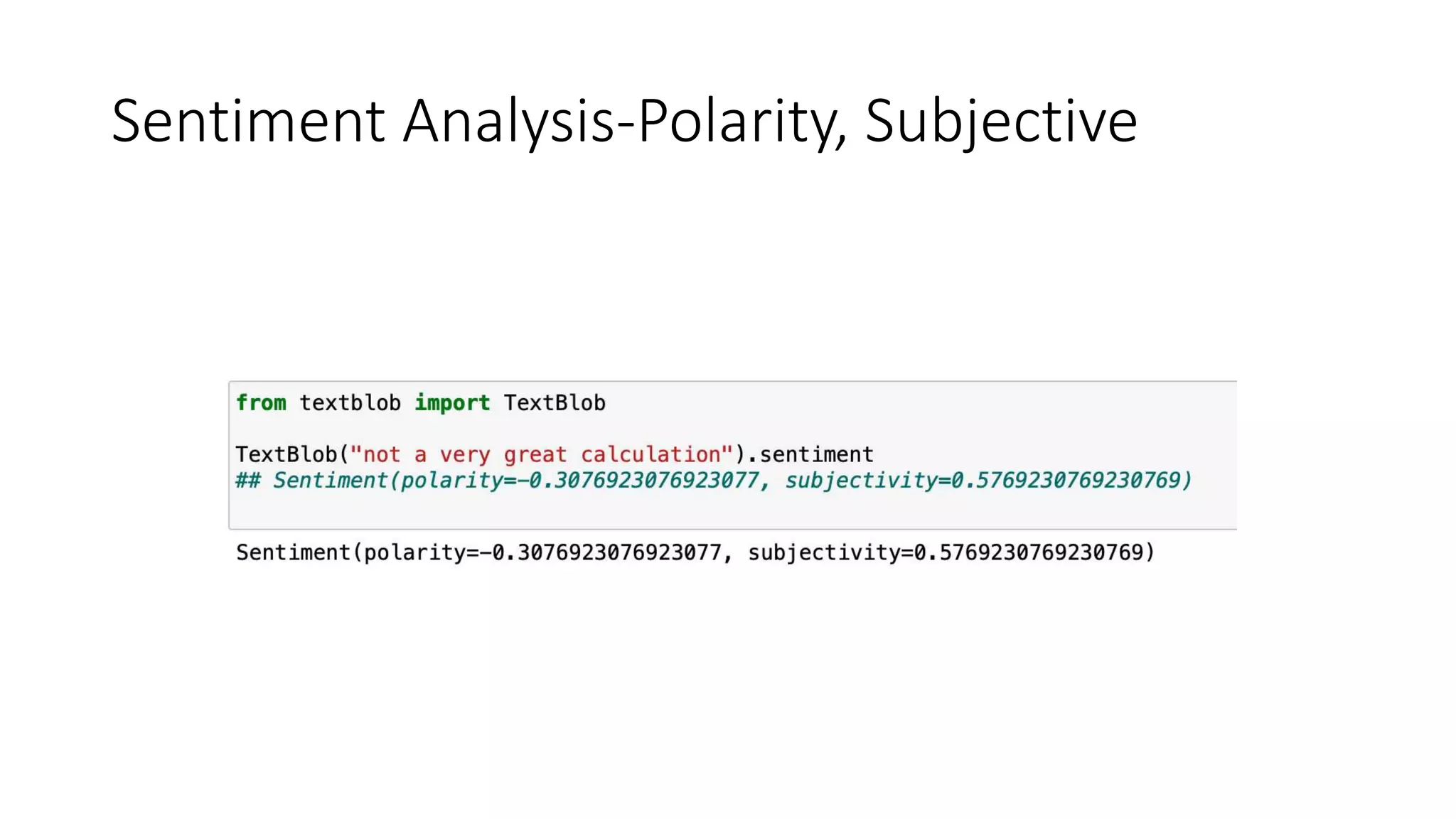

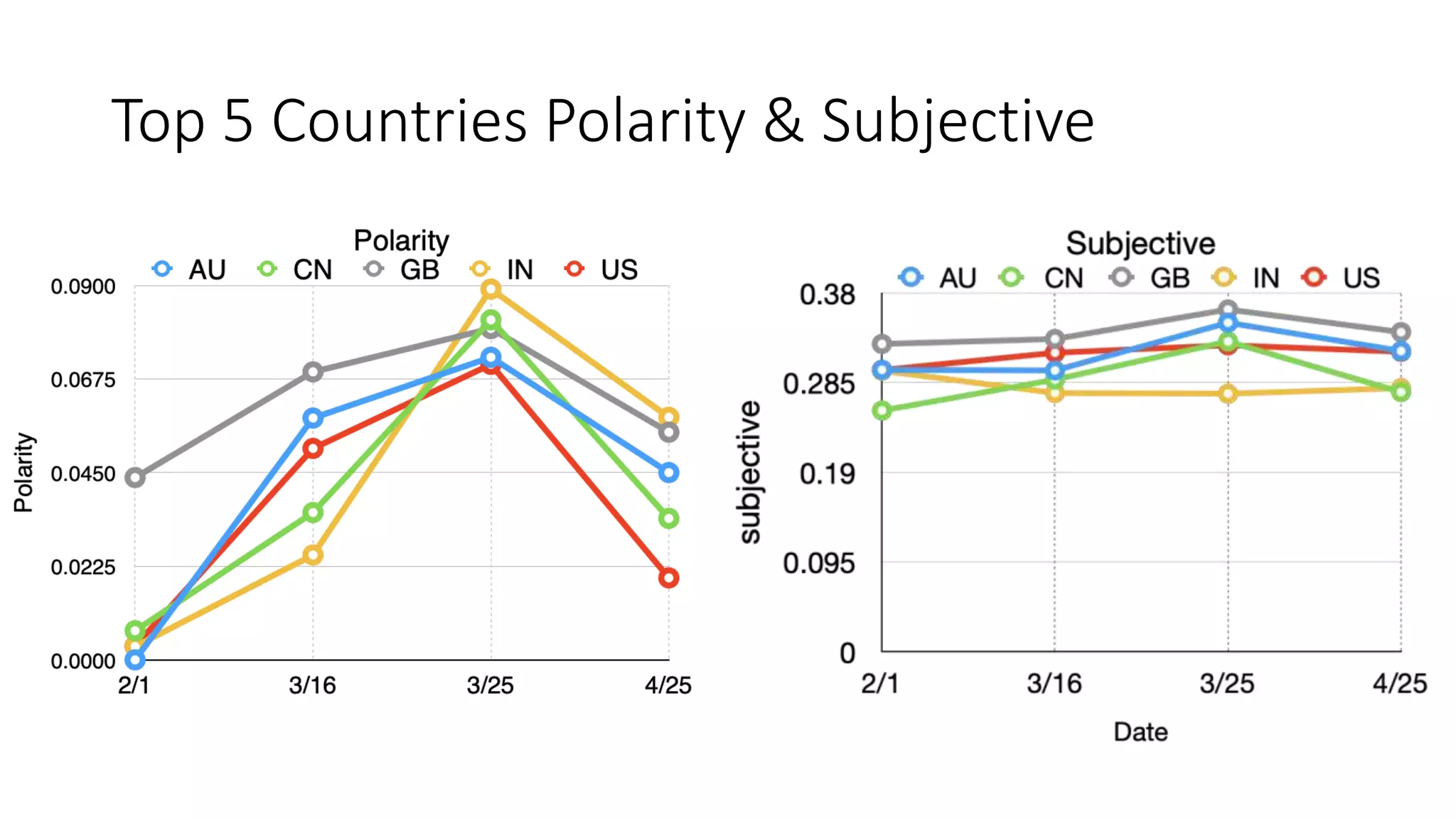

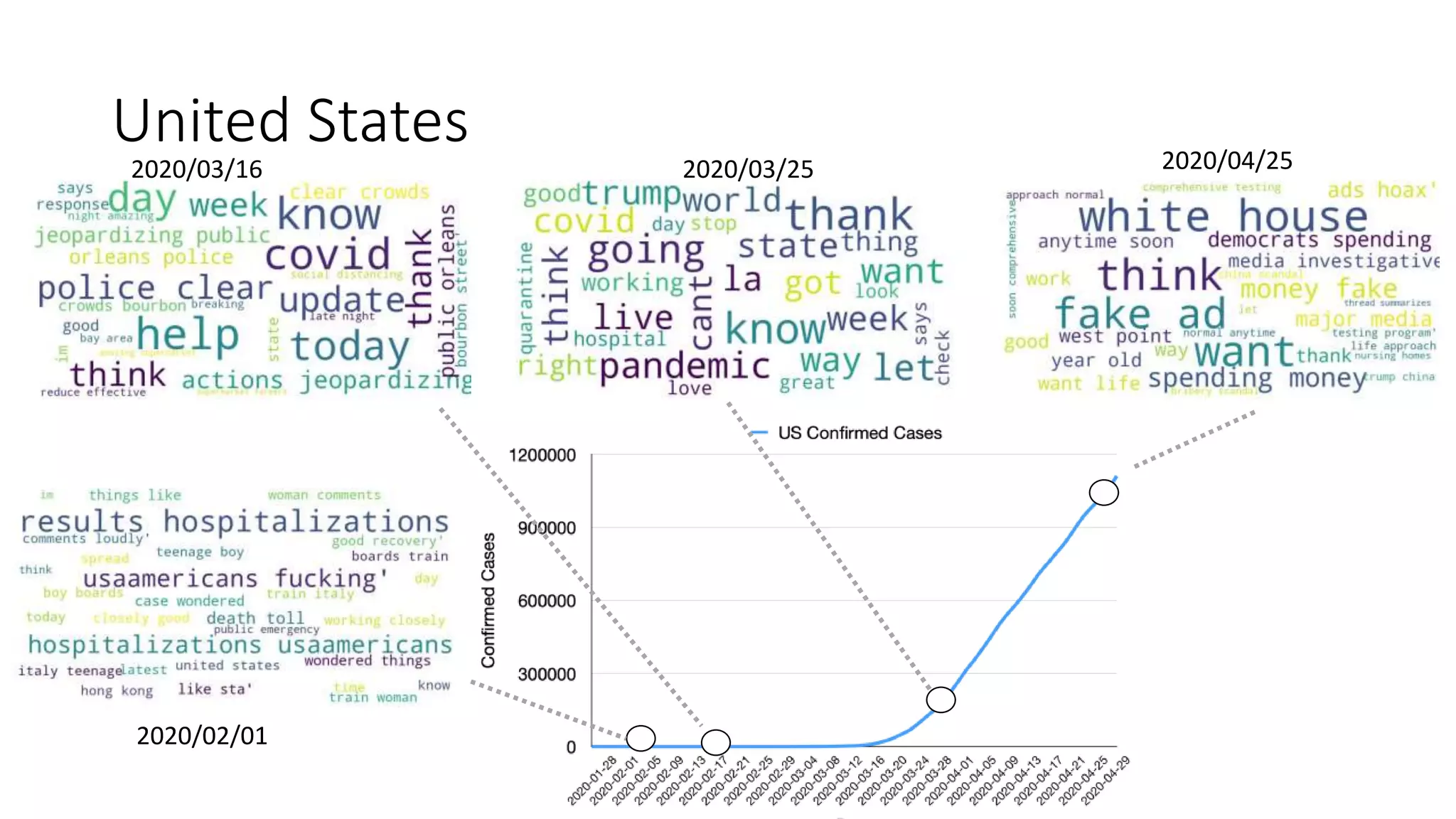

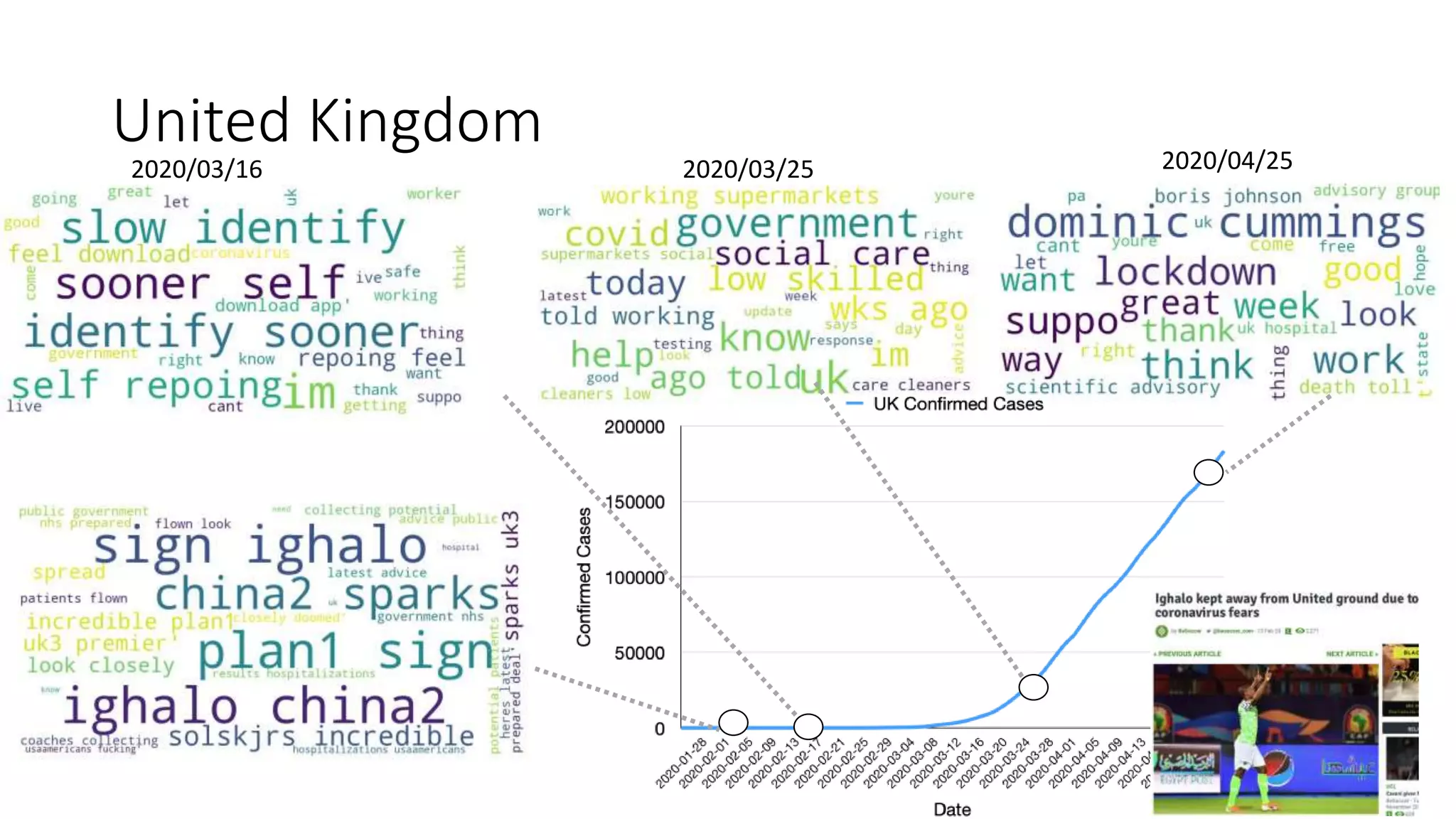

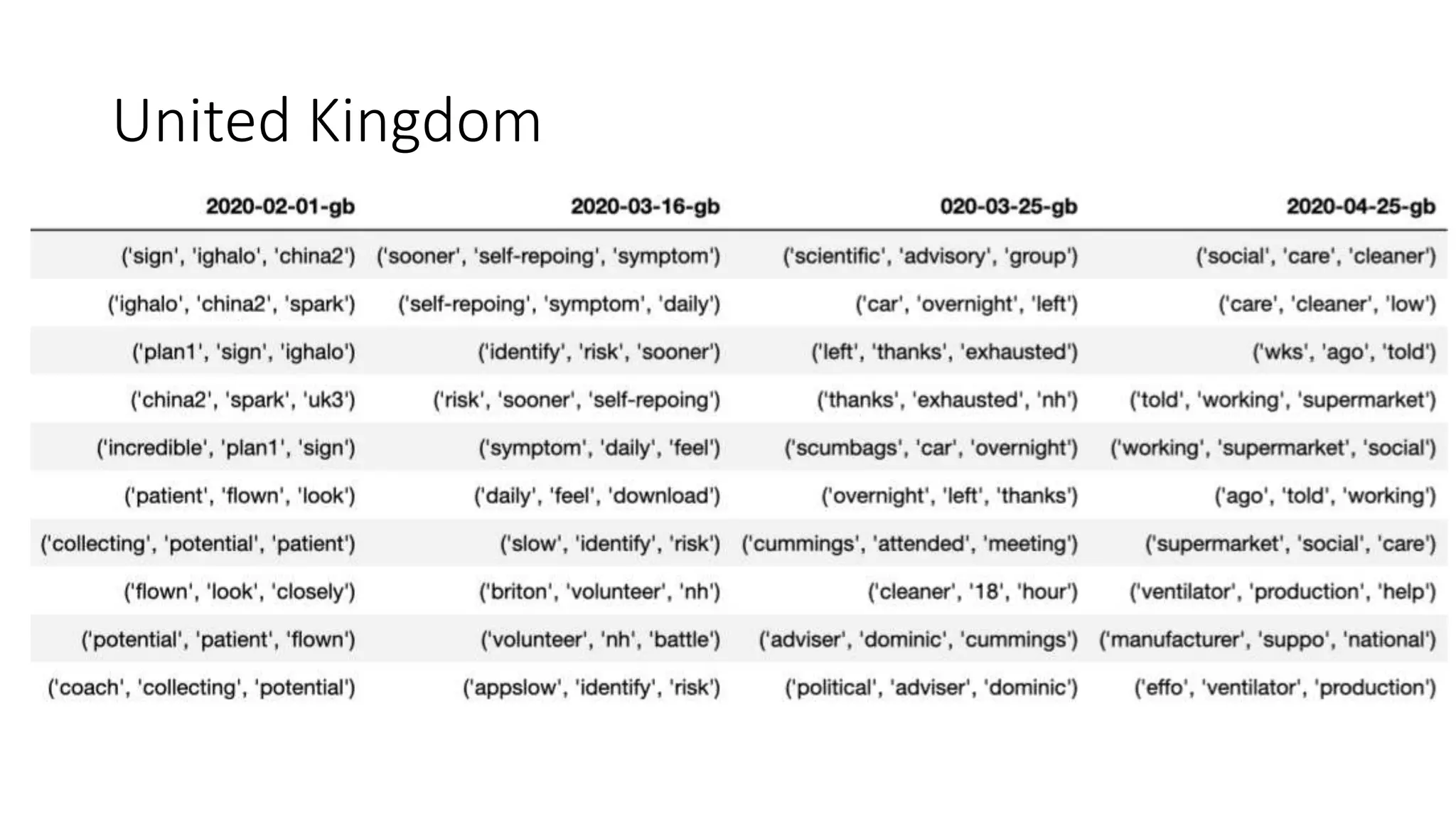

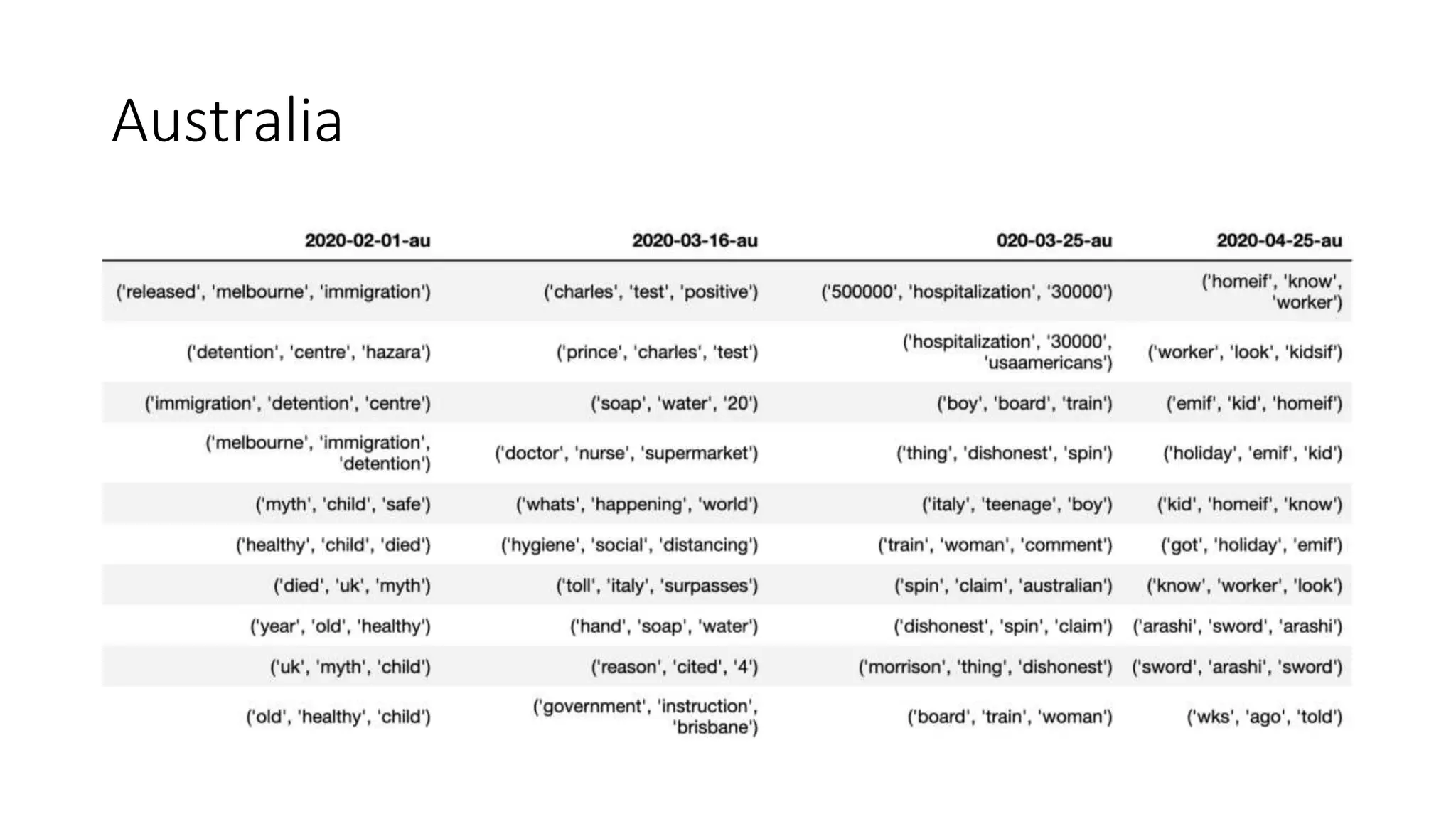

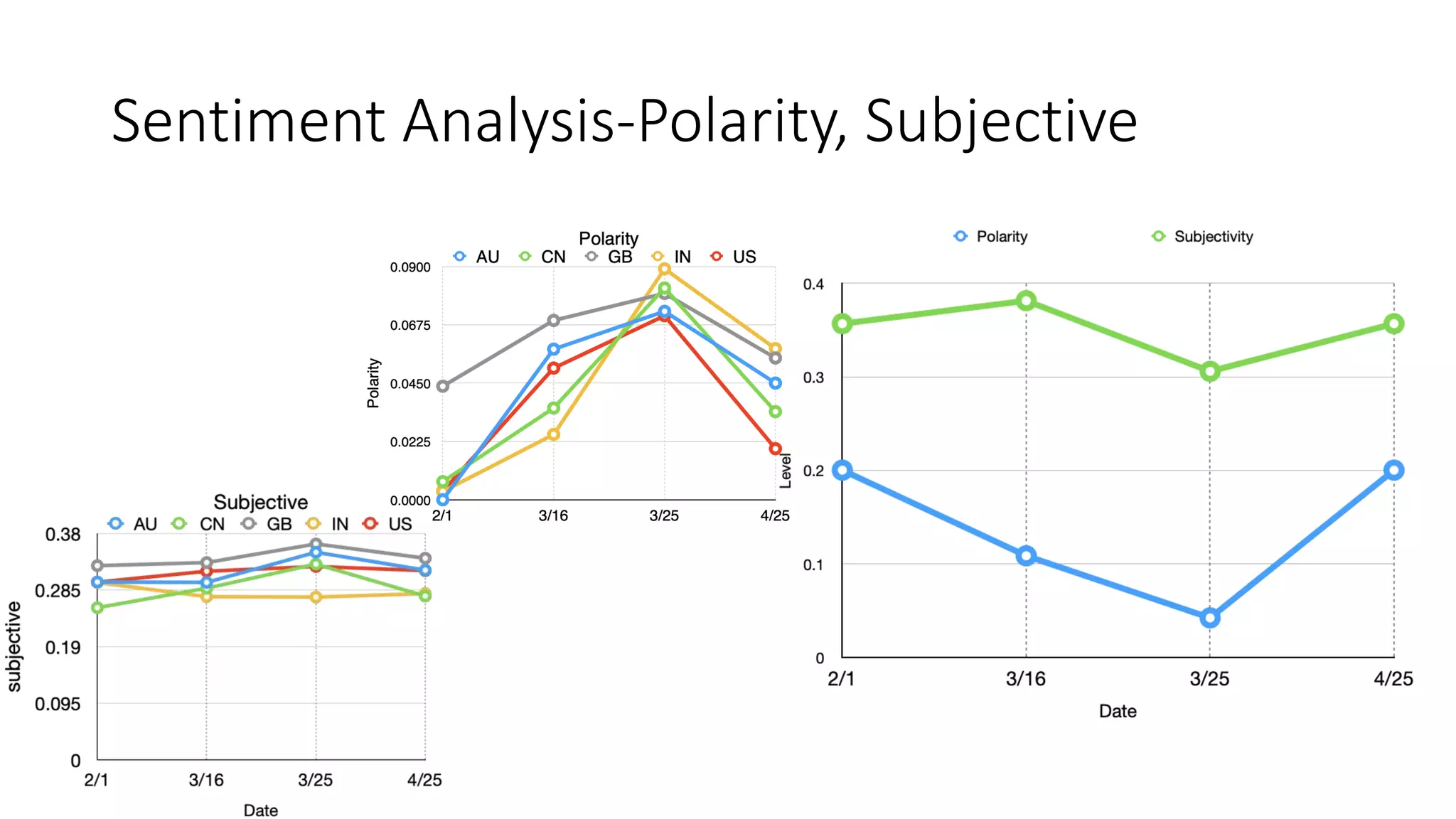

The document examines how Twitter users' locations correlate with their opinions on COVID-19, and describes the methods used for data acquisition from Twitter, data pre-processing, and sentiment analysis. It highlights the top five countries tweeting about COVID-19 from February to May (US, India, UK, China, Australia) and tracks sentiment polarity and subjectivity over time. It also briefly contrasts data collection from Twitter with data collection from Facebook and mentions tools for sentiment analysis.
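
To make the pre-processing and sentiment steps concrete, here is a minimal Python sketch of one way such a pipeline could look. It is not the method used in the slides: the tiny `LEXICON`, its scores, the cleaning rules in `preprocess`, and the function names are all hypothetical, chosen only to illustrate how polarity (negative to positive, in [-1, 1]) and subjectivity (objective to subjective, in [0, 1]) might be averaged per country.

```python
import re
from collections import defaultdict

# Hypothetical toy lexicon (an assumption for illustration only):
# word -> (polarity in [-1, 1], subjectivity in [0, 1]).
LEXICON = {
    "good": (0.7, 0.6),
    "safe": (0.5, 0.5),
    "hope": (0.5, 0.8),
    "bad": (-0.7, 0.7),
    "scared": (-0.6, 0.9),
    "deadly": (-0.8, 0.7),
}

URL_RE = re.compile(r"https?://\S+")
MENTION_RE = re.compile(r"@\w+")
NON_ALPHA_RE = re.compile(r"[^a-z\s]")


def preprocess(text):
    """Lowercase a tweet, drop URLs and @mentions, keep only letters, and tokenize."""
    text = text.lower()
    text = URL_RE.sub(" ", text)
    text = MENTION_RE.sub(" ", text)
    text = NON_ALPHA_RE.sub(" ", text)  # strips '#', digits, and punctuation
    return text.split()


def score(tokens):
    """Average the lexicon scores of matching tokens; (0.0, 0.0) if none match."""
    hits = [LEXICON[t] for t in tokens if t in LEXICON]
    if not hits:
        return 0.0, 0.0
    polarity = sum(p for p, _ in hits) / len(hits)
    subjectivity = sum(s for _, s in hits) / len(hits)
    return polarity, subjectivity


def mean_sentiment_by_country(tweets):
    """Given (country, text) pairs, return country -> (mean polarity, mean subjectivity)."""
    grouped = defaultdict(list)
    for country, text in tweets:
        grouped[country].append(score(preprocess(text)))
    return {
        country: (
            sum(p for p, _ in scores) / len(scores),
            sum(s for _, s in scores) / len(scores),
        )
        for country, scores in grouped.items()
    }


if __name__ == "__main__":
    # Made-up sample tweets, not data from the document.
    sample = [
        ("US", "Feeling scared, this is bad https://t.co/abc"),
        ("US", "Stay safe! @who"),
        ("India", "Case counts were published today"),
    ]
    for country, (pol, subj) in mean_sentiment_by_country(sample).items():
        print(f"{country}: polarity={pol:.3f}, subjectivity={subj:.3f}")
```

To follow sentiment over time, the same grouping could be keyed on a (country, month) pair instead of country alone. In practice a full sentiment lexicon or an existing sentiment library would replace the toy dictionary.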