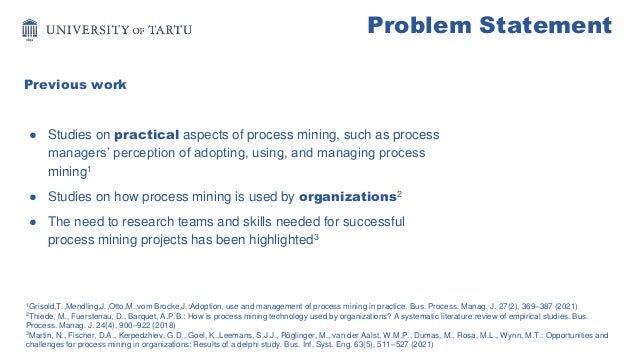

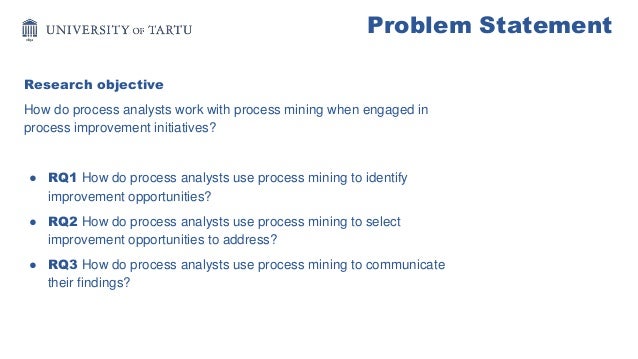

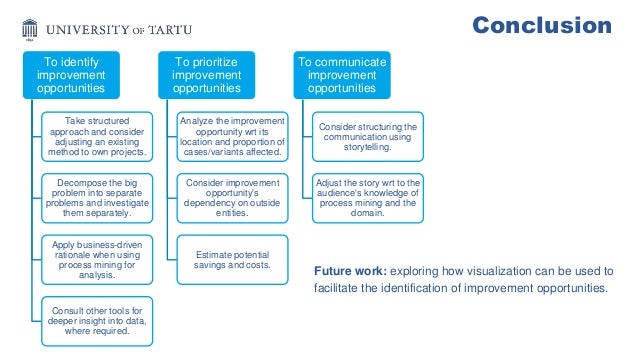

The document evaluates process mining practices in process improvement initiatives, focusing on how process analysts use process mining tools to identify, select, and communicate improvement opportunities. It reports findings from semi-structured interviews with participants across various industries, highlighting structured approaches, the use of visualizations, and storytelling in communication. The conclusion emphasizes the need for process mining tools to enhance their visualizations and for new research directions that better assist analysts.