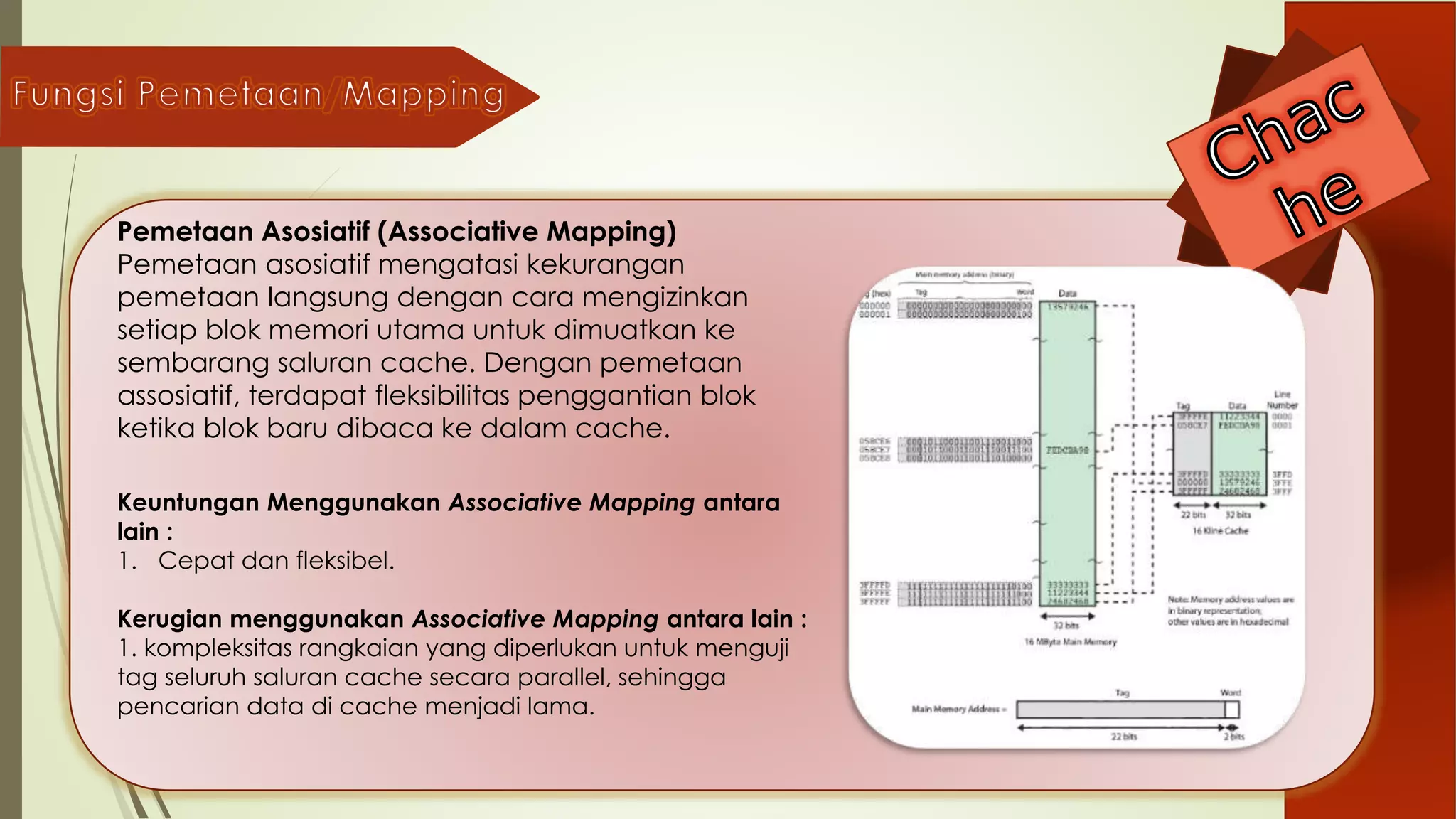

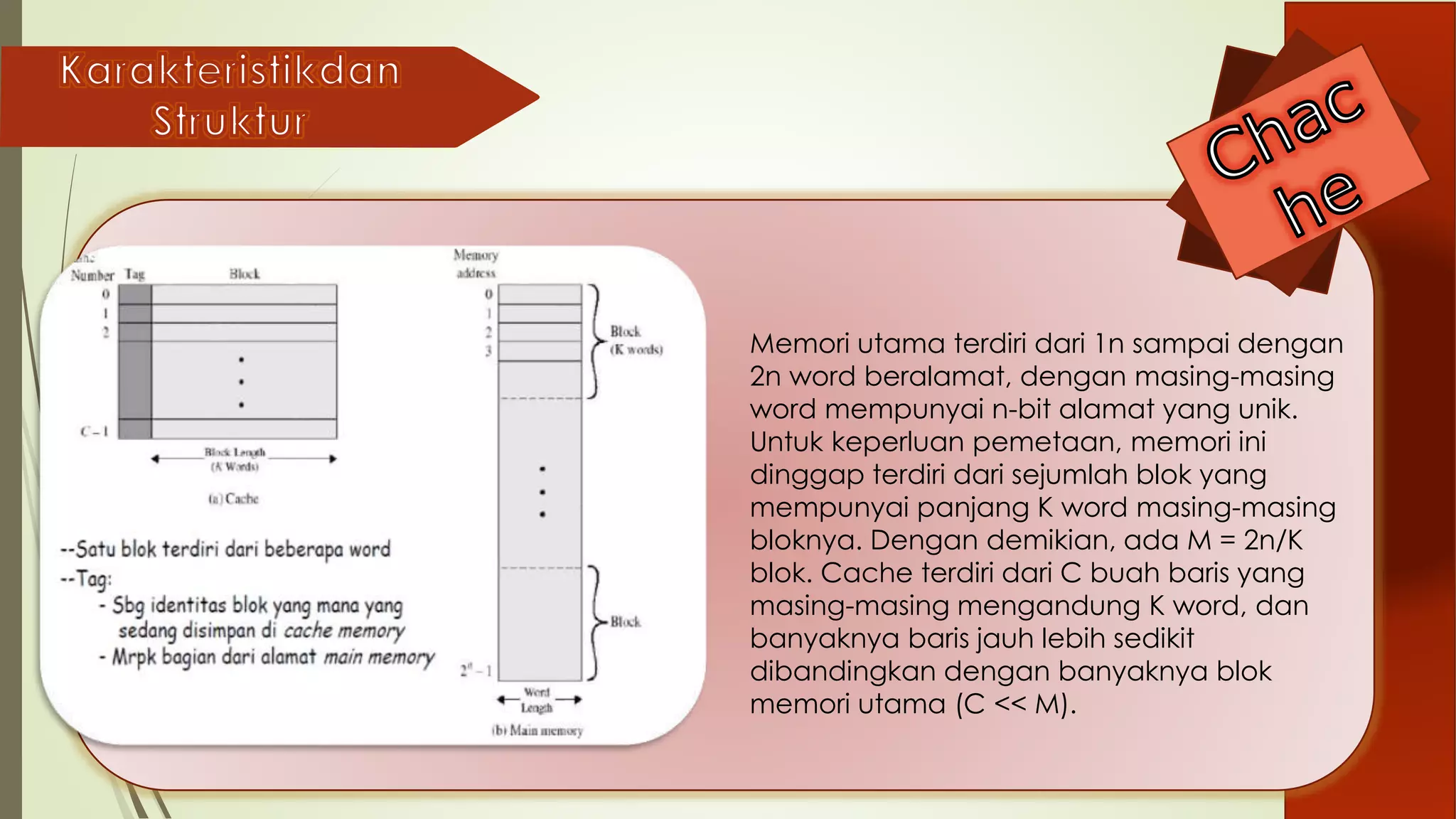

This document discusses the function and importance of cache memory in improving processor efficiency by reducing data access latency. It also describes several cache mapping techniques, namely direct mapping, associative mapping, and set-associative mapping, each with its own advantages and drawbacks. Finally, it covers the optimal cache size for supporting overall computer performance.
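The three mapping techniques differ mainly in how many cache lines a given memory block is allowed to occupy. The Python sketch below is an illustration only, not taken from the slides: the function name `split_address` and the parameters `block_size`, `num_lines`, and `ways` are hypothetical, and it assumes byte addressing with `num_lines` evenly divisible by `ways`.

```python
def split_address(address, block_size, num_lines, ways):
    """Split a byte address into (tag, set_index, block_offset).

    ways == 1          -> direct mapping (one line per set)
    ways == num_lines  -> fully associative mapping (a single set)
    anything between   -> set-associative mapping
    """
    # Assumption: num_lines is an exact multiple of ways.
    num_sets = num_lines // ways
    # The low part of the address selects a byte inside the block.
    block_number, block_offset = divmod(address, block_size)
    # The block number is split into the set it maps to and the tag
    # that must be compared to detect a hit.
    tag, set_index = divmod(block_number, num_sets)
    return tag, set_index, block_offset


if __name__ == "__main__":
    address = 0x1234  # arbitrary example address
    schemes = [("direct", 1), ("4-way set-associative", 4), ("fully associative", 64)]
    for label, ways in schemes:
        tag, set_index, offset = split_address(
            address, block_size=16, num_lines=64, ways=ways
        )
        print(f"{label}: tag={tag}, set={set_index}, offset={offset}")
```

With direct mapping the set index pins the block to exactly one line, so lookup is cheap but two blocks with the same index evict each other. With fully associative mapping the set index is always 0 and the whole block number becomes the tag, so any line can hold the block at the cost of comparing every tag. Set-associative mapping sits between the two.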

![A larger cache memory can help improve processor performance, at least by shortening the time needed to access data. Choosing the cache size is very important for boosting computer performance. Cache is far more expensive than main memory. A larger cache is also not necessarily faster: a large cache needs more addressing gates, and those extra gates slow access down. So what is the ideal cache capacity? A number of studies have suggested that cache sizes between 1 KB and 512 KB are closest to optimal [STA96].](https://image.slidesharecdn.com/cache-151026023653-lva1-app6891/75/Presentasi-Cache-Memori-6-2048.jpg)
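The size trade-off can be made concrete with the standard average memory access time relation, hit time plus miss rate times miss penalty. The sketch below is a rough illustration under stated assumptions: every number in `CONFIGS` and `MISS_PENALTY_NS` is invented for the example and comes neither from the slides nor from [STA96], and the helper names are hypothetical.

```python
def average_access_time(hit_time_ns, miss_rate, miss_penalty_ns):
    """Average memory access time = hit time + miss rate * miss penalty."""
    return hit_time_ns + miss_rate * miss_penalty_ns


# Hypothetical configurations: (hit time in ns, miss rate).
# Assumption: a bigger cache misses less often but takes longer per hit,
# because of the extra addressing logic.
CONFIGS = {
    "small": (1.0, 0.10),
    "medium": (1.5, 0.03),
    "large": (4.0, 0.02),
}
MISS_PENALTY_NS = 50.0  # hypothetical cost of going to main memory


def best_config(configs, miss_penalty_ns):
    """Return the name of the configuration with the lowest average access time."""
    return min(
        configs,
        key=lambda name: average_access_time(*configs[name], miss_penalty_ns),
    )


if __name__ == "__main__":
    for name, (hit_time, miss_rate) in CONFIGS.items():
        amat = average_access_time(hit_time, miss_rate, MISS_PENALTY_NS)
        print(f"{name}: {amat:.2f} ns")
    print("lowest:", best_config(CONFIGS, MISS_PENALTY_NS))
```

With these made-up numbers the mid-sized configuration comes out lowest. Once the miss rate is already small, the slower hit time of the largest cache outweighs the few additional misses it avoids.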