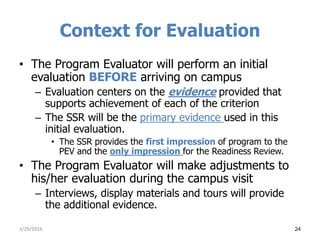

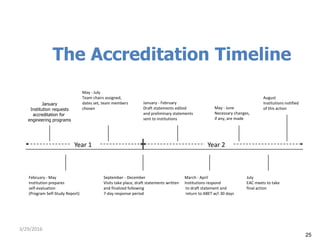

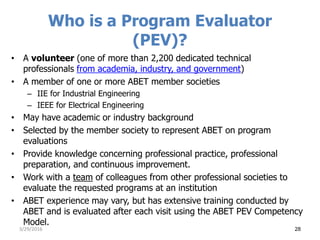

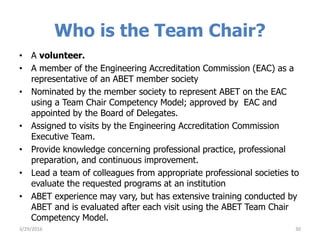

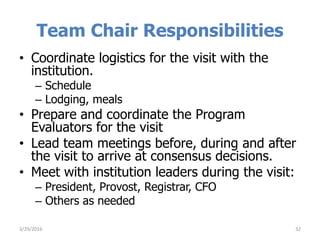

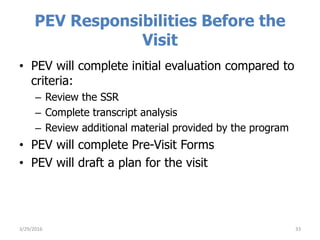

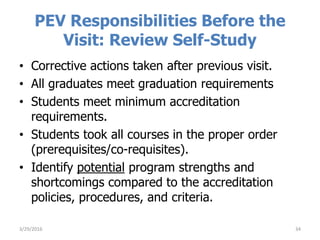

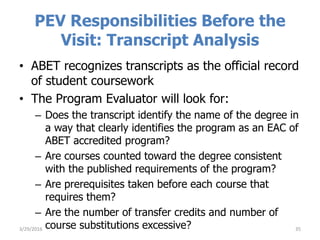

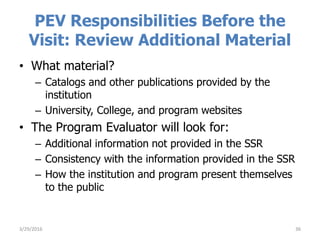

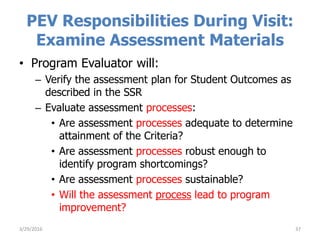

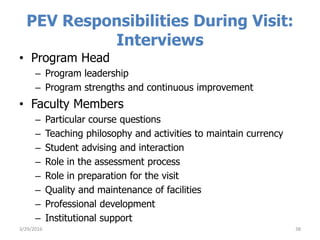

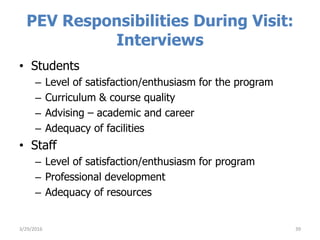

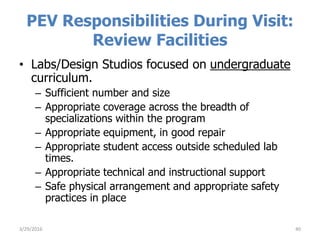

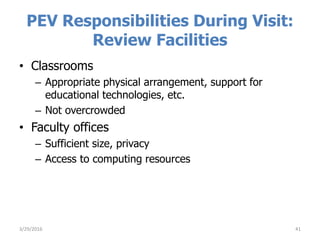

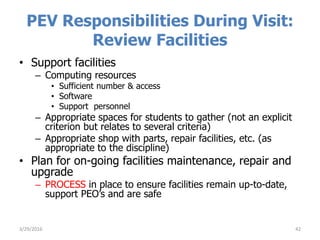

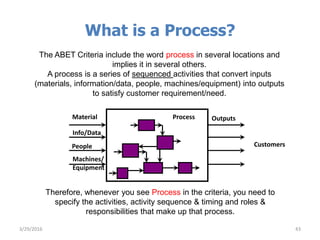

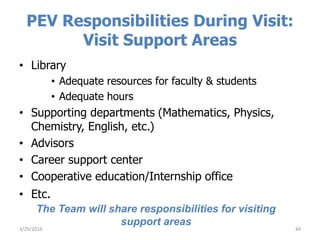

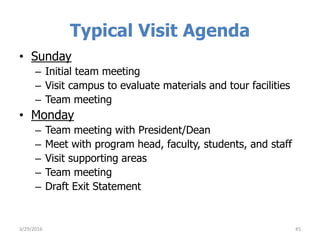

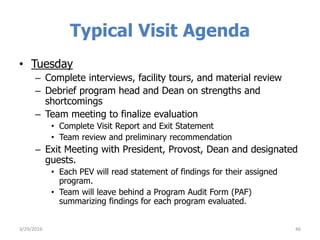

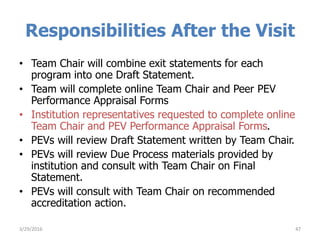

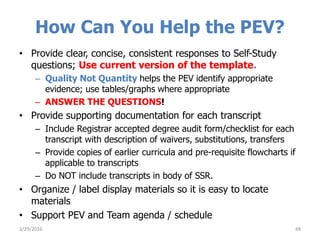

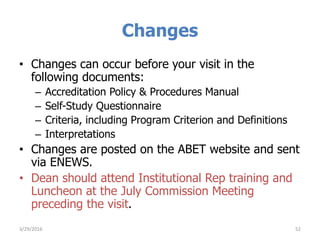

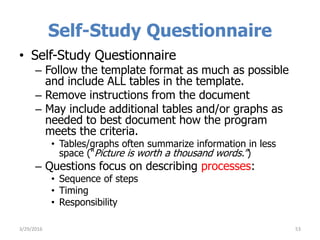

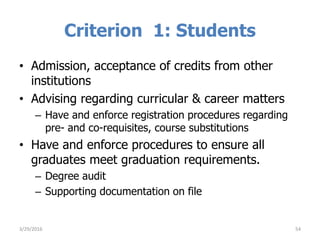

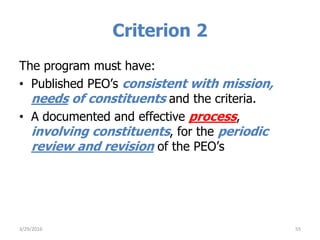

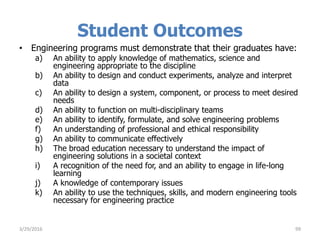

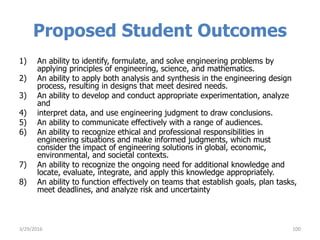

This document explains how to prepare for an ABET accreditation evaluation visit. It describes what ABET is and its purpose in accrediting engineering programs, then outlines the accreditation timeline and the responsibilities of the program evaluator and team chair before, during, and after the visit. These responsibilities include reviewing the self-study report and conducting interviews and facility tours to evaluate how the program meets ABET's criteria. The typical visit agenda spans two days and includes initial team meetings and meetings with campus administrators.