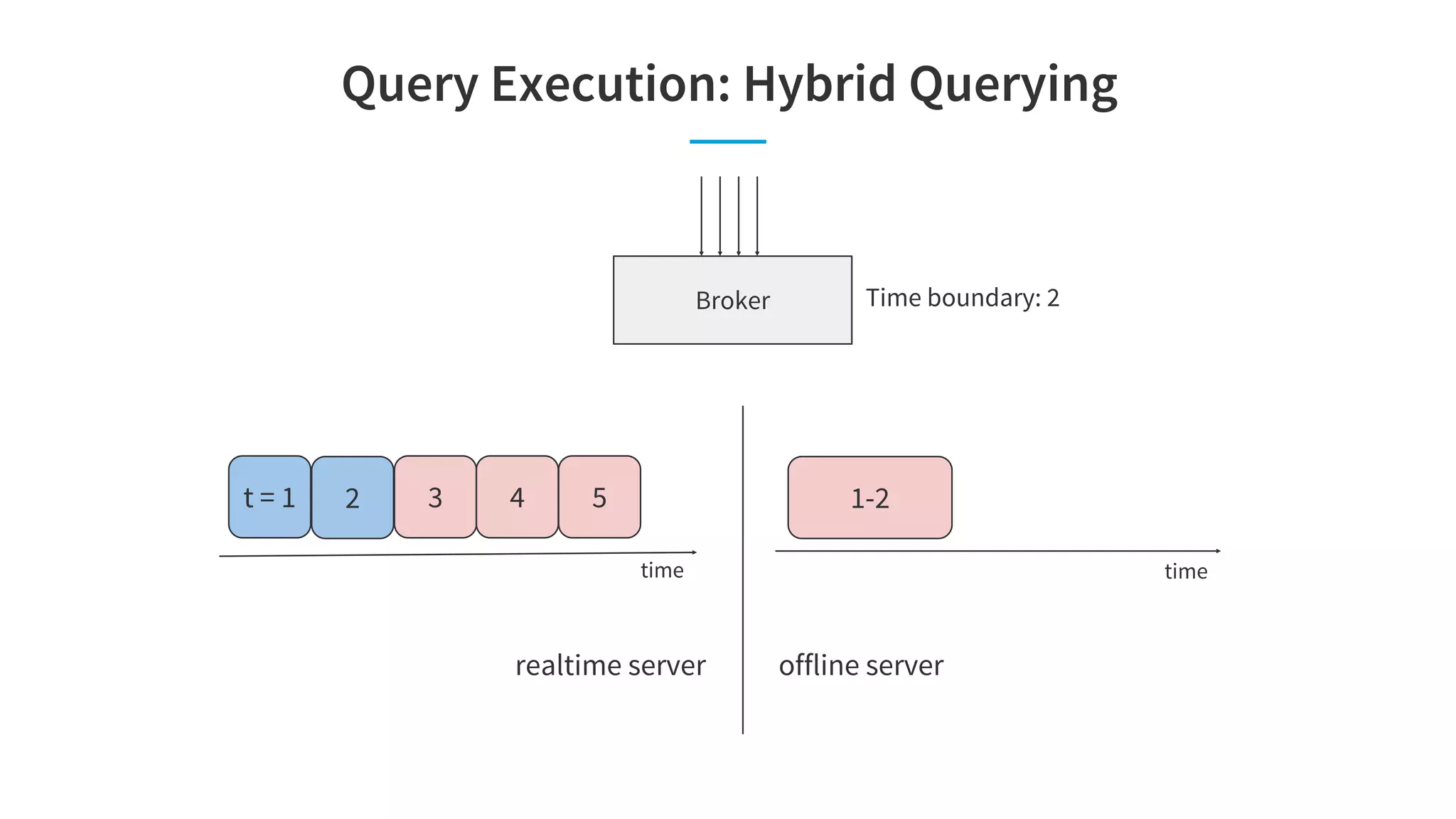

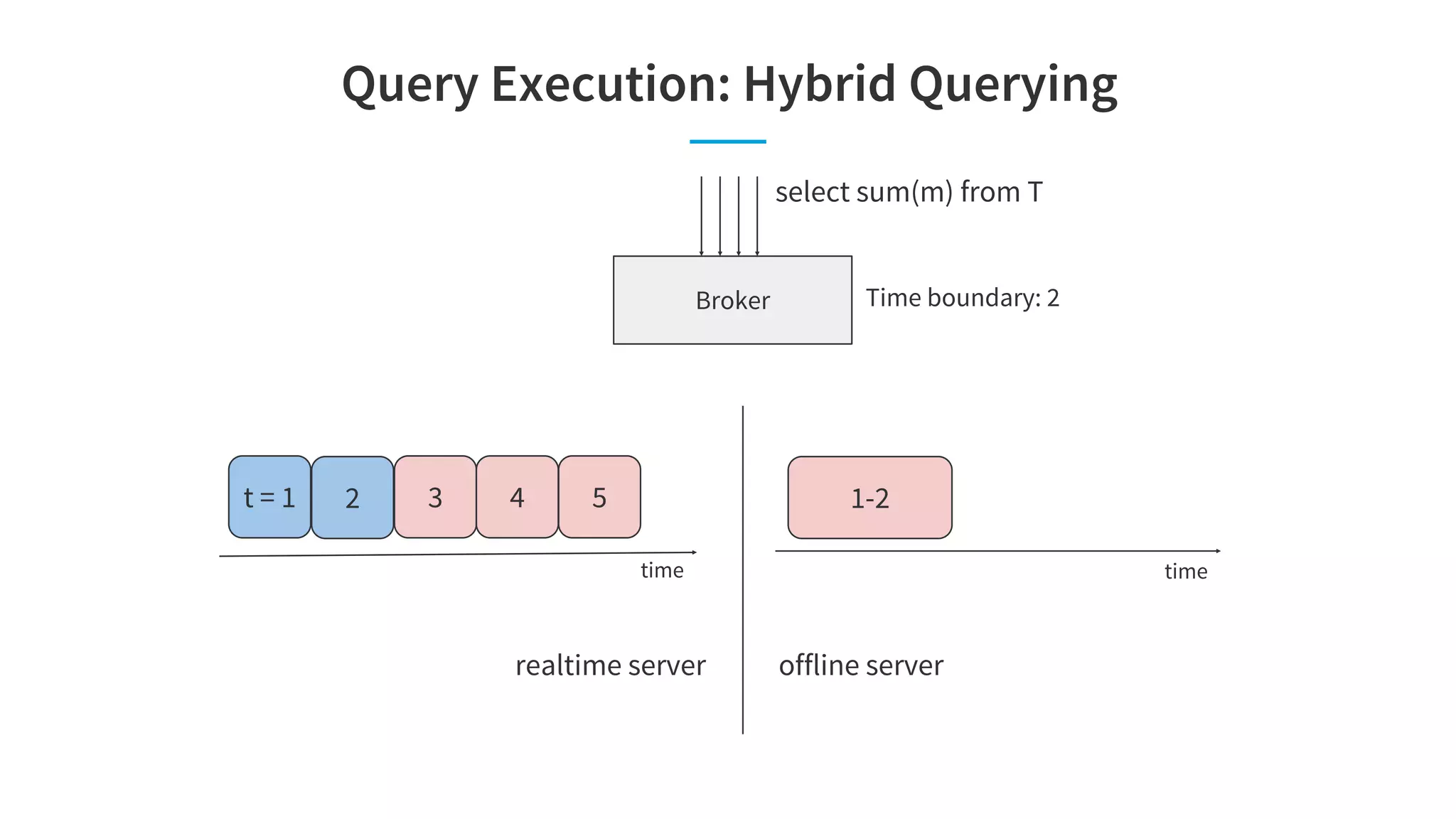

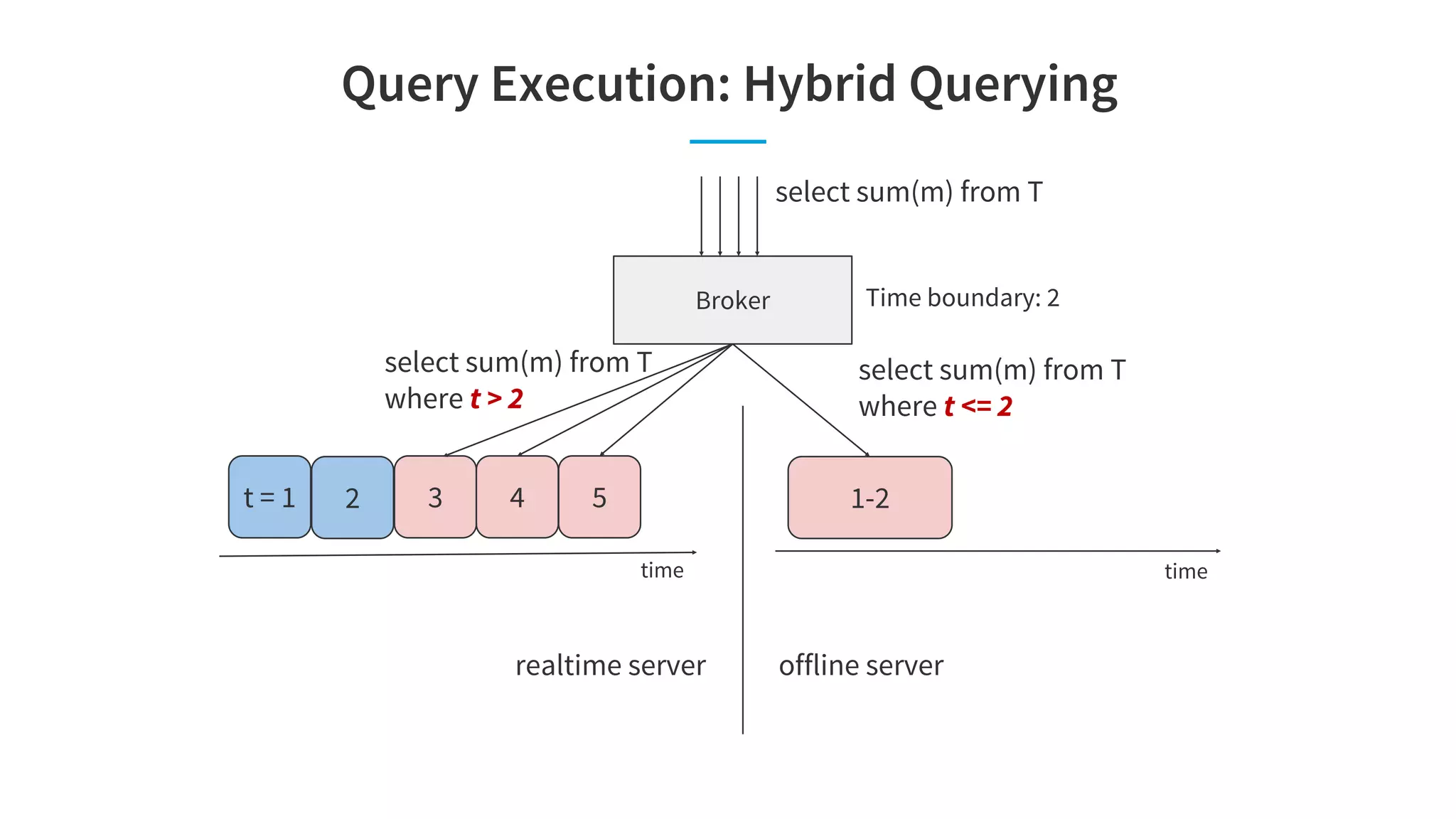

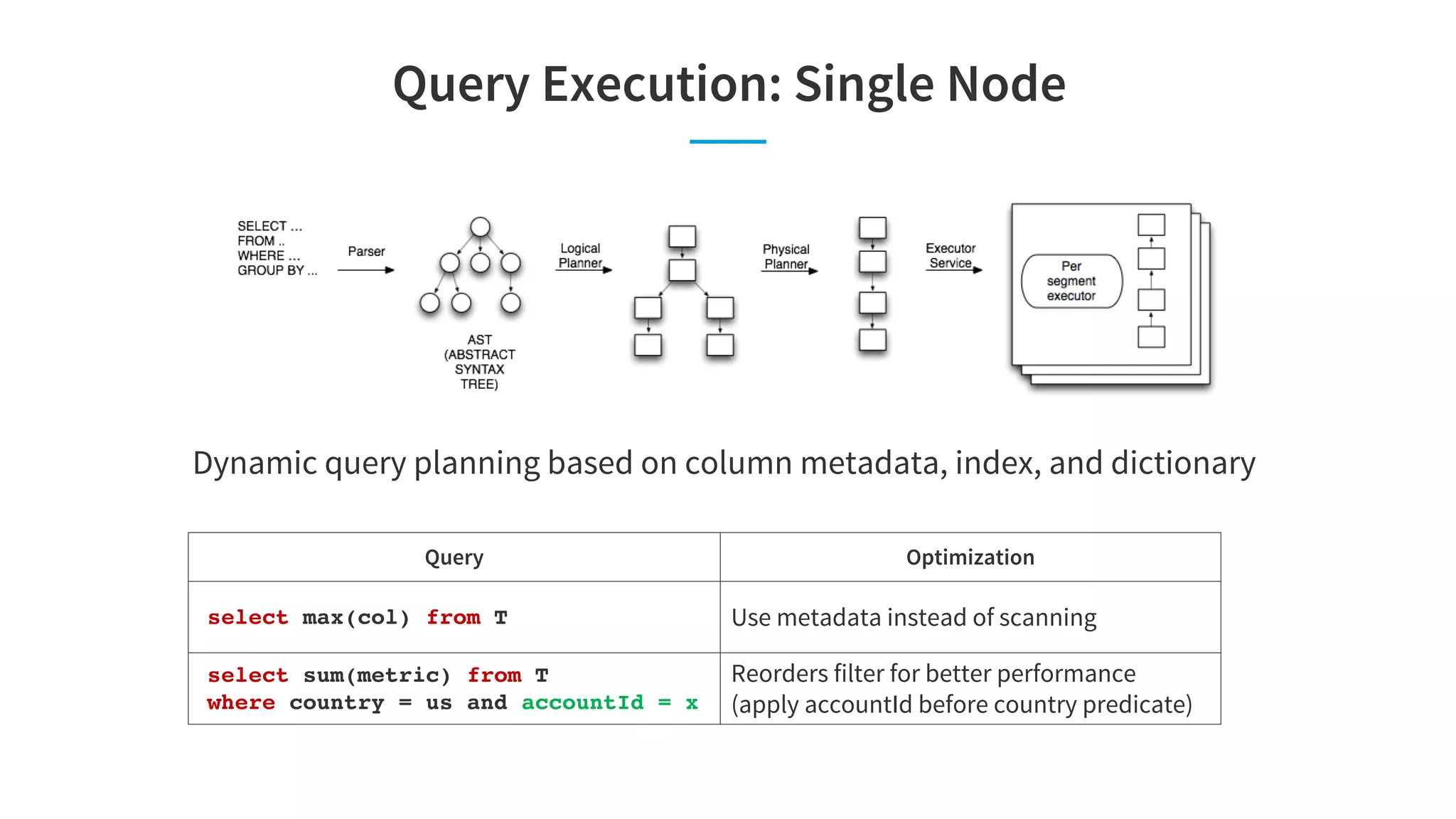

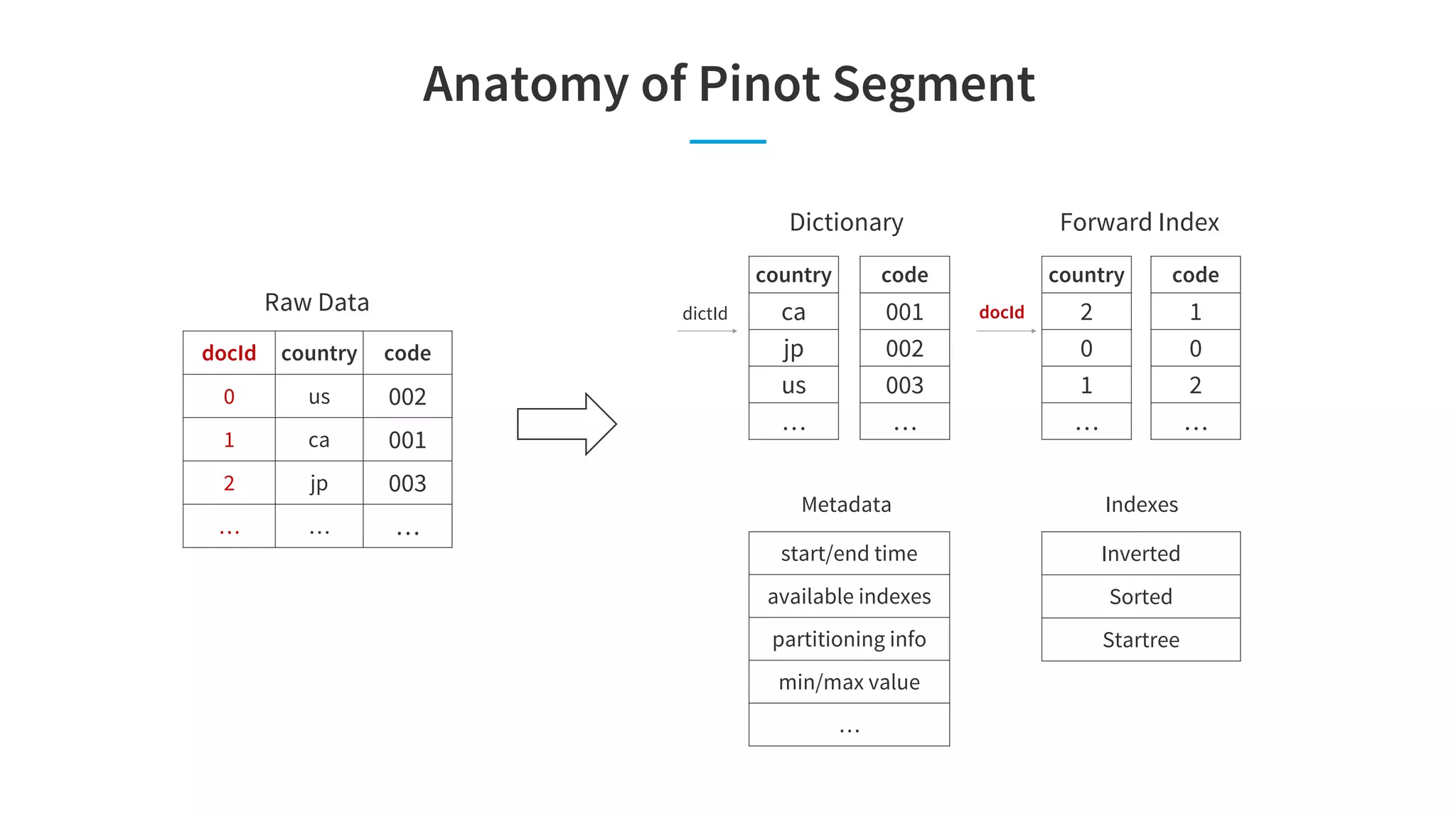

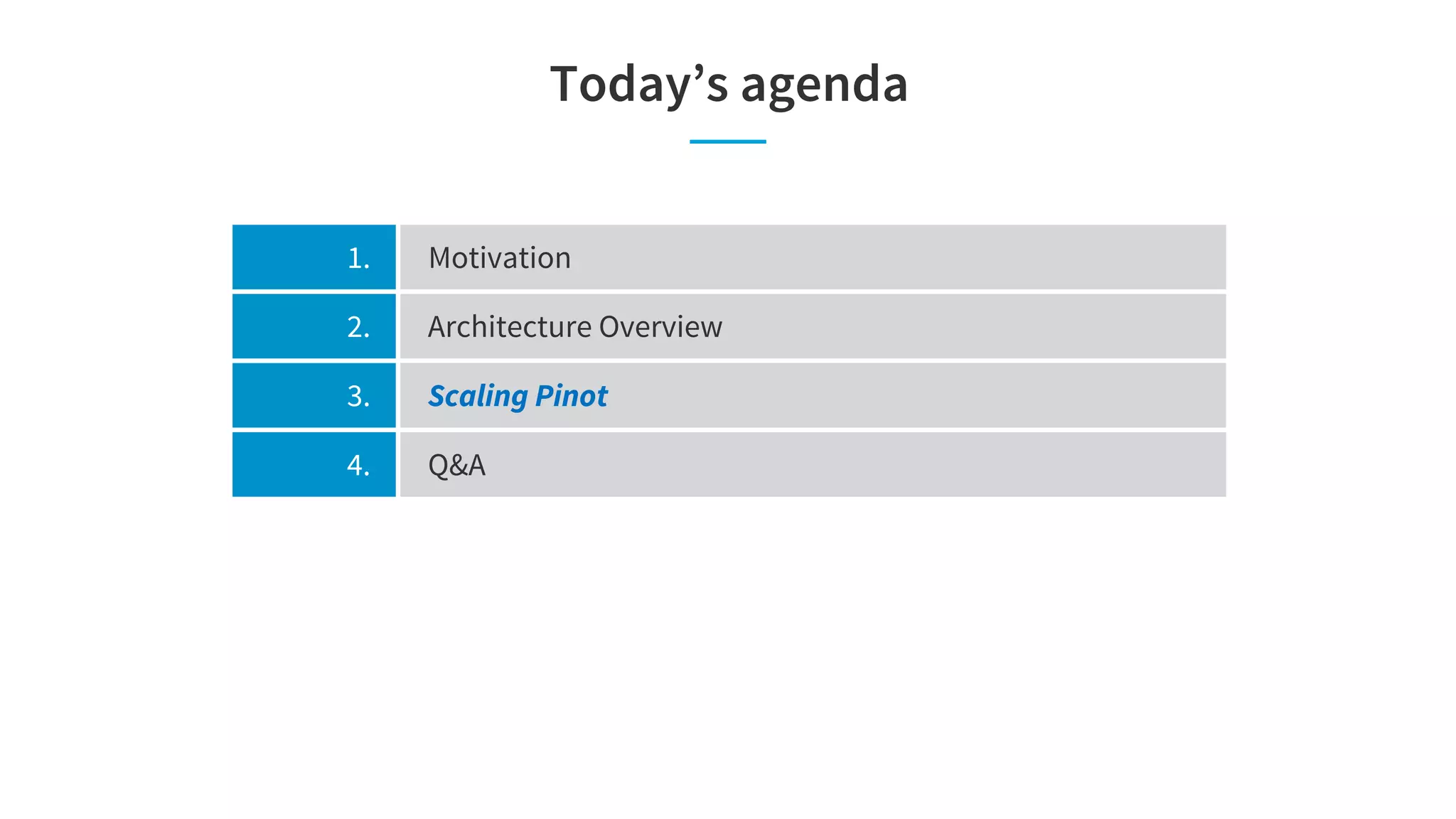

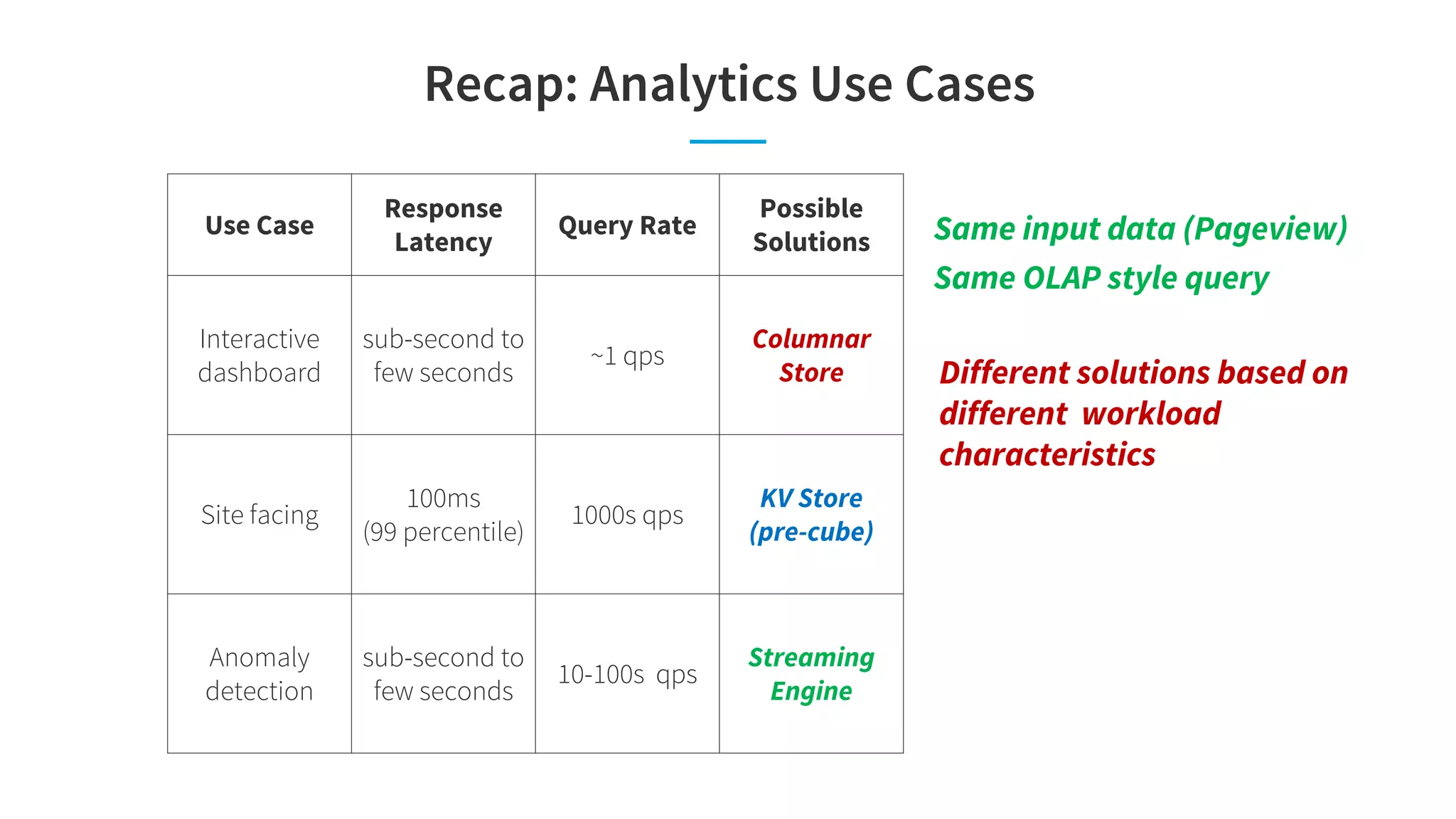

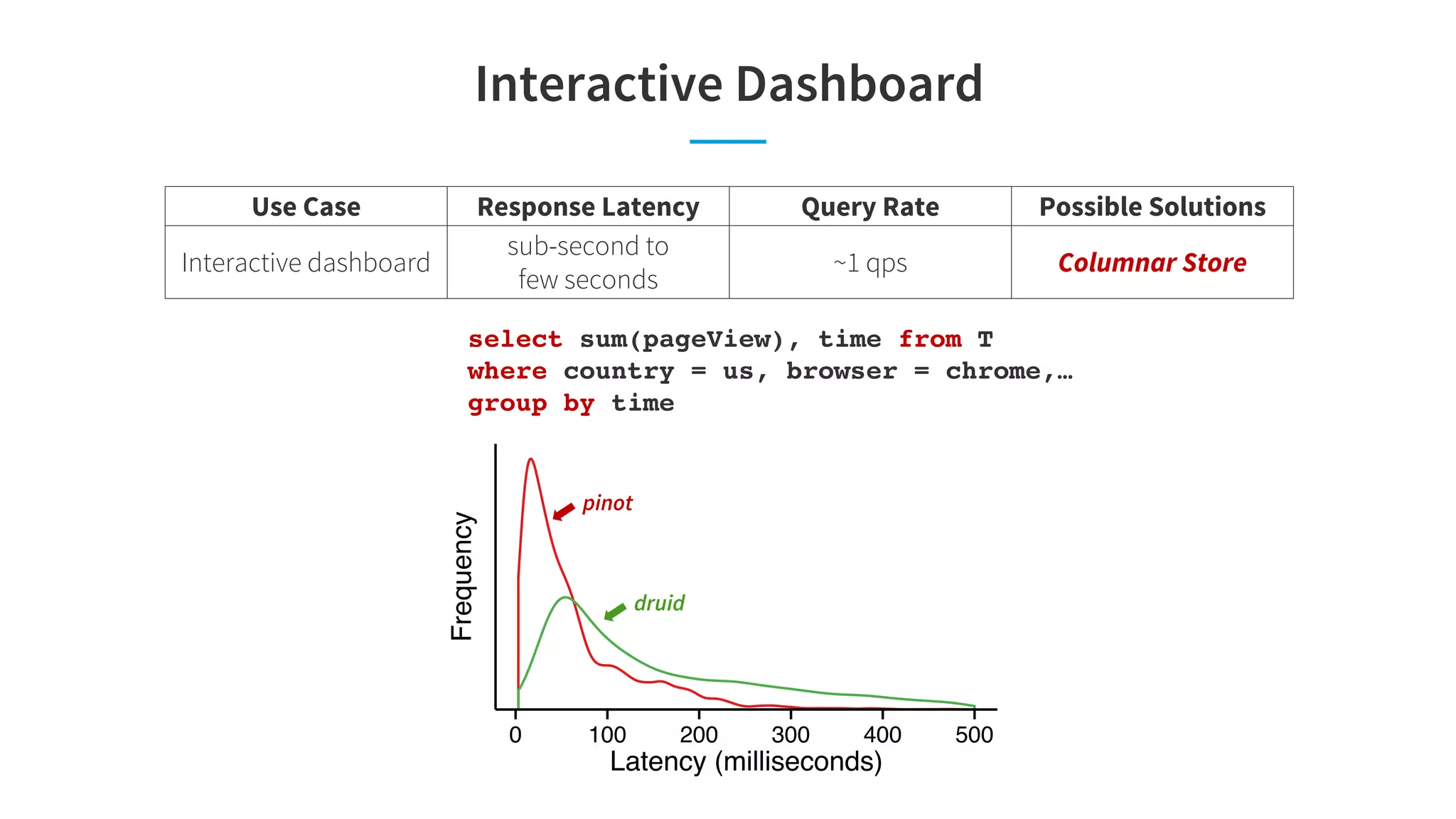

Pinot is a real-time OLAP data store that supports multiple analytics use cases, such as interactive dashboards, site-facing queries, and anomaly detection, in a single system. It does this through configurable indexes, dynamic query planning and execution, smart data partitioning and routing, and pre-materialized indexes such as star-trees, which optimize latency and throughput across different workloads. The document discusses the architecture and optimizations that let Pinot meet the performance requirements of each of these use cases.

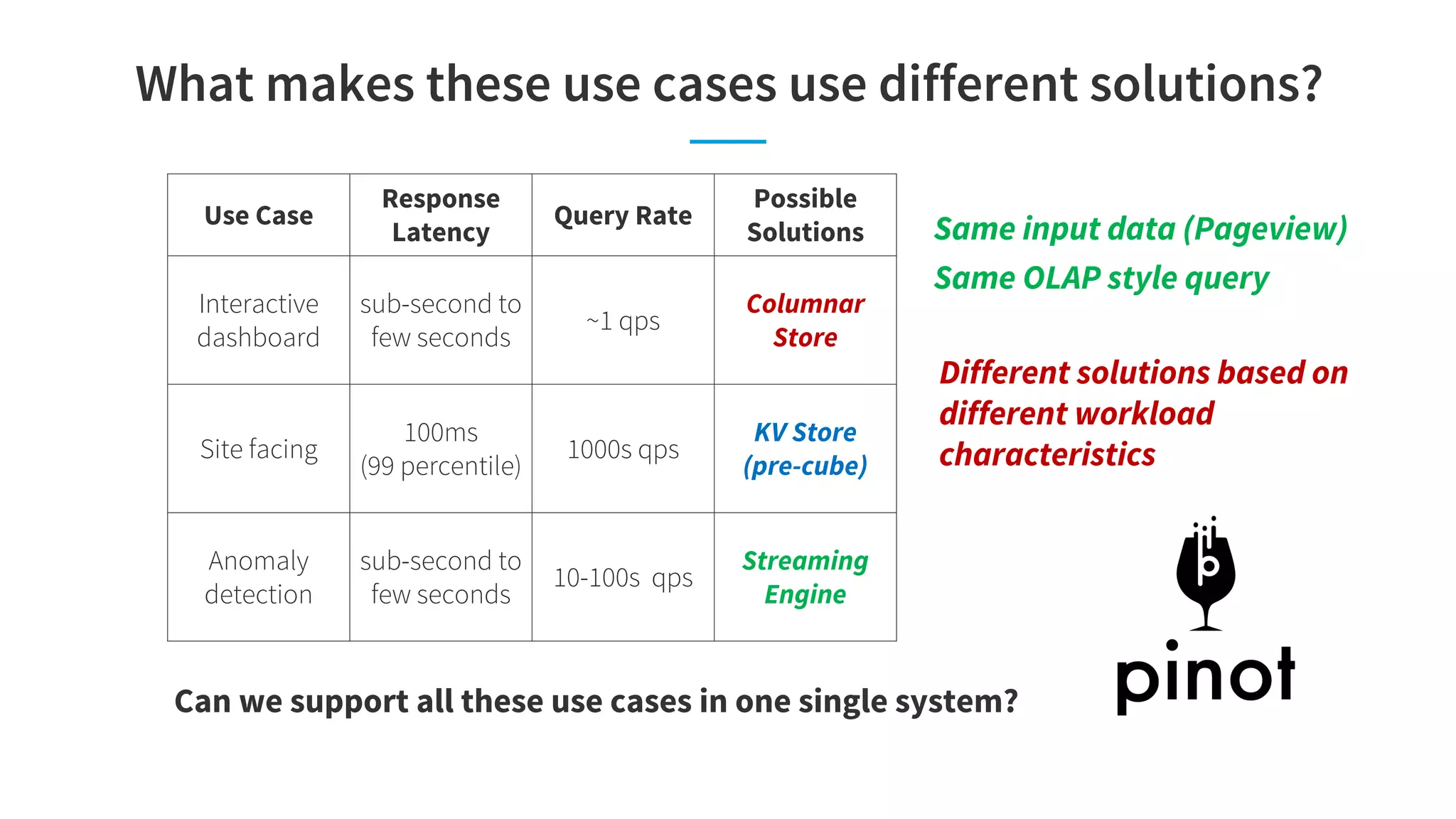

![Analytics Use Case: Site Facing](https://image.slidesharecdn.com/sigmod-final-180725033925/75/Pinot-Realtime-OLAP-for-530-Million-Users-Sigmod-2018-4-2048.jpg)

`select sum(pageView) from T where memberId = 456, pageKey = "profilePage", privacySettings in (…) group by time, [title|geo|industry]`

Pre-defined query format with different primary key values.

| Use Case | Response Latency | Query Rate | Possible Solutions |
| --- | --- | --- | --- |
| Site facing | 100ms (99th percentile) | 1000s qps | KV Store |

![Analytics Use Case: Anomaly Detection](https://image.slidesharecdn.com/sigmod-final-180725033925/75/Pinot-Realtime-OLAP-for-530-Million-Users-Sigmod-2018-5-2048.jpg)

Query pattern: `for d1 in [us, ca, …]`, `for d2 in [chrome, ie, …]`, …: `select sum(pageView), time from T where country = d1, browser = d2 group by time`

Identifying all issues requires us to monitor all possible combinations.

Periodic machine-generated queries (bursty).

| Use Case | Response Latency | Query Rate | Possible Solutions |
| --- | --- | --- | --- |
| Anomaly Detection | sub-second to few seconds | 10-100s qps | Streaming Engine |
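The nested loops on this slide amount to a Cartesian product over dimension values, with one query per combination. A minimal sketch, assuming the slide's comma-separated predicates mean `AND`; the function name `generate_monitoring_queries` is hypothetical, and only the dimension values visible on the slide are used (the elided ones stay elided).

```python
from itertools import product


def generate_monitoring_queries(dimensions):
    """Yield one query per combination of dimension values (hypothetical helper).

    `dimensions` maps a column name to the list of values to monitor.
    """
    names = list(dimensions)
    for combo in product(*(dimensions[name] for name in names)):
        predicate = " AND ".join(
            f"{name} = '{value}'" for name, value in zip(names, combo)
        )
        yield (
            "SELECT SUM(pageView), time FROM T "
            f"WHERE {predicate} GROUP BY time"
        )


# Only the values shown on the slide; the real lists are longer.
dims = {"country": ["us", "ca"], "browser": ["chrome", "ie"]}
queries = list(generate_monitoring_queries(dims))
```

The number of queries is the product of the per-dimension value counts (here 2 × 2 = 4), so each added dimension multiplies the load, and a scheduler that fires them all at once produces the bursty pattern the slide mentions.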

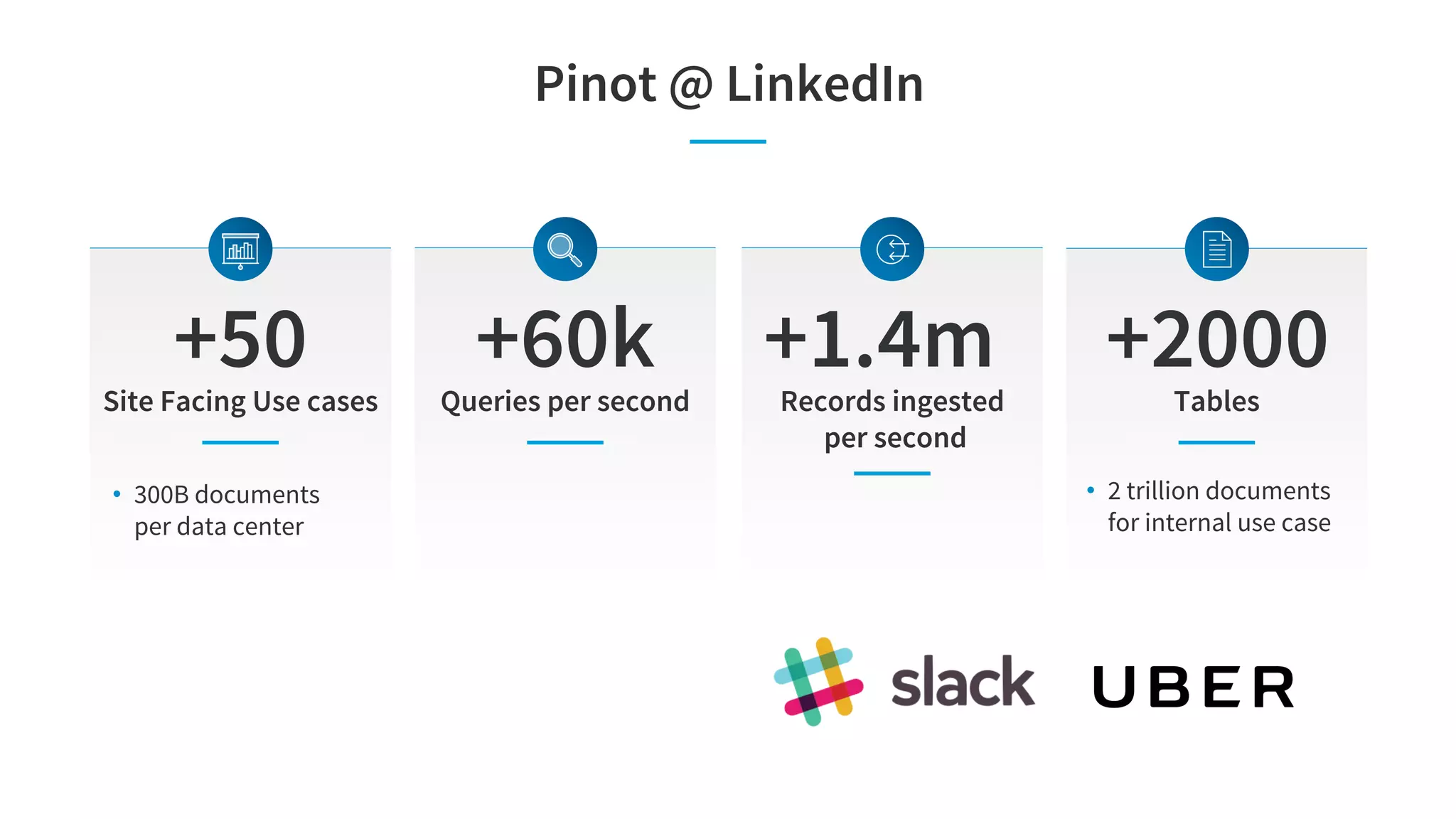

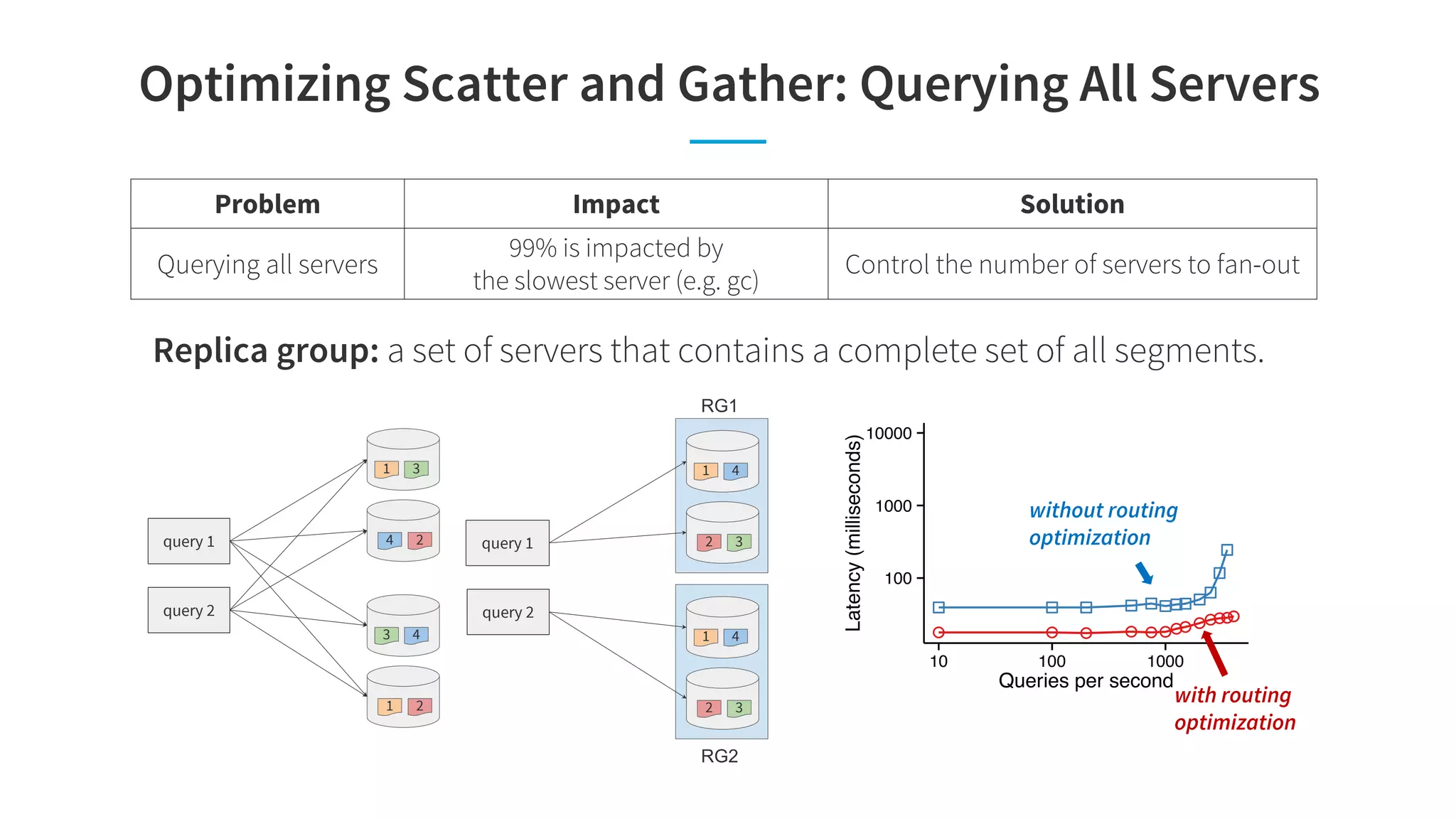

![Site Facing](https://image.slidesharecdn.com/sigmod-final-180725033925/75/Pinot-Realtime-OLAP-for-530-Million-Users-Sigmod-2018-23-2048.jpg)

| Use Case | Response Latency | Query Rate | Possible Solutions |
| --- | --- | --- | --- |
| Site facing | 100ms (99th percentile) | 1000s qps | KV Store |

`select sum(pageView) from T where memberId = xx, privacySettings in… group by time, [title|geo|industry]`

[Figure: two plots of latency (milliseconds) versus queries per second, comparing druid and pinot.]

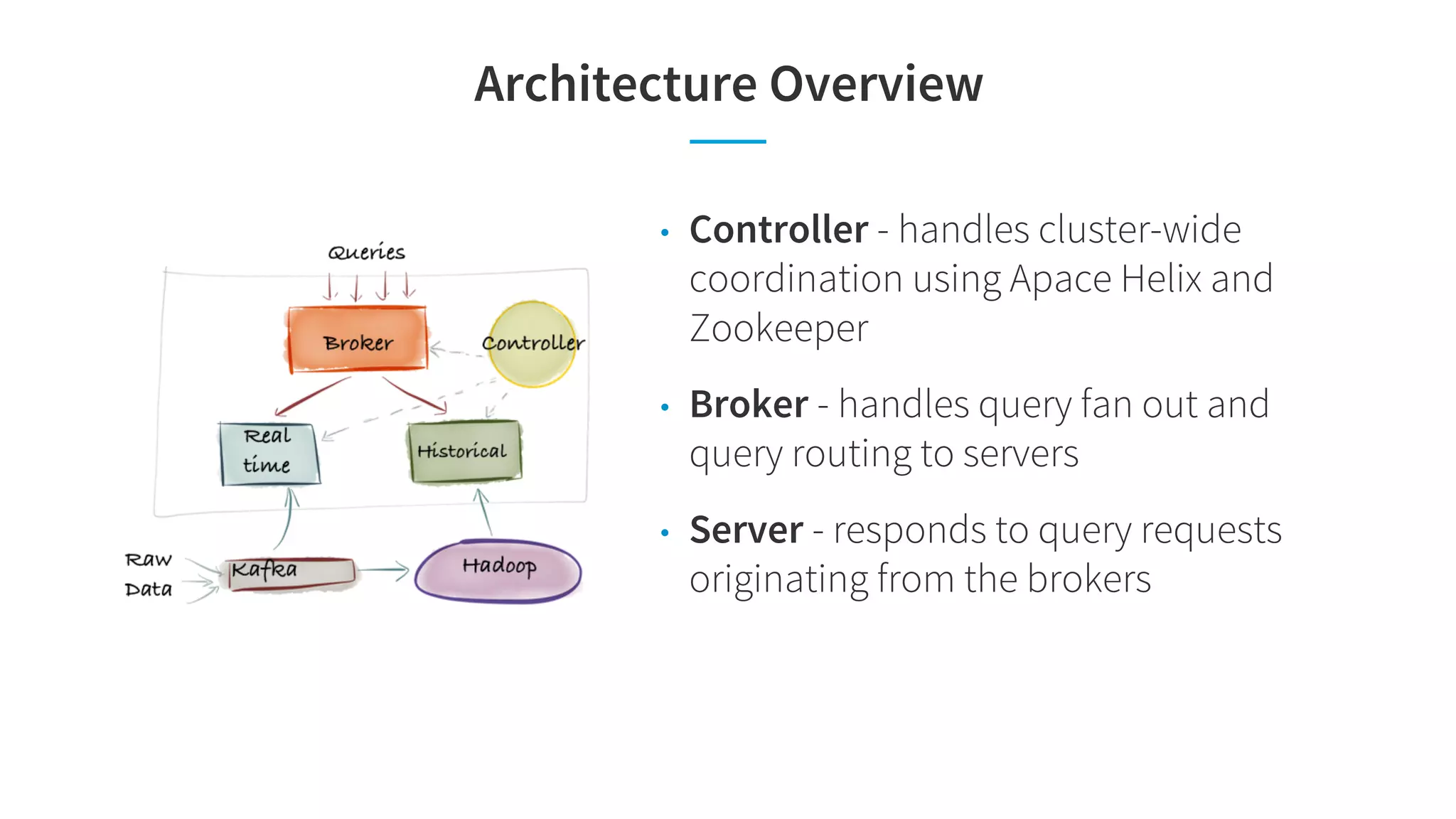

![Anomaly Detection: Challenge](https://image.slidesharecdn.com/sigmod-final-180725033925/75/Pinot-Realtime-OLAP-for-530-Million-Users-Sigmod-2018-28-2048.jpg)

Query pattern: `for d1 in [us, ca, …]`, `for d2 in [key1, key2, …]`, …: `select sum(pageViews) from T where country=d1, page_key=d2, source_app=d3, device_name=d4… group by country, time`

| Filter | Aggregation | Latency |
| --- | --- | --- |
| `select … where country = us,…` | Slow, scan 60-70% data | high |
| `select … where country = kenya,…` | Scan less than 1% | low |

• Latency is not predictable; it depends on the query predicate

• Monitoring all possible combinations makes the problem worse!
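The dependence of latency on the predicate can be illustrated with a toy calculation of how much data a filter has to scan. This is a simplified sketch, assuming aggregation cost grows with the number of matching rows; the helper `scanned_fraction` is hypothetical and the row counts are made up, chosen only to fall inside the ranges quoted on the slide.

```python
def scanned_fraction(rows, column, value):
    """Fraction of rows that the filter `column = value` selects (hypothetical helper)."""
    if not rows:
        return 0.0
    matching = sum(1 for row in rows if row[column] == value)
    return matching / len(rows)


# Toy table of 1000 rows with a skewed country distribution (made-up counts).
rows = (
    [{"country": "us"}] * 650
    + [{"country": "kenya"}] * 5
    + [{"country": "other"}] * 345
)

us_fraction = scanned_fraction(rows, "country", "us")        # 650 of 1000 rows
kenya_fraction = scanned_fraction(rows, "country", "kenya")  # 5 of 1000 rows
```

Two queries with the same shape end up doing very different amounts of work, so a monitoring loop that sweeps every dimension value mixes cheap and expensive queries with no fixed latency bound.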