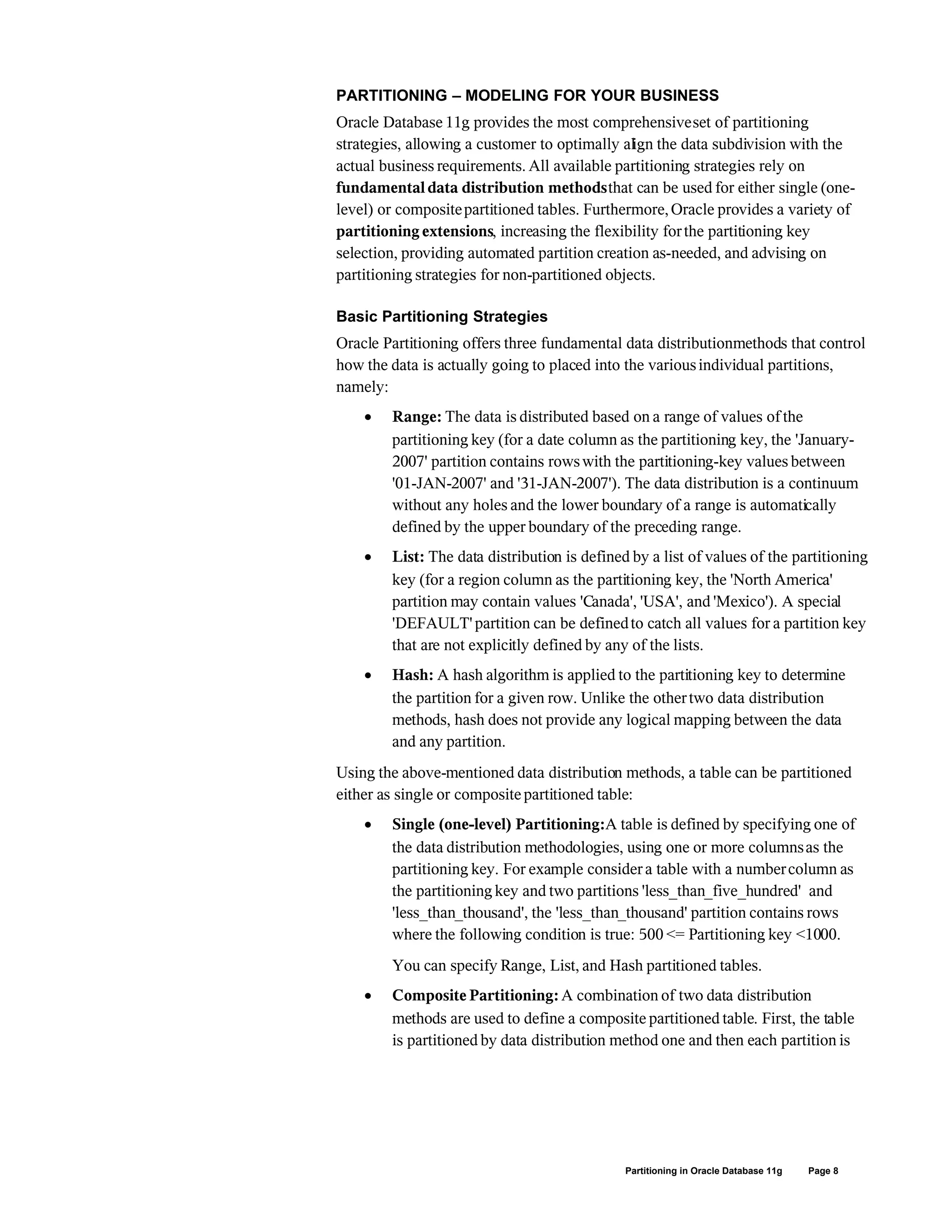

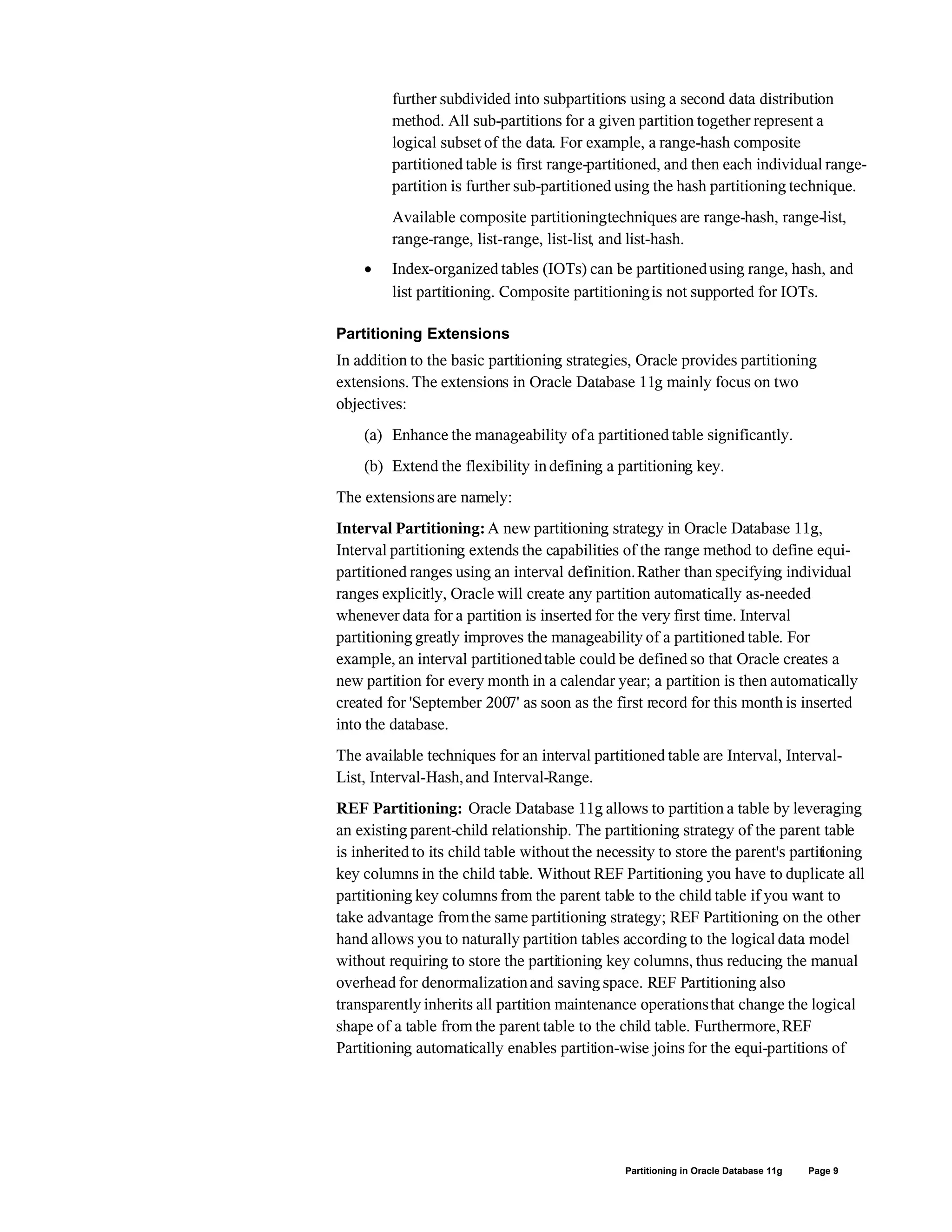

This document discusses partitioning in Oracle Database 11g. It introduces partitioning concepts and strategies, including range, list, hash, interval, and reference partitioning. It describes how partitioning can improve performance through partition pruning and partition-wise joins, enhance manageability through maintenance operations on individual partitions, and improve availability through partition independence. It also outlines the partitioning extensions in Oracle Database 11g, including interval partitioning, reference partitioning, and virtual column-based partitioning.
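To make the idea of pruning concrete, the following is a minimal conceptual sketch in Python of a range-partitioned table. It is not Oracle's implementation: the class `RangePartitionedTable`, its methods, and the quarterly boundaries in the usage note are hypothetical names and values chosen for illustration. Each partition has an exclusive upper bound, and a range query scans only the partitions whose bounds can contain matching keys.

```python
from bisect import bisect_right


class RangePartitionedTable:
    """Toy model of range partitioning (hypothetical, for illustration only)."""

    def __init__(self, upper_bounds):
        # Exclusive upper bound of each partition, kept in sorted order.
        self.upper_bounds = sorted(upper_bounds)
        self.partitions = [[] for _ in self.upper_bounds]

    def _partition_index(self, key):
        # Index of the first partition whose upper bound is greater than key.
        return bisect_right(self.upper_bounds, key)

    def insert(self, key, row):
        idx = self._partition_index(key)
        if idx == len(self.partitions):
            raise ValueError(f"key {key!r} is beyond the highest partition bound")
        self.partitions[idx].append((key, row))

    def query_range(self, low, high):
        """Return (rows, scanned) for keys in the inclusive range [low, high].

        'scanned' lists the partition indexes that were actually read;
        every other partition is pruned without being touched.
        """
        first = self._partition_index(low)
        last = min(self._partition_index(high), len(self.partitions) - 1)
        scanned = list(range(first, last + 1))
        rows = [
            row
            for idx in scanned
            for key, row in self.partitions[idx]
            if low <= key <= high
        ]
        return rows, scanned
```

With four quarterly partitions, for example, `RangePartitionedTable([date(2008, 4, 1), date(2008, 7, 1), date(2008, 10, 1), date(2009, 1, 1)])`, a query restricted to May 2008 would read only the second partition and skip the other three. The sketch assumes the keys are mutually comparable values such as `datetime.date` objects.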