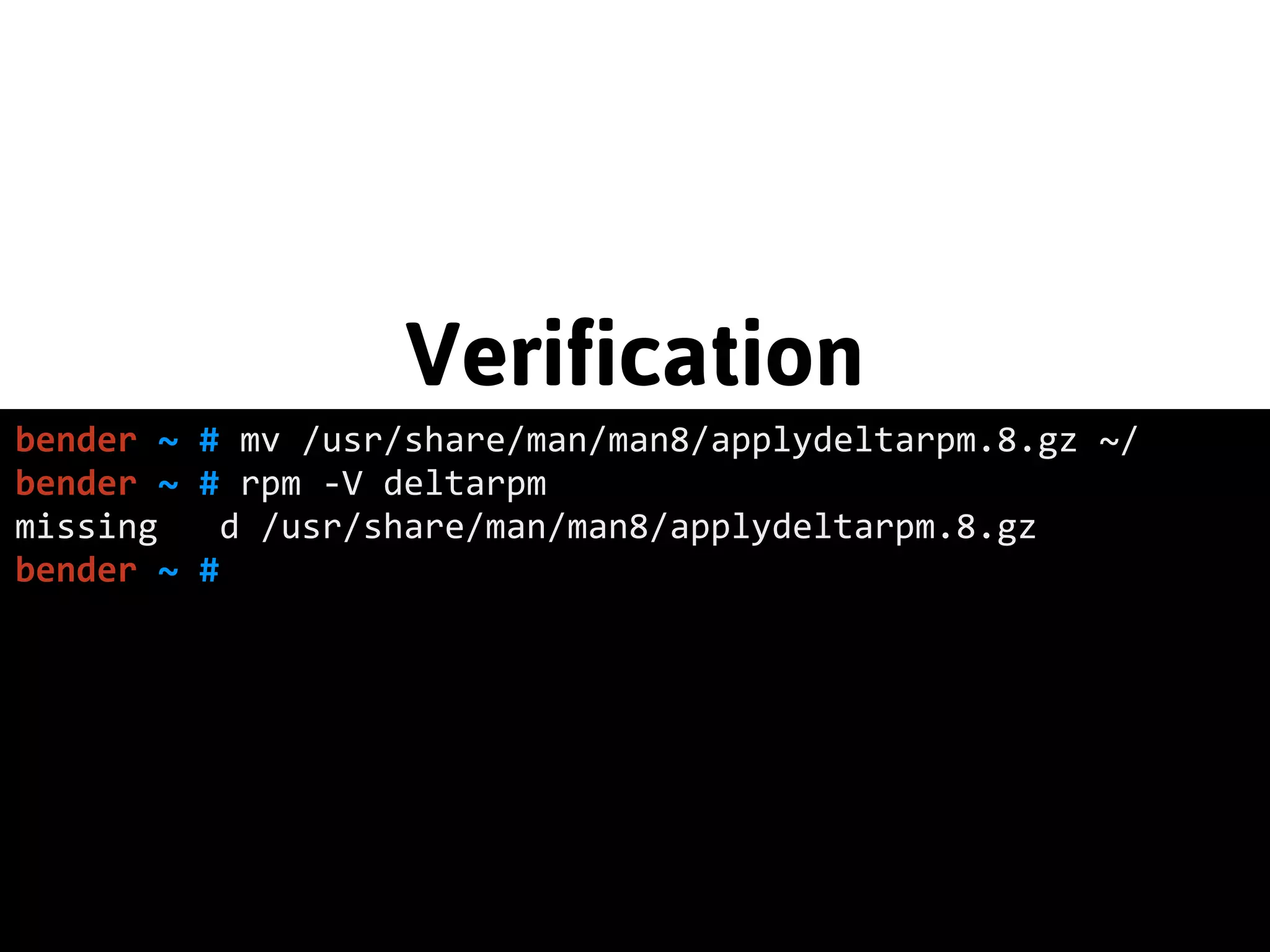

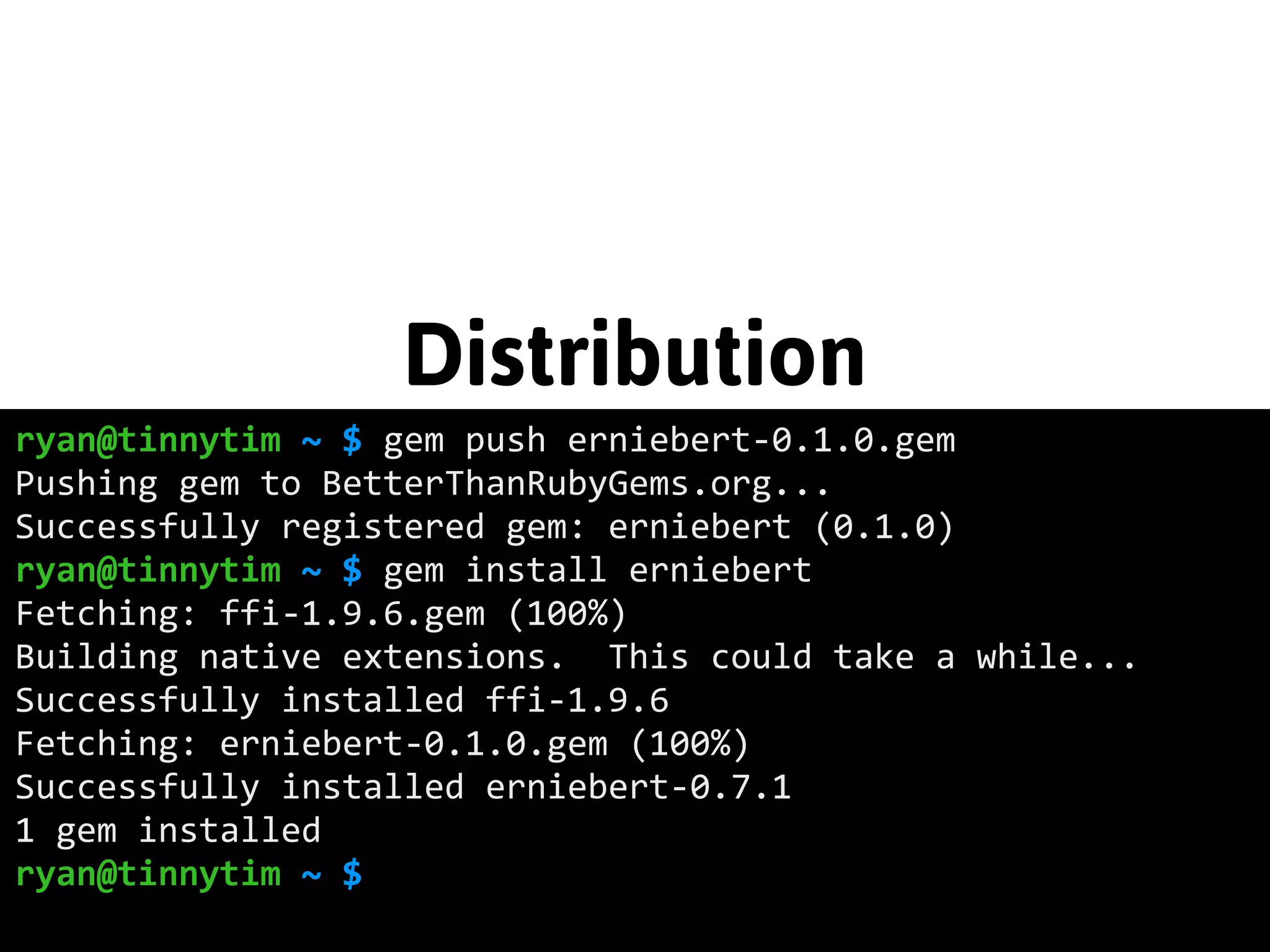

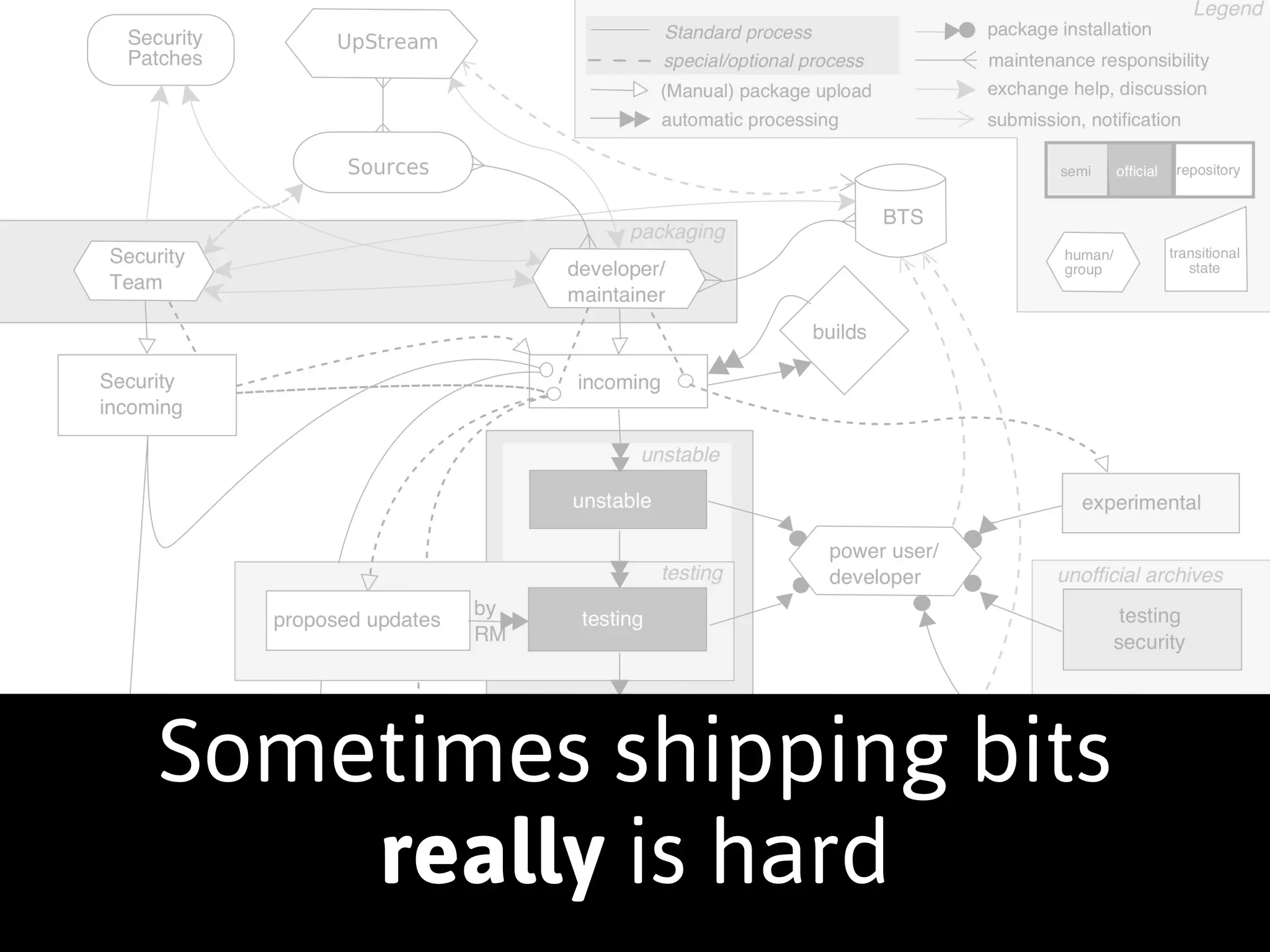

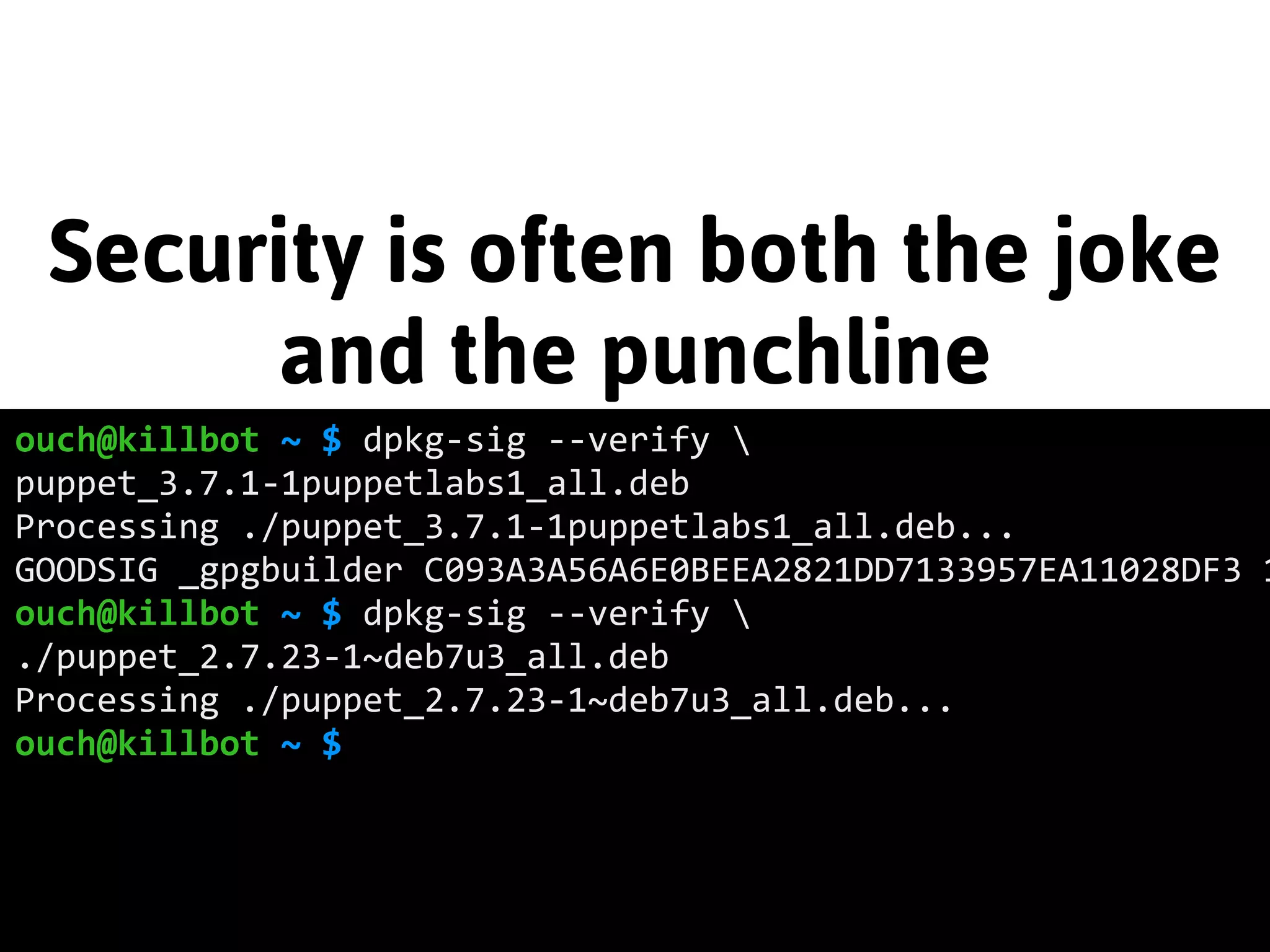

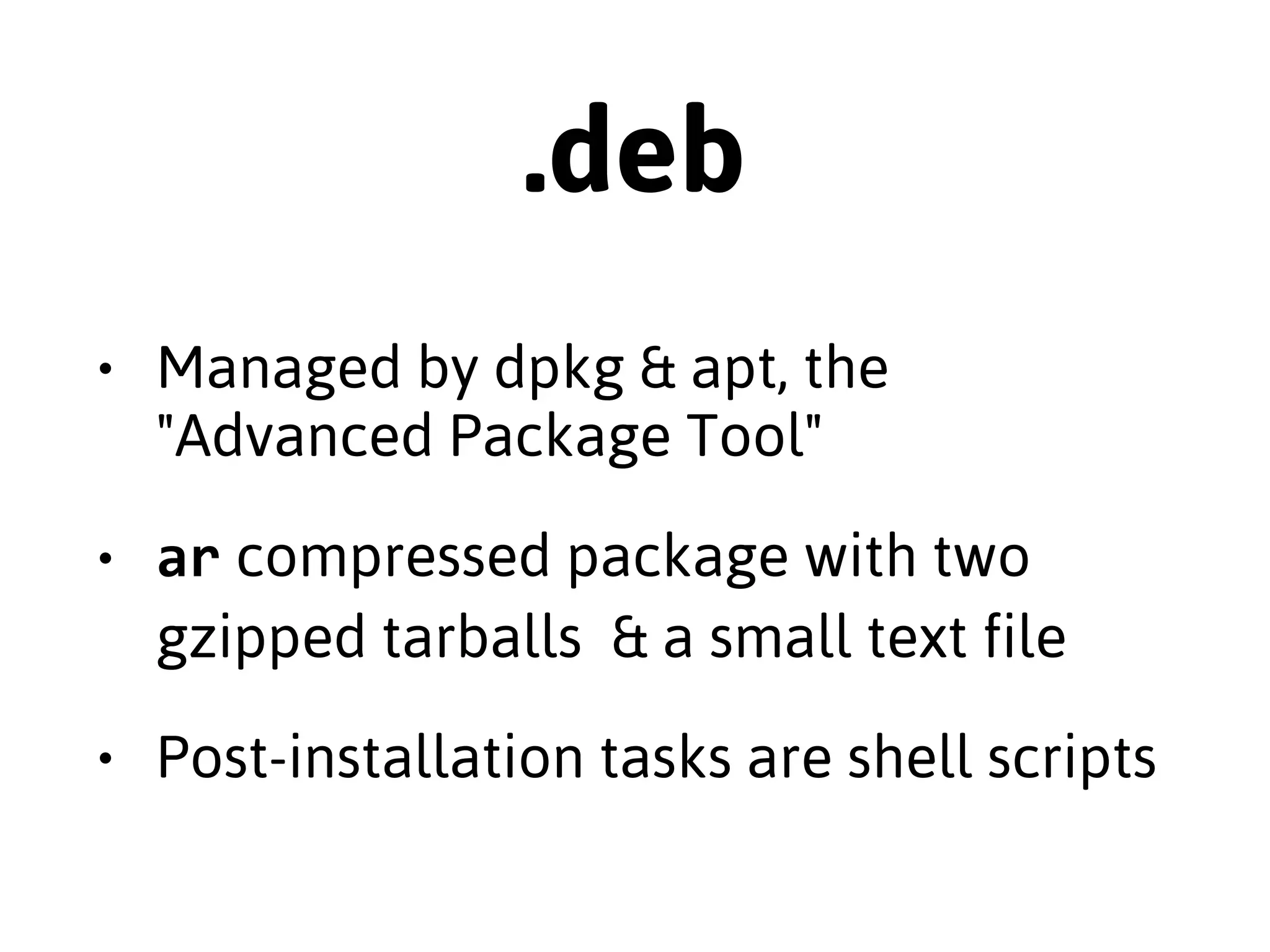

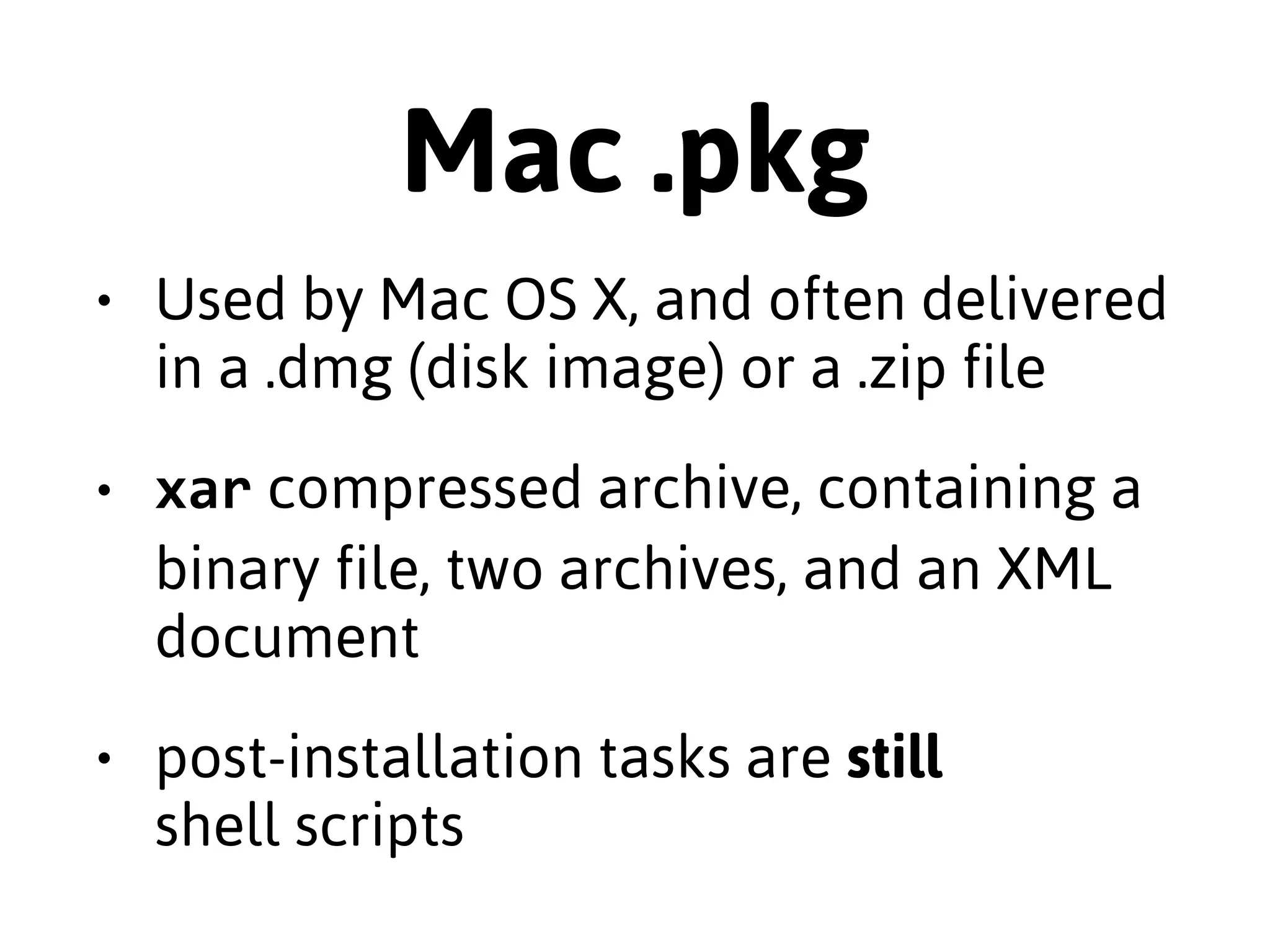

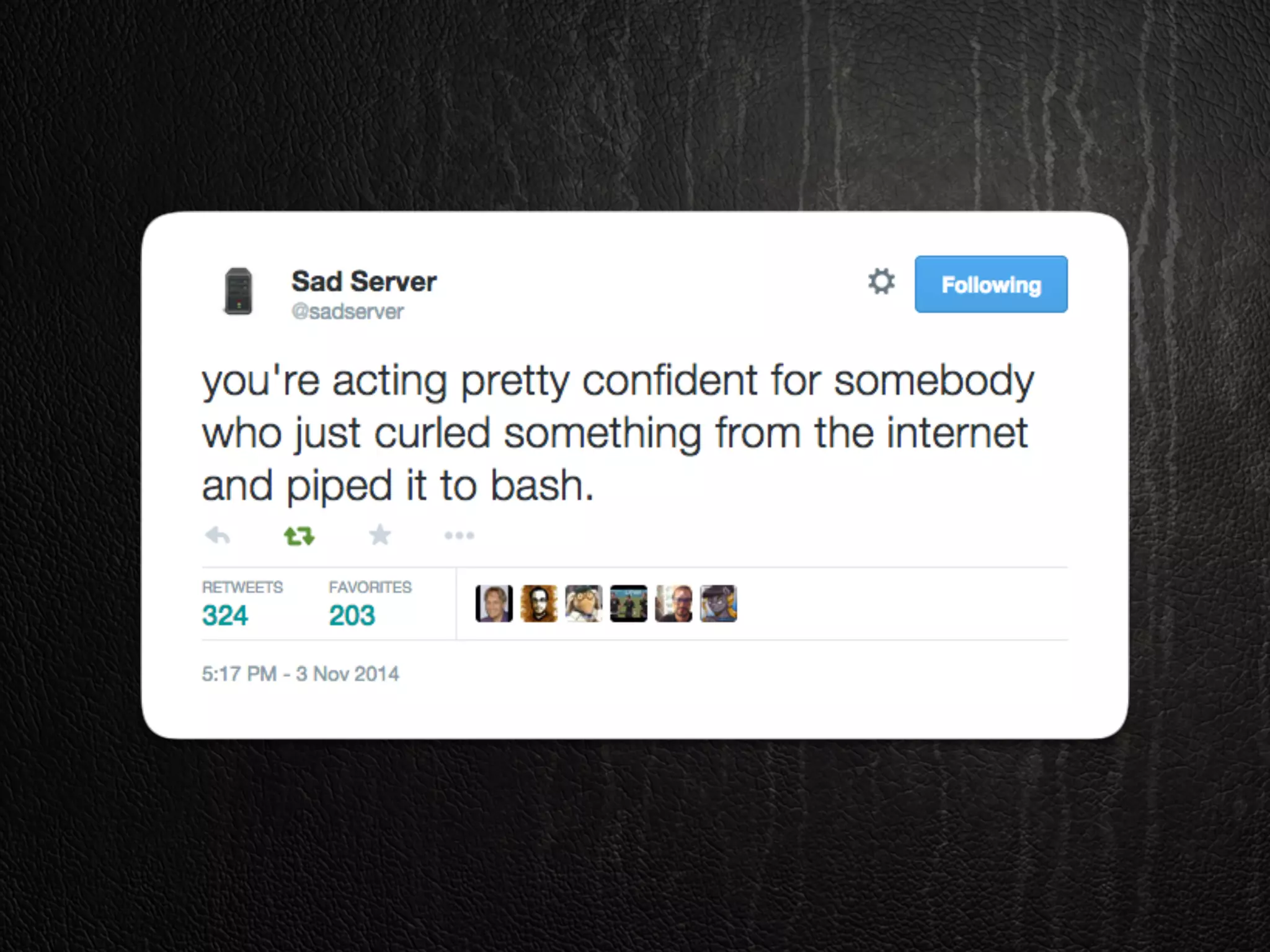

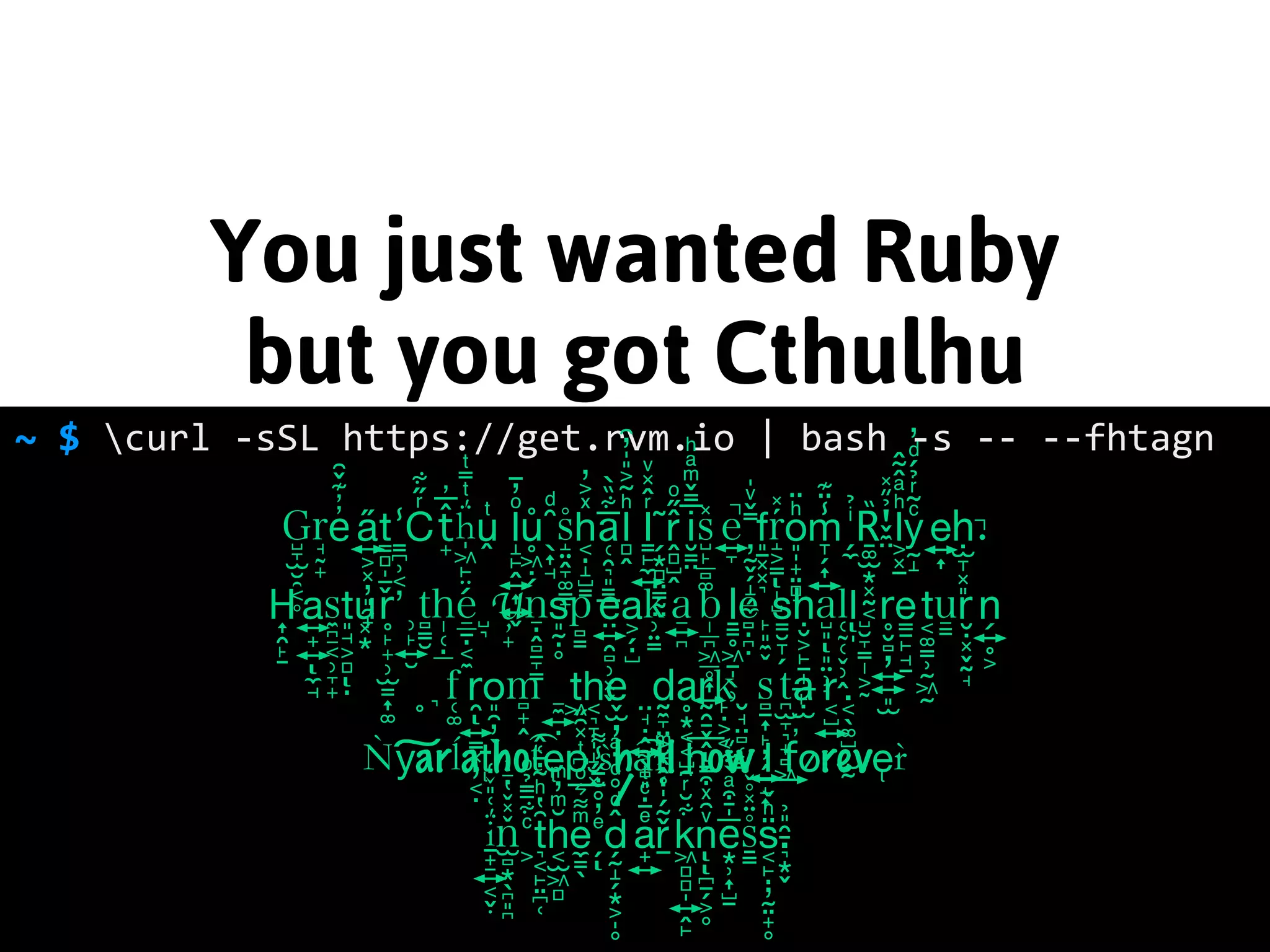

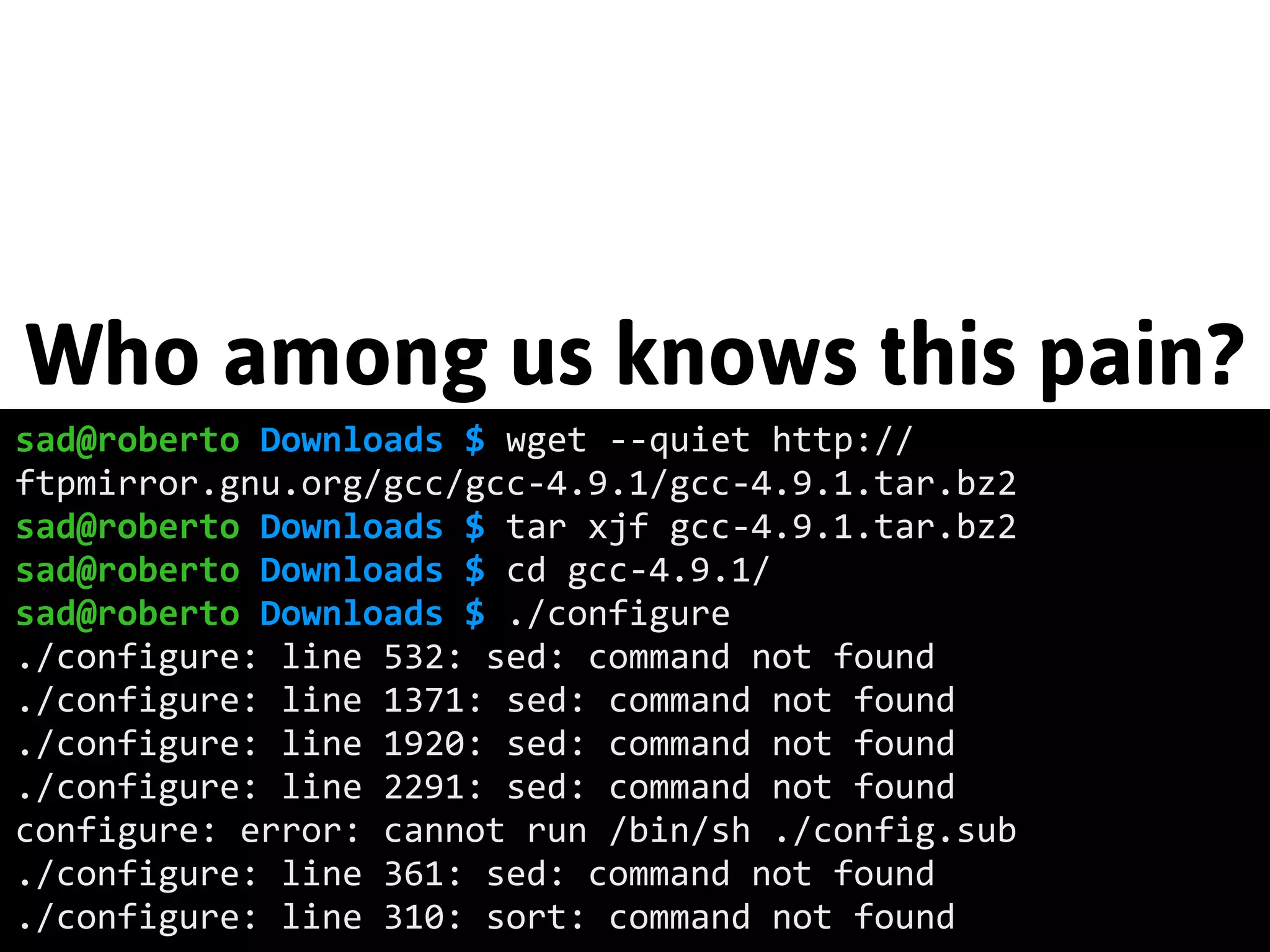

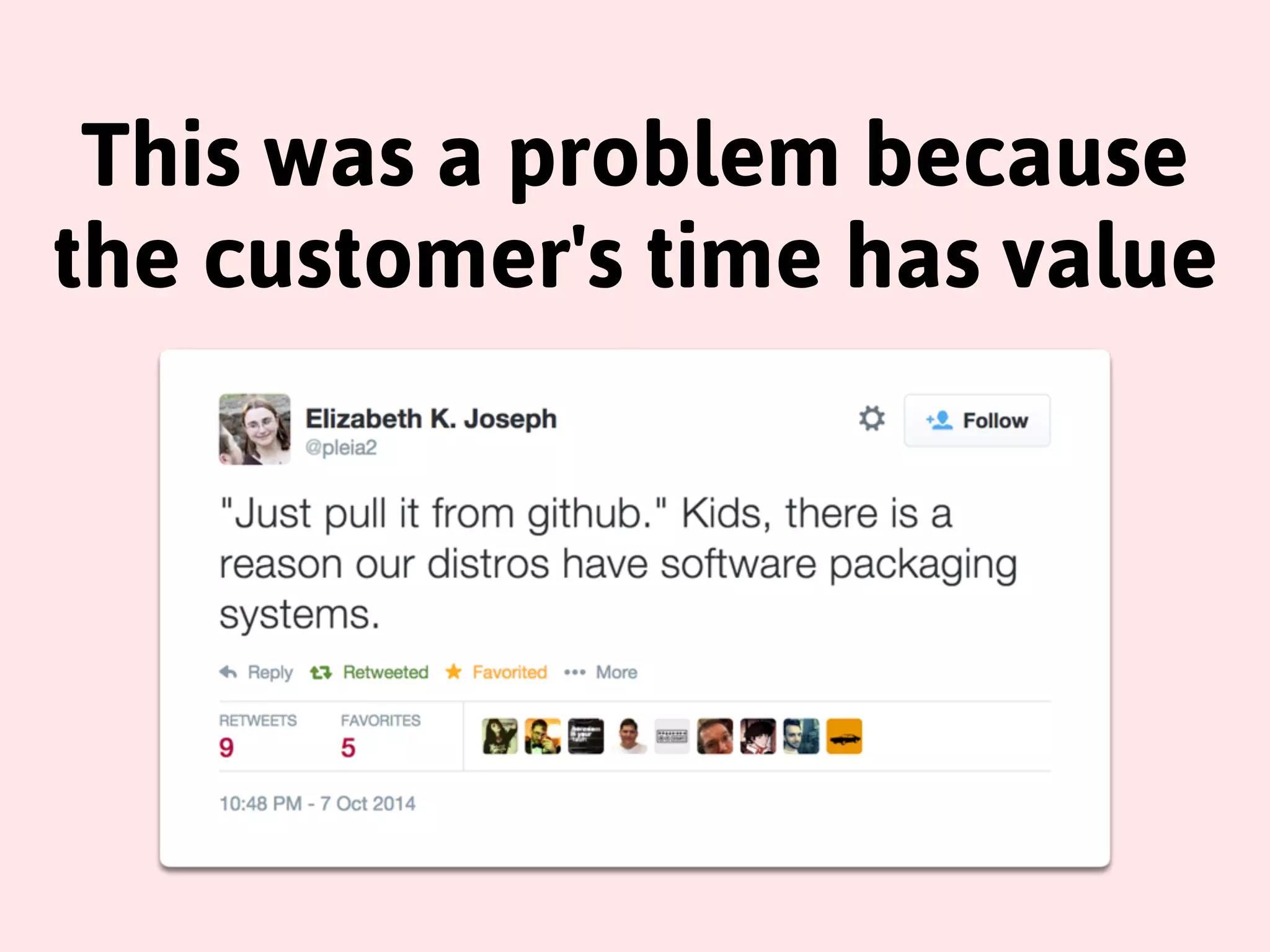

The document argues that software packaging, while essential for dependency management and easy installation, is a frequent source of frustration for developers and users alike. It covers packaging formats such as RPM and DEB, raises security concerns about how software gets installed, and critiques existing practices, pointing to tools such as omnibus and fpm as alternatives. The author concludes that the effort should go into improving existing packaging solutions rather than reinventing them, and advocates following best practices for the sake of customer satisfaction.

![Behold!
ryan@animatronio ~ $ sudo rpm -Uvh http://my.mirror.co/pub/el/7/x86_64/nano-2.3.1-10.el7.x86_64.rpm
Retrieving http://my.mirror.co/pub/el/7/x86_64/nano-2.3.1-10.el7.x86_64.rpm
Preparing... ################################# [100%]
Updating / installing...
1:nano-2.3.1-10.el7 ################################# [100%]
ryan@animatronio ~ $](https://image.slidesharecdn.com/ures14-141110133449-conversion-gate02/75/Packaging-is-the-Worst-Way-to-Distribute-Software-Except-for-Everything-Else-14-2048.jpg)
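
The package filename in the transcript above follows the usual RPM naming convention, `name-version-release.arch.rpm`. As a minimal sketch of how those fields can be recovered, assuming the filename sticks to that convention (the helper `parse_rpm_filename` is hypothetical and is not part of rpm or of the slides):

```python
def parse_rpm_filename(filename: str) -> dict:
    """Split 'name-version-release.arch.rpm' into its parts.

    Hypothetical helper for illustration only; it assumes the
    conventional RPM filename layout and does no further validation.
    """
    if not filename.endswith(".rpm"):
        raise ValueError(f"not an RPM filename: {filename!r}")
    stem = filename[: -len(".rpm")]
    # The architecture is everything after the last dot.
    rest, arch = stem.rsplit(".", 1)
    # Version and release are the last two hyphen-separated fields;
    # the package name itself may contain hyphens.
    name, version, release = rest.rsplit("-", 2)
    return {"name": name, "version": version, "release": release, "arch": arch}


if __name__ == "__main__":
    print(parse_rpm_filename("nano-2.3.1-10.el7.x86_64.rpm"))
```

Splitting from the right is the design choice that matters here: package names may contain hyphens, so only the last two hyphen-separated fields can safely be read as version and release.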