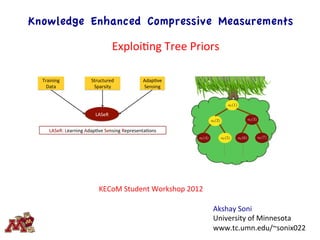

Exploiting Tree Sparse Priors

- 1. Knowledge Enhanced Compressive Measurements: Exploiting Tree Priors. Akshay Soni, University of Minnesota, www.tc.umn.edu/~sonix022. KECoM Student Workshop 2012. [Diagram labels: Training Data, Structured Sparsity, Adaptive Sensing, LASeR; LASeR: Learning Adaptive Sensing Representations; labels a_ℓ(1) through a_ℓ(7).]

- 2. A Sparse Signal Model

- 3. Can knowledge buy something? [Two plots: reconstruction SNR (dB) versus number of projections; legend: CS DCT Lasso, CS Random Lasso; annotation: 12 dB gain.]

- 4. Can knowledge buy something? [Two plots: reconstruction SNR (dB) versus number of projections; legend: CS DCT Lasso, CS Random Lasso; annotation: 15 dB gain.]

- 5. A Sparse Signal Model: $|x_i| \begin{cases} > \mu > 0, & i \in S, \\ = 0, & i \notin S. \end{cases}$

- 6. Exact Support Recovery (ESR). CS: non-adaptive and non-structured. Signal model: $|x_i| \begin{cases} > \mu > 0, & i \in S, \\ = 0, & i \notin S. \end{cases}$

- 7. The Big Picture: Minimum Signal Amplitudes for ESR. Can we exploit structure or adaptivity or both? Uncompressed / compressed [*] [*], minimum amplitude scale $\mu$: $\sqrt{\frac{n}{R}\log n}$. [*] D. Donoho and J. Jin, “Higher criticism for detecting sparse heterogeneous mixtures,” Ann. Statist., vol. 32, no. 3, pp. 962–994, 2004. [*] S. Aeron, V. Saligrama, and M. Zhao, “Information Theoretic Bounds for Compressed Sensing,” IEEE Transactions on Information Theory, vol. 56, no. 10, pp. 5111–5130, Oct. 2010.

- 8. The Big Picture: Minimum Signal Amplitudes for ESR. Sequential but non-structured / uncompressed [*] [*]. Minimum amplitude scales $\mu$: $\sqrt{\frac{n}{R}\log n}$, $\sqrt{\frac{n}{R}\log k}$. [*] M. Malloy and R. Nowak, “On the limits of sequential testing in high dimensions,” preprint, 2011. [*] J. Haupt, R. Baraniuk, R. Castro, and R. Nowak, “Sequentially Designed Compressed Sensing,” SSP, 2012.

- 9. Tree Sparse Signal Model. Can we exploit this tree structure for the ESR problem?
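
A minimal sketch of what a tree-sparse support can look like, under the usual assumption that the nonzero coefficients form a rooted, connected subtree. The complete binary tree in heap layout (children of node j are 2j and 2j+1) and the helper names `children`, `is_tree_sparse`, and `random_tree_support` are illustrative choices, not taken from the slides.

```python
import numpy as np

def children(j, n):
    """Children of node j (1-based) in a complete binary tree with n nodes."""
    return [c for c in (2 * j, 2 * j + 1) if c <= n]

def is_tree_sparse(support, n):
    """True if the support is empty or forms a rooted, connected subtree."""
    support = set(support)
    if not support:
        return True
    # The root must be present, and every other node needs its parent present.
    return 1 in support and all(j == 1 or j // 2 in support for j in support)

def random_tree_support(n, k, rng):
    """Grow a k-node rooted subtree by adding random children of the current support."""
    if not 1 <= k <= n:
        raise ValueError("k must satisfy 1 <= k <= n")
    support = {1}
    while len(support) < k:
        frontier = sorted(
            c for j in support for c in children(j, n) if c not in support
        )
        support.add(int(rng.choice(frontier)))
    return support

def tree_sparse_coefficients(n, k, mu, rng):
    """Coefficient vector whose nonzero magnitudes all exceed mu (assumed range)."""
    coef = np.zeros(n)
    for j in random_tree_support(n, k, rng):
        sign = rng.choice([-1.0, 1.0])
        coef[j - 1] = sign * mu * rng.uniform(1.1, 2.0)
    return coef
```

For example, `tree_sparse_coefficients(7, 4, 1.0, np.random.default_rng(0))` returns a length-7 vector with four nonzero entries located on a rooted subtree.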

- 10. Can structure buy something? [Two plots: reconstruction SNR (dB) versus number of projections; labels: Tree Structured, Random CS, DCT CS.]

- 11. Can structure buy something? [Two plots: reconstruction SNR (dB) versus number of projections; labels: Random CS, DCT CS.]

- 12. The Big Picture: Minimum Signal Amplitudes for ESR. Uncompressed search for simple trail [*]. Minimum amplitude scales $\mu$: $\sqrt{\frac{n}{R}\log k}$, $\sqrt{\frac{n}{R}\log n}$, $\sqrt{\frac{n}{R}}$. [*] E. Arias-Castro, E. J. Candès, H. Helgason, and O. Zeitouni, “Searching for a trail of evidence in a maze,” Ann. Statist., vol. 36, pp. 1726–1757, 2008.

- 13. The Big Picture: Minimum Signal Amplitudes for ESR. Minimum amplitude scales $\mu$: $\sqrt{\frac{n}{R}\log k}$, $\sqrt{\frac{n}{R}\log n}$, $\sqrt{\frac{n}{R}}$, $\sqrt{\frac{k}{R}\log k}$. [*] A. Soni and J. Haupt, “Efficient adaptive compressive sensing using sparse hierarchical learned dictionaries,” in Proc. Asilomar Conf. on Signals, Systems, and Computers, 2011, pp. 1250–1254.
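
To get a feel for how the four amplitude scales on these slides compare, the sketch below evaluates them for given n, k, and R. Constants are omitted, the natural logarithm is assumed, and the dictionary keys reflect one reading of which slide introduced each scale; none of these choices are stated on the slides.

```python
import math

def amplitude_scales(n, k, R):
    """Evaluate the four amplitude scales (constants omitted, natural log assumed)."""
    return {
        "sqrt(n/R log n)": math.sqrt(n / R * math.log(n)),  # slide 7
        "sqrt(n/R log k)": math.sqrt(n / R * math.log(k)),  # slide 8
        "sqrt(n/R)": math.sqrt(n / R),                      # slide 12
        "sqrt(k/R log k)": math.sqrt(k / R * math.log(k)),  # slide 13
    }

if __name__ == "__main__":
    for name, value in amplitude_scales(n=1024, k=16, R=1024).items():
        print(f"{name:>16}: {value:.3f}")
```

When k is much smaller than n, the tree-structured adaptive scale is the smallest of the four, which is the gap the talk is highlighting.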

- 14. Structure Dependent Adaptive Support Recovery: An Example. [Diagram: unknown signal on a tree with nodes 1 to 7, drawn in the order 1 2 5 3 4 6 7.] Stack / Queue (both initialized to index of root). Pop if Queue/Stack not empty. Measurement: $y(j) = (\,\cdot\; d_j)^T x + \mathcal{N}(0, 1)$. Decision on $|y(i, k)|$: if it passes, insert indices of children of node into the queue/stack; if not (No), repeat for next queue/stack element.
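
The flow on this slide can be sketched as a queue-driven tree traversal. This is a minimal illustration under stated assumptions, not the authors' implementation: the dictionary `D` is taken to have orthonormal columns, and the scaling `beta`, the threshold `tau`, the `children` map, and the function name are all hypothetical.

```python
from collections import deque
import numpy as np

def tree_adaptive_support_recovery(x, D, children, root, beta, tau, rng, noise_std=1.0):
    """Estimate which dictionary atoms are active in x by traversing the tree.

    x         : signal, assumed to equal D @ coefficients
    D         : dictionary with orthonormal columns (assumption)
    children  : dict mapping a node index to a list of its child indices
    beta, tau : hypothetical atom scaling and detection threshold
    """
    queue = deque([root])            # initialized to the index of the root
    support = []
    while queue:                     # pop while the queue is not empty
        j = queue.popleft()          # queue.pop() would give stack (depth-first) order
        # One noisy projection onto the scaled atom d_j.
        y = (beta * D[:, j]) @ x + noise_std * rng.standard_normal()
        if abs(y) >= tau:            # threshold test on the measurement magnitude
            support.append(j)
            queue.extend(children.get(j, []))
        # Otherwise the descendants of node j are never measured.
    return sorted(support)
```

Because a rejected node's subtree is skipped, the number of measurements depends on the size of the recovered support rather than on the full dimension, which is where the structure helps.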

- 15. Tree Structured Adaptive Support Recovery. Theorem (2011): A. Soni & J. Haupt.

- 16. The Big Picture: Minimum Signal Amplitudes for ESR. [Plot: $\Pr(S = \hat{S})$ versus $\alpha_{\min}$.] Minimum amplitude scales $\mu$: $\sqrt{\frac{n}{R}\log k}$, $\sqrt{\frac{n}{R}\log n}$, $\sqrt{\frac{n}{R}}$, $\sqrt{\frac{k}{R}\log k}$.

- 17. The Big Picture: Minimum Signal Amplitudes for ESR. [Plot: $\Pr(S = \hat{S})$ versus $\alpha_{\min}$; annotations: sufficient condition, necessary conditions.] May we improve?

- 18. Tree Structured Signal Reconstruction. Two-step reconstruction: (1) adaptive support recovery, (2) measure support locations. Corollary (2011): A. Soni & J. Haupt.
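
The second step of the two-step scheme can be sketched as follows, assuming a support estimate is already available from the first step and that the dictionary `D` has orthonormal columns. The scaling `beta` and the function name are hypothetical, introduced only for illustration.

```python
import numpy as np

def reconstruct_on_support(x, D, support, beta, rng, noise_std=1.0):
    """Measure only the estimated support locations, then synthesize the signal.

    With orthonormal columns, d_j^T x equals the j-th coefficient, so
    y / beta is an unbiased estimate of that coefficient.
    """
    coef_hat = np.zeros(D.shape[1])
    for j in support:
        y = (beta * D[:, j]) @ x + noise_std * rng.standard_normal()
        coef_hat[j] = y / beta
    return D @ coef_hat
```

Only `len(support)` measurements are spent in this step; atoms outside the estimated support are set to zero.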

- 19. Learning Adaptive Sensing Representations (LASeR). Learning Tree Sparsifying Dictionary [http://spams-devel.gforge.inria.fr/]

- 20. Qualitative Results - I. R = (128 x 128). [Image grid; methods: Direct Wavelet Sensing, PCA, CS LASSO, CS Tree LASSO, LASeR; m = 20, m = 50, m = 80.] Image from PICS database.

- 21. Qualitative Results - II. R = (128 x 128)/32. [Image grid; methods: Direct Wavelet Sensing, PCA, CS LASSO, CS Tree LASSO, LASeR; m = 20, m = 50, m = 80.] Image from PICS database.

- 22. Quantitative Results. [Three plots: reconstruction SNR (dB) versus number of projections.]
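
The plots report reconstruction SNR in dB. The slides do not give the formula, so the sketch below assumes the common definition, 10 log10 of signal energy over error energy; the function name is illustrative.

```python
import numpy as np

def reconstruction_snr_db(x, x_hat):
    """Reconstruction SNR in dB: 10 * log10(||x||^2 / ||x - x_hat||^2) (assumed definition)."""
    x = np.asarray(x, dtype=float)
    x_hat = np.asarray(x_hat, dtype=float)
    err = np.sum((x - x_hat) ** 2)
    if err == 0:
        return float("inf")          # perfect reconstruction
    return 10.0 * np.log10(np.sum(x ** 2) / err)
```

Under this definition, a 10 dB gain corresponds to a tenfold reduction in squared reconstruction error.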

- 23. Future Directions for Tree Sensing. Thank You. Contact: Akshay Soni, sonix022@umn.edu
  1. LASeR with clutter signal model: y = (x + c) + w (clever regularization for different signal classes, e.g., diffusion of clutter over the whole signal space using an $\ell_2$ rather than an $\ell_1$ penalty).
  2. LASeR with non-orthonormal learned dictionaries.
  3. Exploiting signal amplitude correlation in LASeR.
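
One possible reading of the first item, offered only as an assumption: penalize the signal with an $\ell_1$ norm to promote sparsity, and penalize the clutter with a squared $\ell_2$ norm so that its energy spreads over the whole signal space. The weights $\lambda_1$ and $\lambda_2$ are hypothetical and do not appear on the slide.

```latex
(\hat{x}, \hat{c}) \;=\; \arg\min_{x,\,c}\;
  \tfrac{1}{2}\,\bigl\| y - (x + c) \bigr\|_2^2
  \;+\; \lambda_1 \| x \|_1
  \;+\; \lambda_2 \| c \|_2^2
```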