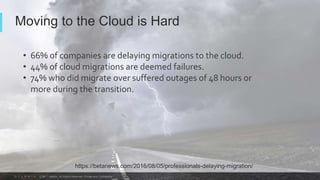

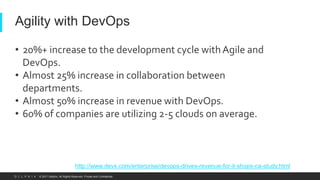

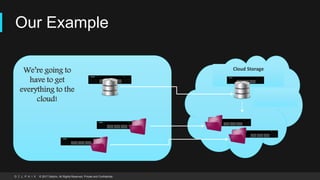

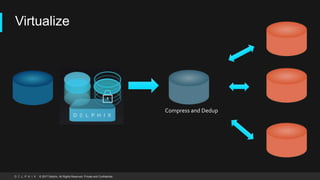

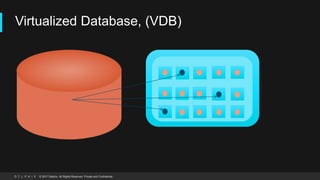

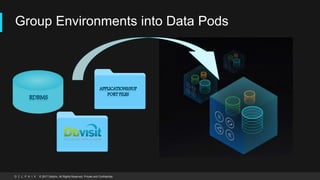

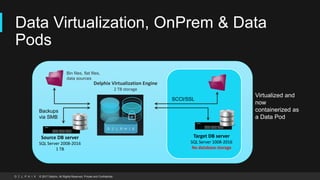

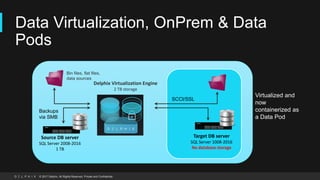

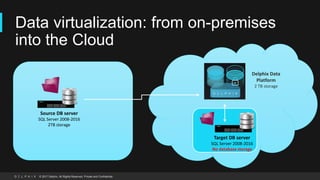

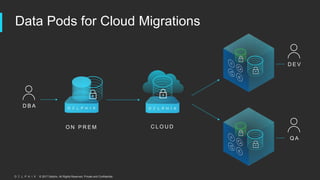

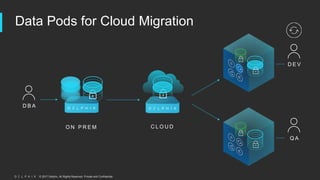

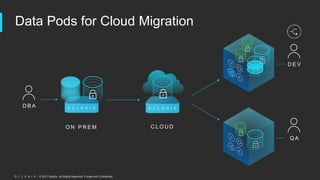

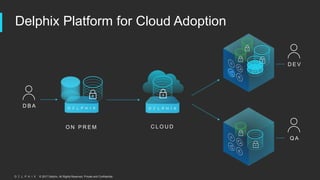

The document discusses the challenges of moving databases to the cloud and proposes data virtualization as a solution. Virtualizing databases with tools like Delphix and DBVisit allows development environments to be provisioned instantly without making physical copies. Databases are packaged into "data pods" that can be easily replicated and kept in sync. This streamlines cloud migrations by removing the bottlenecks of copying and moving large volumes of database data.