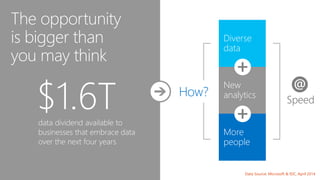

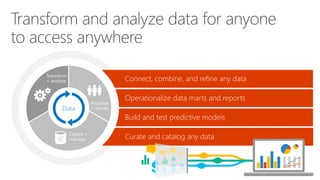

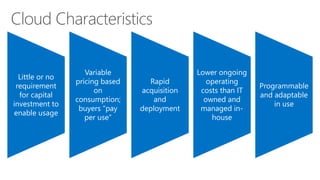

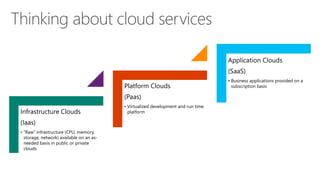

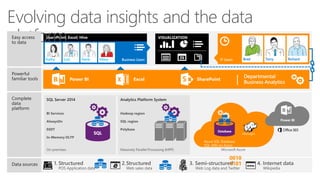

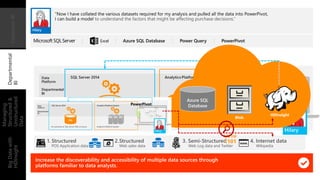

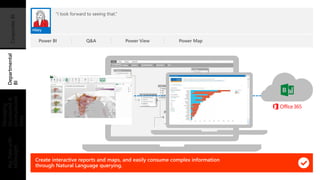

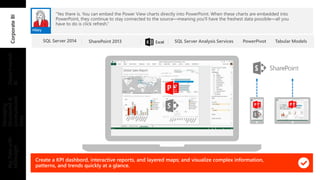

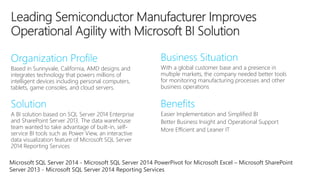

The document discusses how businesses can leverage data and analytics, highlighting a projected $1.6 trillion 'data dividend' available to companies that embrace innovative data practices. It outlines Microsoft's end-to-end data platform and cloud services, which are designed to facilitate real-time data analysis, empower employees, and enhance decision-making. It also emphasizes key trends such as the rise of IoT, diverse data types, and cloud computing, and presents real-world examples of organizations benefiting from these technologies.