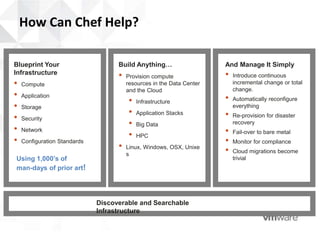

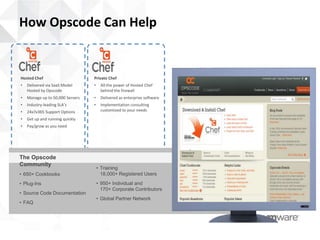

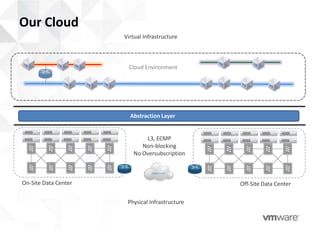

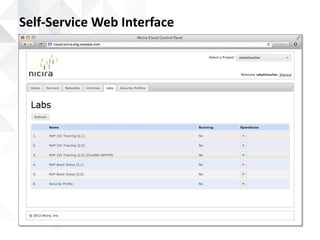

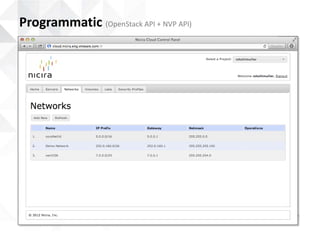

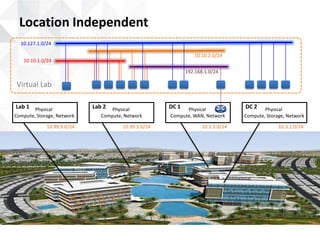

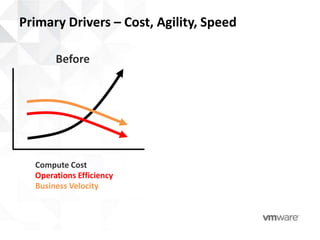

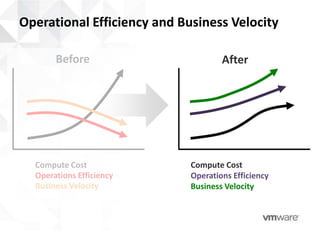

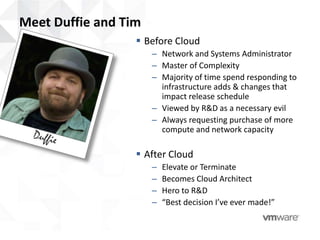

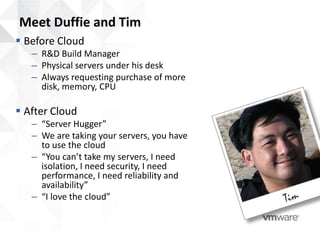

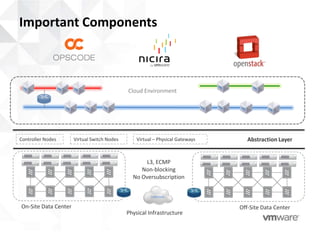

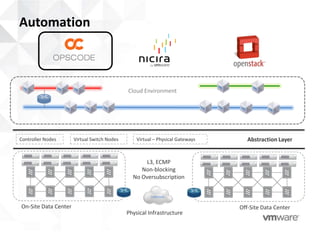

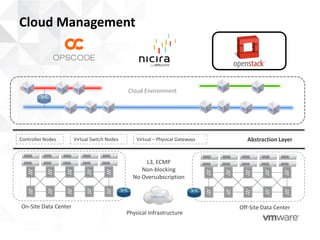

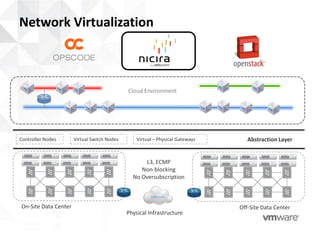

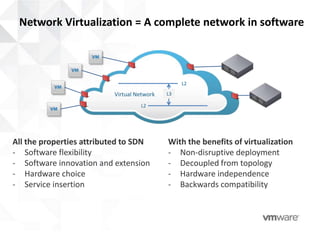

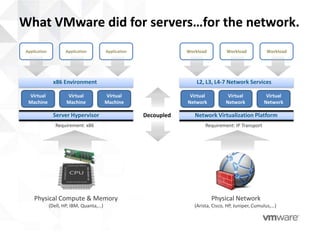

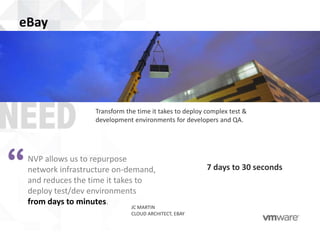

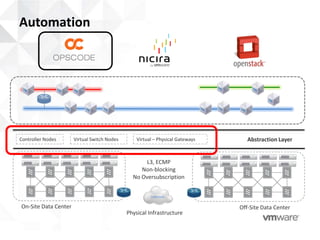

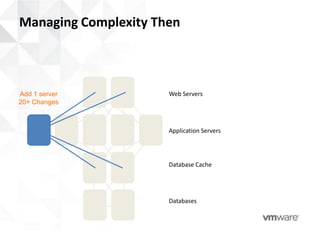

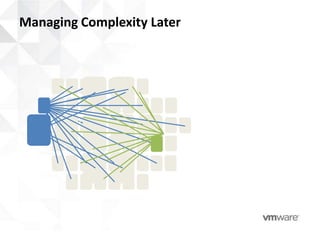

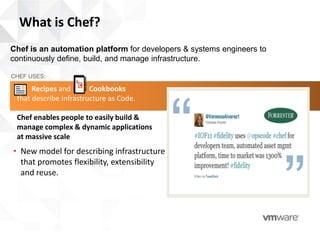

This document provides an agenda and overview for a presentation on network virtualization and IT infrastructure automation. The agenda includes talks by Rod Stuhlmuller of Nicira/VMware on network virtualization and Stathy Toulomis of Opscode on using Chef for infrastructure automation, followed by a demo of Nicira's private cloud platform by Jacob Cherkas. The document also describes how Nicira/VMware built its own private cloud with OpenStack, Chef, and Nicira Network Virtualization to increase efficiency, speed, and agility while reducing costs and roadblocks. It highlights the importance of automation and network virtualization, and covers components such as virtual switches and controllers.

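![Dynamic configuration management](https://image.slidesharecdn.com/nicirachefwebinar-merged-130302175341-phpapp02/85/Nicira-chef-webinar-merged-36-320.jpg)

```ruby
# Dynamic configuration management: find every node with the webserver role
# through the Chef server's search index.
pool_members = search('node', 'role:webserver')

# Assumed declaration so the notification below has a target; the slide shows
# only the search and the template resources.
service 'haproxy' do
  action [:enable, :start]
end

# Render the HAProxy config, passing the (deduplicated) web nodes to the template.
template '/etc/haproxy/haproxy.cfg' do
  source 'haproxy-app_lb.cfg.erb'
  owner 'root'
  group 'root'
  mode '0644'
  variables :pool_members => pool_members.uniq
  # Restart HAProxy whenever the rendered file changes.
  notifies :restart, 'service[haproxy]'
end
```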
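The recipe hands the search results to `haproxy-app_lb.cfg.erb`, whose contents the slides do not show. As a hedged sketch of what such a template might do, the Ruby below renders a hypothetical HAProxy `backend` section with the standard-library `ERB` class. The template text, the backend name `app`, port 80, and the plain hashes standing in for Chef node objects are all assumptions for illustration. Chef's `template` resource exposes each `variables` key to the template as an instance variable, which is why the template reads `@pool_members`.

```ruby
require 'erb'

# Hypothetical stand-in for haproxy-app_lb.cfg.erb (the real template is not shown).
TEMPLATE = <<~'ERB'
  backend app
    balance roundrobin
  <% @pool_members.each do |member| -%>
    server <%= member['hostname'] %> <%= member['ipaddress'] %>:80 check
  <% end -%>
ERB

# Minimal context that exposes @pool_members to ERB, mimicking how a Chef
# template resource passes its `variables` hash.
class TemplateContext
  def initialize(pool_members)
    @pool_members = pool_members
  end

  def render(source)
    # trim_mode '-' lets `-%>` swallow the newline after control-flow tags.
    ERB.new(source, trim_mode: '-').result(binding)
  end
end

# Hypothetical search results: hashes with Ohai-style 'hostname' and
# 'ipaddress' keys stand in for the node objects Chef's search returns.
pool_members = [
  { 'hostname' => 'web1', 'ipaddress' => '10.0.0.11' },
  { 'hostname' => 'web2', 'ipaddress' => '10.0.0.12' },
  { 'hostname' => 'web1', 'ipaddress' => '10.0.0.11' } # duplicate, dropped by uniq
]

config = TemplateContext.new(pool_members.uniq).render(TEMPLATE)
puts config
```

Because the member list is rebuilt from search on every Chef run, adding a node with the `webserver` role changes the rendered file, and the template's `notifies` line then restarts HAProxy.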