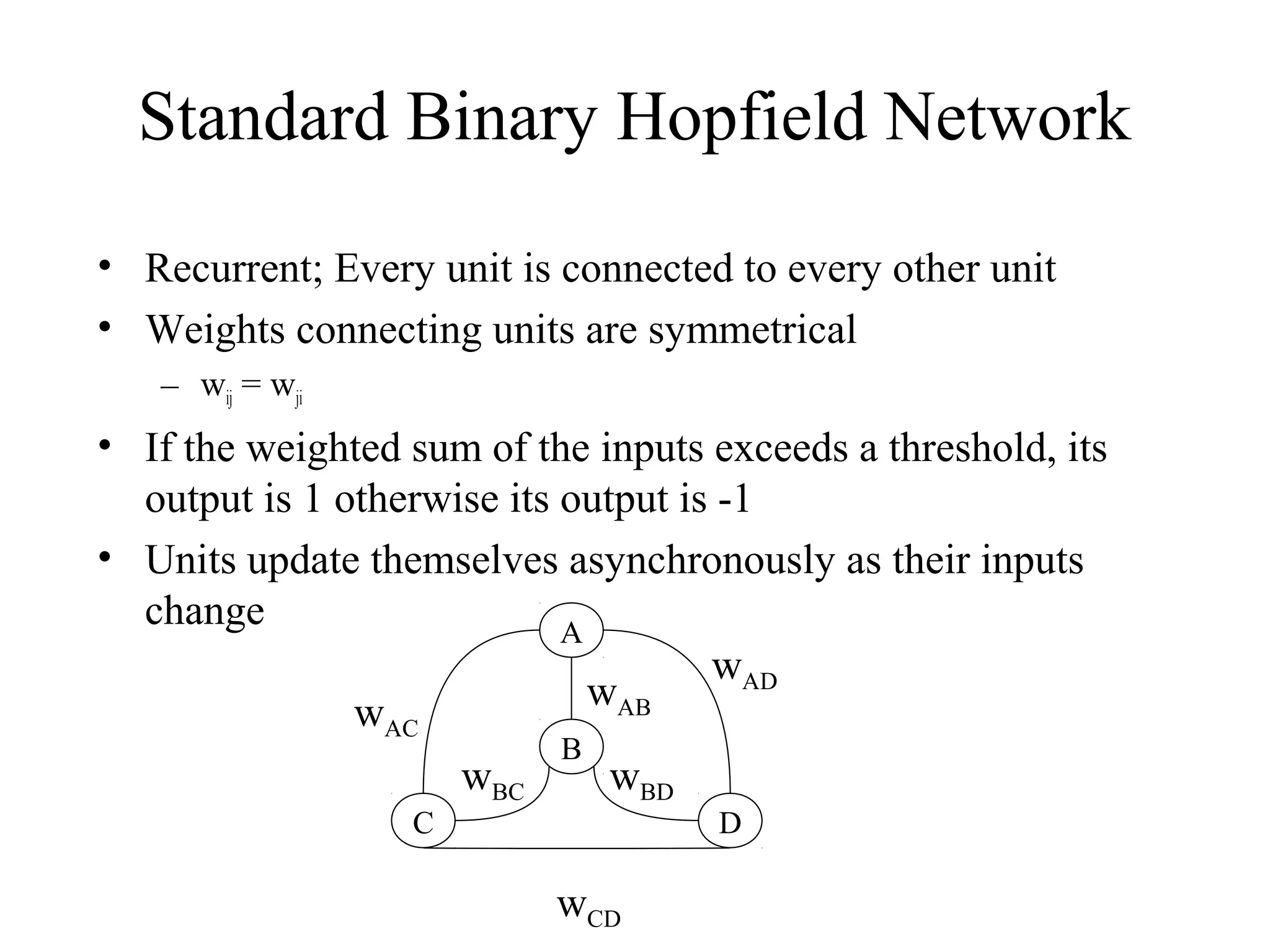

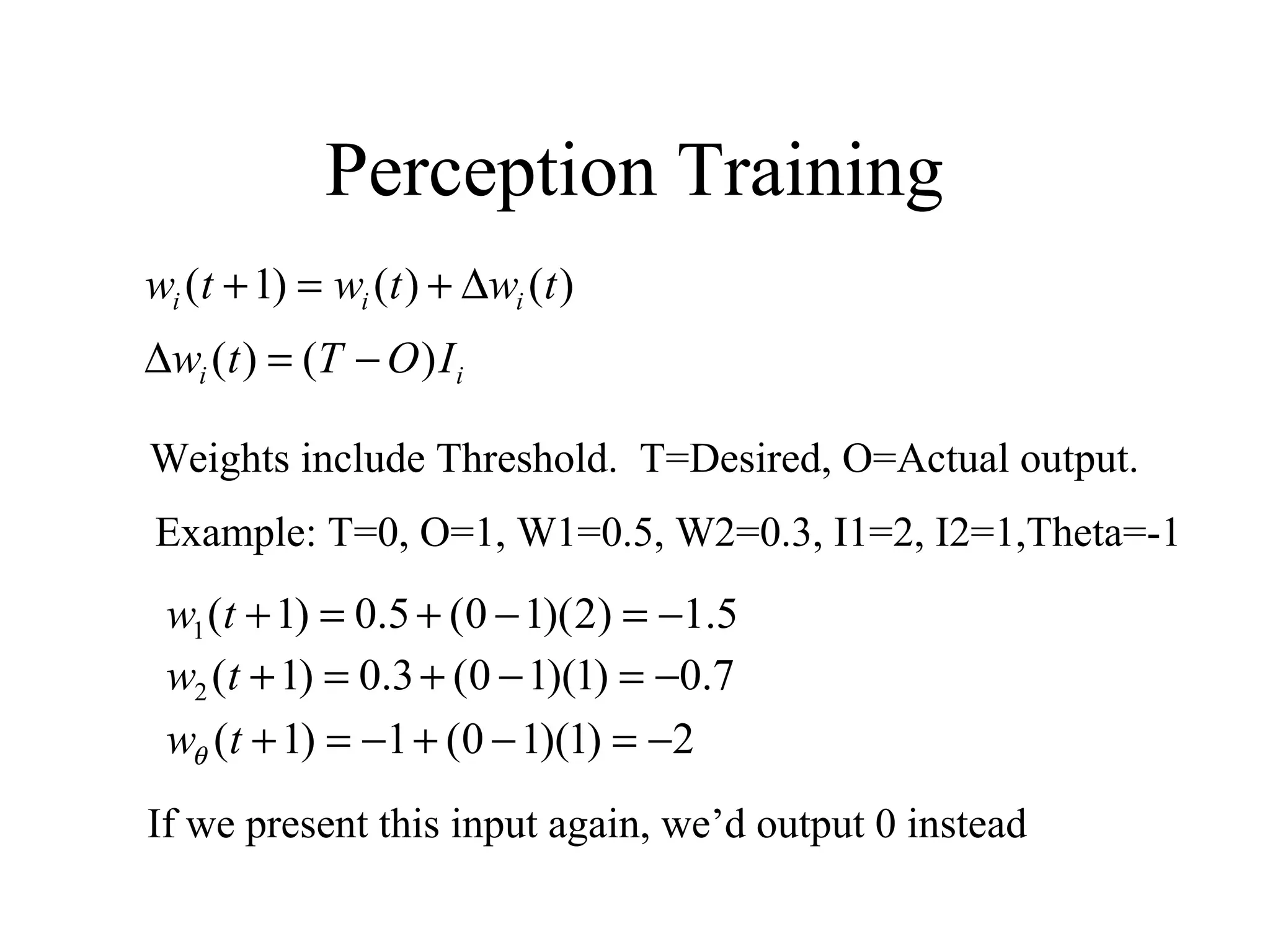

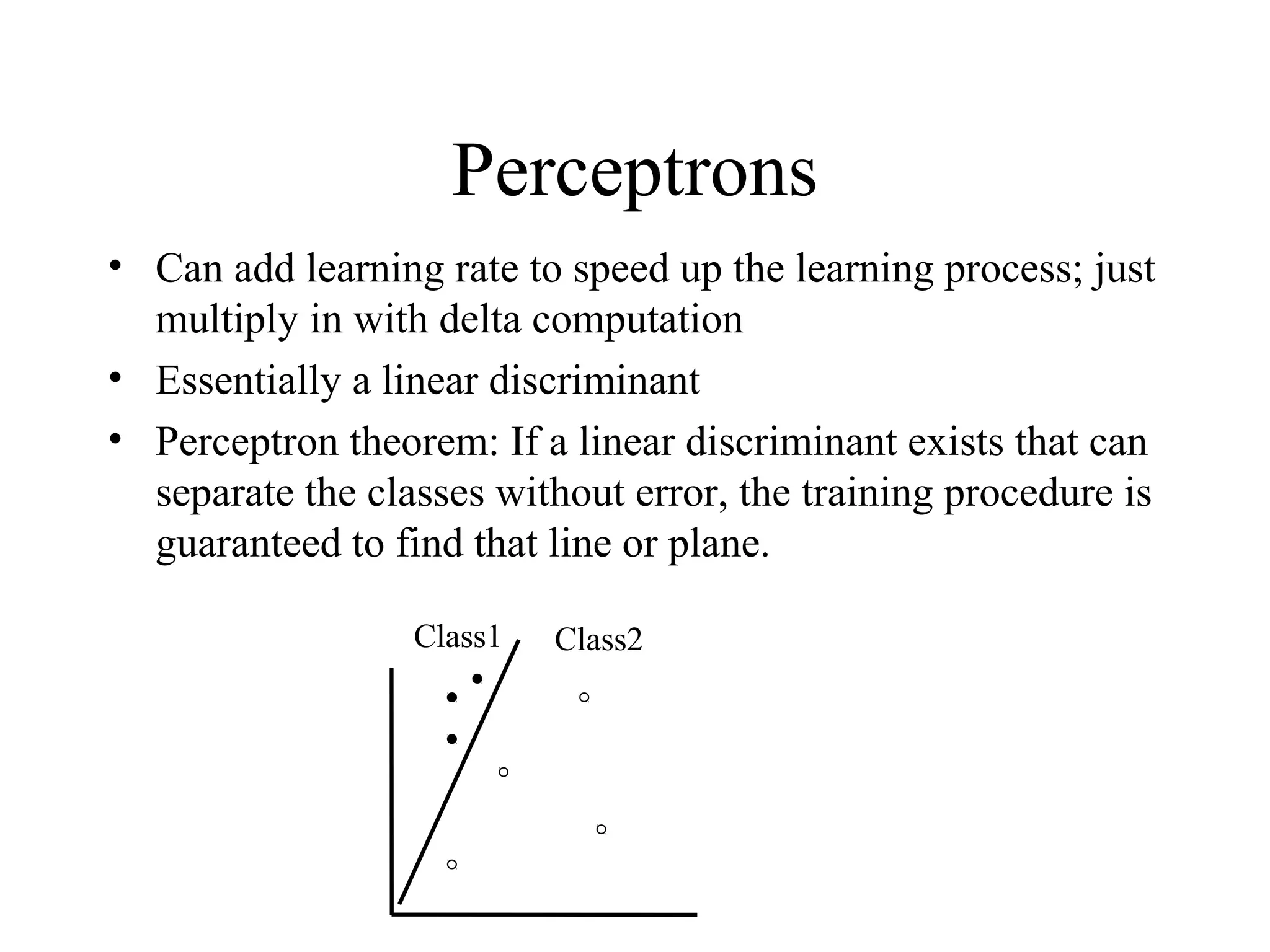

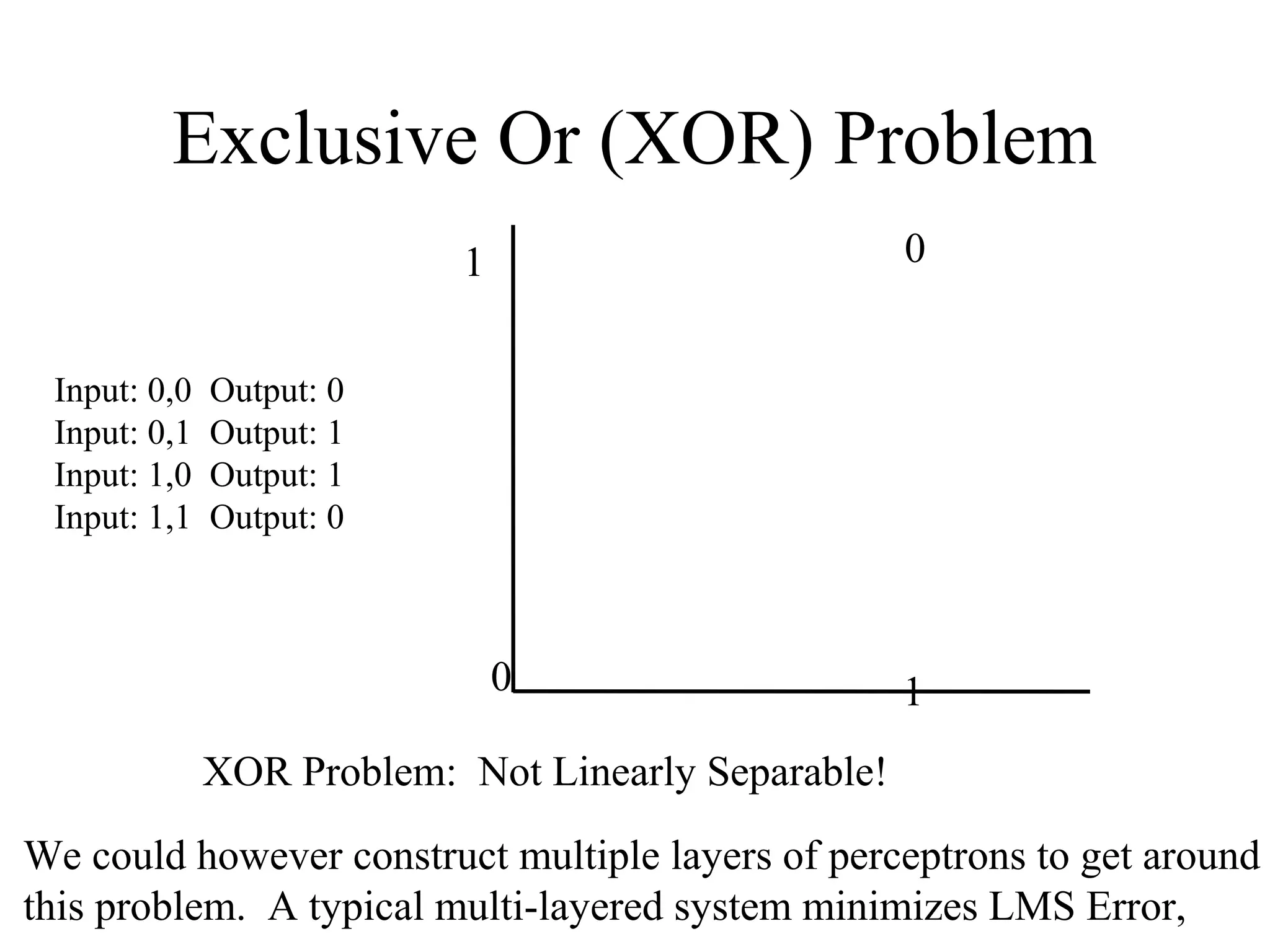

This document provides an introduction to neural networks and connectionism. It discusses what neural networks are, including their basic components and how they learn through gradual changes in connection strengths. The history of neural networks is reviewed, from early work in the 1950s to today's applications in areas like computer vision and autonomous vehicles. The biological inspiration that the brain provides for neural networks is described. Early models such as perceptrons are covered, along with limitations such as the XOR problem. Backpropagation networks, which can in principle approximate a very wide class of functions, are introduced. Finally, Hopfield networks and unsupervised learning techniques such as self-organizing maps are briefly discussed.

![LMS Learning

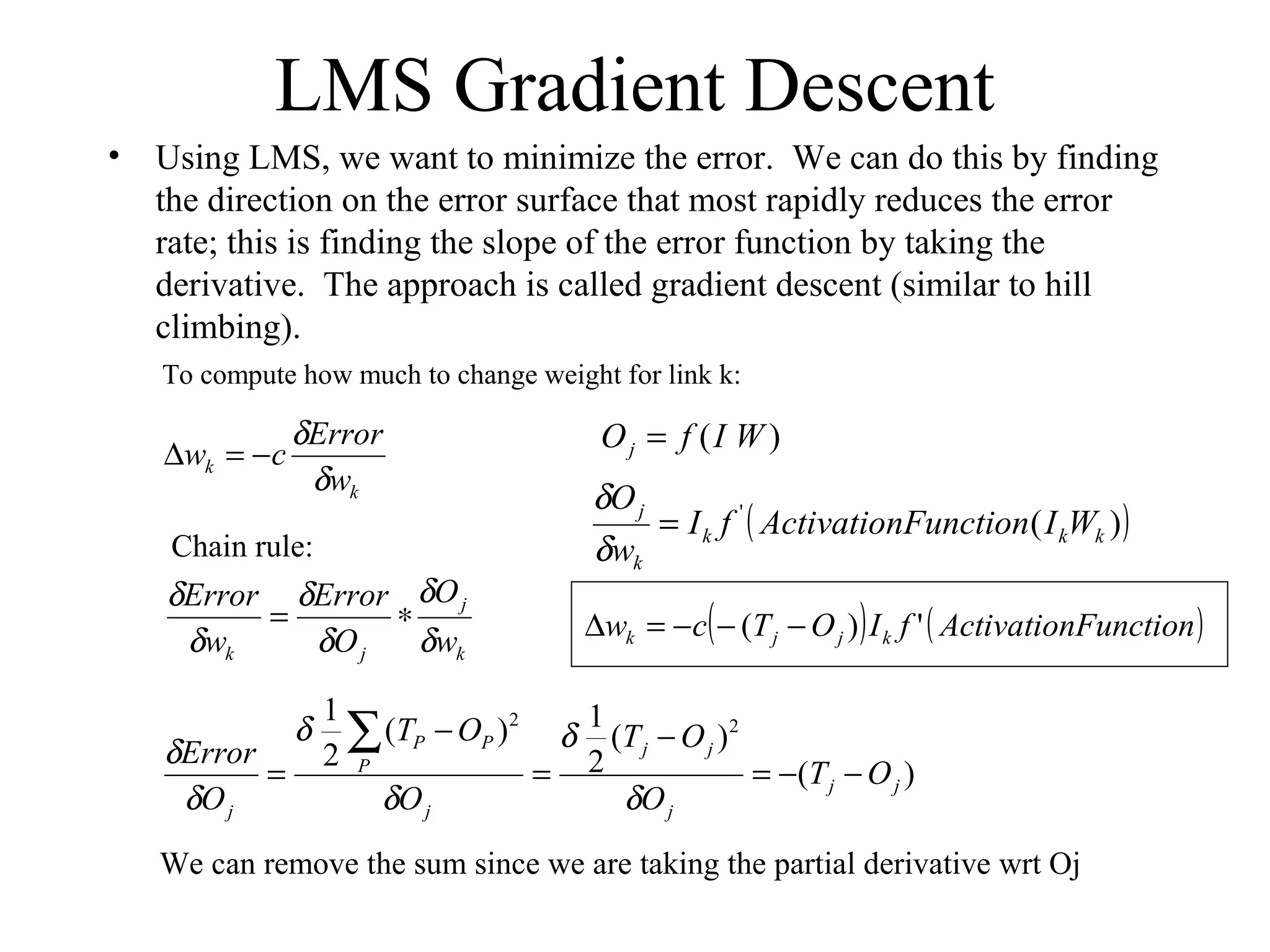

LMS = Least Mean Square learning Systems, more general than the

previous perceptron learning rule. The concept is to minimize the total

error, as measured over all training examples, P. O is the raw output,

as calculated by

( )∑ −=

P

PP OTLMSceDis

2

2

1

)(tan

E.g. if we have two patterns and

T1=1, O1=0.8, T2=0, O2=0.5 then D=(0.5)[(1-0.8)2

+(0-0.5)2

]=.145

We want to minimize the LMS:

E

W

W(old)

W(new)

C-learning rate

∑ +

i

ii Iw θ](https://image.slidesharecdn.com/nn-150514085137-lva1-app6892/75/neuros-14-2048.jpg)
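To make the LMS distance concrete, here is a minimal Python sketch. It computes the raw output O as the weighted sum of the inputs plus θ, evaluates the distance as half the sum of squared differences between targets T and outputs O, and reproduces the two-pattern example (D = 0.145). The function names `raw_output`, `lms_distance`, and `delta_rule_step` are hypothetical, chosen for illustration. The weight update is the standard delta rule (gradient descent with learning rate C), which the slide only hints at through W(old), W(new), and C, so treat that part as an assumption. The patterns in the last lines are made-up illustrative data.

```python
def raw_output(weights, inputs, theta):
    """Raw output O = sum_i w_i * I_i + theta."""
    return sum(w * x for w, x in zip(weights, inputs)) + theta


def lms_distance(targets, outputs):
    """LMS distance: 0.5 * sum over patterns of (T_p - O_p)^2."""
    return 0.5 * sum((t - o) ** 2 for t, o in zip(targets, outputs))


def delta_rule_step(weights, theta, patterns, c):
    """One batch gradient-descent step on the LMS distance.

    Assumption: the standard delta rule, W(new) = W(old) - C * dE/dW.
    `patterns` is a list of (inputs, target) pairs; `c` is the learning rate.
    """
    grad_w = [0.0] * len(weights)
    grad_theta = 0.0
    for inputs, target in patterns:
        err = target - raw_output(weights, inputs, theta)
        for i, x in enumerate(inputs):
            grad_w[i] -= err * x  # dE/dw_i = -(T - O) * I_i
        grad_theta -= err         # dE/dtheta = -(T - O)
    new_weights = [w - c * g for w, g in zip(weights, grad_w)]
    new_theta = theta - c * grad_theta
    return new_weights, new_theta


# Worked example from the slide: T1=1, O1=0.8, T2=0, O2=0.5
print(round(lms_distance([1, 0], [0.8, 0.5]), 3))  # 0.145

# One update step on illustrative (made-up) patterns
patterns = [([1.0, 0.0], 1.0), ([0.0, 1.0], 0.0)]
w, theta = delta_rule_step([0.0, 0.0], 0.0, patterns, c=0.1)
```

With a sufficiently small learning rate, each such step moves the weights downhill on the error surface, so the LMS distance over the training patterns shrinks from one step to the next.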