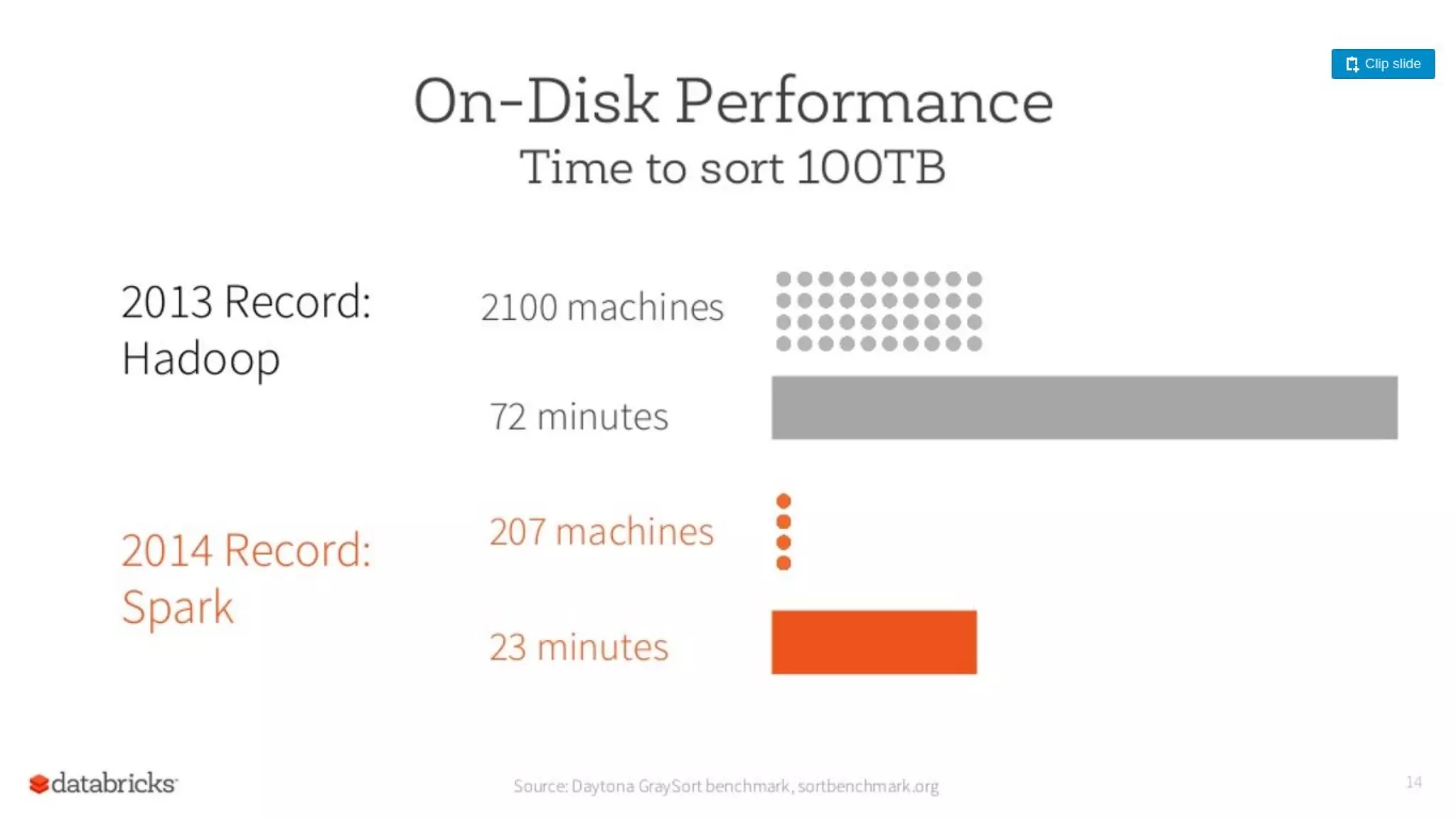

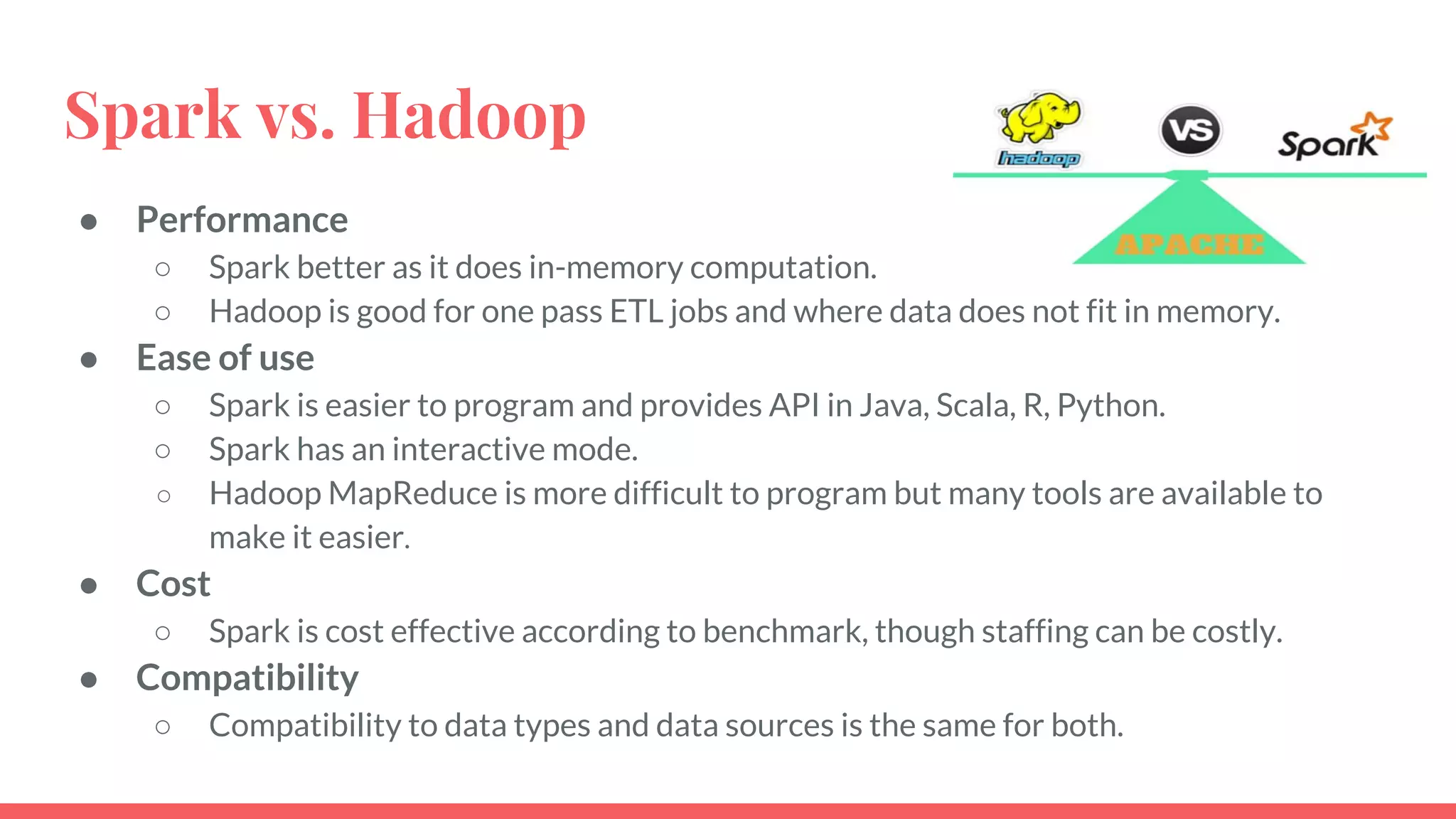

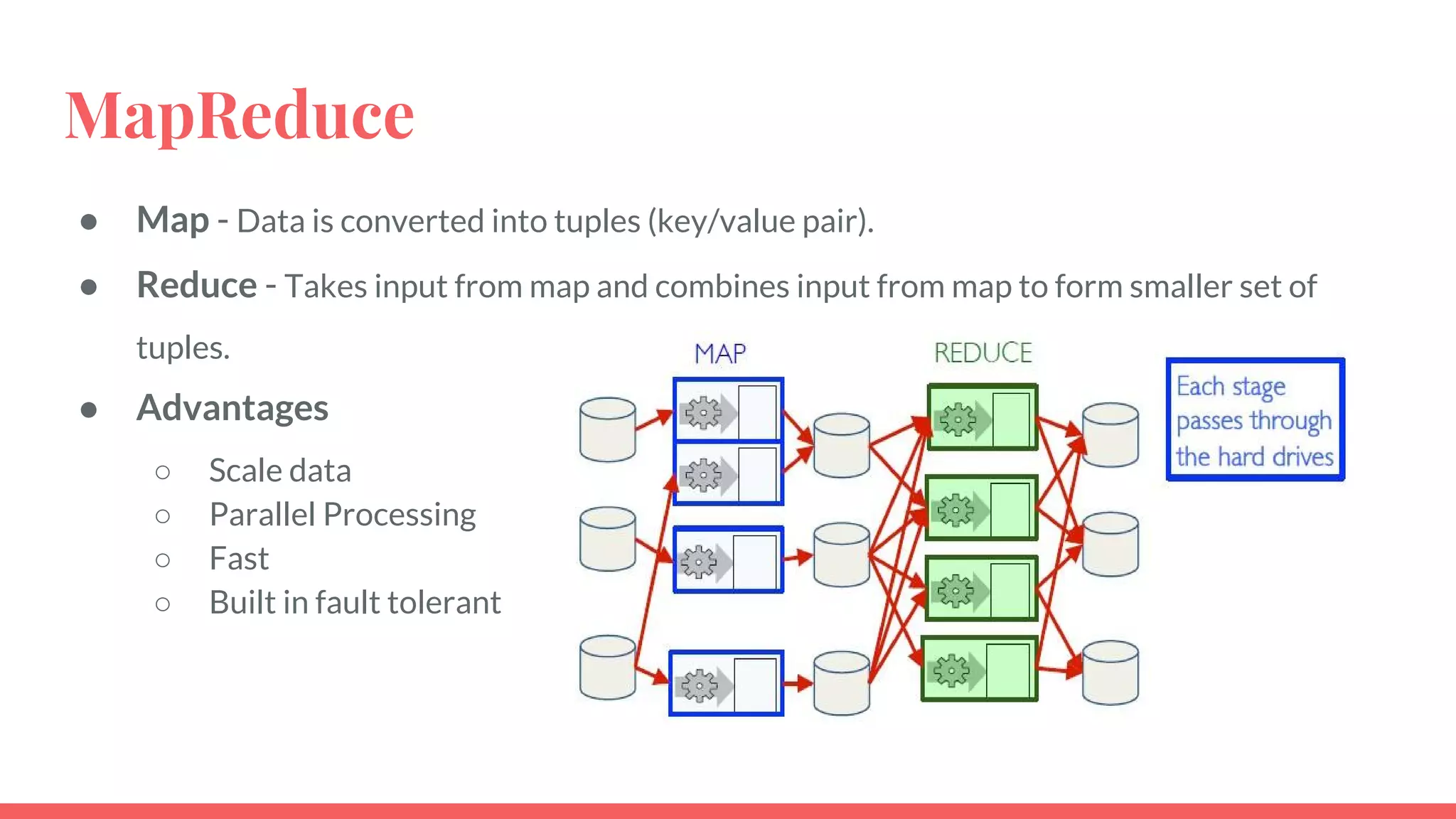

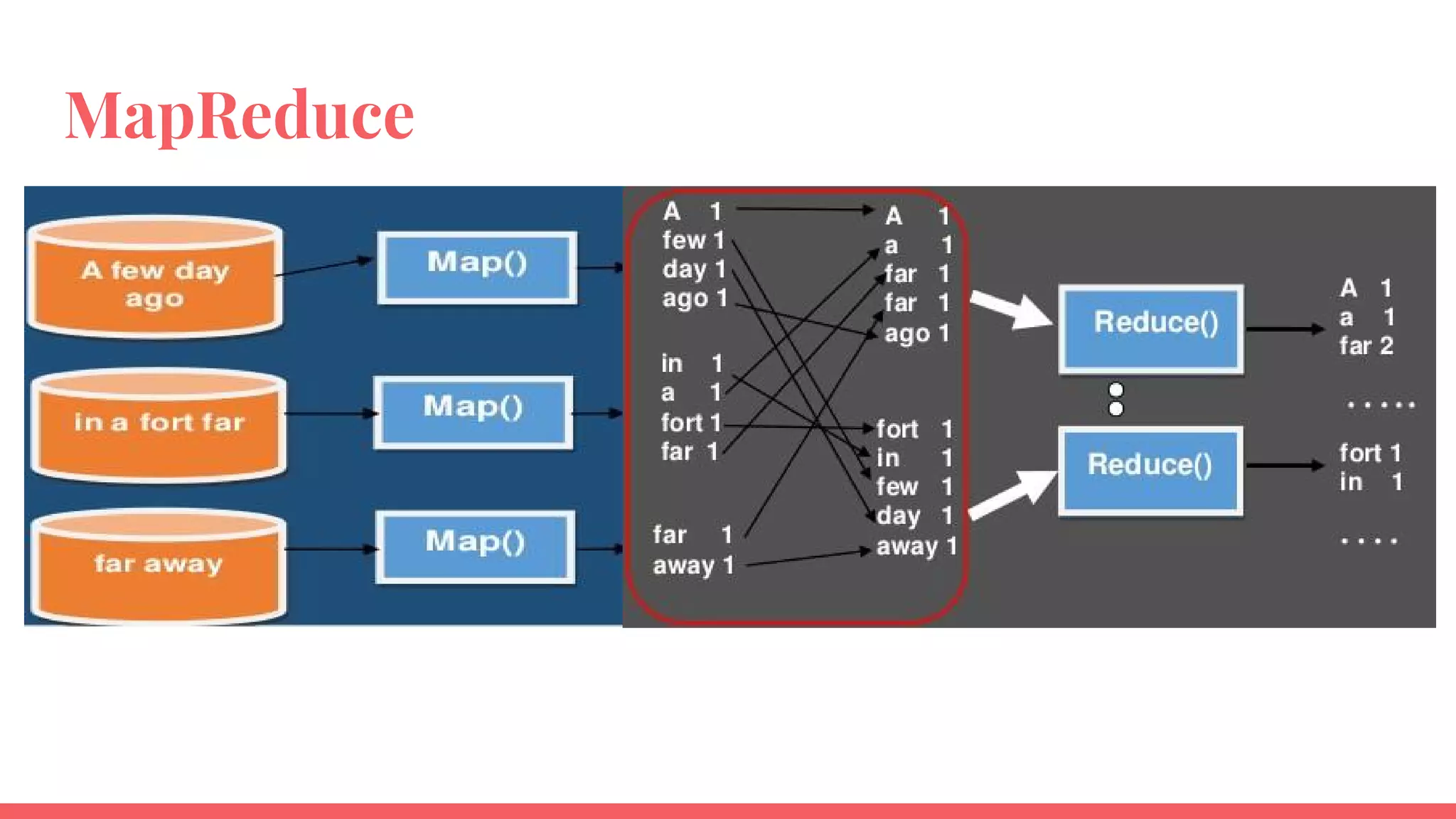

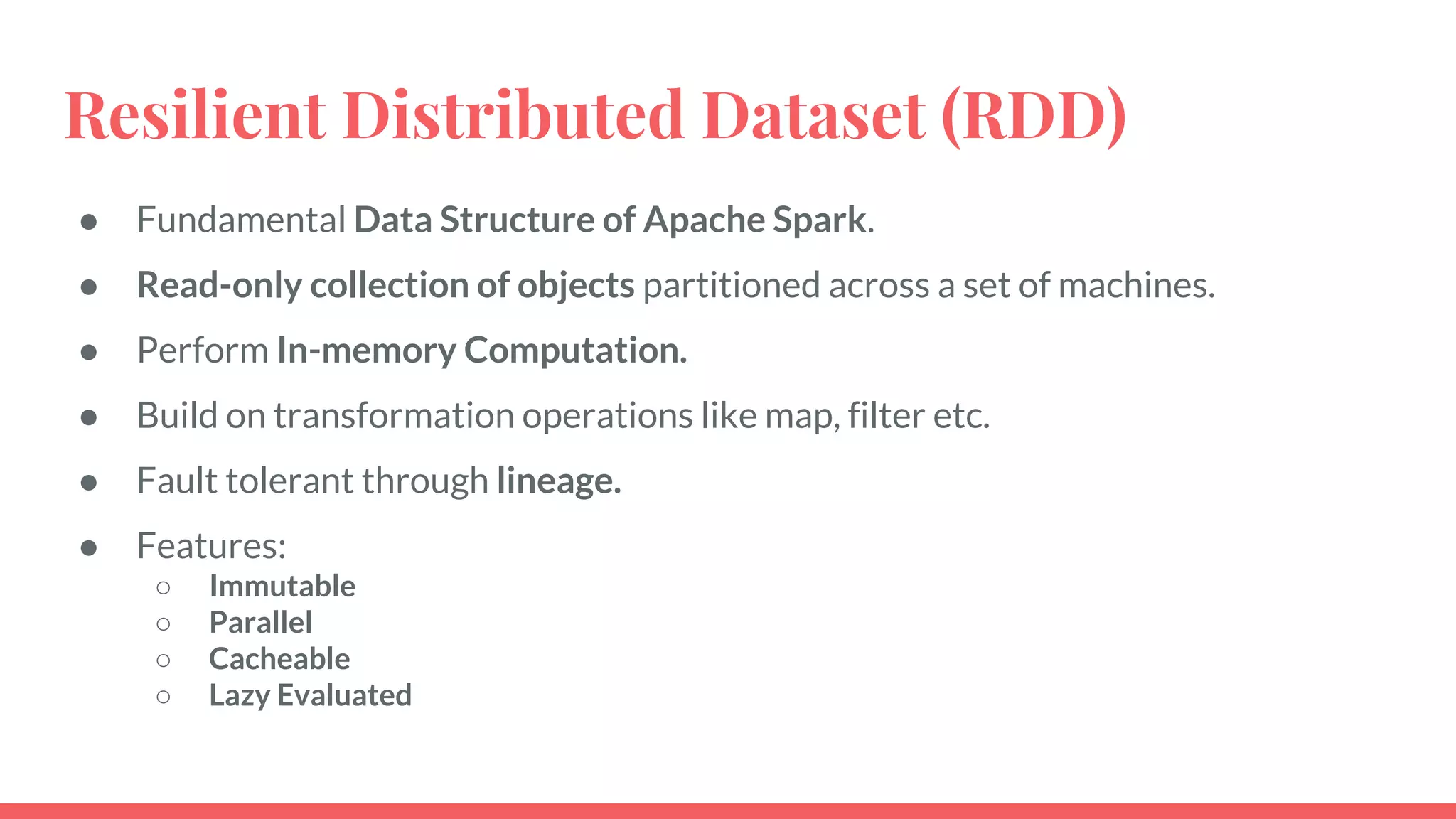

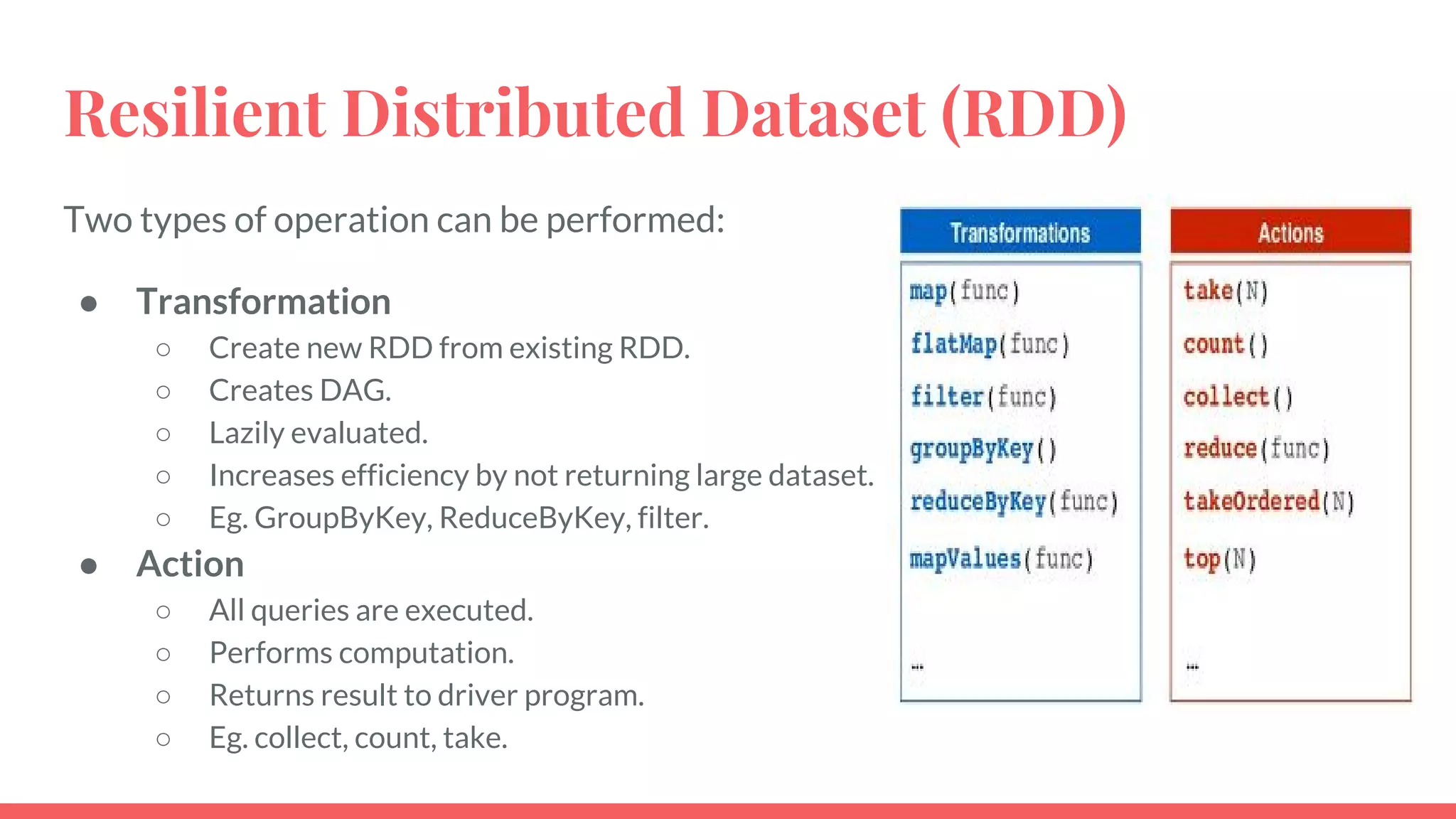

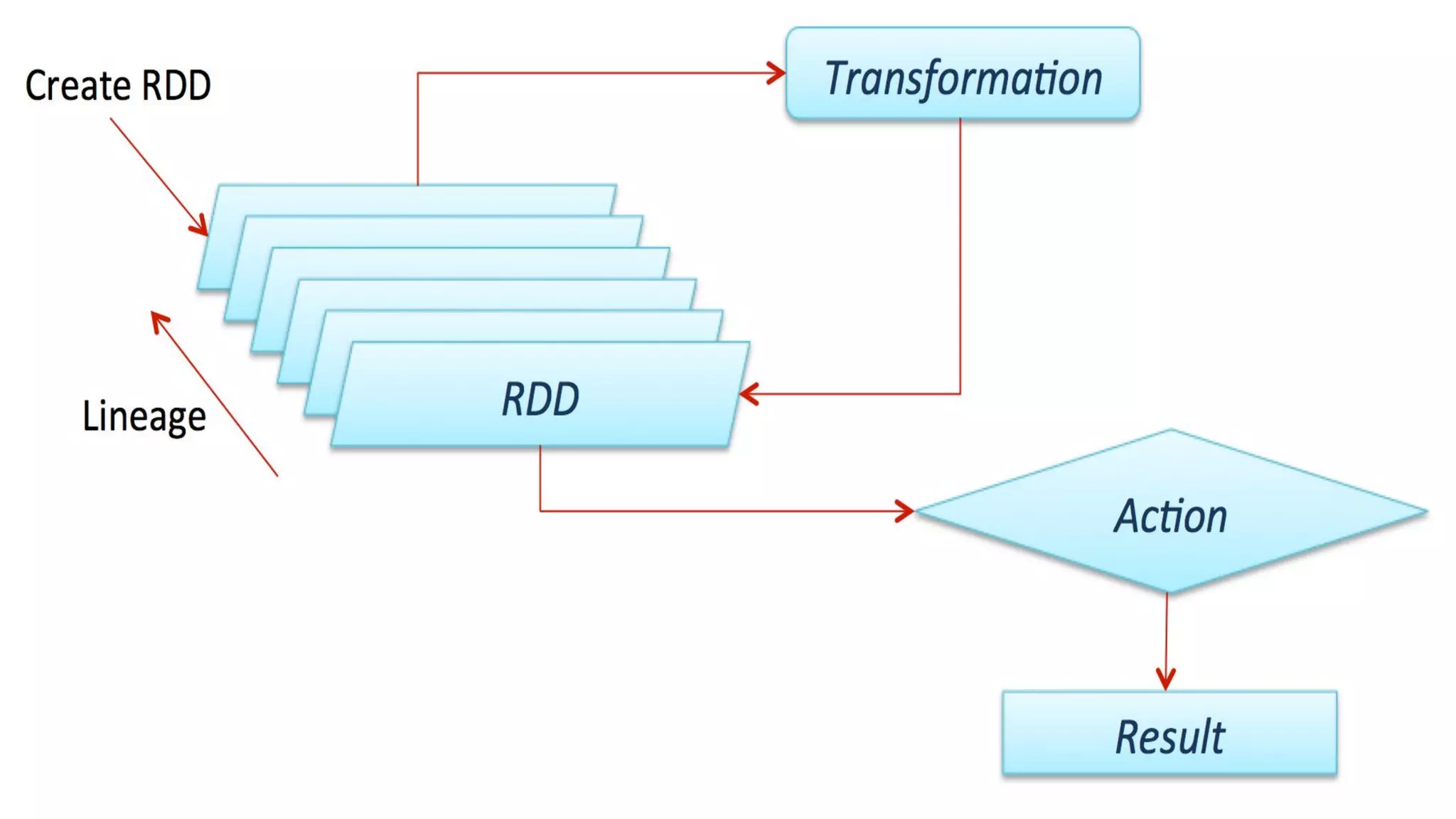

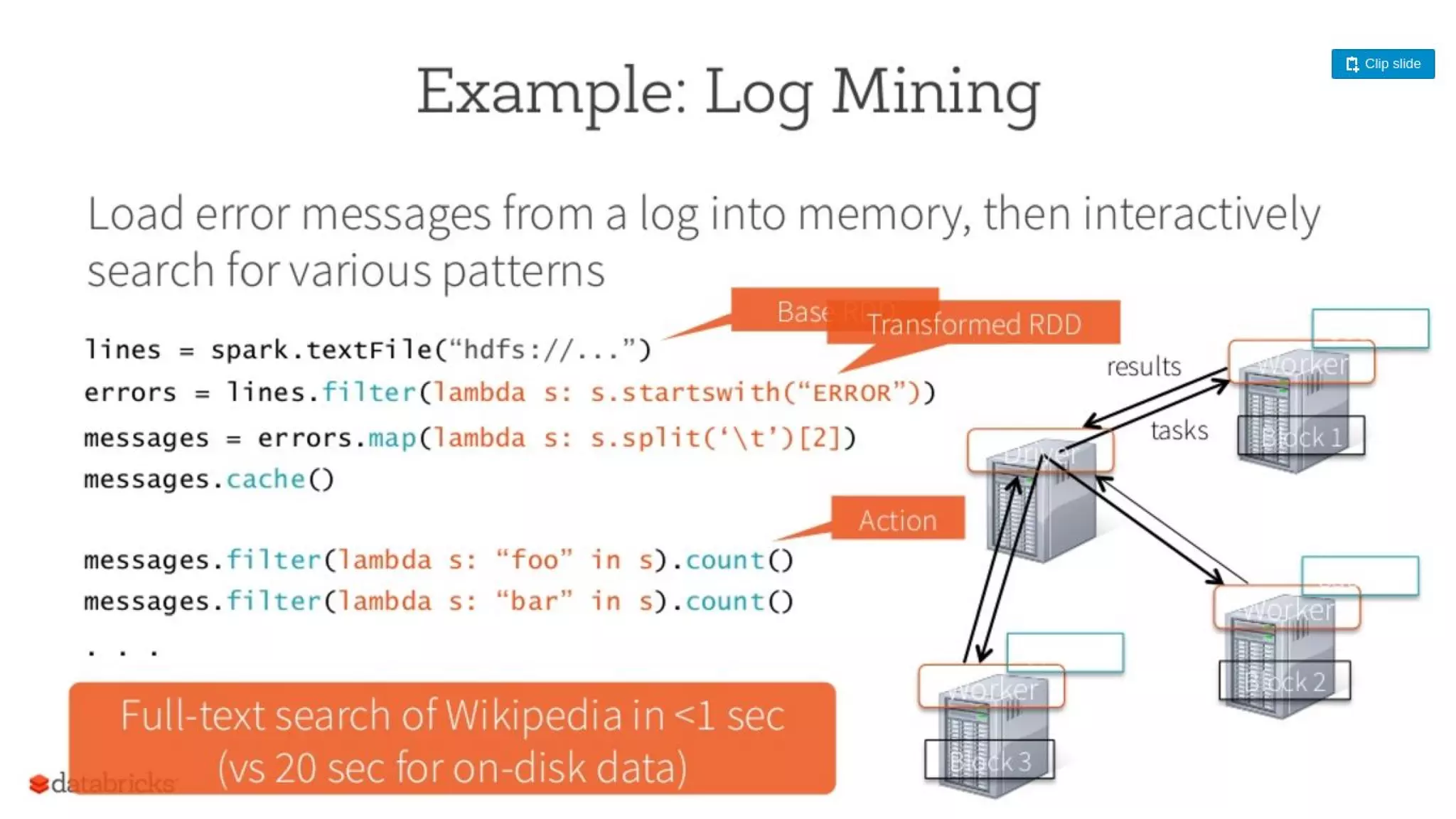

This document provides an overview of Apache Spark and compares it with Hadoop MapReduce. It defines big data and presents Spark as a solution for processing large datasets in parallel. Spark improves on MapReduce by supporting in-memory computation through Resilient Distributed Datasets (RDDs), which makes it faster, especially for iterative jobs that reuse the same data. Spark is also easier to program, thanks to its rich APIs. While MapReduce is fault tolerant, Spark's caching improves performance. Both are widely used, but Spark sees more adoption for real-time applications because of its speed.

![Creating RDD

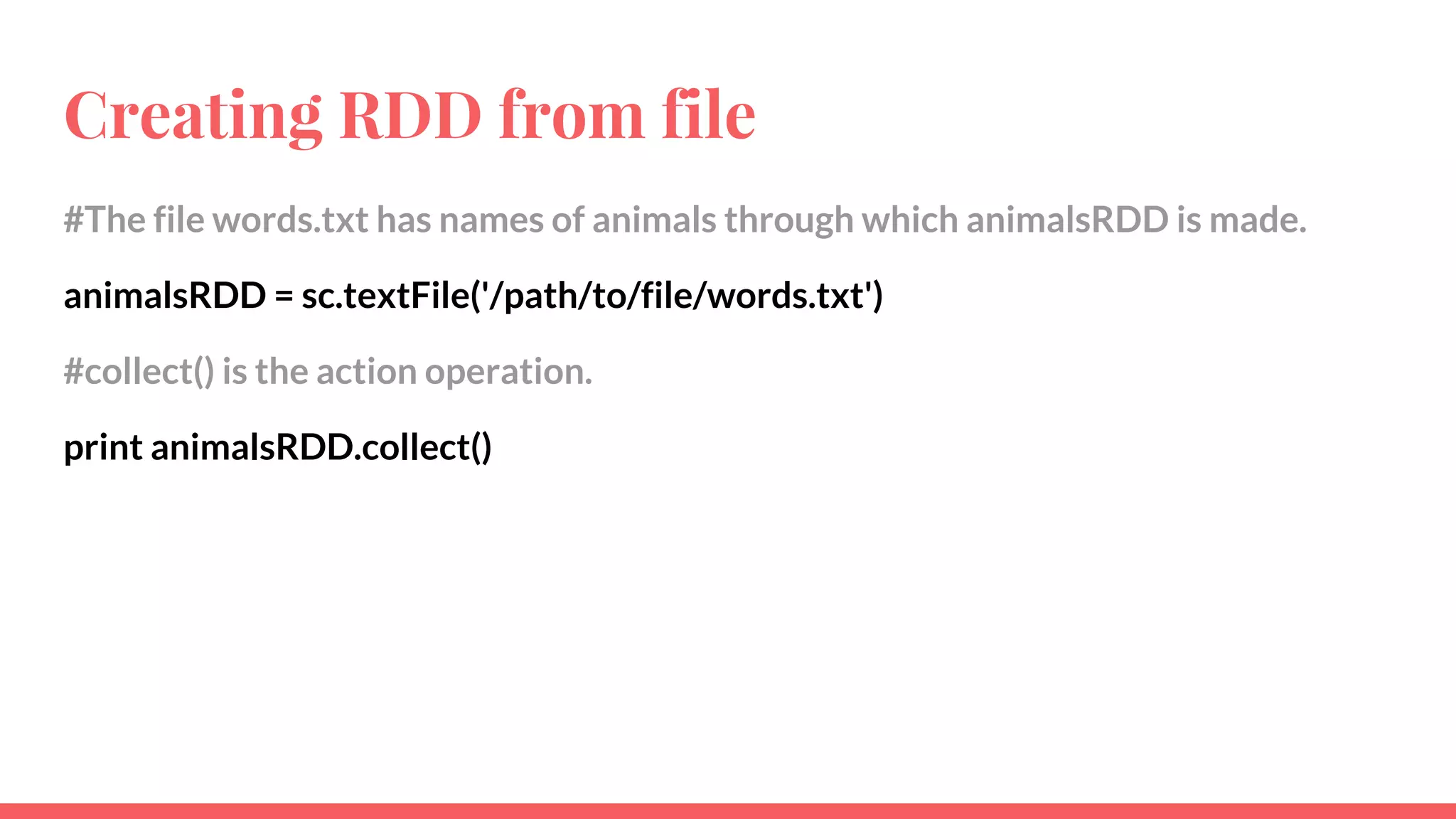

# Creates a list of animal.

animals = ['cat', 'dog', 'elephant', 'cat', 'mouse', ’cat’]

# Parallelize method is used to create RDD from list. Here “animalRDD” is created.

#sc is Object of Spark Context.

animalRDD = sc.parallelize(animals)

# Since RDD is lazily evaluated, to print it we perform an action operation, i.e.

collect() which is used to print the RDD.

print animalRDD.collect()

Output - ['cat', 'dog', 'elephant', 'cat', 'mouse', 'cat']](https://image.slidesharecdn.com/apachesparkppt-170105054406/75/NaukriEngineering-Apache-Spark-16-2048.jpg)
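The lazy evaluation mentioned in the comments can be illustrated without a cluster. The following is only a plain-Python analogy, not Spark code: Python 3's built-in `map` also defers work until its result is consumed, much as a transformation does nothing until an action such as `collect()` runs. The names `calls` and `tag` are hypothetical helpers introduced for this sketch.

```python
# Plain-Python analogy for lazy evaluation (this is not Spark code).
animals = ['cat', 'dog', 'elephant', 'cat', 'mouse', 'cat']
calls = []  # hypothetical log of the elements that were actually processed


def tag(animal):
    """Record that the element was processed, then return an (animal, 1) pair."""
    calls.append(animal)
    return (animal, 1)


# Building the pipeline does no work yet, similar to a Spark transformation.
lazy_pairs = map(tag, animals)
work_before = len(calls)  # nothing has been evaluated at this point

# Consuming the pipeline forces evaluation, similar to the collect() action.
pairs = list(lazy_pairs)
work_after = len(calls)   # every element has now been processed

print(work_before, work_after)
```

The first number printed is the amount of work done before the result was requested, and the second is the amount done afterwards.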

![Map operation on RDD](https://image.slidesharecdn.com/apachesparkppt-170105054406/75/NaukriEngineering-Apache-Spark-18-2048.jpg)

    # To count how often each animal appears, first build a (key, value) pair
    # (animal, 1) for every animal. A later reduce operation adds up the values.
    # lambda is used to write inline functions in Python.
    mapRDD = animalRDD.map(lambda x: (x, 1))

    print(mapRDD.collect())

Output - [('cat', 1), ('dog', 1), ('elephant', 1), ('cat', 1), ('mouse', 1), ('cat', 1)]

![Reduce operation on RDD](https://image.slidesharecdn.com/apachesparkppt-170105054406/75/NaukriEngineering-Apache-Spark-19-2048.jpg)

    # reduceByKey applies a reduce operation to all values that share a key.
    # The function passed as its argument adds the values for the same key,
    # which gives the count of each animal.
    # collect() may return the pairs in a different order.
    reduceRDD = mapRDD.reduceByKey(lambda x, y: x + y)

    print(reduceRDD.collect())

Output - [('cat', 3), ('dog', 1), ('elephant', 1), ('mouse', 1)]
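To make the per-key merge concrete, here is a minimal local sketch of what `reduceByKey` computes, assuming a single machine and no partitioning. `reduce_by_key` is a hypothetical stand-in written for illustration; it is not part of PySpark and says nothing about how Spark distributes the work.

```python
from collections import defaultdict
from functools import reduce


def reduce_by_key(pairs, func):
    """Hypothetical local stand-in for reduceByKey: merge the values of each key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)  # collect all values that share a key
    # Fold each key's values into one result with the supplied function.
    return {key: reduce(func, values) for key, values in grouped.items()}


pairs = [('cat', 1), ('dog', 1), ('elephant', 1),
         ('cat', 1), ('mouse', 1), ('cat', 1)]
counts = reduce_by_key(pairs, lambda x, y: x + y)

# Sort by key so the printed order is deterministic.
print(sorted(counts.items()))
# [('cat', 3), ('dog', 1), ('elephant', 1), ('mouse', 1)]
```

Because the same function may be applied to partial results in any grouping, it should be associative and commutative, as addition is here.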

![Filter operation on RDD](https://image.slidesharecdn.com/apachesparkppt-170105054406/75/NaukriEngineering-Apache-Spark-20-2048.jpg)

    # Keep only the animals in reduceRDD whose count is greater than 2.
    # x is an (animal, count) tuple: x[0] is the animal name, x[1] is its count.
    # Therefore reduceRDD is filtered on x[1] > 2.
    filterRDD = reduceRDD.filter(lambda x: x[1] > 2)

    print(filterRDD.collect())

Output - [('cat', 3)]
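As a cross-check of the outputs shown on the slides, the same counts and the same filter can be reproduced locally with the standard library. This sketch assumes plain Python and no Spark installation. `collections.Counter` replaces the map and reduce steps here, so it verifies the results rather than the RDD API.

```python
from collections import Counter

animals = ['cat', 'dog', 'elephant', 'cat', 'mouse', 'cat']

# Counter does locally what the (animal, 1) pairs plus the per-key sum achieve.
counts = Counter(animals)

# Same predicate as the filter step: keep animals seen more than twice.
frequent = [(animal, n) for animal, n in counts.items() if n > 2]

print(sorted(counts.items()))  # [('cat', 3), ('dog', 1), ('elephant', 1), ('mouse', 1)]
print(frequent)                # [('cat', 3)]
```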