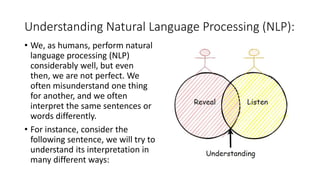

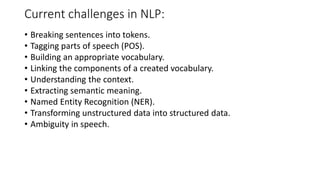

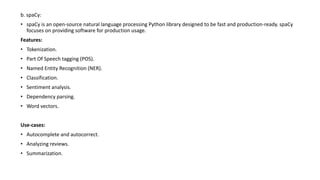

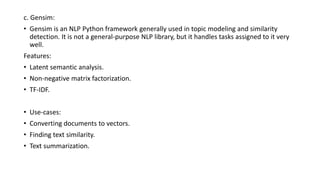

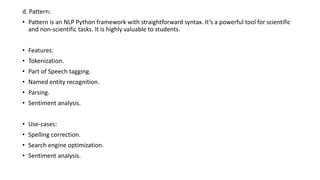

Natural language processing (NLP) is the field concerned with enabling computers to interpret human language, particularly unstructured text. Its applications include machine translation, speech recognition, question answering, and text summarization. Understanding language requires analyzing words, sentences, context, and meaning. Common NLP tasks include tokenization, part-of-speech tagging, and named entity recognition. Popular Python libraries that help with these tasks include NLTK, spaCy, Gensim, Pattern, and TextBlob.
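
To make the first of these tasks concrete, here is a minimal sketch of tokenization using only Python's standard library. The names `TOKEN_PATTERN`, `tokenize`, and `split_sentences` are hypothetical and chosen for illustration; they are not part of any of the libraries listed above. The regular expressions are deliberately naive (for example, they will split abbreviations such as "Dr." incorrectly), which is one reason dedicated tokenizers in libraries such as NLTK or spaCy are usually preferred in practice.

```python
import re

# Assumption for illustration: a token is either a run of word characters
# (optionally joined by internal apostrophes or hyphens, as in "isn't" or
# "part-of-speech") or a single punctuation character.
TOKEN_PATTERN = re.compile(r"\w+(?:['-]\w+)*|[^\w\s]")


def tokenize(text):
    """Split text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)


def split_sentences(text):
    """Naively split text into sentences after '.', '!' or '?'."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]


if __name__ == "__main__":
    sample = "NLP isn't magic, it's math. Tokens come first!"
    for sentence in split_sentences(sample):
        print(tokenize(sentence))
```

Under these assumptions, `tokenize("NLP isn't magic, it's math.")` returns `['NLP', "isn't", 'magic', ',', "it's", 'math', '.']`. Later stages such as part-of-speech tagging and named entity recognition typically operate on token lists like this one.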