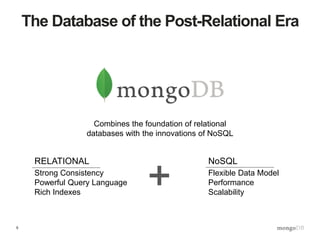

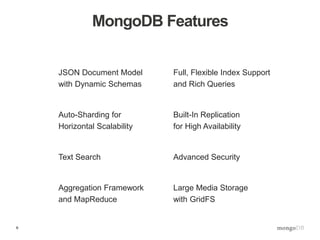

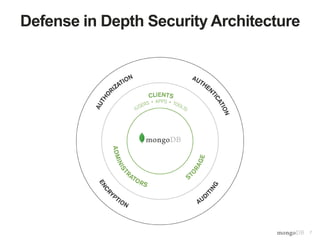

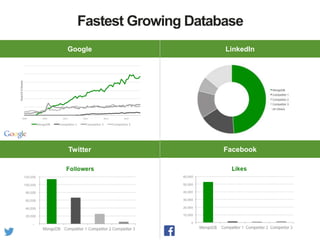

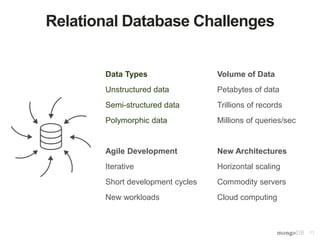

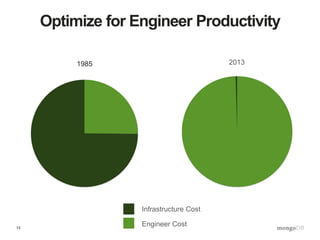

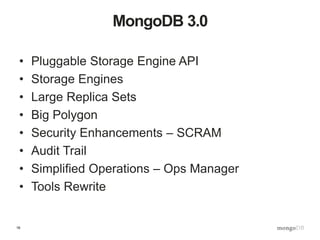

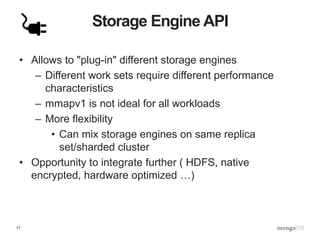

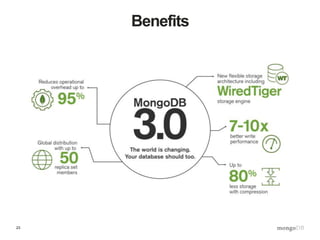

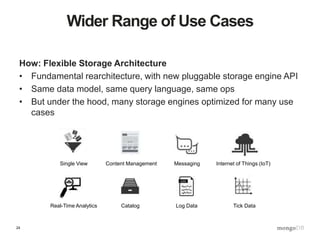

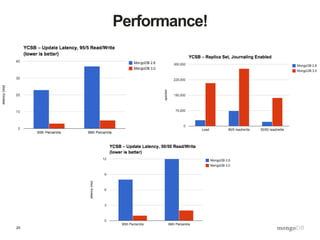

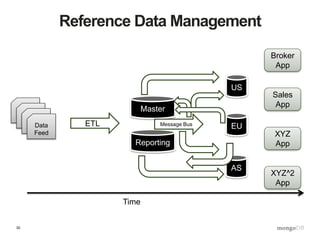

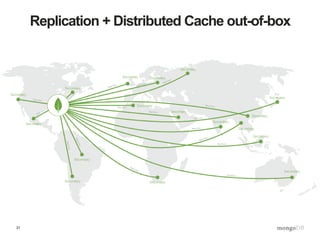

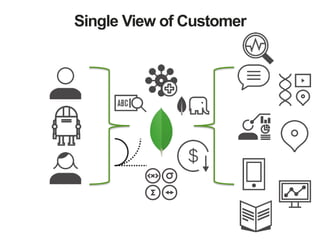

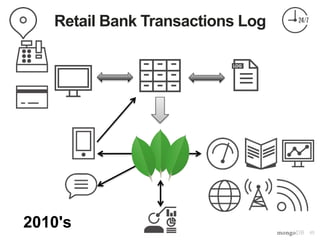

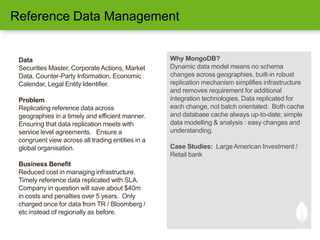

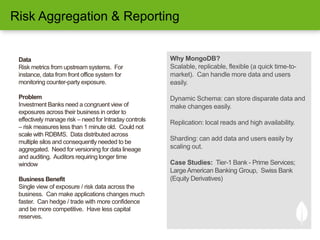

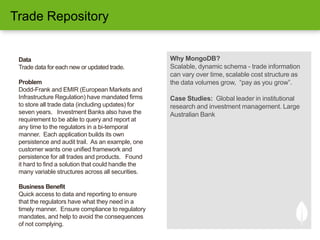

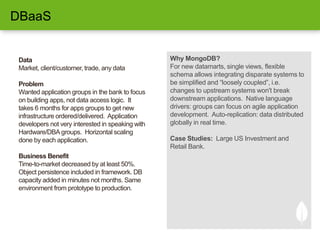

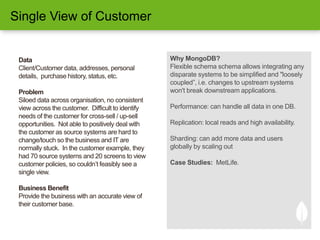

The document presents an overview of MongoDB 3.0, a database designed to address the limitations of traditional relational databases, and highlights features such as its flexible JSON document model and pluggable storage engines. It outlines use cases in financial services, including risk aggregation and reference data management, and emphasizes MongoDB's scalability and efficiency in handling large datasets in real time. It promotes MongoDB as a solution for dynamic, high-availability applications that require rapid deployment and integration across diverse data sources.