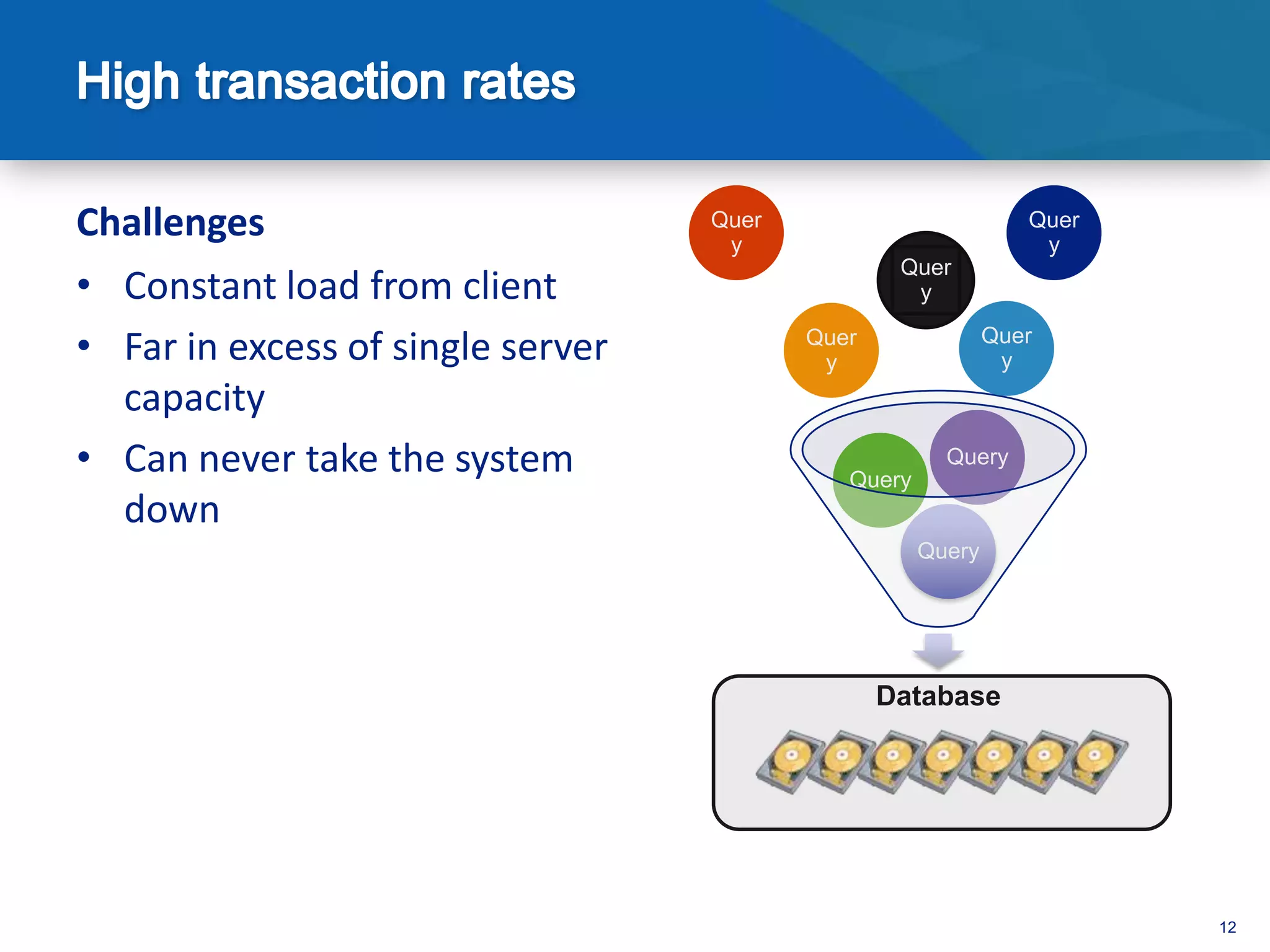

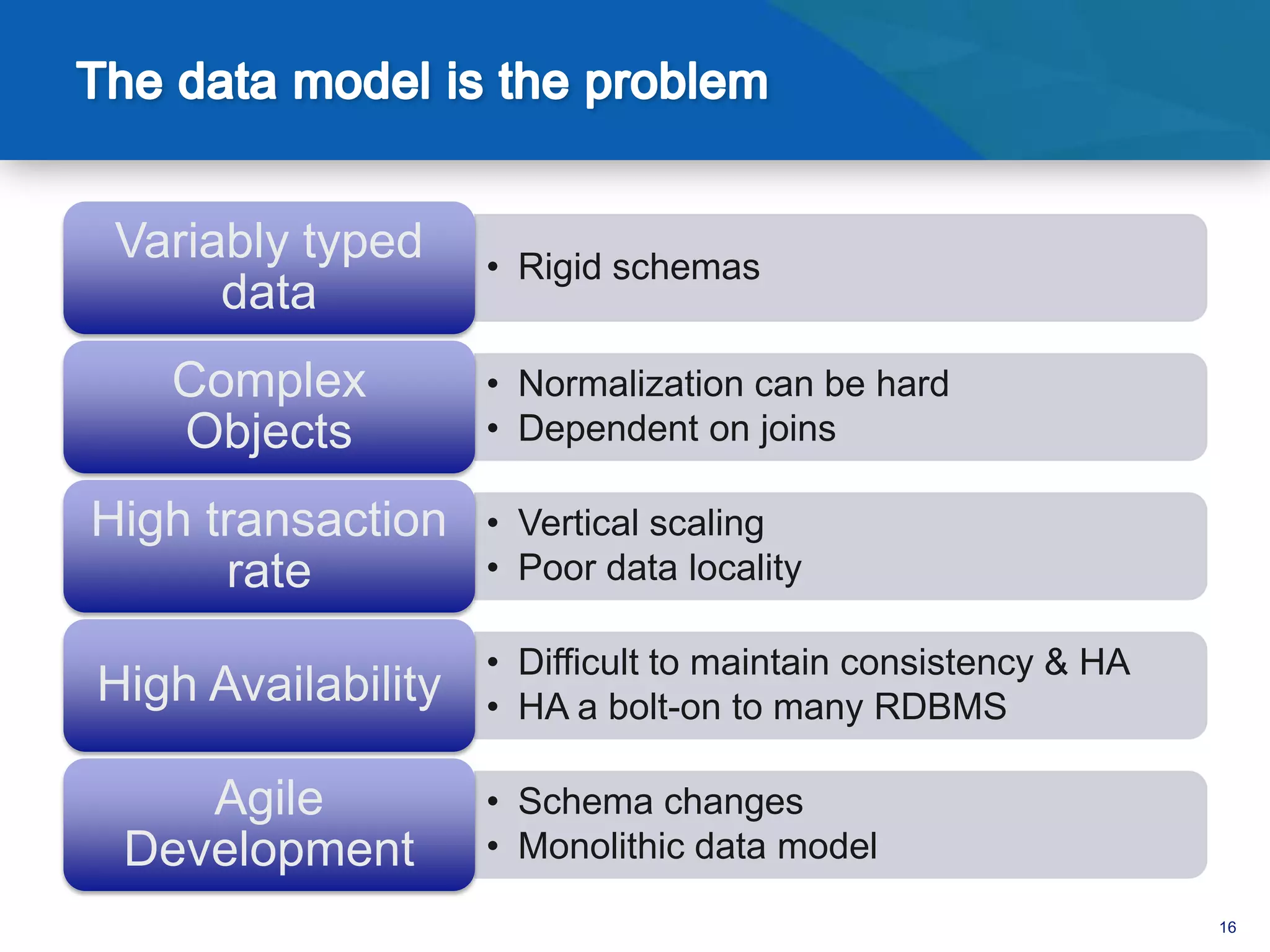

An open source, high performance database provides the following key benefits:

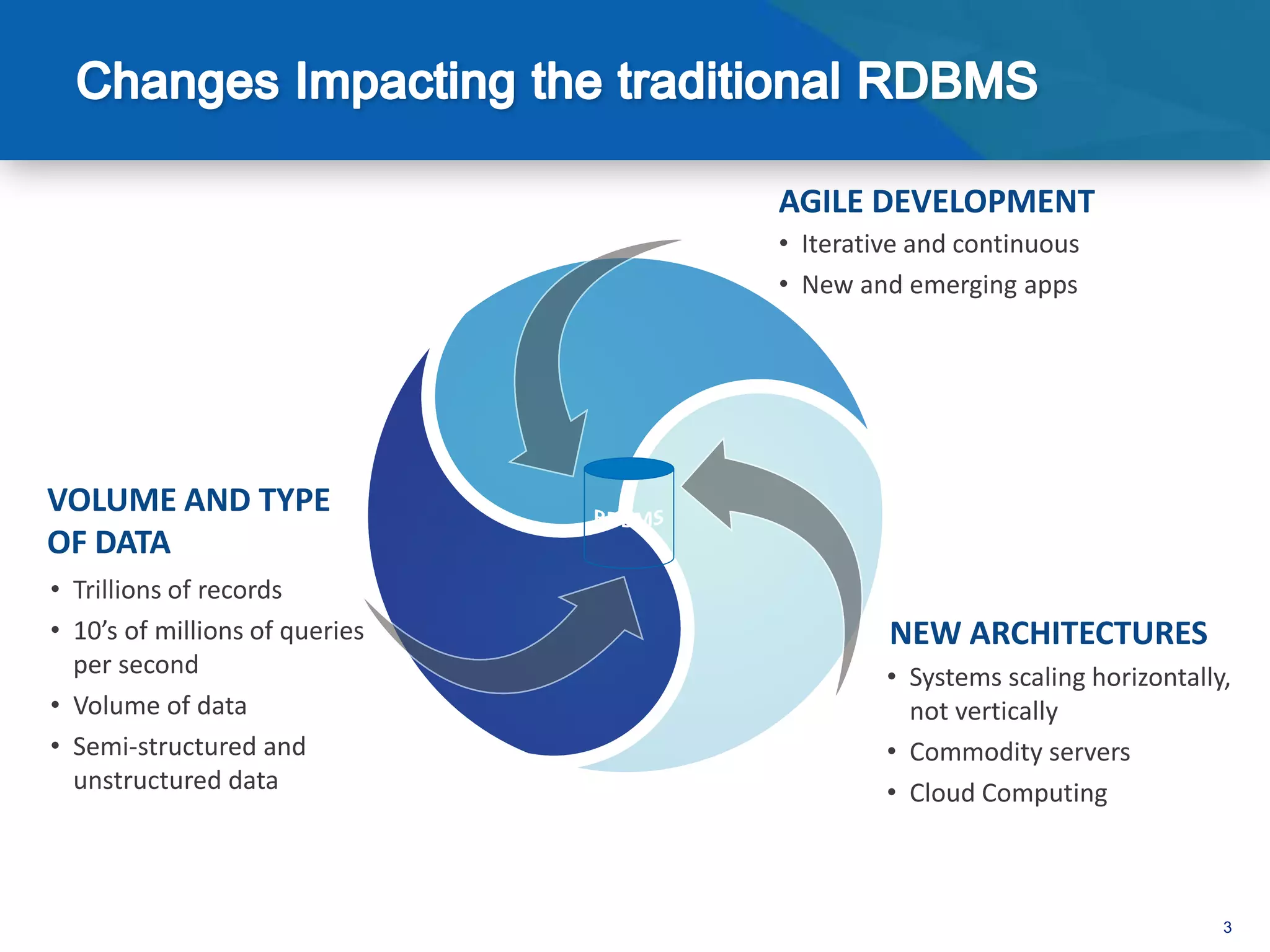

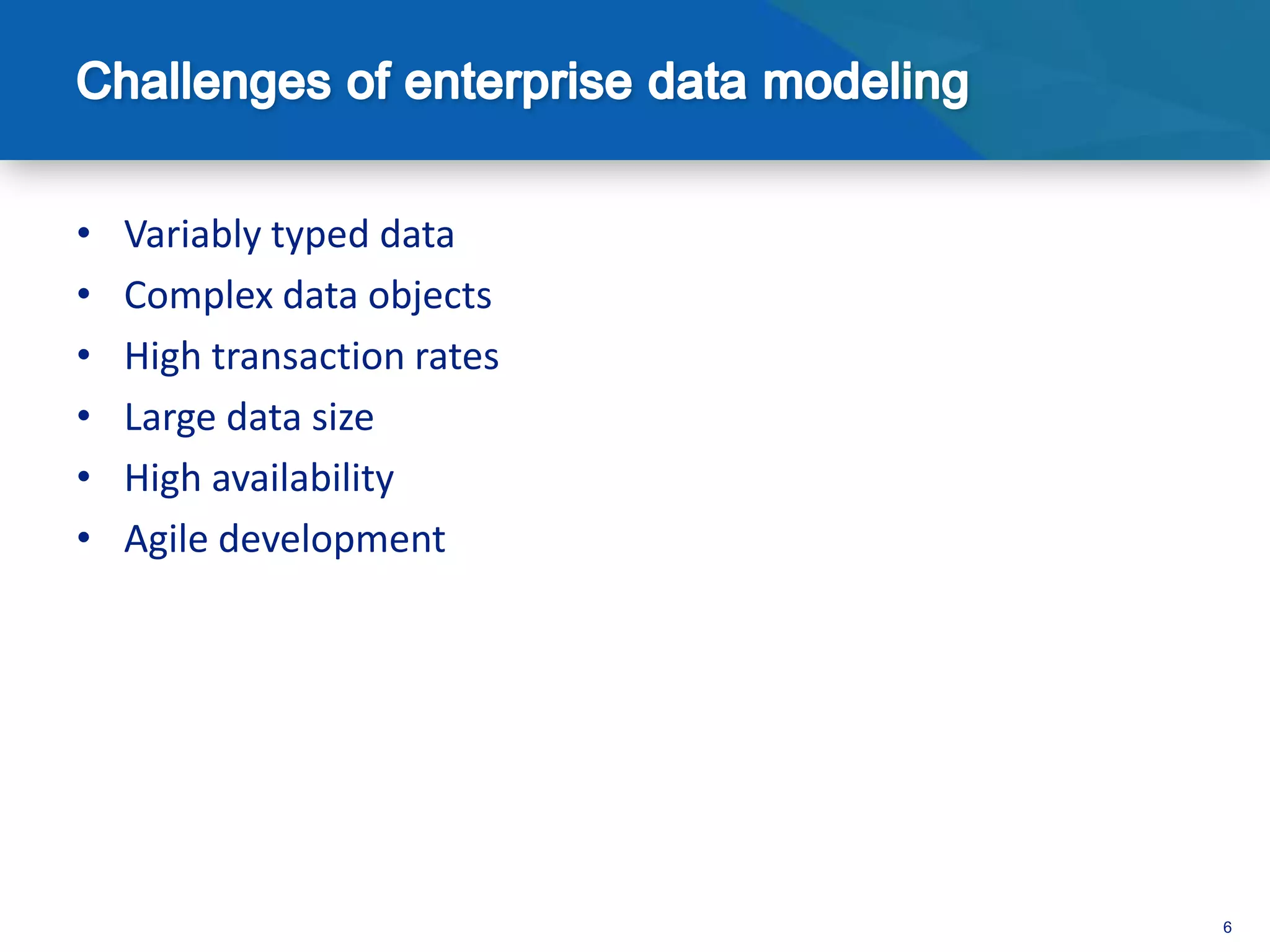

- It can handle variably typed data, complex objects, high transaction rates, and large data sizes while maintaining high availability.

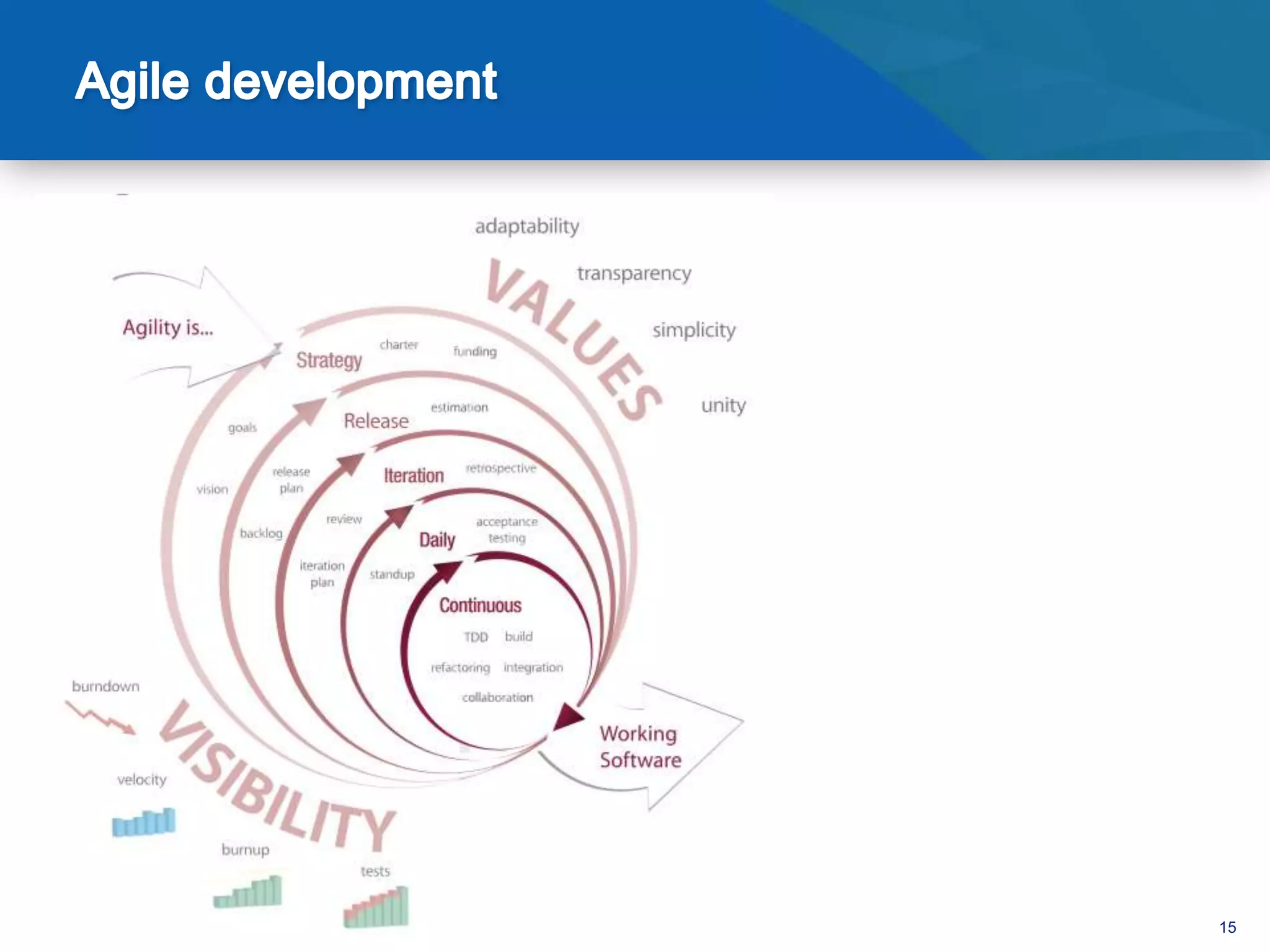

- It supports agile, iterative development and adapts to emerging applications.

- It can scale horizontally to massive volumes and types of data by distributing data and queries across commodity servers and cloud computing resources.

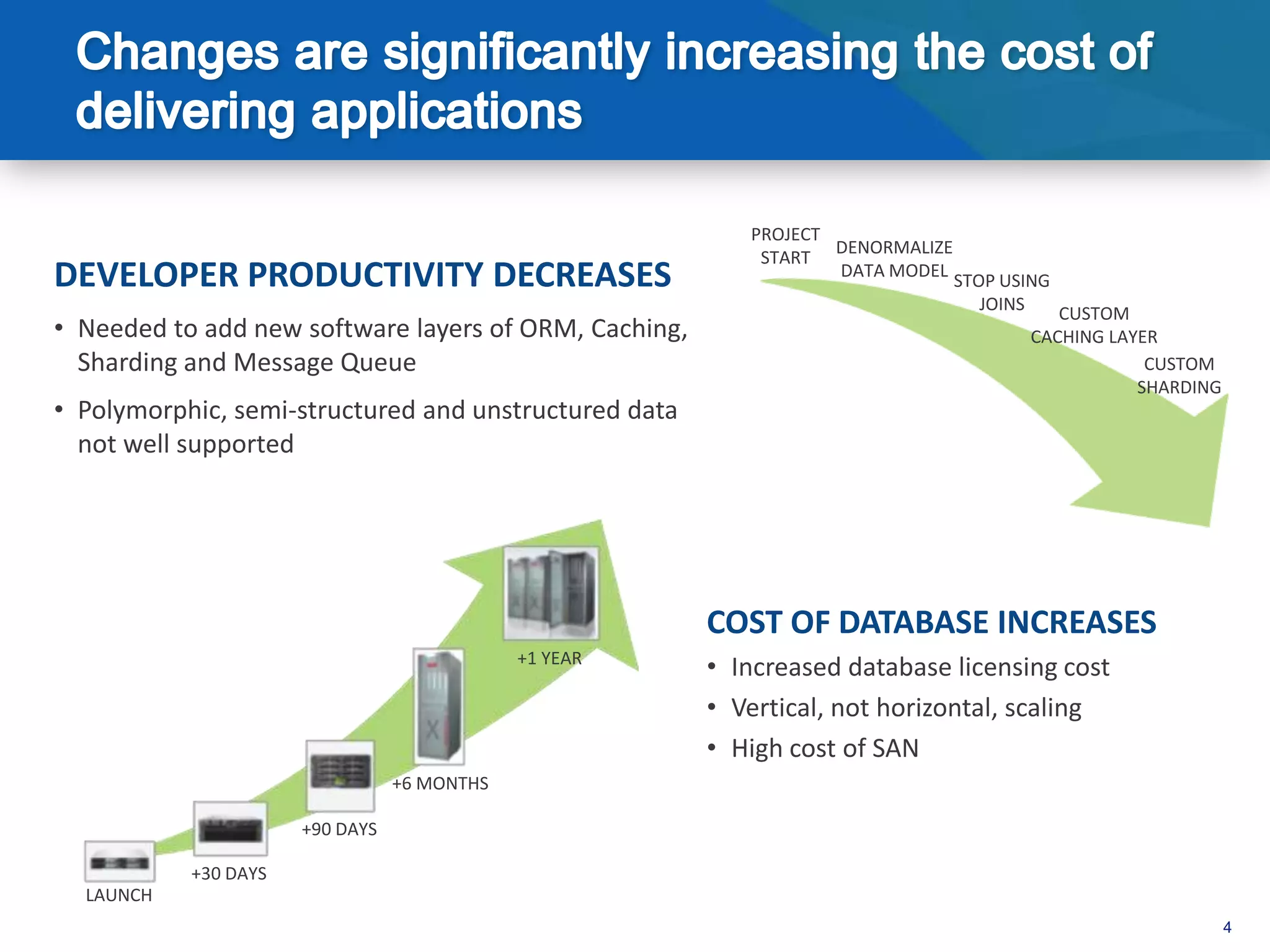

- It improves developer productivity relative to traditional relational databases by removing the need for custom caching, sharding, and other layers that modern applications otherwise require.
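The horizontal-scaling point above can be illustrated with a toy router that assigns each document to one of several servers based on a key. This is a minimal sketch under stated assumptions: `hashKey`, `shardFor`, the server names, and the choice of `author` as the key are all hypothetical, and a simple hash-and-modulo scheme stands in for whatever partitioning the database actually performs.

```javascript
// Toy router: maps a key value to one of N servers.
// hashKey, shardFor, and the server names are hypothetical illustrations.
function hashKey(value) {
  let h = 5381;
  for (const ch of String(value)) {
    // Multiply, mix in the character code, and keep an unsigned 32-bit result.
    h = ((h * 33) ^ ch.codePointAt(0)) >>> 0;
  }
  return h;
}

function shardFor(doc, shardKey, servers) {
  // The same key value always lands on the same server.
  return servers[hashKey(doc[shardKey]) % servers.length];
}

const servers = ["shard-a", "shard-b", "shard-c"];
const samplePosts = [
  { author: "Jared", text: "NoSQL Now 2012" },
  { author: "Brendan", text: "I want a freakin pony" },
];

const placement = samplePosts.map((p) => ({
  author: p.author,
  server: shardFor(p, "author", servers),
}));
console.log(placement);
```

Because routing depends only on the key, a query that includes the key can be sent to a single server, while a query without it would have to ask every server.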

![var post = { author: "Jared",
  date: new Date(),
  text: "NoSQL Now 2012",
  tags: ["NoSQL", "MongoDB"] }
db.posts.save(post)](https://image.slidesharecdn.com/nosqlnow2012-120821120151-phpapp02/75/MongoDB-at-NoSQL-Now-2012-Benefits-and-Challenges-of-Using-MongoDB-in-the-Enterprise-18-2048.jpg)

![> db.posts.find()
{ _id: ObjectId("4c4ba5c0672c685e5e8aabf3"),
  author: "Jared",
  date: "Sat Jul 24 2010 19:47:11 GMT-0700 (PDT)",
  text: "NoSQL Now 2012",
  tags: [ "NoSQL", "MongoDB" ] }
Notes: _id is unique, but can be anything you'd like](https://image.slidesharecdn.com/nosqlnow2012-120821120151-phpapp02/75/MongoDB-at-NoSQL-Now-2012-Benefits-and-Challenges-of-Using-MongoDB-in-the-Enterprise-19-2048.jpg)

![{ _id: ObjectId("4c4ba5c0672c685e5e8aabf3"),
  author: "Jared",
  date: "Sat Jul 24 2010 19:47:11 GMT-0700 (PDT)",
  text: "NoSQL Now 2012",
  tags: [ "NoSQL", "MongoDB" ],
  comments: [
    { author: "Brendan",
      date: "Sat Jul 24 2010 20:51:03 GMT-0700 (PDT)",
      text: "I want a freakin pony" }
  ] }](https://image.slidesharecdn.com/nosqlnow2012-120821120151-phpapp02/75/MongoDB-at-NoSQL-Now-2012-Benefits-and-Challenges-of-Using-MongoDB-in-the-Enterprise-23-2048.jpg)
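The slide above shows a comment stored inside the post document itself rather than in a separate table. A minimal in-memory sketch of that embedding follows; `addComment` is a hypothetical helper written for illustration, not a MongoDB API. Against a live server the same effect is typically achieved with an update that uses the `$push` operator on the `comments` array.

```javascript
// In-memory illustration of embedding a comment inside a post document.
// addComment is a hypothetical helper, not part of any MongoDB driver.
function addComment(post, comment) {
  // Copy the existing comments (if any) so the original post is not mutated.
  const comments = post.comments ? post.comments.slice() : [];
  comments.push(comment);
  return { ...post, comments };
}

const post = {
  author: "Jared",
  text: "NoSQL Now 2012",
  tags: ["NoSQL", "MongoDB"],
};

const updated = addComment(post, {
  author: "Brendan",
  date: new Date("2010-07-24T20:51:03-07:00"),
  text: "I want a freakin pony",
});

console.log(updated.comments.length); // 1
console.log(updated.comments[0].author); // Brendan
```

Keeping comments inside the post means a single read returns the post together with its discussion, at the cost of a document that grows as comments accumulate.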

![// Index nested documents
db.posts.ensureIndex({ "comments.author": 1 })
db.posts.find({ "comments.author": "Brendan" })
// Index on tags
db.posts.ensureIndex({ tags: 1 })
db.posts.find({ tags: "MongoDB" })
// Geospatial index
db.posts.ensureIndex({ "author.location": "2d" })
db.posts.find({ "author.location": { $near: [22, 42] } })](https://image.slidesharecdn.com/nosqlnow2012-120821120151-phpapp02/75/MongoDB-at-NoSQL-Now-2012-Benefits-and-Challenges-of-Using-MongoDB-in-the-Enterprise-24-2048.jpg)
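An index on an array field such as `tags` can be pictured as one index entry per array element, each pointing back to the documents that contain it. The sketch below builds such a lookup table in memory; `buildIndex` and the sample documents are hypothetical, and a plain `Map` stands in for the tree structure a real database would use.

```javascript
// Toy index over an array field: one entry per array element.
// buildIndex is a hypothetical helper, not a MongoDB API.
function buildIndex(docs, field) {
  const index = new Map();
  for (const doc of docs) {
    const raw = doc[field];
    if (raw === undefined) continue; // documents without the field are skipped
    const values = Array.isArray(raw) ? raw : [raw];
    for (const value of values) {
      if (!index.has(value)) index.set(value, []);
      index.get(value).push(doc._id);
    }
  }
  return index;
}

// Made-up sample documents in the shape of the posts above.
const taggedPosts = [
  { _id: 1, author: "Jared", tags: ["NoSQL", "MongoDB"] },
  { _id: 2, author: "Brendan", tags: ["MongoDB"] },
  { _id: 3, author: "Carol", tags: ["SQL"] },
];

const tagIndex = buildIndex(taggedPosts, "tags");
console.log(tagIndex.get("MongoDB")); // [ 1, 2 ]
```

A lookup by tag then touches only the matching entry instead of scanning every post, which is the benefit the `find({ tags: "MongoDB" })` query relies on.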

![1 See Ad, 2 See Ad, 3 Click, 4 Convert.
Rich profiles collecting multiple complex actions.
Scale out to support high throughput of activities tracked.
Dynamic schemas make it easy to track vendor specific attributes.
Indexing and querying to support matching, frequency capping.
{ cookie_id: "1234512413243",
  advertiser: {
    apple: {
      actions: [
        { impression: 'ad1', time: 123 },
        { impression: 'ad2', time: 232 },
        { click: 'ad2', time: 235 },
        { add_to_cart: 'laptop', sku: 'asdf23f', time: 254 },
        { purchase: 'laptop', time: 354 }
      ]
    }
  }
}](https://image.slidesharecdn.com/nosqlnow2012-120821120151-phpapp02/75/MongoDB-at-NoSQL-Now-2012-Benefits-and-Challenges-of-Using-MongoDB-in-the-Enterprise-78-2048.jpg)
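Frequency capping, mentioned on the slide above, amounts to counting how often a visitor has already seen an ad before serving it again. The sketch below does that count in memory over the profile document from the slide; `countImpressions`, `underCap`, and the cap value are hypothetical names and numbers chosen for illustration.

```javascript
// Counts impressions of one ad in a visitor profile and applies a cap.
// countImpressions, underCap, and the cap value are hypothetical.
const profile = {
  cookie_id: "1234512413243",
  advertiser: {
    apple: {
      actions: [
        { impression: "ad1", time: 123 },
        { impression: "ad2", time: 232 },
        { click: "ad2", time: 235 },
        { add_to_cart: "laptop", sku: "asdf23f", time: 254 },
        { purchase: "laptop", time: 354 },
      ],
    },
  },
};

function countImpressions(profile, advertiser, adId) {
  const entry = profile.advertiser[advertiser];
  const actions = entry ? entry.actions : [];
  // Only actions that carry an `impression` field for this ad are counted.
  return actions.filter((a) => a.impression === adId).length;
}

function underCap(profile, advertiser, adId, cap) {
  return countImpressions(profile, advertiser, adId) < cap;
}

console.log(countImpressions(profile, "apple", "ad2")); // 1
console.log(underCap(profile, "apple", "ad2", 1)); // false
```

Note that the actions carry different fields (`impression`, `click`, `add_to_cart`), which is the dynamic-schema point: the filter simply ignores actions that lack the field it is looking for.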

![Geo spatial indexing for location based searches.
Flexible data model for similar, but different objects.
GridFS for large object storage.
Horizontal scalability for large data sets.
{ camera: "Nikon d4",
  location: [ -122.418333, 37.775 ]
}
{ camera: "Canon 5d mkII",
  people: [ "Jim", "Carol" ],
  taken_on: ISODate("2012-03-07T18:32:35.002Z")
}
{ origin: "facebook.com/photos/xwdf23fsdf",
  license: "Creative Commons CC0",
  size: {
    dimensions: [ 124, 52 ],
    units: "pixels"
  }
}](https://image.slidesharecdn.com/nosqlnow2012-120821120151-phpapp02/75/MongoDB-at-NoSQL-Now-2012-Benefits-and-Challenges-of-Using-MongoDB-in-the-Enterprise-83-2048.jpg)
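A location-based search over documents like these returns the ones closest to a given point. As a rough illustration, the sketch below does this by brute force: it skips documents that have no `location` field and sorts the rest by straight-line distance. `nearest` and the third sample document are hypothetical, and a real geospatial index would avoid scanning every document.

```javascript
// Brute-force stand-in for a nearest-first location search.
// nearest is a hypothetical helper; distance is planar, not spherical.
function nearest(docs, point, limit) {
  const [px, py] = point;
  return docs
    .filter((d) => Array.isArray(d.location)) // tolerate documents without a location
    .map((d) => ({
      doc: d,
      dist: Math.hypot(d.location[0] - px, d.location[1] - py),
    }))
    .sort((a, b) => a.dist - b.dist)
    .slice(0, limit)
    .map((entry) => entry.doc);
}

const photos = [
  { camera: "Nikon d4", location: [-122.418333, 37.775] },
  { camera: "Canon 5d mkII", people: ["Jim", "Carol"] },
  { camera: "Phone", location: [-73.97, 40.78] }, // made-up sample document
];

const closest = nearest(photos, [-122.4, 37.8], 1);
console.log(closest[0].camera); // Nikon d4
```

The second photo has no coordinates at all and is simply left out of the result, which mirrors how differently shaped documents can share one collection.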